Prerequisites

To follow along with this example, ensure that you have the following:- Golioth account with an organization and a connected compatible device (e.g., nrf9160).

- Edge Impulse enterprise account.

- Trained Edge Impulse project ready for deployment.

- AWS account with access to S3.

- Basic knowledge of AWS S3, Docker, and Zephyr.

- Example from the Golioth on AI tutorial repository here

Step 1: Create a Managed Golioth Device with Configured Firmware

Before proceeding with the integration, ensure that your Golioth device is set up with the appropriate firmware. For detailed instructions on initializing your Zephyr workspace, building, and flashing the firmware, please refer to the Golioth on AI repository README.1. Initialize the Zephyr workspace:

- Clone the Golioth on AI repository and initialize the Zephyr workspace with the provided manifest file.

2. Create a project on Golioth.

- Log in to Golioth and create a new project. This project will handle the routing of sensor data and classification results.

3. Create a project on Edge Impulse.

- Log in to Edge Impulse Studio and create a new project. This project will receive and process the raw accelerometer data uploaded from the S3 bucket.

Step 2: Create Golioth Pipelines

Once your device is set up, follow the instructions in the repository README to create the necessary Golioth pipelines. This includes setting up the Classification Results Pipeline and the Accelerometer Data Pipeline. Latest detailed steps can be found in the repository Golioth on AI repository README. Golioth Pipelines allows you to route data between different services and devices efficiently. You will need to configure two pipelines for this demo:1. Classification Results Pipeline:

- This pipeline routes classification results (e.g., gesture predictions) to Golioth’s LightDB Stream, which stores data in a timeseries format.

- You will configure a path for classification results (e.g., /class) and ensure that the data is converted to JSON format.

2. Accelerometer Data Pipeline to S3:

- This pipeline handles raw accelerometer data by forwarding it to an S3 object storage bucket. Ensure the pipeline is set up to transfer binary data.

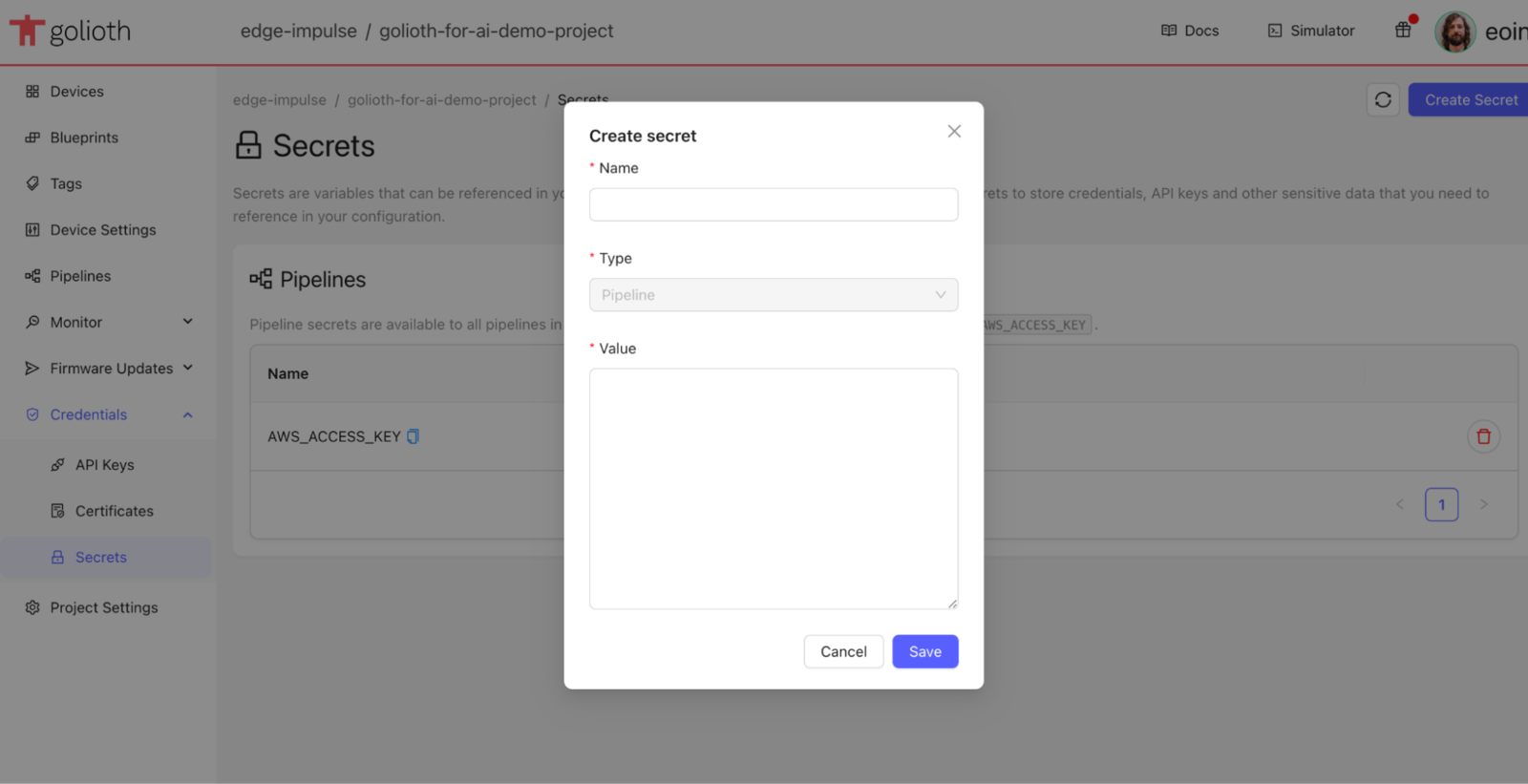

- Important: Configure your AWS credentials by creating secrets in Golioth for AWS_ACCESS_KEY and AWS_SECRET_KEY, and specify the target bucket name and region.

Step 3: Deploy the Edge Impulse Inferencing Library

1. Generate the Model:

- Follow the instructions in the continuous motion recognition tutorial to generate a gesture recognition model.

2. Download and Extract the Library:

- Download the generated library from Edge Impulse Studio and extract the contents.

3. Build and Flash Firmware:

- Build the firmware:

- Set Golioth Credentials::

- Add the same configuration to the Golioth secret store

- Navigate to your Golioth Secret Store:

https://console.golioth.io/org/<organization>/project/<application>/secrets - Add your AWS credentials (Access Key ID and Secret Access Key) and the S3 bucket details to the Golioth secret store.

Step 4: Data Acquisition

1. Trigger Data Acquisition:

- Press the button on the Nordic Thingy91 to start sampling data from the device’s accelerometer.

2. Data Routing:

- Raw accelerometer data will be automatically routed to your S3 bucket via the Golioth pipeline. You can later import this data into Edge Impulse Studio for further model training.

3. View Classification Results:

- Classification results will be stored in Golioth’s LightDB Stream. You can access this data for further analysis or visualization.

Step 5: Import Data into Edge Impulse

1. Access Data from S3:

- In Edge Impulse Studio, use the Data acquisition page to import your raw accelerometer data directly from the S3 bucket

2. Label and Organize Data:

Once imported, label your data appropriately to prepare it for model training.Example Classification and Raw Data

Below is an example of classification data you can expect to see in the Golioth console:Step 6: Data Processing

From here we can perform a number of data processing steps on the collected data:1. Data Transformation:

- Use the custom CBOR transformation block to convert raw accelerometer data to a format suitable for training a model in Edge Impulse.

2. Data Quality:

- Apply custom transformation blocks to perform data quality checks or preprocessing steps on the collected data.

3. Model Training:

- Import the transformed data into Edge Impulse Studio and train a new model using the collected accelerometer data.

4. Model Deployment:

- Deploy the trained model back to the Golioth device for real-time gesture recognition.