- Your project requires a grayscale camera because you have already purchased the hardware.

- Your engineers have already spent hours developing a dedicated digital signal processing method that has been proven to work with your sensor data.

- You have a feeling that a particular neural network architecture will be better suited to your project.

Understanding Search Space Configuration

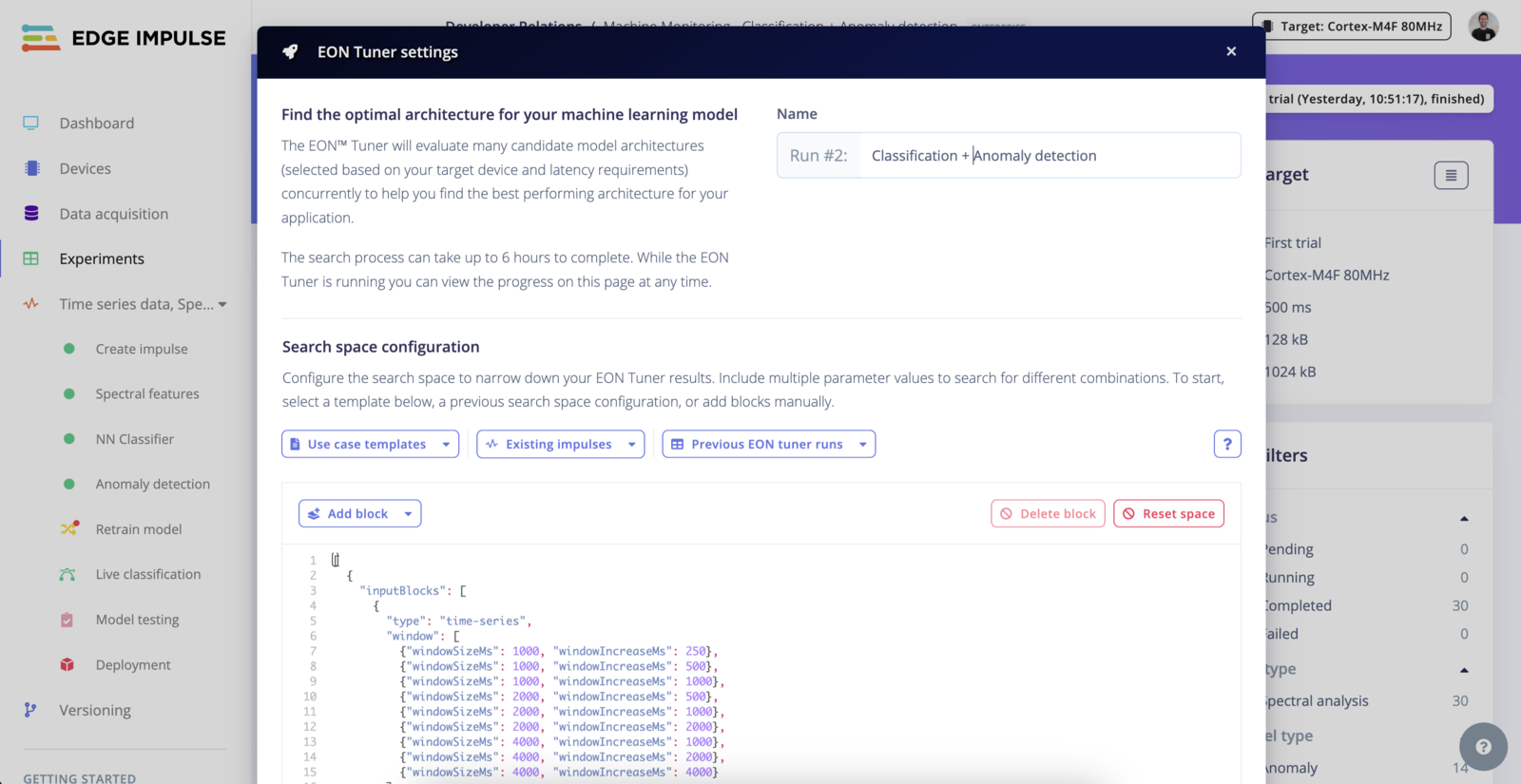

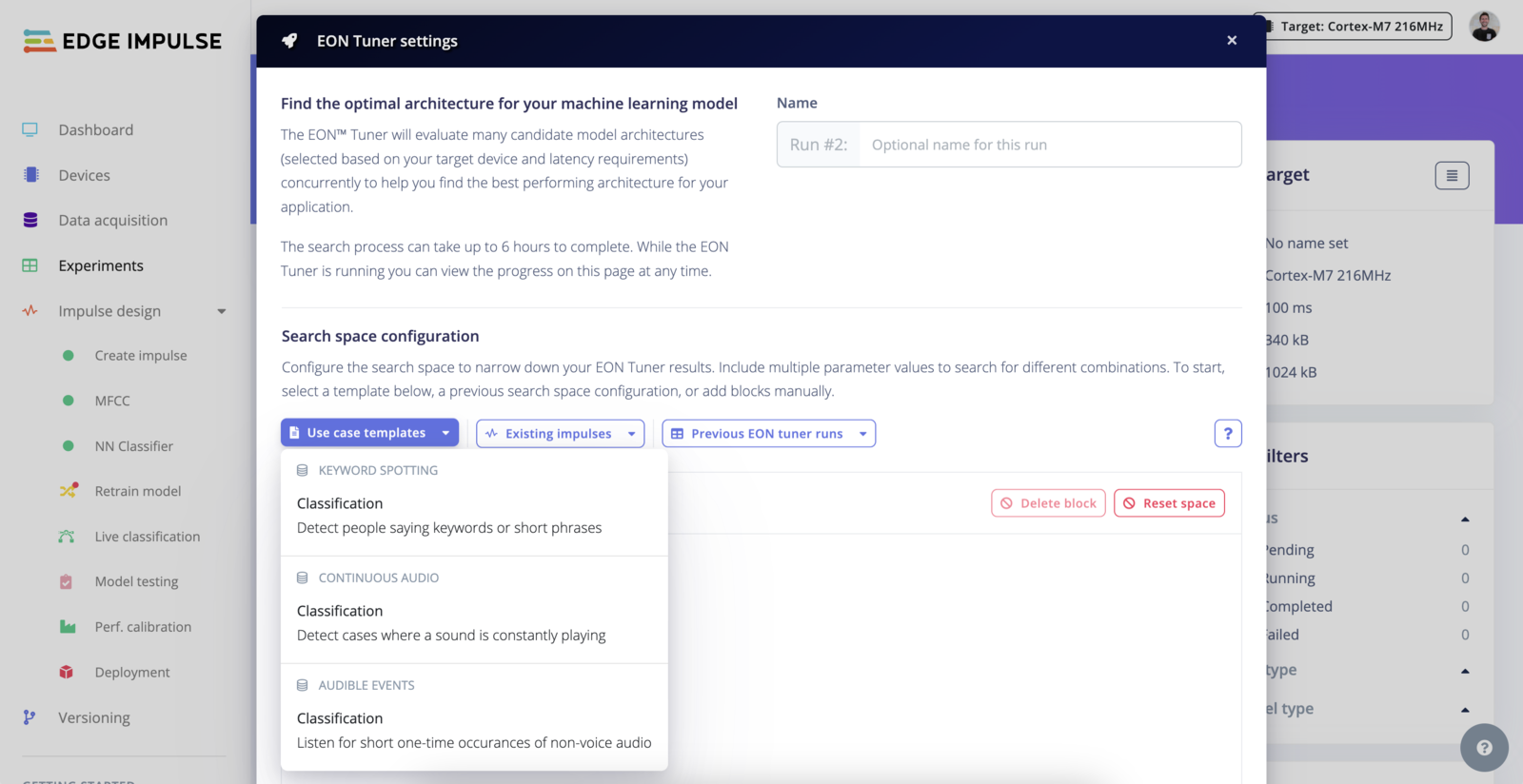

The EON Tuner Search Space allows you to define the structure and constraints of your machine learning projects through the use of templates.

Templates

The Search Space works with templates. A template can be thought of as a configuration file in which you define your constraints. Templates may seem hard to use at first, but once you understand the core concept, this tool is highly capable.

Load a template

To understand the core concepts, we recommend looking at the available templates. We provide templates for different task categories, as well as one for your current impulse if it has already been trained.

Search parameters

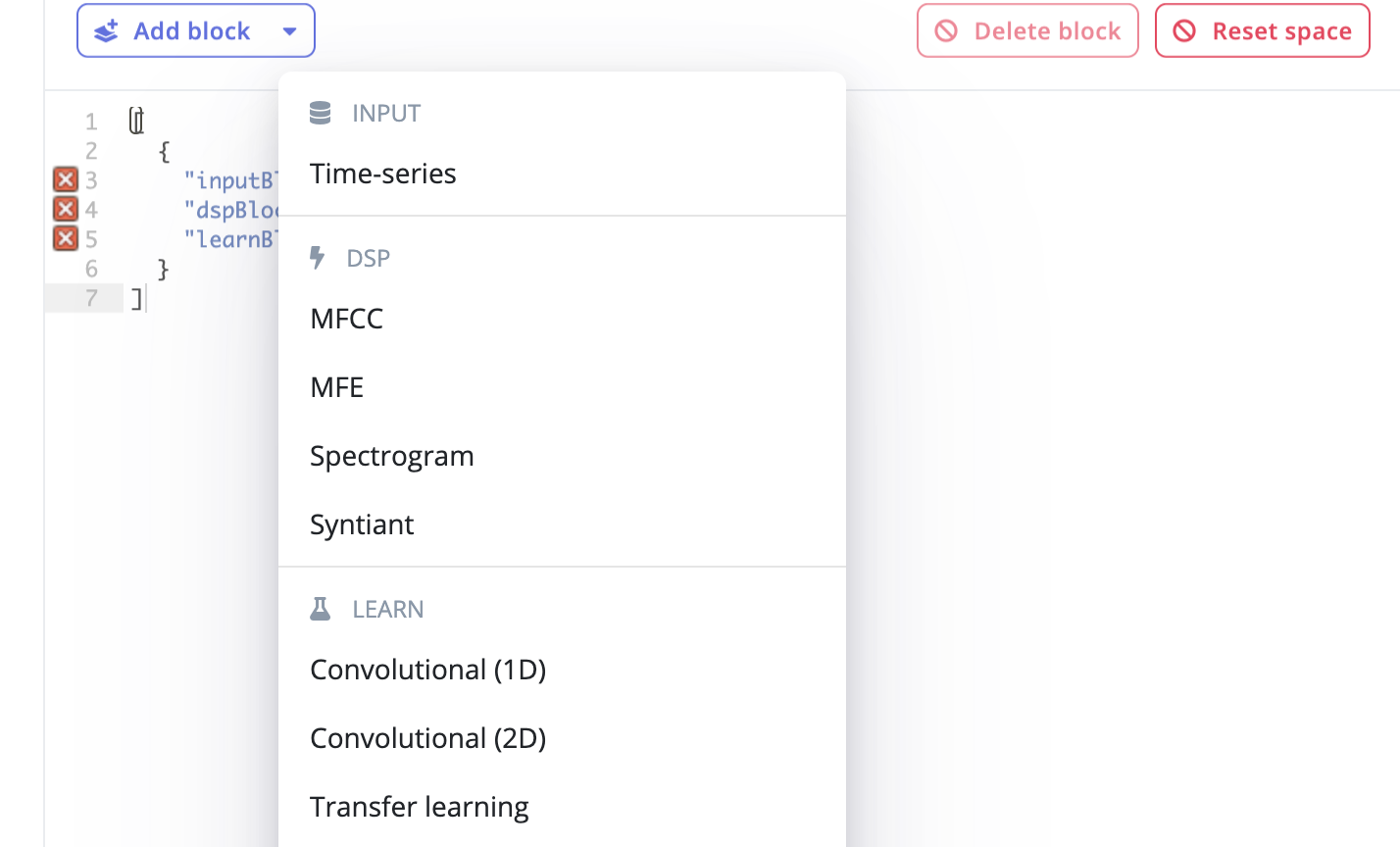

Elements inside an array are treated as search parameters. This means you can stack several combinations of `inputBlocks` | `dspBlocks` | `learnBlocks` in your templates, and each block can contain several elements:

Format

- Input Blocks

- DSP Blocks

- Learning Blocks
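The three top-level arrays can be pictured as a plain dictionary that is serialized to JSON. The following is a minimal sketch under stated assumptions. Only the keys `inputBlocks`, `dspBlocks` and `learnBlocks` and the input-block field names come from this page. The concrete values are illustrative. The DSP and learning block arrays are left empty because their fields are not described in this section, so this skeleton is not claimed to be a template the EON Tuner accepts as-is.

```python
import json

# Hypothetical template skeleton: top-level keys and input-block field names
# are taken from this page; the chosen values are illustrative only.
template = {
    # Each element of this array is one candidate input configuration.
    "inputBlocks": [
        {
            "id": 1,
            "type": "image",
            "title": "Image input",
            "dimension": [[96, 96]],
            "resizeMode": ["squash"],
            "resizeMethod": ["lanczos3"],
            "cropAnchor": ["middle-center"],
        }
    ],
    # DSP and learning block fields are not documented in this section,
    # so they are left empty here rather than guessed.
    "dspBlocks": [],
    "learnBlocks": [],
}

# Serialize to JSON, the form in which a template is written.
print(json.dumps(template, indent=2))
```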

Input Blocks (inputBlocks)

Common Fields for All Input Blocks

- `id`: Unique identifier for the block.
  - Type: `number`

- `type`: The nature of the input data.
  - Type: `string`
  - Valid options: `time-series`, `image`

- `title`: Optional descriptive title for the block.
  - Type: `string`

Specific Fields for Image Type Input Blocks

- `dimension`: Dimensions of the images.
  - Type: `array` of `array` of `number`
  - Example valid values: `[[32, 32], [64, 64], [96, 96], [128, 128], [160, 160], [224, 224], [320, 320]]`
  - Enterprise option: all dimensions are available with the full enterprise search space.

- `resizeMode`: How the image should be resized to fit the specified dimensions.
  - Type: `array` of `string`
  - Valid options: `squash`, `fit-short`, `fit-long`

- `resizeMethod`: Method used for resizing the image.
  - Type: `array` of `string`
  - Valid options: `nearest`, `lanczos3`

- `cropAnchor`: Position on the image where cropping is anchored.
  - Type: `array` of `string`
  - Valid options: `top-left`, `top-center`, `top-right`, `middle-left`, `middle-center`, `middle-right`, `bottom-left`, `bottom-center`, `bottom-right`
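Because a typo in one of these string options is easy to make, it can help to check an image input block locally before pasting it into the Search Space editor. The following is a minimal sketch that hardcodes the valid options listed above. `check_image_input_block` is a hypothetical helper, not part of any Edge Impulse API. It checks only that each `dimension` entry is a pair of positive integers and does not know which dimensions your plan unlocks.

```python
# Valid options copied from the field descriptions above.
VALID_RESIZE_MODES = {"squash", "fit-short", "fit-long"}
VALID_RESIZE_METHODS = {"nearest", "lanczos3"}
# top-left ... bottom-right: the nine anchor positions listed above.
VALID_CROP_ANCHORS = {
    f"{v}-{h}"
    for v in ("top", "middle", "bottom")
    for h in ("left", "center", "right")
}


def check_image_input_block(block: dict) -> list:
    """Return a list of problems found in an image input block (empty if none)."""
    problems = []
    # Each dimension candidate is expected to be a pair of positive integers.
    for dim in block.get("dimension", []):
        if not (isinstance(dim, list) and len(dim) == 2
                and all(isinstance(x, int) and x > 0 for x in dim)):
            problems.append(f"dimension entry {dim!r} is not a pair of positive integers")
    # Every element of the string-array fields must be one of the valid options.
    for field, valid in (("resizeMode", VALID_RESIZE_MODES),
                         ("resizeMethod", VALID_RESIZE_METHODS),
                         ("cropAnchor", VALID_CROP_ANCHORS)):
        for value in block.get(field, []):
            if value not in valid:
                problems.append(f"{field} value {value!r} is not one of {sorted(valid)}")
    return problems


print(check_image_input_block({"type": "image", "resizeMode": ["squash", "stretch"]}))
```

The usage line reports one problem, because `stretch` is not among the listed `resizeMode` options.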

Specific Fields for Time-Series Type Input Blocks

- `window`: Details about the windowing approach for time-series data.
  - Type: `array` of `object` with fields:
    - `windowSizeMs`: The size of the window in milliseconds.
      - Type: `number`
    - `windowIncreaseMs`: The step, in milliseconds, by which the window advances.
      - Type: `number`

- `windowSizeMs`: Size of the window in milliseconds, if not specified in the `window` field.
  - Type: `array` of `number`

- `windowIncreasePct`: Window increase per step, expressed as a percentage.
  - Type: `array` of `number`

- `frequencyHz`: Sampling frequency in hertz.
  - Type: `array` of `number`

- `padZeros`: Whether to pad the time-series data with zeros.
  - Type: `array` of `boolean`
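To see how `windowSizeMs`, the window increase and `frequencyHz` interact, the following is a small worked sketch of generic sliding-window arithmetic. It assumes three things that this page does not state. First, windows start at time 0 and advance by the window increase. Second, only windows that fit entirely inside a sample are counted, so the `padZeros` behavior is not modeled. Third, `windowIncreasePct` is taken as a percentage of the window size. Both helper names are hypothetical.

```python
import math


def window_stats(sample_length_ms: float, window_size_ms: float,
                 window_increase_ms: float, frequency_hz: float) -> tuple:
    """Return (values per window per axis, number of full windows) for one sample.

    Assumes a plain sliding window without zero padding: windows start at 0,
    advance by window_increase_ms, and must fit entirely inside the sample.
    """
    values_per_window = round(window_size_ms * frequency_hz / 1000)
    if sample_length_ms < window_size_ms:
        return values_per_window, 0
    n_windows = math.floor((sample_length_ms - window_size_ms) / window_increase_ms) + 1
    return values_per_window, n_windows


def increase_pct_to_ms(window_size_ms: float, window_increase_pct: float) -> float:
    """Convert a window increase given as a percentage of the window size to ms (assumed semantics)."""
    return window_size_ms * window_increase_pct / 100


# A 10 s sample at 100 Hz, with a 2000 ms window advancing by 25 % (500 ms).
step_ms = increase_pct_to_ms(2000, 25)
print(step_ms, window_stats(10_000, 2000, step_ms, 100))
```

With these numbers each window holds 200 values per axis, and the sample yields 17 full windows. Each value you list in `windowSizeMs` or `frequencyHz` changes these figures, which is why they are exposed as search parameters.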

Specifying ranges

Fields of type `number` can have a search space specified as a range rather than an array. In this case, pass a dictionary instead of an array. Here are some examples:

Additional Notes

- The actual availability of certain dimensions or options can depend on whether your project has full enterprise capabilities (`projectHasFullEonTunerSearchSpace`). This might unlock additional valid values or remove restrictions on certain fields.

- Fields with `array` of `array` structures (like `dimension` or `window`) allow for multi-dimensional setups, where each sub-array represents a different configuration that the EON Tuner can evaluate.
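One way to picture what the stacked arrays mean is to expand a block into every concrete configuration it describes. In this expansion, each element of a list-valued field, including each sub-array of `dimension`, is one alternative. This is only an illustration of the search space's size and shape. It does not reproduce how the EON Tuner actually explores the space, and it ignores the range dictionaries mentioned above. `expand_block` is a hypothetical helper.

```python
import itertools


def expand_block(block: dict) -> list:
    """Expand one block into every concrete configuration it describes.

    Every list-valued field is treated as a set of alternatives; scalar fields
    (such as id, type and title) are copied into every configuration unchanged.
    """
    keys = [k for k, v in block.items() if isinstance(v, list)]
    fixed = {k: v for k, v in block.items() if not isinstance(v, list)}
    configs = []
    # Cartesian product: one configuration per combination of alternatives.
    for combo in itertools.product(*(block[k] for k in keys)):
        configs.append({**fixed, **dict(zip(keys, combo))})
    return configs


image_block = {
    "id": 1,
    "type": "image",
    "dimension": [[96, 96], [160, 160]],
    "resizeMode": ["squash", "fit-short"],
}
for config in expand_block(image_block):
    print(config)
```

Two dimensions combined with two resize modes give four candidate input configurations, and every extra option multiplies that count. This is a good reason to constrain fields you already know, such as a fixed camera resolution.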

Examples

- Image classification

- Object detection

- Audio

- Motion classification + anomaly detection

- Visual anomaly detection

- Akida Image Classification

Image classification

Example of a template in which we constrained the search space to use 96x96 grayscale images, in order to compare a neural network architecture with a transfer learning architecture using MobileNetV1 and MobileNetV2:

Public project: Cars binary classifier - EON Tuner Search Space

Custom DSP and ML Blocks

Custom DSP block

This feature is only available on the Enterprise plan.