This library lets you run machine learning models and collect sensor data on Linux machines using Python. The SDK is open source and hosted on GitHub: edgeimpulse/linux-sdk-python. See our Linux EIM executable guide to learn more about the .eim executable file format.

Installation guide

- Generic Linux/macOS

- Raspberry Pi

- Jetson Nano

- Windows Subsystem for Linux (WSL)

- Install a recent version of Python 3 (>=3.7).

- Install the SDK

- Clone this repository to get the examples.

- (Optional) If you want to use the camera or microphone examples, install the dependencies.
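After working through the steps above, a quick sanity check can confirm that the interpreter is recent enough and that the SDK can be found. The following is a minimal sketch: the import name `edge_impulse_linux` is the SDK package, while `cv2` and `pyaudio` are assumed here to be the modules behind the optional camera and microphone dependencies. The helper name `check_environment` is hypothetical.

```python
import importlib.util
import sys

MIN_PYTHON = (3, 7)  # minimum version stated in the installation steps

# Assumption: these are the import names of the optional camera/microphone
# dependencies; adjust them to match the repository's requirements file.
OPTIONAL_MODULES = ("cv2", "pyaudio")


def check_environment():
    """Report whether Python is recent enough and which modules are importable."""
    report = {
        "python_ok": sys.version_info >= MIN_PYTHON,
        "sdk_installed": importlib.util.find_spec("edge_impulse_linux") is not None,
    }
    for name in OPTIONAL_MODULES:
        report[name] = importlib.util.find_spec(name) is not None
    return report


if __name__ == "__main__":
    for key, ok in check_environment().items():
        print(f"{key}: {'ok' if ok else 'missing'}")
```

A `missing` entry for `cv2` or `pyaudio` only matters if you plan to run the camera or microphone examples.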

Classifying data

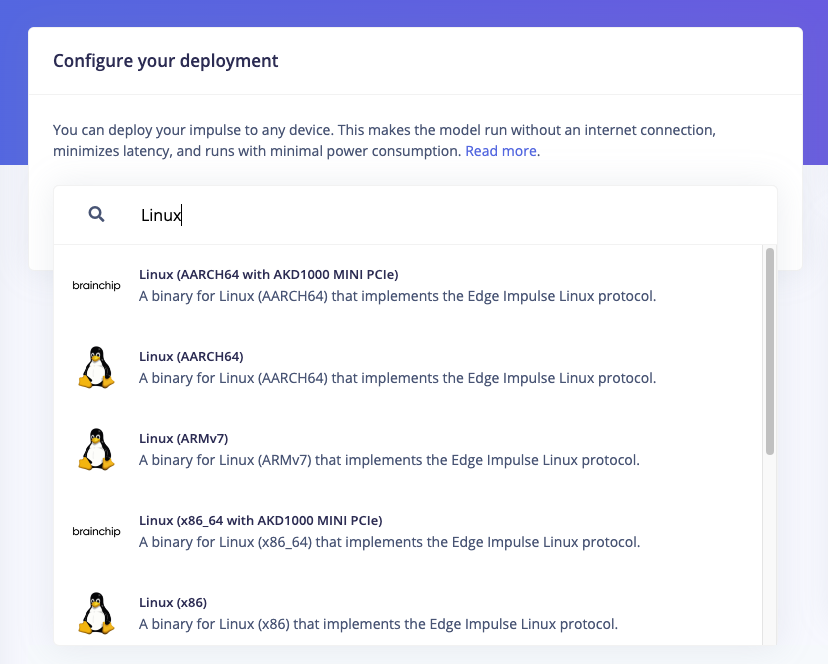

This SDK provides a simple interface to run Edge Impulse machine learning models on Linux machines using Python. To classify data (whether this is from the camera, the microphone, or a custom sensor) you’ll need a model file. This model file contains all signal processing code, classical ML algorithms and neural networks - and typically contains hardware optimizations to run as fast as possible. To grab a model file:

- From the Edge Impulse Linux CLI

- From Edge Impulse Studio

- Train your model in Edge Impulse.

- Install the Edge Impulse for Linux CLI.

- Download the model file using the Edge Impulse for Linux CLI. This downloads the file into modelfile.eim. (Want to switch projects? Add --clean.)

The repository includes examples for the following:

- Audio - grabs data from the microphone and classifies it in realtime.

- Camera - grabs data from a webcam and classifies it in realtime.

- Camera (full frame) - grabs data from a webcam and classifies it twice (once cut from the left, once cut from the right). This is useful if you have a wide-angle lens and don’t want to miss any events.

- Still image - classifies a still image from your hard drive.

- Video - grabs frames from a video file on your hard drive and classifies them.

- Custom data - classifies custom sensor data.

Pass the --help argument to any example for more information.
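For the custom data case, the flow is roughly: load the model file, initialize the runner, pass in a flat list of feature values, and stop the runner afterwards. The sketch below assumes the `ImpulseRunner` class from `edge_impulse_linux.runner` with `init()`, `classify()` and `stop()` methods, and a result dictionary with a `result` / `classification` layout; check these against the bundled examples for your SDK version. The helper names (`parse_features`, `top_label`, `classify_features`) are hypothetical.

```python
def parse_features(text):
    """Turn a comma-separated string such as '0.1, 0.2, 0.3' into a list of floats."""
    return [float(value) for value in text.split(",") if value.strip()]


def top_label(response):
    """Return the (label, score) pair with the highest score.

    Returns None when the response holds no classification scores
    (for example, for models that produce a different kind of output).
    """
    scores = response.get("result", {}).get("classification")
    if not scores:
        return None
    label = max(scores, key=scores.get)
    return label, scores[label]


def classify_features(model_path, features):
    """Run one classification against a .eim model file and return the raw response."""
    # Imported here so the helpers above work without the SDK installed.
    from edge_impulse_linux.runner import ImpulseRunner

    runner = ImpulseRunner(model_path)
    try:
        model_info = runner.init()
        # Assumed layout of model_info; the examples print owner and project name.
        print("Loaded model for project:", model_info["project"]["name"])
        return runner.classify(features)
    finally:
        # Always stop the runner so the model process is shut down.
        runner.stop()
```

A call might look like `top_label(classify_features("modelfile.eim", parse_features("0.1, 0.2, 0.3")))`, where the number of feature values has to match what the model expects.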

Collecting data

Collecting data from other sensors
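An upload to the Ingestion API is, in essence, a signed JSON document sent over HTTPS. The following is a minimal sketch of that idea using only the standard library, not the SDK's bundled example. The endpoint URL, the header names and the field layout follow the Edge Impulse data acquisition format as best understood here and should be verified against the Ingestion API documentation. The function names and the `LINUX_TEST` device type are hypothetical.

```python
import hashlib
import hmac
import json
import time
import urllib.request

# Assumed training-data endpoint of the Ingestion API.
INGESTION_URL = "https://ingestion.edgeimpulse.com/api/training/data"


def build_signed_sample(hmac_key, values, interval_ms, sensors, device_type="LINUX_TEST"):
    """Build a JSON sample and sign it with HMAC-SHA256.

    `values` is a list of rows, each row holding one reading per sensor.
    `sensors` is a list of {"name": ..., "units": ...} dictionaries.
    """
    sample = {
        "protected": {"ver": "v1", "alg": "HS256", "iat": int(time.time())},
        "signature": "0" * 64,  # placeholder, replaced after signing
        "payload": {
            "device_type": device_type,
            "interval_ms": interval_ms,
            "sensors": sensors,
            "values": values,
        },
    }
    # Sign the document while it still carries the all-zero placeholder.
    encoded = json.dumps(sample).encode("utf-8")
    sample["signature"] = hmac.new(hmac_key.encode("utf-8"), encoded, hashlib.sha256).hexdigest()
    return json.dumps(sample)


def upload_sample(api_key, file_name, body):
    """POST a signed sample; returns the HTTP status and response text."""
    request = urllib.request.Request(
        INGESTION_URL,
        data=body.encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-file-name": file_name,
            "x-api-key": api_key,
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.status, response.read().decode("utf-8")
```

Building the body is separate from sending it so the payload can be inspected or saved before any network call is made. The API key and HMAC key come from your own Edge Impulse project.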

The Linux Python SDK includes an example for uploading raw sensor data to an Edge Impulse project via the Ingestion API using a Python script.

Troubleshooting

Collecting print out from the model

To display the logging messages (i.e., the output you may be used to seeing in other deployments), enable debug output when you initialize the runner.

Model timeouts / crashes

For some very large models you may find the default timeout value is too low. If your model is crashing or timing out, you can try increasing the timeout value when initializing the ImpulseRunner.
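Both the logging output and the timeout are controlled at initialization time. The sketch below assumes that `ImpulseRunner.init()` accepts `debug` and `timeout` keyword arguments, which may differ between SDK versions, so check the signature in runner.py before relying on it. The wrapper name `init_runner` is hypothetical.

```python
def init_runner(runner, debug=False, timeout=None):
    """Initialize a runner with optional debug output and a custom timeout.

    `runner` is expected to be an edge_impulse_linux.runner.ImpulseRunner.
    The `debug` and `timeout` keyword names are assumptions about the
    installed SDK version. Arguments left at their defaults are not passed,
    so the SDK's own defaults stay in effect.
    """
    kwargs = {}
    if debug:
        kwargs["debug"] = True
    if timeout is not None:
        kwargs["timeout"] = timeout  # assumed to be in seconds
    return runner.init(**kwargs)
```

For example, `init_runner(runner, debug=True, timeout=60)` would request model output on the console and a longer wait for a large model to start.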

[Errno -9986] Internal PortAudio error (macOS)

If you see this error, you can re-install portaudio.

Abort trap (6) (macOS)

This error appears when you try to access the camera or the microphone on macOS from a virtual shell (like the terminal in Visual Studio Code). Run the command from the normal Terminal.app instead.

Exception: No data or corrupted data received

This error is due to the length of the results output. To fix this, you can overwrite this line in the ImpulseRunner class in runner.py.