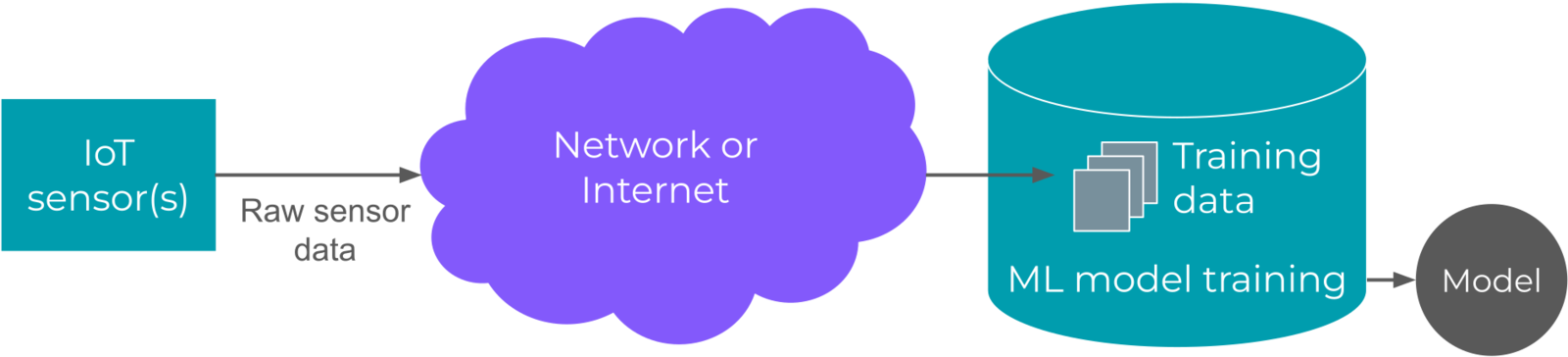

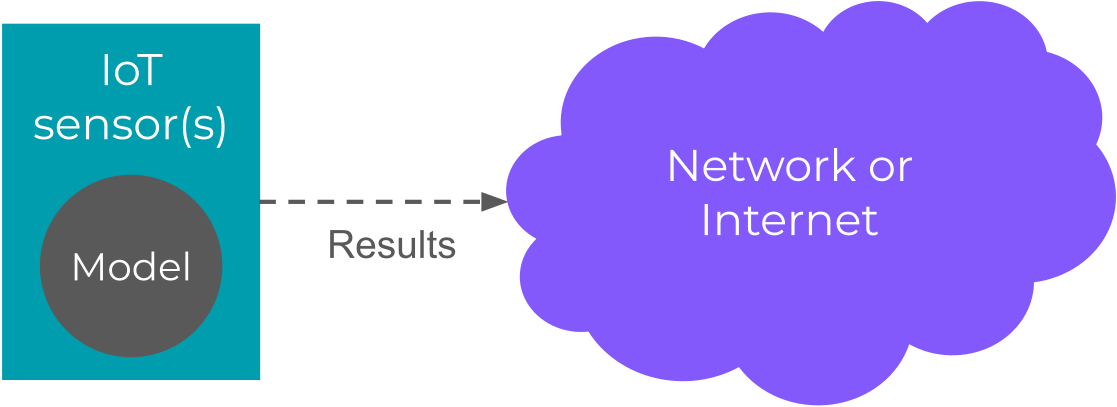

Edge machine learning (edge ML) is the process of running machine learning algorithms on computing devices at the periphery of a network to make decisions and predictions as close as possible to the originating source of data. It is also referred to as edge artificial intelligence or edge AI. In traditional machine learning, we often find large servers processing heaps of data collected from the Internet to provide some benefit, such as predicting what movie to watch next or automatically labeling a cat video. By running machine learning algorithms on edge devices like laptops, smartphones, and embedded systems (such as those found in smartwatches, washing machines, cars, and manufacturing robots), we can produce such predictions faster and without the need to transmit large amounts of raw data across a network.


To accurately describe edge ML, we first need to understand the history of artificial intelligence (AI).

Check out our Edge AI Fundamentals course to learn more about edge computing, the difference between AI and machine learning, and edge MLOps.

Artificial intelligence vs. machine learning

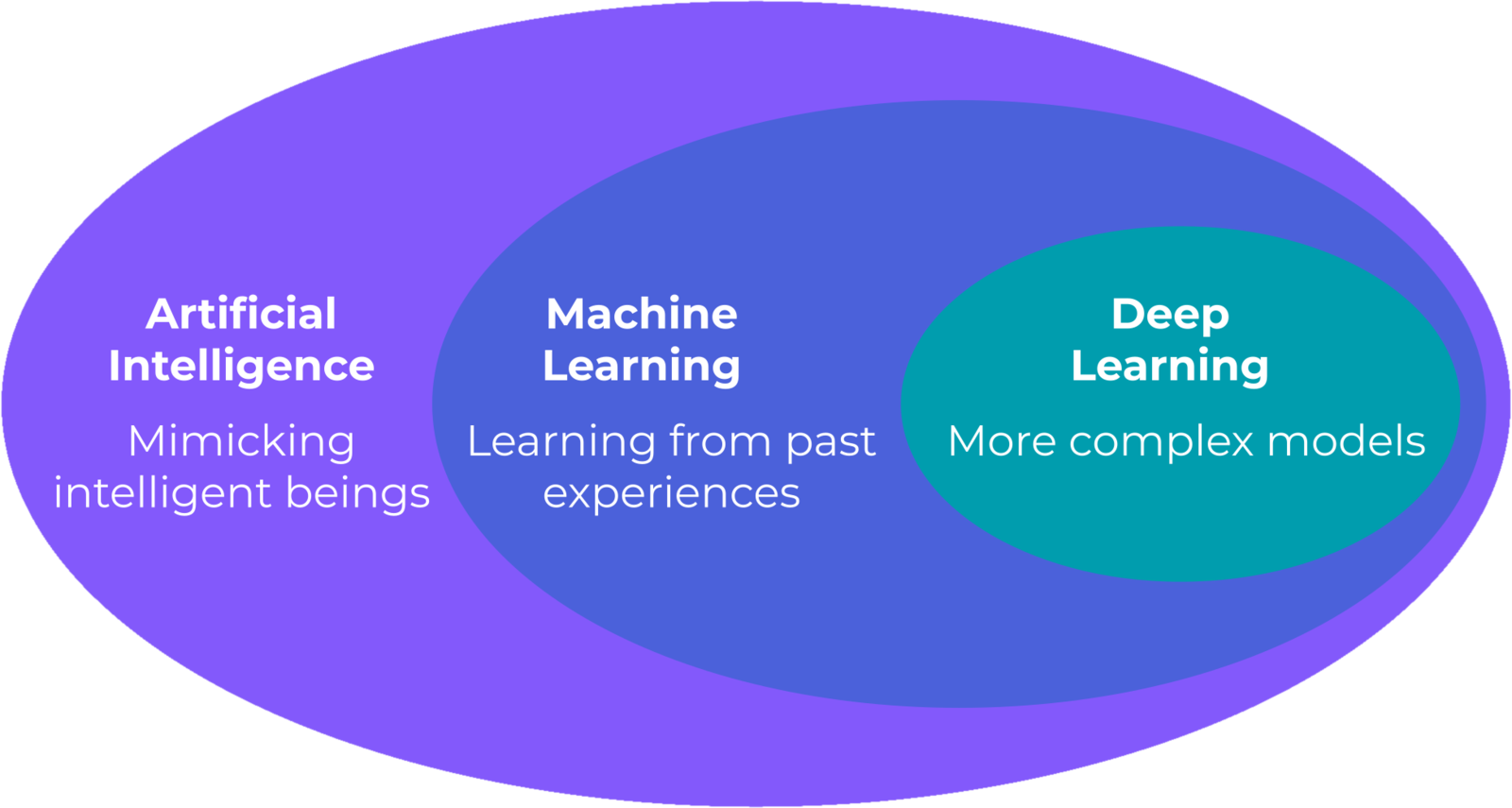

The name “artificial intelligence” originates from a proposal in 1956 by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon to host a summer research conference exploring the possibility of programming computers to “simulate many of the higher functions of the human brain.” Many years later, McCarthy would define AI as “the science and engineering of making intelligent machines, especially intelligent computer programs,” where the definition of intelligence is “the computational part of the ability to achieve goals in the world.” From this definition, we see that AI is an extremely broad field of study involving the use of computers to make decisions to achieve arbitrary goals.

AI researcher Arthur Samuel trained a computer to play checkers better than most humans by having the program play thousands of games against itself and learn from each iteration. He coined the term “machine learning” in his 1959 paper to mean any program that can learn from experience.

The term “deep learning” (DL) comes from a 1986 paper by the mathematician and computer scientist Rina Dechter. She used the term to describe ML models that can be trained to automatically learn features or representations. We often use the term deep learning to describe artificial neural networks with more than a few layers, but it can be used more broadly to refer to other forms of machine learning.

From these definitions, we can view deep learning as a subset of machine learning, which is itself a subset of artificial intelligence. As a result, all DL algorithms can be considered ML and AI. However, not all AI is ML.

Modern machine learning

In the early 2010s, the Google Brain team worked to make deep learning more accessible, which resulted in the creation of the popular TensorFlow framework. The team made headlines in 2012 when they created a model that could accurately classify an image as “cat or not cat.” Since then, AI has soared in popularity, largely due to the research and development of complex deep neural networks. Powerful graphics cards and server clusters could be employed to speed up the training and inference processes required for deep learning.

Machine learning is now applied to a wide range of tasks, including:

- Image and video labeling

- Speech recognition and synthesis

- Language translation

- Product and content recommendations

- Email spam filtering

- Credit card fraud detection

- Market and customer segmentation

- Stock market trading

The Internet of Things

The Internet of Things (IoT) is the collection of sensors, hardware devices, and software that exchange information with other devices and computers across communication networks. We often think of IoT as a series of sensors with Wi-Fi or Bluetooth connectivity that can relay information about the environment to us.

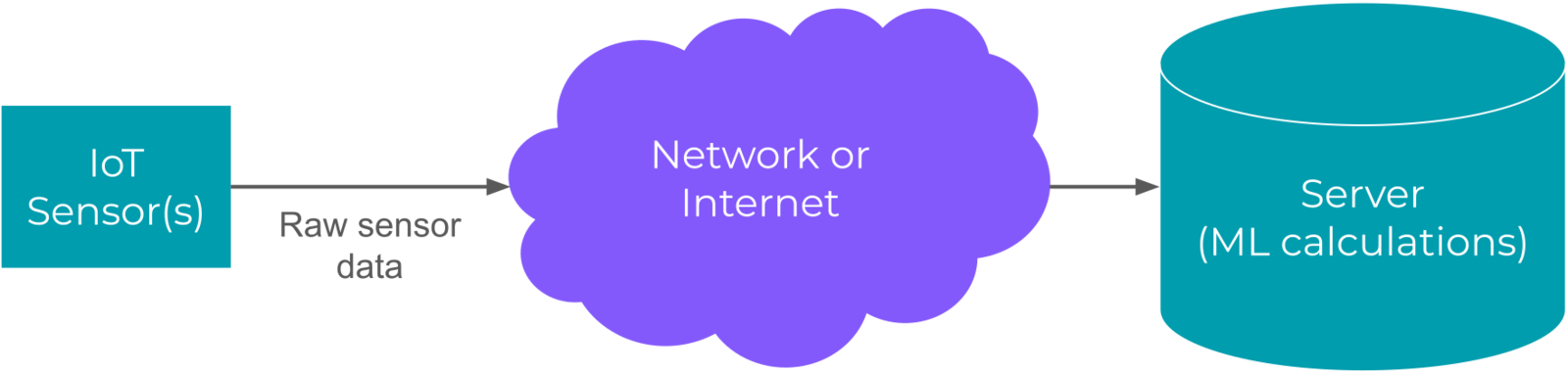

Sending raw sensor data to a remote server for machine learning has several drawbacks:

- Transmitting large sensor data, such as images, may hog network bandwidth

- Transmitting data also requires power

- The sensors require a constant connection to the server to provide near-real-time ML computations
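The bandwidth concern in the list above can be made concrete with some rough arithmetic. The following is a minimal sketch, not a measurement: the frame size, capture rate, and result size are all assumed values chosen for illustration, and protocol overhead is ignored. It compares streaming raw camera frames to a server against sending only a small per-frame result.

```python
# Back-of-the-envelope comparison: streaming raw frames vs. sending only a result.
# All numbers below are illustrative assumptions, not measurements.

FRAME_WIDTH = 96          # pixels (assumed)
FRAME_HEIGHT = 96         # pixels (assumed)
BYTES_PER_PIXEL = 1       # 8-bit grayscale (assumed)
FRAMES_PER_SECOND = 10    # assumed capture rate
RESULT_BYTES = 2          # assumed: 1-byte class ID + 1-byte confidence


def bytes_per_second(payload_bytes: int, rate_hz: float) -> float:
    """Payload throughput in bytes per second, ignoring protocol overhead."""
    return payload_bytes * rate_hz


raw_frame_bytes = FRAME_WIDTH * FRAME_HEIGHT * BYTES_PER_PIXEL
raw_rate = bytes_per_second(raw_frame_bytes, FRAMES_PER_SECOND)
result_rate = bytes_per_second(RESULT_BYTES, FRAMES_PER_SECOND)

print(f"Raw frames:        {raw_rate:,.0f} B/s")
print(f"Per-frame results: {result_rate:,.0f} B/s")
print(f"Reduction factor:  {raw_rate / result_rate:,.0f}x")
```

Under these assumptions, the raw stream is thousands of times larger than the result stream. Real systems will differ depending on compression, sensor type, and how often results actually need to be reported.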

Edge and embedded machine learning

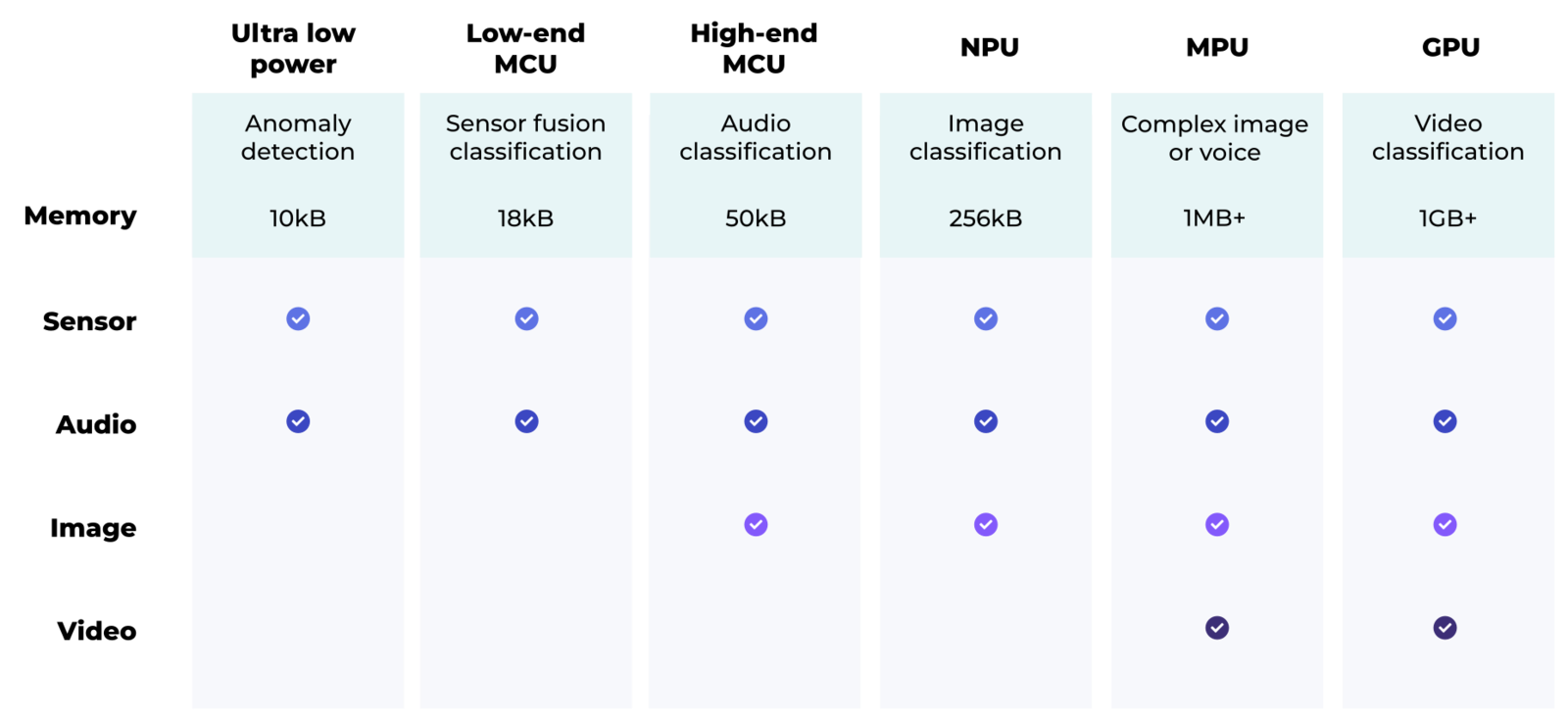

Advances in hardware and machine learning have paved the way for running deep ML models efficiently on edge devices. Complex tasks, such as object detection, natural language processing, and model training, still require powerful computers. In these cases, raw data is often collected and sent to a server for processing. However, performing ML on low-power devices offers a variety of benefits:

- Less network bandwidth is spent on transmitting raw data

- While some information may need to be transmitted over a network (e.g. inference results), less communication often means reduced power usage

- Prediction results are available immediately without the need to send them across a network

- Inference can be performed without a connection to a network

- User privacy is better protected, as data only needs to be stored long enough to perform inference (not including data collected for model training)

- Model: the mathematical formula that attempts to generalize information from a given set of data.

- Training: the process of automatically updating the parameters in a model from data. The model “learns” to draw conclusions and make generalizations about the data.

- Inference: the process of providing new, unseen data to a trained model to make a prediction, decision, or classification about the new data.
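The three terms above can be illustrated with a toy example. This is a minimal sketch in plain Python, assuming a one-dimensional linear model fitted by gradient descent on made-up data; the function names, learning rate, and data points are hypothetical and chosen only to keep the example small. Real edge ML models are usually far larger and are typically trained on a more powerful machine before being deployed to the device.

```python
# Toy illustration of "model", "training", and "inference" using a 1-D linear fit.
# The data, names, and hyperparameters here are made up for illustration.

def model(x: float, weight: float, bias: float) -> float:
    """Model: a formula with adjustable parameters (weight and bias)."""
    return weight * x + bias


def train(xs, ys, learning_rate=0.05, epochs=2000):
    """Training: repeatedly nudge the parameters to reduce mean squared error."""
    weight, bias = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to each parameter.
        grad_w = sum(2 * (model(x, weight, bias) - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (model(x, weight, bias) - y) for x, y in zip(xs, ys)) / n
        weight -= learning_rate * grad_w
        bias -= learning_rate * grad_b
    return weight, bias


# Made-up training data that roughly follows y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.1]

weight, bias = train(xs, ys)

# Inference: apply the trained model to an input it has not seen before.
prediction = model(5.0, weight, bias)
print(f"weight={weight:.2f}, bias={bias:.2f}, prediction for x=5: {prediction:.2f}")
```

The learned weight and bias should land close to 2 and 1, the values used to generate the data. On an edge device, only the inference step (evaluating `model` with fixed parameters) would normally need to run.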

Edge ML use cases

The ability to run machine learning on edge devices without the need to maintain a connection to a more powerful computer allows for a variety of automation tools and smarter IoT systems. Here are a few examples where edge ML is enabling innovation in various industries.

- Automatically identifying irrigation requirements

- ML-powered robots for recording crop trials

- Smart HVAC systems that can adapt to the number of people in a room

- Security sensors that listen for the unique sound signature of glass breaking

- Smart grid monitoring that looks for early faults in power lines

- Wildlife tracking

- Portable medical devices that can identify diseases from images

- Digital Health Solution Guide

- Keyword spotting and wake word detection to control household appliances

- Gesture control as assistive technology

- Safety systems that automatically detect the presence of hard hats

- Predictive maintenance that identifies faults in machinery before larger problems arise