The Nicla Sense ME is a tiny, low-power tool that sets a new standard for intelligent sensing solutions. With the simplicity of integration and scalability of the Arduino ecosystem, the board combines four state-of-the-art sensors from Bosch Sensortec:

- BHI260AP motion sensor system with integrated AI.

- BMM150 magnetometer.

- BMP390 pressure sensor.

- BME688 4-in-1 gas sensor with AI and integrated high-linearity, high-accuracy pressure, humidity and temperature sensors.

Designed to analyze motion and the surrounding environment (hence the “M” and “E” in the name), it measures rotation, acceleration, pressure, humidity, temperature, air quality and CO2 levels using Bosch Sensortec sensors that are new to the market.

Its tiny size and robust design make it suitable for projects that combine sensor fusion and AI capabilities on the edge. The combination of strong computational power and low power consumption also allows standalone, battery-operated applications.

The Arduino Nicla Sense ME is available on the Arduino Store.

Installing dependencies

To set this device up in Edge Impulse, you will need to install the following software:

- Edge Impulse CLI.

- Arduino CLI.

- On Linux: GNU Screen. Install it, for example, via sudo apt install screen.

Connecting to Edge Impulse

With all the software in place it’s time to connect the development board to Edge Impulse.

1. Connect the development board to your computer

Use a micro-USB cable to connect the development board to your computer.

2. Update the firmware

The development board does not come with the right firmware yet. To update the firmware:

- Download the latest Edge Impulse ingestion sketch.

- Open the nicla_sense_ingestion.ino sketch in a text editor or the Arduino IDE.

- For data ingestion into your Edge Impulse project, select one or more sensors at the top of the file by uncommenting the corresponding defines, and set the desired sample frequency (in Hz). For example, for the environmental sensors:

/**

* @brief Sample & upload data to Edge Impulse Studio.

* @details Select 1 or multiple sensors by un-commenting the defines and select

* a desired sample frequency. When this sketch runs, you can see raw sample

* values outputted over the serial line. Now connect to the studio using the

* `edge-impulse-data-forwarder` and start capturing data

*/

// #define SAMPLE_ACCELEROMETER

// #define SAMPLE_GYROSCOPE

// #define SAMPLE_ORIENTATION

#define SAMPLE_ENVIRONMENTAL

// #define SAMPLE_ROTATION_VECTOR

/**

* Configure the sample frequency. This is the frequency used to send the data

* to the studio regardless of the frequency used to sample the data from the

* sensor. This differs per sensors, and can be modified in the API of the sensor

*/

#define FREQUENCY_HZ 10

- Then, from your sketch’s directory, run the Arduino CLI to compile:

arduino-cli compile --fqbn arduino:mbed_nicla:nicla_sense --output-dir . --verbose

- Then flash to your Nicla Sense using the Arduino CLI:

arduino-cli upload --fqbn arduino:mbed_nicla:nicla_sense --input-dir . --verbose

- Wait until flashing is complete, and press the RESET button once to launch the new firmware.

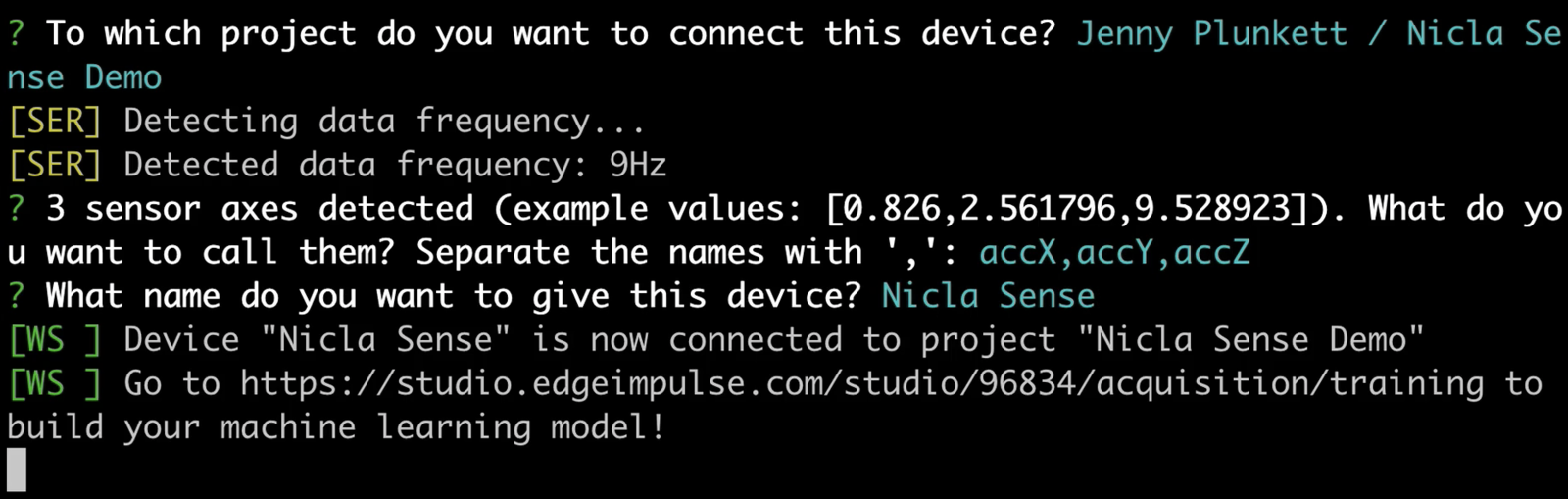
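As a rough illustration of what FREQUENCY_HZ controls, the following host-side C++ sketch derives the delay between two samples and formats one reading as a comma-separated line, which is the shape the data forwarder reads over serial. The helper names interval_ms and format_sample_line are hypothetical and are not taken from the ingestion sketch; the two-decimal formatting is likewise an assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdio>
#include <string>
#include <vector>

// Hypothetical helper: milliseconds between two samples for a given rate.
// `frequency_hz` plays the role of FREQUENCY_HZ in the sketch.
inline unsigned long interval_ms(unsigned int frequency_hz) {
    assert(frequency_hz > 0);  // a rate of 0 Hz has no defined interval
    return 1000UL / frequency_hz;
}

// Hypothetical helper: format one reading as a single comma-separated line.
// The caller is expected to terminate the line with a newline when printing.
inline std::string format_sample_line(const std::vector<float>& values) {
    std::string line;
    char buf[32];
    for (std::size_t i = 0; i < values.size(); ++i) {
        // Two decimals is an arbitrary choice made for this illustration.
        std::snprintf(buf, sizeof(buf), "%.2f", values[i]);
        if (i > 0) {
            line += ',';
        }
        line += buf;
    }
    return line;
}
```

With FREQUENCY_HZ set to 10, interval_ms(10) gives 100 ms between lines, and an environmental reading would be sent as four comma-separated values on one line.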

3. Data forwarder

From a command prompt or terminal, run:

edge-impulse-data-forwarder

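This starts a wizard that asks you to log in and choose an Edge Impulse project. You will also name your sensor’s axes (depending on which sensor you selected in your compiled nicla_sense_ingestion.ino sketch). If you want to switch projects or sensors, run the command with --clean. Refer to the table below for the axis names used for each type of sensor: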

| Sensor | Axis names |
|---|---|
| #define SAMPLE_ACCELEROMETER | accX, accY, accZ |
| #define SAMPLE_GYROSCOPE | gyrX, gyrY, gyrZ |
| #define SAMPLE_ORIENTATION | heading, pitch, roll |
| #define SAMPLE_ENVIRONMENTAL | temperature, barometer, humidity, gas |
| #define SAMPLE_ROTATION_VECTOR | rotX, rotY, rotZ, rotW |
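The table can also be read as a lookup from enabled defines to the comma-separated axis list that is typed into the data forwarder wizard. The sketch below is a minimal host-side illustration: the SensorAxes type and the axis_names_for helper are hypothetical, and it assumes the values are sent in the same order as the table rows, which should be checked against the serial output of your sketch.

```cpp
#include <cassert>
#include <string>
#include <vector>

// One row of the table: a sensor define and the axis names it produces.
struct SensorAxes {
    const char* define_name;
    std::vector<std::string> axes;
};

// Axis names copied from the table above.
inline const std::vector<SensorAxes>& sensor_table() {
    static const std::vector<SensorAxes> table = {
        {"SAMPLE_ACCELEROMETER", {"accX", "accY", "accZ"}},
        {"SAMPLE_GYROSCOPE", {"gyrX", "gyrY", "gyrZ"}},
        {"SAMPLE_ORIENTATION", {"heading", "pitch", "roll"}},
        {"SAMPLE_ENVIRONMENTAL", {"temperature", "barometer", "humidity", "gas"}},
        {"SAMPLE_ROTATION_VECTOR", {"rotX", "rotY", "rotZ", "rotW"}},
    };
    return table;
}

// Hypothetical helper: join the axis names of every enabled sensor with
// commas, walking the table in row order (an assumed ordering).
inline std::string axis_names_for(const std::vector<std::string>& enabled_defines) {
    std::string out;
    for (const auto& row : sensor_table()) {
        assert(!row.axes.empty());  // every sensor in the table has axes
        bool enabled = false;
        for (const auto& name : enabled_defines) {
            if (name == row.define_name) {
                enabled = true;
                break;
            }
        }
        if (!enabled) {
            continue;
        }
        for (const auto& axis : row.axes) {
            if (!out.empty()) {
                out += ",";
            }
            out += axis;
        }
    }
    return out;
}
```

For a sketch compiled with only SAMPLE_ENVIRONMENTAL enabled, the helper yields temperature,barometer,humidity,gas.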

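Note: the Nicla Sense example applications in the Edge Impulse Arduino library deployment expect specific axis names. If the names do not match, running the deployed model reports an error like the following:

Starting inferencing in 2 seconds...

ERR: Nicla sensors don't match the sensors required in the model

Following sensors are required: accel.x + accel.y + accel.z + gyro.x + gyro.y + gyro.z + ori.heading + ori.pitch + ori.roll + rotation.x ...

If your axis names are different, modify the eiSensors nicla_sensors[] array (near line 70) in the sketch example to add your custom names, e.g.: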

eiSensors nicla_sensors[] =

{

"accel.x", &get_accX,

"accel.y", &get_accY,

"accel.z", &get_accZ,

"gyro.x", &get_gyrX,

"gyro.y", &get_gyrY,

"gyro.z", &get_gyrZ,

"ori.heading", &get_oriHeading,

"ori.pitch", &get_oriPitch,

"ori.roll", &get_oriRoll,

"rotation.x", &get_rotX,

"rotation.y", &get_rotY,

"rotation.z", &get_rotZ,

"rotation.w", &get_rotW,

"temperature", &get_temperature,

"barometer", &get_barrometric_pressure,

"humidity", &get_humidity,

"gas", &get_gas,

};
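To see why mismatched names trigger the error, it can help to picture the check as splitting the required list on "+" and looking up each name in the sensor table. The following is a host-side sketch of that idea under stated assumptions, not the library's actual implementation: NamedSensor, split_axes and missing_axes are hypothetical names, and the getter signature is assumed.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for one entry of the sketch's sensor table.
struct NamedSensor {
    const char* name;
    float (*getter)();  // assumed signature, unused by the lookup itself
};

// Split a list such as "accel.x + accel.y" on '+' and trim the spaces
// around each name. Empty tokens are skipped.
inline std::vector<std::string> split_axes(const std::string& required) {
    std::vector<std::string> names;
    std::size_t start = 0;
    while (start <= required.size()) {
        std::size_t plus = required.find('+', start);
        if (plus == std::string::npos) {
            plus = required.size();
        }
        const std::string token = required.substr(start, plus - start);
        const std::size_t first = token.find_first_not_of(' ');
        const std::size_t last = token.find_last_not_of(' ');
        if (first != std::string::npos) {
            names.push_back(token.substr(first, last - first + 1));
        }
        start = plus + 1;
    }
    return names;
}

// Return every required name that has no entry in the table.
inline std::vector<std::string> missing_axes(const std::string& required,
                                             const std::vector<NamedSensor>& table) {
    std::vector<std::string> missing;
    for (const auto& name : split_axes(required)) {
        bool found = false;
        for (const auto& sensor : table) {
            assert(sensor.name != nullptr);  // table entries must be named
            if (name == sensor.name) {
                found = true;
                break;
            }
        }
        if (!found) {
            missing.push_back(name);
        }
    }
    return missing;
}
```

An empty result means every axis the model requires has a matching entry; any returned name is one that would need a custom entry in the table.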

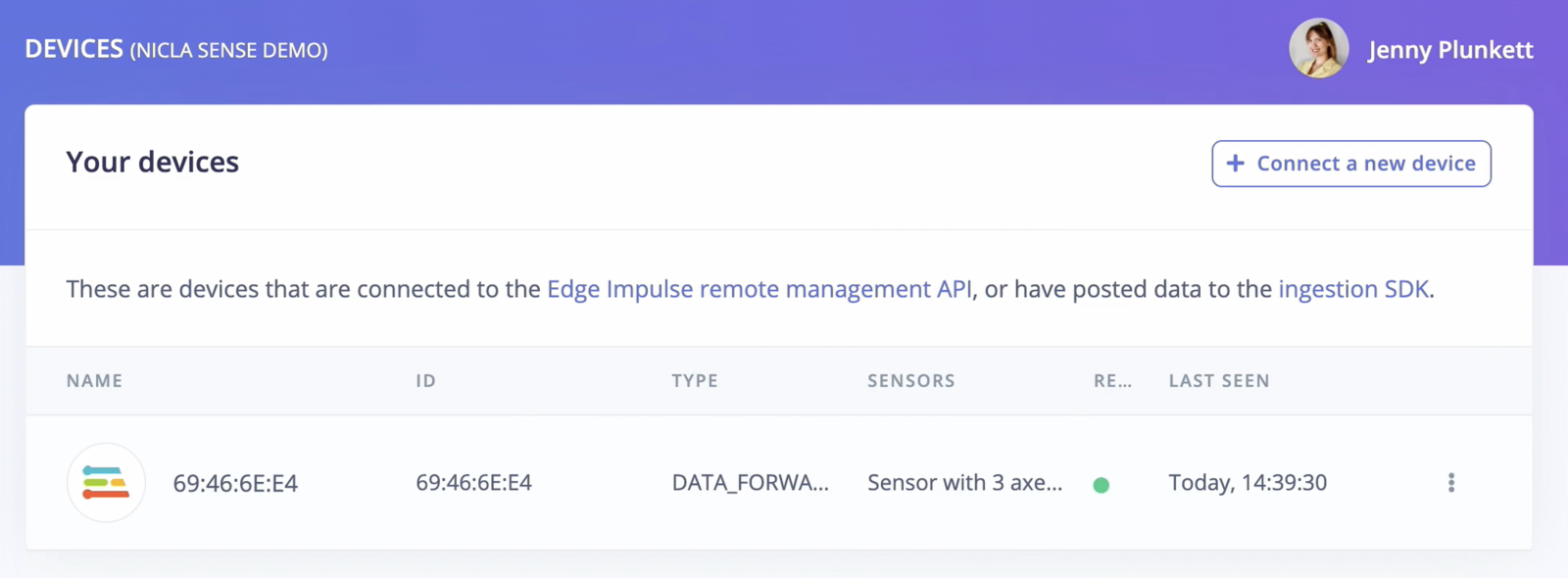

4. Verifying that the device is connected

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

With everything set up you can now build your first machine learning model with the Edge Impulse continuous motion recognition tutorial.

Looking to connect different sensors? Use the nicla_sense_ingestion sketch and the Edge Impulse Data forwarder to easily send data from any sensor on the Nicla Sense into your Edge Impulse project.

Deploying back to device

With the impulse designed, trained and verified, you can deploy this model back to your Arduino Nicla Sense ME. This lets the model run without an internet connection, minimizes latency, and keeps power consumption to a minimum. Edge Impulse can package the complete impulse - including the signal processing code, neural network weights, and classification code - into a single library that you can run on your development board.

Use the Run on Arduino tutorial and select one of the Nicla Sense examples.