Installing dependencies

To enable this device for Edge Impulse deployments, install the following dependencies on the Linux target that has an Akida PCIe board attached.

- Python 3.8: Required for deployments via the Edge Impulse CLI or the AKD1000 deployment block, because the generated binary relies on paths specific to the combination of Python 3.8 and Python Akida™ Library 2.3.3. If you instead intend to write your own code with the Python Akida™ Library or the Edge Impulse SDK via the BrainChip MetaTF Deployment Block option, you may use Python 3.7 to 3.10.

- Python Akida™ Library 2.3.3: A Python package for quick and easy model development, testing, simulation, and deployment for BrainChip devices.

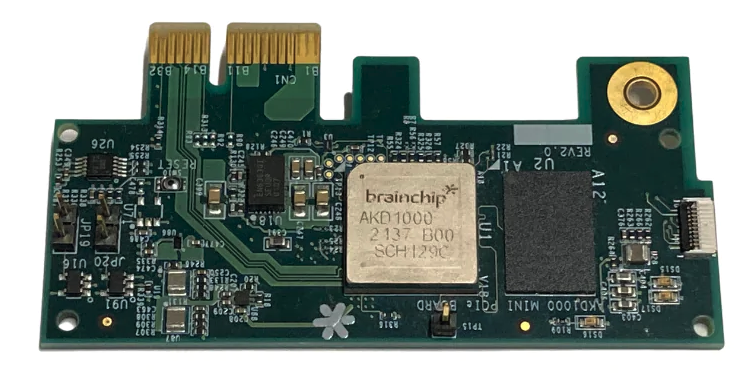

- Akida™ PCIe drivers: Building and installing these drivers lets your system communicate with the AKD1000 reference PCIe board.

- Edge Impulse Linux: This enables you to connect your development system directly to Edge Impulse Studio. For all BrainChip target systems, please follow the x86_64 Linux guide, as it is the most generic and applicable: https://docs.edgeimpulse.com/hardware/devices/linux-x86_64
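The version requirements above can be checked before attempting a deployment. The following is a minimal sketch, not an official tool: the function names are hypothetical, and it only compares the running interpreter and the installed akida package against the versions stated in this guide (Python 3.8 and Python Akida™ Library 2.3.3).

```python
import sys
from importlib import metadata  # part of the standard library since Python 3.8

# Versions taken from the dependency list in this guide.
REQUIRED_PYTHON = (3, 8)
REQUIRED_AKIDA = "2.3.3"


def python_ok(version_info=sys.version_info, required=REQUIRED_PYTHON) -> bool:
    """Return True when the major.minor version matches the required one."""
    return tuple(version_info[:2]) == required


def installed_version(package: str = "akida"):
    """Return the installed version string of a package, or None if absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def akida_ok(version, required=REQUIRED_AKIDA) -> bool:
    """Return True when the installed akida version equals the required one."""
    return version == required


if __name__ == "__main__":
    found = installed_version()
    print("Python 3.8 in use:", python_ok())
    print("akida version found:", found)
    print("akida 2.3.3 installed:", akida_ok(found))
```

If you are writing your own code against the MetaTF deployment rather than using the CLI or AKD1000 block, the Python check is less strict, since Python 3.7 to 3.10 is acceptable in that case.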

Connecting to Edge Impulse

With all software set up, connect your camera or microphone to your system and run the edge-impulse-linux command. To switch projects, run the command again with --clean.

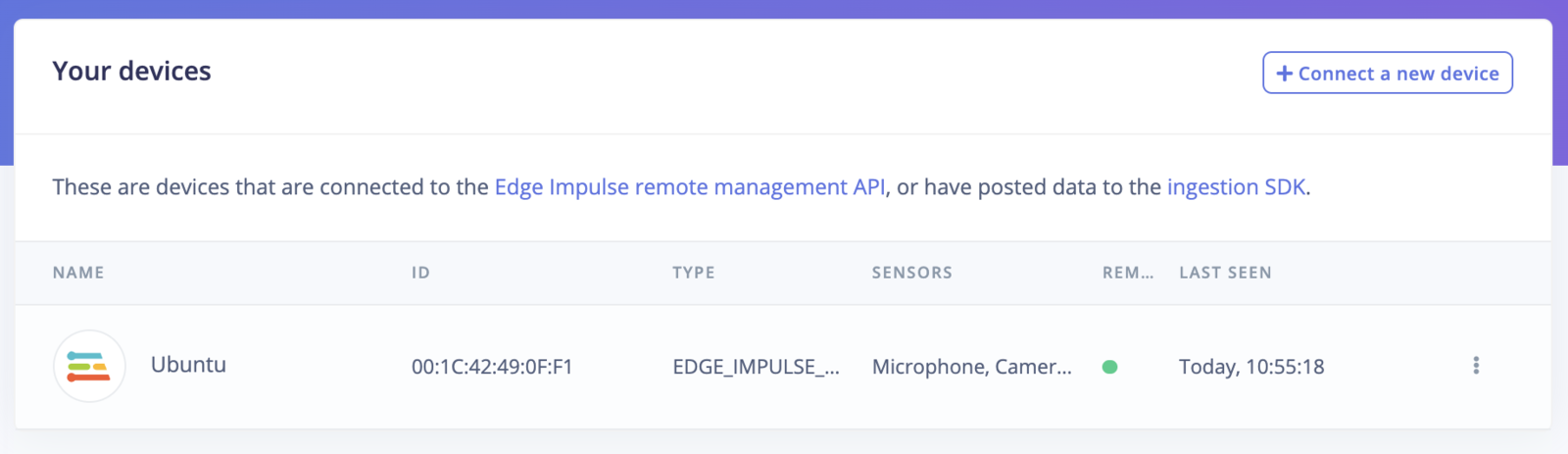

Verifying that your device is connected

That’s all! Your machine is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

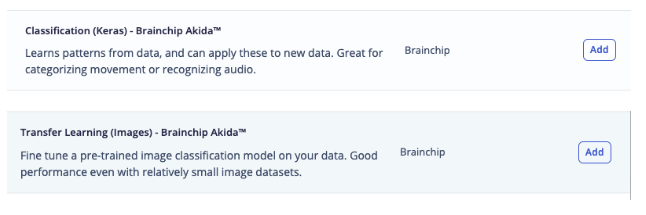

With everything set up you can now build your first machine learning model by following the Edge Impulse tutorials.

Design an Impulse with BrainChip Akida™ Learning Blocks

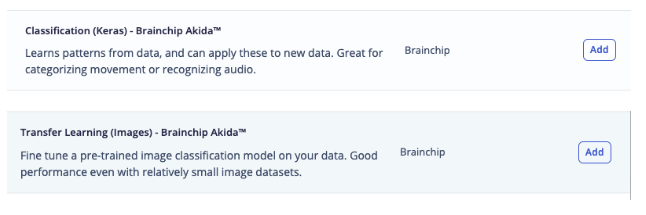

After adding data via Data acquisition and starting an Impulse Design, you can add a BrainChip Akida™ Learning Block. The types of Learning Blocks visible depend on the type of data collected. Using BrainChip Akida™ Learning Blocks ensures that models generated for deployment are compatible with BrainChip Akida™ devices.

Training a BrainChip Akida™ Compatible Model

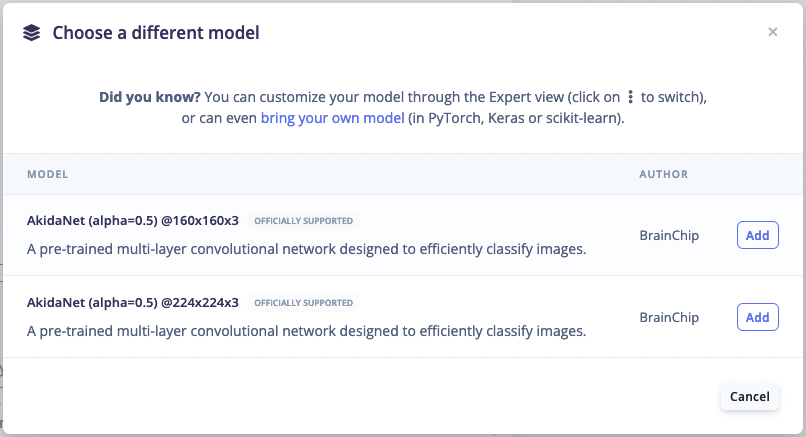

In the Learning Block of the Impulse Design you can compare the Float, Quantized, and Akida™ versions of a model. If you added a Processing Block to your Impulse Design, you will need to generate features before you can train your model. If the project uses a transfer learning block, you may be able to select a base model from BrainChip’s Model Zoo to transfer learn from. More models will be available in the future, but if you have a specific request please let us know via the Edge Impulse forums.

Deploying back to device

To achieve full hardware acceleration, models must be converted from their original format to run on an AKD1000. To do this, select the BrainChip MetaTF Block from the Deployment screen. This generates a .zip file with models that can be used in your application for the AKD1000. The block uses the CNN2SNN toolkit to convert quantized models to SNN models compatible with the AKD1000. You can then develop an application using the Akida™ Python package that calls the Akida™ formatted model found inside the .zip file.

BrainChip MetaTF Deployment Block

AKD1000 Deployment Block

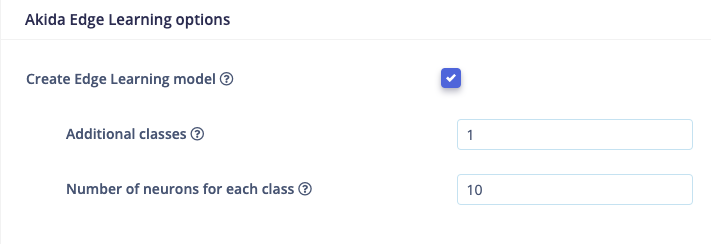
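As an illustration of using the MetaTF deployment output, the sketch below looks for an Akida™ model inside the downloaded .zip file and loads it with the Akida™ Python package. It rests on several assumptions: that the model file uses the .fbz extension, and that akida.Model, akida.devices, and Model.map behave as described in BrainChip's MetaTF documentation for your installed version. The helper names are hypothetical and not part of any Edge Impulse or BrainChip API.

```python
import zipfile
from pathlib import Path
from typing import List


def find_models(zip_path, suffix: str = ".fbz") -> List[str]:
    """List archive members whose name ends with the assumed model suffix."""
    with zipfile.ZipFile(zip_path) as archive:
        return [n for n in archive.namelist() if n.lower().endswith(suffix)]


def extract_model(zip_path, member: str, dest_dir) -> Path:
    """Extract one archive member and return the path of the extracted file."""
    with zipfile.ZipFile(zip_path) as archive:
        return Path(archive.extract(member, path=dest_dir))


def load_on_device(model_path):
    """Load the model and map it onto the first Akida device, if one is found.

    Assumption: the akida calls below match your installed library version.
    """
    import akida  # imported lazily so the helpers above work without akida

    model = akida.Model(str(model_path))
    devices = akida.devices()
    if devices:
        model.map(devices[0])  # run on hardware instead of software simulation
    return model
```

A typical flow would call find_models on the downloaded archive, pass the first result to extract_model, and then hand the extracted path to load_on_device on the machine that has the AKD1000 attached.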

Akida™ Edge Learning

The AKD1000 has a unique ability to conduct training on the edge device. This means that new classes can be added to a model, or can completely replace its existing classes. A model must be specifically configured and compiled with MetaTF to access this ability. To enable the Edge Learning features in Edge Impulse Studio, please follow these steps:

- Select a BrainChip Akida™ Learning Block in your Impulse design

- In the Impulse design of the learning block, enable Create Edge Learning model under Akida Edge Learning options

- Set the Additional classes and Number of neurons for each class and train the model. For more information about these parameters please visit BrainChip’s documentation of the parameters. Note that Edge Learning compatible models require a specific setup for the feature extractor and classification head of the model. You can view how a model is configured by switching to Keras (expert) mode in the Neural Network settings and searching for “Feature Extractor” and “Build edge learning compatible model” comments in the Keras code.

Public projects using Akida™ learning blocks

We have multiple projects that are available to clone immediately to quickly train and deploy models for the AKD1000.

- FOMO project using BrainChip MetaTF and Akidanet models

- Image Classification project using BrainChip MetaTF and Akidanet models

- Image Classification - Deck of Cards - BrainChip Akida - Edge Learning

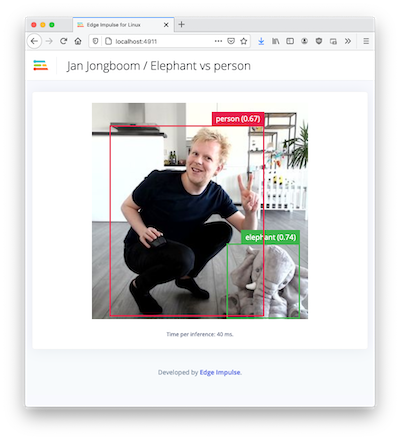

Image model?

If you have an image model, you can see what your device sees: make sure your computer is on the same network as the device, and find the ‘Want to see a feed of the camera and live classification in your browser’ message in the console. Open the URL in a browser to see both the camera feed and the classification.

Troubleshooting

Error: Classifying failed, error code was -23 (missing Python akida library)

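This error mainly relates to initialization of the Akida™ NSoC and model, and can be caused by a missing Akida™ Python library. Check whether the library is installed by running pip show akida.

If the library is missing (pip reports WARNING: Package(s) not found: akida), install it with pip, using the version listed under Installing dependencies.

If the library is installed, run edge-impulse-linux-runner with its debug option and check that the Location directory reported by pip show akida is listed in the sys.path output. If it is not (this usually happens when you are using Python virtual environments), export PYTHONPATH so that it includes that directory.

Then run edge-impulse-linux-runner once again.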
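The sys.path check can also be done from Python itself. The sketch below is one possible way to do it, under the assumption that the directory reported by importlib.metadata corresponds to the Location shown by pip show akida; the function names are hypothetical. Run it with the same interpreter that the runner uses.

```python
import sys
from importlib import metadata
from pathlib import Path


def package_location(package: str = "akida"):
    """Return the directory a package is installed in, or None if absent."""
    try:
        dist = metadata.distribution(package)
    except metadata.PackageNotFoundError:
        return None
    # locate_file("") resolves to the directory holding the distribution.
    return Path(dist.locate_file("")).resolve()


def on_sys_path(location, search_path=None) -> bool:
    """Return True when location is one of the module search path entries."""
    if search_path is None:
        search_path = sys.path
    target = Path(location).resolve()
    # Empty entries (the current directory) are skipped in this sketch.
    return any(Path(entry).resolve() == target for entry in search_path if entry)


if __name__ == "__main__":
    location = package_location()
    if location is None:
        print("akida is not installed for this interpreter")
    elif on_sys_path(location):
        print(f"{location} is on sys.path")
    else:
        print(f"{location} is missing from sys.path; add it to PYTHONPATH")
```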

Error: Classifying failed, error code was -23 (other issues)

If the previous step didn’t help, try to get additional debug data. With your EIM model downloaded, open one terminal window and do:

Failed to run impulse Capture process failed with code 1

This error could mean that your camera is in use by another process. Check that no open application is using the camera. This error can also occur when a previous attempt to run edge-impulse-linux-runner failed with an exception. In that case, check whether a gst-launch-1.0 process is still running. Note its process ID (for example, 5615), kill the process, and then run edge-impulse-linux-runner once again.
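Finding a leftover gst-launch-1.0 process can be scripted. The sketch below is one way to do it, assuming a Linux system where the ps command is available; the function names are hypothetical. It parses the output of ps -eo pid,comm and returns the matching process IDs.

```python
import subprocess
from typing import List

PROCESS_NAME = "gst-launch-1.0"


def parse_pids(ps_output: str, name: str = PROCESS_NAME) -> List[int]:
    """Return PIDs from `ps -eo pid,comm` style output whose command is name."""
    pids = []
    for line in ps_output.splitlines():
        parts = line.split(None, 1)
        if len(parts) != 2 or not parts[0].isdigit():
            continue  # skip the header row and malformed lines
        pid, command = parts
        if command.strip() == name:
            pids.append(int(pid))
    return pids


def running_pids(name: str = PROCESS_NAME) -> List[int]:
    """Ask ps for all processes and return the PIDs that match name."""
    result = subprocess.run(
        ["ps", "-eo", "pid,comm"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_pids(result.stdout, name)
```

Passing each PID returned by running_pids() to os.kill with signal.SIGTERM would mirror the manual kill step; confirm first that the process really is a stale capture pipeline and not one you still need.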