You can use your Linux x86_64 device or computer as a fully supported development environment for Edge Impulse for Linux. This lets you sample raw data, build models, and deploy trained machine learning models directly from the Studio. If you have a webcam and microphone plugged into your system, they are automatically detected and can be used to build models.

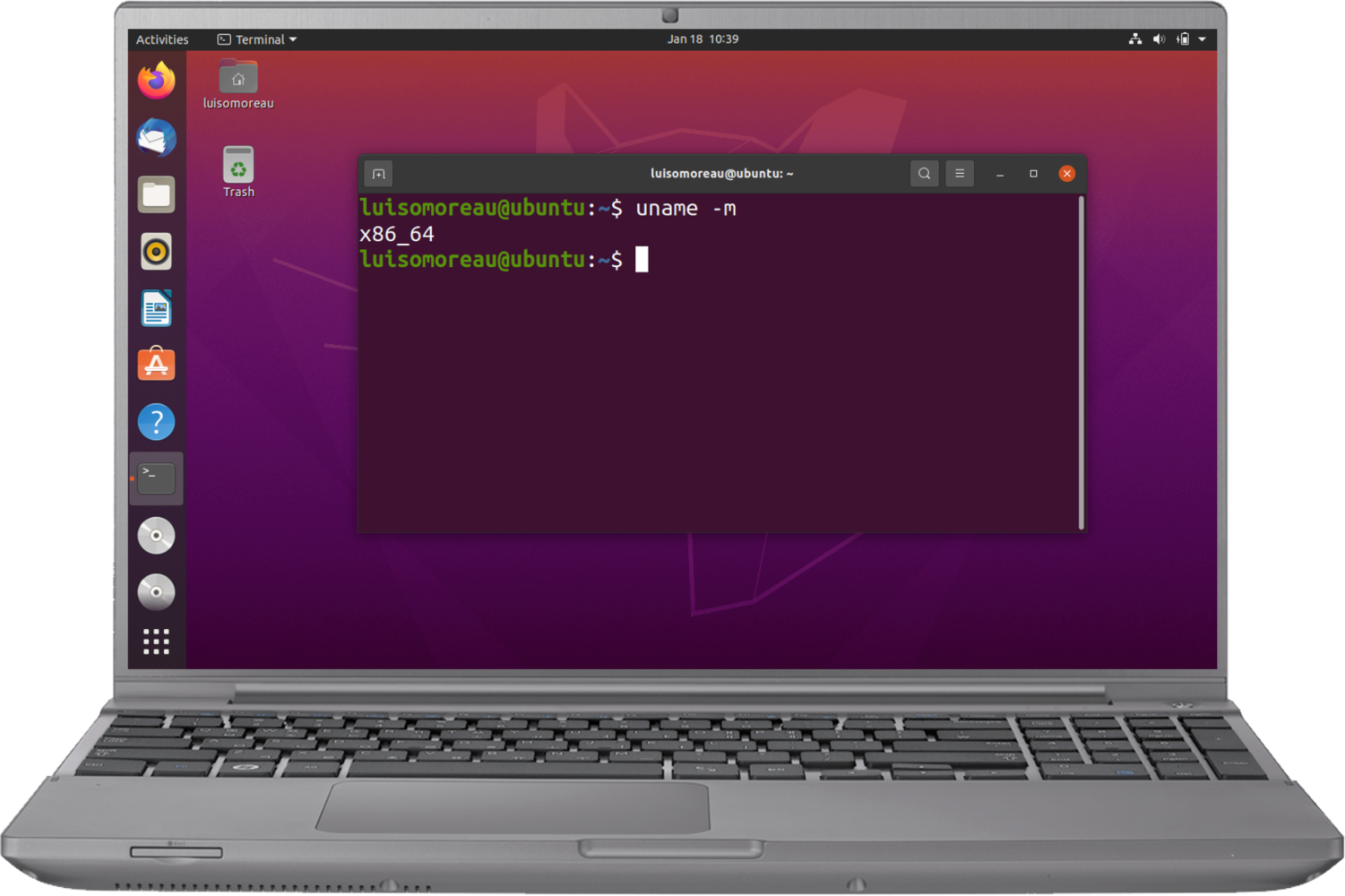

Instruction set architectures

If you are not sure about your machine's instruction set architecture, run `uname -m`. On a machine covered by this page it reports `x86_64`.
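The architecture check can also be scripted, for example at the top of a setup script. The following is a minimal sketch that compares the output of `uname -m` with the `x86_64` target this page covers; the variable name `arch` and the messages are illustrative and not part of any Edge Impulse tooling.

```shell
# Ask the kernel which machine architecture it is running on.
arch="$(uname -m)"
echo "Detected architecture: ${arch}"

# Compare against the x86_64 target described on this page.
if [ "${arch}" = "x86_64" ]; then
  echo "This machine matches the x86_64 target."
else
  echo "This machine is not x86_64; this page may not apply to it."
fi
```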

Installing dependencies

To set this device up in Edge Impulse, run the following commands:

Ubuntu/Debian:

Connecting to Edge Impulse

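With all software set up, connect your camera or microphone to your operating system (see ‘Next steps’ further on this page if you want to connect a different sensor), and run `edge-impulse-linux`. This starts a wizard that asks you to log in and choose an Edge Impulse project. If you want to switch projects, run the command with `--clean`.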
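If you script this step, a small wrapper can fail with a clear message when the CLI is missing instead of a bare "command not found". The sketch below assumes the CLI is installed on your `PATH` as `edge-impulse-linux`; the function name `ei_connect` is hypothetical and only used for illustration.

```shell
# Hypothetical helper: run the Edge Impulse Linux CLI and pass through any
# flags (for example --clean). Assumes the CLI is named edge-impulse-linux.
ei_connect() {
  if ! command -v edge-impulse-linux >/dev/null 2>&1; then
    echo "edge-impulse-linux not found on PATH; complete 'Installing dependencies' first" >&2
    return 1
  fi
  edge-impulse-linux "$@"
}
```

Call `ei_connect` to connect, or `ei_connect --clean` to switch to a different project.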

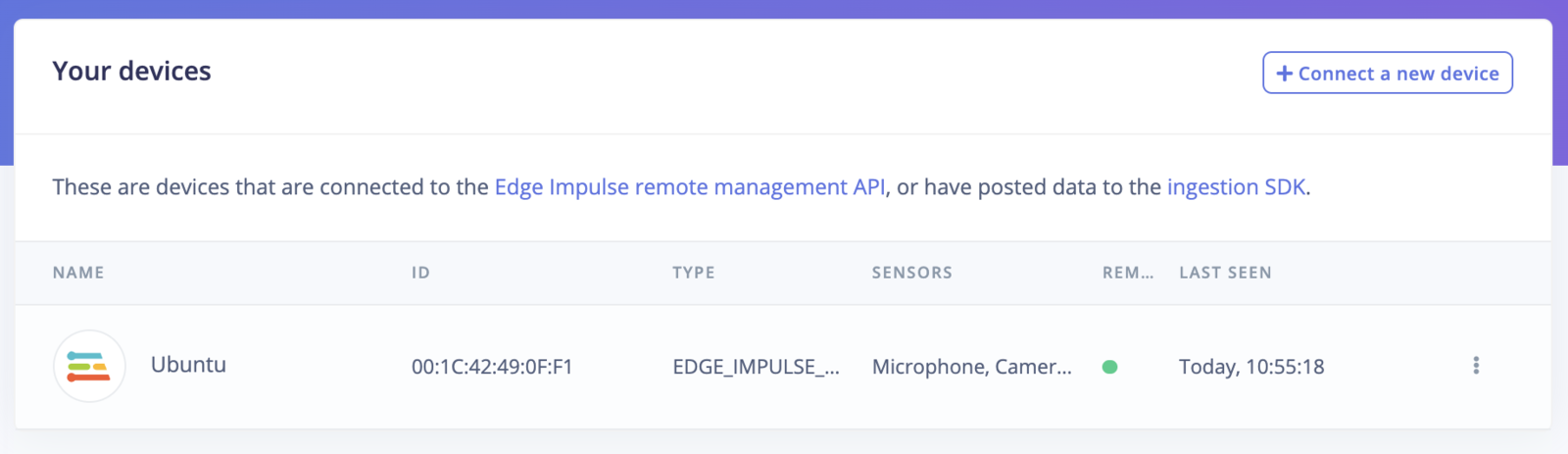

Verifying that your device is connected

That’s all! Your machine is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

- Keyword spotting

- Sound recognition

- Image classification

- Object detection

- Object detection with centroids (FOMO)

Looking to connect different sensors? Our Linux SDK lets you easily send data from any sensor and any programming language (with examples in Node.js, Python, Go and C++) into Edge Impulse.

Deploying back to device

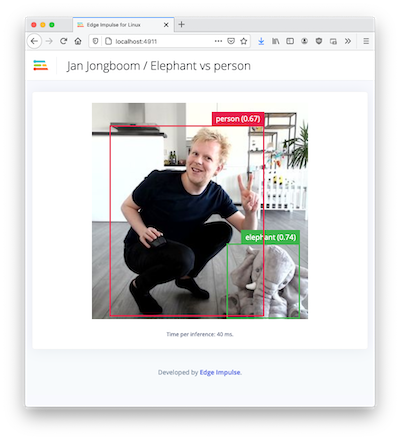

To run your impulse locally on your Linux platform, run `edge-impulse-linux-runner`.

Image model?

If you have an image model, you can get a peek at what your device sees. Make sure you are on the same network as your device, then look for the ‘Want to see a feed of the camera and live classification in your browser’ message in the console. Open the URL from that message in a browser to see both the camera feed and the classification results.
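When the console output scrolls quickly, it can help to save it to a file and search for that message afterwards. The following is a minimal sketch: the function name `find_feed_message` and the log file name `runner.log` are hypothetical, and it assumes the runner prints the message text exactly as quoted above.

```shell
# Hypothetical helper: print the console line(s) announcing the browser feed
# from a saved log file. Returns non-zero if the message is not present.
find_feed_message() {
  grep -F "Want to see a feed of the camera and live classification in your browser" "$1"
}

# Example usage (assumes the runner is installed as edge-impulse-linux-runner):
#   edge-impulse-linux-runner 2>&1 | tee runner.log    # in one terminal
#   find_feed_message runner.log                       # in another terminal
```

Matching on the fixed message text with `grep -F` avoids guessing at the format of the URL or any prefix the runner adds to the line.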