This feature is only available on the Enterprise plan. Review our plans and pricing or sign up for our free expert-led trial today.

Block structure

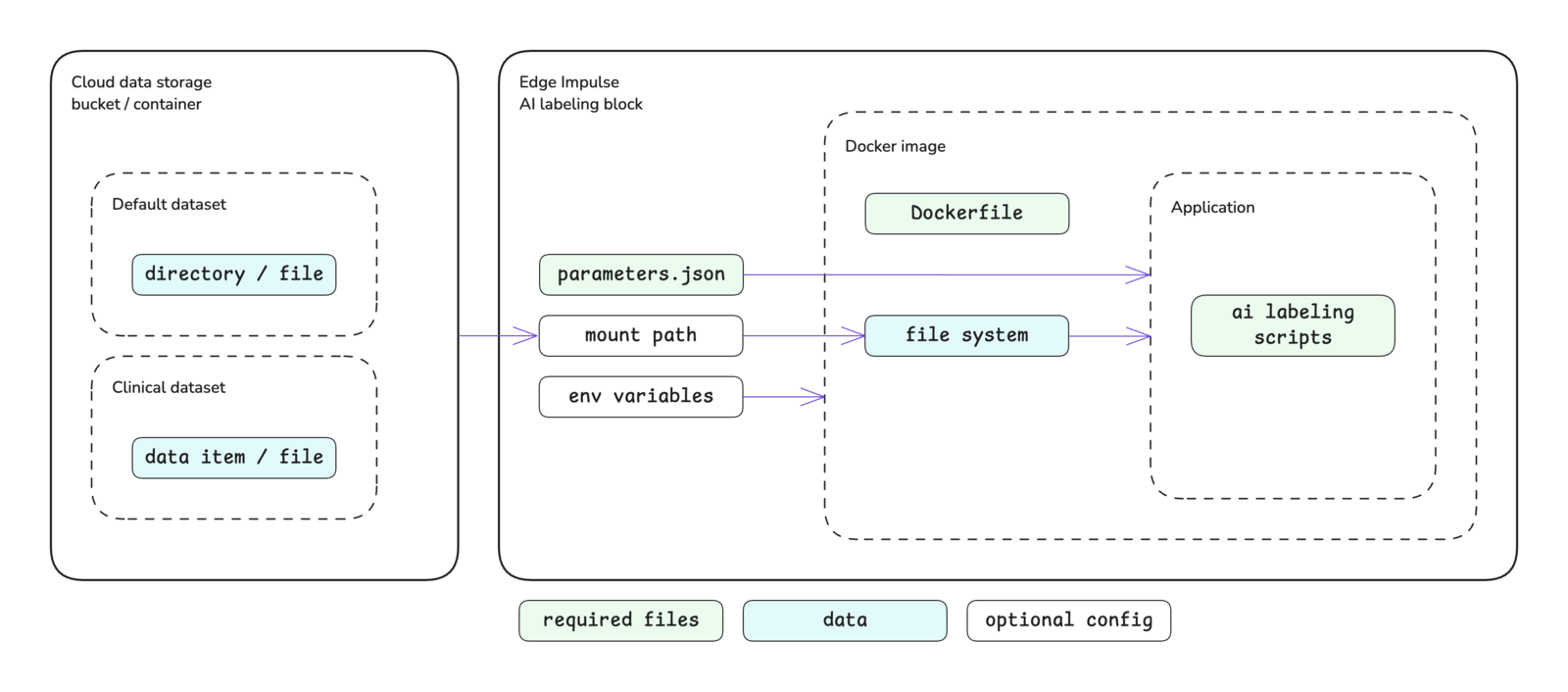

AI labeling blocks are an extension of transformation blocks operating in standalone mode. As such, they follow the same structure, and no directory or file is passed directly to your scripts. Please see the custom blocks overview page for more details.

Block interface

The sections below define the required and optional inputs and the expected outputs for custom AI labeling blocks.

Inputs

AI labeling blocks have access to environment variables, command line arguments, and mounted storage buckets.

Environment variables

The following environment variables are accessible inside AI labeling blocks. Environment variable values are always stored as strings.

| Variable | Passed | Description |
|---|---|---|
| EI_API_ENDPOINT | Always | The API base URL: https://studio.edgeimpulse.com/v1 |
| EI_API_KEY | Always | The organization API key with member privileges: ei_2f7f54... |
| EI_INGESTION_HOST | Always | The host for the ingestion API: edgeimpulse.com |
| EI_ORGANIZATION_ID | Always | The ID of the organization that the block belongs to: 123456 |
| EI_PROJECT_ID | Always | The ID of the project: 123456 |
| EI_PROJECT_API_KEY | Always | The project API key: ei_2a1b0e... |

Additional environment variables can be specified using the requiredEnvVariables key in the metadata section of the parameters.json file. You will then be prompted for the values of these keys when pushing the block to Edge Impulse using the CLI. Alternatively, these values can be added (or changed) by editing the block in Studio.
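As a rough illustration, the sketch below reads the always-passed variables from the table inside a Python block script. Because every value arrives as a string, it converts the organization and project IDs with `int()`, assuming they are numeric as in the table's examples. The `BlockEnv` class and `read_block_env` function are hypothetical names used only for this example; a variable you declare through requiredEnvVariables could be read the same way from `os.environ`.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

# Variables that Edge Impulse always passes to AI labeling blocks.
REQUIRED_VARS = (
    "EI_API_ENDPOINT",
    "EI_API_KEY",
    "EI_INGESTION_HOST",
    "EI_ORGANIZATION_ID",
    "EI_PROJECT_ID",
    "EI_PROJECT_API_KEY",
)


@dataclass(frozen=True)
class BlockEnv:
    """Hypothetical container for the always-passed environment variables."""
    api_endpoint: str
    api_key: str
    ingestion_host: str
    organization_id: int
    project_id: int
    project_api_key: str


def read_block_env(env: Optional[Mapping[str, str]] = None) -> BlockEnv:
    """Read the variables from `env`, defaulting to os.environ.

    All values arrive as strings, so the two IDs are converted with int(),
    assuming they are numeric as in the documented examples.
    """
    source = os.environ if env is None else env
    missing = [name for name in REQUIRED_VARS if name not in source]
    if missing:
        raise KeyError("missing environment variables: " + ", ".join(missing))
    return BlockEnv(
        api_endpoint=source["EI_API_ENDPOINT"],
        api_key=source["EI_API_KEY"],
        ingestion_host=source["EI_INGESTION_HOST"],
        organization_id=int(source["EI_ORGANIZATION_ID"]),
        project_id=int(source["EI_PROJECT_ID"]),
        project_api_key=source["EI_PROJECT_API_KEY"],
    )
```

Accepting an optional mapping instead of always reading `os.environ` keeps the function easy to exercise locally with a plain dictionary.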

Command line arguments

The parameter items defined in your parameters.json file are passed as command line arguments to the script defined as the ENTRYPOINT in your Dockerfile. Please refer to the parameters.json documentation for further details about creating this file, the available parameter options, and examples.

In addition to the items you define, some arguments are passed to your AI labeling block automatically.

Because AI labeling blocks are an extension of transformation blocks operating in standalone mode, the arguments that are automatically passed to transformation blocks in that mode are also passed to AI labeling blocks. Please refer to the custom transformation blocks documentation for further details on those arguments.

Along with the transformation block arguments, the following AI labeling specific arguments are passed as well.

| Argument | Passed | Description |
|---|---|---|
| `--data-ids-file <file>` | Always | Provides the file path for ids.json as a string. The ids.json file lists the data sample IDs to operate on as integers. See ids.json. |
| `--propose-actions <job-id>` | Conditional | Only passed when the user wants to preview label changes. If passed, label changes should be staged and not directly applied. Provides the job ID as an integer. See preview mode. |
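A minimal sketch of how a Python block script might read these two arguments with the standard `argparse` module. It uses `parse_known_args()` so that the transformation block arguments and your own parameters.json items do not cause parsing errors. `parse_block_args` and `load_sample_ids` are hypothetical helper names, and the sketch assumes ids.json contains a flat JSON array of integers such as `[1, 2, 3]`.

```python
import argparse
import json
from typing import List, Optional, Sequence


def parse_block_args(argv: Optional[Sequence[str]] = None) -> argparse.Namespace:
    """Parse the AI labeling arguments, tolerating any other arguments.

    When argv is None, argparse reads sys.argv[1:].
    """
    # allow_abbrev=False stops an unrelated argument such as --data-ids from
    # being silently matched to --data-ids-file as an abbreviation.
    parser = argparse.ArgumentParser(
        description="Custom AI labeling block", allow_abbrev=False
    )
    parser.add_argument("--data-ids-file", required=True,
                        help="path to ids.json with the sample IDs to process")
    parser.add_argument("--propose-actions", type=int, default=None,
                        help="job ID; when present, stage label changes instead of applying them")
    # Transformation block arguments and parameters.json items are also passed;
    # parse_known_args() returns them separately instead of raising an error.
    args, _unknown = parser.parse_known_args(argv)
    return args


def load_sample_ids(path: str) -> List[int]:
    """Read ids.json, assumed here to be a flat JSON array of integers."""
    with open(path, encoding="utf-8") as f:
        ids = json.load(f)
    if not isinstance(ids, list) or not all(isinstance(i, int) for i in ids):
        raise ValueError(f"{path} does not contain a JSON array of integers")
    return ids
```

Defaulting `--propose-actions` to `None` gives the rest of the script a single check, `args.propose_actions is not None`, for deciding whether it is running in preview mode.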

Mounted storage buckets

One or more cloud data storage buckets can be mounted inside your block. If storage buckets exist in your organization, you will be prompted to mount the bucket(s) when initializing the block with the Edge Impulse CLI. The default mount point will be:

Outputs

There are no required outputs from AI labeling blocks. In general, all changes are applied to data using API calls inside the block itself.

Running in preview mode

AI labeling blocks can run in “preview” mode, which is triggered when a user clicks Label preview data within an AI labeling action configuration. When a user is previewing label changes, the changes are staged rather than applied directly.

In preview mode, the `--propose-actions <job-id>` argument is passed to your block. When this argument is present, do not apply changes directly to the data samples (e.g. via raw_data_api.set_sample_bounding_boxes or raw_data_api.set_sample_structured_labels); instead, stage them with the raw_data_api.set_sample_proposed_changes API call.
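The preview-mode decision can be isolated in one function so that the rest of the block does not need to know which mode it is running in. In this sketch, `LabelWriter`, `write_labels`, and the `apply`/`propose` method names are hypothetical. In a real block they would wrap the raw_data_api calls named above, whose exact request types should be checked against the Edge Impulse API reference rather than taken from this example.

```python
from typing import Any, Optional, Protocol


class LabelWriter(Protocol):
    """Hypothetical interface over the Edge Impulse raw data API.

    apply() would wrap raw_data_api.set_sample_bounding_boxes (or
    set_sample_structured_labels); propose() would wrap
    raw_data_api.set_sample_proposed_changes. Their real request
    types are not modelled here.
    """

    def apply(self, sample_id: int, labels: Any) -> None: ...

    def propose(self, sample_id: int, labels: Any, job_id: int) -> None: ...


def write_labels(writer: LabelWriter, sample_id: int, labels: Any,
                 propose_job_id: Optional[int]) -> str:
    """Stage changes in preview mode, otherwise apply them directly.

    propose_job_id is the value of --propose-actions, or None when the
    argument was not passed.
    """
    if propose_job_id is not None:
        # Preview mode: stage the change so the user can review it first.
        writer.propose(sample_id, labels, propose_job_id)
        return "proposed"
    # Normal run: write the labels to the sample immediately.
    writer.apply(sample_id, labels)
    return "applied"
```

Comparing against `None` rather than testing truthiness keeps the check correct even if a job ID of `0` were ever passed.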

Initializing the block

When you are finished developing your block locally, you will want to initialize it. The procedure to initialize your block is described in the custom blocks overview page. Please refer to that documentation for details.

Testing the block locally

AI labeling blocks are not currently supported by the blocks runner in the Edge Impulse CLI. To test your custom AI labeling block, you will need to build the Docker image and run the container directly, passing any environment variables or command line arguments required by your script to the container when you run it.

Pushing the block to Edge Impulse

When you have initialized and finished testing your block locally, you will want to push it to Edge Impulse. The procedure to push your block to Edge Impulse is described in the custom blocks overview page. Please refer to that documentation for details.

Using the block in a project

After you have pushed your block to Edge Impulse, it can be used in the same way as any other built-in block.

Examples

Edge Impulse has developed several AI labeling blocks that are built into the platform. The code for these blocks is available in public repositories under the Edge Impulse GitHub account. The repository names typically follow the convention `ai-labeling-<description>`, so you can find them by searching the Edge Impulse account's repositories for ai-labeling.

Below are direct links to some examples:

- Bounding box labeling with OWL-ViT

- Bounding box re-labeling with GPT-4o

- Image labeling with GPT-4o

- Image labeling with pretrained models

- Audio labeling with Audio Spectrogram Transformer

Troubleshooting

No common issues have been identified thus far. If you encounter an issue, please reach out on the forum or, if you are on the Enterprise plan, through your support channels.