On November 18, 2024, we replaced the auto-labeler with a new AI-enabled labeling flow, which allows prompt-based labeling (and much more). See the AI labeling documentation page.

Our auto-labeling feature relies on the Segment Anything foundation model. It creates embeddings or segmentation maps for your image datasets, then clusters (or groups) these embeddings based on your settings. In the Studio, you can then associate a label with a cluster, and the auto-labeler automatically creates labeled bounding boxes around each of the objects present in that cluster.

We developed this feature to ease the labeling tasks in your object detection projects. Also, see our Label image data using GPT-4o tutorial to learn how to leverage LLMs to automatically label your data samples based on simple prompts.

Prerequisites

- Make sure your project belongs to an organization. See transfer ownership for more info.

- Make sure your project is configured as an object detection project. You can change the labeling method in your project’s dashboard. See Dashboard for more info.

- Add some images to your project, either by collecting data or by uploading existing datasets. See Data acquisition for more info.

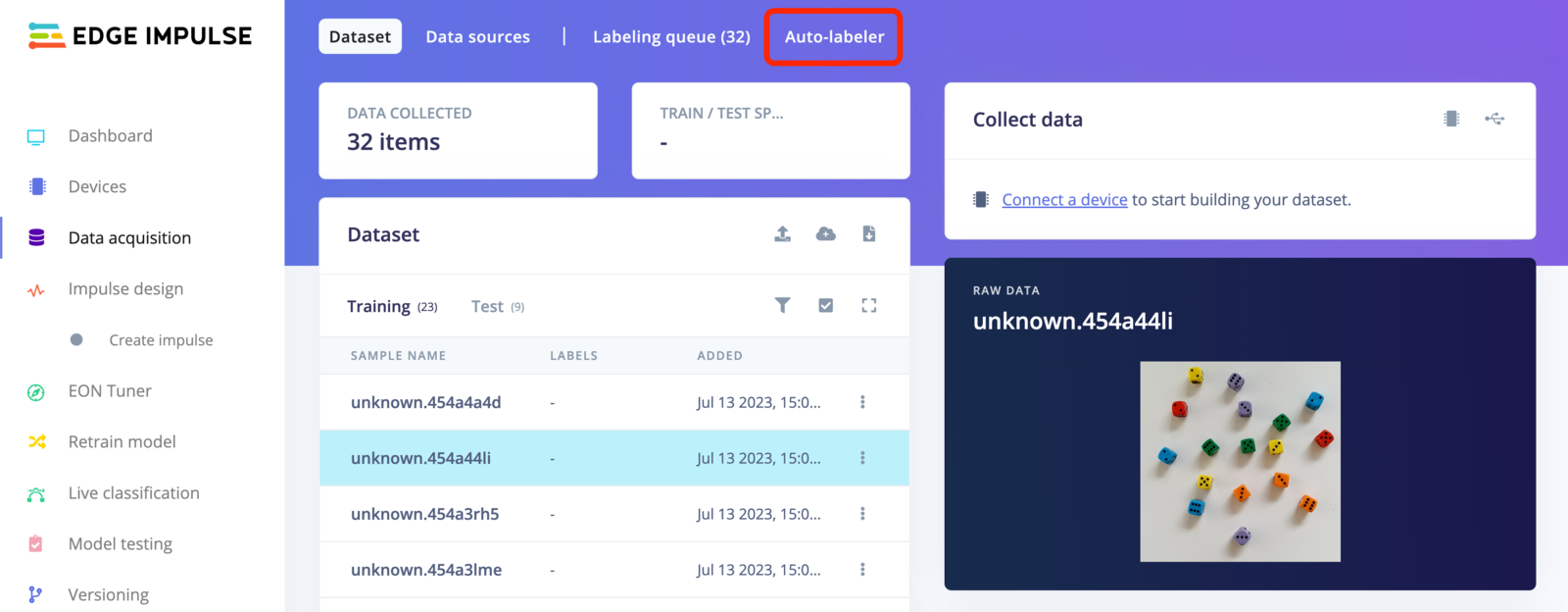

- You should now be able to see the Auto-labeler tab in your Data acquisition view:

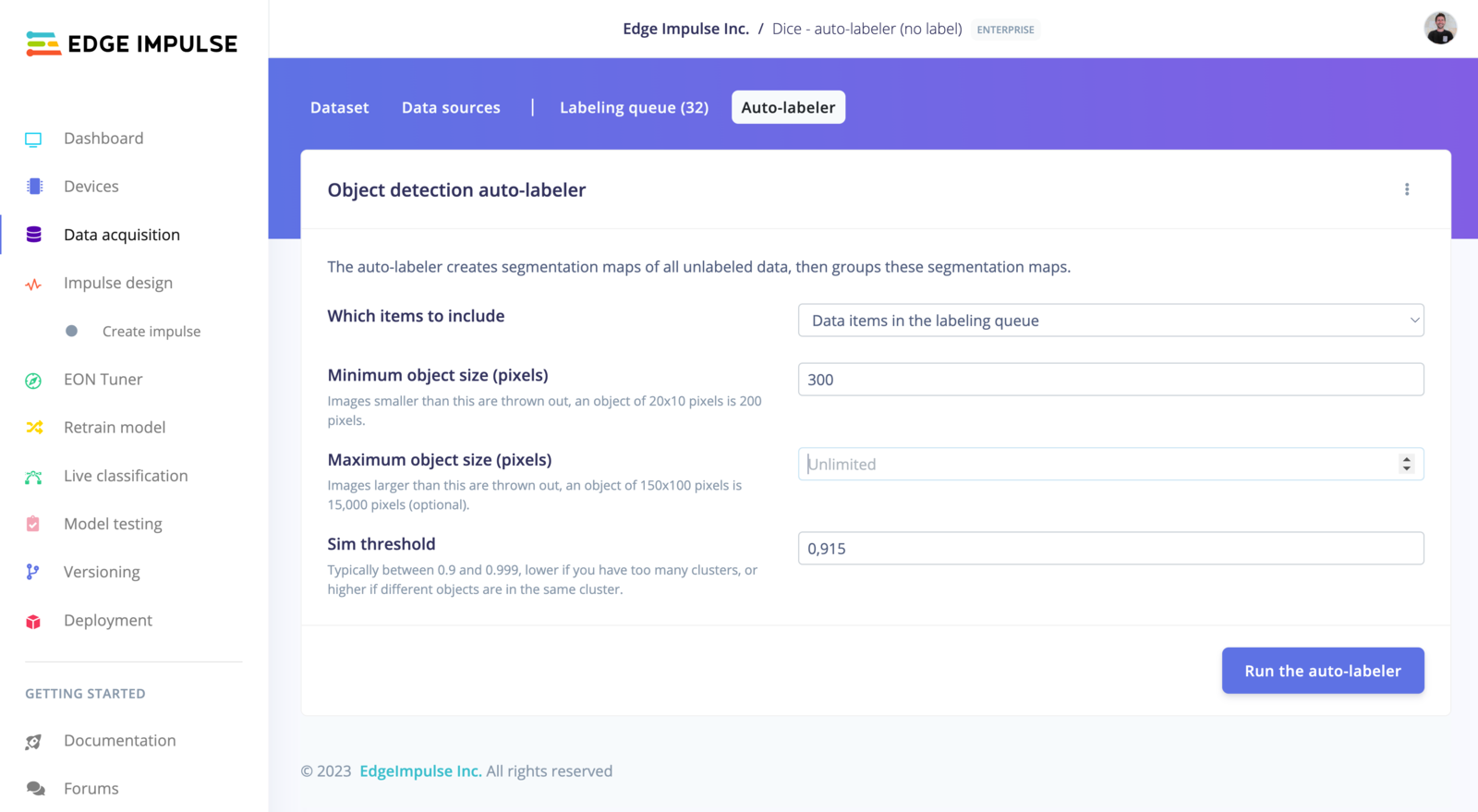

Object detection auto-labeler settings

Which items to include:

- All data items present in your dataset

- Data items in the labeling queue

- Data items without a given class

Note that this process is slow (a few seconds per image, even on GPUs). However, we cache the results aggressively, so once you have run the auto-labeler once, subsequent iterations will be much faster. This lets you change the settings with less friction.
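To illustrate why cached results make later iterations fast, here is a minimal sketch of the general idea. It is not the Studio's actual implementation: the `EmbeddingCache` class, the `fake_model` function, and the choice to key the cache on a hash of the image bytes are all assumptions made for illustration.

```python
import hashlib


class EmbeddingCache:
    """Hypothetical cache: stores one expensive result per unique image."""

    def __init__(self, compute_fn):
        self._compute_fn = compute_fn  # the slow per-image step
        self._store = {}
        self.misses = 0  # how many times the slow step actually ran

    def get(self, image_bytes):
        # Key on the image content, so identical images share one entry.
        key = hashlib.sha256(image_bytes).hexdigest()
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._compute_fn(image_bytes)
        return self._store[key]


def fake_model(image_bytes):
    # Stand-in for the slow model call (assumption, not a real API).
    return [byte / 255 for byte in image_bytes[:4]]


cache = EmbeddingCache(fake_model)
images = [b"dice-photo-1", b"dice-photo-2"]

# Re-running with three different settings only computes each image once.
for sim_threshold in (0.915, 0.945, 0.98):
    embeddings = [cache.get(image) for image in images]

print(cache.misses)  # prints 2
```

In this sketch, only the first pass pays the per-image cost; changing a setting such as the threshold reuses the stored results.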

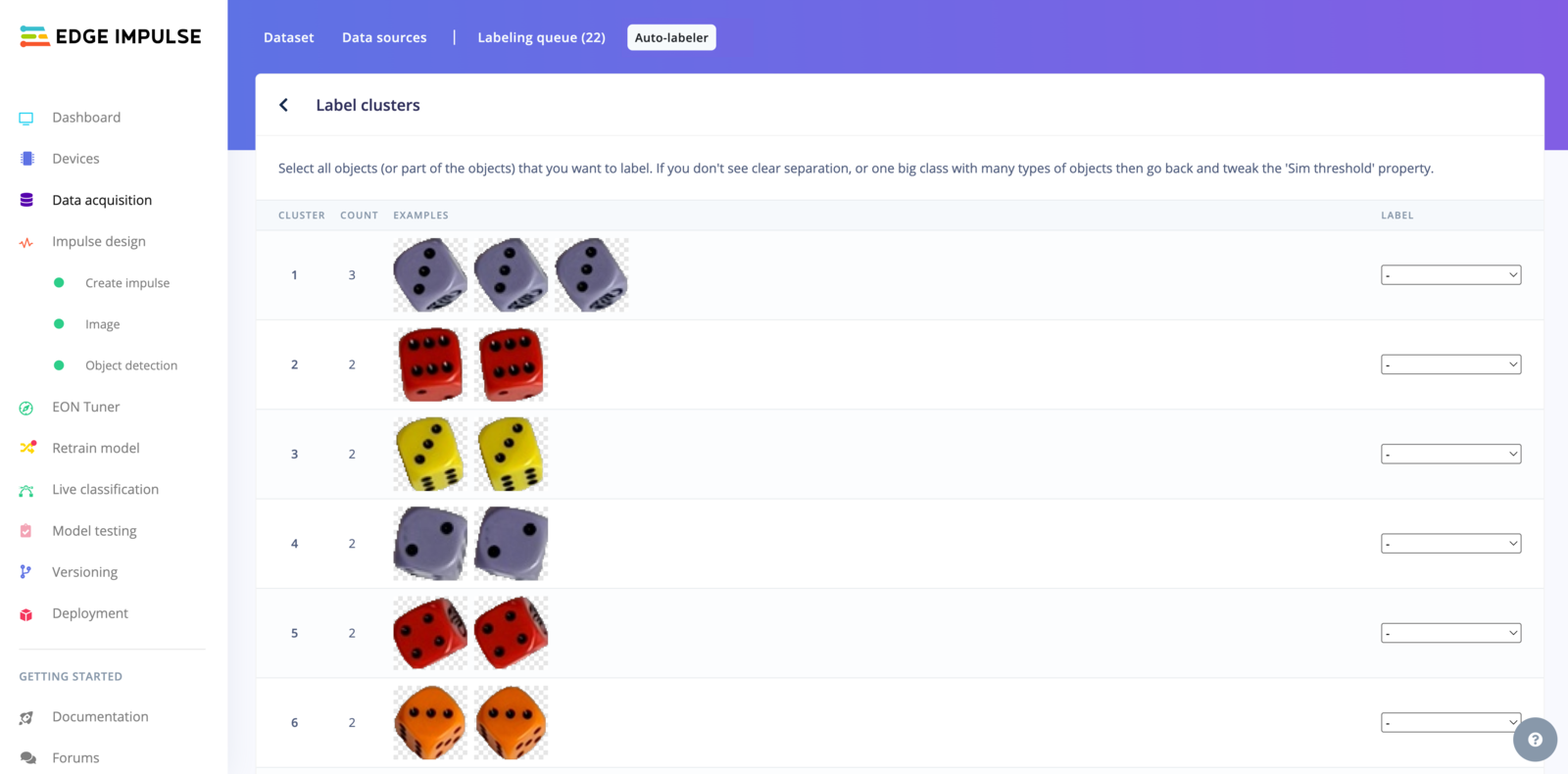

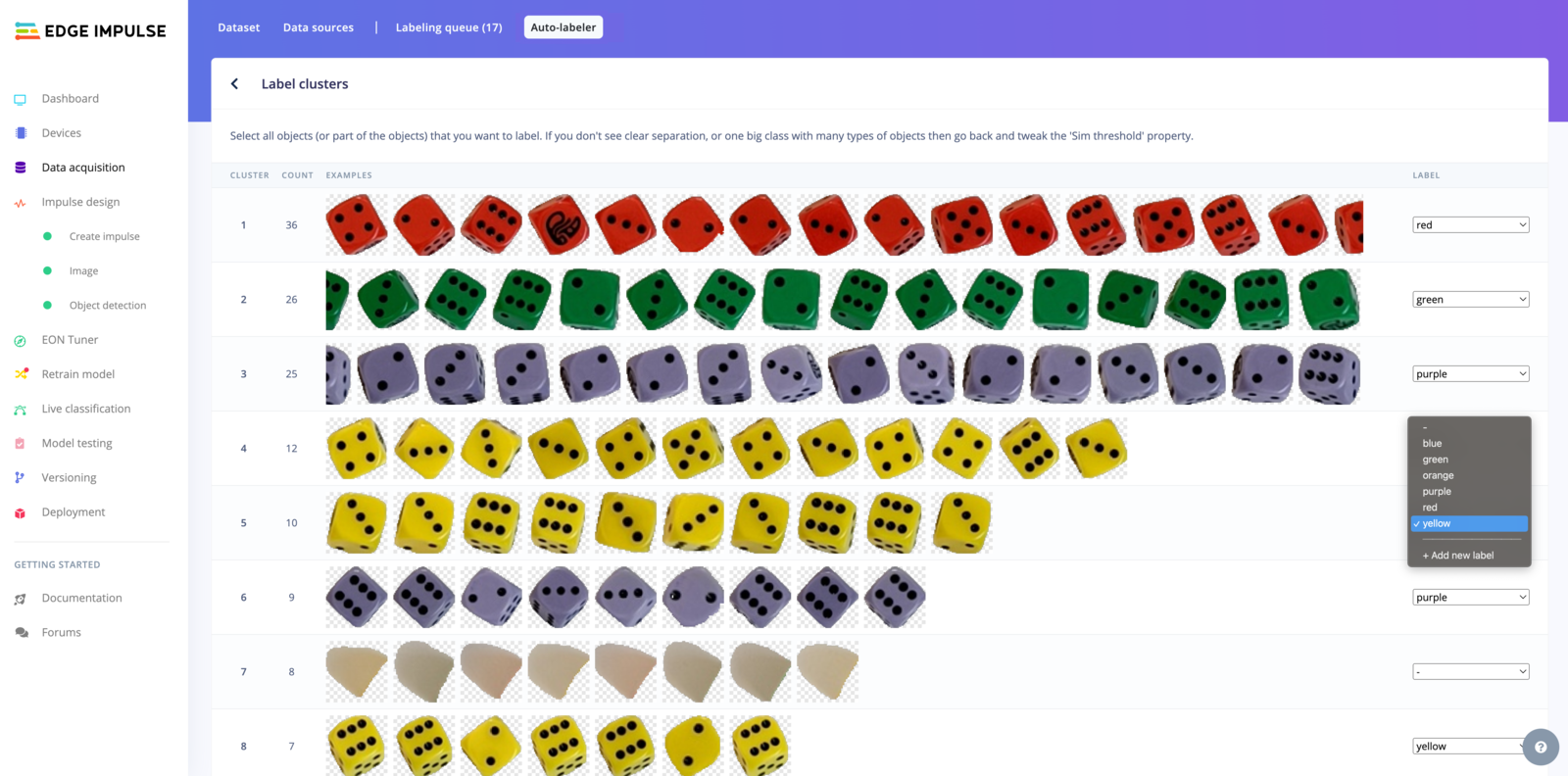

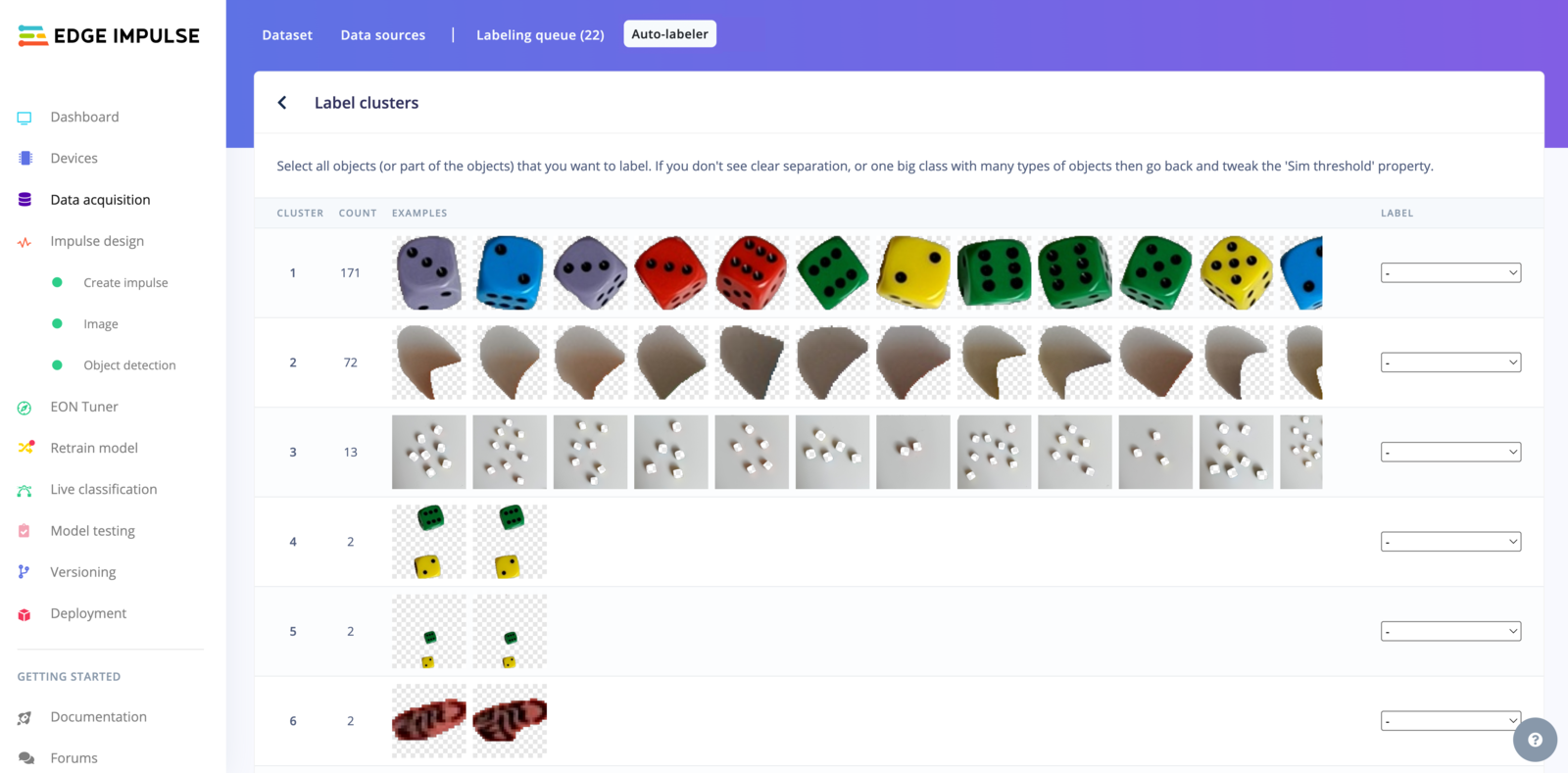

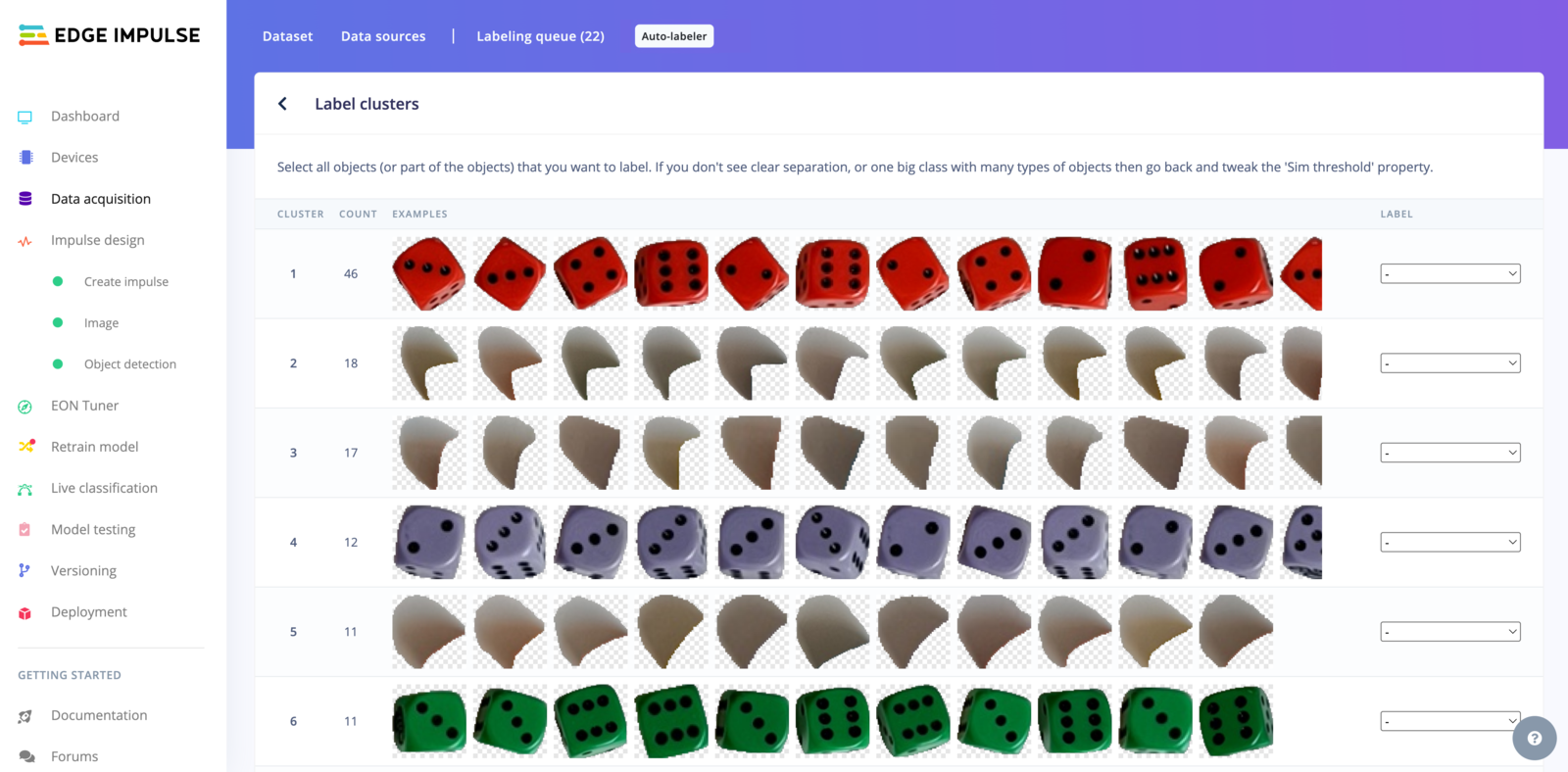

Label clusters

Once the process is finished, you will be redirected to a new page to associate a label with a cluster:

Example

Each project is different. To write this documentation page, we collected images containing several dice. This dataset can be used in several ways: you can label the dice only, the dice color, or the dice figures. You can find the dataset, with the dice labeled per color, in this public project. To adjust the granularity, use the Sim threshold parameter.

1. Group all the dice together:

Here, we set the Sim threshold to 0.915.

2. Group the dice by color:

Here, we set the Sim threshold to 0.945.

3. Group the dice by color and by figure:

Here, we set the Sim threshold to 0.98.
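The effect of the Sim threshold on granularity can be sketched with a toy example. This is only an illustration under stated assumptions: the greedy grouping rule, the use of cosine similarity, and the hand-built three-dimensional vectors below are hypothetical and are not the auto-labeler's actual algorithm or real Segment Anything embeddings. The vectors were chosen so that the three thresholds used above produce the three groupings.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def cluster_by_threshold(embeddings, sim_threshold):
    """Greedy grouping: join the first cluster whose representative is
    at least `sim_threshold` similar, otherwise start a new cluster."""
    representatives = []
    assignments = []
    for embedding in embeddings:
        for cluster_id, representative in enumerate(representatives):
            if cosine_similarity(embedding, representative) >= sim_threshold:
                assignments.append(cluster_id)
                break
        else:
            representatives.append(embedding)
            assignments.append(len(representatives) - 1)
    return assignments


# Toy vectors (assumption): a shared "dice" component, a larger offset
# for color, and a smaller offset for the figure shown on the die.
COLOR, FIGURE = 0.1732, 0.1095
TOY_EMBEDDINGS = [
    (1.0, COLOR, FIGURE),    # red die showing one
    (1.0, COLOR, -FIGURE),   # red die showing six
    (1.0, -COLOR, FIGURE),   # white die showing one
    (1.0, -COLOR, -FIGURE),  # white die showing six
]

for threshold in (0.915, 0.945, 0.98):
    labels = cluster_by_threshold(TOY_EMBEDDINGS, threshold)
    print(threshold, len(set(labels)), labels)
# prints:
# 0.915 1 [0, 0, 0, 0]
# 0.945 2 [0, 0, 1, 1]
# 0.98 4 [0, 1, 2, 3]
```

A higher threshold requires objects to be more alike before they share a cluster, so raising it splits the dice first by color and then by figure.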