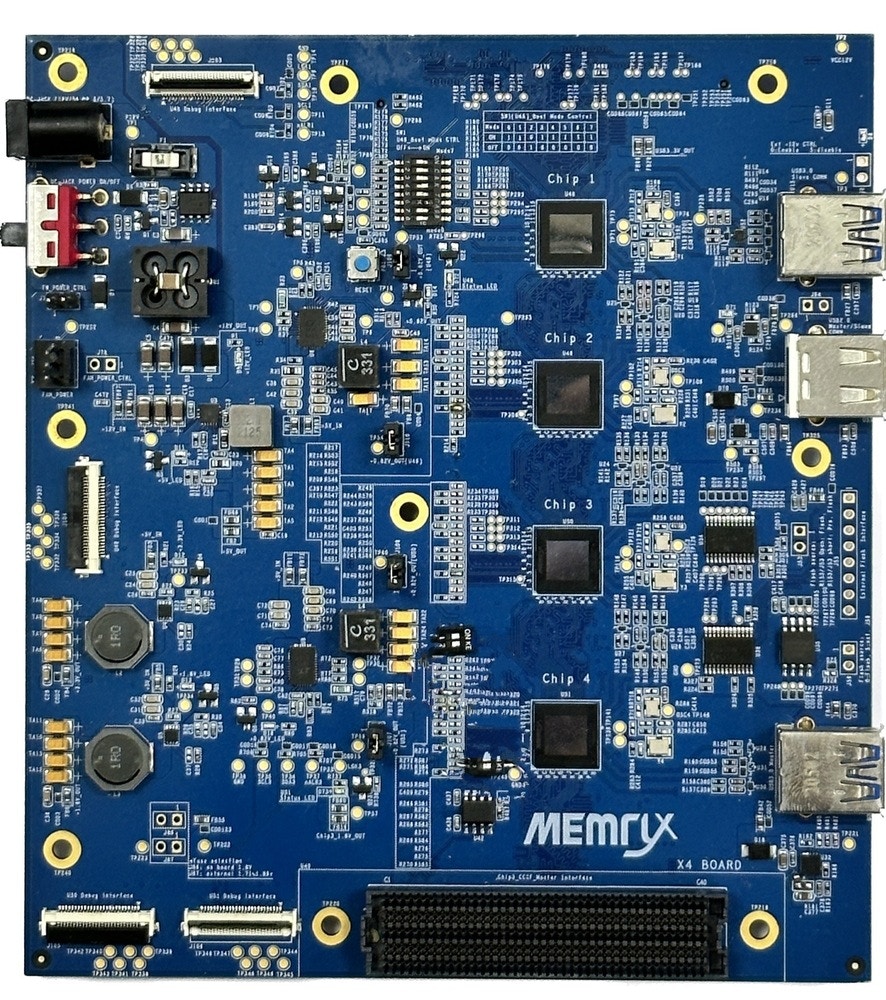

The MemryX MX3 is the latest state-of-the-art AI inference co-processor for running trained Computer Vision (CV) neural network models built using any of the major AI frameworks (TensorFlow, LiteRT (previously TensorFlow Lite), ONNX, PyTorch, Keras), offering the widest operator support. Running alongside any host processor, the MX3 offloads CV inferencing tasks, providing power savings, latency reduction, and high accuracy. Multiple MX3 chips can be cascaded to optimize performance for the model being run. The MX3 Evaluation Board (EVB) consists of a PCBA with four MX3 chips installed, and multiple EVBs can be cascaded using a single interface cable. The MemryX Developer Hub provides simple 1-click compilation. The portal is intuitive and easy to use and includes many tools, such as a Simulator, Python and C++ APIs, and example code. Contact MemryX to request an EVB and access to the online Developer Hub.

Installing dependencies

To use MemryX devices with Edge Impulse deployments, you must install the following dependencies on the Linux target that has a MemryX device installed.

- Python 3.8+: This is a prerequisite for the MemryX SDK.

- MemryX tools and drivers: Please contact MemryX for access to their tools and drivers.

- Edge Impulse Linux: This will enable you to connect your development system directly to Edge Impulse Studio.
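A quick preflight check can confirm the first and last items before you continue. The following is a minimal sketch, not an official tool: the helper names `python_ok` and `missing_commands` are hypothetical, and it assumes that the Edge Impulse Linux install places `edge-impulse-linux` and `edge-impulse-linux-runner` executables on your `PATH`. It does not check for the MemryX tools, since their executable names are not given here.

```python
import shutil
import sys


def python_ok(version=None, minimum=(3, 8)):
    """Return True if the (major, minor, ...) version meets the minimum."""
    if version is None:
        version = sys.version_info
    return tuple(version[:2]) >= tuple(minimum)


def missing_commands(commands, which=shutil.which):
    """Return the entries of `commands` that cannot be found on PATH."""
    return [cmd for cmd in commands if which(cmd) is None]


if __name__ == "__main__":
    print("Python 3.8+:", python_ok())
    # Assumption: the Edge Impulse Linux CLI is installed under these names.
    print("Missing:", missing_commands(
        ["edge-impulse-linux", "edge-impulse-linux-runner"]
    ))
```

An empty "Missing" list together with `True` for the Python check suggests the target is ready for the next step.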

Connecting to Edge Impulse

After working through a getting started tutorial, and with all software set up, connect your camera or microphone to your operating system and run `edge-impulse-linux`. To switch projects later, run the command again with `--clean`.

Need sudo?

Some commands require the use of `sudo` in order to have proper access to a connected camera. If your `edge-impulse-linux` or `edge-impulse-linux-runner` command fails to enumerate your camera, please try the command again with `sudo`.

Verifying that your device is connected

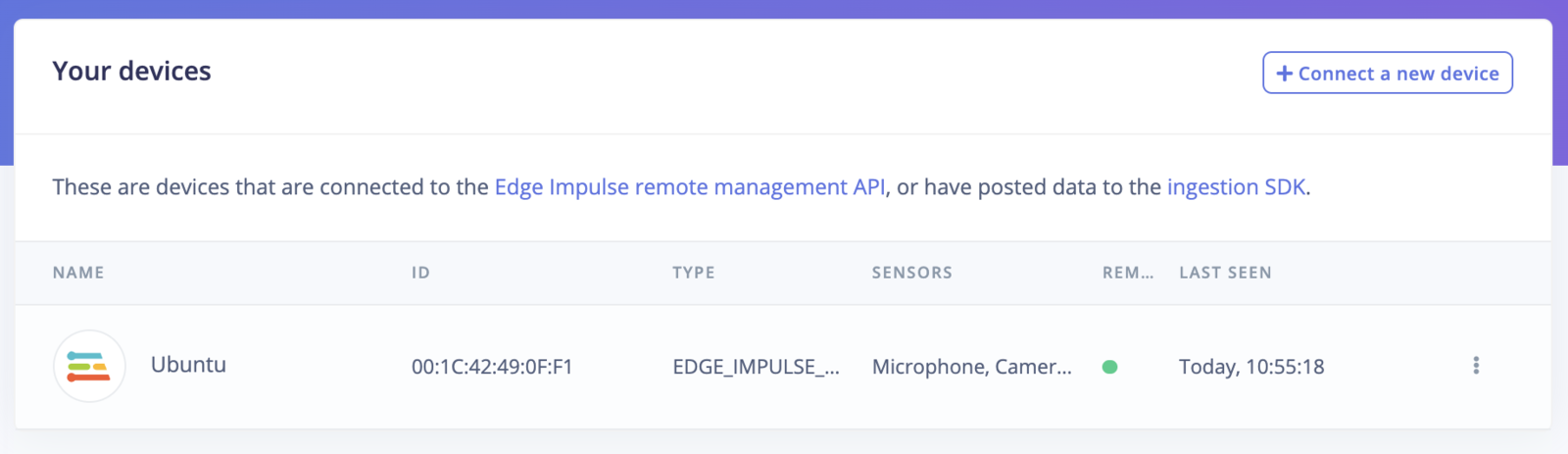

Your machine is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

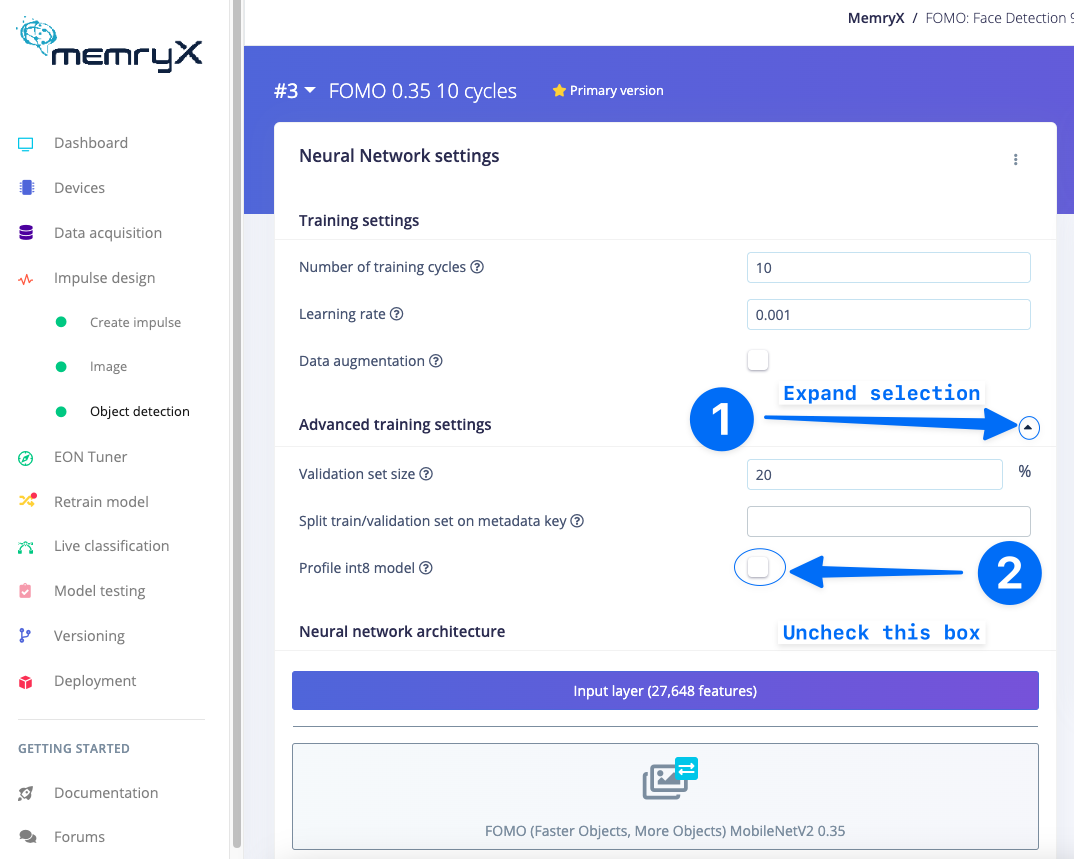

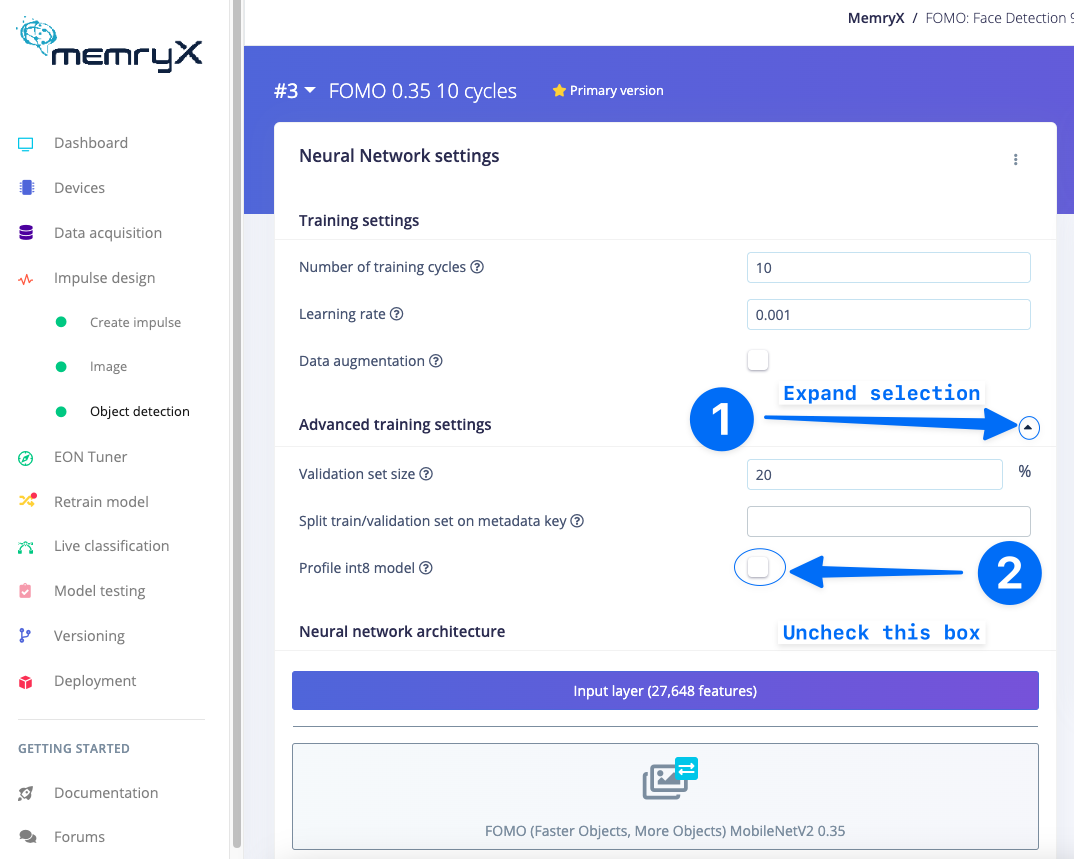

With everything set up, you can now build your first machine learning model by following the Edge Impulse tutorials.

No need for an int8 model

MemryX devices only need a float32 model to be passed into the compiler. Therefore, when developing models with Edge Impulse, it is better not to profile int8 (quantized) models. You can prevent generation, profiling, and deployment of int8 models by deselecting Profile int8 model under the Advanced training settings of your Impulse Design model training section.

Frames per second (FPS), Latency, and Synchronous Calls

The integration of MemryX MX3 devices into the Edge Impulse SDK uses synchronous calls to the evaluation board, so the reported frames per second (FPS) figures reflect that synchronous API. For high-performance applications, MemryX provides an asynchronous API that may be used in its place. Please contact Edge Impulse Support for assistance in getting the best performance out of your MemryX MX3 devices!

Deploying back to device

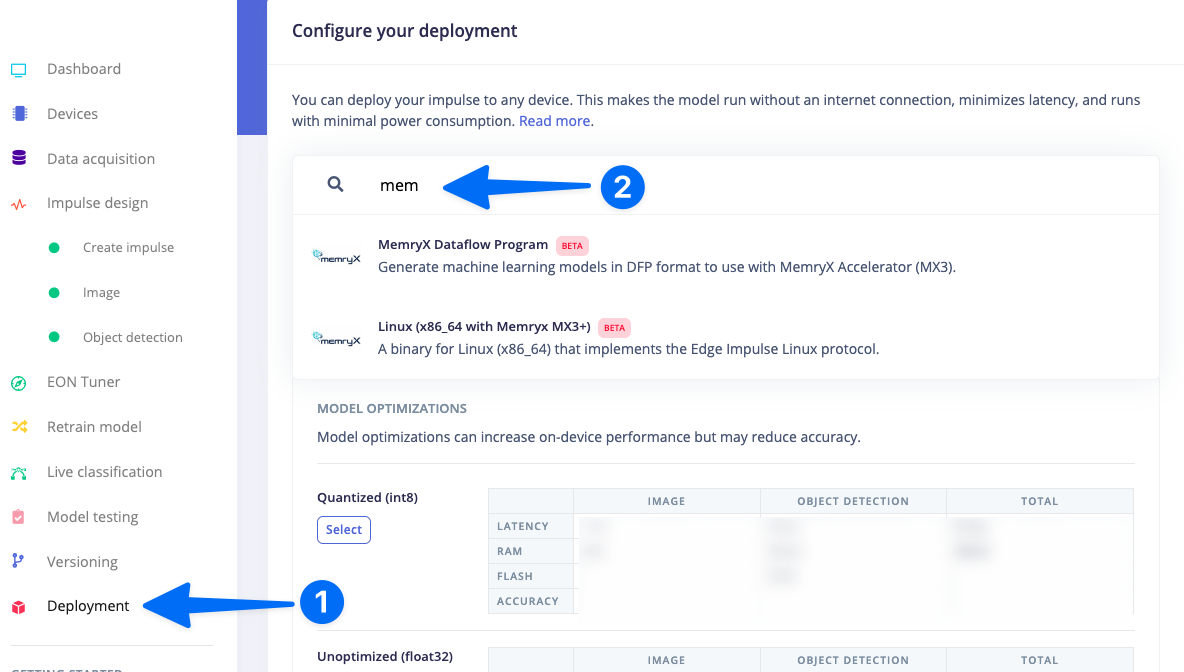

To achieve full hardware acceleration, models must be converted from their original format to run on the MX3. This can be done by selecting the MX3 from the Deployment screen, which generates a .zip file with models that can be used in your application for the MX3. The deployment block uses the MemryX compiler so that the model runs accelerated on the device.
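To use the generated archive from your own application, you first need to locate the compiled model inside it (the .dfp file described in the next section). The sketch below does this with only the Python standard library. The function names and the `deployment.zip` file name are placeholders, and because the internal layout of the archive is not documented here, the search deliberately does not depend on any folder structure.

```python
import zipfile


def find_dfp_files(archive_path):
    """Return the names of all .dfp entries in the zip archive, sorted."""
    with zipfile.ZipFile(archive_path) as archive:
        return sorted(
            name for name in archive.namelist()
            if name.lower().endswith(".dfp")
        )


def extract_dfp_files(archive_path, target_dir):
    """Extract only the .dfp entries into target_dir and return their paths."""
    names = find_dfp_files(archive_path)
    with zipfile.ZipFile(archive_path) as archive:
        return [archive.extract(name, path=target_dir) for name in names]
```

For example, `extract_dfp_files("deployment.zip", "models")` would unpack any .dfp files into a `models` directory and return the paths it created.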

MemryX Dataflow Program Deployment Block

The MemryX Dataflow Program Deployment Block generates a .zip file that contains the converted Edge Impulse model in a form usable by MX3 devices (a .dfp file). You can use the MemryX SDK to develop applications using this file.

Linux (x86_64 with MemryX MX3)

The output from this Block is an Edge Impulse .eim file that, once saved onto the computer with the MX3 connected, can be run with `edge-impulse-linux-runner --model-file <path-to-model>.eim`.

Need sudo?

Some commands require the use of `sudo` in order to have proper access to a connected camera. If your `edge-impulse-linux` or `edge-impulse-linux-runner` command fails to enumerate your camera, please try the command again with `sudo`.

Image model?

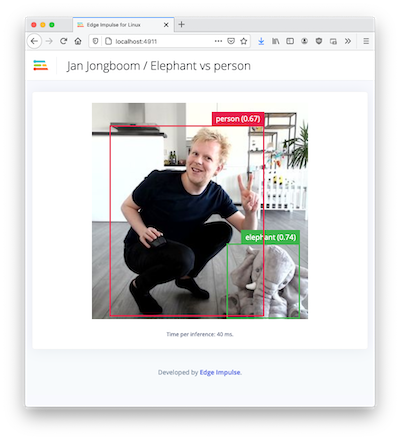

If you have an image model, you can see what your device sees by being on the same network as your device and finding the ‘Want to see a feed of the camera and live classification in your browser’ message in the console. Open the URL from that message in a browser to see both the camera feed and the classification results.

Troubleshooting

UnhandledPromiseRejectionWarning: No response within 5 seconds

Please restart your MX3 evaluation board by using the reset button, then run the `edge-impulse-linux-runner` command again. If you are still having issues, please contact Edge Impulse support.

Failed to run impulse Capture process failed with code 1

- You may need to use `sudo edge-impulse-linux-runner` to be able to access the camera on your system.

- Ensure that you do not have any open processes still using the camera. For example, if you have the Edge Impulse web browser image acquisition page or virtual meeting software open, please close it or disable camera usage in those applications.

- This error could mean that your camera is in use by another process. Check whether any open application is using the camera. This error can also occur when a previous attempt to run `edge-impulse-linux-runner` failed with an exception. In that case, check whether a `gst-launch-1.0` process is still running. In the process listing, the number shown for that process (for example, 5615) is its process ID. Kill the process, then run `edge-impulse-linux-runner` once again.
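
The check for a leftover GStreamer process can also be scripted. This is a minimal sketch under stated assumptions: it parses the output of `ps -eo pid,comm` as found on Linux, the helper names `find_pids` and `kill_stale_processes` are hypothetical, and it sends SIGTERM rather than a forced kill so the process can release the camera cleanly.

```python
import os
import signal
import subprocess


def find_pids(ps_output, process_name="gst-launch-1.0"):
    """Parse `ps -eo pid,comm` output and return PIDs whose command matches."""
    pids = []
    for line in ps_output.splitlines():
        parts = line.split(None, 1)
        # Skip the header row and any malformed lines.
        if len(parts) == 2 and parts[0].isdigit():
            if parts[1].strip() == process_name:
                pids.append(int(parts[0]))
    return pids


def kill_stale_processes(process_name="gst-launch-1.0"):
    """Send SIGTERM to every process with the given name; return their PIDs."""
    listing = subprocess.run(
        ["ps", "-eo", "pid,comm"], capture_output=True, text=True, check=True
    ).stdout
    pids = find_pids(listing, process_name)
    for pid in pids:
        os.kill(pid, signal.SIGTERM)
    return pids
```

Calling `kill_stale_processes()` returns the list of process IDs it signalled. If the process belongs to another user (for example, because the runner was started with `sudo`), the script needs the same privileges to terminate it.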