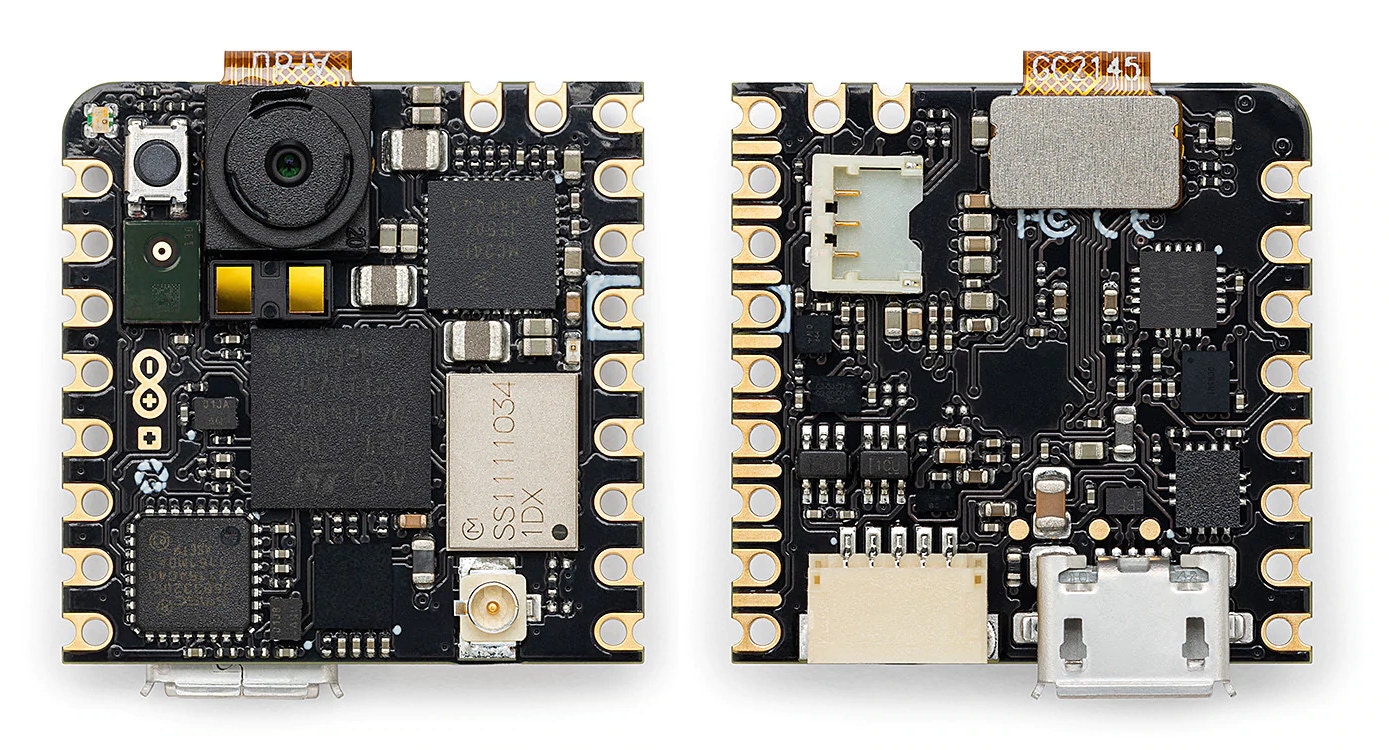

- Powerful microcontroller equipped with a 2MP color camera

- Tiny form factor of 22.86 x 22.86 mm

- Integrated microphone, distance sensor, and intelligent 6-axis motion sensor

- Onboard Wi-Fi and Bluetooth® Low Energy connectivity

- Standalone when battery-powered

- Expand existing projects with sensing capabilities

- Enable fast Machine Vision prototyping

- Compatible with Nicla, Portenta, and MKR products

Installing dependencies

To set this device up in Edge Impulse, you will need to install the following software:

- Edge Impulse CLI.

- Arduino CLI.

  - Here’s an instruction video for Windows.

  - The Arduino website has instructions for macOS and Linux.

- On Linux:

  - GNU Screen: install, for example, via `sudo apt install screen`.

Connecting to Edge Impulse

There are two ways to connect the Nicla Vision to Edge Impulse:

- Using the official Edge Impulse firmware. It supports all onboard sensors, including the camera.

- Using an ingestion script. This supports the analog, IMU, and proximity sensors and the microphone (limited to 8 kHz), but not the camera. It is only recommended if you want to modify the ingestion flow for third-party sensors.

1. Connect the development board to your computer

Use a micro-USB cable to connect the development board to your computer. Under normal circumstances, the flashing process should work without entering the bootloader manually. However, if you run into difficulties flashing the board, you can enter the bootloader by pressing RESET twice. The onboard LED should start pulsating to indicate this.

2. Update the firmware

The development board does not come with the right firmware yet. To update the firmware:

- Download the latest Edge Impulse firmware, and unzip the file.

- Open the flash script for your operating system (`flash_windows.bat`, `flash_mac.command` or `flash_linux.sh`) to flash the firmware.

- Wait until flashing is complete, and press the RESET button once to launch the new firmware.

3. Setting keys

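From a command prompt or terminal, run the Edge Impulse CLI to connect the board to your project. If you want to switch projects, run the command with `--clean`.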

Alternatively, recent versions of Google Chrome and Microsoft Edge can collect data directly from your development board, without the need for the Edge Impulse CLI. See this blog post for more information.

Data ingestion

1. Connect the development board to your computer

Use a micro-USB cable to connect the development board to your computer.

2. Update the firmware

The development board does not come with the right firmware yet. To update the firmware:

- Download the latest Edge Impulse ingestion sketches and unzip the file.

- Open the `nicla_vision_ingestion.ino` (for the IMU/proximity sensor) or `nicla_vision_ingestion_mic.ino` (for the microphone) sketch in a text editor or the Arduino IDE.

- For IMU/proximity sensor data ingestion into your Edge Impulse project, select one or more sensors at the top of the file by un-commenting their defines, and set the desired sample frequency (in Hz). For example, for the accelerometer sensor, un-comment `#define SAMPLE_ACCELEROMETER`.

- For microphone data ingestion, use the `nicla_vision_ingestion_mic.ino` sketch.

- Then, from your sketch’s directory, run the Arduino CLI to compile the sketch.

- Then flash it to your Nicla Vision using the Arduino CLI.

  Alternatively, if you open the sketch in the Arduino IDE, you can compile and upload it from there.

- Wait until flashing is complete, and press the RESET button once to launch the new firmware.
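The sample frequency you set in the sketch determines how long the board waits between two readings. The plain C++ sketch below illustrates that relationship. It is not taken from `nicla_vision_ingestion.ino`: the `SAMPLE_FREQUENCY_HZ` macro and both helper functions are hypothetical names chosen for this example. `sample_due` uses unsigned subtraction so that the check stays correct when a 32-bit microsecond counter wraps around.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical macro: the desired sample frequency, in Hz.
#define SAMPLE_FREQUENCY_HZ 100

// Microseconds between two consecutive samples at freq_hz.
// Integer division rounds down, so the effective rate can be slightly higher.
std::uint32_t sample_interval_us(std::uint32_t freq_hz) {
    assert(freq_hz > 0 && "sample frequency must be positive");
    return 1000000u / freq_hz;
}

// True once at least interval_us has elapsed since last_us.
// Unsigned 32-bit subtraction yields the elapsed time even if the
// counter wrapped past 0xFFFFFFFF between the two readings.
bool sample_due(std::uint32_t now_us, std::uint32_t last_us,
                std::uint32_t interval_us) {
    return static_cast<std::uint32_t>(now_us - last_us) >= interval_us;
}
```

On the board itself, `now_us` would typically come from Arduino's `micros()`. At 100 Hz, `sample_interval_us(SAMPLE_FREQUENCY_HZ)` is 10000, i.e. one reading every 10 ms.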

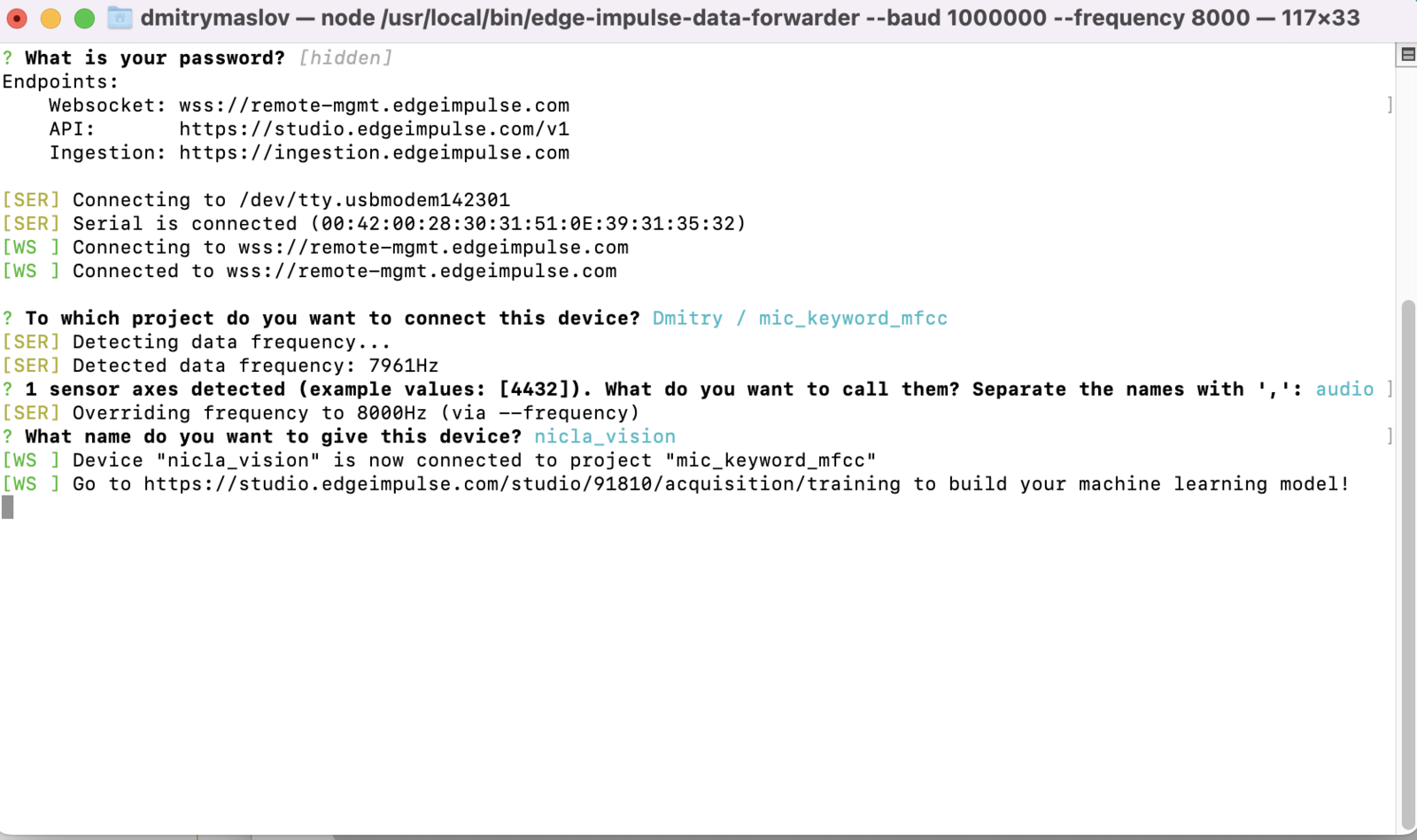

3a. Data forwarder (Fusion sensors)

From a command prompt or terminal, run the Edge Impulse data forwarder. The axis names you enter depend on which sensors you enabled in your compiled `nicla_vision_ingestion.ino` sketch. If you want to switch projects or sensors, run the command with `--clean`. Refer to the table below for the axis names corresponding to each type of sensor:

| Sensor (define in sketch) | Axis names |
|---|---|
| `#define SAMPLE_ACCELEROMETER` | accX, accY, accZ |
| `#define SAMPLE_GYROSCOPE` | gyrX, gyrY, gyrZ |
| `#define SAMPLE_PROXIMITY` | cm |
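The axis names you enter in the data forwarder follow from the sensor defines you un-commented. The plain C++ sketch below shows that mapping and builds the comma-separated list to type when the data forwarder asks for axis names. The helper names (`append`, `enabled_axis_names`, `axis_names_prompt`) are hypothetical and not part of the ingestion sketch, and it assumes the sketch prints enabled sensors in the order of the table above; check your sketch's serial output to confirm the order.

```cpp
#include <cassert>
#include <initializer_list>
#include <string>
#include <vector>

// Mirror the defines you un-commented in nicla_vision_ingestion.ino.
#define SAMPLE_ACCELEROMETER
// #define SAMPLE_GYROSCOPE
#define SAMPLE_PROXIMITY

// Appends each name in `items` to `names`.
static void append(std::vector<std::string>& names,
                   std::initializer_list<const char*> items) {
    for (const char* item : items) names.emplace_back(item);
}

// Axis names for the enabled sensors, assuming table order
// (accelerometer, gyroscope, proximity).
std::vector<std::string> enabled_axis_names() {
    std::vector<std::string> names;
#ifdef SAMPLE_ACCELEROMETER
    append(names, {"accX", "accY", "accZ"});
#endif
#ifdef SAMPLE_GYROSCOPE
    append(names, {"gyrX", "gyrY", "gyrZ"});
#endif
#ifdef SAMPLE_PROXIMITY
    append(names, {"cm"});
#endif
    assert(!names.empty() && "enable at least one sensor");
    return names;
}

// Joins the names with ',' for the data forwarder's axis-name prompt.
std::string axis_names_prompt() {
    std::string joined;
    for (const std::string& name : enabled_axis_names()) {
        if (!joined.empty()) joined += ',';
        joined += name;
    }
    return joined;
}
```

With the accelerometer and proximity sensor enabled as above, `axis_names_prompt()` returns `accX,accY,accZ,cm`.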

3b. Data forwarder (Microphone)

From a command prompt or terminal, run the Edge Impulse data forwarder, and name the microphone axis `audio`. If you want to switch projects or sensors, run the command with `--clean`.
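Both data forwarder setups depend on the board printing one reading per serial line. Assuming the data forwarder's line format of numeric values separated by a comma or a tab, with a newline after each reading, the hypothetical C++ helpers below (`parse_sample_line`, `line_matches_axes`) can check a captured line before you start the forwarder: the line must parse, and it must carry exactly one value per axis name, which means a single value when the only axis is `audio`.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <string>
#include <vector>

// Parses one serial line of comma- or tab-separated numbers into `values`.
// Trailing '\r' / '\n' characters are ignored. Returns false if any field
// is empty or is not entirely a number.
bool parse_sample_line(const std::string& line, std::vector<double>& values) {
    values.clear();
    std::string body = line;
    while (!body.empty() && (body.back() == '\r' || body.back() == '\n')) {
        body.pop_back();
    }
    std::size_t start = 0;
    while (start <= body.size()) {
        std::size_t end = body.find_first_of(",\t", start);
        if (end == std::string::npos) end = body.size();
        const std::string field = body.substr(start, end - start);
        if (field.empty()) return false;  // e.g. "1,,3" or a trailing comma
        char* parse_end = nullptr;
        const double value = std::strtod(field.c_str(), &parse_end);
        if (*parse_end != '\0') return false;  // field is not fully numeric
        values.push_back(value);
        start = end + 1;
    }
    return true;
}

// True if `line` parses and holds exactly one value per configured axis.
bool line_matches_axes(const std::string& line, std::size_t axis_count) {
    assert(axis_count > 0);
    std::vector<double> values;
    return parse_sample_line(line, values) && values.size() == axis_count;
}
```

For example, `line_matches_axes("0.12,-0.98,9.81\r\n", 3)` is true for the three accelerometer axes, while a line with only two values is rejected.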

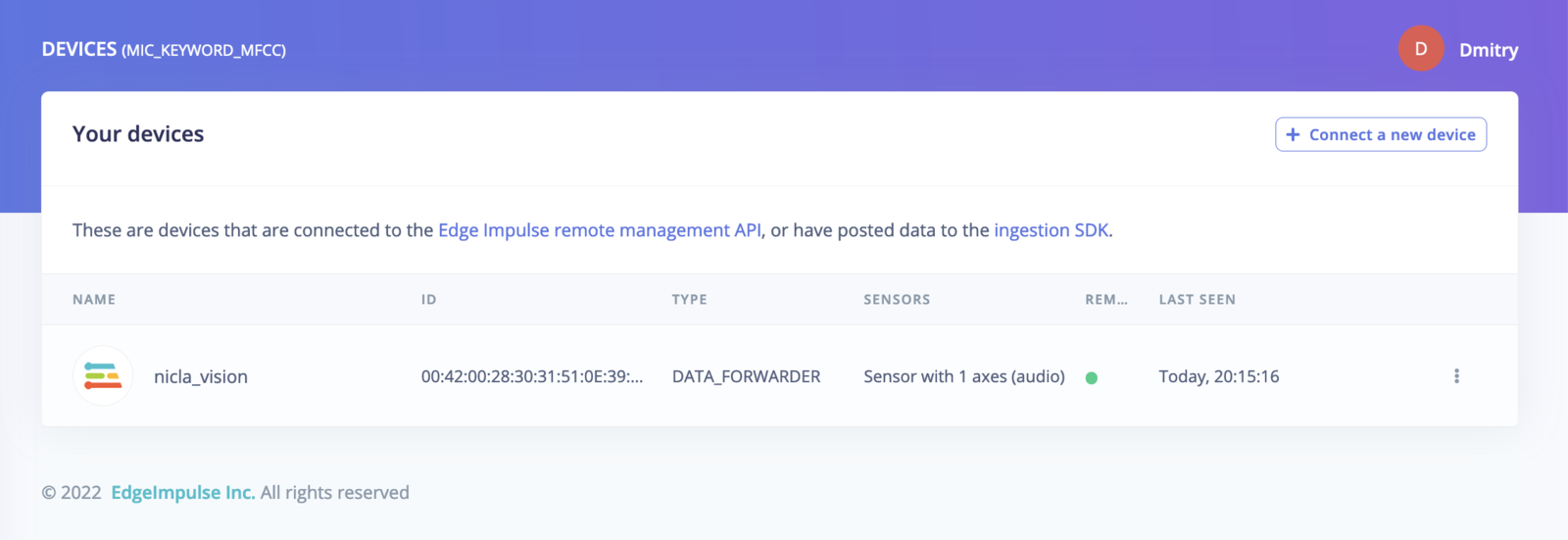

4. Verifying that the device is connected

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

The screenshots above are for the Edge Impulse ingestion scripts and the data forwarder. If you use the official Edge Impulse firmware for the Nicla Vision, the content will be slightly different.

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

- Building a continuous motion recognition system.

- Recognizing sounds from audio.

- Keyword spotting.

- Image classification.

- Object detection with centroids (FOMO).

You can also use the `nicla_vision_ingestion.ino` sketch and the Edge Impulse data forwarder to send data from any sensor on the Nicla Vision into your Edge Impulse project.