The Seeed SenseCAP A1101 - LoRaWAN Vision AI Sensor is an image recognition AI sensor designed for developers. It combines TinyML AI technology and LoRaWAN long-range transmission to enable a low-power, high-performance AI device solution for both indoor and outdoor use. The sensor features a Himax high-performance, low-power AI vision solution that supports the Google LiteRT (previously TensorFlow Lite) framework and multiple TinyML AI platforms.

Installing dependencies

To set up the A1101 in Edge Impulse, you will need to install the following software:

- Edge Impulse CLI.

- On Linux:

  - GNU Screen: install, for example, via sudo apt install screen.

- The latest Bouffalo Lab Dev Cube-All-Platform.
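Before moving on, it can help to confirm that the command-line tools are reachable from your shell. The following is a minimal sketch, not part of the official setup. It assumes the Edge Impulse CLI puts an executable named edge-impulse-daemon on your PATH and that GNU Screen is only needed on Linux, as listed above. The helper names `required_tools` and `missing_tools` are made up for this example.

```python
import shutil
import sys


def required_tools(platform=sys.platform):
    """Return the executables this guide relies on for the given platform."""
    # Assumption: the Edge Impulse CLI provides an `edge-impulse-daemon` command.
    tools = ["edge-impulse-daemon"]
    if platform.startswith("linux"):
        # GNU Screen is listed as a Linux-only dependency.
        tools.append("screen")
    return tools


def missing_tools(tools):
    """Return the subset of tools that cannot be found on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools(required_tools())
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("All command-line dependencies found.")
```

The Bouffalo Lab Dev Cube is a graphical tool you download and run manually, so this check does not cover it.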

Connecting to Edge Impulse

With all the software in place, it’s time to connect the A1101 to Edge Impulse.

1. Update BL702 chip firmware

BL702 is the USB-UART chip which enables the communication between the PC and the Himax chip. You need to update this firmware in order for the Edge Impulse firmware to work properly.- Get the latest bootloader firmware (tinyuf2-sensecap_vision_ai_X.X.X.bin.)

-

Connect the A1101 to the PC via a USB Type-C cable while holding down the Boot button on the A1101.

-

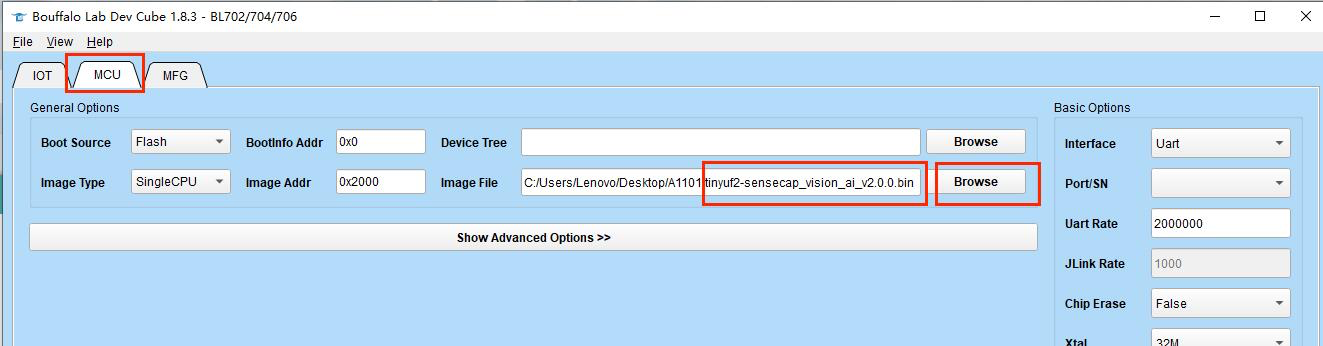

Open previously installed Bouffalo Lab Dev Cube software, select BL702/704/706, and then click Finish

-

Go to the MCU tab. Under Image file, click Browse and select the firmware you just downloaded.

-

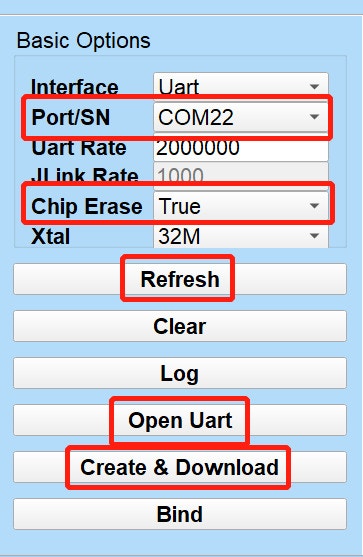

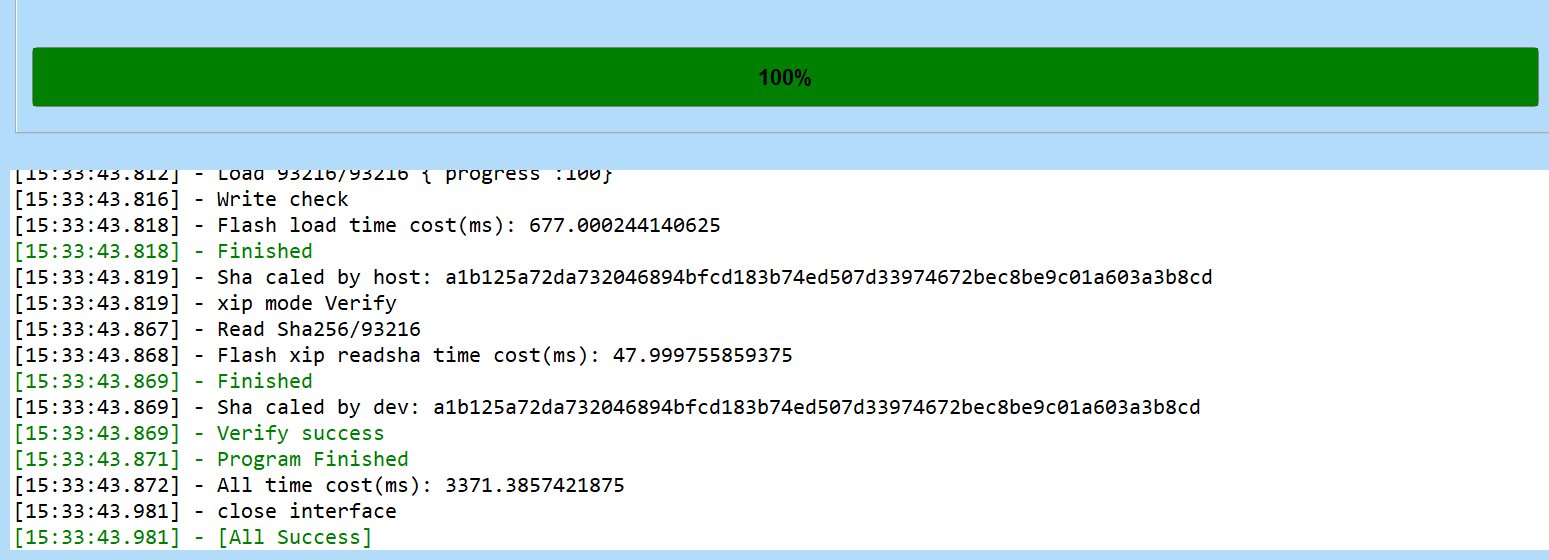

Click Refresh, choose the Port related to the connected A1101, set Chip Erase to True, click Open UART, click Create & Download and wait for the process to be completed .

If the flashing throws an error, click Create & Download multiple times until you see the All Success message.

2. Update Edge Impulse firmware

A1101 does not come with the right Edge Impulse firmware yet. To update the firmware:- Download the latest Edge Impulse firmware and extract it to obtain firmware.uf2 file

- Connect the A1101 again to the PC via USB Type-C cable and double-click the Boot button on the A1101 to enter mass storage mode

-

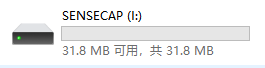

After this you will see a new storage drive shown on your file explorer as SENSECAP. Drag and drop the firmware.uf2 file to SENSECAP drive
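If you prefer to script the copy instead of dragging the file, the step above can be sketched as follows. This is an illustration under stated assumptions, not the official procedure. It checks that the file is laid out as UF2 blocks (512 bytes each, framed by the UF2 magic numbers) and then copies it to the mounted drive. The function names and the mount point in the usage note are hypothetical, and the mount point depends on your operating system.

```python
import shutil
import struct
from pathlib import Path

UF2_BLOCK_SIZE = 512
UF2_MAGIC_START0 = 0x0A324655  # ASCII "UF2\n", little-endian
UF2_MAGIC_START1 = 0x9E5D5157
UF2_MAGIC_END = 0x0AB16F30


def looks_like_uf2(path):
    """Return True if every 512-byte block carries the UF2 magic numbers."""
    data = Path(path).read_bytes()
    if len(data) == 0 or len(data) % UF2_BLOCK_SIZE != 0:
        return False
    for offset in range(0, len(data), UF2_BLOCK_SIZE):
        # Two magic words open each block, one closes it.
        start0, start1 = struct.unpack_from("<II", data, offset)
        (end,) = struct.unpack_from("<I", data, offset + UF2_BLOCK_SIZE - 4)
        if (start0, start1, end) != (UF2_MAGIC_START0, UF2_MAGIC_START1, UF2_MAGIC_END):
            return False
    return True


def flash_uf2(firmware, drive):
    """Copy a UF2 file to the mounted drive after a basic sanity check."""
    firmware, drive = Path(firmware), Path(drive)
    if not drive.is_dir():
        raise FileNotFoundError(f"drive not mounted: {drive}")
    if not looks_like_uf2(firmware):
        raise ValueError(f"not a UF2 file: {firmware}")
    # Equivalent to dragging the file onto the drive in a file explorer.
    return Path(shutil.copy(firmware, drive / firmware.name))
```

For example, on a Linux desktop where the drive is mounted at a path such as /media/<user>/SENSECAP (an assumed location), you would call `flash_uf2("firmware.uf2", "/media/<user>/SENSECAP")`. The check only validates the block framing. It does not verify that the firmware targets this board.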

3. Setting keys

From a command prompt or terminal, run:--clean.

Alternatively, recent versions of Google Chrome and Microsoft Edge can collect data directly from your A1101, without the need for the Edge Impulse CLI. See this blog post for more information.

4. Verifying that the device is connected

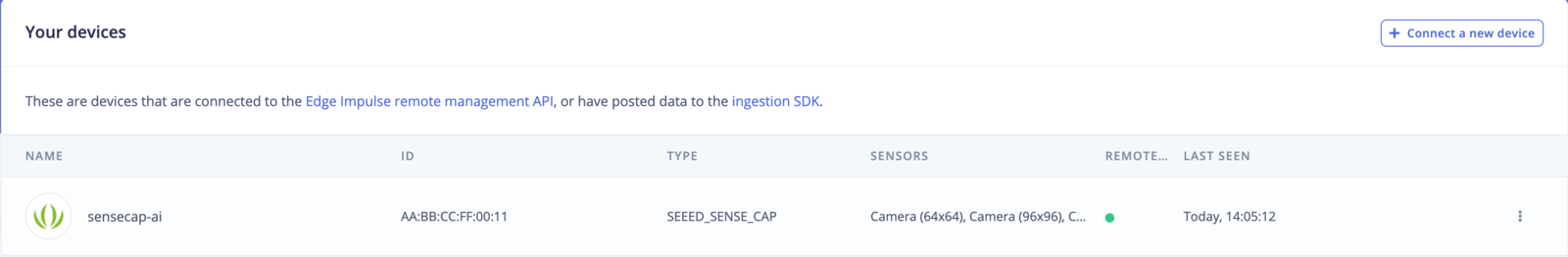

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

With everything set up, you can now build and run your first machine learning model by following the Edge Impulse tutorials.

Looking to connect different sensors? The Data forwarder lets you easily send data from any sensor into Edge Impulse.

Collecting data from Seeed SenseCAP A1101

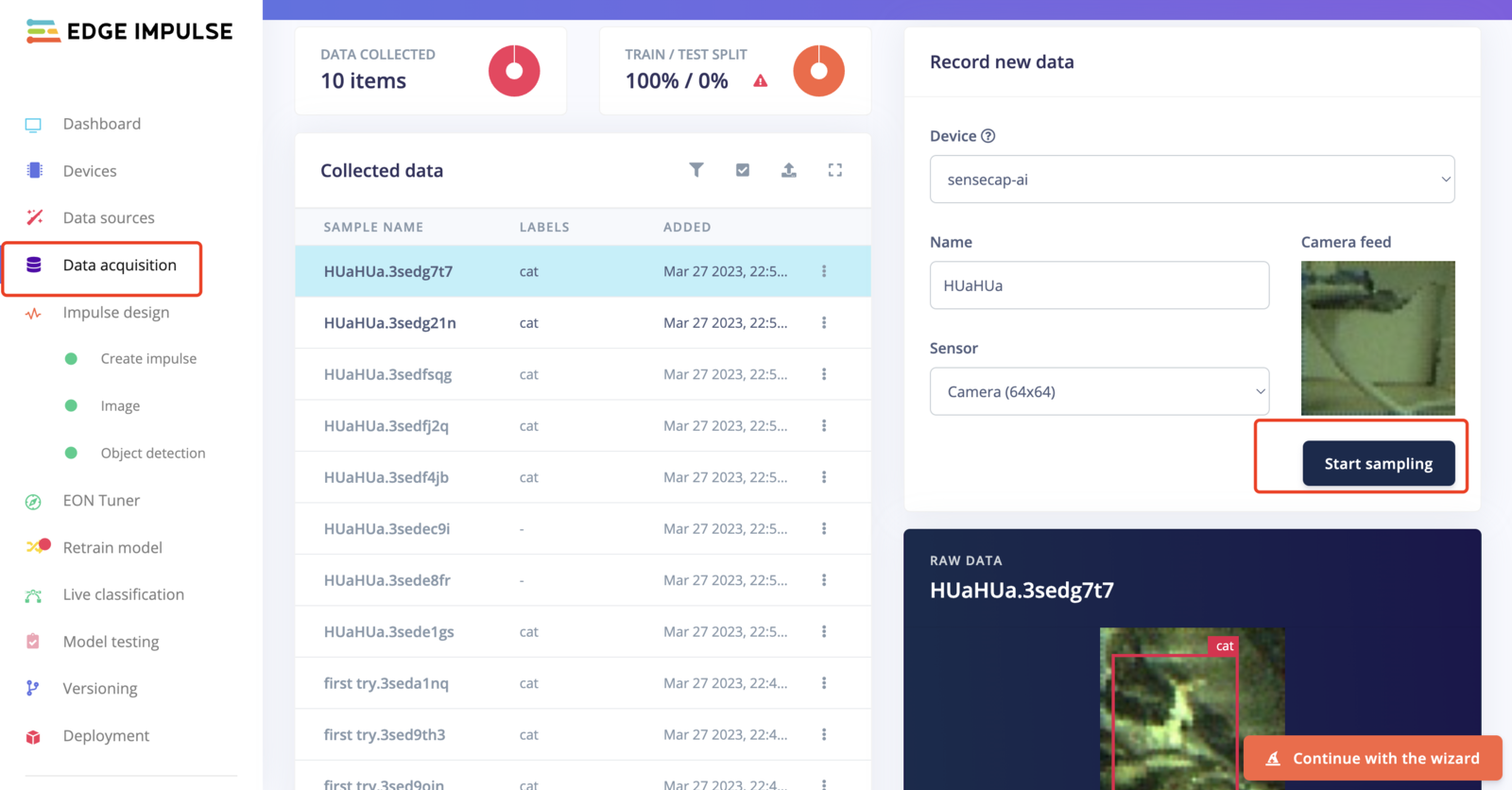

Frames from the onboard camera can be directly captured from the studio:

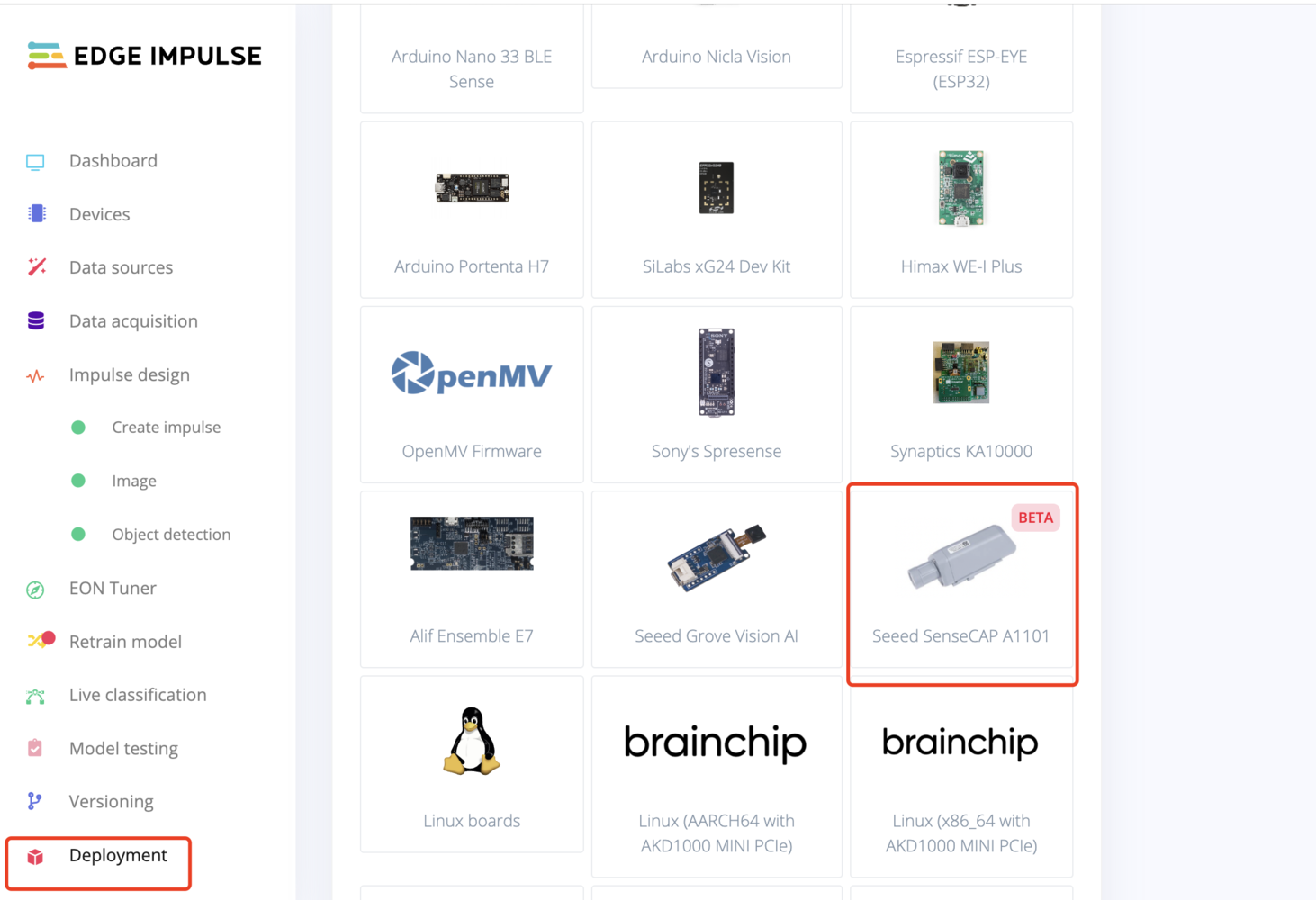

Deploying back to device

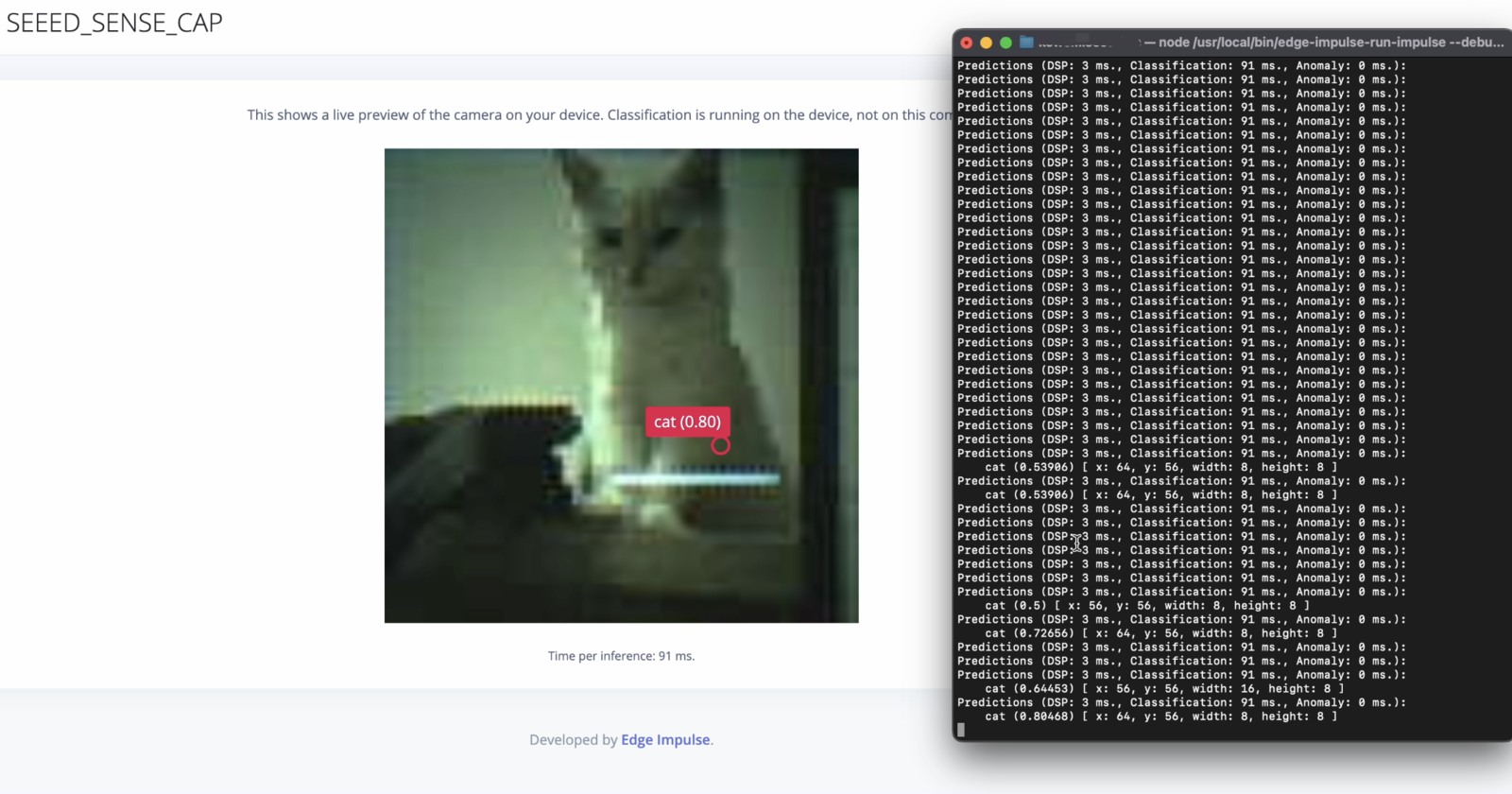

After building the machine learning model and downloading the Edge Impulse firmware from Edge Impulse Studio, deploy the model uf2 to SenseCAP - Vision AI by following steps 1 and 2 under Update Edge Impulse firmware. Drag and drop the firmware.uf2 file from EDGE IMPULSE to SENSECAP drive. When you run this on your local interface:

Compile Edge Impulse firmware from source

If you want to compile the Edge Impulse firmware from source, visit this GitHub repo and follow the instructions in the README. The model used for the official firmware can be found in this public project.

Connect to the LoRaWAN® Network

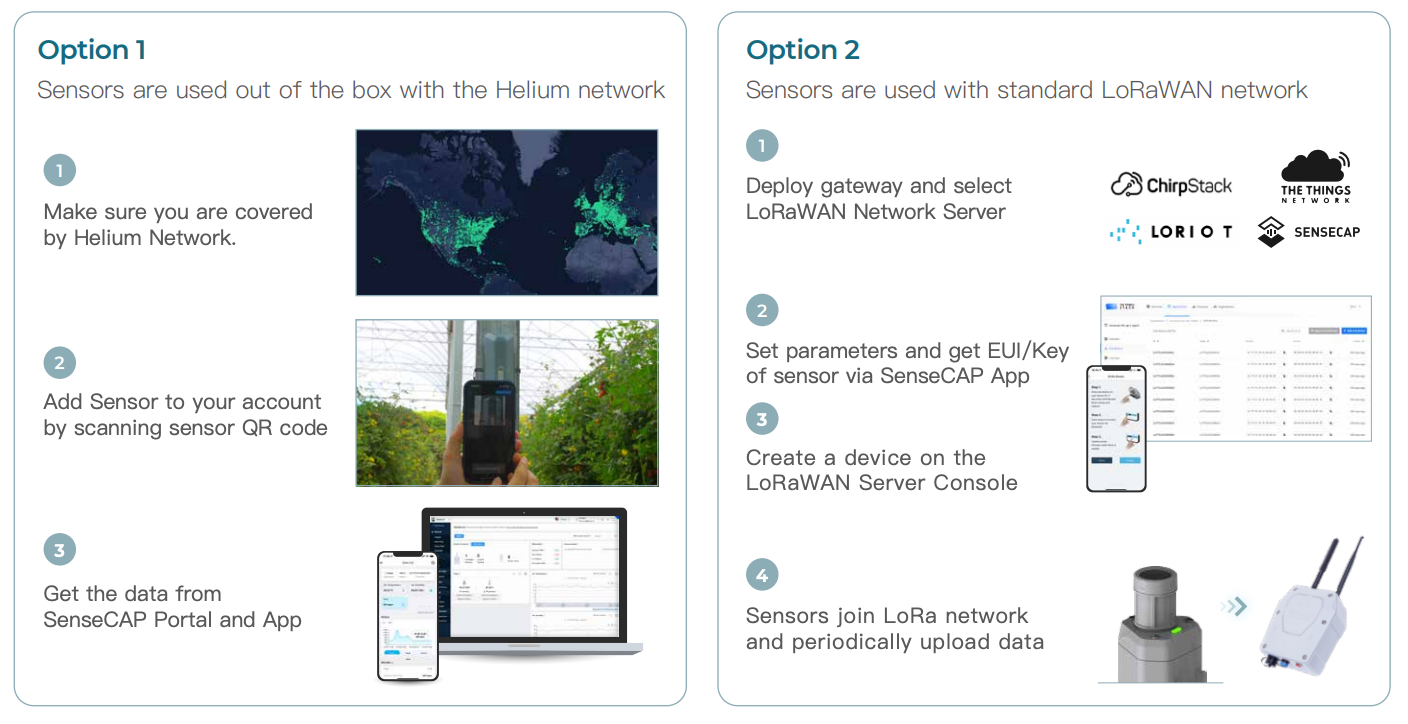

In addition to connecting the sensor directly to a computer to view real-time detection data, you can transmit the data over LoRaWAN® and upload it to the SenseCAP cloud platform or a third-party cloud platform. On the SenseCAP cloud platform, you can view the data periodically and display it graphically on your mobile phone or computer. The SenseCAP cloud platform and the SenseCAP Mate App use the same account system. Because this guide focuses on the model training process, it does not cover the cloud platform’s data display in detail. If you’re interested, visit the SenseCAP cloud platform to try adding devices and viewing data.

How to Select a LoRaWAN Gateway

LoRaWAN® network coverage is required when using the sensor. There are two gateway options:

- SenseCAP M2 for the Helium network

- SenseCAP M2 Multi-Platform for a standard LoRaWAN® network

Configure your model on the SenseCraft App

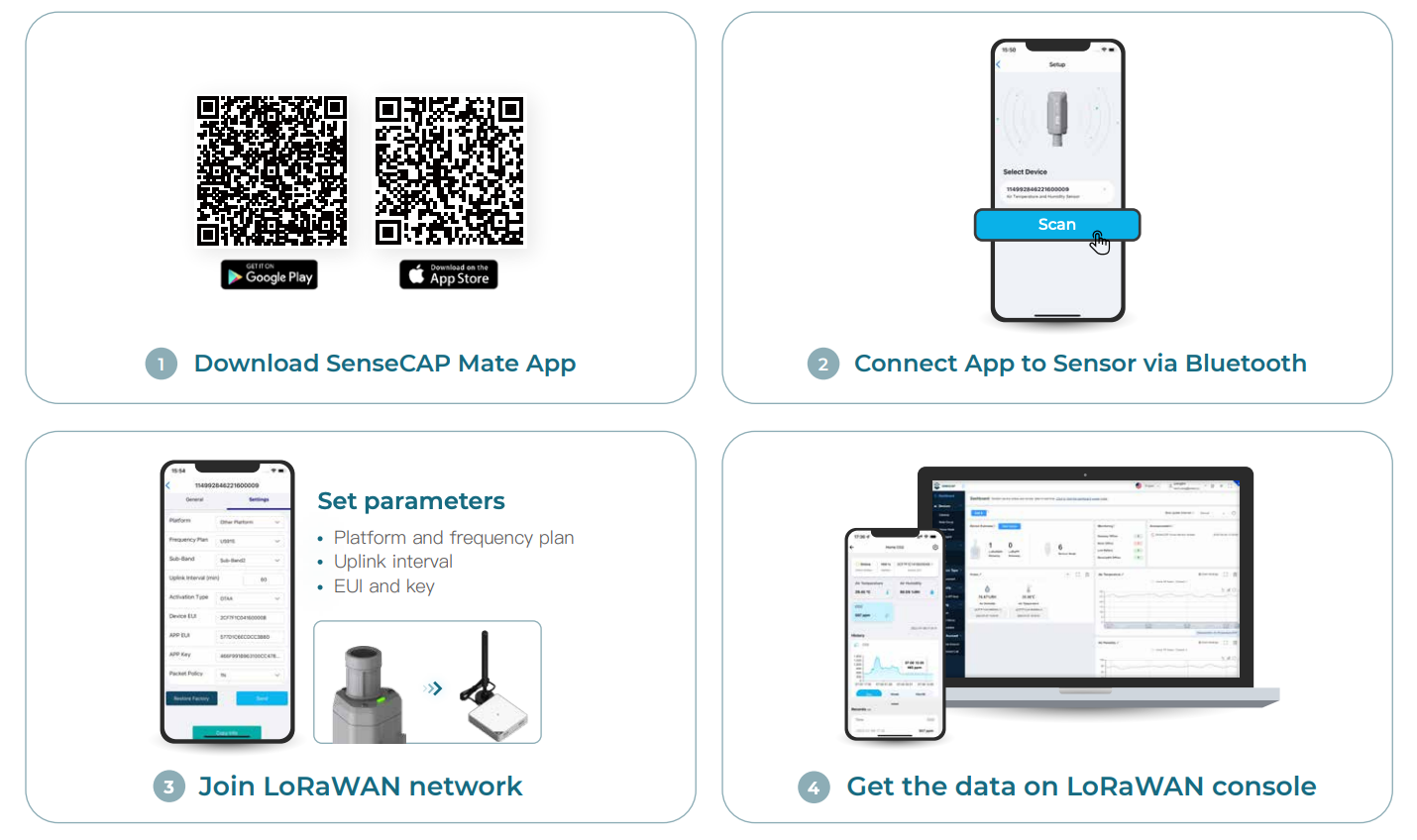

- Download SenseCraft App (formerly SenseCAP Mate App)

-

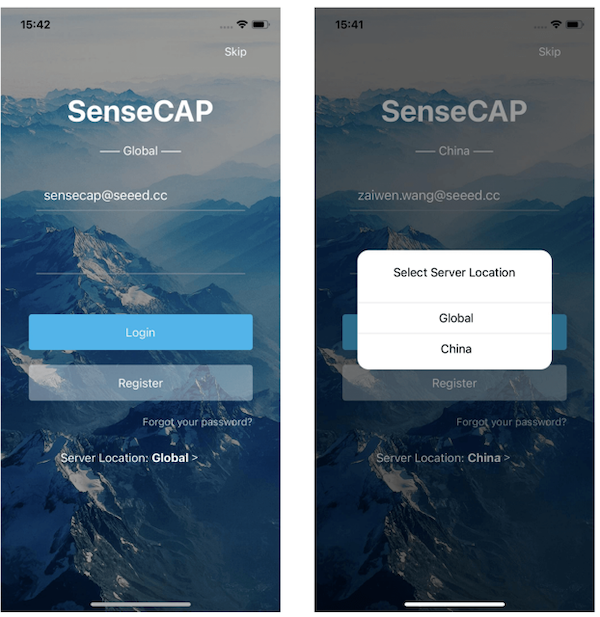

Open SenseCraft and login

-

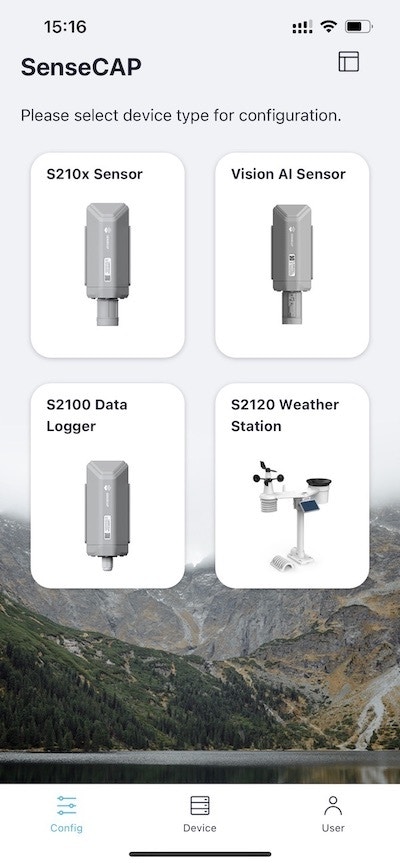

Under Config screen, select Vision AI Sensor

-

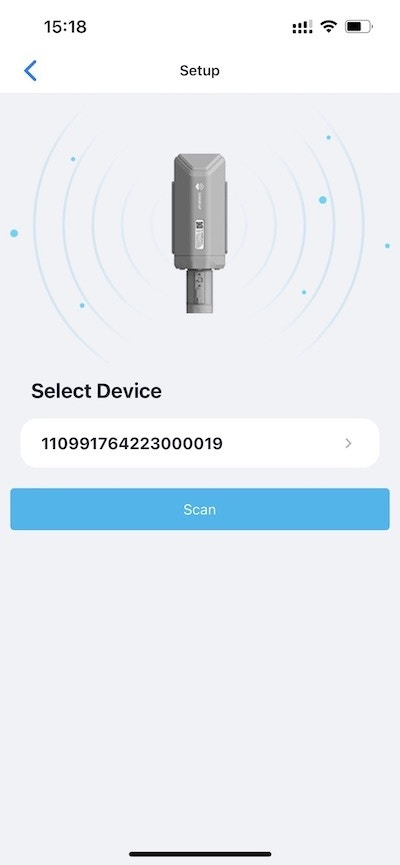

Press and hold the configuration button on the SenseCap A1101 for 3 seconds to enter bluetooth pairing mode

-

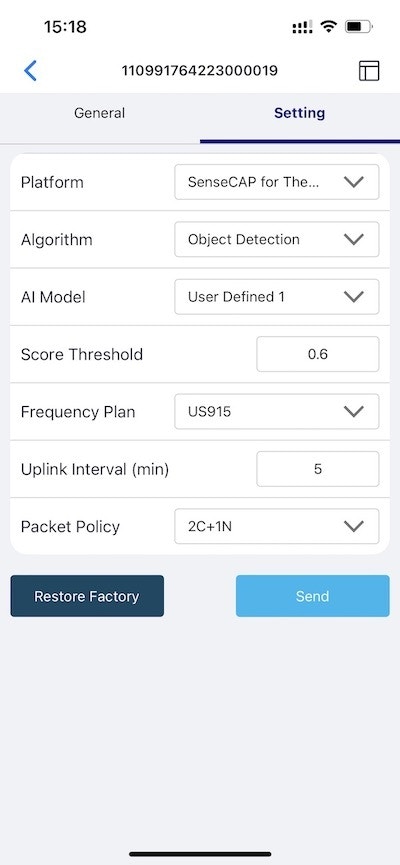

Click Setup and it will start scanning for nearby SenseCAP A1101 devices- Go to Settings and make sure Object Detection and User Defined 1 is selected. If not, select it and click Send

-

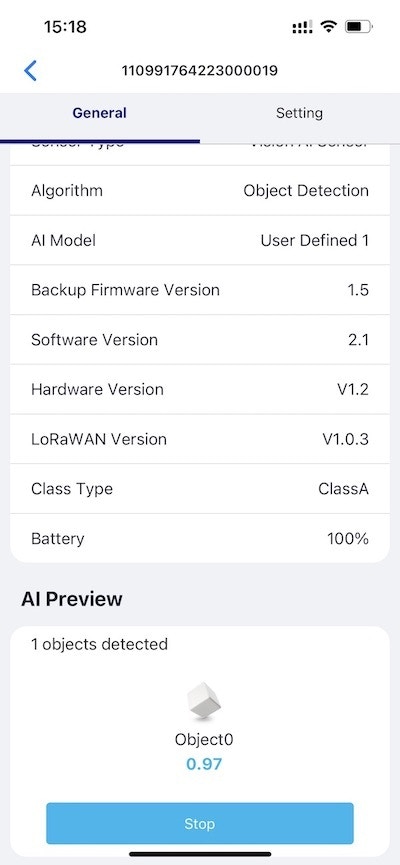

Go to General and click Detect, you’ll see the actual data here.

-

Click here to open a preview window of the camera stream

-

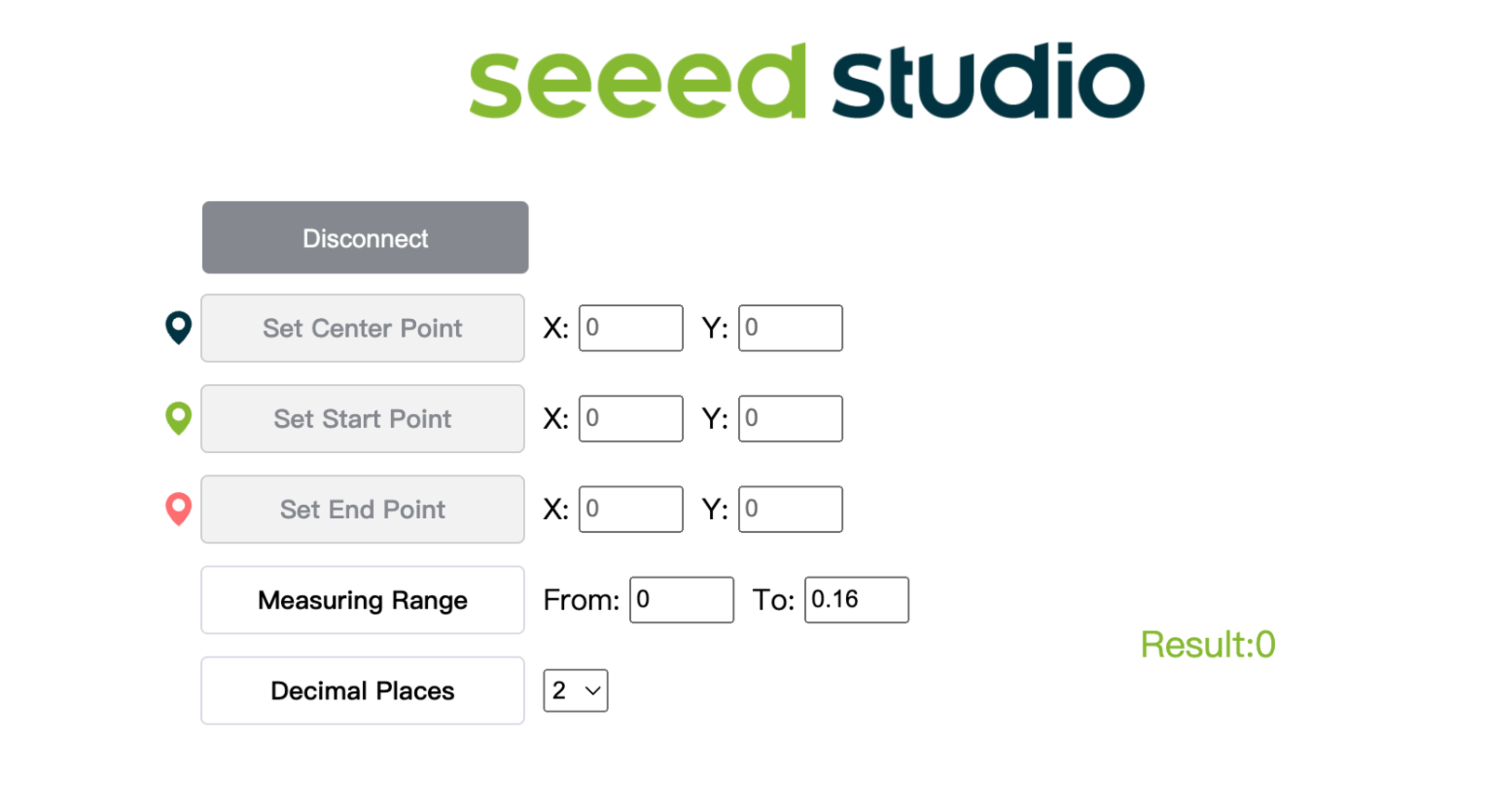

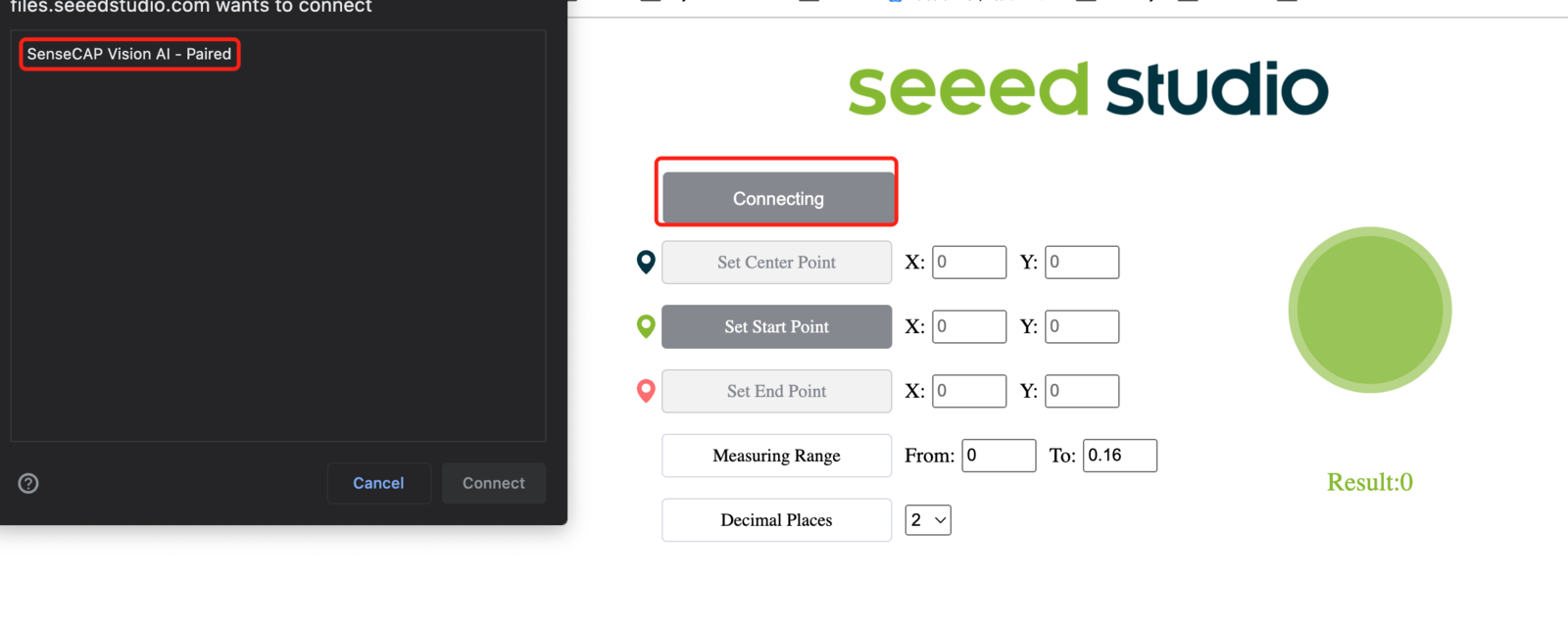

Click Connect button. Then you will see a pop up on the browser. Select SenseCAP Vision AI - Paired and click Connect

-

View real-time inference results using the preview window!

0, 1, 2, 3, 4 and so on. Also the confidence score for each detected object (0.72 in above demo) is displayed!
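Because the preview shows numeric class indices rather than label names, it can be convenient to map them back to your own labels when you post-process results. The sketch below is purely illustrative. The `Detection` record, the `describe` helper, and the example label are hypothetical and do not reflect the actual data format produced by the device or the app.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical record for one detected object."""
    class_id: int      # numeric class index as shown in the preview (0, 1, 2, ...)
    confidence: float  # confidence score between 0 and 1


def describe(detections, class_names, min_confidence=0.5):
    """Return readable lines for detections at or above the threshold."""
    lines = []
    for det in detections:
        if det.confidence < min_confidence:
            continue  # skip low-confidence detections
        # Fall back to the numeric index when no label is known.
        name = class_names.get(det.class_id, f"class {det.class_id}")
        lines.append(f"{name}: {det.confidence:.2f}")
    return lines


if __name__ == "__main__":
    # Example values only; the label name is an assumption.
    results = [Detection(0, 0.72), Detection(1, 0.31)]
    for line in describe(results, {0: "person"}):
        print(line)
```

The threshold of 0.5 is an arbitrary default chosen for the example. Pick a value that suits your model.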