Already got a trained model?

If you already have a trained model, you can bring it to Edge Impulse using our BYOM feature. Bring your own model (BYOM) allows you to profile, optimize, and deploy your own pretrained model (TensorFlow SavedModel, ONNX, or LiteRT (previously TensorFlow Lite)) to any edge device, directly from your Edge Impulse project.

How do I enable Keras (expert) mode?

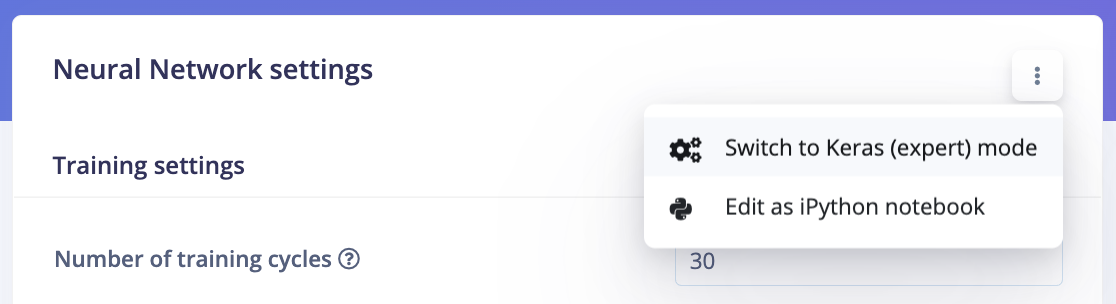

To enable Keras (expert) mode in Edge Impulse:

- Navigate to the model configuration page in Edge Impulse Studio.

- Click on the “⋮” button (three vertical dots) to open the options menu.

- Toggle the “Expert Mode” option.

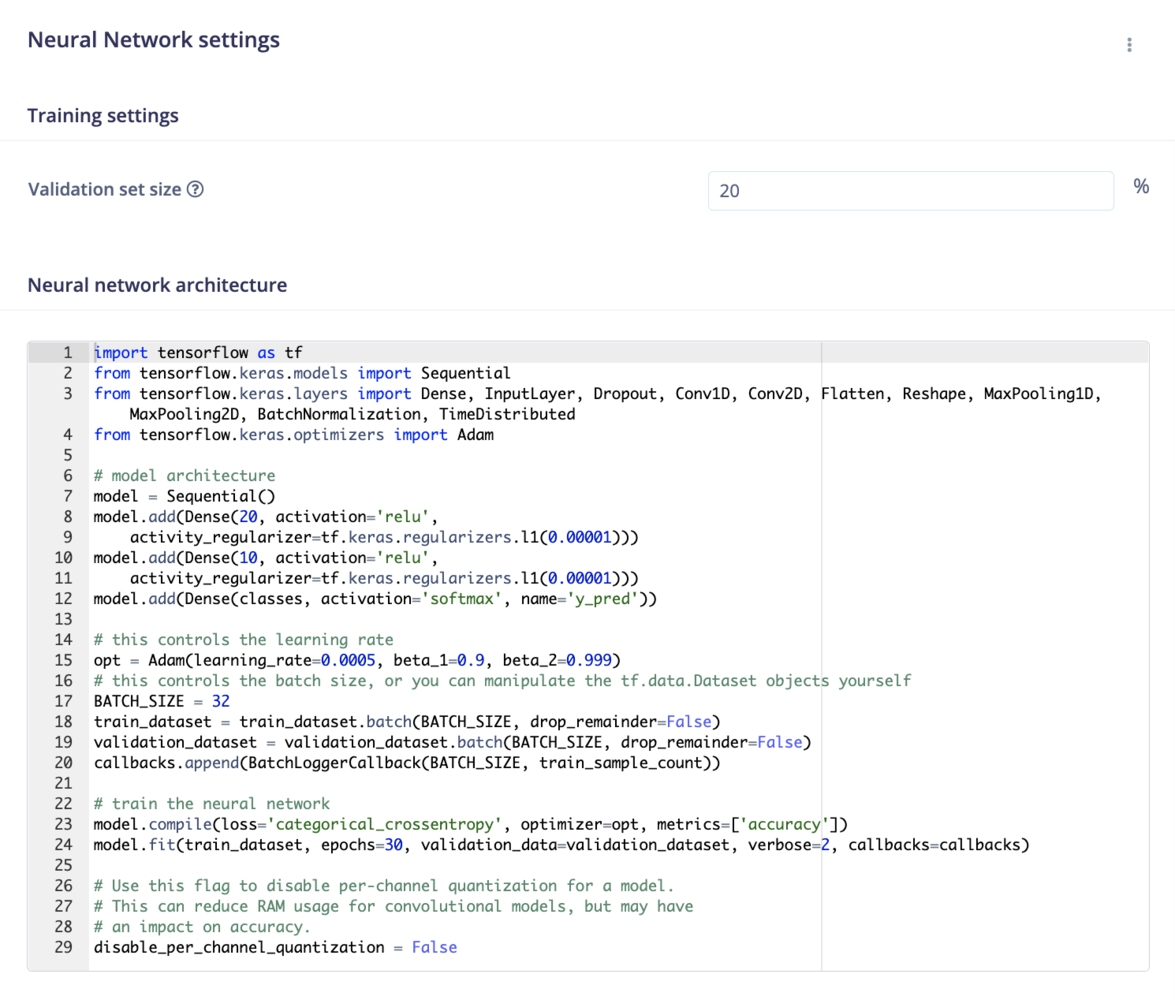

What can I do in Keras (expert) mode?

In expert mode, you have access to a wide range of advanced features, including:

- Custom Architectures: Use the full Keras API to create and modify neural network architectures. This includes changing loss functions, optimizers, and printing model architectures.

- Early Stopping Callback: Prevent overfitting by setting up early stopping callbacks, with control over parameters such as `monitor`, `min_delta`, `patience`, and `restore_best_weights`. Learn more about Early Stopping.

- Object Weighting for FOMO: Adjust the weighting of object output cells in the loss function, particularly useful for rare object detection scenarios. Expert Mode Tips for FOMO.

- MobileNet Cut Point Adjustment: Customize cut points in the MobileNetV2 architecture to modify spatial reduction and model capacity. MobileNet Cut Point Adjustment.

- FOMO Classifier Capacity Enhancement: Increase the capacity of the FOMO classifier by adding layers or increasing filters. Enhance FOMO Classifier.

- Transfer Learning Adjustments: Customize the final layers of pre-trained models like MobileNetV1 for specific tasks, such as audio or image classification. Learn more about Transfer Learning.

- Activation Functions: Implement custom activation functions to enhance model performance. Implementing Activation Functions.

- Optimizer Customization: Integrate advanced optimizers like VeLO for superior training efficiency. Using VeLO Optimizer.

- Loss Function Customization: Define and implement custom loss functions tailored to your specific problem. Customizing Loss Functions.

- Epoch Management: Control the number of training cycles and apply early stopping techniques to optimize training time and model accuracy. Epoch Management.
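
To make the early stopping parameters from the list above more concrete, here is a minimal pure-Python sketch of how `min_delta` and `patience` interact. It is a simplified illustration, not the Keras implementation: the function name `early_stopping_epoch` and the loss values are hypothetical, and the monitored metric (what `monitor` would select) is assumed to be a validation loss where lower is better. In expert mode itself you would typically pass the same parameters to the `tf.keras.callbacks.EarlyStopping` callback rather than write this logic by hand.

```python
def early_stopping_epoch(val_losses, min_delta=0.0, patience=0):
    """Simulate early stopping on a list of per-epoch validation losses.

    Returns (stop_epoch, best_epoch), both zero-based. stop_epoch is the
    last epoch that would run; best_epoch is the epoch whose weights
    restore_best_weights=True would bring back.
    """
    best_loss = float("inf")
    best_epoch = 0
    epochs_without_improvement = 0

    for epoch, loss in enumerate(val_losses):
        # An epoch only counts as an improvement if it beats the best
        # loss seen so far by more than min_delta.
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            # Stop once `patience` epochs in a row failed to improve.
            if epochs_without_improvement >= patience:
                return epoch, best_epoch

    # Training ran to completion without triggering early stopping.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation losses: improvement stalls after epoch 3.
losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.53, 0.54]
stop_epoch, best_epoch = early_stopping_epoch(losses, min_delta=0.01, patience=3)
print(stop_epoch, best_epoch)  # prints "6 3"
```

With these example values, training stops at epoch 6 because epochs 4, 5, and 6 all fail to beat the best loss (0.50, at epoch 3) by at least 0.01. The weights from epoch 3 are the ones that `restore_best_weights` would restore.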