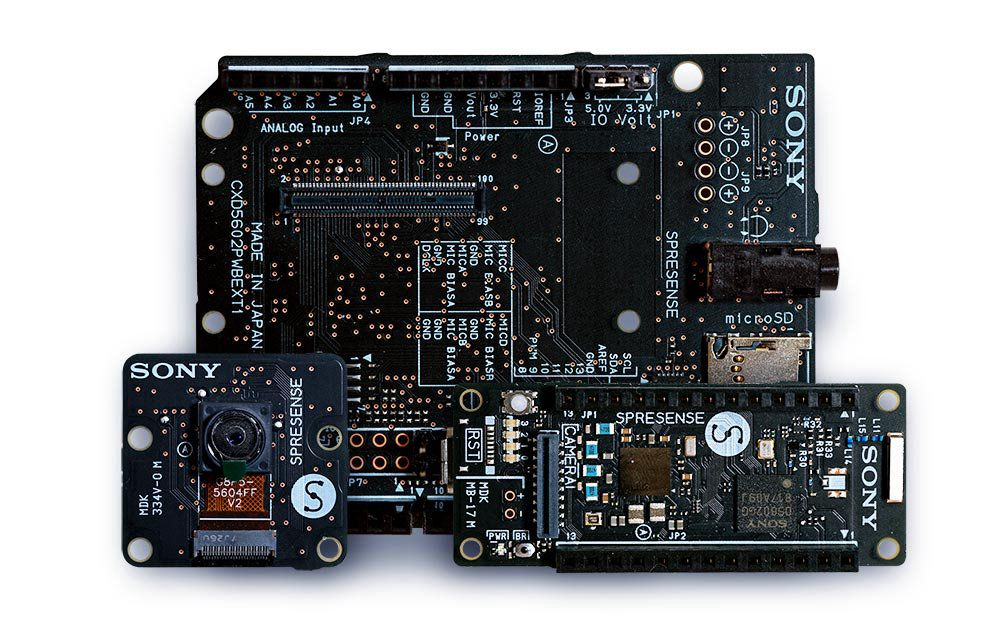

Sony’s Spresense is a small but powerful development board with a six-core Cortex-M4F microcontroller and integrated GPS. It supports a wide variety of add-on modules, including an extension board with a headphone jack, SD card slot, and microphone pins; a camera board; a sensor board with accelerometer, pressure, and geomagnetism sensors; and a Wi-Fi board. It is fully supported by Edge Impulse: you’ll be able to sample raw data, build models, and deploy trained machine learning models directly from the studio. To get started with the Sony Spresense and Edge Impulse you’ll need:


- The Spresense Main Development board.

- The Spresense Extension Board - to connect external sensors.

- A micro-SD card to store samples. This add-on is required: the board cannot operate without storing samples.

- For image models: the Spresense CXD5602PWBCAM1 camera add-on or the Spresense CXD5602PWBCAM2W HDR camera add-on.

- For accelerometer models: the Spresense Sensor EVK-70 add-on.

- For audio models: an electret microphone and a 2.2 kΩ resistor, wired to the extension board’s audio channel A. [Here is an example picture](https://cdn.edgeimpulse.com/images/spresense-audio.jpg).

  - Note: for audio models you must also have a FAT-formatted SD card for the extension board, with the Spresense’s DSP files included in a `BIN` folder on the card. Here is a screenshot of the SD card directory.

- For other sensor models: see below for SensiEDGE CommonSense support.
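For audio models, the SD card layout can be sanity-checked from your computer before inserting the card. The sketch below is a minimal example, assuming the card is mounted at a path you supply and that the DSP files sit in a top-level `BIN` folder as described in the note above. The function name `has_dsp_folder` is hypothetical, and the check does not verify which DSP files are present, only that the folder exists and is not empty.

```python
from pathlib import Path


def has_dsp_folder(sd_root: str) -> bool:
    """Return True if the SD card root contains a non-empty BIN folder.

    Assumption: the card is mounted at `sd_root`. FAT file names are
    case-insensitive, so the folder name is compared ignoring case.
    """
    root = Path(sd_root)
    if not root.is_dir():
        return False
    for entry in root.iterdir():
        if entry.is_dir() and entry.name.upper() == "BIN":
            # An empty BIN folder would not contain any DSP files.
            return any(child.is_file() for child in entry.iterdir())
    return False
```

Call it with the mount point of the card on your machine, for example `has_dsp_folder("/Volumes/SDCARD")` on macOS (the volume name here is only an illustration).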

Installing dependencies

To set this device up in Edge Impulse, you will need to install the following software:

- Edge Impulse CLI.

- On Linux:

  - GNU Screen: install for example via `sudo apt install screen`.
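To confirm that the dependencies are reachable from your terminal, a small check like the one below can help. It is a minimal sketch, assuming that the Edge Impulse CLI puts the `edge-impulse-daemon` command on your `PATH` and that `screen` is only needed on Linux, as listed above. The helper name `missing_tools` is hypothetical.

```python
import shutil
import sys


def missing_tools(tools):
    """Return the names in `tools` that cannot be found on PATH."""
    return [name for name in tools if shutil.which(name) is None]


if __name__ == "__main__":
    # edge-impulse-daemon is installed with the Edge Impulse CLI;
    # screen is only required on Linux.
    required = ["edge-impulse-daemon"]
    if sys.platform.startswith("linux"):
        required.append("screen")
    missing = missing_tools(required)
    print("Missing: " + ", ".join(missing) if missing else "All tools found.")
```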

Connecting to Edge Impulse

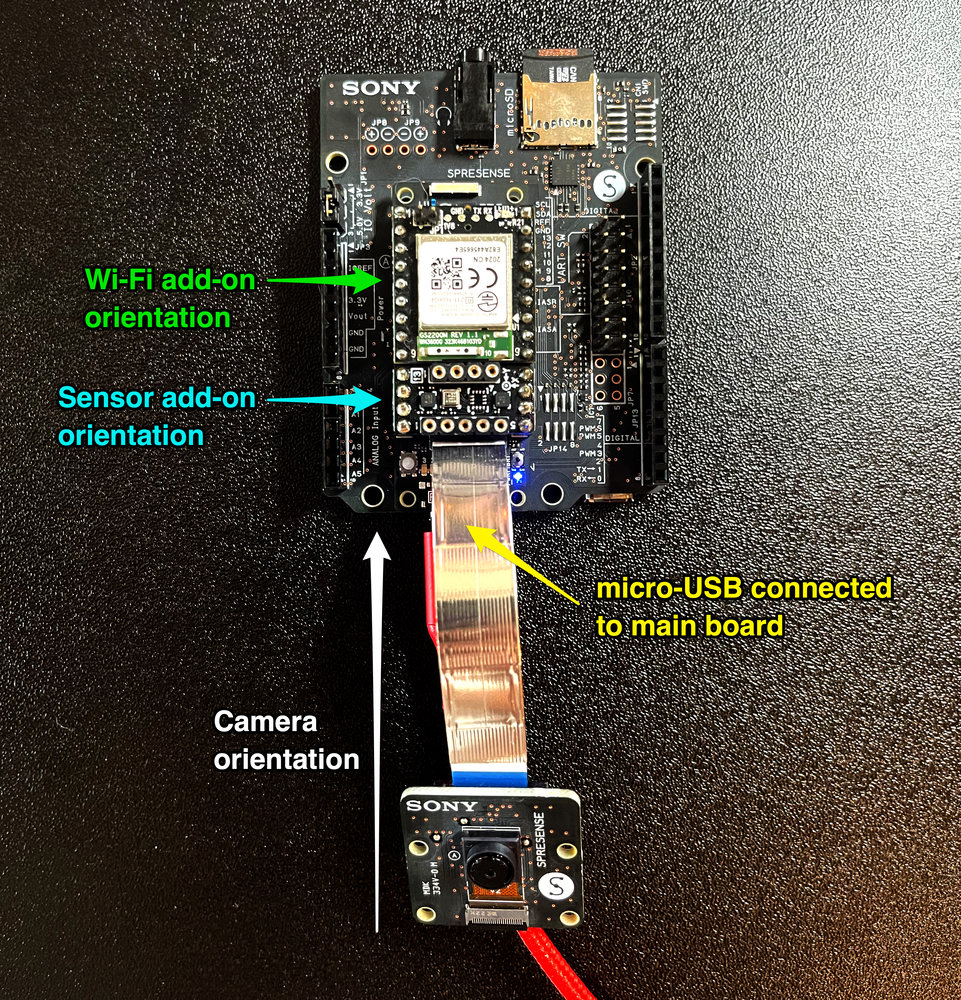

With all the software in place, it’s time to connect the development board to Edge Impulse.

1. Connect the optional camera, sensor, extension board, Wi-Fi add-ons, and SD card

2. Connect the development board to your computer

Use a micro-USB cable to connect the main development board (not the extension board) to your computer.

3. Update the bootloader and the firmware

The development board does not ship with the right firmware. To update the firmware:

- Install Python 3.7 or higher.

- Download the latest Edge Impulse firmware, and unzip the file.

- Open the flash script for your operating system (`flash_windows.bat`, `flash_mac.command` or `flash_linux.sh`) to flash the firmware.

- Wait until flashing is complete. The on-board LEDs should stop blinking to indicate that the new firmware is running.
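The two prerequisites in the steps above (a recent enough Python and the right script for your operating system) can be checked before flashing. This is a minimal sketch rather than part of the Edge Impulse firmware package: the helper names `flash_script_for` and `python_is_new_enough` are hypothetical, and the mapping only covers the three scripts named above.

```python
import platform
import sys

# Script names as listed in the firmware update steps.
# Keys are the values returned by platform.system().
FLASH_SCRIPTS = {
    "Windows": "flash_windows.bat",
    "Darwin": "flash_mac.command",
    "Linux": "flash_linux.sh",
}


def flash_script_for(system: str) -> str:
    """Map a platform.system() value to the matching flash script name."""
    try:
        return FLASH_SCRIPTS[system]
    except KeyError:
        raise ValueError(f"No flash script known for {system!r}") from None


def python_is_new_enough(version=sys.version_info) -> bool:
    """Check the Python 3.7-or-higher requirement of the firmware update."""
    return tuple(version[:2]) >= (3, 7)


if __name__ == "__main__":
    print("Python OK:", python_is_new_enough())
    print("Run:", flash_script_for(platform.system()))
```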

4. Setting keys

From a command prompt or terminal, run `edge-impulse-daemon`.

Mac: Device choice

If you have a choice of serial ports and are not sure which one to use, pick `/dev/tty.SLAB_USBtoUART` or `/dev/cu.usbserial-*`.

If you want to switch projects, run the command with `--clean`.
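The macOS port advice can be expressed as a small filter over a list of candidate port names. This is an illustrative sketch only: the function name `pick_port` is hypothetical, the order in which the two patterns are tried is arbitrary, and how you obtain the list of ports is left to you.

```python
from fnmatch import fnmatch

# Port names suggested for macOS; the order between them is arbitrary.
PREFERRED_PATTERNS = ["/dev/tty.SLAB_USBtoUART", "/dev/cu.usbserial-*"]


def pick_port(ports):
    """Return the first port matching a suggested pattern, or None."""
    for pattern in PREFERRED_PATTERNS:
        for port in ports:
            if fnmatch(port, pattern):
                return port
    return None
```

For example, given the made-up list `["/dev/cu.Bluetooth-Incoming-Port", "/dev/cu.usbserial-1410"]`, `pick_port` returns `"/dev/cu.usbserial-1410"`.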

Alternatively, recent versions of Google Chrome and Microsoft Edge can collect data directly from your development board, without the need for the Edge Impulse CLI. See this blog post for more information.

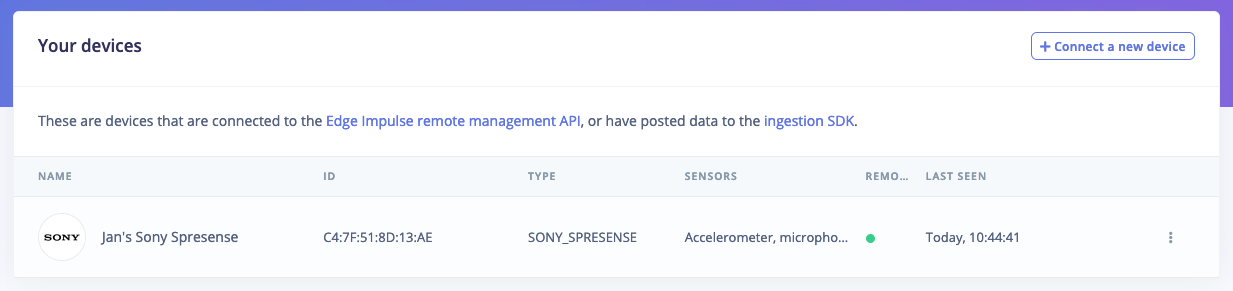

5. Verifying that the device is connected

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

With everything set up you can now build your first machine learning model with these tutorials:

- Keyword spotting

- Sound recognition

- Image classification

- Object detection

- Object detection with centroids (FOMO)

Troubleshooting

Error when flashing

If you see:

Daemon does not start

If the `edge-impulse-daemon` or `edge-impulse-run-impulse` commands do not start, it might be because of an error interacting with the SD card or because your board has an old version of the bootloader. To see the debug logs, run:

If you see `Welcome to nash`, you’ll need to update the bootloader. To do so:

- Install and launch the Arduino IDE.

- Go to Preferences and under ‘Additional Boards Manager URLs’ add `https://github.com/sonydevworld/spresense-arduino-compatible/releases/download/generic/package_spresense_index.json` (if there’s already text in this text box, add a `,` before adding the new URL).

- Then go to Tools > Boards > Board manager, search for ‘Spresense’ and click Install.

- Select the right board via: Tools > Boards > Spresense boards > Spresense.

- Select your serial port via: Tools > Port and selecting the serial port for the Spresense board.

- Select the Spresense programmer via: Tools > Programmer > Spresense firmware updater.

- Update the bootloader via Tools > Burn bootloader.

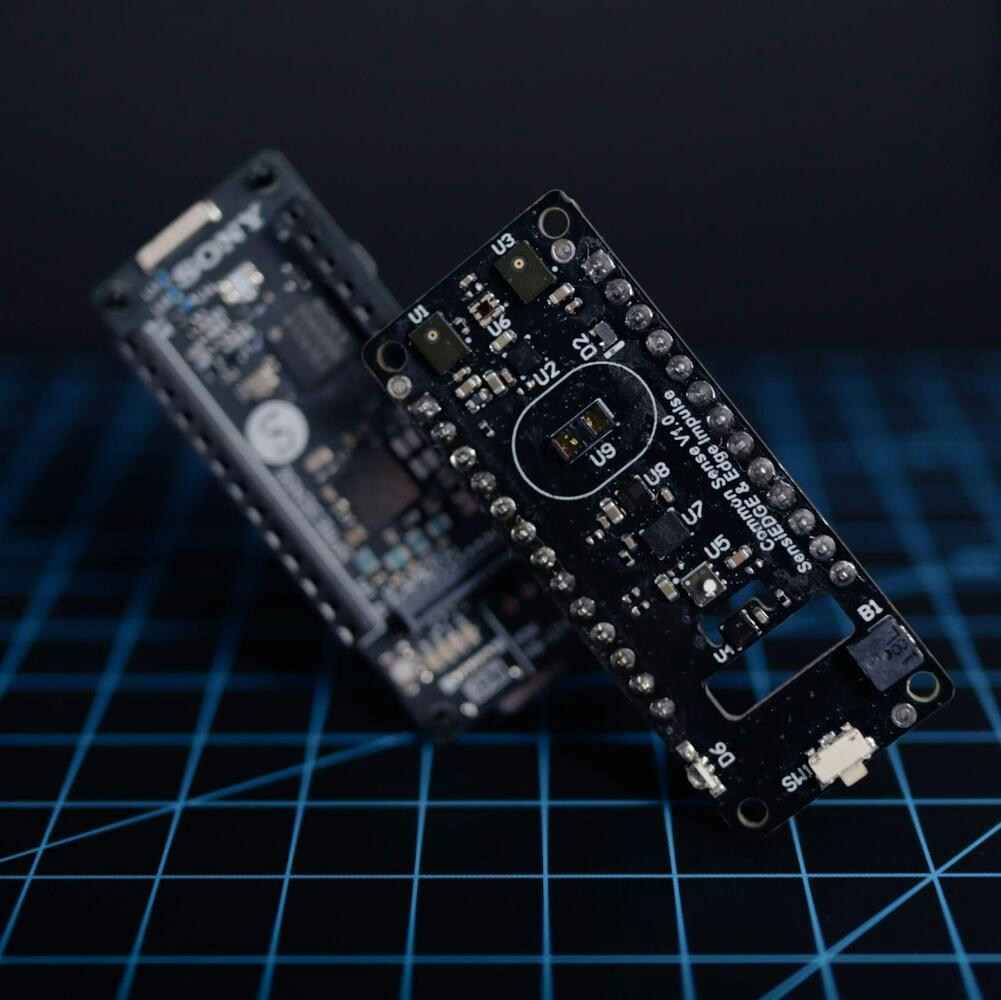

Sensor Fusion with Sony Spresense and SensiEDGE CommonSense

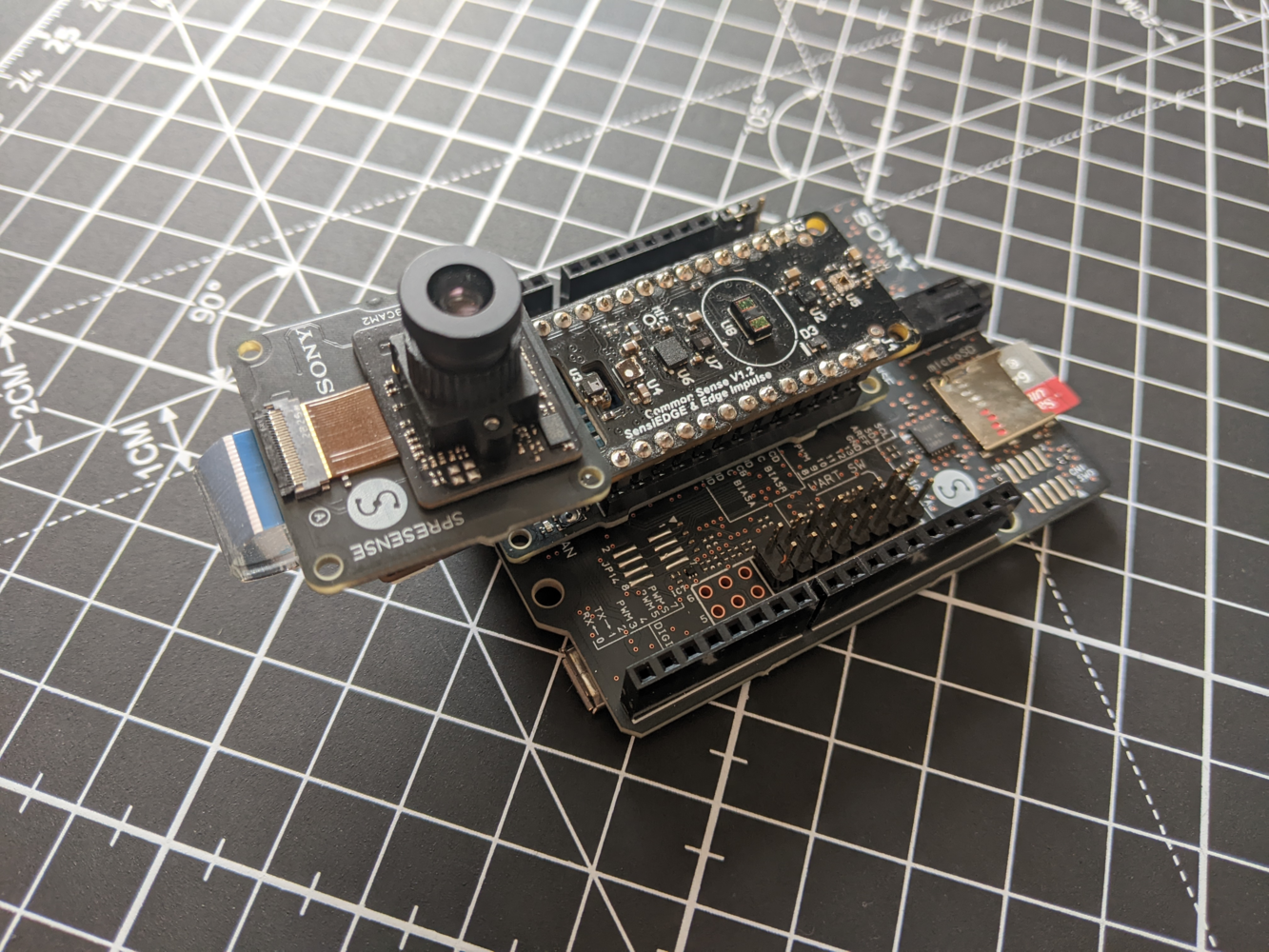

Edge Impulse has partnered with SensiEDGE to add support for sensor fusion applications to the Sony Spresense by integrating the SensiEDGE CommonSense sensor extension board. The CommonSense comes with a wide array of sensors that connect seamlessly to the Spresense and the Edge Impulse studio. In addition to the Sony Spresense, the Spresense extension board, and a micro-SD card, you will need the CommonSense board, which is available to purchase on Mouser.

Getting started with CommonSense

Connect the Sony Spresense extension board to the Sony Spresense, ensuring that the micro-SD card is loaded. Connect the SensiEDGE CommonSense in the orientation shown below, with the connection ports facing the same direction. The HD camera is optional but can be attached if you want to create an image-based application.

If you want to switch projects, run `edge-impulse-daemon` with `--clean`.

If prompted to select a device, choose commonsense: