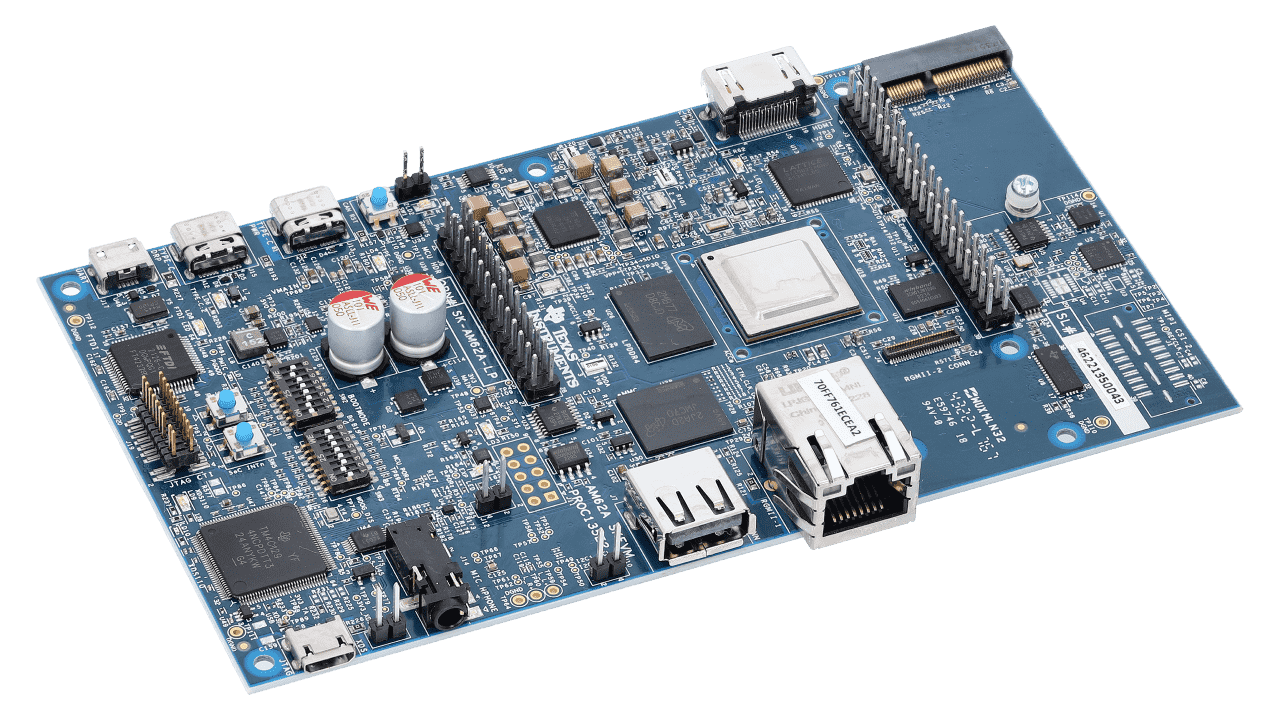

The SK-AM62A-LP is built around TI’s AM62A AI vision processor, which includes an image signal processor (ISP) supporting up to 5 MP at 60 fps, a 2 tera-operations-per-second (TOPS) AI accelerator, a quad-core 64-bit Arm® Cortex®-A53 microprocessor, a single-core Arm Cortex-R5F, and an H.264/H.265 video encoder/decoder. This makes the SK-AM62A-LP a good fit for developing low-power smart cameras, dashcams, machine-vision cameras and automotive front-camera applications.

To take full advantage of the AM62A’s AI hardware acceleration, Edge Impulse has integrated the TI Deep Learning Library and the AM62A-optimized Texas Instruments Edge AI Model Zoo, enabling low-code or no-code training and deployment from Edge Impulse Studio.

Installing dependencies

First, follow the AM62A Quick Start Guide to install the Linux distribution on the device's SD card.

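Edge Impulse supports PSDK 8.06.

Once the device has booted, install the Edge Impulse Linux CLI by running the following on the device:

```
npm config set user root && sudo npm install edge-impulse-linux -g --unsafe-perm
```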
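If the installation succeeded, both CLI commands used on this page should now be on the device's PATH. The Python sketch below checks for them with the standard library's shutil.which, assuming Python 3 is available on the device image. The command list simply mirrors the two commands this page uses, and reading a missing entry as a PATH problem is an assumption, not a documented diagnostic.

```python
import shutil

# The two commands this page uses; both are expected to come from the
# globally installed edge-impulse-linux npm package.
EI_COMMANDS = ("edge-impulse-linux", "edge-impulse-linux-runner")


def check_ei_cli(commands=EI_COMMANDS):
    """Map each command name to its resolved path, or None if it is not on PATH."""
    return {cmd: shutil.which(cmd) for cmd in commands}


if __name__ == "__main__":
    for cmd, path in check_ei_cli().items():
        # A None result usually means the install failed or npm's global
        # bin directory is not on PATH (an assumption; check your npm prefix).
        status = path if path else "NOT FOUND"
        print(f"{cmd}: {status}")
```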

Connecting to Edge Impulse

With all software set up, connect your camera or microphone to the device (see ‘Next steps’ further on this page if you want to connect a different sensor), and run:

```
edge-impulse-linux
```

This starts a wizard that asks you to log in and choose an Edge Impulse project. To switch projects later, run the command with --clean.

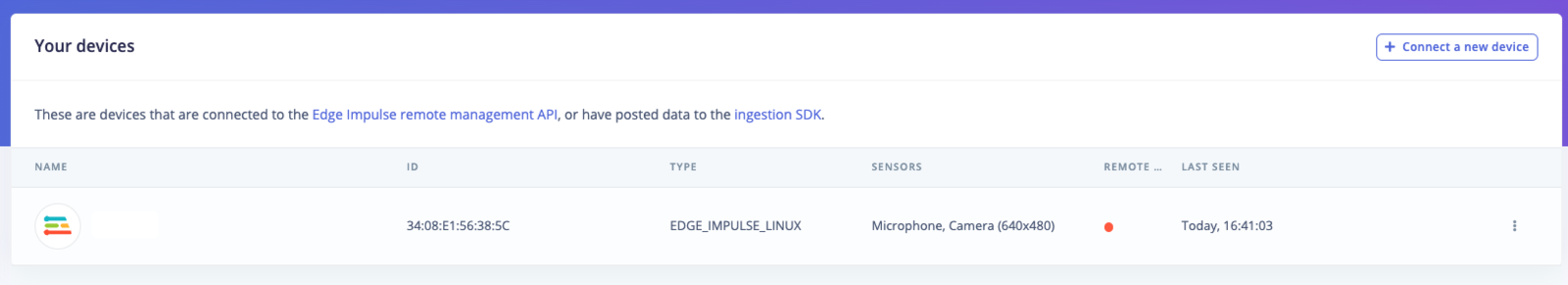

Verifying that your device is connected

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project and click Devices; the device will be listed there.

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

Looking to connect different sensors? Our Linux SDK lets you easily send data from any sensor and any programming language (with examples in Node.js, Python, Go and C++) into Edge Impulse.
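As a rough illustration of sending data from your own code, the Python sketch below builds a sample in the Edge Impulse data acquisition format, signs it with HMAC-SHA256, and can post it to the ingestion service using only the standard library. The endpoint URL, header names and JSON layout reflect our reading of the Edge Impulse ingestion API documentation and should be checked against it; the key strings, device name, device type and sensor names are hypothetical placeholders.

```python
import hashlib
import hmac
import json
import time
import urllib.request

# Hypothetical placeholders: copy the real keys from your project's dashboard.
API_KEY = "YOUR_API_KEY"
HMAC_KEY = "YOUR_HMAC_KEY"
# Assumed ingestion endpoint for training data; verify against the API docs.
INGESTION_URL = "https://ingestion.edgeimpulse.com/api/training/data"


def build_signed_sample(values, sensors, interval_ms, hmac_key,
                        device_name="am62a-01", device_type="SK-AM62A-LP"):
    """Return a JSON string in the data acquisition format, HMAC-SHA256 signed.

    device_name and device_type defaults are hypothetical examples.
    """
    data = {
        "protected": {"ver": "v1", "alg": "HS256", "iat": int(time.time())},
        "signature": "0" * 64,  # placeholder while computing the real signature
        "payload": {
            "device_name": device_name,
            "device_type": device_type,
            "interval_ms": interval_ms,
            "sensors": sensors,  # e.g. [{"name": "temp", "units": "Cel"}]
            "values": values,    # one inner list per reading, one entry per sensor
        },
    }
    # Sign the document with the all-zero signature in place, then swap it in.
    encoded = json.dumps(data)
    data["signature"] = hmac.new(
        hmac_key.encode("utf-8"), encoded.encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return json.dumps(data)


def upload(body, api_key, file_name, label):
    """POST a signed sample to the ingestion service and return the HTTP status."""
    req = urllib.request.Request(
        INGESTION_URL,
        data=body.encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-api-key": api_key,
            "x-file-name": file_name,
            "x-label": label,
        },
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return resp.status


if __name__ == "__main__":
    body = build_signed_sample([[21.5], [21.7]], [{"name": "temp", "units": "Cel"}], 1000, HMAC_KEY)
    print(body)
    # upload(body, API_KEY, "temp01", "idle")  # enable once real keys are set
```

Signing and uploading are kept separate so the payload can be inspected, or signed on one machine and sent from another, before any network call is made.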

Deploying back to device

To run your impulse locally, run the following on your Linux platform:

```
edge-impulse-linux-runner
```
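If you call the model from your own application instead of reading the runner's console, each inference returns a result dictionary. The helper below is a minimal sketch that turns one such dictionary into readable lines. It assumes the layout returned by the Edge Impulse Linux Python SDK: a "result" key holding either a "classification" map of label to score, or a "bounding_boxes" list for object detection. Confirm this against your own model's output. The 0.5 threshold is an arbitrary default.

```python
def summarize_result(res, threshold=0.5):
    """Return human-readable lines for predictions scoring at or above threshold.

    Assumed input shape (check against your model's actual output):
      {"result": {"classification": {"label": score, ...}}}        # classifier
      {"result": {"bounding_boxes": [{"label", "value", "x", "y",
                                      "width", "height"}, ...]}}   # detector
    """
    result = res.get("result", {})
    lines = []
    # Classification: list labels from highest to lowest score.
    scores = result.get("classification", {})
    for label, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
        if score >= threshold:
            lines.append(f"{label}: {score:.2f}")
    # Object detection: one line per box above the threshold.
    for bb in result.get("bounding_boxes", []):
        if bb["value"] >= threshold:
            lines.append(
                f"{bb['label']} ({bb['value']:.2f}) at "
                f"x={bb['x']} y={bb['y']} w={bb['width']} h={bb['height']}"
            )
    return lines
```

For example, summarize_result({"result": {"classification": {"cat": 0.9, "dog": 0.1}}}) keeps only the cat prediction, because the dog score falls below the threshold.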

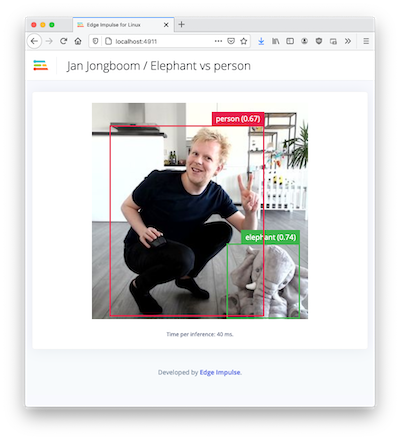

Image model?

If you have an image model, you can get a peek at what your device sees. Make sure your computer is on the same network as the device, then find the ‘Want to see a feed of the camera and live classification in your browser’ message in the console. Open the URL it shows in a browser to see both the camera feed and the classification results.

Example projects

Optimized Models for the AM62A

Texas Instruments provides models that are optimized to run on the AM62A. Those with Edge Impulse support are listed in the links below, and each GitHub repository includes instructions for installing the model into your Edge Impulse project. The original sources of these optimized models can be found in the Texas Instruments Edge AI Model Zoo.

Further resources