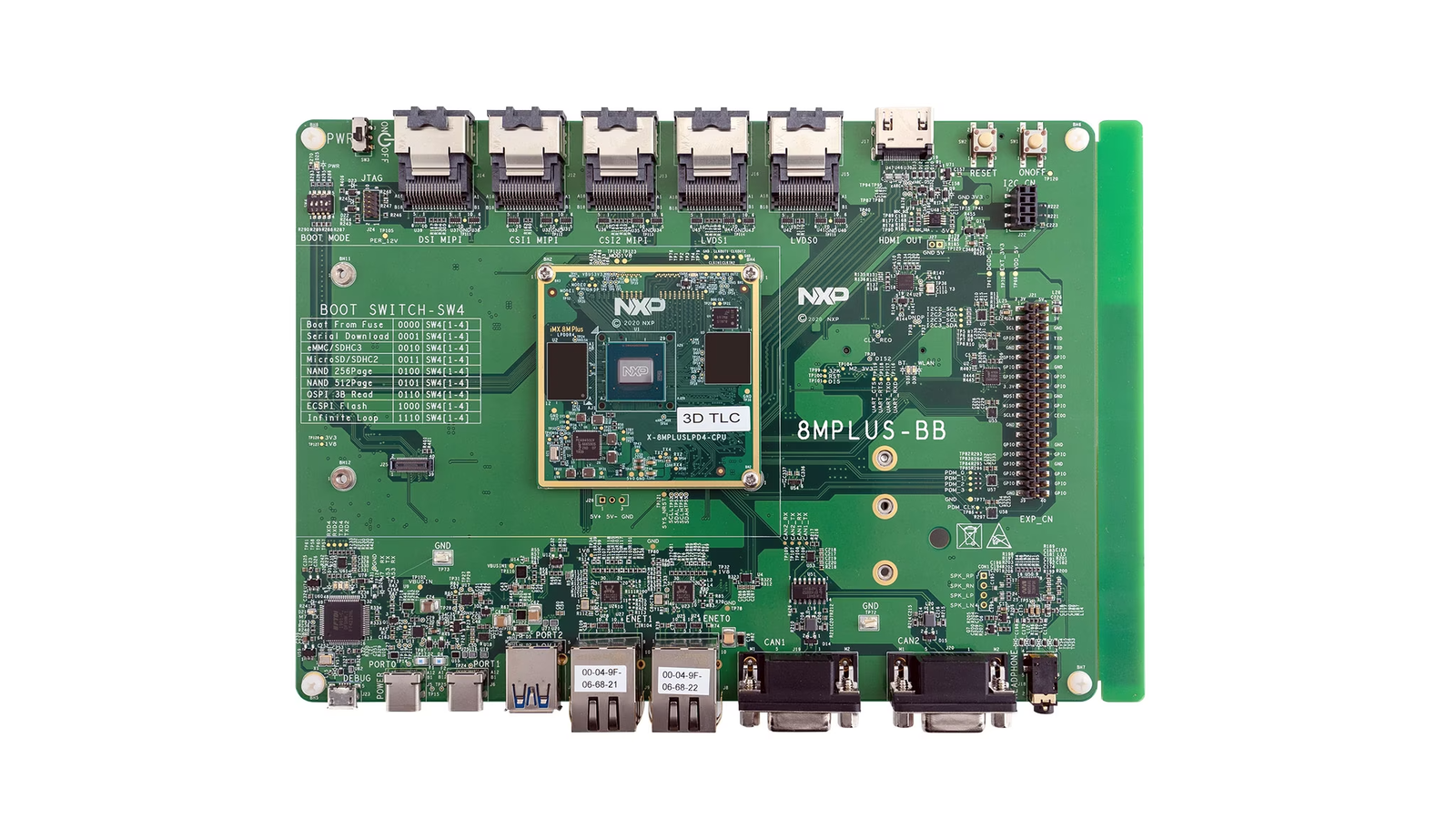

The NXP i.MX 8M Plus is a popular SoC found in many single-board computers, development kits, and finished products. When prototyping, many users turn to the official NXP Evaluation Kit for the i.MX 8M Plus, known simply as the i.MX 8M Plus EVK. The board contains many of the ports, connections, and external components needed to verify hardware and software functionality. The board can also be used with Edge Impulse to run machine learning workloads on the edge.


- i.MX 8M Plus Quad applications processor

- 4x Arm® Cortex-A53 up to 1.8 GHz

- 1x Arm® Cortex-M7 up to 800 MHz

- Cadence® Tensilica® HiFi4 DSP up to 800 MHz

- Neural Processing Unit

- 6 GB LPDDR4

- 32 GB eMMC 5.1

Special Note: The NPU is not used by Edge Impulse by default, but CPU inferencing alone is adequate in most situations. The NPU can be leveraged, however, if you export the TensorFlow model from your Block outputs after your model has been trained: in Edge Impulse Studio, go to Dashboard -> Block outputs. Once downloaded, you can build an application, or use Python, to run the model accelerated by the i.MX 8M Plus NPU.

Accessories included in the Evaluation Kit:

- i.MX 8M Plus CPU module

- Base board

- USB 3.0 to Type C cable

- USB A to micro B cable

- USB Type C power supply
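
Following up on the Special Note about the NPU: the sketch below shows one way the exported model might be run from Python. It assumes the export is a TensorFlow Lite file (the hypothetical `model.tflite`), that the `tflite_runtime` and `numpy` packages are installed on the board, and that the NPU delegate library lives at `/usr/lib/libvx_delegate.so`, which is common on NXP images but is not stated in this guide. Treat it as a starting point under those assumptions, not a verified recipe.

```python
"""Sketch: run an exported TensorFlow Lite model, optionally through an NPU delegate."""
import os

# ASSUMPTION: NXP Linux images commonly ship the NPU delegate at this path.
# It is not stated in this guide, so verify it on your own image.
DEFAULT_DELEGATE = "/usr/lib/libvx_delegate.so"


def pick_delegate(path=DEFAULT_DELEGATE):
    """Return the delegate path if the file exists, else None (CPU fallback)."""
    return path if os.path.isfile(path) else None


def run_once(model_path, delegate_path=None):
    """Run one inference on zero-filled input and return the first output tensor."""
    # Imported lazily so pick_delegate() works without these packages installed.
    import numpy as np
    from tflite_runtime.interpreter import Interpreter, load_delegate

    delegates = [load_delegate(delegate_path)] if delegate_path else []
    interpreter = Interpreter(model_path=model_path, experimental_delegates=delegates)
    interpreter.allocate_tensors()

    inp = interpreter.get_input_details()[0]
    out = interpreter.get_output_details()[0]
    # Zero-filled input of the expected shape and dtype; replace with real,
    # preprocessed sensor or image data in an actual application.
    interpreter.set_tensor(inp["index"], np.zeros(inp["shape"], dtype=inp["dtype"]))
    interpreter.invoke()
    return interpreter.get_tensor(out["index"])
```

For example, `run_once("model.tflite", pick_delegate())` would go through the delegate when the library file is present and fall back to the CPU otherwise.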

Installing dependencies

A few steps need to be performed to get your board ready for use.

Prerequisites

You will also need the following equipment to complete your first boot.

- Monitor

- Mouse and keyboard

- Ethernet cable or WiFi

Operating System Installation

NXP provides a ready-made operating system based on Yocto Linux that can be downloaded from the NXP website. However, Edge Impulse needs a Debian or Ubuntu-based image, so you will have to run an OS build that produces an image file you can flash to an SD card and boot from. The instructions for building the Ubuntu-derived OS for the board are located here: https://github.com/nxp-imx/meta-nxp-desktop

Follow the instructions, and once you have an image built, flash it to an SD card, insert the card into the i.MX 8M Plus EVK, and power on the board. Once it has booted, open a terminal on the device and run the commands that install the Edge Impulse dependencies.

Connecting to Edge Impulse

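You may need to reboot the board once the dependencies have finished installing. Once rebooted, start the Edge Impulse Linux client from the terminal. To switch projects later, run the same command with `--clean`.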

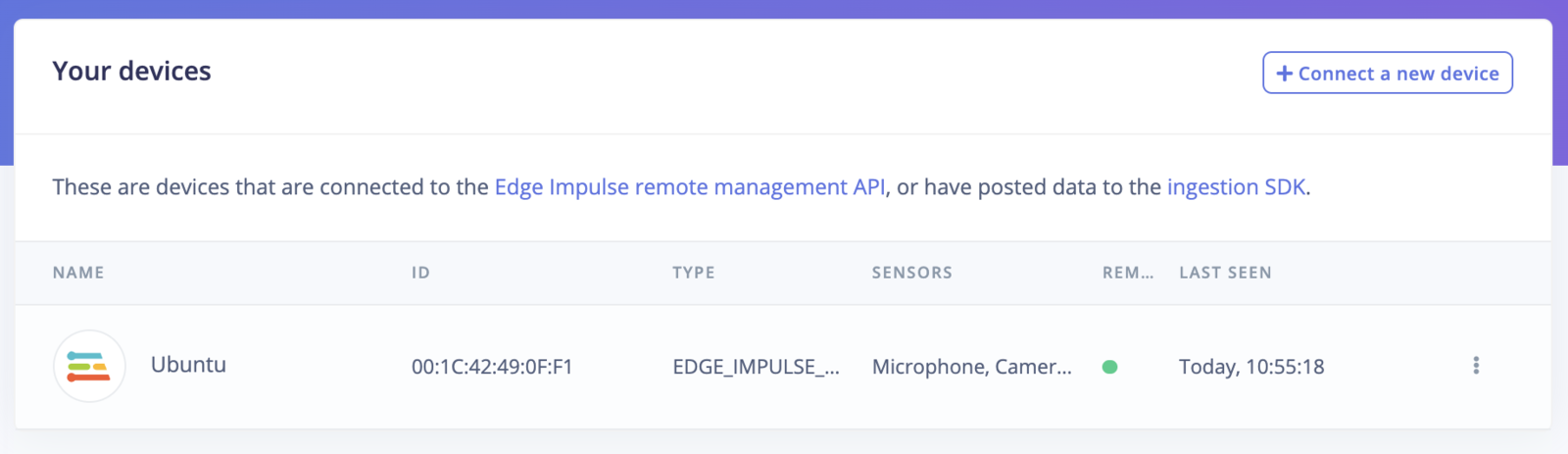
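
If the client does not start, it can help to confirm that the tools were actually installed. The following is a minimal Python sketch, assuming the commands are named `edge-impulse-linux` and `edge-impulse-linux-runner`; those names come from the Edge Impulse Linux CLI and are not spelled out in this guide, and the helper functions themselves are hypothetical.

```python
"""Sketch: check that the Edge Impulse Linux CLI tools are on PATH."""
import shutil

# ASSUMPTION: command names taken from the Edge Impulse Linux CLI;
# this guide does not list them explicitly.
EXPECTED_TOOLS = ("edge-impulse-linux", "edge-impulse-linux-runner")


def locate_tools(names=EXPECTED_TOOLS):
    """Map each command name to its resolved path, or None if not found."""
    return {name: shutil.which(name) for name in names}


def missing_tools(names=EXPECTED_TOOLS):
    """Return the command names that could not be found on PATH."""
    return [name for name, path in locate_tools(names).items() if path is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("All expected tools were found.")
```

Pass your own list of names to `missing_tools` to check any other dependencies your image is expected to provide.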

Verifying that your device is connected

That’s all! Your i.MX 8M Plus EVK is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

- Keyword spotting

- Sound recognition

- Image classification

- Object detection

- Object detection with centroids (FOMO)

Deploying back to device

To run your impulse locally on the i.MX 8M Plus EVK, open a terminal on the device and start the Edge Impulse Linux runner.

Image model?

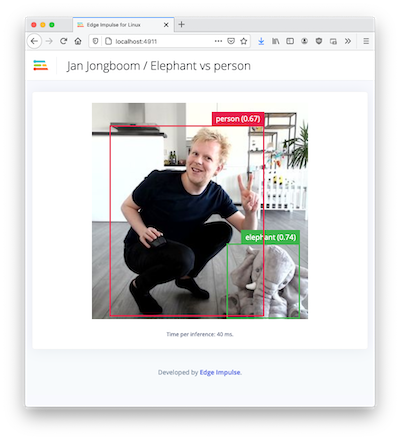

If you have an image model, you can get a peek at what your i.MX 8M Plus EVK sees. Make sure your computer is on the same network as the device, then find the ‘Want to see a feed of the camera and live classification in your browser’ message in the console. Open the URL from that message in a browser, and both the camera feed and the classification are shown: