Knowledge required

This tutorial assumes that you’re familiar with building applications using the Texas Instruments SimpleLink SDK as well as ARM GCC toolchains. You will also need make set up in your environment. If you’re unfamiliar with these tools, you can build binaries directly for your development board from the Deployment page in the studio.

Prerequisites

- Make sure you followed the Continuous motion recognition tutorial, and have a trained impulse.

- Clone the example-standalone-inferencing-ti-launchxl repository to your working directory.

- Install Texas Instruments UniFlash:

  - Install the desktop version for your operating system here

  - Add the installation directory to your PATH

  - See Flash the board for more details

Deploying your impulse

Head over to your Edge Impulse project, and go to Deployment. From here you can create the full library, which contains the impulse and all required external libraries. Select C/C++ Library and click Build to create the library. Then download and extract the .zip file.

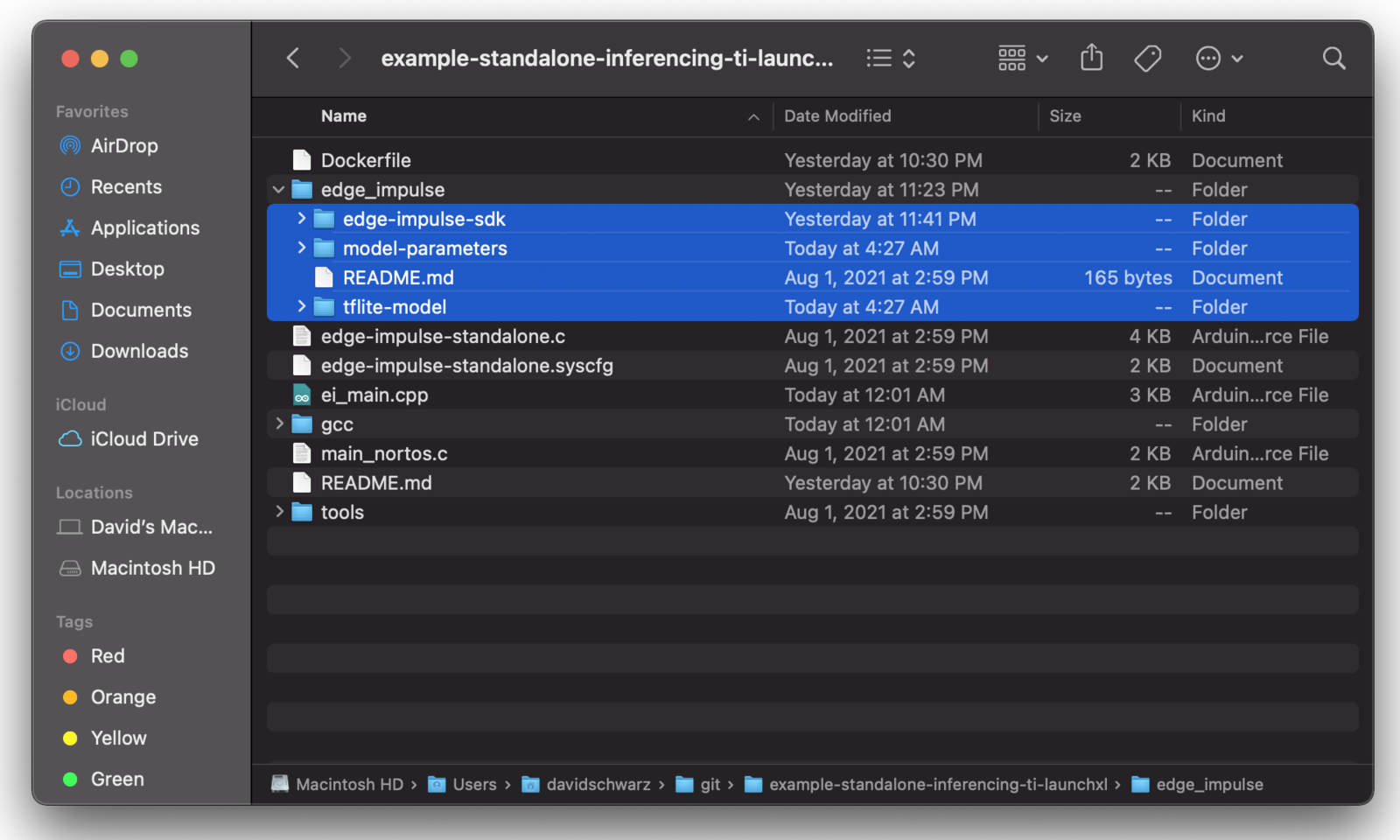

To add the impulse to your firmware project, paste the edge-impulse-sdk/, model-parameters/ and tflite-model/ directories from the downloaded .zip file into the edge_impulse/ directory of the example-standalone-inferencing-ti-launchxl repository. Make sure to overwrite any existing files in the edge_impulse/ directory.

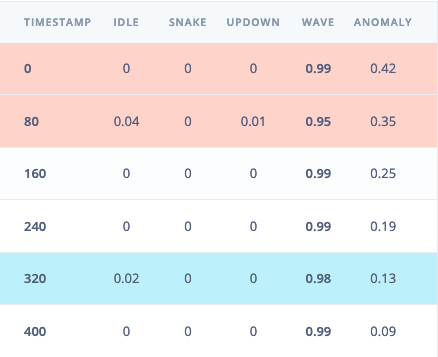

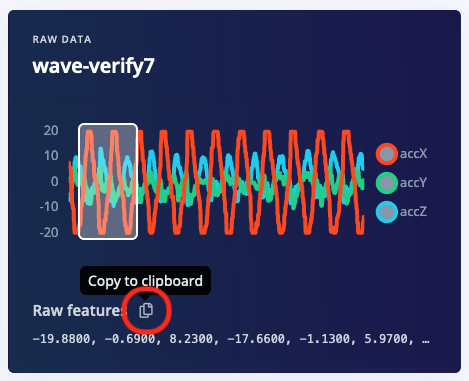

Open ei_main.cpp. In this minimal code example, inference is run from a static buffer of input feature data. To verify that the embedded model achieves the same results as the model trained in Studio, copy the same input features from Studio into the static buffer in ei_main.cpp.

To do this, first head back to the studio and click on the Live classification tab. Then load a validation sample, and click on a row under ‘Detailed result’.

In ei_main.cpp, paste the raw features inside the static const float features[] definition.
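As a minimal sketch of what the pasted buffer might look like, the block below defines a features[] array together with a length check and a small accessor. The values are placeholders, not real Studio output. kFrameSize and get_feature_data are hypothetical names used only for illustration: in the actual project the expected frame size comes from the generated model-parameters/ headers, and the accessor signature shown here is an assumption about the callback shape the SDK expects, so check it against ei_main.cpp.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>

// Assumption: a tiny frame size for illustration. In the real project the
// expected number of features comes from the generated model headers.
constexpr std::size_t kFrameSize = 6;

// Raw features as copied from Studio (placeholder values, not real data).
static const float features[] = {
    -1.25f, 0.50f, 9.81f,
    -1.10f, 0.42f, 9.79f,
};

// Catch a partial or mismatched paste at compile time.
static_assert(sizeof(features) / sizeof(features[0]) == kFrameSize,
              "features[] length must match the model's input frame size");

// Hypothetical accessor: copies `length` values starting at `offset` into
// `out`. Returns 0 on success, -1 if the request is invalid.
int get_feature_data(std::size_t offset, std::size_t length, float *out) {
    if (out == nullptr || offset > kFrameSize || length > kFrameSize - offset) {
        return -1;
    }
    std::memcpy(out, features + offset, length * sizeof(float));
    return 0;
}
```

The static_assert is the useful part of this sketch: if the number of pasted values does not match the frame size the model expects, the build fails instead of the firmware silently classifying a truncated or padded buffer.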

You can also modify ei_main.cpp to run classification on live data.
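For live data, the static buffer would be replaced by one that is filled at runtime. The sketch below illustrates the idea under several assumptions: three accelerometer axes stored interleaved as x, y, z (as in a motion recognition project), a very short window, and a stubbed read_accelerometer function standing in for a real sensor driver. All names here (kAxes, kSamplesPerWindow, read_accelerometer, fill_window, get_window_data) are hypothetical and not part of the example repository.

```cpp
#include <cassert>
#include <cstddef>

// Assumptions for illustration only: 3 axes and a 4-sample window.
constexpr std::size_t kAxes = 3;
constexpr std::size_t kSamplesPerWindow = 4;
constexpr std::size_t kWindowSize = kAxes * kSamplesPerWindow;

// Hypothetical sensor read. A real port would call the accelerometer
// driver here; this stub returns deterministic values instead.
void read_accelerometer(std::size_t sample_index, float *xyz) {
    xyz[0] = static_cast<float>(sample_index);
    xyz[1] = static_cast<float>(sample_index) + 0.5f;
    xyz[2] = 9.0f;
}

// Runtime window that takes the place of the static features[] array.
// Samples are stored interleaved: x0, y0, z0, x1, y1, z1, ...
static float window[kWindowSize];

// Fill one full window with consecutive sensor readings.
void fill_window() {
    for (std::size_t i = 0; i < kSamplesPerWindow; ++i) {
        read_accelerometer(i, &window[i * kAxes]);
    }
}

// Same accessor shape as for the static buffer, but reading from the
// runtime window. Returns 0 on success, -1 if the request is invalid.
int get_window_data(std::size_t offset, std::size_t length, float *out) {
    if (out == nullptr || offset > kWindowSize || length > kWindowSize - offset) {
        return -1;
    }
    for (std::size_t i = 0; i < length; ++i) {
        out[i] = window[offset + i];
    }
    return 0;
}
```

In a real application the window length and sampling interval would need to match the values used when the impulse was trained, and fill_window would be called before each classification.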

Building the project

There are two ways to build the project. The first uses the included Docker environment, pre-configured with the correct SimpleLink SDK version and ARM GCC toolchain. The other option is to build the project locally. This will require installing dependencies and making minor modifications to the makefile.

Building with Docker

If you are building with Docker, you will need to have Docker Desktop installed.

- Run the Docker Desktop executable, or start the Docker daemon from a terminal.

- From the example-standalone-inferencing-ti-launchxl directory, build the Docker image.

- Build the application by running the container.

- Connect the board to your computer using USB.

- Flash the board

Building locally

If you are building locally, you will first need to install the following dependencies. This guide assumes these are installed into the same working directory as the cloned standalone example repo.

- TI SimpleLink SDK version simplelink_cc13x2_26x2_sdk_5.20.00.52

- ARM GCC toolchain version 9-2019-q4-major

Next, open the gcc/makefile file in the standalone example repository, and define custom paths to your installed dependencies.

Remove the SIMPLELINK_CC13X2_26X2_SDK_INSTALL_DIR definition on line 2 of the makefile, and add definitions pointing to your installed SDK and toolchain at the top of the makefile.

Then build the project by running make from the gcc/ folder of the standalone firmware repo.

Flash the board

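If the UniFlash CLI is added to your PATH, you can flash the board from a terminal. If it is not, add the UniFlash installation directory (for example, /Applications/ti/uniflash_6.4.0 on macOS) to your PATH.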

If during flashing you encounter issues after UniFlash is added to PATH, ensure:

- The device is properly connected and/or the cable is not damaged.

- You have the proper permissions to access the USB device and run scripts. On macOS you can manually approve blocked scripts by clicking the System Preferences->Security Settings->Unlock Icon (Bottom Left) and then approving the blocked script.

- If on Linux, you may want to try copying tools/71-ti-permissions.rules to /etc/udev/rules.d/. Then re-attach the USB cable and try again.

Alternatively, the gcc/build/edge-impulse-standalone.out binary file may be flashed to the LaunchPad using the UniFlash GUI or web-app. See the Texas Instruments Quick Start Guide for more info.