Installing dependencies

Yocto image preparation/patch/build for V2H

Renesas provides a Yocto build system to build all the necessary packages and create the Linux image. The Renesas documentation calls out that the build system must be based on Ubuntu 20.04. The instructions here outline the necessary steps to set up your build environment. In order to use the Edge Impulse CLI tools, NodeJS v18 needs to be installed into the Yocto image that you build. You will need to download the required NodeJS v18 patch here. Following the instructions called out here, the following file must be downloaded from Renesas (the specific versions specified are required):

Post-flashing tasks

Once your RZ/V2H board is running your new image, you will need to complete an additional task. Please perform the following to set up the DRP-AI and TVM SDK:

Accessing the board using screen

The easiest way is to connect to the RZ/V2H board over serial using the USB Mini-B port.

- After connecting the board with a USB-C cable, power the board.

- Power on the board: connect the power cable to the board, then switch SW3 to ON and then SW2 to ON.

- Install screen on the host machine, then use it from Linux to open the board's serial console.

- You will see the boot process, then you will be asked to log in:

  - Log in with username root

  - There is no password
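The serial connection step can be sketched as a pair of small shell helpers. This is only an illustration under stated assumptions: the device name /dev/ttyUSB0 and the 115200 baud rate are not given on this page and may differ on your host, and the helper names are hypothetical.

```shell
# Assumptions: the serial adapter enumerates as /dev/ttyUSB0 and the
# console runs at 115200 baud. Neither value comes from the text above.
SERIAL_DEV="${SERIAL_DEV:-/dev/ttyUSB0}"
SERIAL_BAUD="${SERIAL_BAUD:-115200}"

# Print the USB serial devices currently present, so the right one can be chosen.
list_serial_devices() {
    found=0
    for dev in /dev/ttyUSB* /dev/ttyACM*; do
        [ -e "$dev" ] || continue   # skip patterns that matched nothing
        echo "$dev"
        found=1
    done
    [ "$found" -eq 1 ] || echo "no USB serial devices found"
}

# Open the board console with screen; leave it with Ctrl-A, then K.
open_console() {
    screen "$SERIAL_DEV" "$SERIAL_BAUD"
}
```

Running list_serial_devices before and after plugging in the cable shows which device belongs to the board. If it is not /dev/ttyUSB0, set SERIAL_DEV accordingly before calling open_console.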

Installing Edge Impulse Linux CLI

Once you have logged in to the board, install the Edge Impulse Linux CLI.

Connecting to Edge Impulse

With all software set up, connect your USB camera (or a supported MIPI CSI camera) to your Renesas board (see ‘Next steps’ further on this page if you want to connect a different sensor), and run the Edge Impulse Linux CLI. If you want to switch projects, run the command with --clean.
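As a rough sketch, the install step from the previous section and the connection step here could look like the following on the board. The npm invocation is the one commonly used for the edge-impulse-linux package on other Linux targets and is assumed to apply here as well. The helper names are hypothetical, and the image is assumed to already provide node and npm along with network access.

```shell
# Assumption: the image already provides node and npm (NodeJS v18, per the
# Yocto patch described earlier) and the board can reach the npm registry.
install_ei_cli() {
    node --version    # expect a v18.x release
    npm install edge-impulse-linux -g --unsafe-perm
}

# Start the connection wizard. Any arguments are passed through, so
# "connect_board --clean" logs in again and lets you pick another project.
connect_board() {
    edge-impulse-linux "$@"
}
```

Call install_ei_cli once after flashing, then connect_board each time you want to attach the board to a project.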

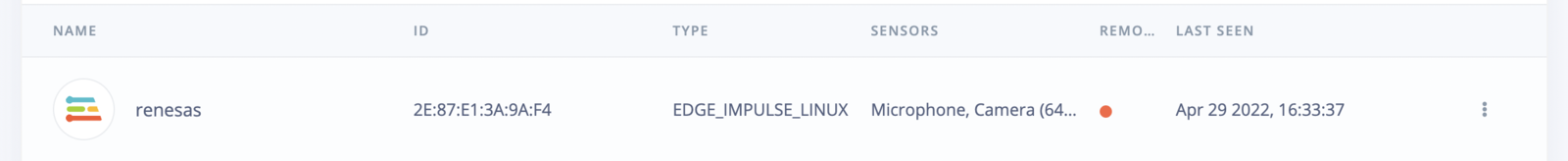

Verifying that your device is connected

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project, and click Devices. The device will be listed here.

Next steps: building a machine learning model

Currently, all Edge Impulse models can run on the RZ/V2H CPU, which is a dedicated Cortex-A55. In addition, you can bring your own model to Edge Impulse and use it on the device. However, if you would like to benefit from DRP-AI3 hardware acceleration, including higher performance and power efficiency, please use one of the following models:

For object detection:

- YOLOv5 (v5)

- FOMO (Faster Objects, More Objects)

For image classification:

- MobileNet v1, v2

Deploying back to device

To run your impulse locally, connect to your Renesas RZ/V2H and run the Edge Impulse Linux runner. Alternatively, you can download an eim model from the deployment page and use it with the runner as follows:

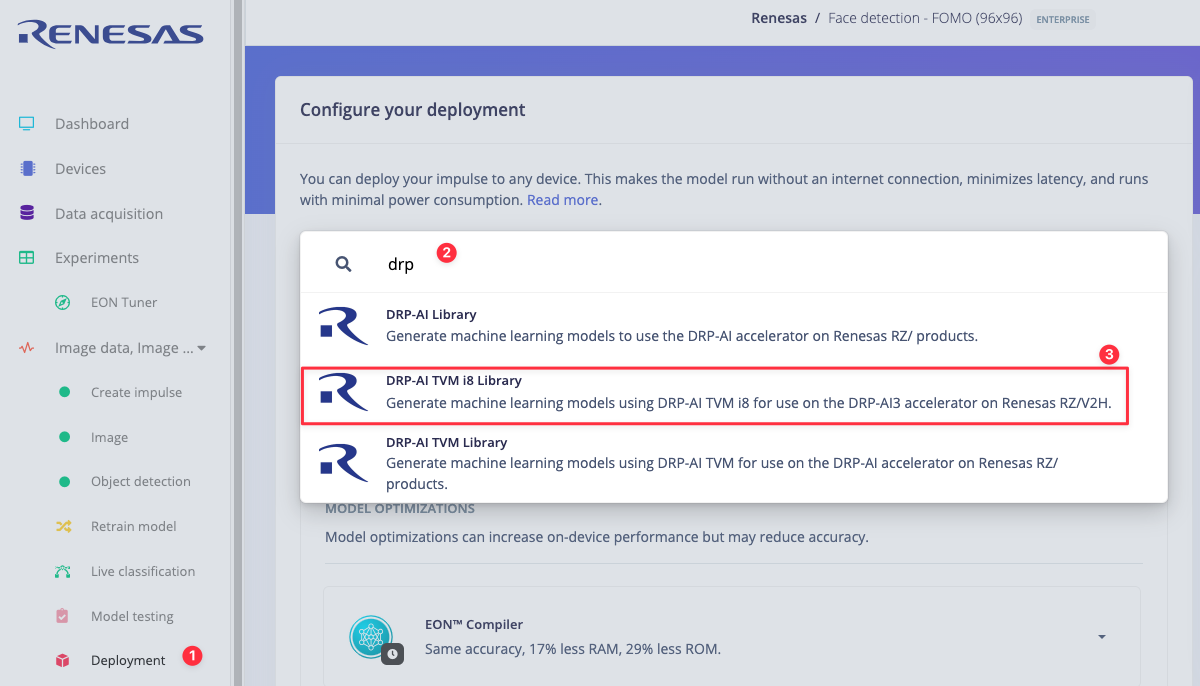

Go to the deployment page and select:

Open the URL shown when you start the runner and you will see both the camera feed and the classification results.
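The two ways of running a model described in this section might be wrapped as follows. Treat this as a sketch: the file name modelfile.eim and the helper names are placeholders chosen for illustration, and the board is assumed to be already connected to a project.

```shell
# Hypothetical file name for the downloaded model; change it as needed.
MODEL_FILE="${MODEL_FILE:-modelfile.eim}"

# Build the impulse in the connected project, download it and start classifying.
run_live() {
    edge-impulse-linux-runner
}

# Download the eim file once so it can be reused without rebuilding.
download_model() {
    edge-impulse-linux-runner --download "$MODEL_FILE"
}

# Run a previously downloaded eim file.
run_downloaded() {
    edge-impulse-linux-runner --model-file "$MODEL_FILE"
}
```

Keeping the download and run steps separate is convenient when the board does not have network access at run time.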

DRP-AI TVM i8 library

Since the RZ/V2H benefits from hardware acceleration using the DRP-AI, we provide you with the drp-ai-tvm-i8 library, which uses our C++ Edge Impulse SDK, DRP-AI TVM, and model headers that run on the hardware accelerator. If you would like to integrate the model source code into your applications and benefit from the DRP-AI, select the drp-ai-tvm-i8 library.

An example showing how to use the drp-ai-tvm-i8 library can be found in Deploy your model as a DRP-AI TVM i8 library.