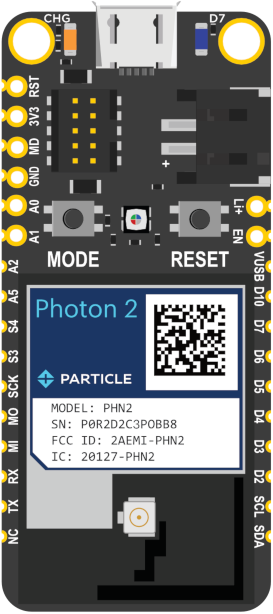

The Photon 2 with Edge ML Kit is a Wi-Fi development board built around a Realtek RTL8721DM MCU (ARM Cortex-M33). Its form factor is similar to the Argon (Adafruit Feather), but the Photon 2 supports 2.4 GHz and 5 GHz Wi-Fi and BLE, and has much more RAM and flash, so it can support larger applications. The kit includes sensors used for embedded machine learning inferencing. The Photon 2 is intended to replace both the Photon and Argon modules. It contains the same module as the P2, which makes it easier to migrate from a pin-based development module to an SMD mass-production module if desired.

Installing dependencies

To set this device up in Edge Impulse, you will need to install the following software:

- Edge Impulse CLI

- Particle CLI

- Particle Workbench (Optional, only required if deploying to Particle Library)

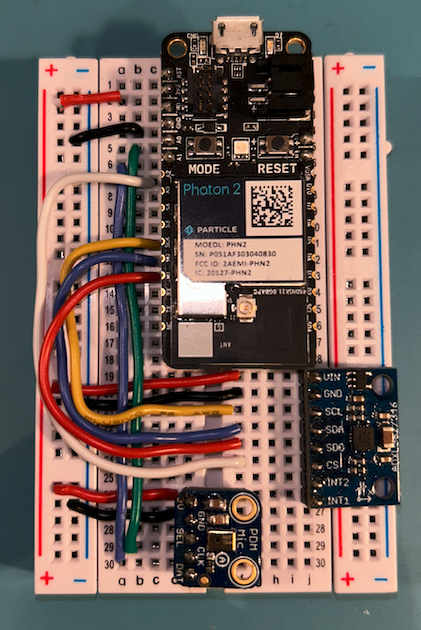

Set up the Particle Photon 2 with the accelerometer and PDM microphone

- Connect the ADXL362 to the Photon 2 as follows:

| ADXL362 | Photon 2 |
|---|---|
| VCC | 3V3 |
| GND | GND |
| CS | D13/A2 |
| SCK | D17 |
| MISO (SDO) | D16 (MISO) |
| MOSI (SDA) | D15 (MOSI) |
| INT1 | Not connected |
| INT2 | Not connected |

- Connect the microphone to the Photon 2 as follows:

| PDM Mic | Photon 2 |
|---|---|
| VCC | 3V3 |
| GND | GND |
| SEL | Not connected |
| CLK | A0 |
| DAT | A1 |

- Plug the USB cable into the device.

Connecting to Edge Impulse

Working directly with the device through the Particle Library deployment option requires Particle Workbench in VS Code. If you simply want to start gathering data for a project, you only need to install the Edge Impulse CLI and flash the following firmware to your device with your sensor(s) connected as described in the section above. Alternatively, you can clone the Particle Firmware repo and build the firmware locally.

Deploying back to device

Flash a Particle Photon 2 Binary

Flashing your Particle device requires the Particle command line tool. Follow these instructions to install the tools. Navigate to the directory where your Photon 2 firmware was downloaded and decompress the zip file. Then open a terminal and flash the extracted firmware to your device with the Particle CLI's `particle flash` command.

Collecting Data from the Particle Photon 2

Before starting ingestion, create an Edge Impulse account if you haven’t already. Also, be sure to set up the device per the instructions above. To collect data from the Photon 2, follow these steps:

- Create a new Edge Impulse Studio project and remember the name you give it.

- Connect your device to Edge Impulse Studio by running `edge-impulse-daemon --clean` in a terminal.

- After connecting, the Edge Impulse Daemon will ask you to log in to your account and select the project. Alternatively, you can copy the API Key from the Keys section of your project and use the `--api-key` flag instead of `--clean`.

- Open your Edge Impulse Studio Project and click on Devices. Verify that your device is listed here.

- Start gathering data by clicking on Data acquisition

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

- Audio Event Detection with Particle Boards - Particle Project: Doorbell SMS

- Keyword spotting - Particle Project: You’re Muted

- Sound recognition

- Recognize gestures from motion

- Particle Energy Monitoring Demo

Advanced Deployment

Particle library deployment

If you choose to deploy your project to a Particle Library rather than a binary, follow these steps to flash your firmware from Particle Workbench:

- Open a new VS Code window and ensure that Particle Workbench has been installed (see above)

- Use VS Code Command Palette and type in Particle: Import Project

- Select the `project.properties` file in the directory that you just downloaded and extracted from the section above.

- Use VS Code Command Palette and type in Particle: Configure Project for Device

- Select `deviceOS@5.5.0`

- Choose a target (e.g. P2; this option is also used for the Photon 2).

- You may sometimes need to put your device into DFU mode manually. You may proceed to the next step, but if you get an error indicating “No DFU capable USB device available”, follow these steps:

- Hold down both the RESET and MODE buttons.

- Release only the RESET button, while holding the MODE button.

- Wait for the LED to start flashing yellow.

- Release the MODE button.

- Compile and Flash in one command with: Particle: Flash application & DeviceOS (local)

The following video demonstrates how to collect raw data from an accelerometer and develop an application around the Edge Impulse inferencing library with the Photon 2.

Data ingestion via Particle webhook

If you would like to use a Particle webhook to send training data from your Particle board directly to Edge Impulse, or to any of our other APIs, follow these steps:

- Access Particle Console:

- Visit Particle Console.

- Log in with your Particle account credentials.

- Navigate to Integrations:

- Click on the “Integrations” tab in the left-hand menu.

- Select “Webhooks” from the available options.

- Create a New Webhook:

- Click “New Integration”.

- Choose “Webhook”.

- Webhook Configuration:

- Name: Assign a descriptive name to your webhook.

- Event Name: Specify the event name that triggers the webhook (e.g., “edge/ingest”).

- URL: Set this to the Edge Impulse ingestion API URL, typically something like `https://ingestion.edgeimpulse.com/api/training/data`.

- Request Type: Choose “POST”.

- Request Format: Select “Custom”.

- Custom Request Body:

- Input the JSON structure required by Edge Impulse. This will vary based on your project’s data schema.

- HTTP Headers:

- Add necessary headers:

- `x-api-key`: Your Edge Impulse API key.

- `Content-Type`: “application/json”.

- `x-file-name`: Use a dynamic data field like `{{PARTICLE_EVENT_NAME}}`.

- Advanced Settings:

- Response Topic: Create a custom topic for webhook responses, e.g., `{{PARTICLE_DEVICE_ID}}/hook-response/{{PARTICLE_EVENT_NAME}}`.

- Enforce SSL: Choose “Yes” for secure transmission.

- Save the Webhook:

- After entering all details, click “Save”.

- Test the Webhook:

- Use example device firmware to trigger the webhook.

- Observe the responses in the Particle Console.

- Debugging:

- If errors occur, review the logs for detailed information.

- Ensure payload format aligns with Edge Impulse requirements.

- Verify the accuracy of your API key and other details.
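Before wiring up the webhook, it can help to build one sample on a desktop machine and inspect the request the webhook is expected to produce. The following is a minimal Python sketch, not the firmware's or the webhook's actual implementation. It assumes the JSON body follows the Edge Impulse data acquisition format in its unsigned form (`"alg": "none"` with an all-zero signature), that the accelerometer axes are named `accX`, `accY` and `accZ`, and it uses a hypothetical `device_type` label. Check each of these against the ingestion API documentation for your project.

```python
import json
import time
import urllib.request

# Ingestion URL quoted in the webhook configuration above.
INGESTION_URL = "https://ingestion.edgeimpulse.com/api/training/data"

# Assumed axis names and units for a three-axis accelerometer such as the ADXL362.
SENSORS = [
    {"name": "accX", "units": "m/s2"},
    {"name": "accY", "units": "m/s2"},
    {"name": "accZ", "units": "m/s2"},
]


def build_sample(values, interval_ms, device_name, device_type="PARTICLE_PHOTON2"):
    """Wrap rows of readings in an unsigned data acquisition envelope.

    device_type is a hypothetical label, and the all-zero signature with
    alg "none" is an assumption to verify against the ingestion docs.
    """
    for row in values:
        if len(row) != len(SENSORS):
            raise ValueError("each row needs one value per sensor axis")
    return {
        "protected": {"ver": "v1", "alg": "none", "iat": int(time.time())},
        "signature": "0" * 64,
        "payload": {
            "device_name": device_name,
            "device_type": device_type,
            "interval_ms": interval_ms,
            "sensors": SENSORS,
            "values": values,
        },
    }


def build_request(sample, api_key, file_name):
    """Prepare (but do not send) the POST that the webhook would issue."""
    return urllib.request.Request(
        INGESTION_URL,
        data=json.dumps(sample).encode("utf-8"),
        method="POST",
        headers={
            "x-api-key": api_key,
            "x-file-name": file_name,
            "Content-Type": "application/json",
        },
    )
```

Passing the returned request to `urllib.request.urlopen` would upload the sample with a real API key. Printing `json.dumps(sample, indent=2)` first gives a body you can compare against the custom request body configured in the Particle Console.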