| Product | reComputer J1010 | reComputer J1020 | reComputer J2011 | reComputer J2012 |
|---|---|---|---|---|
| SKU | 110061362 | 110061361 | 110061363 | 110061401 |
| Equipped module | Jetson Nano 4GB | Jetson Nano 4GB | Jetson Xavier NX 8GB | Jetson Xavier NX 16GB |
| Carrier board | J1010 Carrier Board | Jetson A206 | Jetson A206 | Jetson A206 |
| Power interface | Type-C connector | DC power adapter | DC power adapter | DC power adapter |

Installing dependencies

You will also need the following equipment to complete your first boot:

- A monitor with an HDMI interface (for the A206 carrier board, a monitor with a DP interface can also be used).

- A mouse and keyboard.

- An Ethernet cable or an external Wi-Fi adapter (the Jetson has no onboard Wi-Fi).

Make sure your Ethernet connection has Internet access.
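If you want to automate this connectivity check (for example, in a provisioning script), here is a minimal Python sketch alongside the command-line check. It assumes Python 3 is available on the Jetson, and it tests reachability by opening a TCP connection to a public DNS server (`8.8.8.8`, port 53); that host and port are an arbitrary choice for illustration, not something this guide prescribes.

```python
import socket


def has_internet(host: str = "8.8.8.8", port: int = 53, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        # create_connection resolves the address and opens a TCP socket;
        # the with-block closes it again straight away.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # DNS failures, timeouts, and refused or unreachable connections
        # are all subclasses of OSError.
        return False


if __name__ == "__main__":
    print("Internet reachable" if has_internet() else "No Internet connection")
```

A TCP connect is used instead of ICMP because raw ICMP sockets usually need root privileges, while a plain TCP connection does not.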

Issue the following command to check:

Running the setup script

To set this device up in Edge Impulse, run the following commands (from any folder). When prompted, enter the password you created for the user on your Jetson in step 1. The script takes a few minutes to run on a fast microSD card.

Connecting to Edge Impulse
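Before connecting, you can confirm that the setup script finished by checking that the Edge Impulse CLI tools are on your `PATH`. The minimal Python sketch below assumes the script installs the Edge Impulse Linux CLI globally as `edge-impulse-linux` and `edge-impulse-linux-runner`; adjust the tool names if your installation differs. It avoids newer type-hint syntax so it also runs on the older Python 3 releases shipped with some JetPack versions.

```python
import shutil
from typing import List, Sequence

# Assumption: the setup script installs the Edge Impulse Linux CLI globally,
# which provides these two executables.
EDGE_IMPULSE_TOOLS = ("edge-impulse-linux", "edge-impulse-linux-runner")


def missing_tools(tools: Sequence[str] = EDGE_IMPULSE_TOOLS) -> List[str]:
    """Return the names in `tools` that shutil.which cannot find on PATH."""
    return [tool for tool in tools if shutil.which(tool) is None]


if __name__ == "__main__":
    missing = missing_tools()
    if missing:
        print("Setup incomplete; not found on PATH:", ", ".join(missing))
    else:
        print("Edge Impulse CLI tools found on PATH")
```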

With all software set up, connect your camera or microphone to your Jetson (see ‘Next steps’ further on this page if you want to connect a different sensor) and run:

If you want to switch projects, run the command with `--clean`.
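If you drive the Jetson from your own scripts, the connection wizard can also be launched from Python. This is a minimal sketch, assuming the wizard is the `edge-impulse-linux` command from the Edge Impulse Linux CLI and that its `--clean` flag resets the saved setup so you can pick a different project; `connect_command` and `connect` are hypothetical helper names used only for illustration.

```python
import subprocess
from typing import List


def connect_command(switch_project: bool = False) -> List[str]:
    """Build the argument list for the Edge Impulse Linux CLI wizard (hypothetical helper)."""
    cmd = ["edge-impulse-linux"]
    if switch_project:
        # --clean is used to switch projects: it resets the saved setup
        # so the wizard prompts for login and project again.
        cmd.append("--clean")
    return cmd


def connect(switch_project: bool = False) -> int:
    """Run the wizard interactively and return its exit code (hypothetical helper)."""
    # The wizard prompts on the terminal; subprocess.call inherits stdin/stdout.
    return subprocess.call(connect_command(switch_project))
```

For example, `connect(switch_project=True)` starts the wizard with `--clean`. Keeping the argument list separate from the call makes the command easy to log or inspect before it runs.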

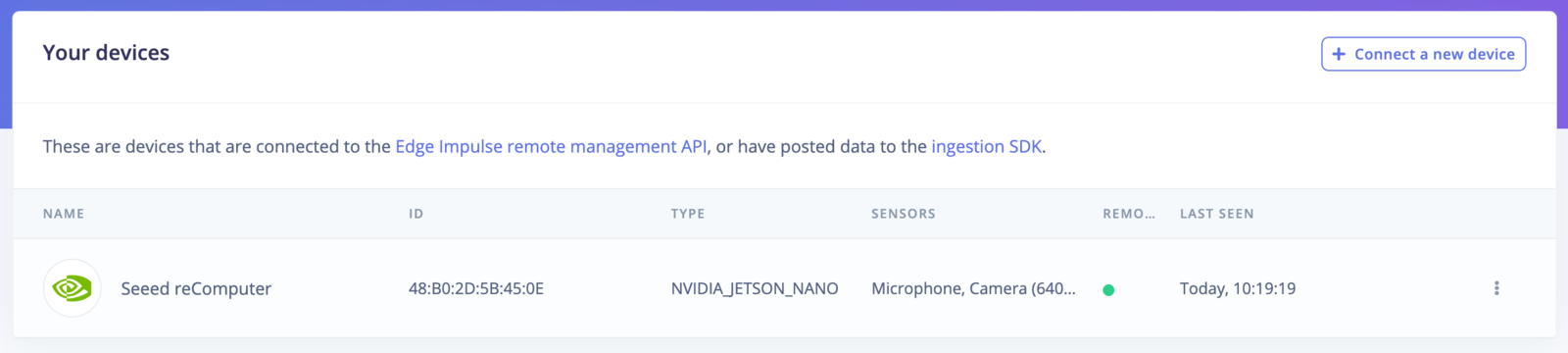

Verifying that your device is connected

That’s all! Your device is now connected to Edge Impulse. To verify this, go to your Edge Impulse project and click Devices. Your device is listed there.

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

- Keyword spotting

- Sound recognition

- Image classification

- Object detection

- Object detection with centroids (FOMO)

Deploying back to device

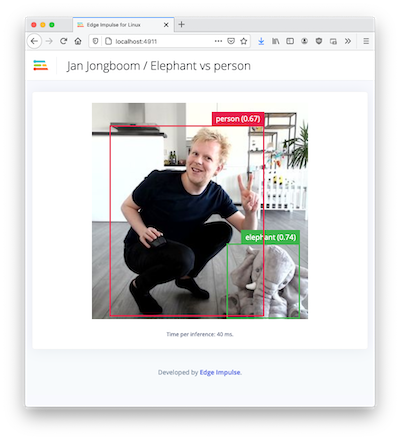

To run your impulse locally, connect to your Jetson again and run:

Image model?

If you have an image model, you can get a peek at what your device sees. From a computer on the same network as your device, find the ‘Want to see a feed of the camera and live classification in your browser’ message in the console and open the URL it shows in a browser. Both the camera feed and the live classification results are shown:
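If you save the console output to a file (for example, when running over SSH), a minimal Python sketch can pull out the feed URL for you. It matches the message text quoted above; the assumption that the URL appears as an `http://` or `https://` address on that line or a later one is an illustration, not a documented log format.

```python
import re
from typing import Iterable, Optional

# Message text quoted from this guide; the URL format that follows is an assumption.
FEED_MESSAGE = "Want to see a feed of the camera and live classification in your browser"
URL_PATTERN = re.compile(r"https?://\S+")


def find_feed_url(lines: Iterable[str]) -> Optional[str]:
    """Return the first URL printed on or after the feed message, or None."""
    seen_message = False
    for line in lines:
        if FEED_MESSAGE in line:
            seen_message = True
        if seen_message:
            match = URL_PATTERN.search(line)
            if match:
                return match.group(0)
    return None
```

For example, `find_feed_url(open("runner.log"))` reads a hypothetical saved log; URLs printed before the feed message are ignored.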