By processing data closer to where the data is generated, we can reduce latency, limit bandwidth usage, improve reliability, and increase data privacy.

Network architecture overview

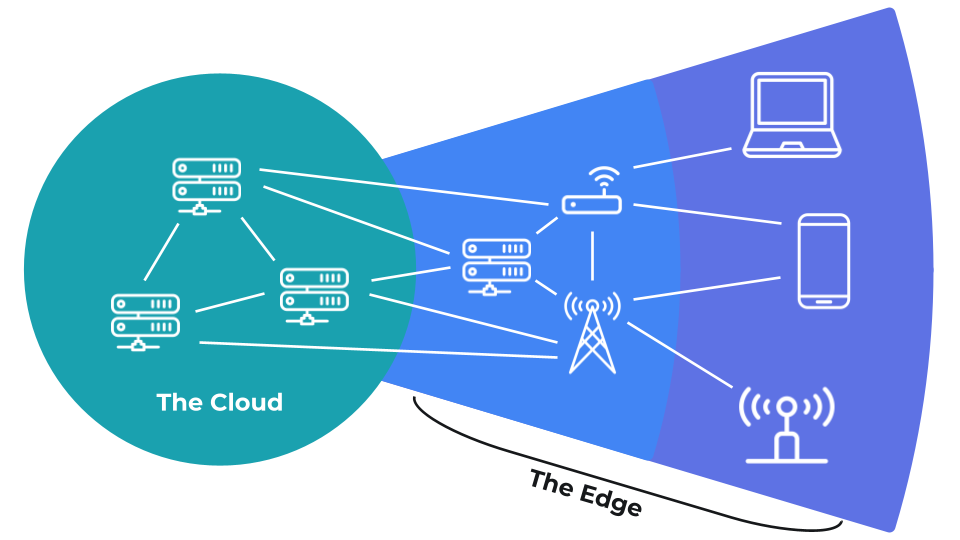

Most networking architectures can be divided into the “cloud” and the “edge.” Cloud computing consists of applications and services running on remote, internet-connected devices. Edge computing is essentially everything that is not part of the cloud, i.e., not hosted out on the internet.

Note: a sensor is a device that measures a physical property of its environment (such as temperature, pressure, humidity, or acceleration) and converts that measurement into a signal (often an electrical signal) that can be interpreted by a human or computer.

Sometimes, data can be stored and processed on the end device, like saving a local spreadsheet or playing a single-player game. In other cases, you need the power of cloud computing to stream movies, host websites, perform complex data analysis, and so on.

Cloud computing

You are likely already familiar with many cloud computing services, such as Netflix, Spotify, Salesforce, HubSpot, Dropbox, and Google Drive. These services run on powerful, internet-connected servers that you access through a client application, such as a browser.

Most of the time, these services run on top of one of the major cloud computing platforms, like Amazon Web Services, Microsoft Azure, or Google Cloud Platform. Such platforms offer containerized operating systems that allow you to easily build your application in a modular fashion and scale up production to meet the demand of thousands or millions of users.

The benefits of cloud computing include:

- Large servers offer powerful computing capabilities that can crunch numbers and run complex algorithms quickly

- Remote access to services from any device (as long as you have an internet connection)

- Processing and storage can be scaled on demand

- Physical servers are managed by large companies (e.g. Google, Amazon, Microsoft) so that you do not need to handle the infrastructure and maintenance

Edge computing

In addition to cloud computing, you also have the option of running services directly on the end devices or on local network servers. Processing such edge data might include running a user application (e.g. a word processor), analyzing sensor data to look for anomalies, identifying faces in a doorbell camera feed, and hosting an intranet website accessible only to local users.

According to Ericsson, there will be over 7 billion smartphones in the world by 2025. Additionally, the International Data Corporation (IDC) predicts a staggering 41.6 billion IoT devices will be in use by 2025. These devices will produce nearly 80 zettabytes of data that year, which amounts to more than 200 million terabytes every day. The sheer amount of raw data is likely to strain existing infrastructure. One way to handle such data is to process it locally, on the edge, rather than transmit everything to the cloud.

The network edge can be divided into “near” edge and “far” edge. Near edge equipment consists of on-premises or regional servers and routing equipment controlled by you or your business. “Near” refers to the physical proximity or the relatively low number of router hops it takes for traffic to go from the border of the internet to your equipment. In other words, “near” and “far” are from the perspective of the internet service provider (ISP) or cloud service provider.

Far edge consists of the devices further away from the internet gateway on your network. Examples include user end devices, such as laptops and smartphones, as well as IoT devices and local networking equipment, such as routers and switches.

The border between the cloud, near edge, and far edge can often be nebulous. In fact, a relatively recent trend is fog computing, a term coined by Cisco in 2012. In fog computing, edge devices (often near edge servers) are used to store and process data, often replicating the functionality of cloud services on the edge.

Advantages

Edge computing offers a number of benefits:

- Reduced bandwidth usage - You no longer need to constantly stream raw data to have it stored, analyzed, or processed by a cloud computing service. Instead, you can simply transmit the results of such processing.

- Reduced network latency - Network latency is the round-trip time it takes for information to travel to its destination (e.g. a cloud server) and for the response to return to the end device. For cloud computing, this can be hundreds of milliseconds or more. If processing is performed locally, such latency is often reduced to almost nothing.

- Improved energy efficiency - Transmitting data, especially via a wireless connection like Wi-Fi, usually requires more electrical power than processing the data locally.

- Increased reliability - Edge computing means that data processing can often be done without an internet connection.

- Better data privacy - If raw data is processed directly on an end device without travelling across the network, it becomes harder for malicious parties to access. This means that user data can be made more secure, as there are fewer avenues to reach that raw data.
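
To make the bandwidth point above more concrete, here is a minimal Python sketch of processing sensor data on the device and transmitting only the result. Everything in it is an assumption for illustration: the simulated temperature readings, the `summarize_readings` helper, the JSON payload format, and the simple z-score rule used to flag anomalies. It is not a prescribed method, just one way the idea could look.

```python
import json
import statistics


def summarize_readings(readings, threshold=3.0):
    """Reduce a window of raw sensor readings to a small summary.

    A reading is flagged as an anomaly when it lies more than `threshold`
    standard deviations from the window mean (a simple z-score rule,
    chosen here only for illustration).
    """
    mean = statistics.fmean(readings)
    stdev = statistics.pstdev(readings)
    anomalies = [
        {"index": i, "value": value}
        for i, value in enumerate(readings)
        if stdev > 0 and abs(value - mean) / stdev > threshold
    ]
    return {
        "count": len(readings),
        "mean": round(mean, 2),
        "min": min(readings),
        "max": max(readings),
        "anomalies": anomalies,
    }


# Simulated window of 1,000 temperature readings with one obvious spike.
readings = [20.0 + (i % 10) * 0.1 for i in range(1000)]
readings[500] = 95.0

# Process locally, then compare what would be sent in each case.
summary = summarize_readings(readings)
raw_payload = json.dumps(readings).encode("utf-8")
summary_payload = json.dumps(summary).encode("utf-8")

print(f"raw payload:     {len(raw_payload)} bytes")
print(f"summary payload: {len(summary_payload)} bytes")
print(f"anomalies found: {summary['anomalies']}")
```

In this sketch the device would send only `summary_payload` upstream, which is a small fraction of the size of the raw window, and the raw readings never leave the device.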

Disadvantages

While edge computing offers a host of benefits, there are several limitations:

- Resource constraints - Most edge devices do not offer the same level of raw computing power as most cloud servers. If you need to crunch numbers quickly or run complex algorithms, you might have to rely on cloud computing.

- Limited remote access - Services running locally on edge or end devices might not be easily accessed via remote clients. To provide such remote access, you often need to run additional services (such as a web server) and/or configure a VPN on your local network.

- Security - Many IoT devices come from the manufacturer with default login credentials and open ports, making them prime targets for attackers (such as with the infamous Mirai botnet attack in 2016). You and your network administrators are responsible for implementing and enforcing up-to-date security plans for all edge devices.

- Scaling - Adding more computing power and resources is often easy in cloud computing; you just pay the cloud service provider more money. Scaling your resources for edge computing often requires purchasing and installing additional hardware along with maintaining the infrastructure.
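
As a rough illustration of the "additional services" mentioned under limited remote access, the sketch below uses Python's standard `http.server` module to expose the latest locally computed result over HTTP. The `latest_result` data, the `/latest` path, and the `ResultHandler` class are hypothetical names chosen for this example. The server binds to the loopback address and queries itself once so the sketch is self-contained; a real deployment would bind to a network interface and would still need authentication, encryption, and possibly a VPN for access from outside the local network.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical result produced by local processing on the edge device.
latest_result = {"sensor": "temperature", "mean_c": 20.5, "anomalies": 0}


class ResultHandler(BaseHTTPRequestHandler):
    """Serve the latest result as JSON at /latest; everything else is a 404."""

    def do_GET(self):
        if self.path != "/latest":
            self.send_error(404, "Not found")
            return
        body = json.dumps(latest_result).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # Suppress per-request logging to keep the example quiet.


# Port 0 asks the operating system for any free port.
server = HTTPServer(("127.0.0.1", 0), ResultHandler)
port = server.server_address[1]
threading.Thread(target=server.serve_forever, daemon=True).start()

# Act as a client on the same machine and fetch the result once.
connection = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
connection.request("GET", "/latest")
response = connection.getresponse()
status = response.status
fetched = json.loads(response.read())
connection.close()

server.shutdown()
server.server_close()

print(status, fetched)
```

Even a small service like this is one more thing to secure and maintain on the device, which ties back to the security and scaling points above.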