Introduction

Recently, I was building a “long range acoustic device” (LRAD) triggered by specific human actions, and I considered taking my own pictures to train a model. But with just a few pictures, the actions would be underrepresented in the training data and my predictions would not be reliable. As you can imagine, the quality of the data affects the quality of the predictions, and thus of the entire machine learning project. Then I learned about Hugging Face, an AI community with thousands of open-source, curated image classification datasets and models, and I decided to use one of their datasets with Edge Impulse. In this tutorial I will show you how I imported a Hugging Face Image Classification dataset into Edge Impulse in order to train a machine learning model.

Hugging Face Dataset Download

- Go to https://huggingface.co/

-

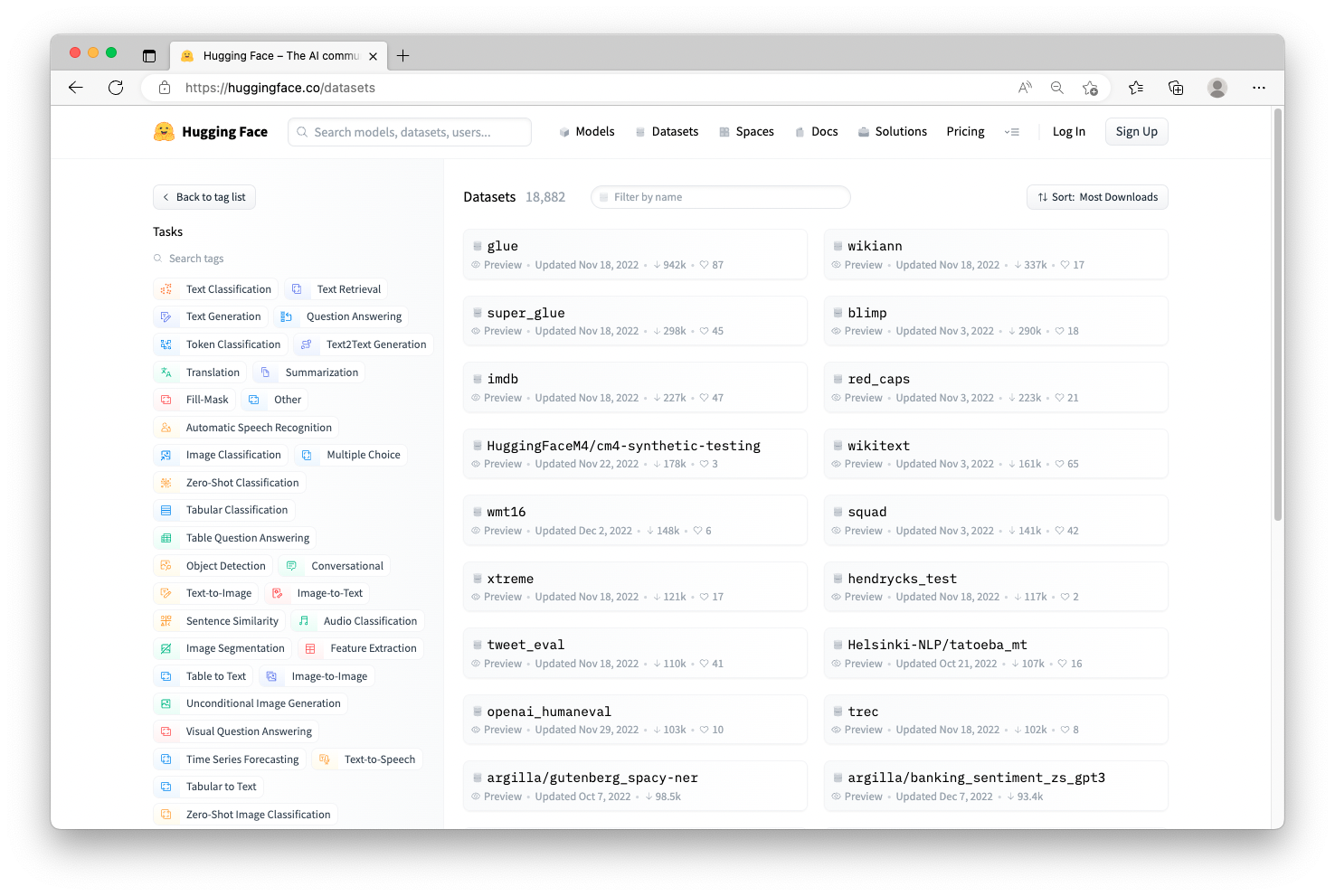

Click on Datasets, then on the left, in the Tasks find and click on Image Classification (you may need to click on “+27 Tasks” in order to see the entire list of possible options).

-

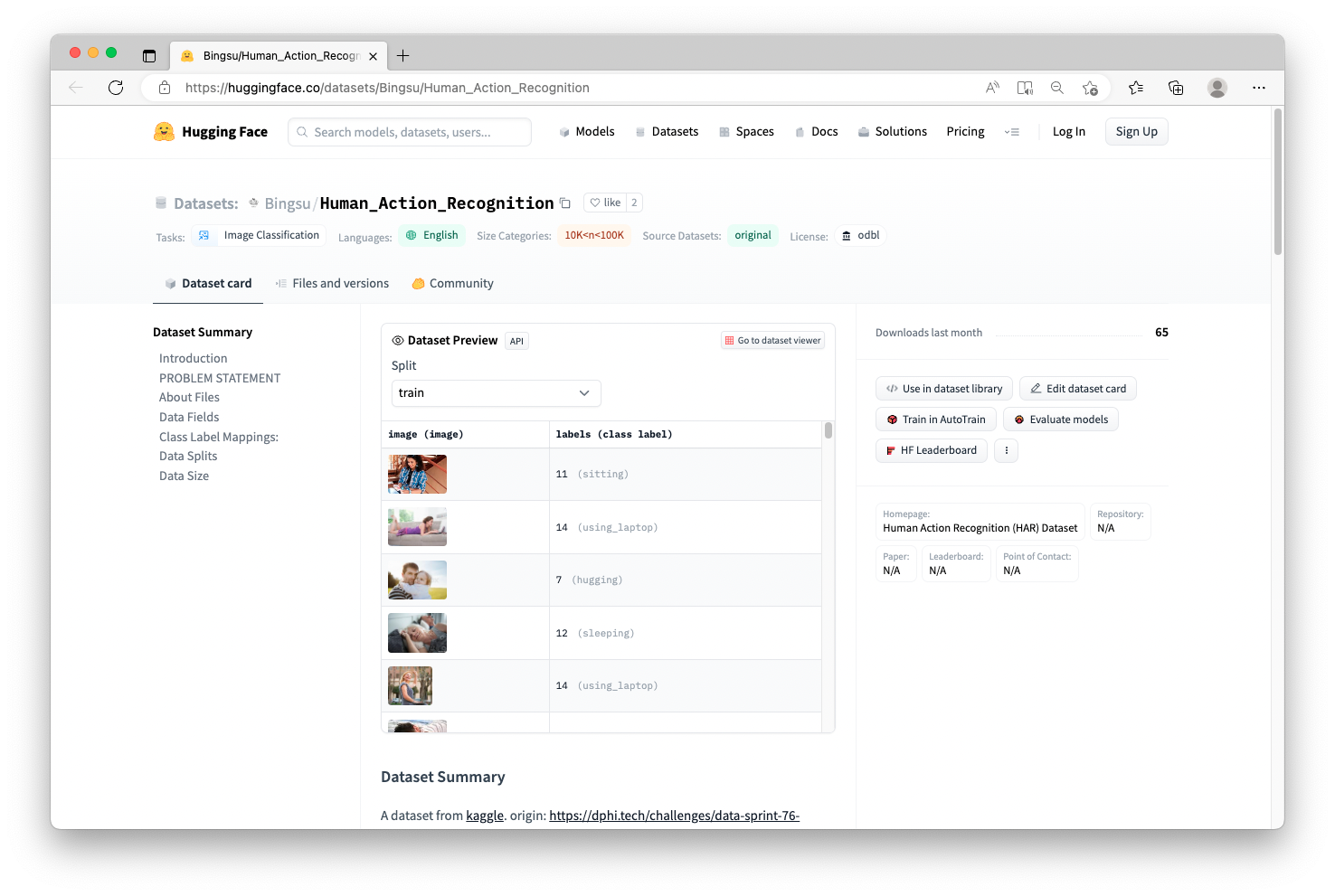

For this project, I chose to use this dataset https://huggingface.co/datasets/Bingsu/Human_Action_Recognition

- There are a few important things to note on this page:

- The dataset name (Bingsu/Human_Action_Recognition)

- How many images are contained in the dataset (if you scroll down, you will see 12,600 images are in Train and 5,400 are in Test)

- The Labels assigned to the images (15 different Classes are represented)

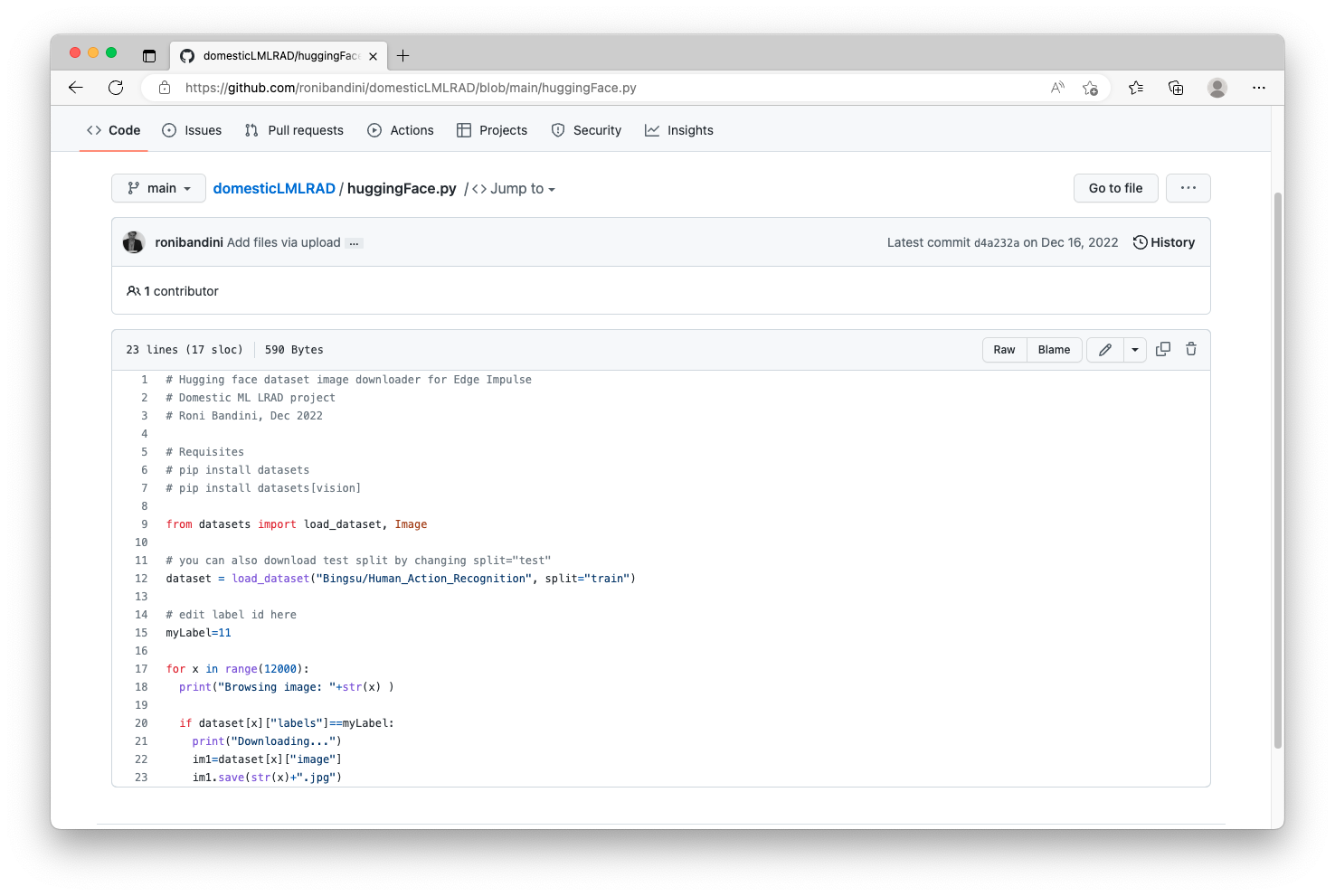
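The same details can also be read programmatically. The following is a minimal sketch, not part of the original project, that assumes the Hugging Face `datasets` library is installed and that the label column is named `labels` (an assumption about this dataset's schema; adjust `label_column` if it differs). The helper names `summarize` and `main` are hypothetical.

```python
def summarize(dataset_dict, label_column="labels"):
    """Return the size of each split and the index -> Class name mapping."""
    sizes = {split: ds.num_rows for split, ds in dataset_dict.items()}
    # All splits share the same schema, so any split can supply the label names.
    first_split = next(iter(dataset_dict.values()))
    names = first_split.features[label_column].names
    return sizes, dict(enumerate(names))


def main():
    # Imported here so summarize() can be used without the library installed.
    from datasets import load_dataset  # pip install datasets

    # Downloads the dataset on first use and caches it locally.
    sizes, labels = summarize(load_dataset("Bingsu/Human_Action_Recognition"))
    print(sizes)
    for index, name in labels.items():
        print(index, name)
```

Calling `main()` prints the number of images per split and the numeric index of each Class, which is the number the download script expects.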

One way to download the dataset is a git clone of the repository containing it. Alternatively, I have written a small Python script to handle the download. You can retrieve the huggingFace.py script from https://github.com/ronibandini/domesticLMLRAD, then open the file in an editor to have a look at its contents.

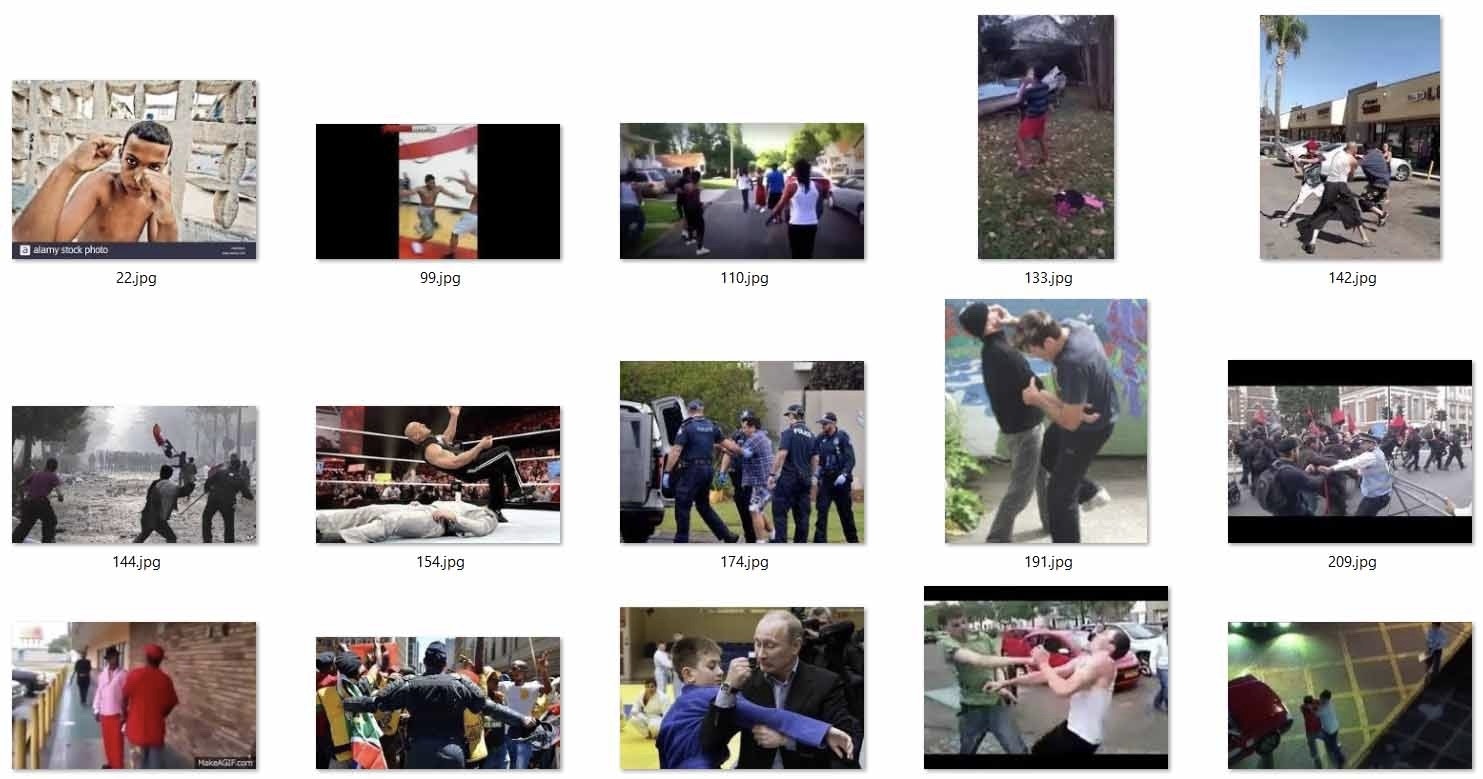

If you change line 15 from myLabel=11 to myLabel=6, you will instead download the “fighting” Class. Now you can run the Python download script:

python huggingFaceDownloader.py

The contents of the dataset Class for “fighting” will be downloaded to the /datasets folder you just created.

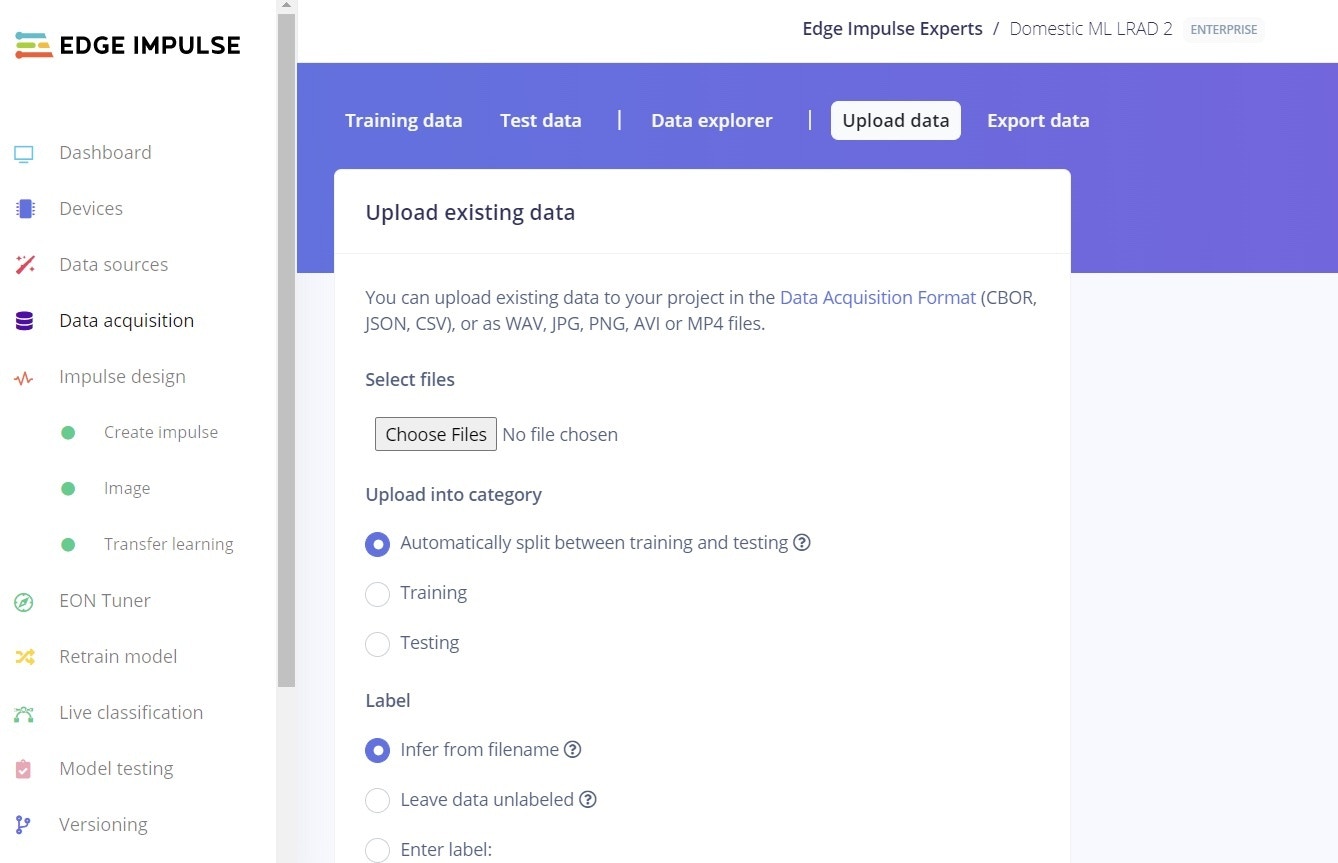
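For readers who want to see the idea without opening the script, here is a rough sketch of how a single Class could be downloaded with the Hugging Face `datasets` library. It is not the author's huggingFace.py; the column names `image` and `labels`, the output file naming, and the helper names `save_class` and `main` are all assumptions made for illustration.

```python
from pathlib import Path

DATASET_NAME = "Bingsu/Human_Action_Recognition"  # name shown on the dataset page
LABEL_COLUMN = "labels"  # assumption: name of the label column
IMAGE_COLUMN = "image"   # assumption: name of the image column


def save_class(records, label_id, out_dir, limit=None):
    """Save every image whose label equals label_id; return how many were saved."""
    out_path = Path(out_dir)
    out_path.mkdir(parents=True, exist_ok=True)
    saved = 0
    for record in records:
        if record[LABEL_COLUMN] != label_id:
            continue
        # Each image is expected to expose a PIL-style save(path) method.
        record[IMAGE_COLUMN].save(out_path / f"{label_id}_{saved:05d}.jpg")
        saved += 1
        if limit is not None and saved >= limit:
            break
    return saved


def main(label_id=6, out_dir="datasets"):
    # Imported here so save_class() can be used without the library installed.
    from datasets import load_dataset  # pip install datasets pillow

    train = load_dataset(DATASET_NAME, split="train")
    count = save_class(train, label_id, out_dir)
    print(f"Saved {count} images with label {label_id} to {out_dir}/")
```

Calling `main(6)` would save the “fighting” images and `main(11)` the “sitting” images. The label number is part of each file name, so two Classes written to the same folder do not overwrite each other.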

Data Acquisition

Now we can follow the normal Edge Impulse data upload process, which is described in more detail in the Edge Impulse documentation. Essentially, you will go to Edge Impulse and log in to your account, choose your project (or create a new one), click on Data Acquisition, and then click on Upload data.

Select the images in the /datasets folder you created earlier, and choose to automatically split between Training and Testing. You’ll also need to enter the Label: “fighting”.

To prepare another Class, edit line 15 in the huggingFace.py script once again and change the value to another number; the original value of 11 (the “sitting” Class) is a good choice. Re-run the script, and the images for that Class will be downloaded as before. Then repeat the data upload steps, remembering to change the Label in the Edge Impulse Studio to “sitting”.

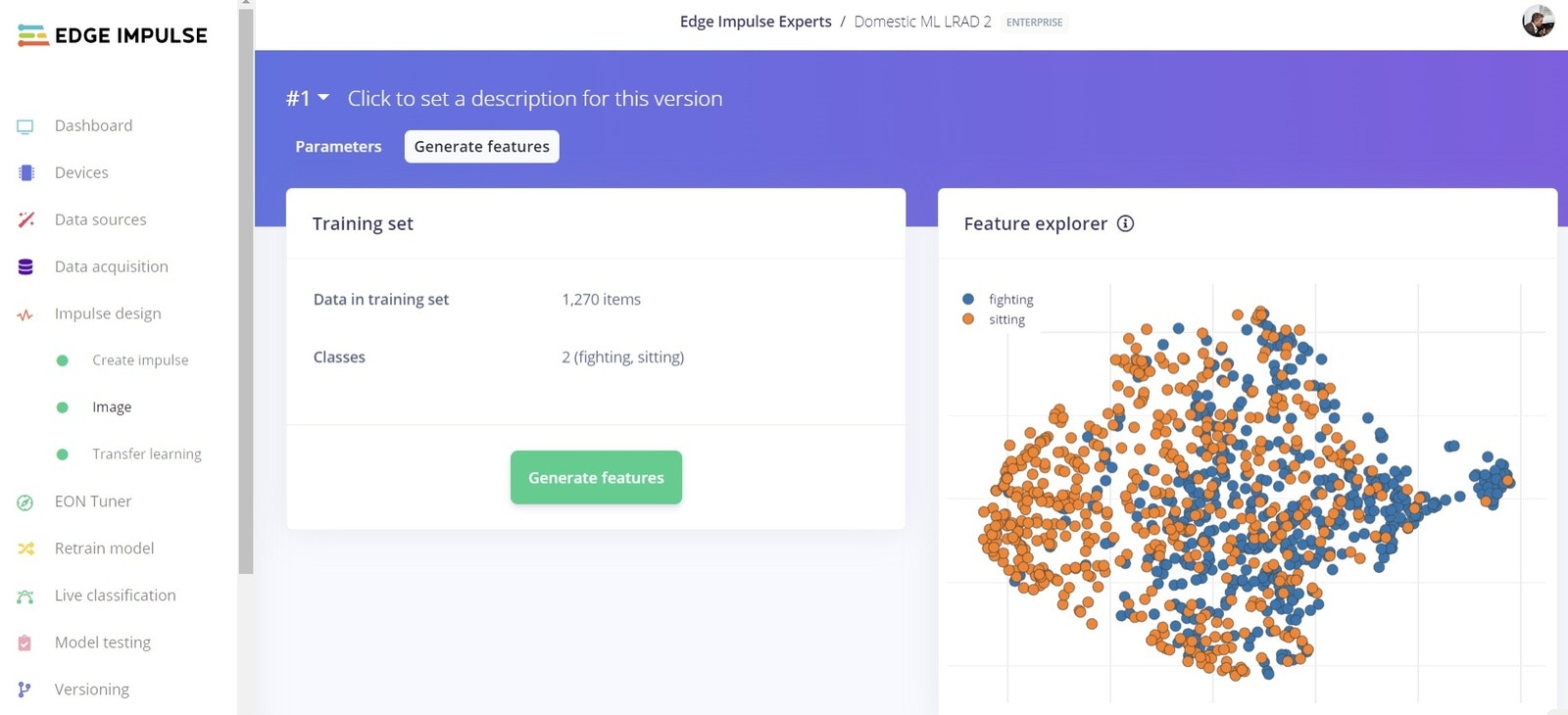

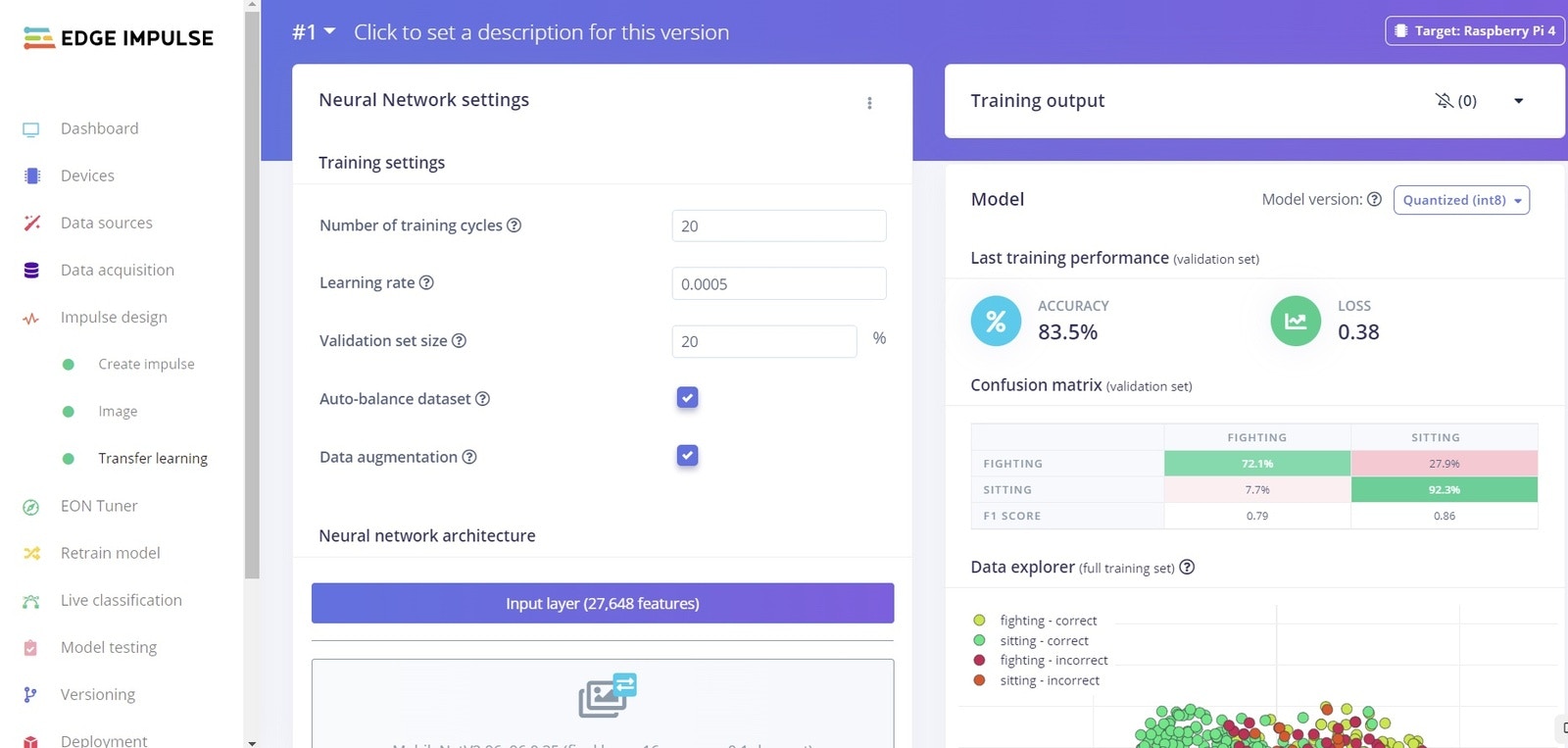
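The automatic split in the upload dialog is usually all you need. If you would rather decide yourself which images land in Training and which in Testing, the split can be prepared locally and each folder uploaded to its own category. The sketch below uses only the Python standard library; the 80/20 ratio, the fixed seed, the folder names, and the helper name `split_folder` are assumptions for illustration, not values taken from Edge Impulse.

```python
import random
import shutil
from pathlib import Path


def split_folder(src_dir, dst_dir, test_fraction=0.2, seed=42):
    """Copy the files in src_dir into dst_dir/training and dst_dir/testing."""
    files = sorted(p for p in Path(src_dir).iterdir() if p.is_file())
    random.Random(seed).shuffle(files)  # fixed seed keeps the split reproducible
    n_test = round(len(files) * test_fraction)
    groups = {"testing": files[:n_test], "training": files[n_test:]}
    for name, members in groups.items():
        target = Path(dst_dir) / name
        target.mkdir(parents=True, exist_ok=True)
        for path in members:
            shutil.copy2(path, target / path.name)
    return {name: len(members) for name, members in groups.items()}
```

For example, `split_folder("datasets", "datasets_split")` copies the downloaded images into `datasets_split/training` and `datasets_split/testing` and leaves the originals untouched.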

Machine Learning Model Creation

With our images uploaded, we can now build a machine learning model. To get started, go to Impulse Design in the left menu. In Create Impulse, keep the default values, add an Image block and a Transfer Learning (Images) block, then click Save Impulse.