Created By: Adam Milton-Barker

Project Repo

https://github.com/AdamMiltonBarker/edge-impulse-jetson-nano-trainer

Introduction

NVIDIA Jetson Nano

The NVIDIA Jetson Nano is a small yet powerful computer designed for embedded systems and edge computing. It is particularly well suited to applications that require machine learning or computer vision processing at the edge of a network. Its small size and low power consumption also make it a cost-effective and efficient choice in industries such as robotics, healthcare, and manufacturing. As an NVIDIA Jetson AI Specialist and Jetson AI Ambassador, I love building projects for the Jetson Nano. I use it for my Leukaemia MedTech non-profit, my business, projects I contribute to the Edge Impulse Experts platform, and personal projects.

Edge Impulse

Edge Impulse is an end-to-end platform for building and deploying machine learning models on edge devices. It simplifies the process of collecting, processing, and analyzing sensor data from various sources, such as microcontrollers, and turning it into high-quality machine learning models. The platform offers a variety of tools and resources, including a web-based IDE, a comprehensive set of libraries and APIs, and a range of pre-built models that can be customized for specific use cases.

The Problem

Whilst the Jetson Nano is a highly capable device for edge inference, it may not be the most suitable choice for AI model training. In fact, NVIDIA recommends that you do not train models on the Jetson Nano. Edge Impulse offers a compelling solution to this challenge by providing a platform for developing and deploying models on a range of edge devices, including the Jetson Nano. That said, some researchers and developers may prefer a more hands-on approach to coding and developing solutions on the Jetson Nano, despite its limitations for AI training.

The Solution

This is where the Edge Impulse API comes in. Edge Impulse has a number of APIs which, together, let you hook into most of the platform's capabilities, including the Studio. In this project we will create a new Edge Impulse project, connect a device, upload training and test data, create an Impulse, train the model, and then deploy and run the model on your Jetson Nano.

Installation

Before you can get started, you need to clone the edge-impulse-jetson-nano-trainer repository to your Jetson Nano. On your Jetson Nano, navigate to the directory where you want the project to live and clone it with git clone, using the Project Repo URL given above.

The Configuration

You can find the configuration in the confs.json file. This file has been set up to run the program as it is, but you can modify it, and the code, to behave however you like. Think of this program as boilerplate and an introduction to using the Edge Impulse APIs.

At certain points during the program, this file will be updated. This ensures that if you stop the program, you can always pick up from where you left off.
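The resume behaviour described above can be sketched roughly as follows. This is a minimal illustration, not the repository's actual code: the helper names `load_conf` and `save_progress`, and the keys used in the usage note, are hypothetical.

```python
import json
from pathlib import Path


def load_conf(path):
    """Read the JSON configuration file into a dict."""
    return json.loads(Path(path).read_text(encoding="utf-8"))


def save_progress(path, **updates):
    """Merge `updates` into the stored configuration and write it back.

    Hypothetical helper: the real program may persist different keys.
    """
    path = Path(path)
    conf = load_conf(path) if path.exists() else {}
    conf.update(updates)
    # Write to a temporary file first, then swap it in, so an interrupted
    # run cannot leave a half-written configuration behind.
    tmp = path.with_name(path.name + ".tmp")
    tmp.write_text(json.dumps(conf, indent=4), encoding="utf-8")
    tmp.replace(path)
    return conf
```

For example, calling `save_progress("confs.json", data_uploaded=True)` after the upload step would let a later run check that (assumed) flag and skip straight to the next stage.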

Edge Impulse Account

To use this program you will need an Edge Impulse account. If you do not have one, head over to the Edge Impulse website and create one, then head back here.

Data

You can use any dataset you like for this tutorial. I used the Car vs Bike Classification Dataset from Kaggle, and the Unsplash random images collection for the unknown class. These datasets include .jpg, .jpeg, and .png files, so we need to update the configuration file to look like the following:

Note the test_dir and train_dir paths; this is where your data should be placed. The directory names inside those directories will be used as the labels for your dataset. In this case, you should create car, bike, and unknown directories in both the train and test directories.

There is a limit on the number of files you can upload through the API. In my testing I was able to comfortably upload around 500 training images per class and 250 testing images per class.
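To make the directory-as-label convention concrete, here is a small sketch that walks a data directory and groups image files by the name of their parent directory. The function name `collect_labelled_files` and the `ALLOWED_EXTENSIONS` constant are assumptions for illustration; the program itself may read the allowed extensions from confs.json instead.

```python
from pathlib import Path

# Assumed to mirror the file types mentioned above.
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def collect_labelled_files(data_dir, allowed=ALLOWED_EXTENSIONS):
    """Map each label (sub-directory name) to a sorted list of its image paths."""
    dataset = {}
    for label_dir in sorted(Path(data_dir).iterdir()):
        if not label_dir.is_dir():
            continue  # stray files next to the label directories are ignored
        dataset[label_dir.name] = sorted(
            f for f in label_dir.iterdir()
            if f.is_file() and f.suffix.lower() in allowed
        )
    return dataset
```

Running this against a train directory laid out as described would return a dict with the keys car, bike, and unknown, which is a quick way to check the layout before starting an upload.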
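One way to stay within those numbers is to cap each class before uploading. The sketch below is an assumption about how you might do that, not something the program is known to do; `cap_per_class` is a hypothetical helper, and the limits of 500 and 250 are simply the figures from my testing, not documented API limits.

```python
import random


def cap_per_class(dataset, limit, seed=0):
    """Return a copy of `dataset` with at most `limit` files per label.

    `dataset` maps a label to a list of file paths. Classes over the limit
    are randomly sub-sampled; a fixed seed keeps the selection repeatable.
    """
    rng = random.Random(seed)
    capped = {}
    for label, files in dataset.items():
        files = list(files)
        if len(files) > limit:
            files = rng.sample(files, limit)
        capped[label] = sorted(files)
    return capped
```

You would call it with `limit=500` for the training set and `limit=250` for the test set.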

The Program

The bulk of the code lives in the ei_jetson_trainer.py file. Once your Edge Impulse account is set up, let’s begin.

Start The Program

Navigate to the project root directory and execute the following command:

Login To Edge Impulse

The first thing the program will ask you to do is log in. Enter your Edge Impulse username or email, and then your password.

Project Details

Next you will be asked for a name for your new project.

Connect Your Device

The prompt will now ask you for your device ID:

Data

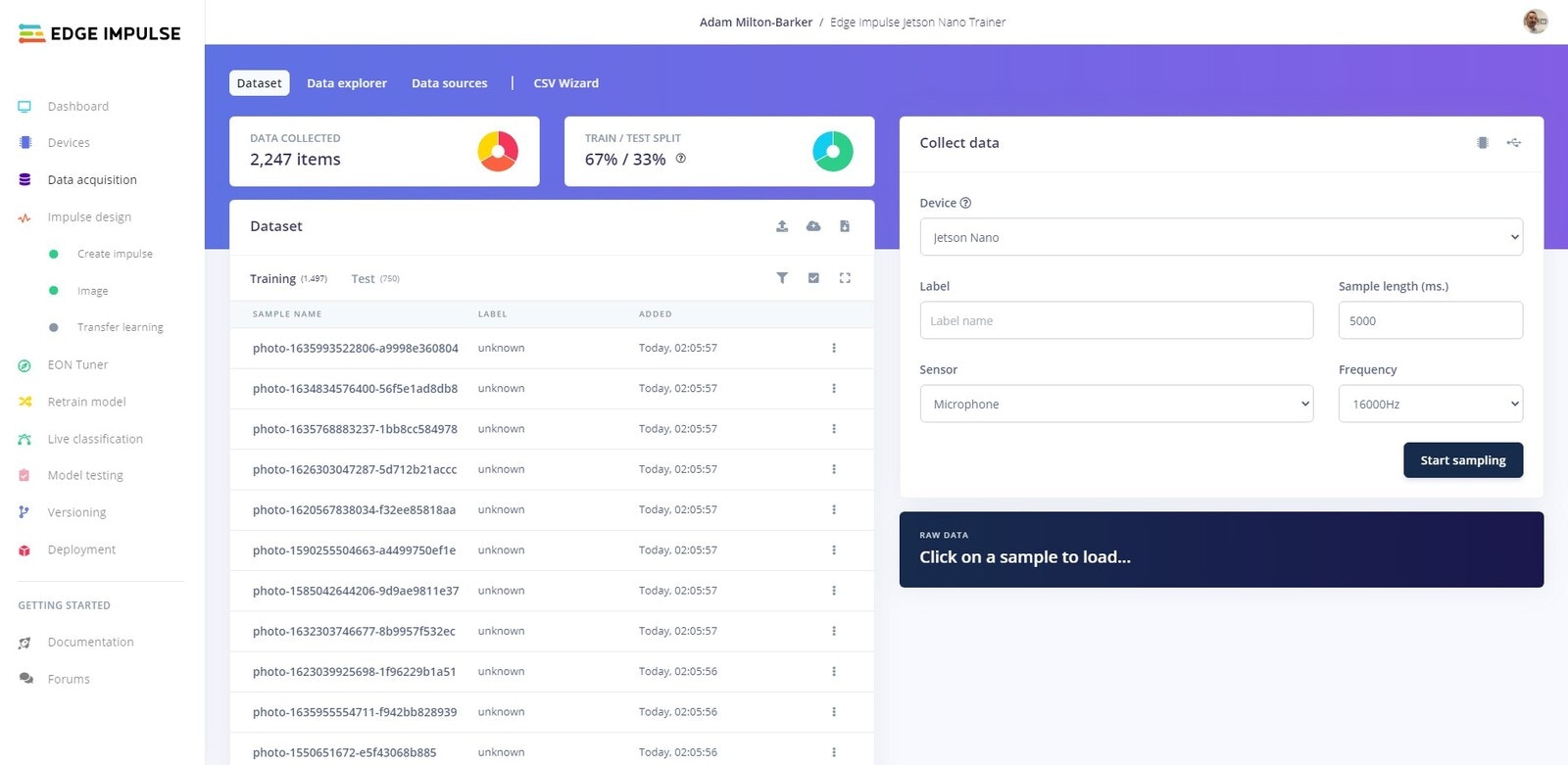

By now you should have followed the steps above and placed all of your training and testing data in the relevant directories. The program will now loop through your data and send it to the Edge Impulse platform.

Head over to the Data Acquisition tab and you will be able to see your data being imported to the platform.
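For a sense of what sending one image involves, the sketch below prepares (but does not send) an upload request using only the standard library. It is based on my understanding of the public Edge Impulse ingestion API, which accepts a multipart field named data along with x-api-key and x-label headers. The repository may authenticate differently, since it logs in with a username and password, so treat the API key and the `build_upload_request` helper as assumptions.

```python
import mimetypes
import uuid
from pathlib import Path
from urllib.request import Request

# Assumed endpoint pattern; category is "training" or "testing".
INGESTION_URL = "https://ingestion.edgeimpulse.com/api/{category}/files"


def build_upload_request(api_key, file_path, label, category="training"):
    """Prepare a multipart POST that uploads one labelled image."""
    file_path = Path(file_path)
    boundary = uuid.uuid4().hex
    content_type = mimetypes.guess_type(file_path.name)[0] or "application/octet-stream"
    # Hand-built multipart body with a single file part named "data".
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="data"; filename="{file_path.name}"\r\n'.encode(),
        f"Content-Type: {content_type}\r\n\r\n".encode(),
        file_path.read_bytes(),
        f"\r\n--{boundary}--\r\n".encode(),
    ])
    headers = {
        "x-api-key": api_key,  # assumption: project API key authentication
        "x-label": label,      # the label comes from the directory name
        "Content-Type": f"multipart/form-data; boundary={boundary}",
    }
    url = INGESTION_URL.format(category=category)
    return Request(url, data=body, headers=headers, method="POST")
```

Passing the returned object to `urllib.request.urlopen` would perform the upload; looping over every file in each label directory gives the behaviour described above.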

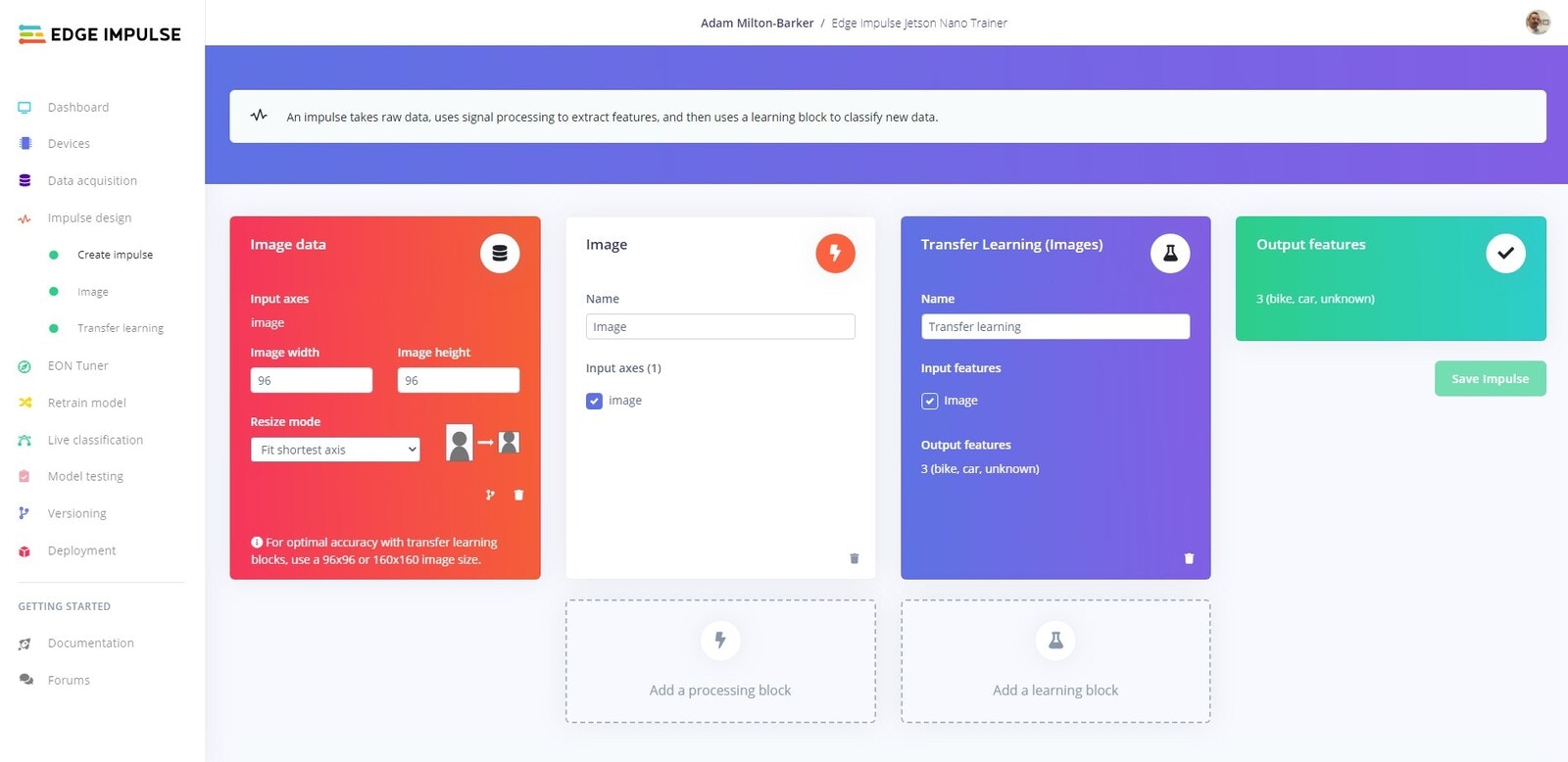

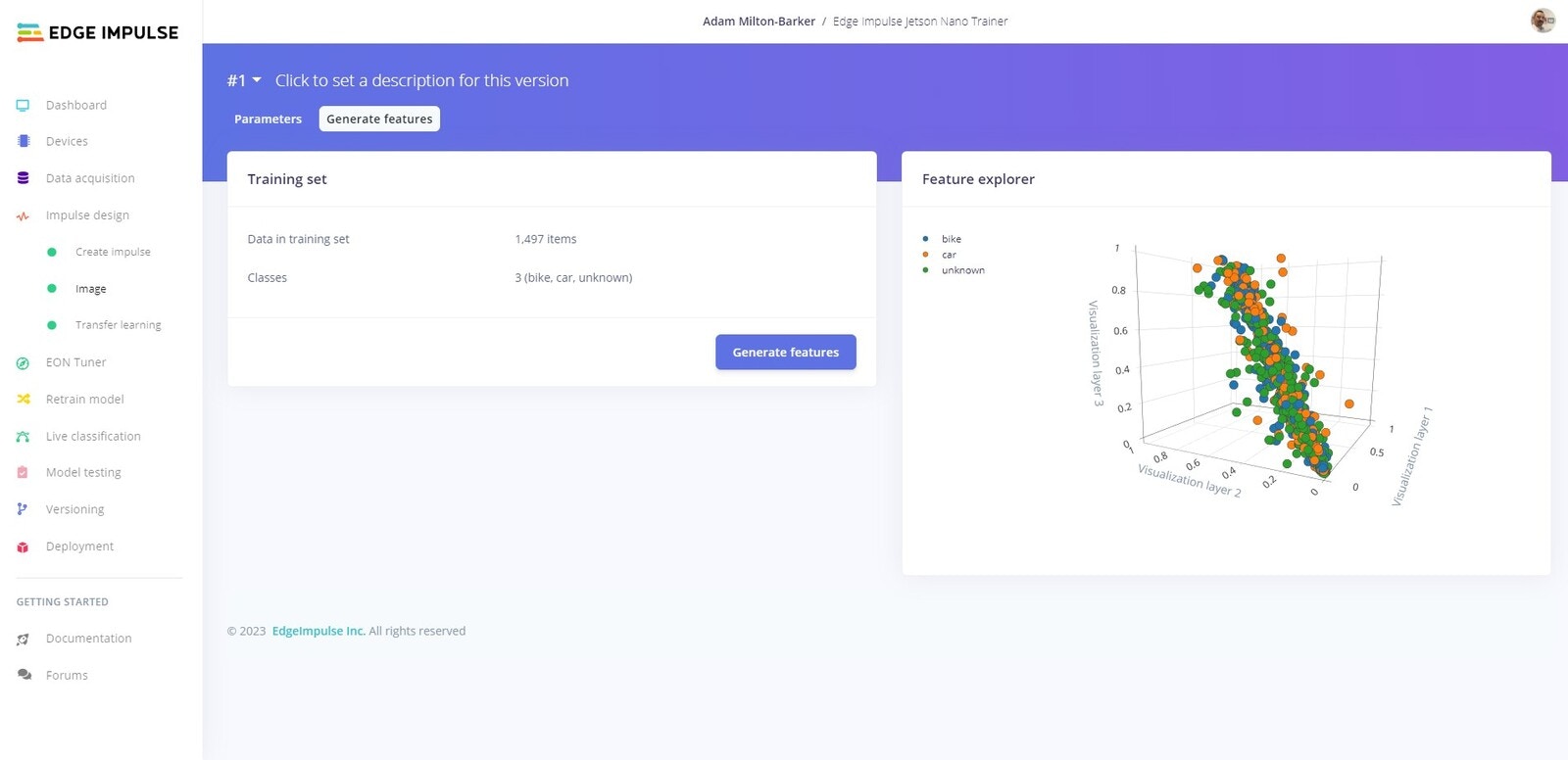

Feature Generation

Next, head over to Impulse Design -> Image -> Generate Features, where you will see the features being generated.

Once the platform informs the program that the features have been created, training will begin.

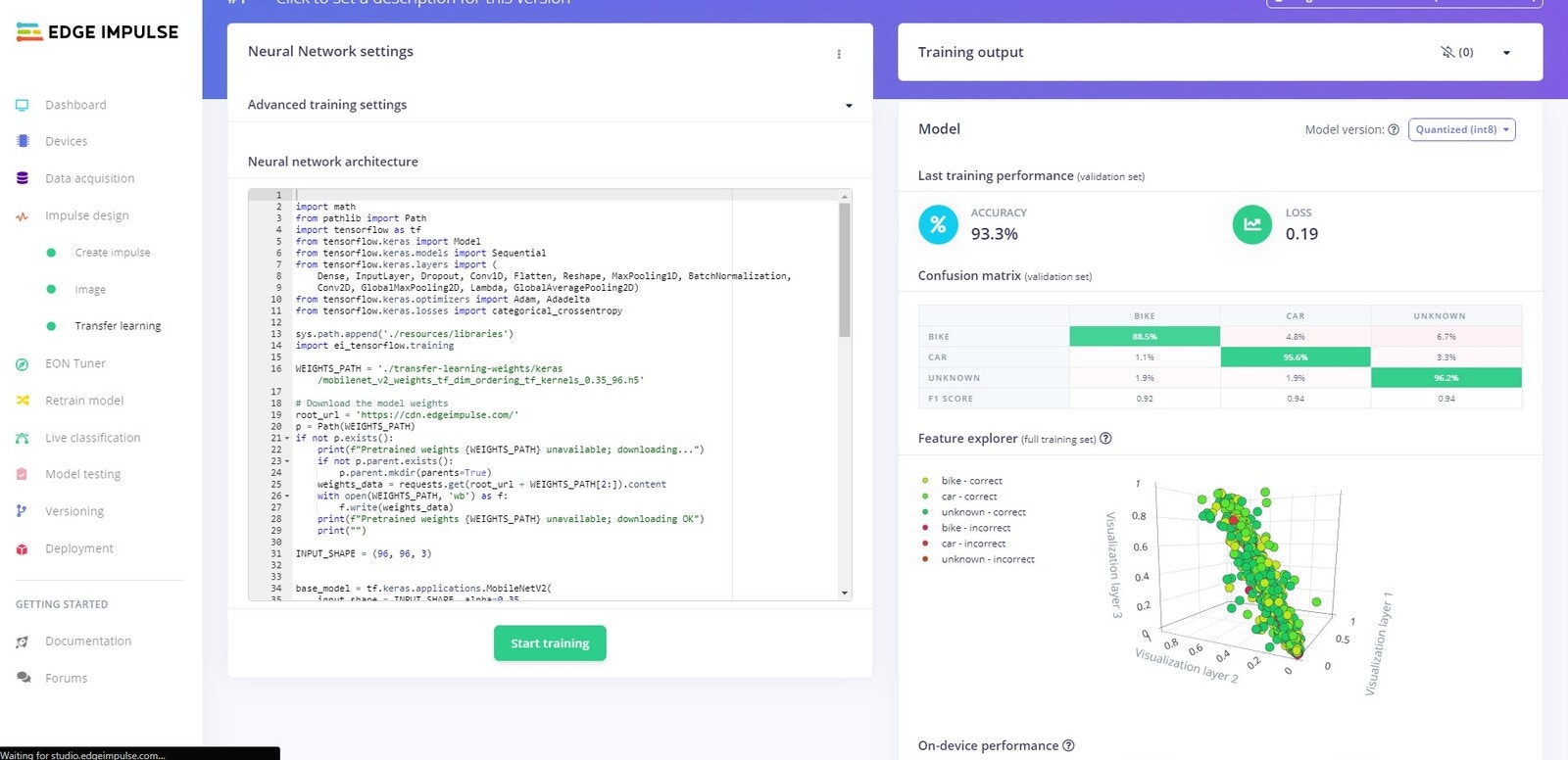

Training

Now head over to Impulse Design -> Image -> Transfer Learning, where you will be able to watch the model being trained. Once the training has finished, the results will be displayed and the program will be notified via sockets.

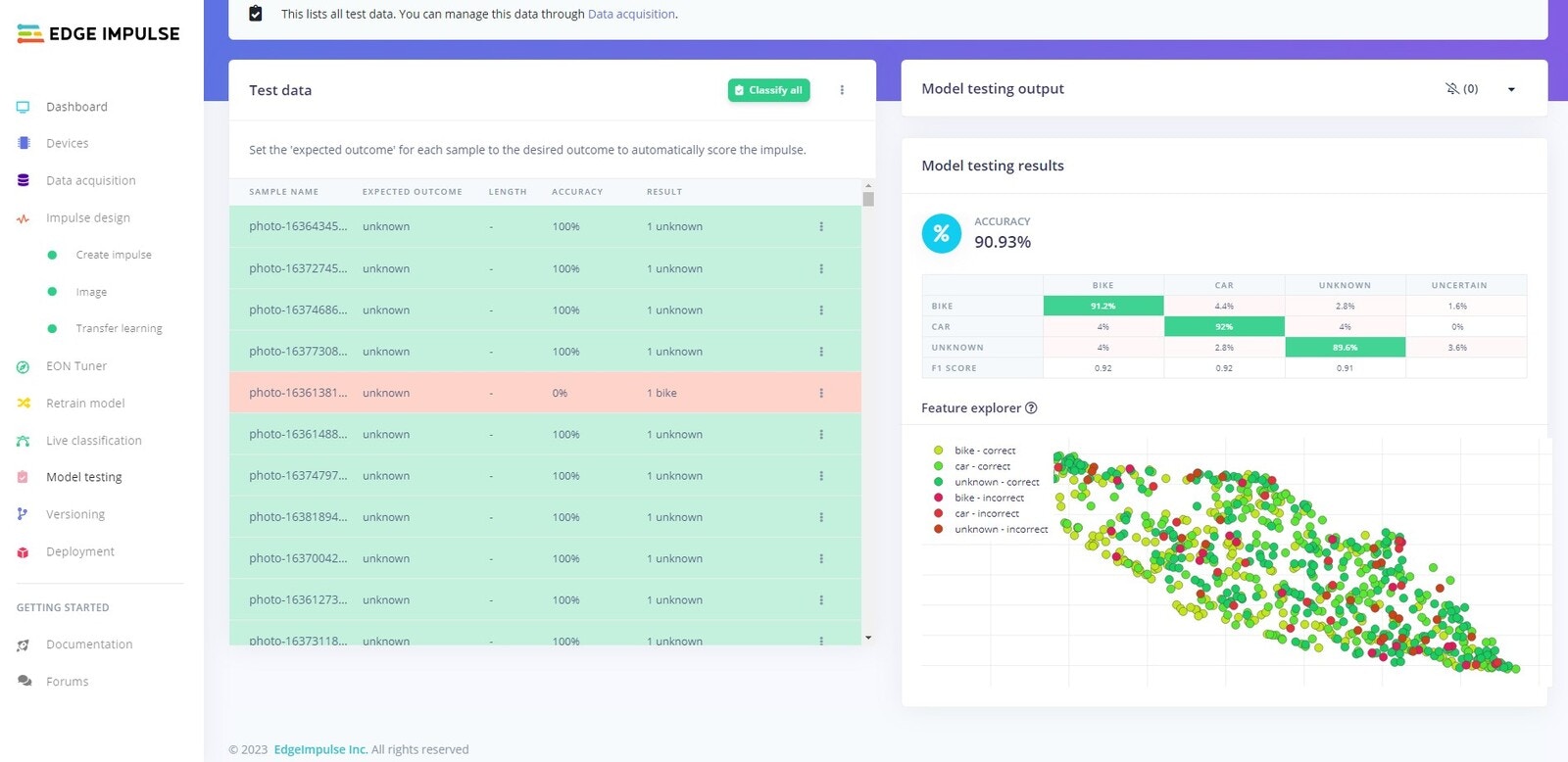
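The program itself is notified over sockets when a job completes. If you adapt the code and want something simpler, polling is a common alternative. The helper below is a generic sketch of that swapped-in technique, not the program's method: `wait_until_finished` and its `get_status` callback are hypothetical, and you would supply your own function that asks the platform whether the job is done.

```python
import time


def wait_until_finished(get_status, interval=5.0, timeout=3600.0,
                        sleep=time.sleep, clock=time.monotonic):
    """Poll `get_status()` until it returns True or `timeout` seconds pass.

    Returns True if the job finished and False if the wait timed out.
    """
    deadline = clock() + timeout
    while True:
        if get_status():
            return True
        if clock() >= deadline:
            return False
        sleep(interval)
```

The `sleep` and `clock` parameters exist only so the helper can be exercised without real waiting.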

Testing

Head over to the Model Testing tab.

Deploy