Monitoring checkout lines with Computer Vision

No one likes waiting in line! Research shows that people spend three to five days a year waiting in lines. Long queues at supermarkets cause shopper fatigue, degrade the customer experience, and can lead to cart abandonment.

Existing surveillance cameras near the checkout area of a store can be put to use here. With Computer Vision models, a camera can determine how many customers are in a queue. When a line reaches a set threshold of customers, it can be flagged as a long queue so that staff can take action, for example by opening another counter.

Queueing theory is the branch of mathematics that studies how queues form, how they operate, and why they occur. It examines the arrival process, the service process, the number of servers, the number of places in the system, and the number of customers. Its goal is to strike a balance that is efficient and affordable.

For this project, I developed a solution that can identify when a long queue is forming at a counter. If a counter has more than 51 percent of the total customers, the system flags it as a long queue with a red indicator. Similarly, the counter with the shortest queue is marked with a green indicator, signaling that people should be redirected there.

You can find the public project here: Person Detection with Renesas DRP-AI. To add this project to your Edge Impulse projects, click “Clone” at the top of the window.

Dataset Preparation

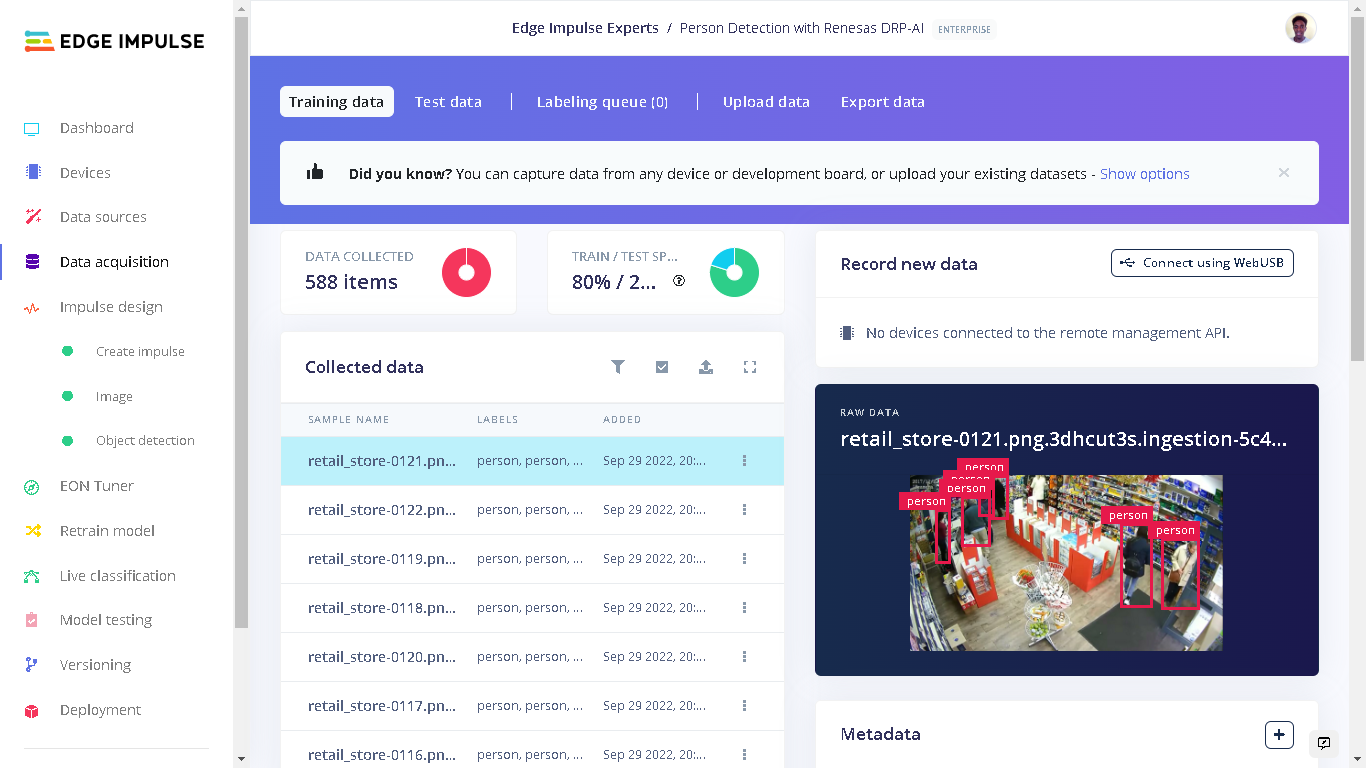

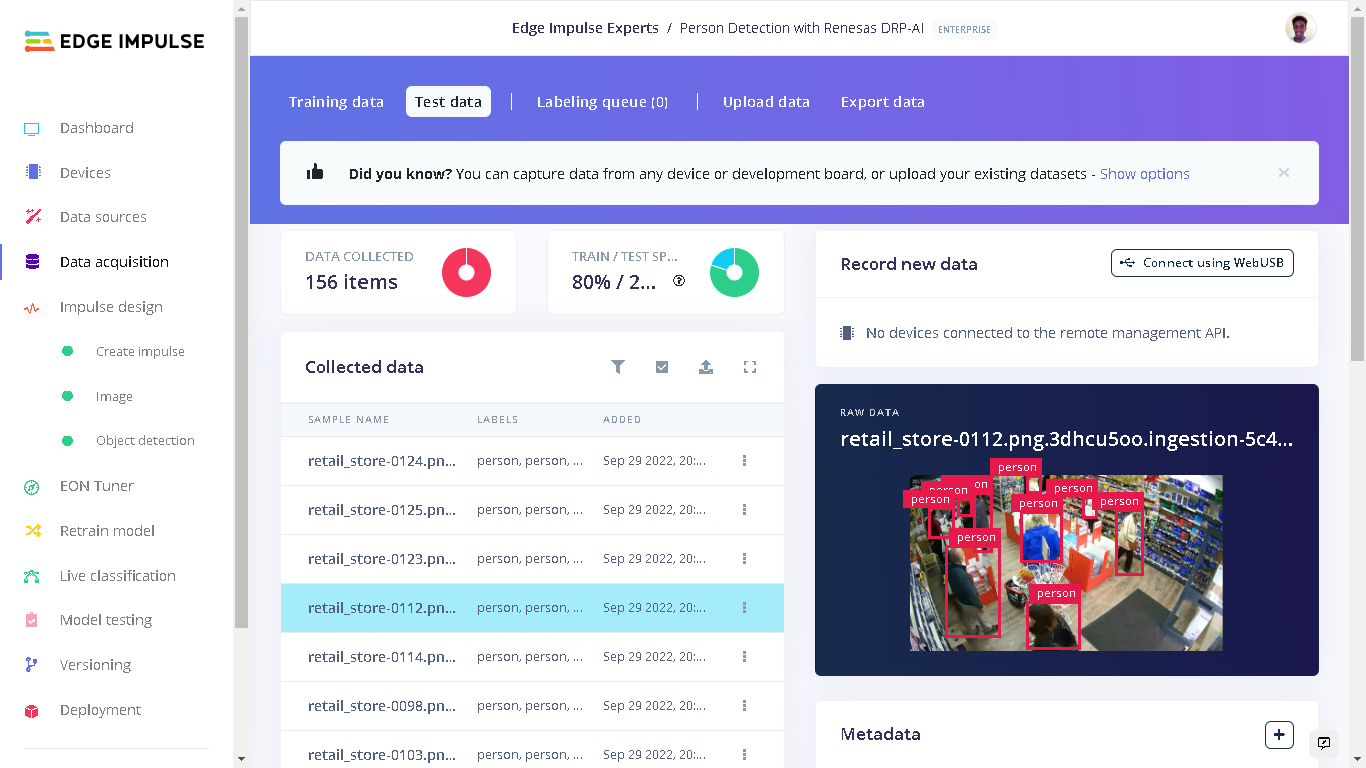

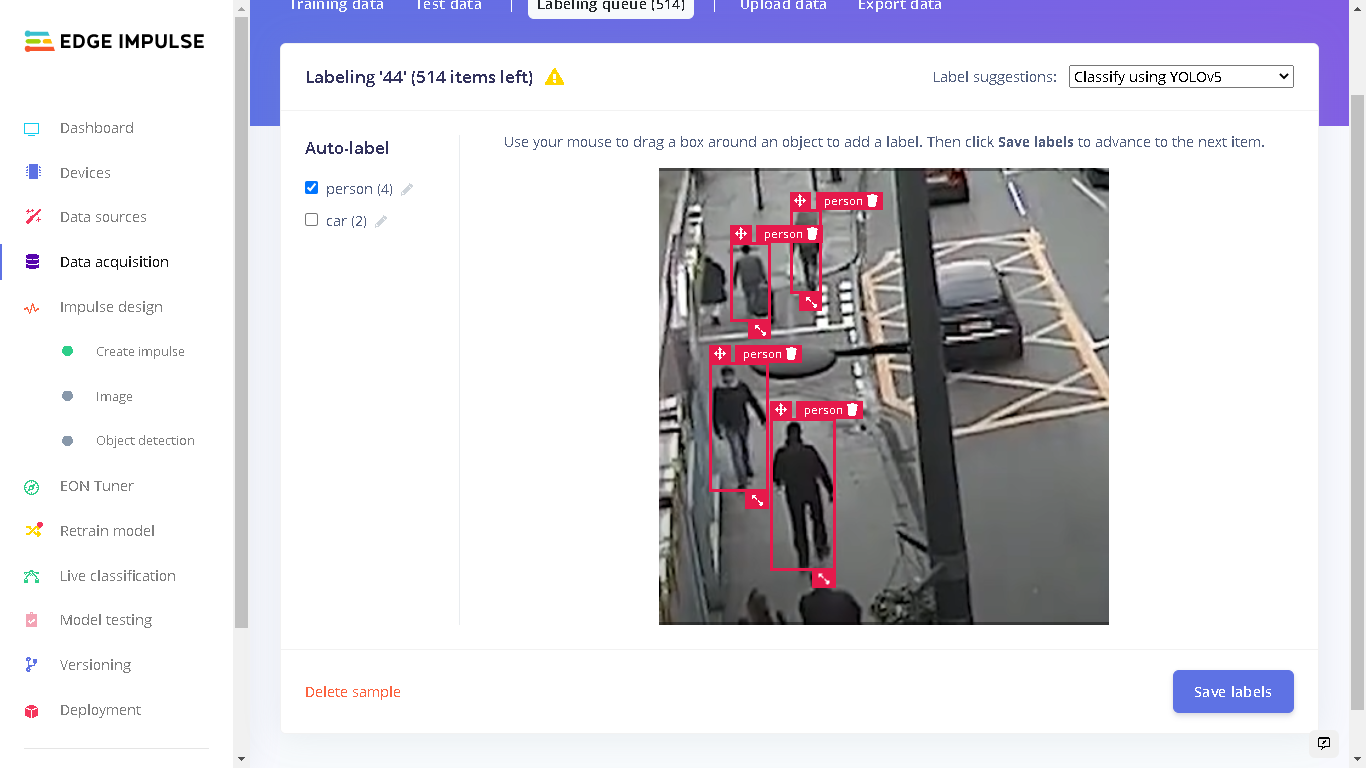

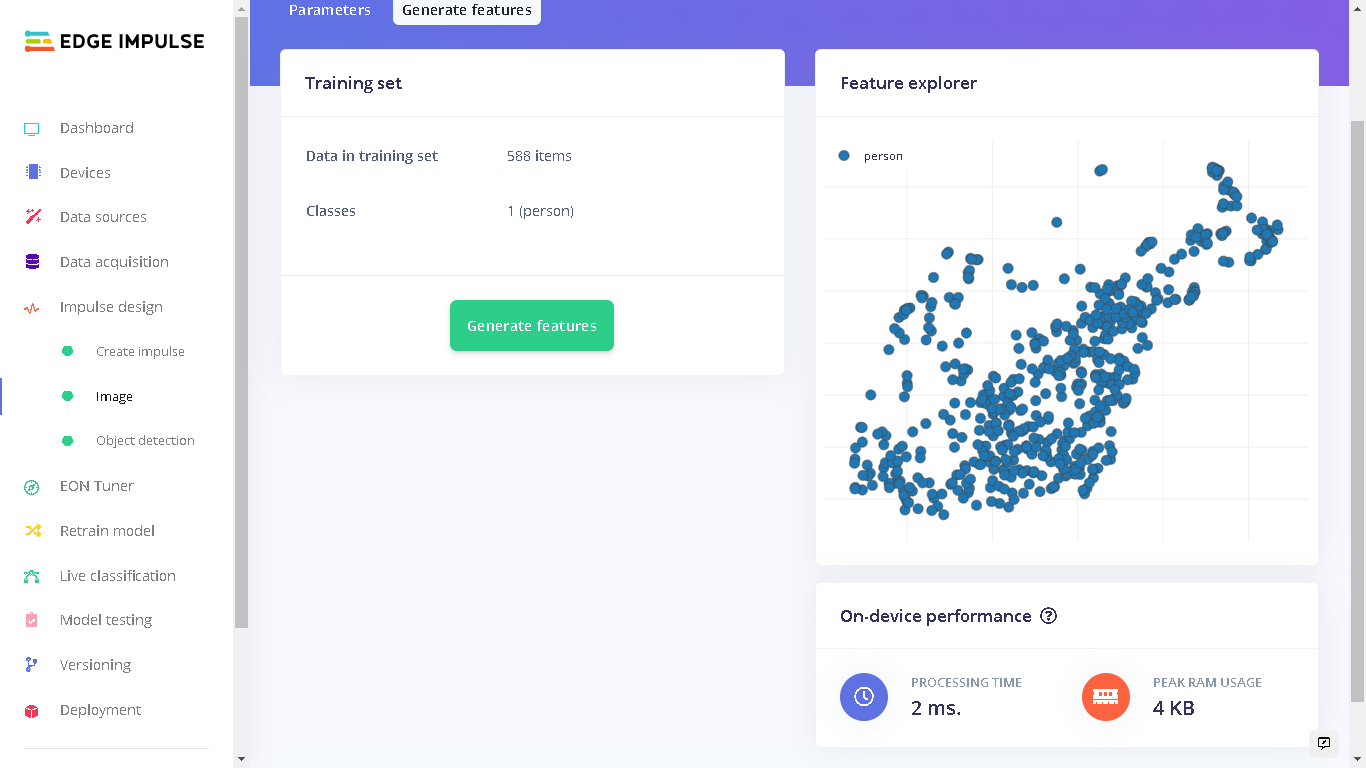

For my dataset, I sourced images of people from publicly available datasets on platforms such as Kaggle. The dataset contains images of people in retail stores and various other environments. In total, I had 588 images for training and 156 images for testing, roughly a 79/21 split.

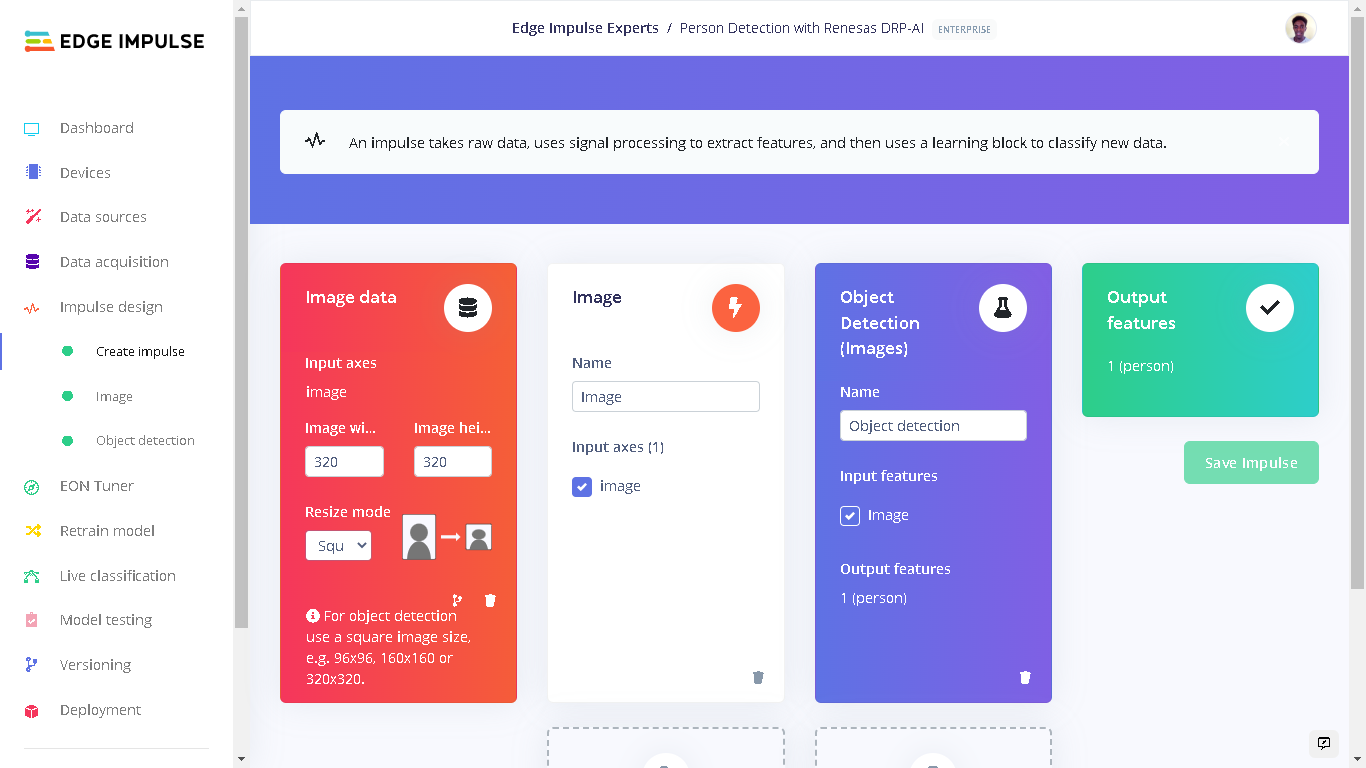

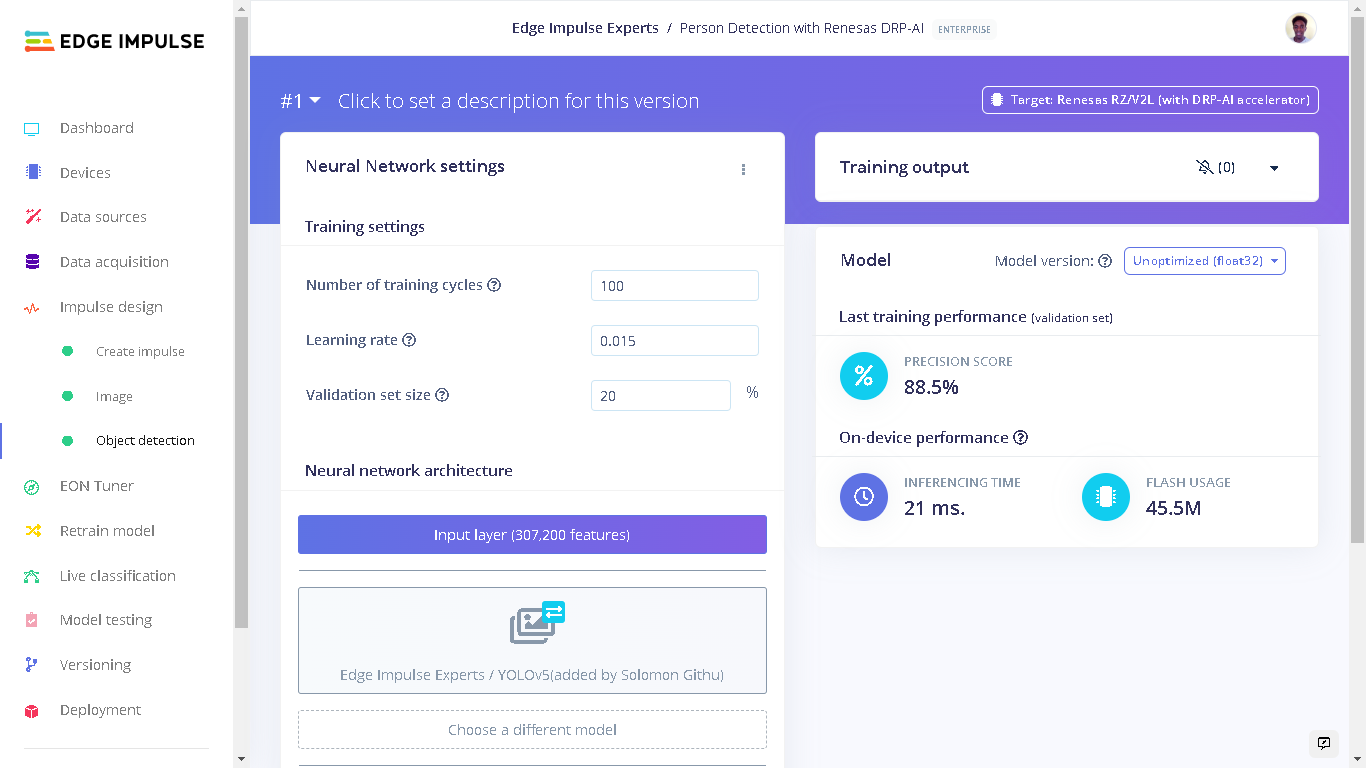

Impulse Design

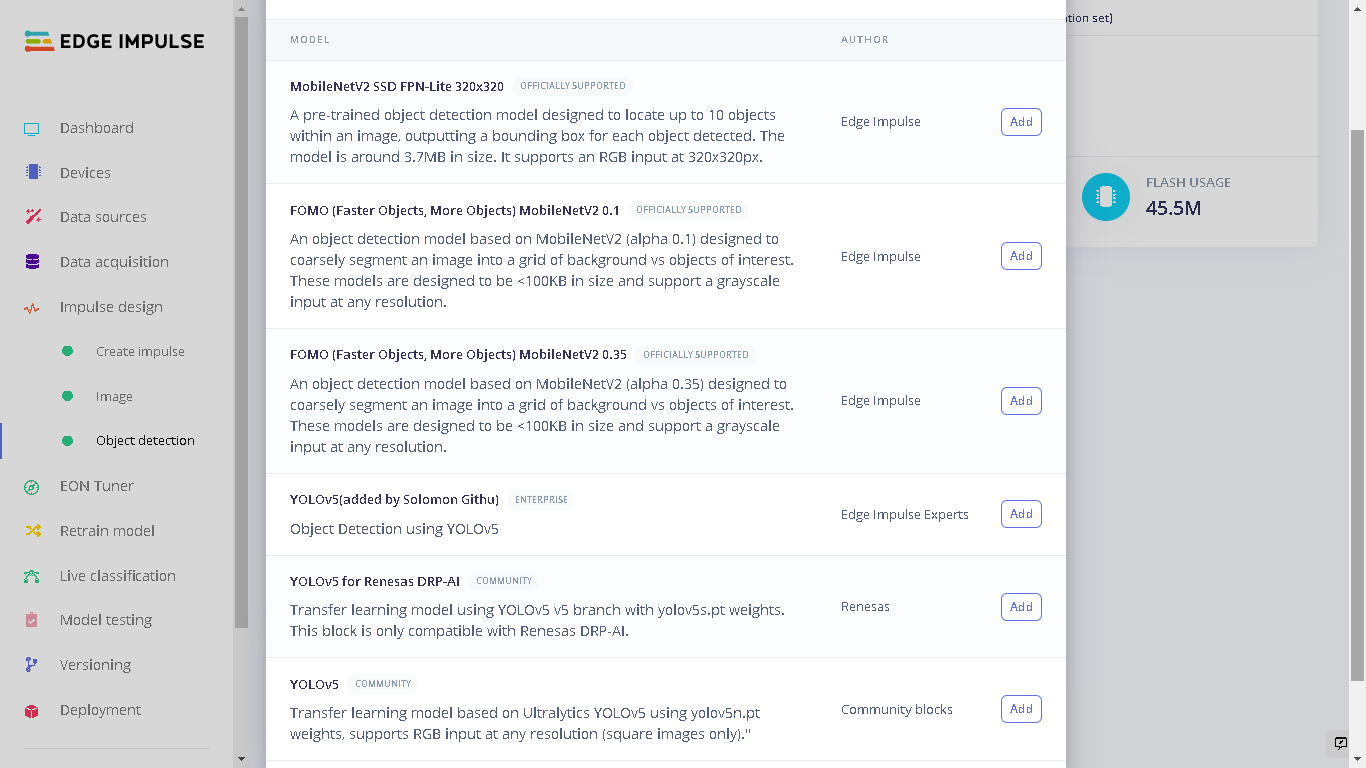

An Impulse is a machine learning pipeline that specifies the type of input data, extracts features from that data, and trains a neural network on those features. For the YOLOv5 model that I settled on, I used an image width and height of 320 pixels with the “Resize mode” set to “Squash”. The processing block was set to “Image” and the learning block to “Object Detection (Images)”.

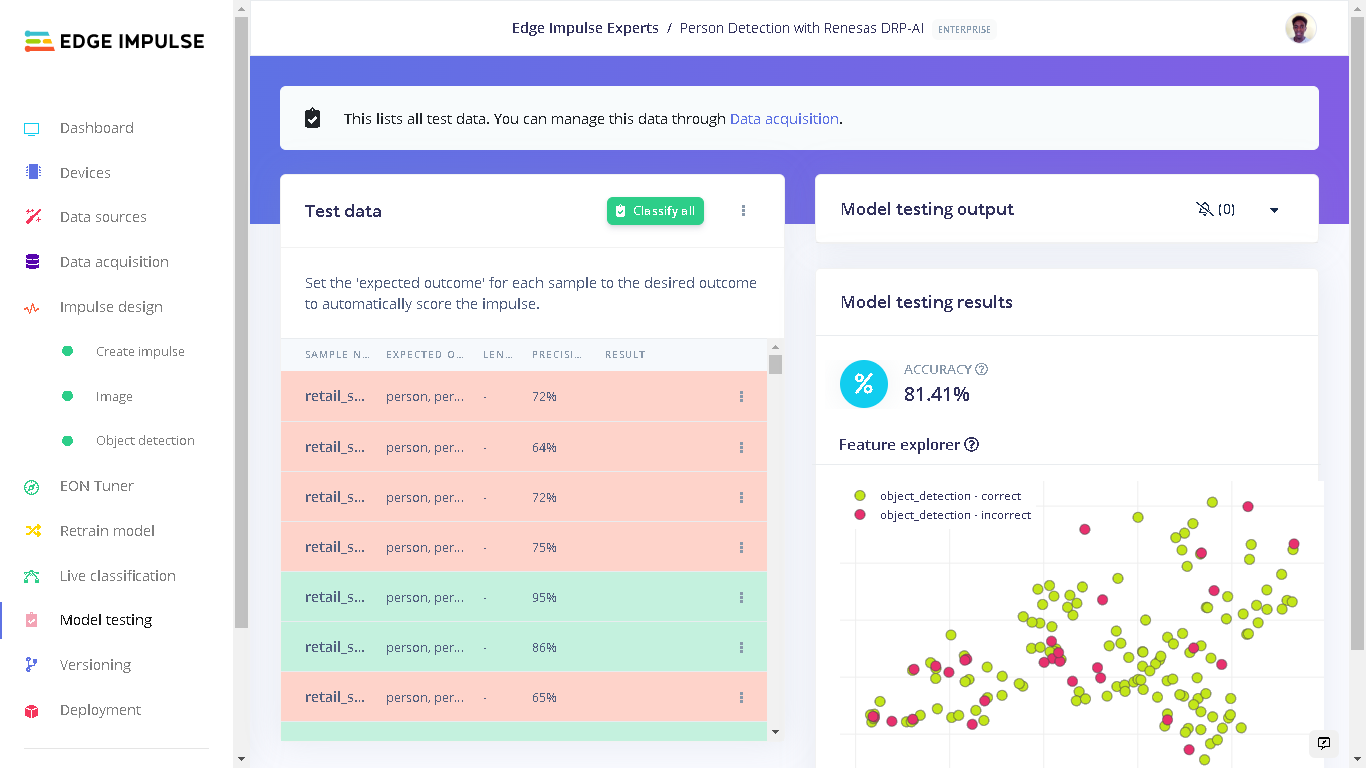

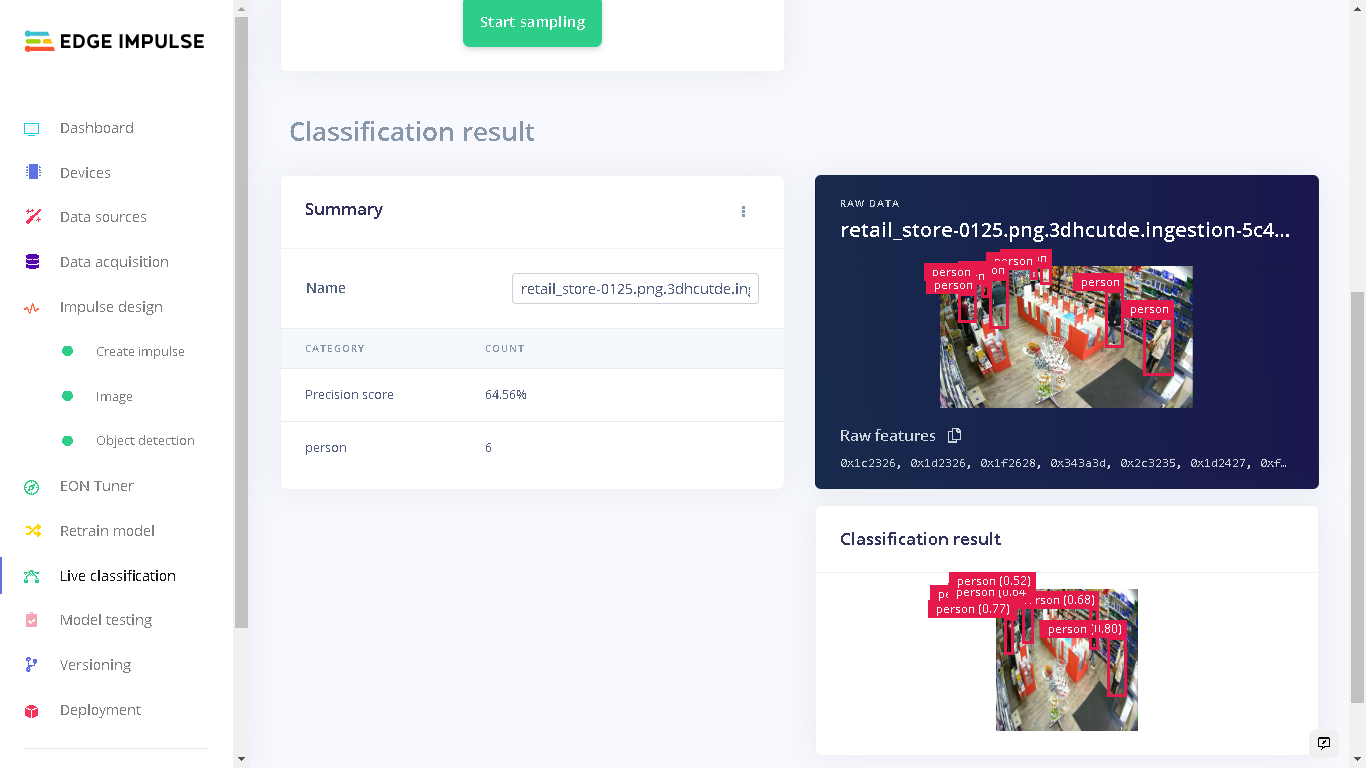

Model Testing

After training the model, I tested it on the unseen test data, and the results were impressive, at 85% accuracy.

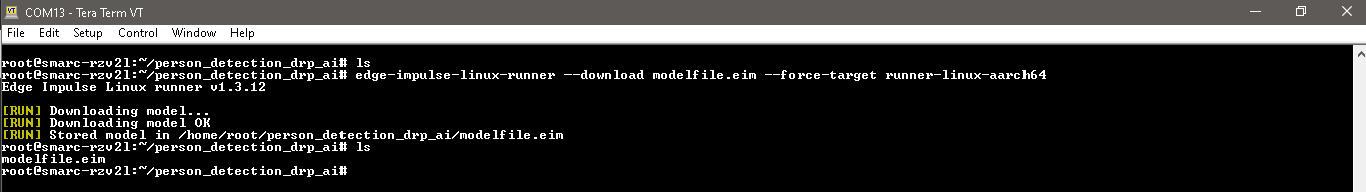

Deploying to Renesas RZ/V2L Evaluation Kit

The Renesas Evaluation Kit comes with the RZ/V2L board and a 5-megapixel Google Coral Camera. To set up the board, Edge Impulse has prepared a guide that shows how to prepare the Linux OS image, install the Edge Impulse CLI, and finally connect to Edge Impulse Studio.

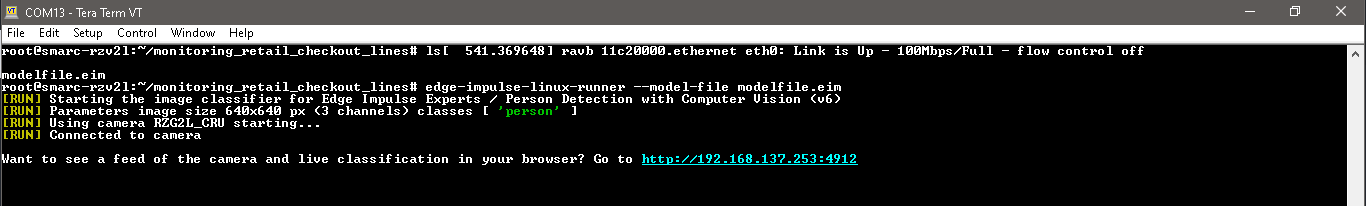

To run the model on the RZ/V2L, we can run `edge-impulse-linux` and then `edge-impulse-linux-runner`, which lets us log in to our account and select the cloned public project.

We can also download an executable of the model which contains the signal processing and ML code, compiled with optimizations for the processor, plus a very simple IPC layer (over a Unix socket). This executable is called an `.eim` model.

To do the same, create a directory, navigate into it, and then download the `.eim` model with the `--download` option of `edge-impulse-linux-runner`.

Renesas RZ/V2L CPU vs DRP-AI Performance

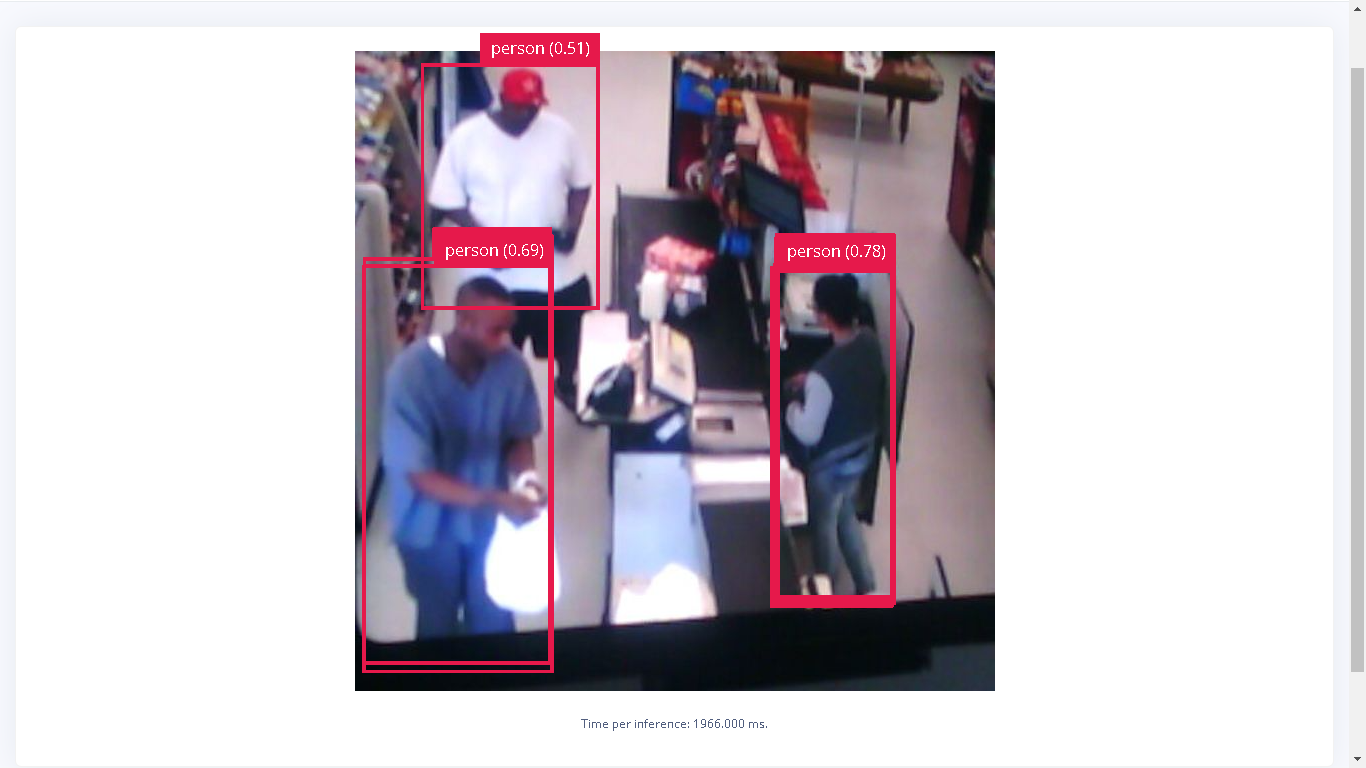

Currently, all Edge Impulse models can run on the RZ/V2L CPU which is an SoC based on Cortex-A55 cores. In addition, you can bring your own model to Edge Impulse and use it on the device. However, if you would like to benefit from the DRP-AI hardware acceleration support, there are a couple of models that you can use for both object detection and image classification. In the midst of the rapid evolution of AI, Renesas developed the AI accelerator (DRP-AI) and the software (DRP-AI translator) that delivers both high performance and low power consumption, and has the ability to respond to evolution. Combining the DRP-AI and the DRP-AI translator makes AI inference possible with high power efficiency.- Performance with RZ/V2L CPU - I first trained another model but with the target device being RZ/V2L (CPU). Afterwards, I downloaded the

.eimexecutable on the RZ/V2L and run live classification with it.

- Performance with RZ/V2L (DRP-AI Accelerator) - running the same inference test, but with the downloaded

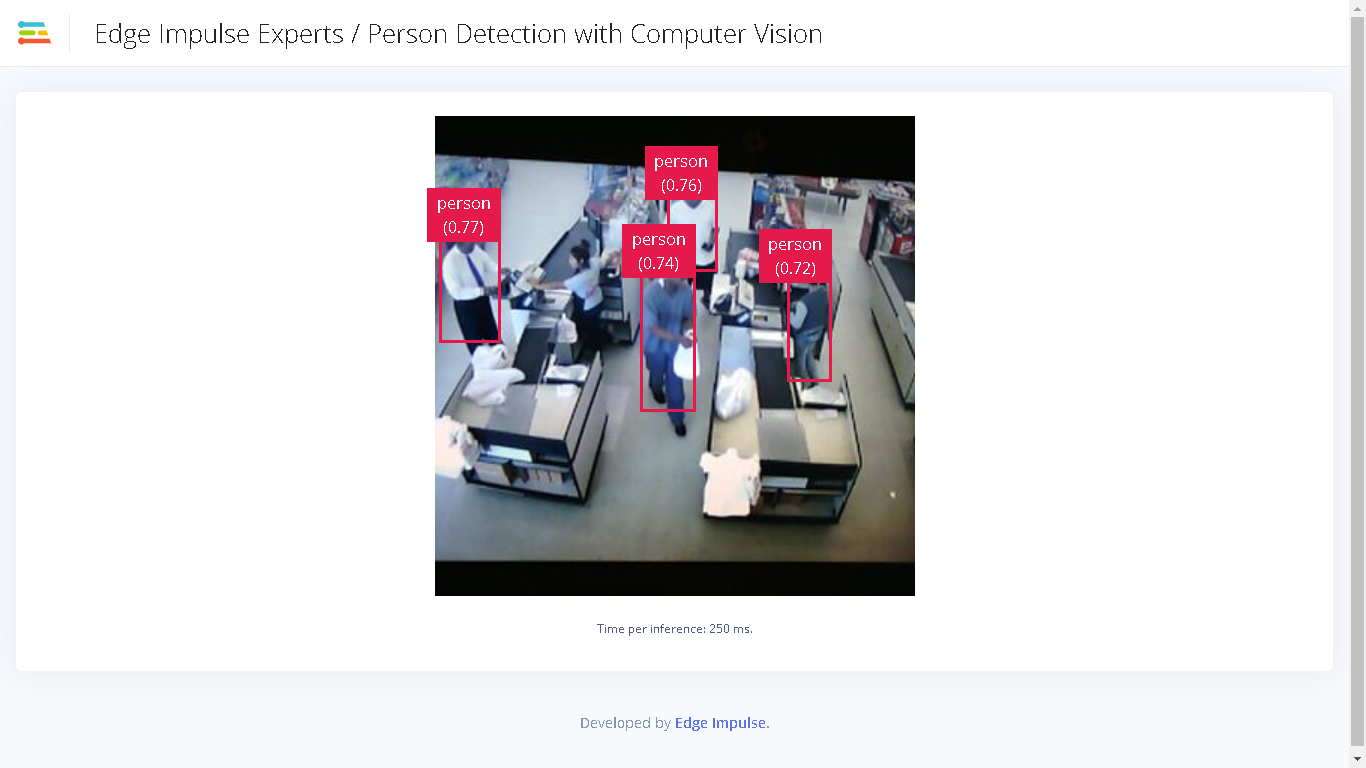

.eimwith DRP-AI acceleration enabled gave a latency of around 250ms, or roughly 4 fps.

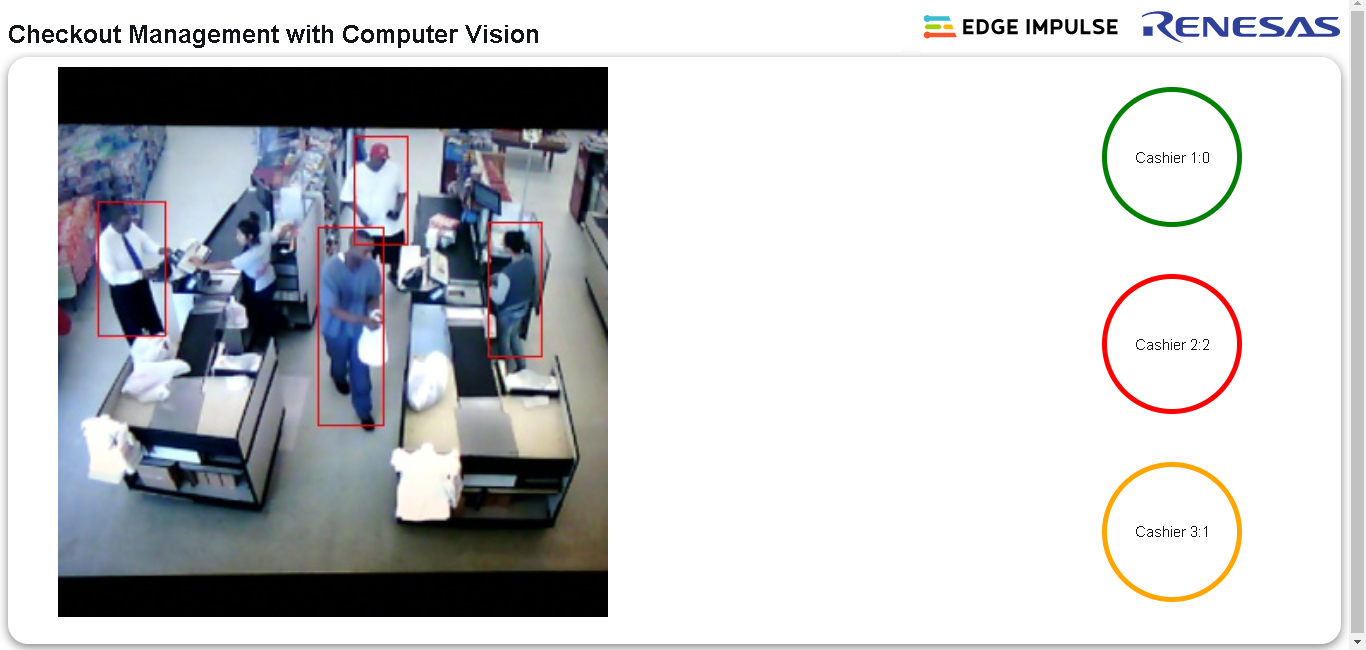
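As a quick check on the latency figure, throughput in frames per second is the reciprocal of the per-frame latency. The helper below is only an illustrative sketch (the name `latency_ms_to_fps` is made up here), and it assumes frames are processed one at a time with no other overhead such as camera capture.

```python
def latency_ms_to_fps(latency_ms: float) -> float:
    """Convert a per-frame inference latency in milliseconds to frames per second."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    # 1000 ms in a second, one frame per `latency_ms` milliseconds.
    return 1000.0 / latency_ms


# The DRP-AI run above: about 250 ms per frame.
print(latency_ms_to_fps(250))  # 4.0
```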

A Smart Application to Monitor Checkout Lines

Using the `.eim` executable and the Edge Impulse Linux Python SDK, I developed a web application with Flask that counts the number of people in each queue and computes the distribution of the total count across the available counters, which makes it possible to identify long and short queues.
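The per-counter counting step can be sketched roughly as follows. This is an illustration under stated assumptions, not the application's actual code: it assumes each detection is a dictionary with `label`, `x`, `y`, `width` and `height` keys (the shape in which the Edge Impulse Linux Python SDK reports bounding boxes), that the detected class is labeled `person`, and that each counter's queue occupies a vertical strip of the frame. The `COUNTER_ZONES` boundaries and the `count_per_counter` helper are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical horizontal zones (left, right) in pixels for three counters
# in a 320-pixel-wide frame. Real zones depend on the camera placement.
COUNTER_ZONES: Dict[str, Tuple[int, int]] = {
    "counter_1": (0, 107),
    "counter_2": (107, 214),
    "counter_3": (214, 320),
}


def count_per_counter(
    boxes: List[dict],
    zones: Dict[str, Tuple[int, int]] = COUNTER_ZONES,
    label: str = "person",  # assumed class label
) -> Dict[str, int]:
    """Count detections of `label` whose horizontal center falls in each zone."""
    counts = {name: 0 for name in zones}
    for box in boxes:
        if box.get("label") != label:
            continue
        center_x = box["x"] + box["width"] / 2
        for name, (left, right) in zones.items():
            # Zones are half-open, so a box is counted at most once.
            if left <= center_x < right:
                counts[name] += 1
                break
    return counts


# Example with made-up detections.
detections = [
    {"label": "person", "x": 10, "y": 50, "width": 40, "height": 90},
    {"label": "person", "x": 60, "y": 40, "width": 30, "height": 80},
    {"label": "person", "x": 150, "y": 60, "width": 40, "height": 90},
    {"label": "person", "x": 250, "y": 55, "width": 50, "height": 95},
]
print(count_per_counter(detections))
# {'counter_1': 2, 'counter_2': 1, 'counter_3': 1}
```

Assigning each person by the center of the bounding box keeps the logic simple; a real deployment would likely need zones drawn to match the actual queue layout in the camera view.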

The application marks any counter that is serving more than 51 percent of the total number of people with a red indicator. At the same time, the counter with the fewest customers is shown with a green indicator, signaling that people should be redirected there.
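The red and green rule described above can be sketched as a small function. This is a minimal illustration, not the application's actual code: the `flag_counters` name, the `"none"` marker for unflagged counters, and the tie-breaking behavior are assumptions, and the threshold is read as strictly more than 51 percent of all customers.

```python
from typing import Dict

LONG_QUEUE_SHARE = 0.51  # share of all customers above which a counter is flagged red


def flag_counters(
    counts: Dict[str, int], threshold: float = LONG_QUEUE_SHARE
) -> Dict[str, str]:
    """Map each counter to 'red', 'green', or 'none' based on its share of customers."""
    total = sum(counts.values())
    flags = {name: "none" for name in counts}
    if total == 0:
        return flags  # nobody is queuing, so nothing to flag

    # Red: the counter holds more than `threshold` of all customers.
    for name, count in counts.items():
        if count / total > threshold:
            flags[name] = "red"

    # Green: the counter with the fewest customers (first one wins on ties),
    # unless it is also the red one, e.g. when only one counter has customers.
    shortest = min(counts, key=counts.get)
    if flags[shortest] != "red":
        flags[shortest] = "green"
    return flags


print(flag_counters({"counter_1": 7, "counter_2": 2, "counter_3": 3}))
# {'counter_1': 'red', 'counter_2': 'green', 'counter_3': 'none'}
```

In this example counter 1 holds 7 of 12 customers (about 58 percent), so it is flagged red, while counter 2 has the shortest queue and is flagged green.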

For the demo, I obtained publicly available video footage of a retail store that shows three checkout counters. A snapshot of the footage can be seen below.