Problem Statement

Object counting systems in the manufacturing industry are essential to inventory management and supply chains. They mostly use proximity sensors or color sensors to detect objects for counting. Proximity sensors detect the presence or absence of an object based on its proximity to the sensor, while color sensors distinguish objects based on their color or other visual characteristics. These systems have limitations, though: they typically have difficulty detecting small objects in large quantities, especially when the objects are not arranged in a row or in an orderly manner. A relatively fast conveyor belt compounds the problem. Together, these conditions make the count inaccurate.

Our Solution

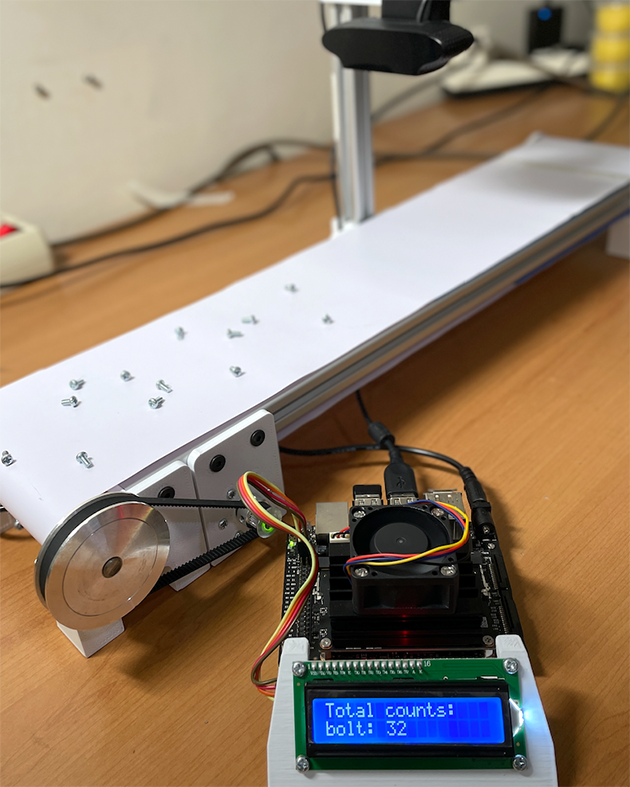

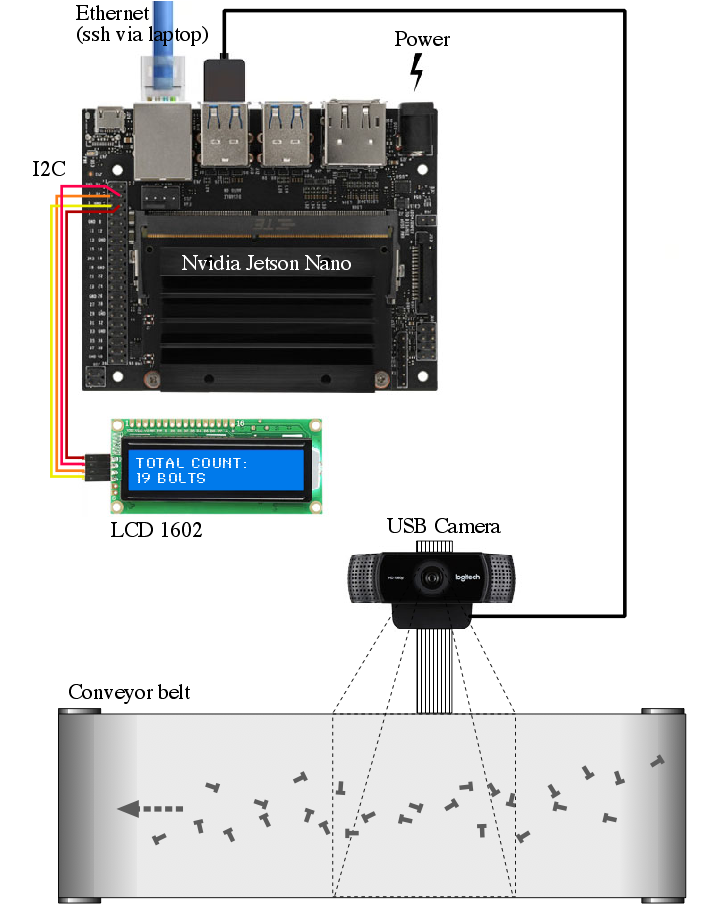

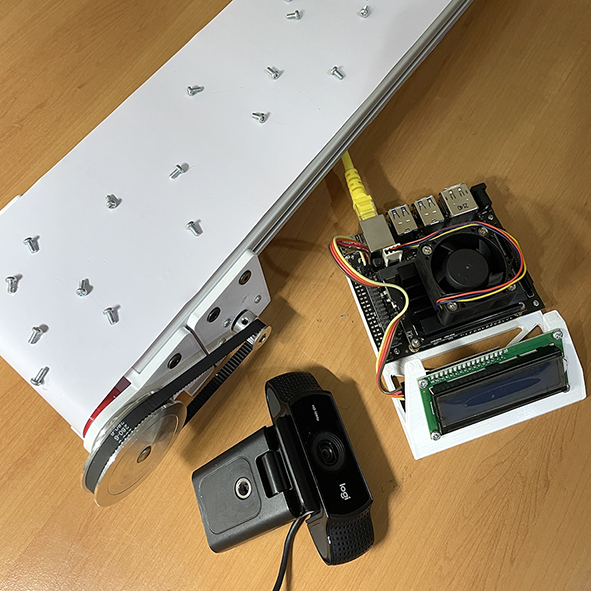

After experimenting with computer vision on the Jetson Nano in a previous project, I believe that a computer vision system with object detection can accurately count small objects in large quantities on fast-moving conveyor belts. In this project we'll explore the capability of Edge Impulse’s FOMO models that have been optimized for the GPU in the Jetson Nano. The production line / conveyor belt will run quite fast, with lots of small objects in random positions, and the number of objects will be counted live and displayed on a 16x2 LCD display. Speed and accuracy are the goals of the project.

How Does It Work?

This project utilizes Edge Impulse’s FOMO algorithm, which can quickly detect objects in every frame that a camera captures on a running conveyor belt. FOMO’s ability to report the number of objects in a frame and the coordinates of each one is the basis of this system. The project aims to assess the Nvidia Jetson Nano’s GPU capabilities in processing higher-resolution imagery (720x720 pixels), compared to typical FOMO object detection projects (which often target lower resolutions such as 96x96 pixels), while maintaining a fast inference speed. The machine learning model (named model.eim) will be deployed using the TensorRT library, configured with GPU optimizations and integrated through the Linux C++ SDK. The Edge Impulse model will also be integrated into our Python codebase to perform cumulative object counting. Our algorithm compares the object coordinates in the current frame with those of previous frames to identify new objects and avoid duplicate counting.
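As a minimal sketch of how the per-frame count and positions can be read out, the helper below turns one frame's detections into centroids. It assumes the result dictionary layout returned by the Edge Impulse Linux Python SDK's image runner (a `bounding_boxes` list whose entries carry `x`, `y`, `width`, `height`, `label` and `value`). The function name, the `min_confidence` threshold, and the sample data are illustrative assumptions and are not taken from the project's code.

```python
def centroids_from_result(res, min_confidence=0.5):
    """Return (cx, cy) centres for detections at or above min_confidence.

    `res` is assumed to look like the classification result of the
    Edge Impulse Linux Python SDK: res["result"]["bounding_boxes"].
    """
    boxes = res.get("result", {}).get("bounding_boxes", [])
    centroids = []
    for bb in boxes:
        if bb["value"] < min_confidence:
            continue  # skip low-confidence detections
        cx = bb["x"] + bb["width"] / 2.0
        cy = bb["y"] + bb["height"] / 2.0
        centroids.append((cx, cy))
    return centroids


# Hand-written sample result (hypothetical values, not real model output).
sample = {
    "result": {
        "bounding_boxes": [
            {"label": "bolt", "value": 0.93, "x": 100, "y": 200, "width": 8, "height": 8},
            {"label": "bolt", "value": 0.31, "x": 400, "y": 64, "width": 8, "height": 8},
        ]
    }
}

frame_centroids = centroids_from_result(sample)
print(len(frame_centroids), frame_centroids)
```

The length of the returned list is the object count for that frame, and the centroids are what a frame-to-frame comparison would work on.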

Hardware Requirements:

- NVIDIA Jetson Nano Developer Kit

- USB Camera (e.g. Logitech C922)

- Mini conveyor belt system with camera stand

- Objects: e.g. bolts

- Ethernet cable

- PC/Laptop to access Jetson Nano via SSH

Software & Online Services:

- Edge Impulse Studio

- Edge Impulse Linux, Python & C++ SDK

- NVIDIA Jetpack SDK

- Terminal

Steps

1. Prepare Data / Images

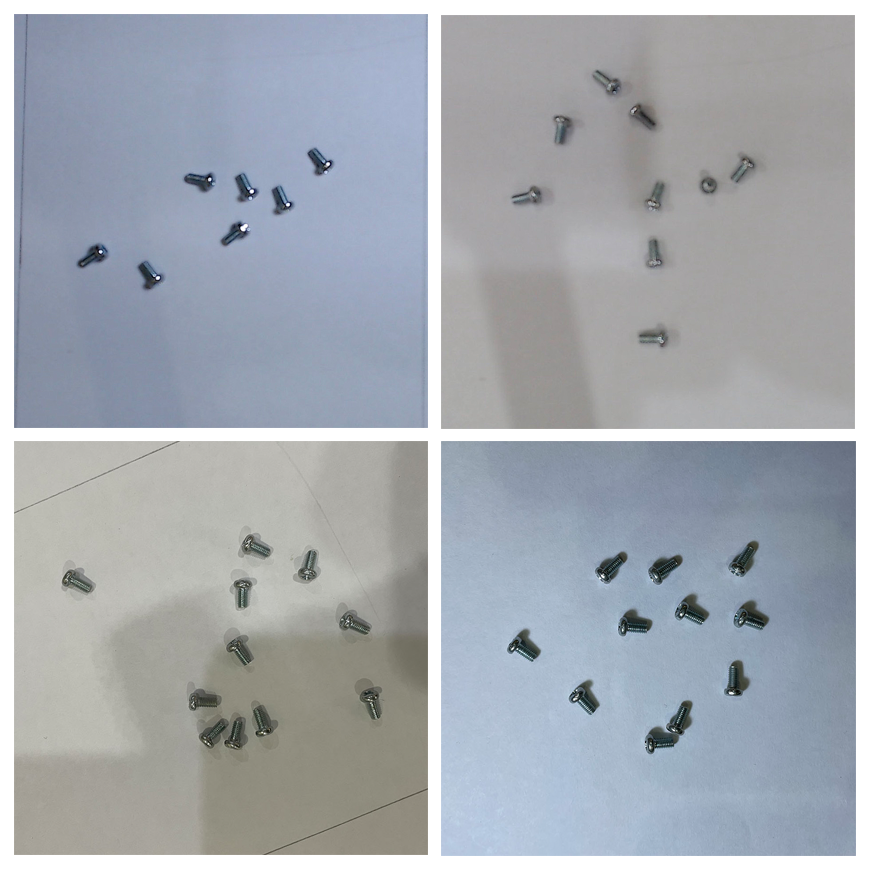

In this project we use a Logitech C922 USB camera capable of 720p at 60 fps connected to a PC/laptop to capture the images for data collection, for ease of use. Take pictures from above the parts, at slightly different angles and lighting conditions to ensure that the model can work under different conditions (to prevent overfitting). Object size is a crucial aspect when using FOMO, to ensure the performance of this model. You must keep the camera distance from the objects consistent, because significant difference in object sizes will confuse the algorithm and cause difficulty in the auto-labelling process.

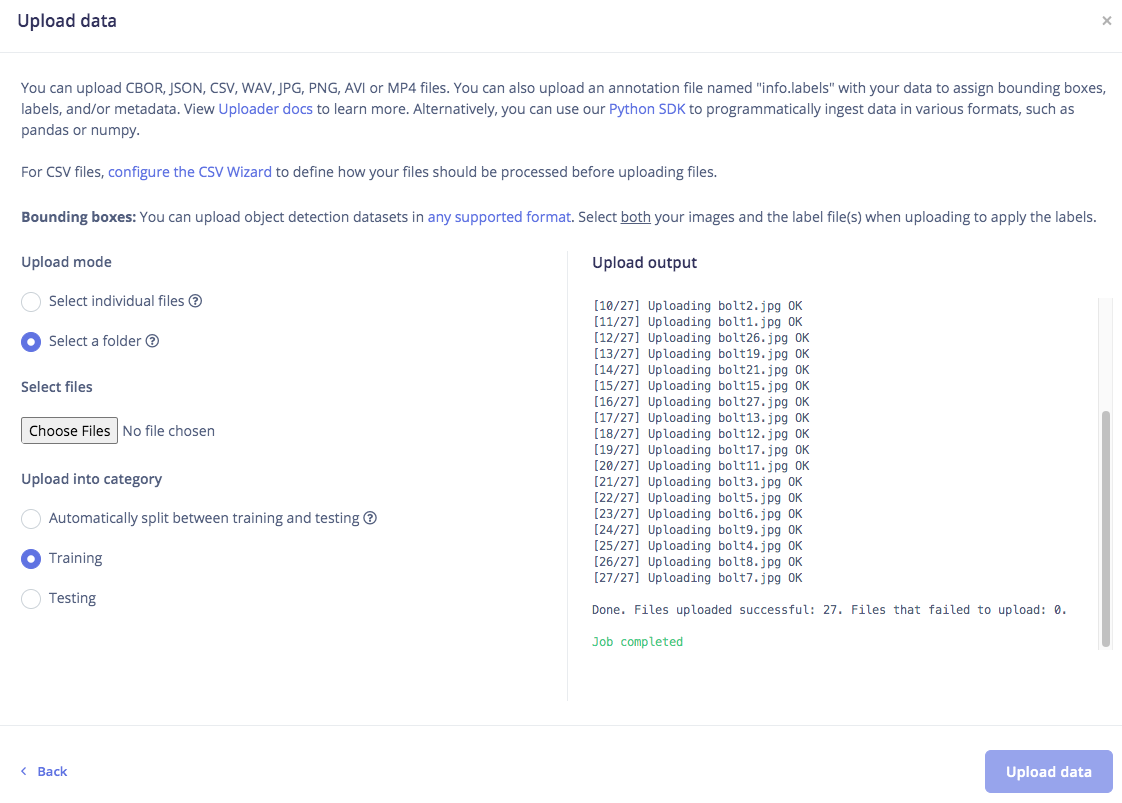

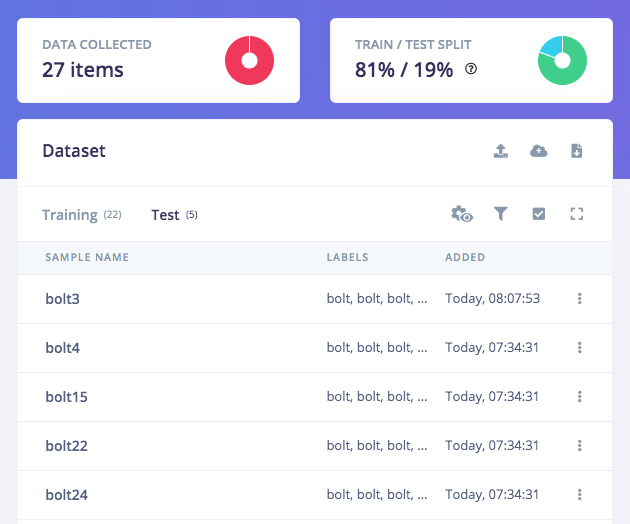

2. Data Acquisition and Labeling

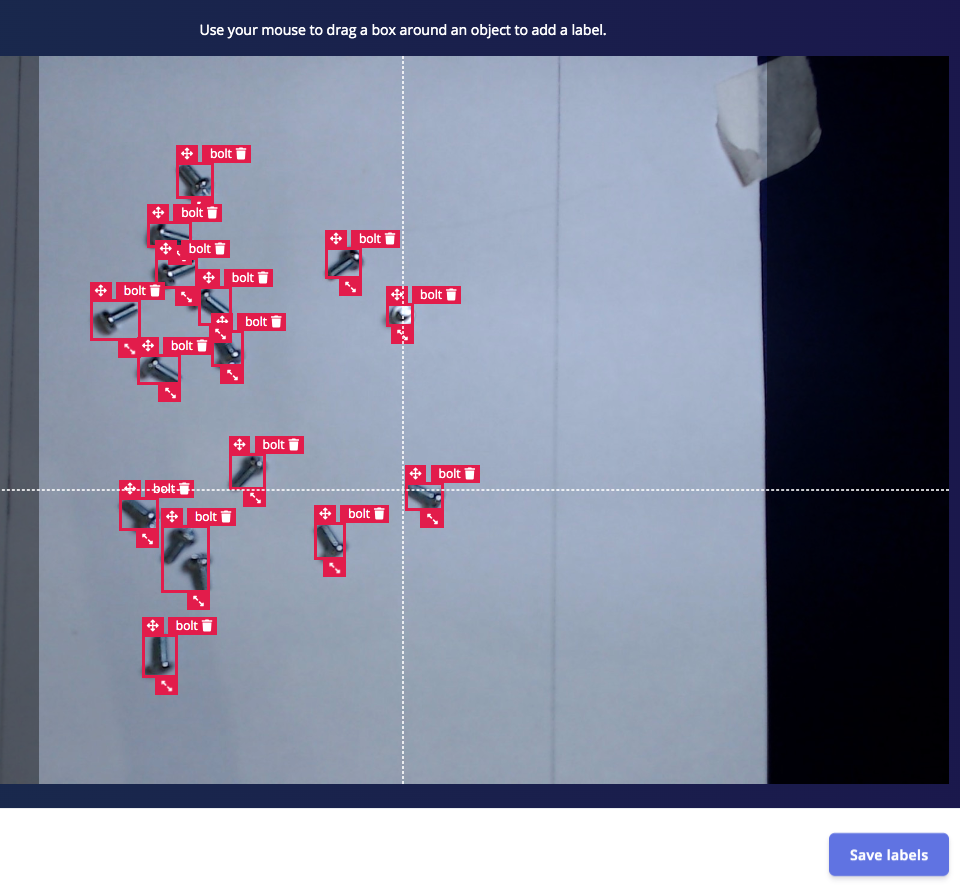

Open studio.edgeimpulse.com, log in or create an account, then create a new project. Choose the Images project option, then Object detection. In Dashboard > Project Info, choose Bounding Boxes for the labeling method and NVIDIA Jetson Nano for the target device. Then in Data acquisition, click on the Upload Data tab, choose your photo files, automatically split them between Training and Testing, then click on Begin upload. Next:

- For Developer accounts: click on the Labeling queue tab, then drag a bounding box around an object, label it, and click Save. Repeat this until all images are labelled. It goes quickly though, as the bounding boxes will attempt to follow an object from image to image.

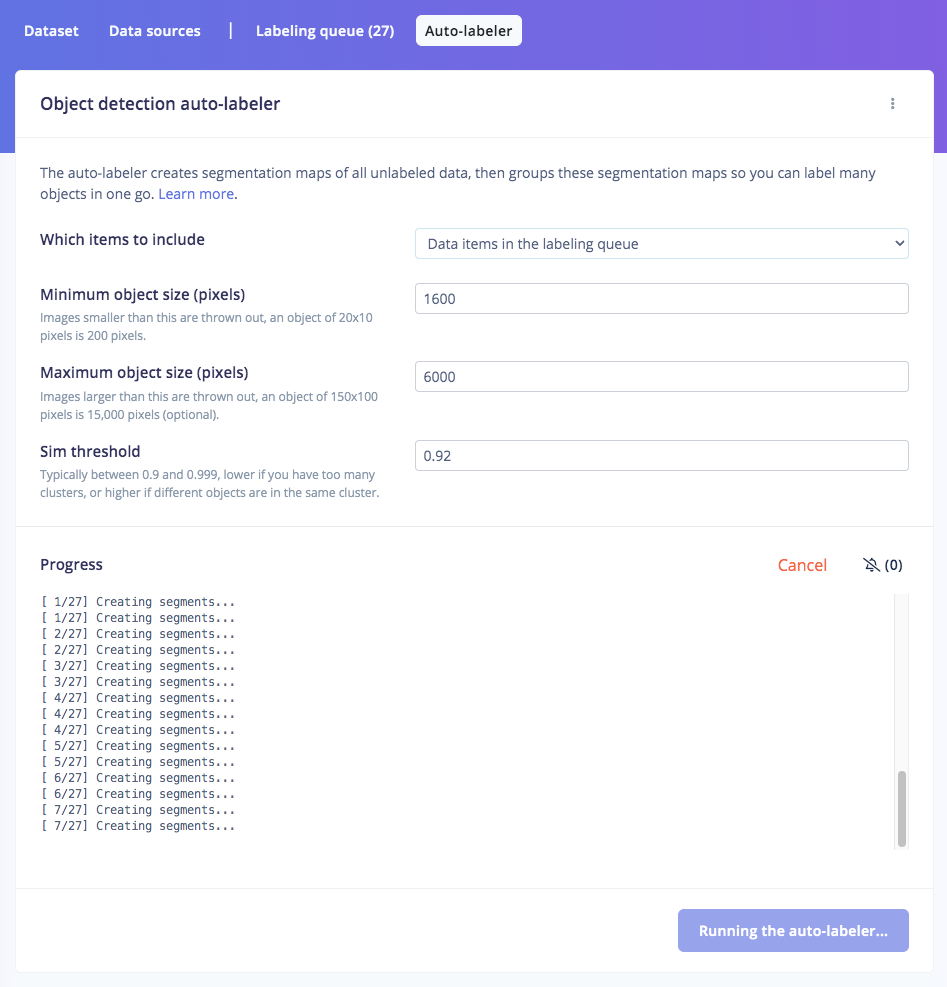

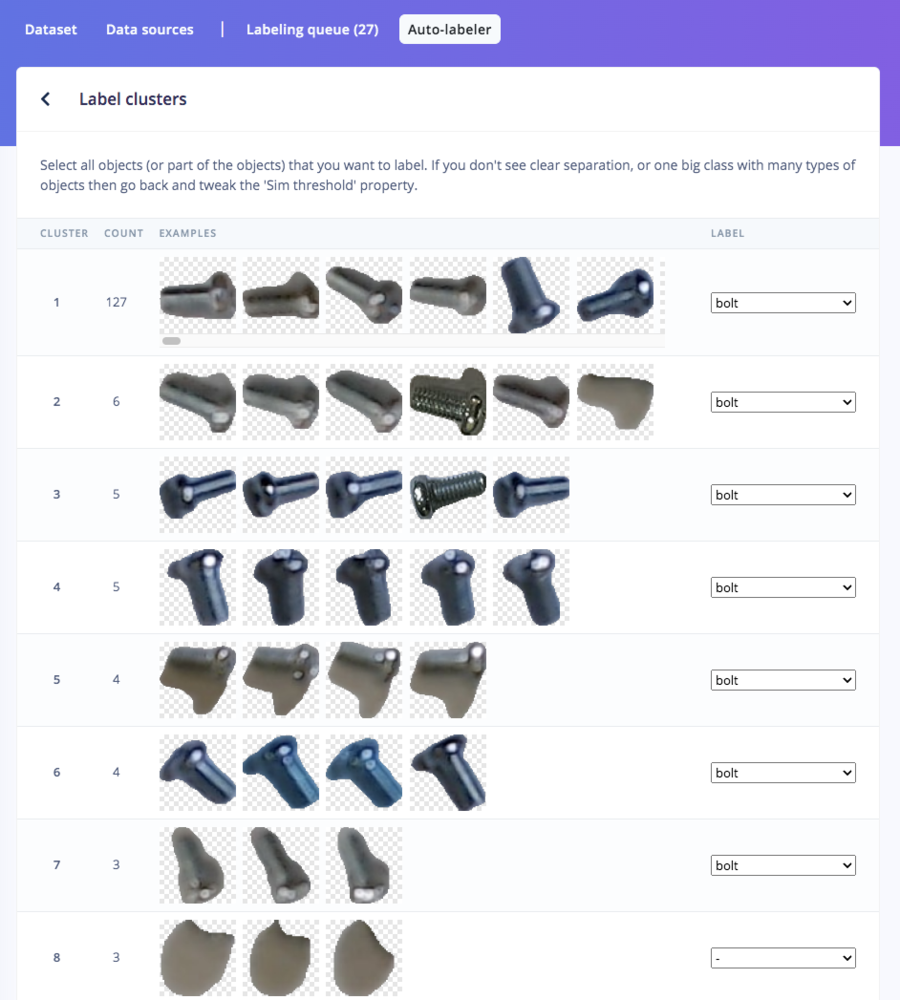

- For Enterprise accounts: click on Auto-Labeler in Data Acquisition. This auto-labeling segmentation / cluster process will save a lot of time over the manual process above. Set min/max object pixels and sim threshold (0.9 - 0.999) to adjust the sensitivity of cluster detection, then click Run. If something doesn’t match or if there is additional data, labeling can be done manually as well.

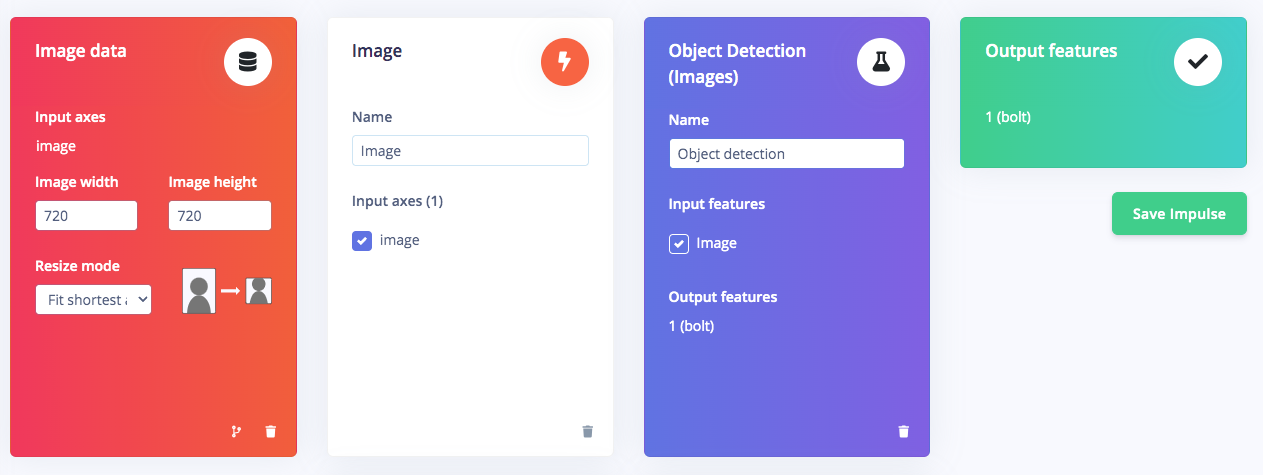

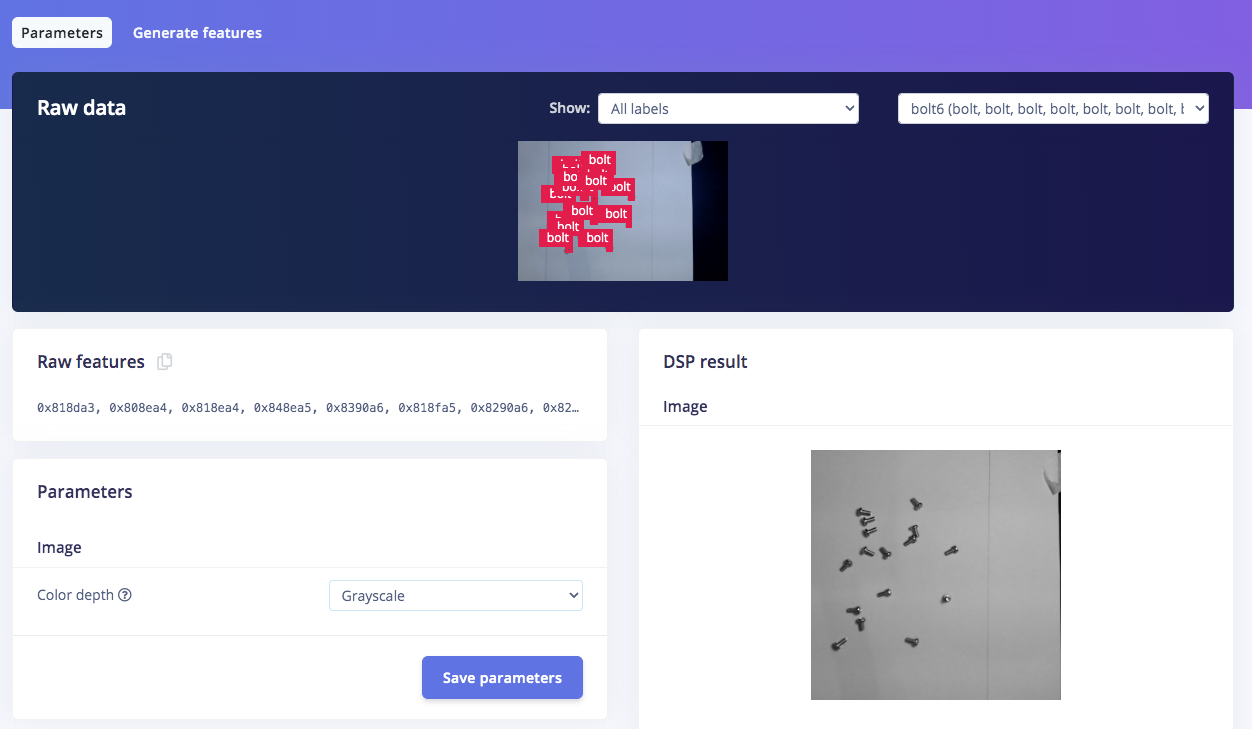

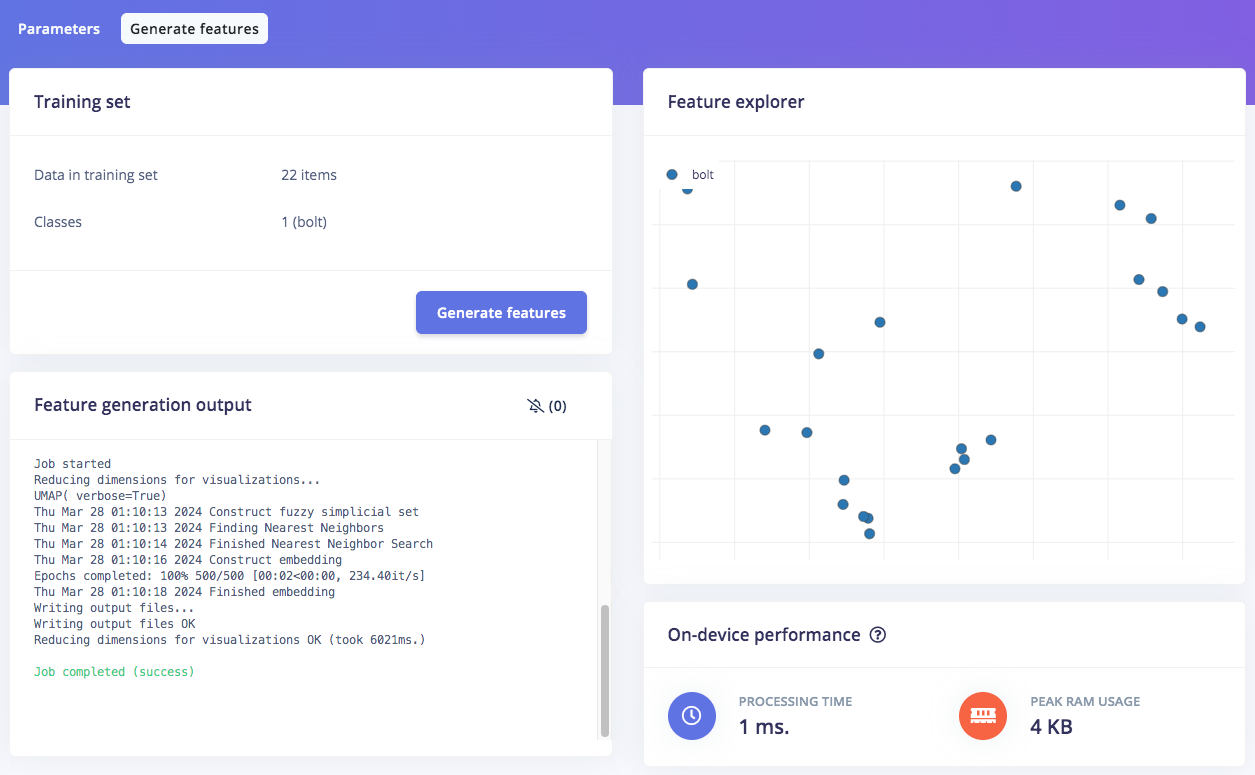

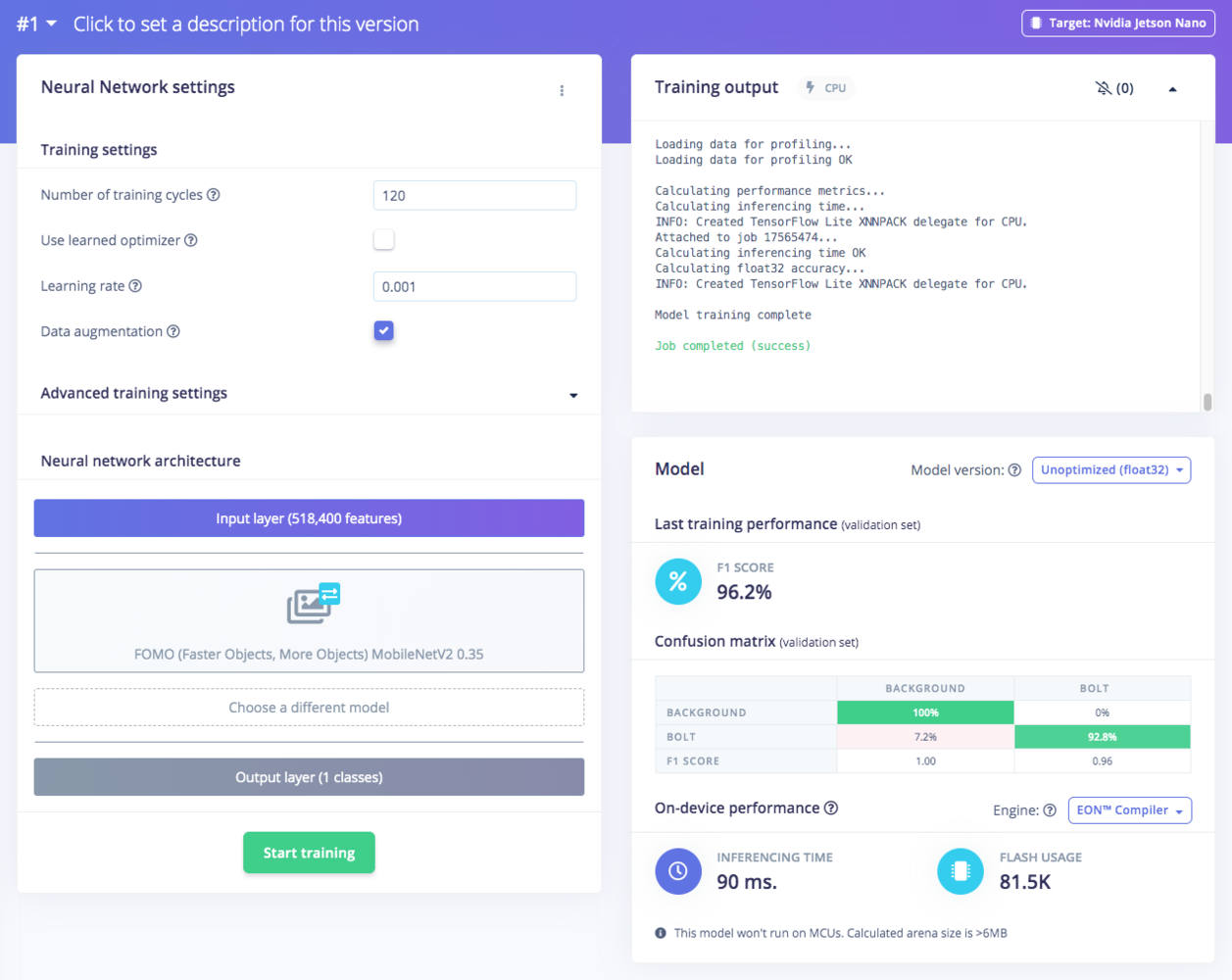

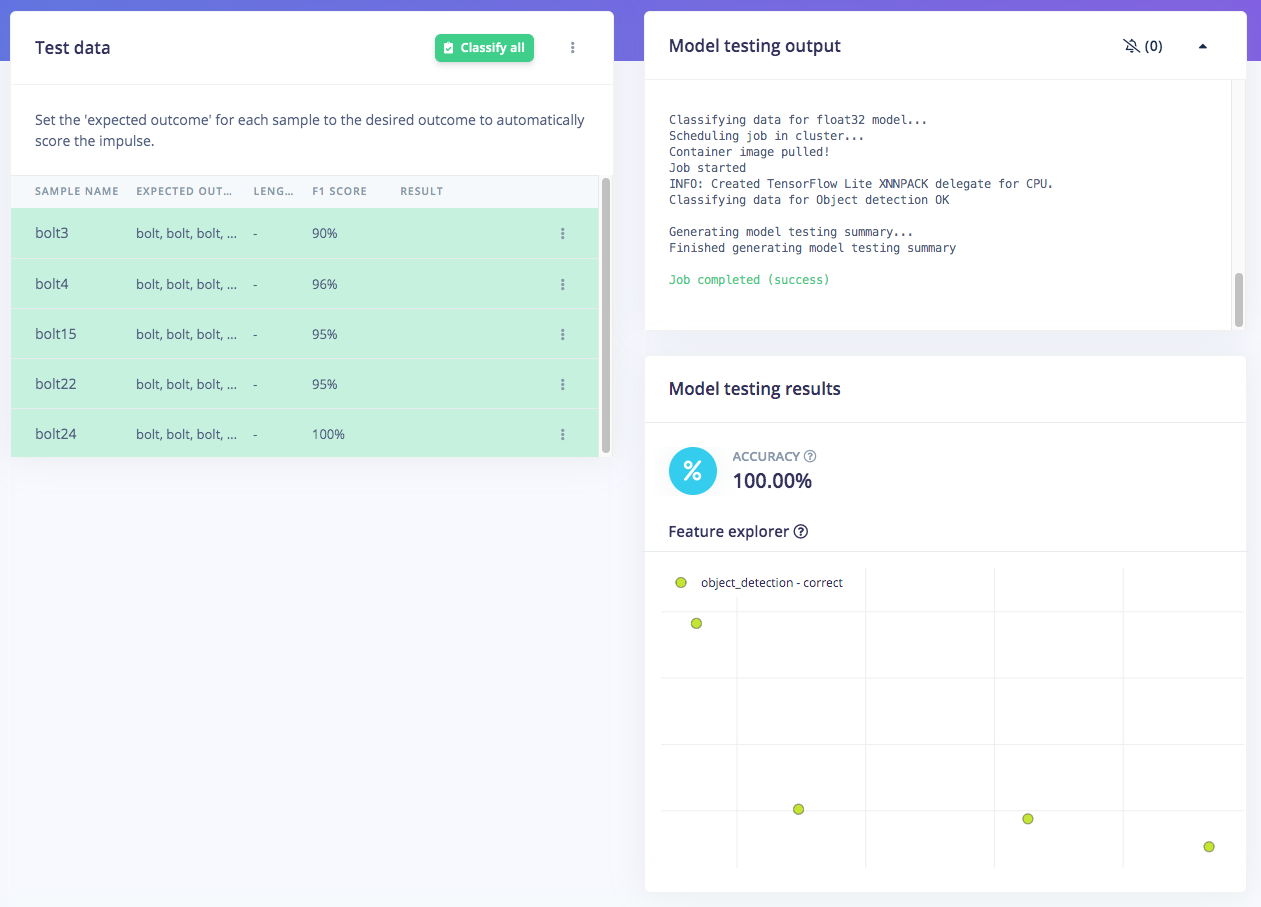

3. Train and Build Model Using FOMO Object Detection

Once you have the dataset ready, go to Create Impulse and set 720 x 720 as the image width and height. Then choose Fit shortest axis, and choose Image as the Processing block and Object Detection as the Learning block. In the Image block configuration, select Grayscale as the color depth and click Save parameters. Then click on Generate features to get a visual representation of the features extracted from each image in the dataset. Navigate to the Object Detection block setup, and leave the default selections as-is for the Neural Network, but perhaps bump up the number of training epochs to 120. Then choose FOMO (MobileNet V2 0.35), and train the model by clicking the Start training button. You can see the progress on the right side of the page. If everything is OK, test the model: go to Model Testing in the left navigation and click Classify all. Our result is above 90%, so we can move on to the next step: Deployment.
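To make the resize setting concrete, the sketch below works out what Fit shortest axis implies for a 1280x720 camera frame, assuming that this mode scales the frame so its shorter side matches the target and then centre-crops the longer side. It also computes the size of FOMO's output grid, assuming the default 8x reduction between input and output resolution. Both helper names are hypothetical, and the 1280x720 source size is an assumption based on the camera's 720p mode.

```python
def fit_shortest_axis(src_w, src_h, dst_w, dst_h):
    """Return the scaled frame size and the centred crop box (x0, y0, x1, y1)."""
    # Scale so that both sides are at least as large as the target.
    scale = max(dst_w / src_w, dst_h / src_h)
    scaled_w = round(src_w * scale)
    scaled_h = round(src_h * scale)
    # Crop the excess equally from both ends of the longer side.
    x0 = (scaled_w - dst_w) // 2
    y0 = (scaled_h - dst_h) // 2
    return (scaled_w, scaled_h), (x0, y0, x0 + dst_w, y0 + dst_h)


def fomo_grid(input_size, reduction=8):
    """Cells per side of the FOMO output grid (assumed 8x reduction)."""
    return input_size // reduction


scaled, crop = fit_shortest_axis(1280, 720, 720, 720)
print("scaled size:", scaled, "crop box:", crop)
print("grid at 720x720:", fomo_grid(720), "cells per side")
print("grid at 96x96:", fomo_grid(96), "cells per side")
```

Under these assumptions, a 720p frame loses 280 pixel columns on each side, so objects near the left and right edges of the camera view fall outside the model input.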

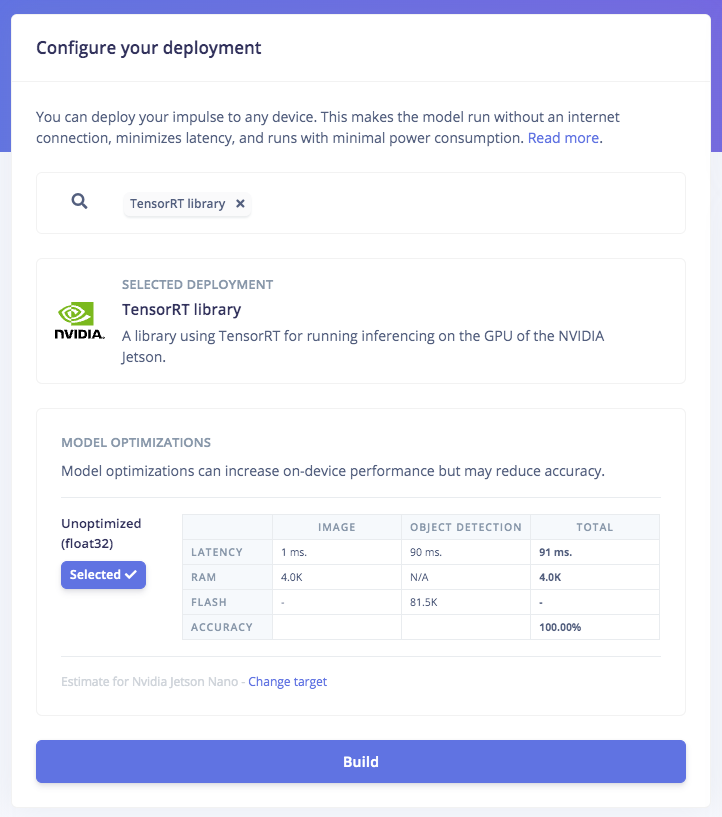

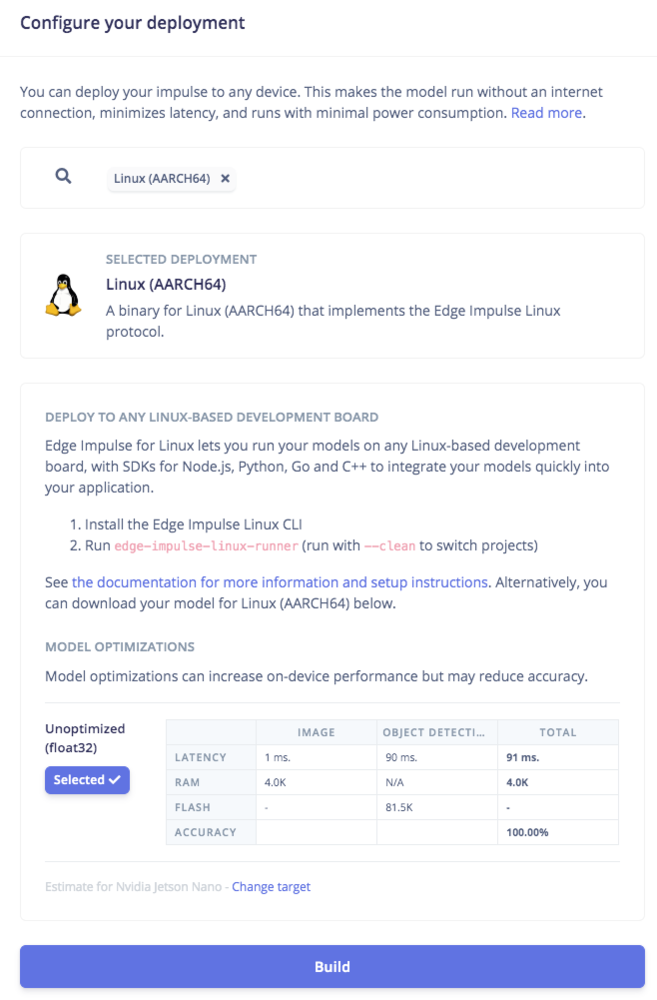

4. Deploy Model Targeting Jetson Nano’s GPU

Click on the Deployment navigation item, then search for TensorRT. Select Float32 and click Build. This will build an NVIDIA TensorRT library for running inferencing targeting the Jetson Nano’s GPU. After it has downloaded, open the .zip file, and then we’re ready for model deployment with the Edge Impulse C++ SDK directly on the NVIDIA Jetson Nano.

Connect to the Jetson Nano over SSH from a PC or laptop via Ethernet, and set up the Edge Impulse firmware in the terminal:

/build/model.eim

If your Jetson Nano is running on a dedicated power supply (as opposed to a battery), its performance can be maximized by this command:

sudo /usr/bin/jetson_clocks

Now the model is ready to run in a high-level language such as the Python program in the next step. To ensure this model works, we can run the Edge Impulse Runner with the camera set up on the Jetson Nano and run the conveyor belt. You can then see the camera stream via your browser (the IP address is provided when Edge Impulse Runner first starts up). Run this command:

5. Build Cumulative Count Program (Python)

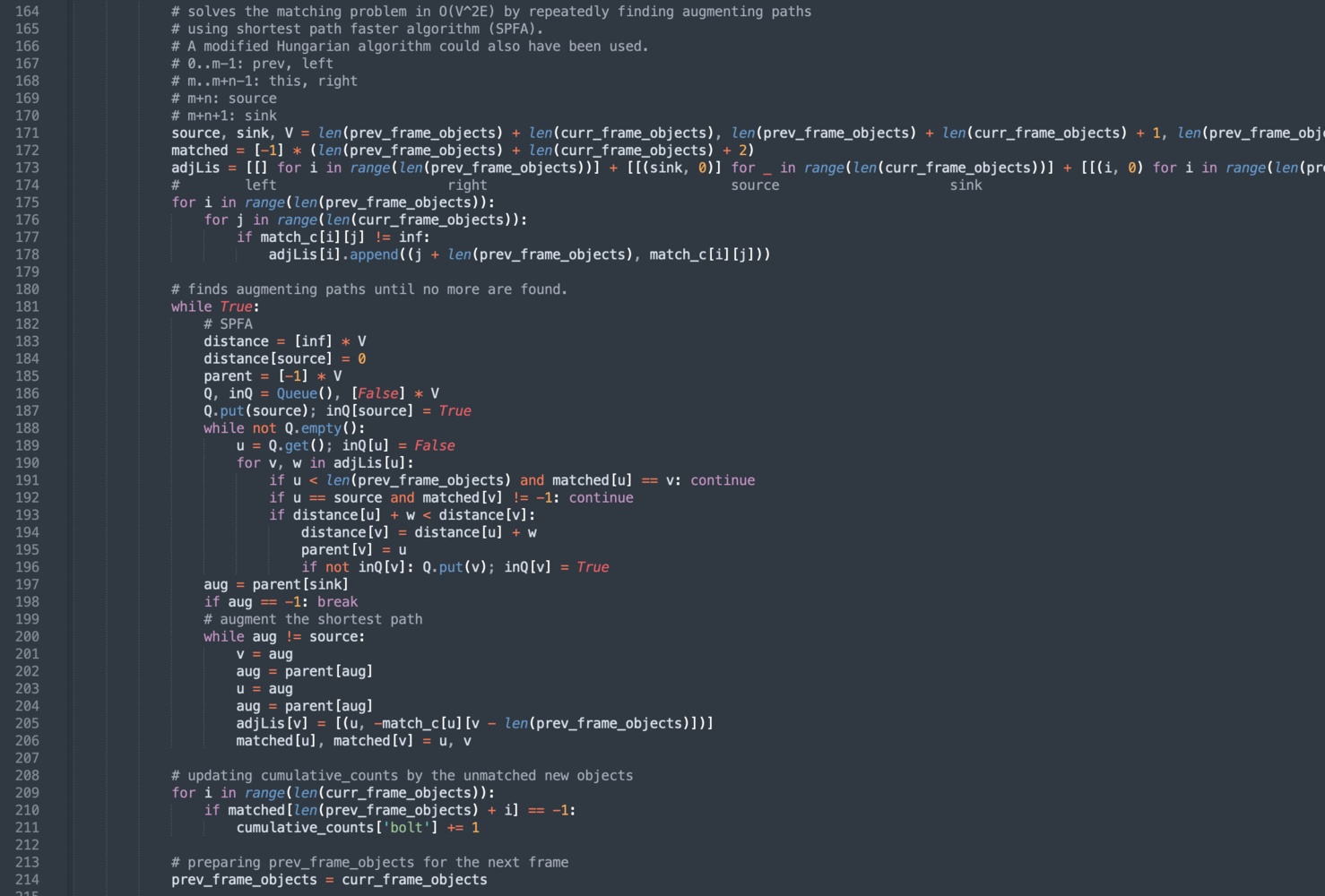

Before we start with Python, we need to install the Edge Impulse Python SDK and clone the repository from the previous Edge Impulse examples. Follow the steps here. With the impressive performance of live inferencing in the Runner, we will now create a Python program that calculates the cumulative count of moving objects from the camera capture. The program is a modification of Edge Impulse’s classify.py in examples/image from the linux-python-sdk directory. We turned it into an object tracking program by solving a bipartite matching problem, so that the same object can be tracked across different frames to avoid double counting. For more detail, you can download and check the Python program at https://github.com/Jallson/High_res_hi_speed_object_counting_FOMO_720x720
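The sketch below illustrates the bipartite matching idea in plain Python. It is not the code from the repository: the function names, the `max_dist` gate, and the sample centroids are all assumptions made for illustration. Previous-frame and current-frame centroids are matched with a minimum-cost assignment (the Hungarian algorithm), and any current detection left without a partner within `max_dist` pixels is treated as a new object.

```python
import math

BIG = 1e9  # cost used for padded or too-distant pairs


def min_cost_assignment(cost):
    """Hungarian algorithm on a square matrix; returns the column chosen per row."""
    n = len(cost)
    u = [0.0] * (n + 1)
    v = [0.0] * (n + 1)
    p = [0] * (n + 1)    # p[j]: 1-based row currently matched to column j
    way = [0] * (n + 1)
    for i in range(1, n + 1):
        p[0] = i
        j0 = 0
        minv = [math.inf] * (n + 1)
        used = [False] * (n + 1)
        while True:
            used[j0] = True
            i0, delta, j1 = p[j0], math.inf, 0
            for j in range(1, n + 1):
                if not used[j]:
                    cur = cost[i0 - 1][j - 1] - u[i0] - v[j]
                    if cur < minv[j]:
                        minv[j], way[j] = cur, j0
                    if minv[j] < delta:
                        delta, j1 = minv[j], j
            for j in range(n + 1):
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:
                break
        while j0:  # flip the augmenting path back to the root
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    rows = [0] * n
    for j in range(1, n + 1):
        rows[p[j] - 1] = j - 1
    return rows


def count_new_objects(prev, curr, max_dist=40.0):
    """Match curr centroids to prev ones; unmatched curr entries count as new."""
    n = max(len(prev), len(curr))
    cost = [[BIG] * n for _ in range(n)]  # padded to a square matrix
    for i, (px, py) in enumerate(prev):
        for j, (cx, cy) in enumerate(curr):
            d = math.hypot(cx - px, cy - py)
            if d <= max_dist:
                cost[i][j] = d
    assignment = min_cost_assignment(cost)
    matched = {j for i, j in enumerate(assignment)
               if i < len(prev) and j < len(curr) and cost[i][j] < BIG}
    return len(curr) - len(matched)


# Hypothetical centroids for three consecutive frames (belt moving in +x).
frames = [
    [(100.0, 50.0), (300.0, 60.0)],
    [(120.0, 50.0), (320.0, 60.0), (20.0, 200.0)],
    [(140.0, 50.0), (40.0, 200.0)],
]
total, prev = 0, []
for curr in frames:
    total += count_new_objects(prev, curr)
    prev = curr
print("cumulative count:", total)
```

The `max_dist` gate would need tuning to the belt speed and frame rate: if an object moves farther than that between two frames, it is counted again. Objects that leave the frame simply drop out of `prev` and do not affect the total.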

model.eim is located:

The delay visible in the video stream display and its corresponding output calculation is caused by the OpenCV program rendering a 720x720 display resolution window, not by the inference time of the object detection model. This demo test uses 30 bolts per cycle attached to the conveyor belt to show a comparison with the output on the counter.