Project Demo

Use-Cases

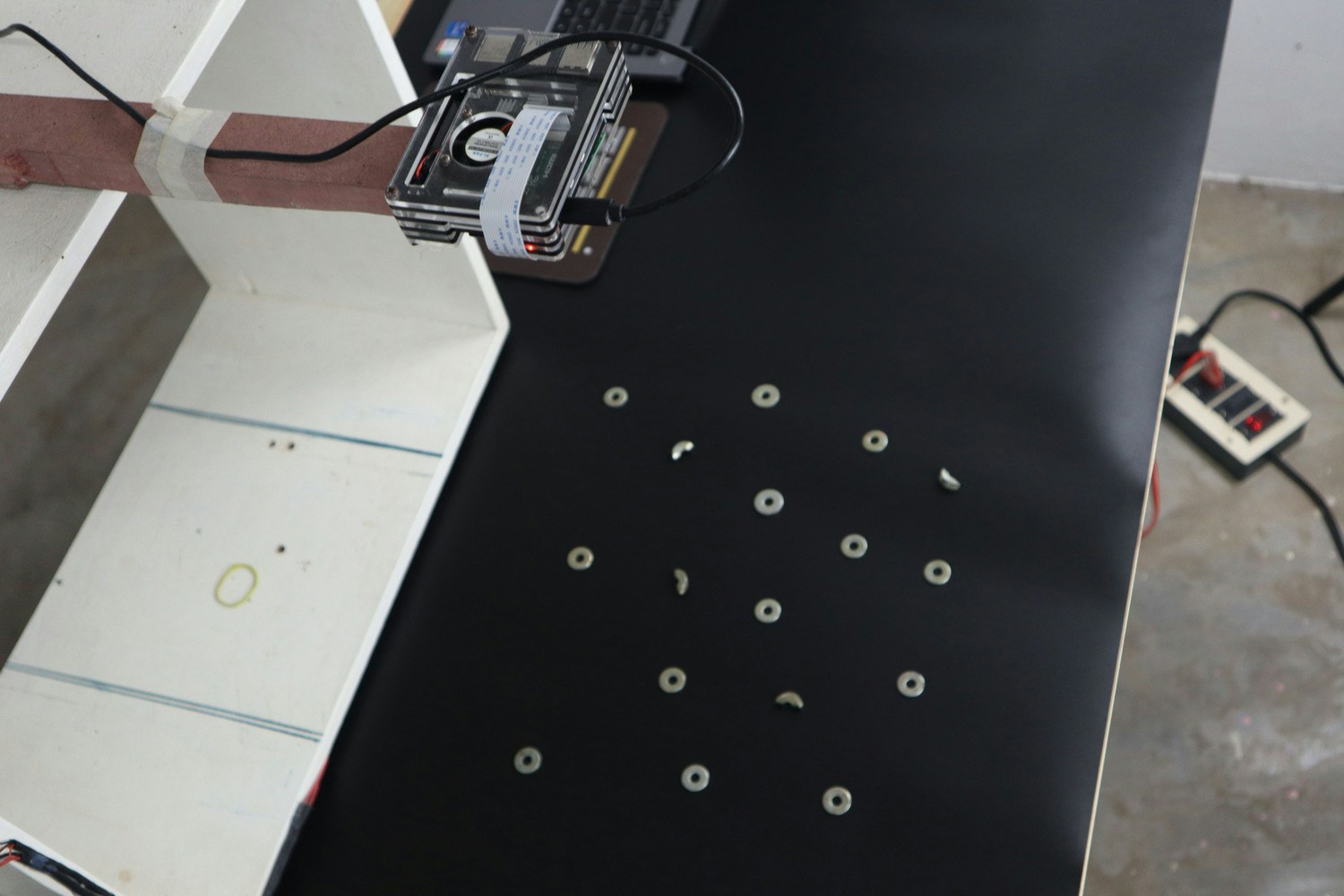

These sample use-cases can be applied to any industry.

1. Counting from the Top

In this case, we are counting defective and non-defective washers.

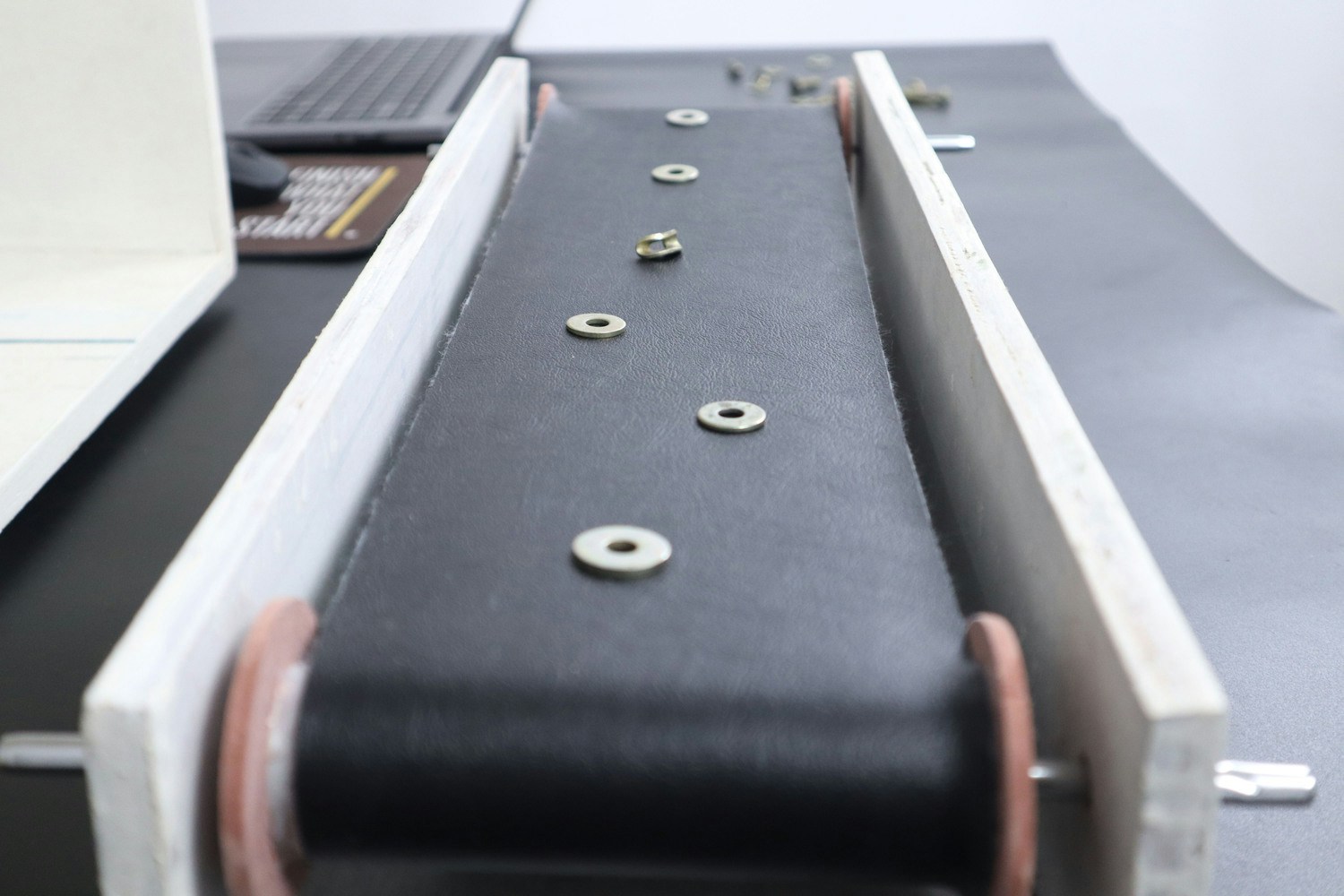

2. Counting in Motion

In this case, we are counting bolts, washers, and faulty washers as they pass along the conveyor belt.

3. Counting in a Bunch

In this case, we are counting lollipops that are held together in a bunch.

4. Multiple Parts Counting

In this case, we are counting multiple types of parts at once, such as washers and bolts.
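As a rough illustration of how per-part counts could be derived from an object detection result, the sketch below tallies detections by label. It assumes each detection is a dictionary with a `label` and a confidence score under `value`, which is how bounding boxes are commonly reported by the Edge Impulse Linux Python SDK. The function name `count_parts`, the 0.5 threshold, and the sample detections are illustrative assumptions, not taken from the project code.

```python
from collections import Counter


def count_parts(bounding_boxes, min_confidence=0.5):
    """Count detections per label, skipping boxes below the confidence threshold."""
    return Counter(
        box["label"]
        for box in bounding_boxes
        if box["value"] >= min_confidence
    )


# Hypothetical detections for a single frame (labels and scores are made up).
detections = [
    {"label": "washer", "value": 0.92, "x": 10, "y": 12, "width": 16, "height": 16},
    {"label": "bolt", "value": 0.81, "x": 40, "y": 30, "width": 24, "height": 8},
    {"label": "washer", "value": 0.34, "x": 70, "y": 55, "width": 16, "height": 16},
    {"label": "faulty_washer", "value": 0.77, "x": 20, "y": 80, "width": 16, "height": 16},
]

counts = count_parts(detections)
print(dict(counts))  # {'washer': 1, 'bolt': 1, 'faulty_washer': 1}
```

The low-confidence washer is dropped by the threshold, so raising or lowering `min_confidence` trades missed parts against false counts.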

Software

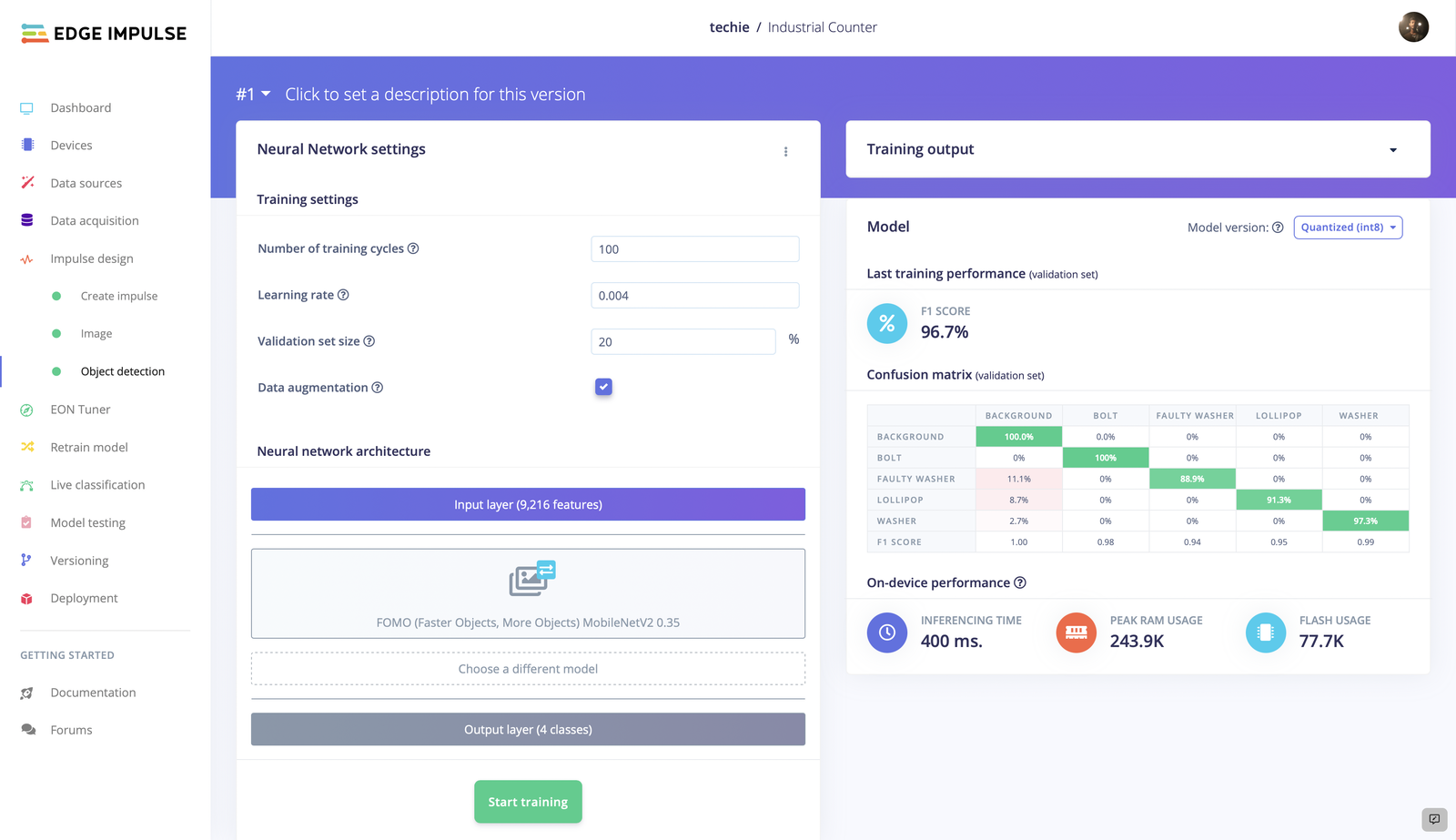

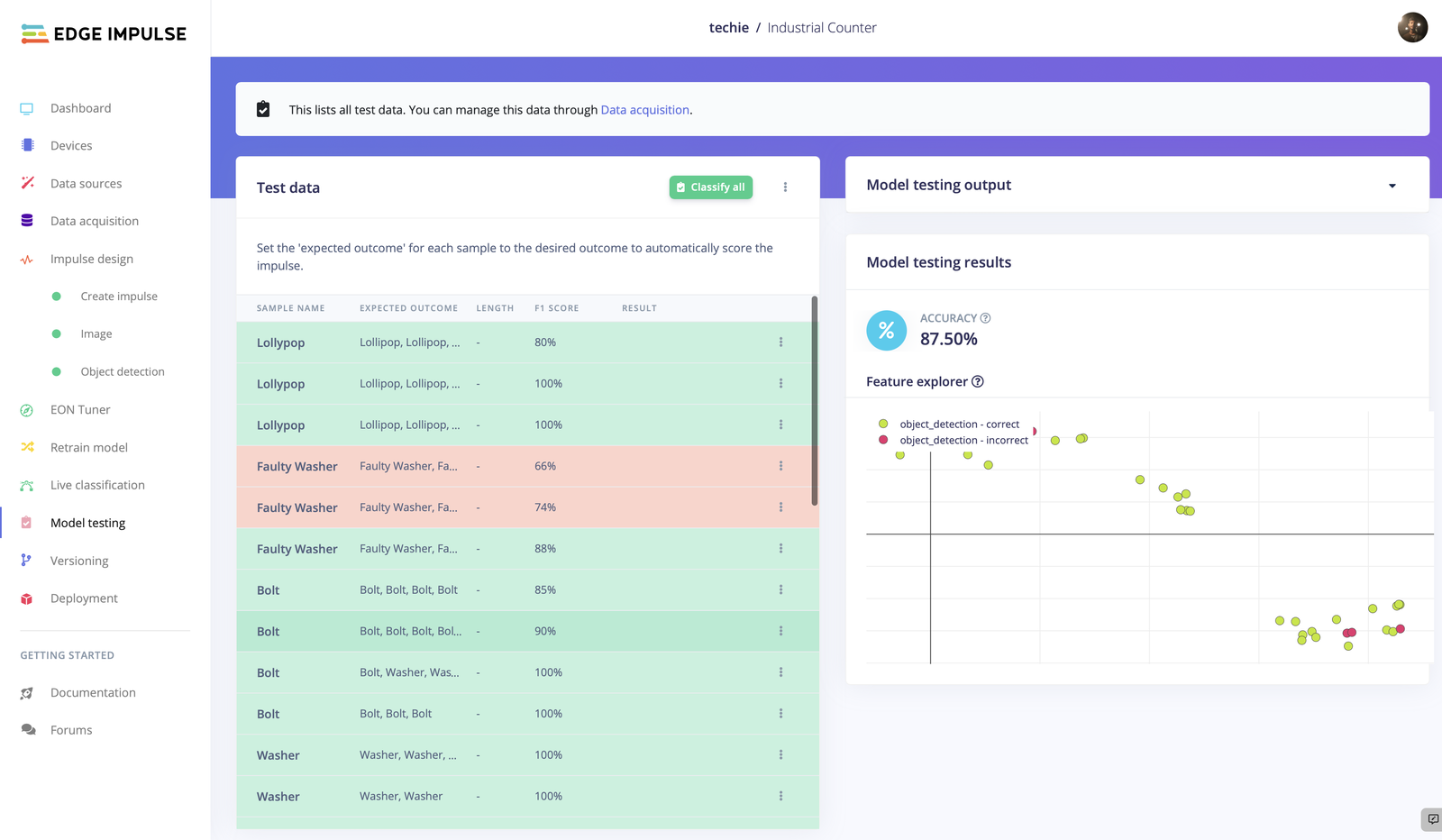

Object Detection Model Training

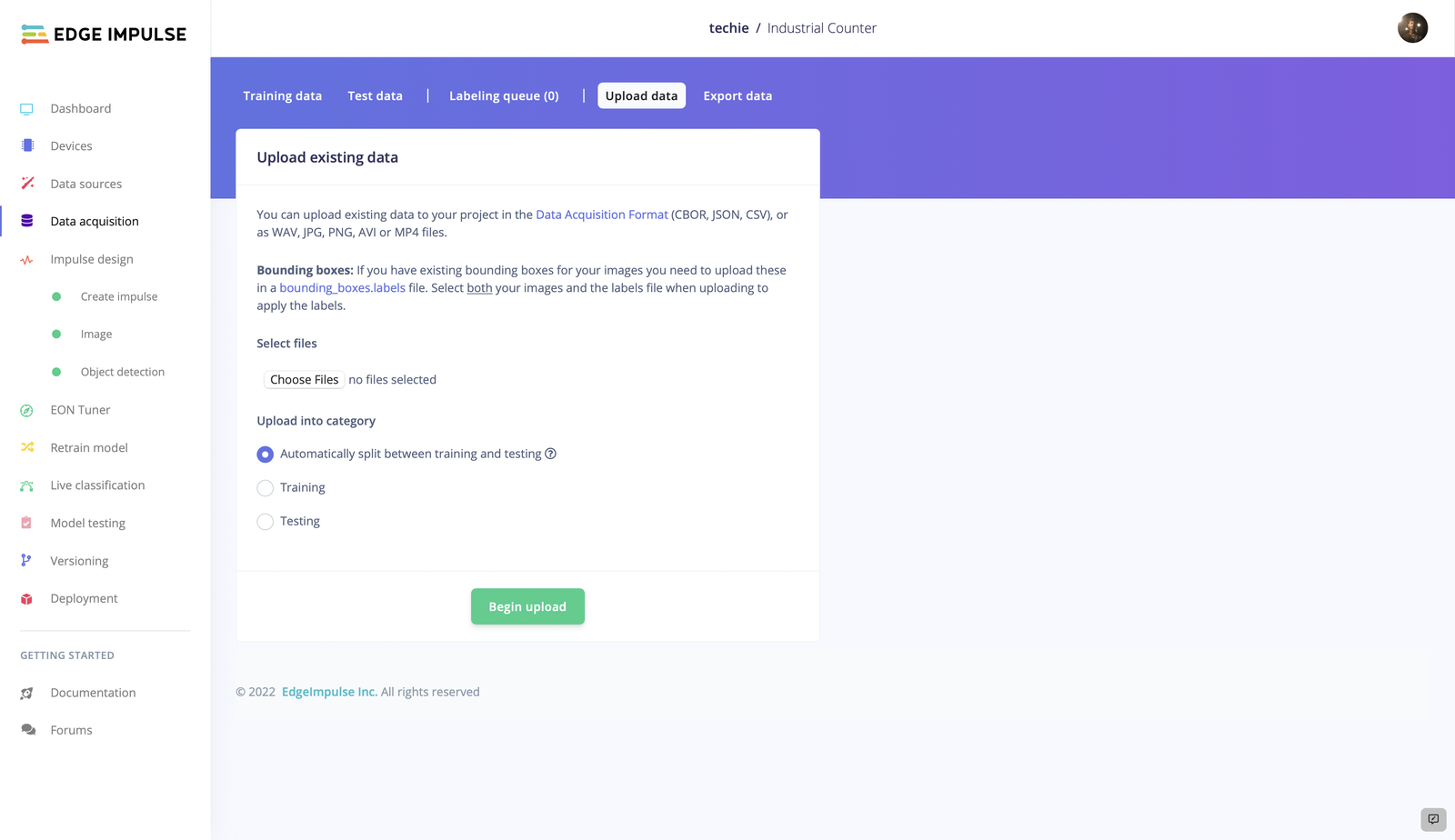

Data Acquisition

Every machine learning project starts with data collection. The quality of the dataset is one of the major factors that influences the performance of the model. Make sure the images cover a wide range of perspectives and zoom levels of the items being counted. For data acquisition, you can capture data from any device or development board, or upload an existing dataset. Since we already had our own dataset, we uploaded it using the Data Acquisition tab.

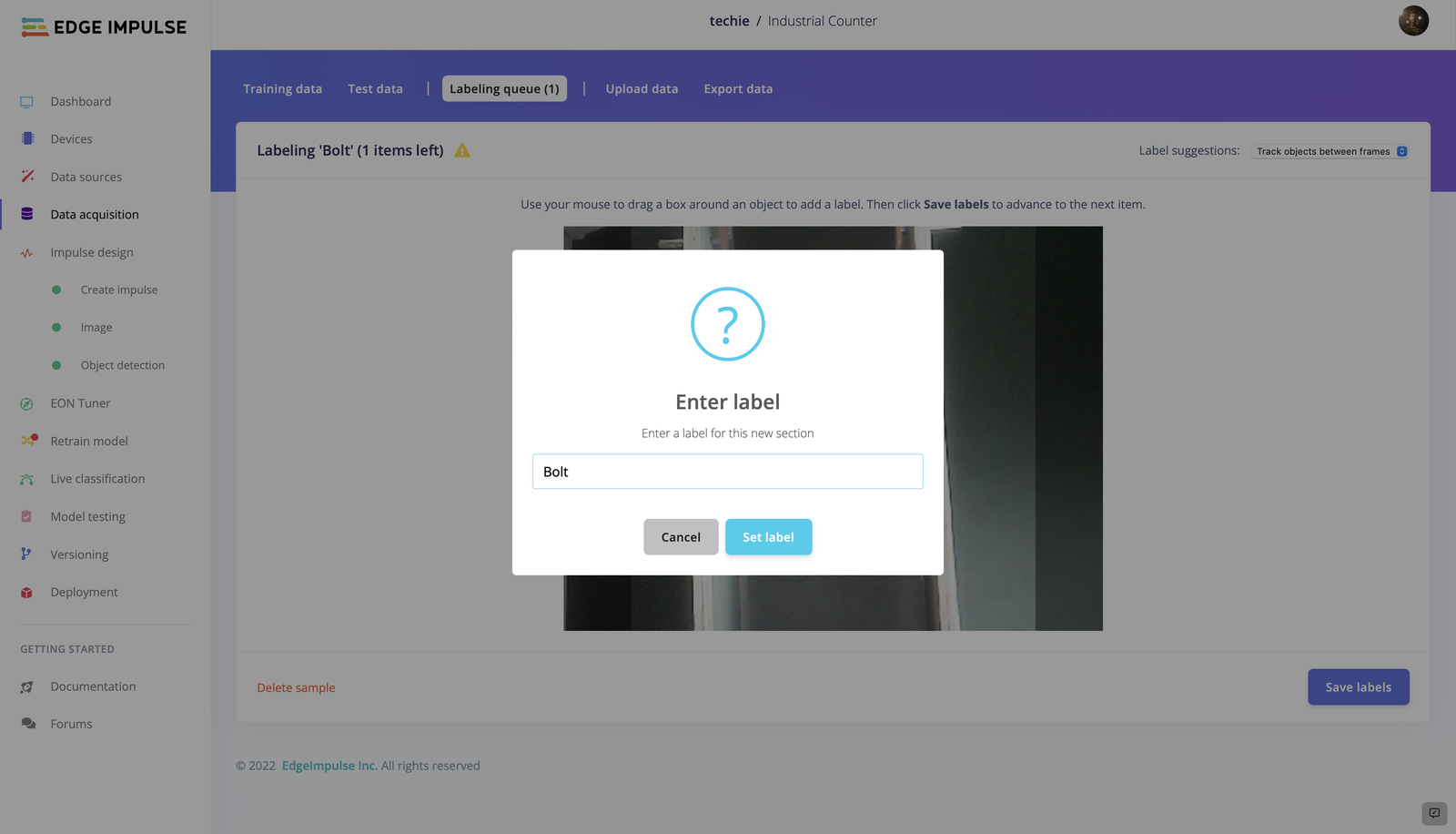

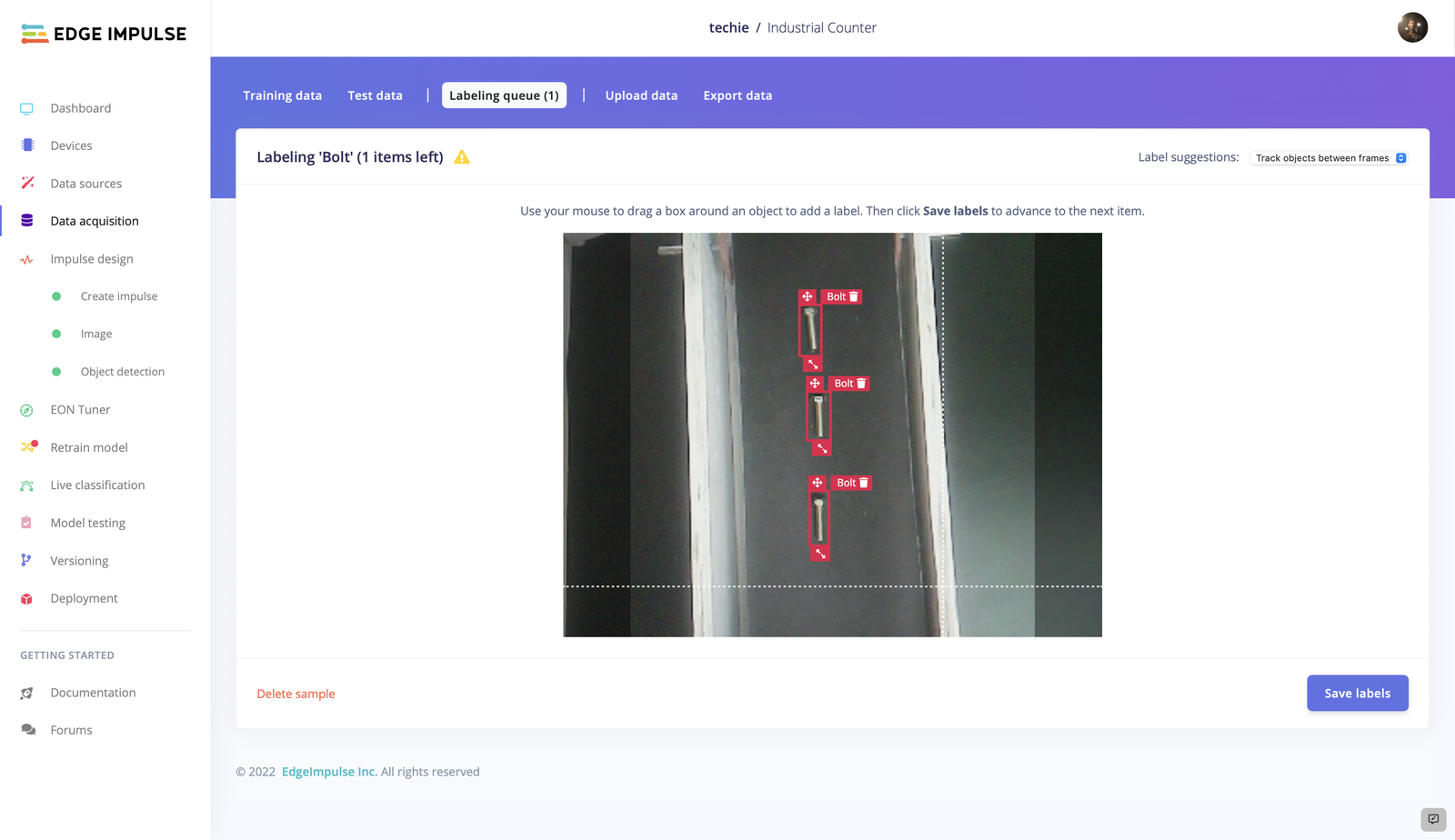

Labeling Data

You can view all of your dataset’s unlabeled data in the labeling queue. Adding a label to an object is as simple as dragging a box around it. To make this a little easier, Edge Impulse tries to automate the procedure by running an object tracking algorithm in the background: if the same object appears in multiple photos, the box is moved for you and you only need to confirm the new box. Drag the boxes, then click Save labels. Repeat this until your entire dataset has been labeled.

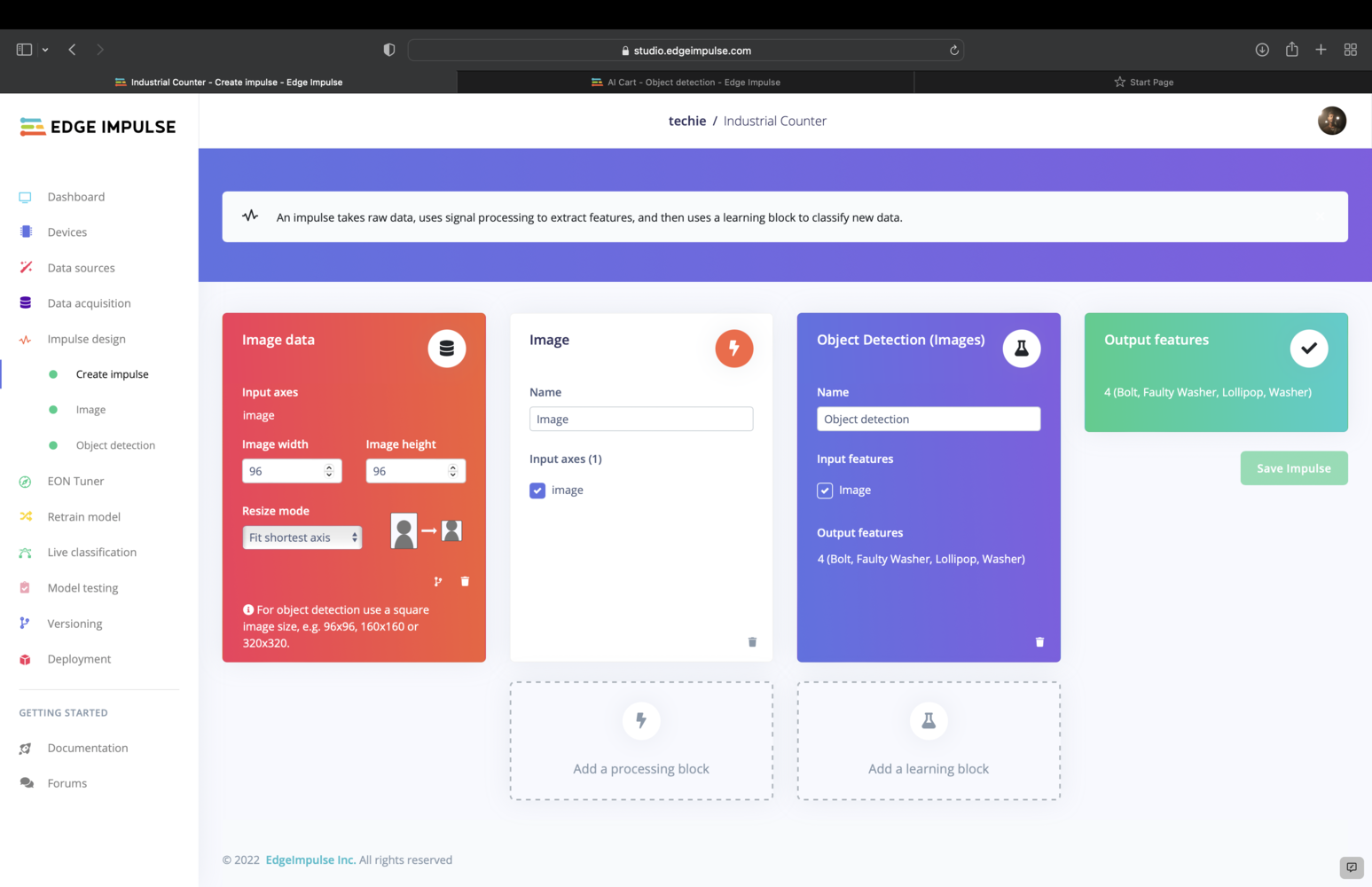

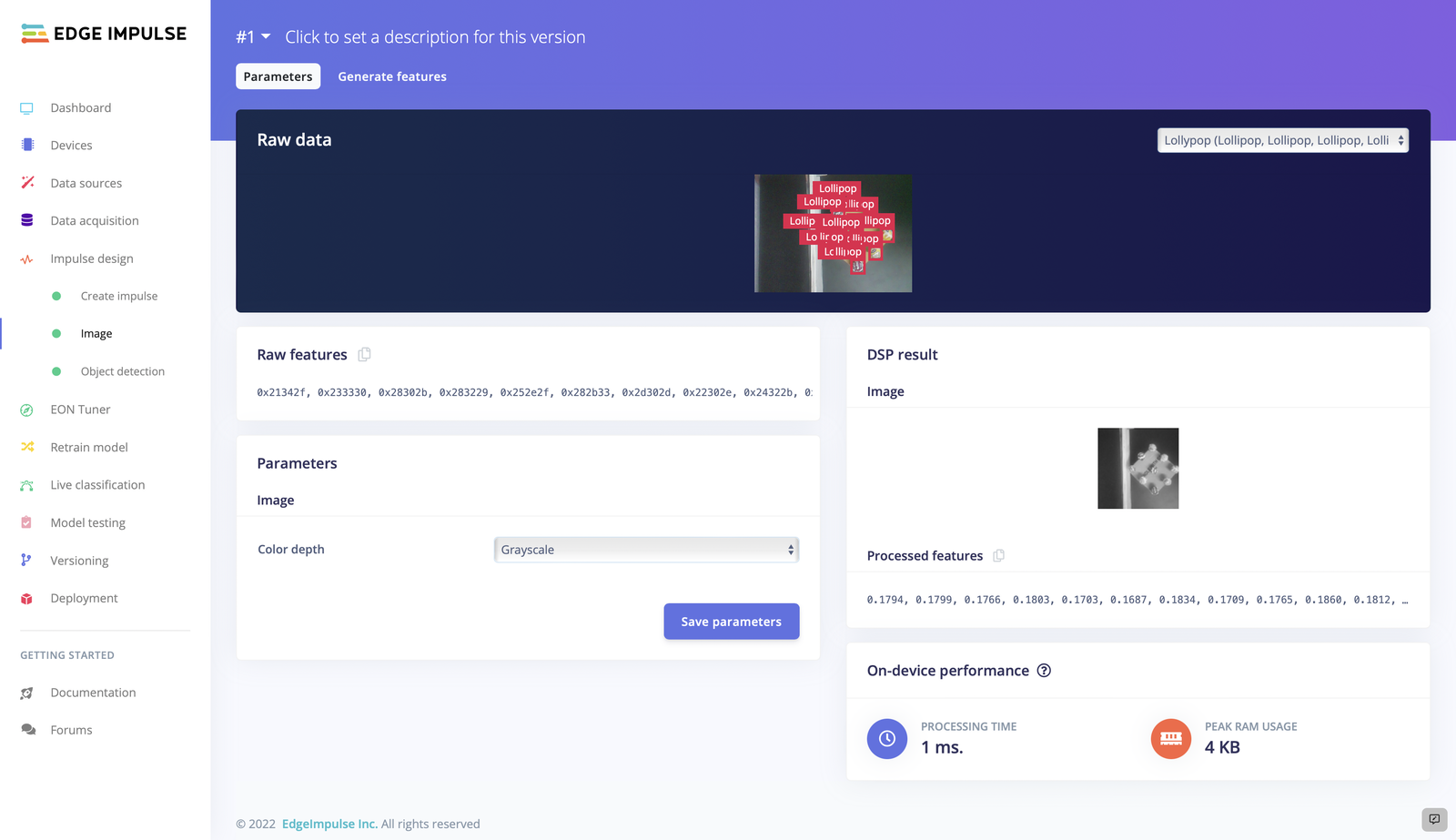

Designing an Impulse

Click Generate features to start the process. This will:

- Resize all the data.

- Apply the processing block on all this data.

- Create a visualization of your complete dataset.

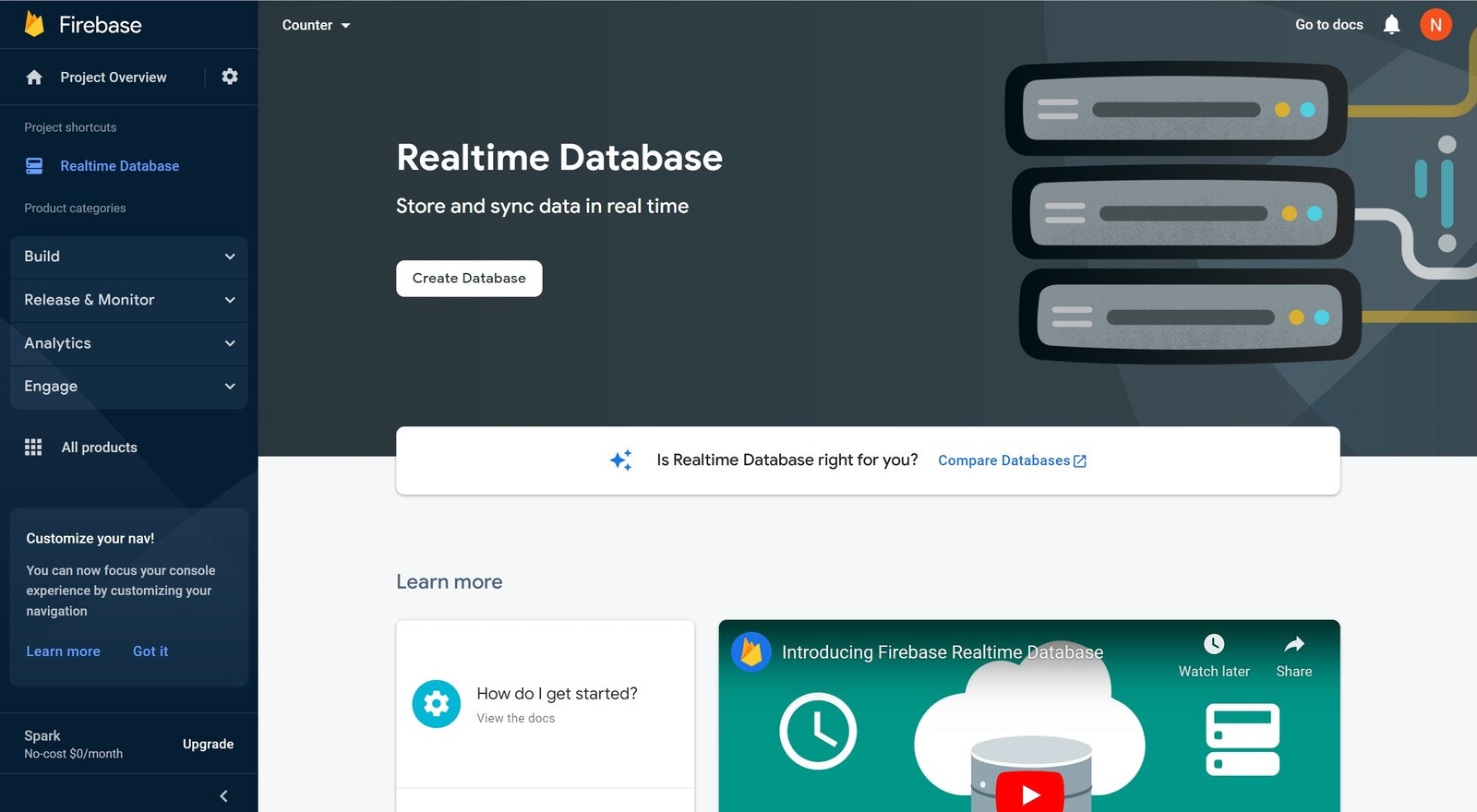

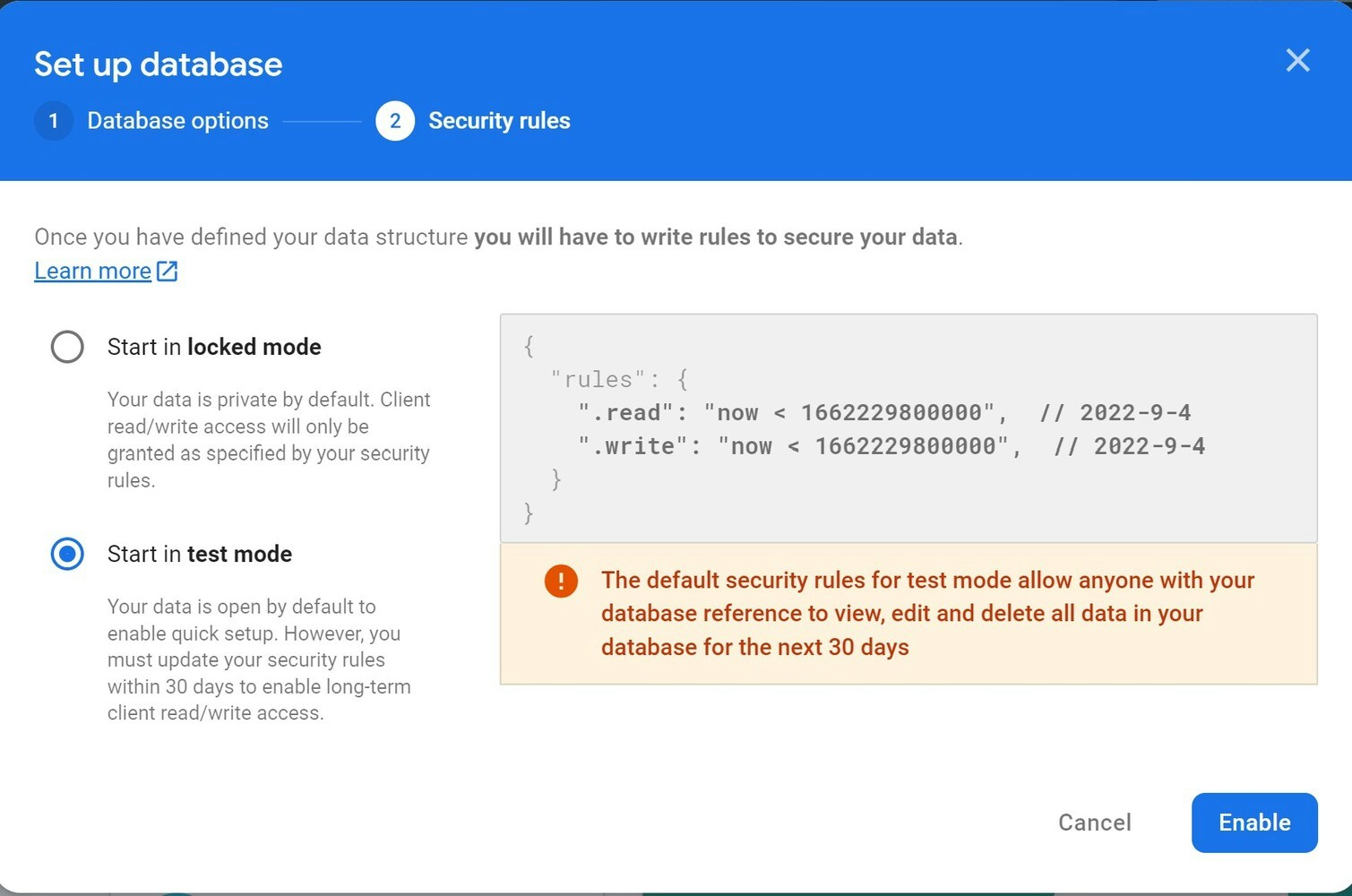

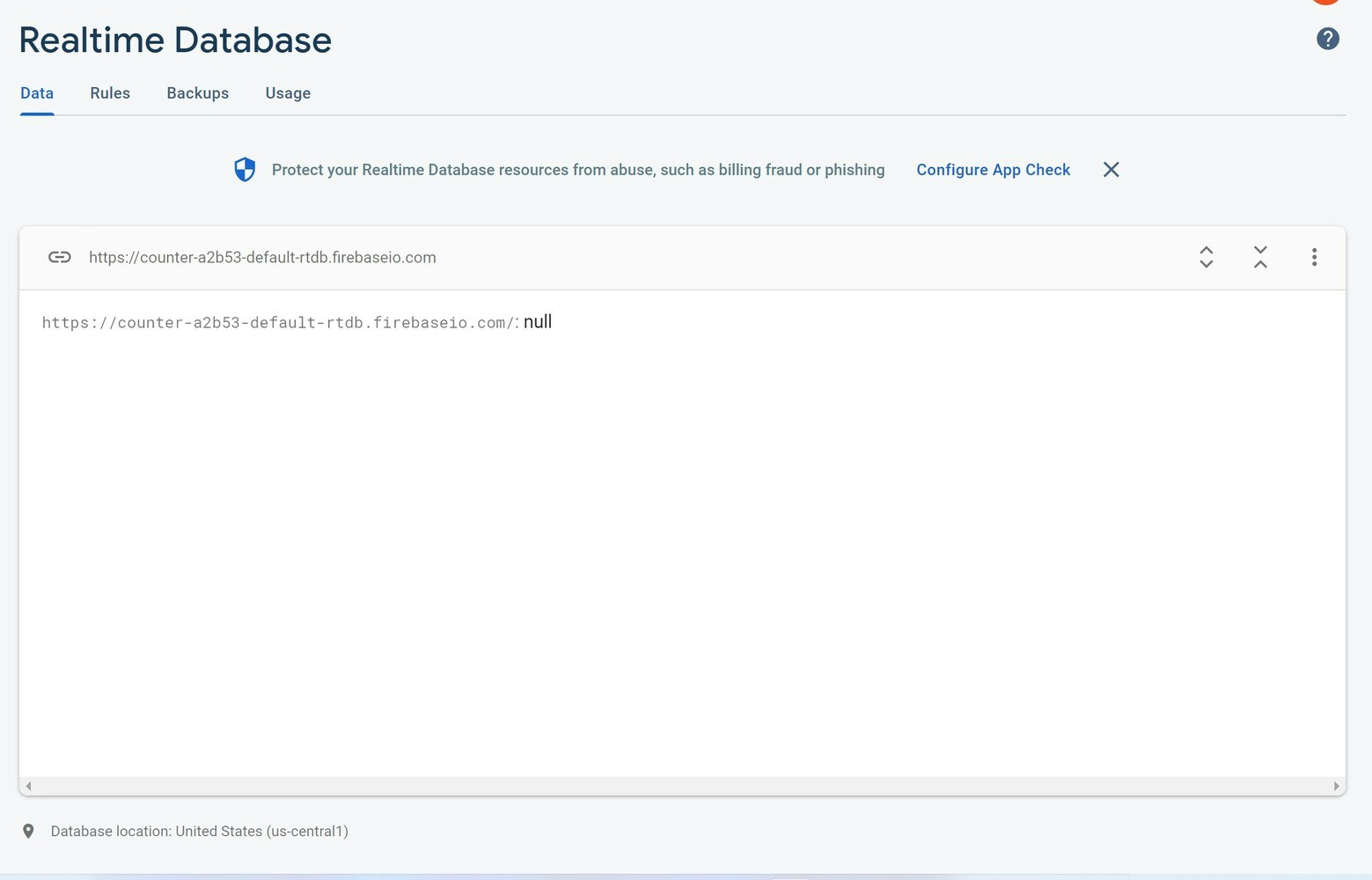

Firebase (set-up)

In our project, we use Firebase, a real-time database, to post and retrieve data instantly so that the displayed count does not lag noticeably behind the counting process. We use the Pyrebase library, which is a Python wrapper for Firebase. To install Pyrebase, run the following command:

`pip install pyrebase`

Pyrebase is written for Python 3 and may not work correctly with Python 2.

First, we created a project in Firebase:

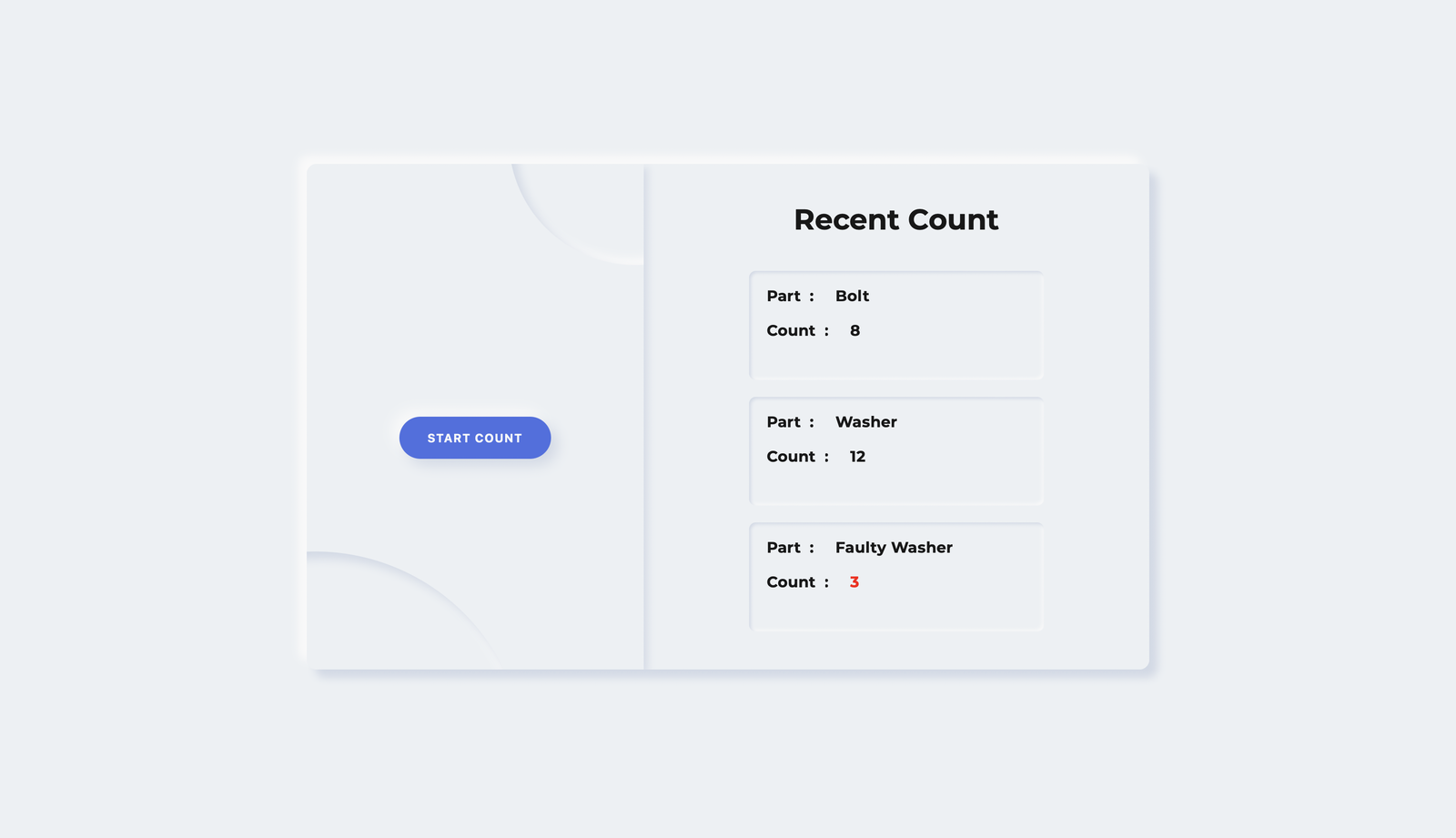

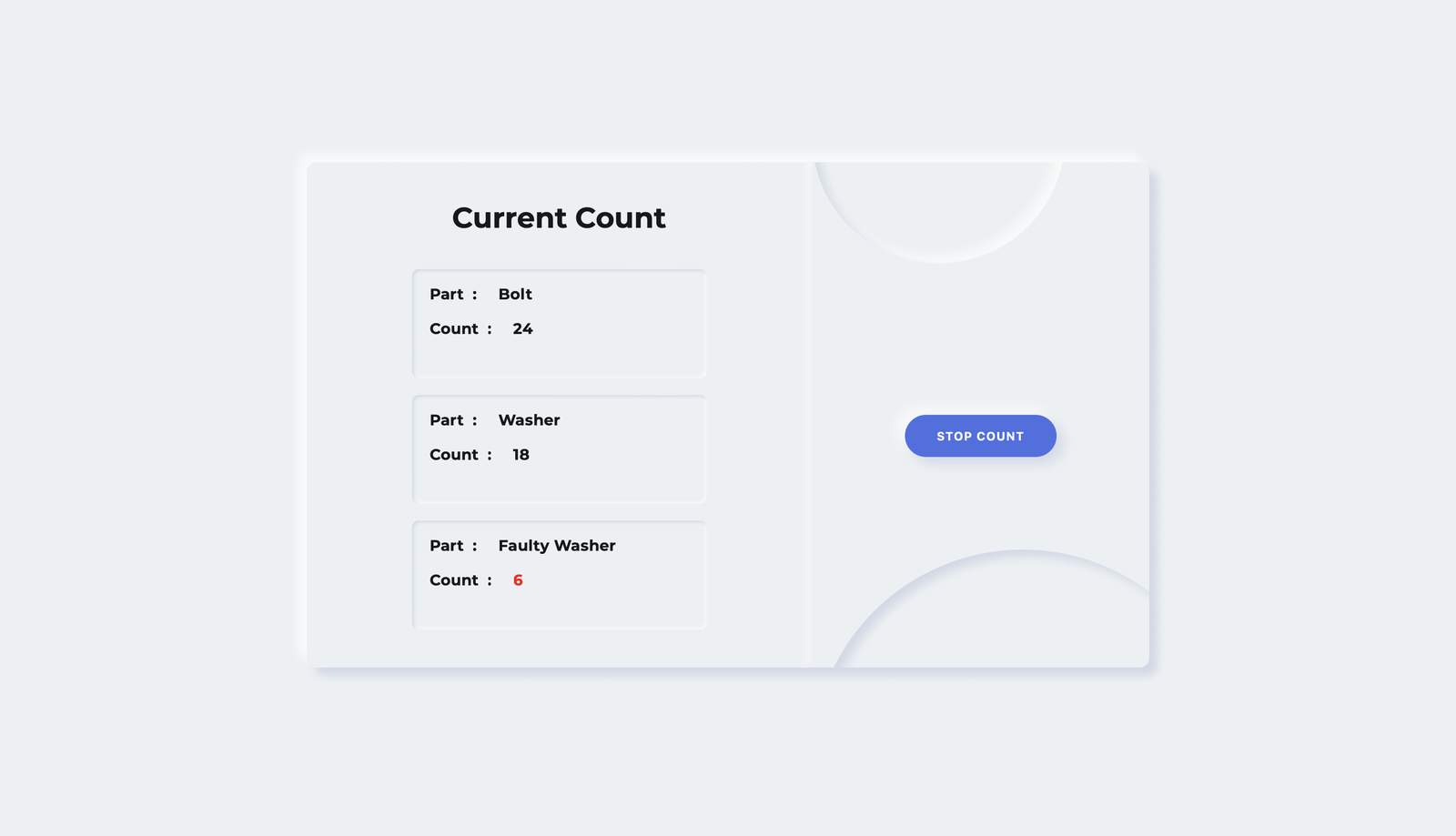
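Once the project exists, posting a count from Python might look roughly like the sketch below. It uses Pyrebase's `initialize_app`, `database()`, `child()` and `set()` calls. The configuration values are placeholders, and the `counter` path, the `running` flag, and the helper names are assumptions made for illustration rather than the structure used in the project repository.

```python
import time


def build_count_payload(counts, running):
    """Package per-label counts with a flag saying whether counting is in progress."""
    return {
        "counts": dict(counts),
        "running": bool(running),
        "updated_at": int(time.time()),
    }


def publish_counts(db, counts, running, path="counter"):
    """Overwrite the node at `path` (a hypothetical location) with the latest counts.

    `db` is expected to behave like the handle returned by Pyrebase's
    `firebase.database()`.
    """
    payload = build_count_payload(counts, running)
    db.child(path).set(payload)
    return payload


def connect(config):
    """Create a Pyrebase database handle; Pyrebase is imported only when this is called."""
    import pyrebase

    return pyrebase.initialize_app(config).database()


# Placeholder values; the real ones come from the Firebase project settings.
FIREBASE_CONFIG = {
    "apiKey": "YOUR_API_KEY",
    "authDomain": "YOUR_PROJECT.firebaseapp.com",
    "databaseURL": "https://YOUR_PROJECT.firebaseio.com",
    "storageBucket": "YOUR_PROJECT.appspot.com",
}
```

With real credentials filled in, `publish_counts(connect(FIREBASE_CONFIG), {"washer": 3, "bolt": 2}, running=True)` would write the current counts. A flag such as `running` is one way a front end could tell an ongoing count from a finished one.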

Website

A webpage built with HTML, CSS and JS displays the count in real time. Data updated in Firebase is reflected on the webpage as soon as it changes. The webpage shows Recent Count when the counting process is halted and Current Count while counting is in progress.

Code

The entire code and assets are provided in the GitHub repository.

Hardware

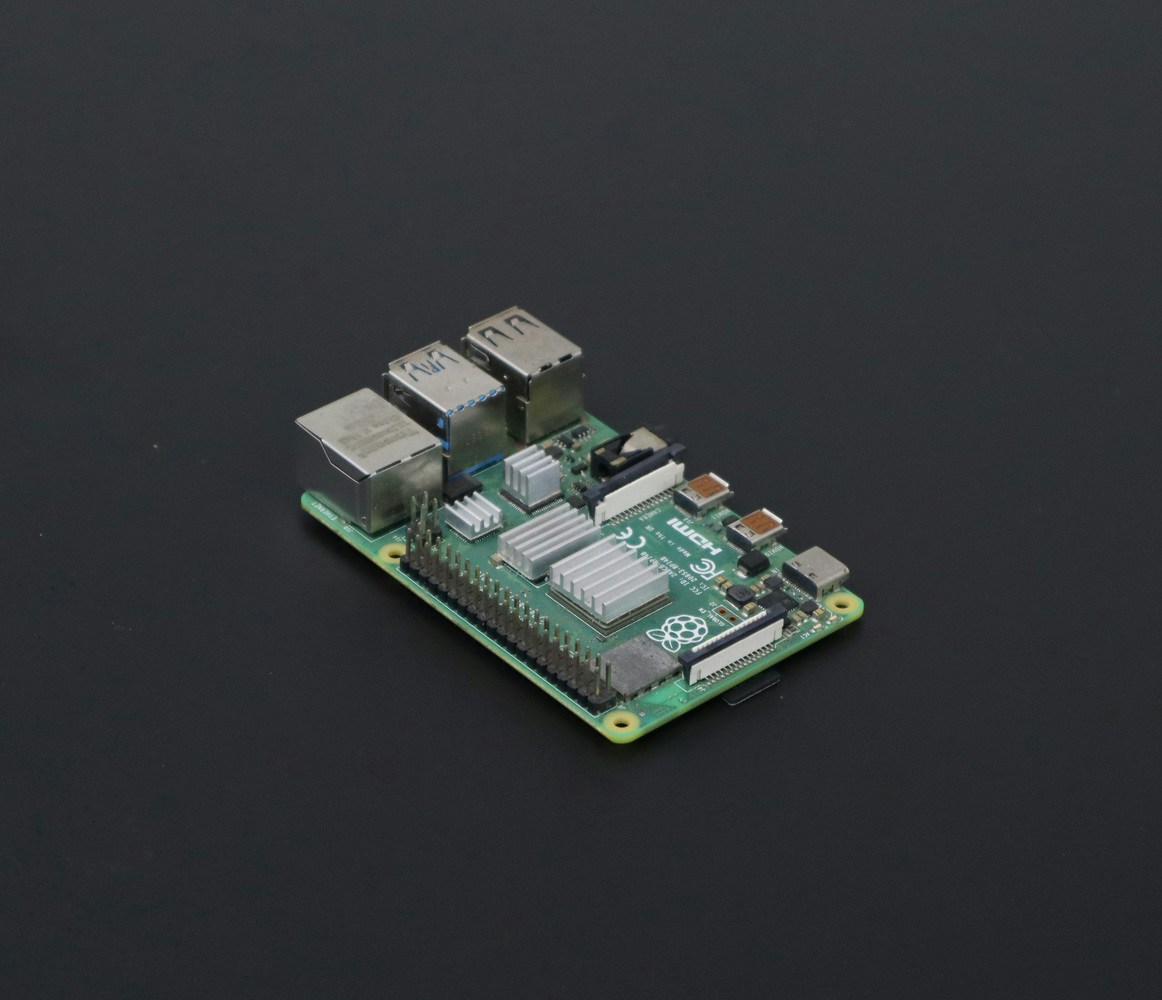

Raspberry Pi 4B

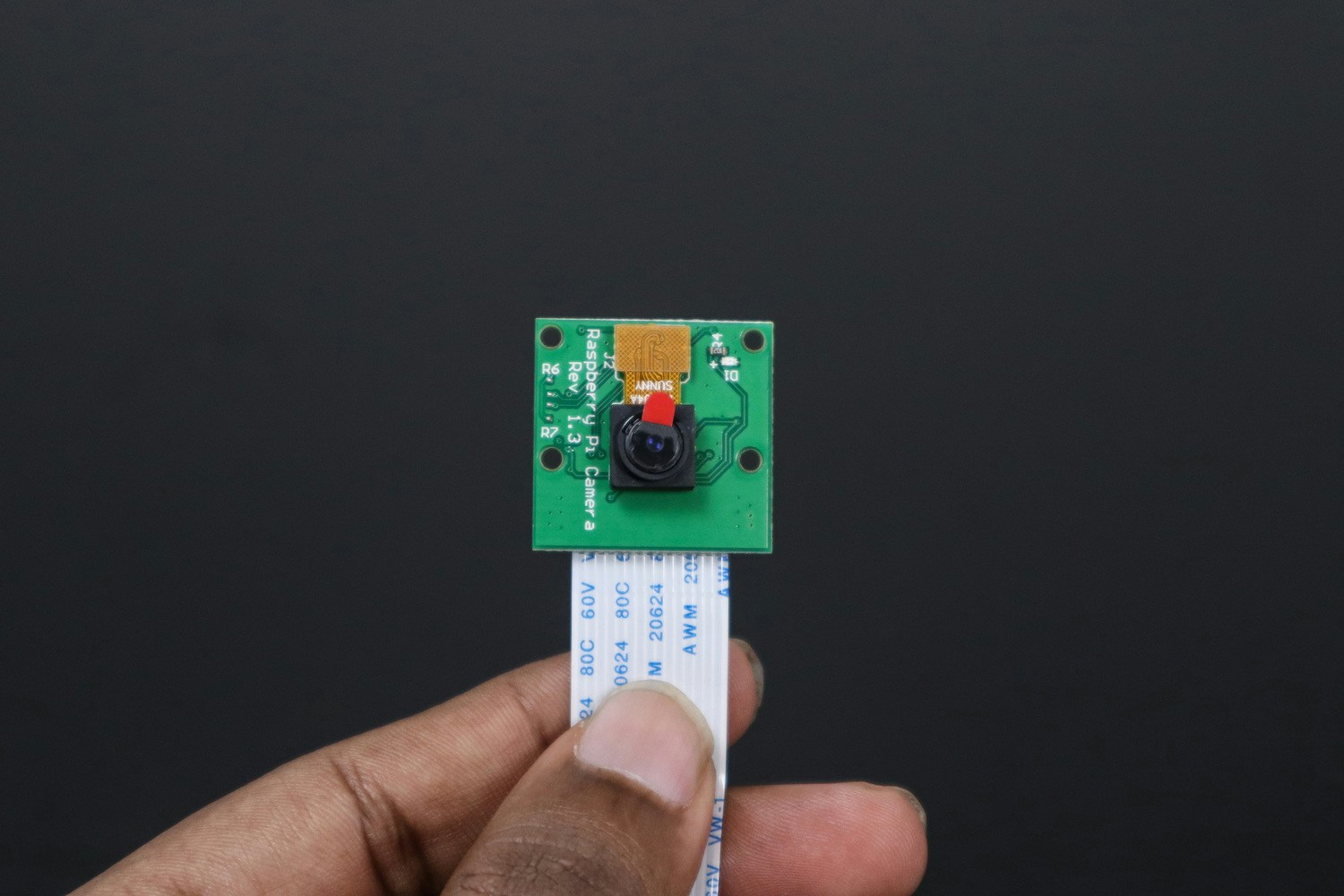

Camera Module

Power Adapter