Created By: Shebin Jose Jacob

Public Project Link: https://studio.edgeimpulse.com/public/156676/latest


Intro

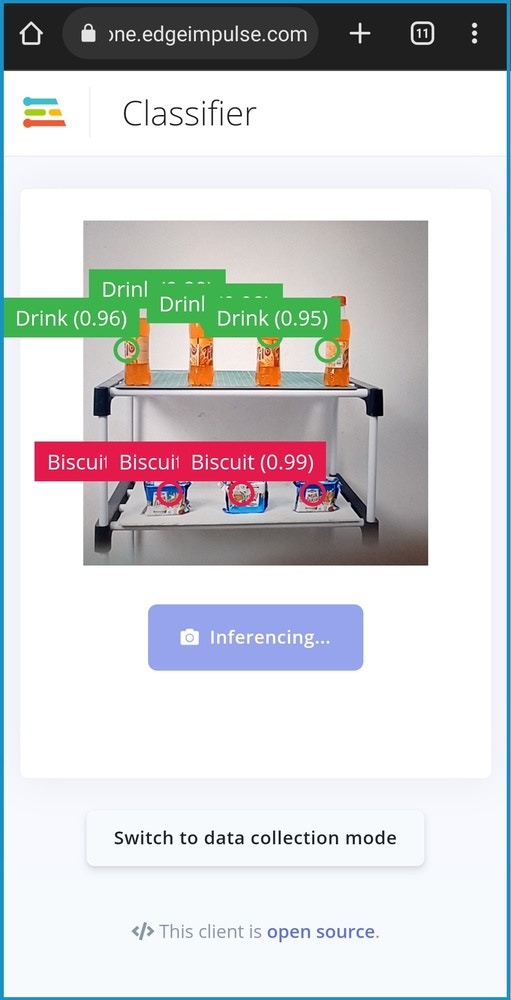

Products that are out of stock or misplaced on the shelves can cost a retail store sales. Staff usually perform visual audits to find out-of-stock and misplaced products. In this proof-of-concept, we automate that visual inspection by having computer vision act as another set of eyes watching over every shelf in the store. The Edge Impulse FOMO model that we train identifies and counts the products on a shelf. When the count for a product falls below a threshold, the application alerts the staff immediately so the item can be restocked. AI-powered inventory control also makes it easier for managers to track product trends and order new stock to meet future demand.

Advantages

- Real-time detection and counting of products in the store.

- Immediate alerts when stock is running low.

- The system can be extended to record stock-in and stock-out times, which helps managers track product trends.

- Customer behavior can be analysed to adjust how products are arranged in the store.

- Fewer staff are needed to manage the store.

- With less staff interference, customers have more room to explore.

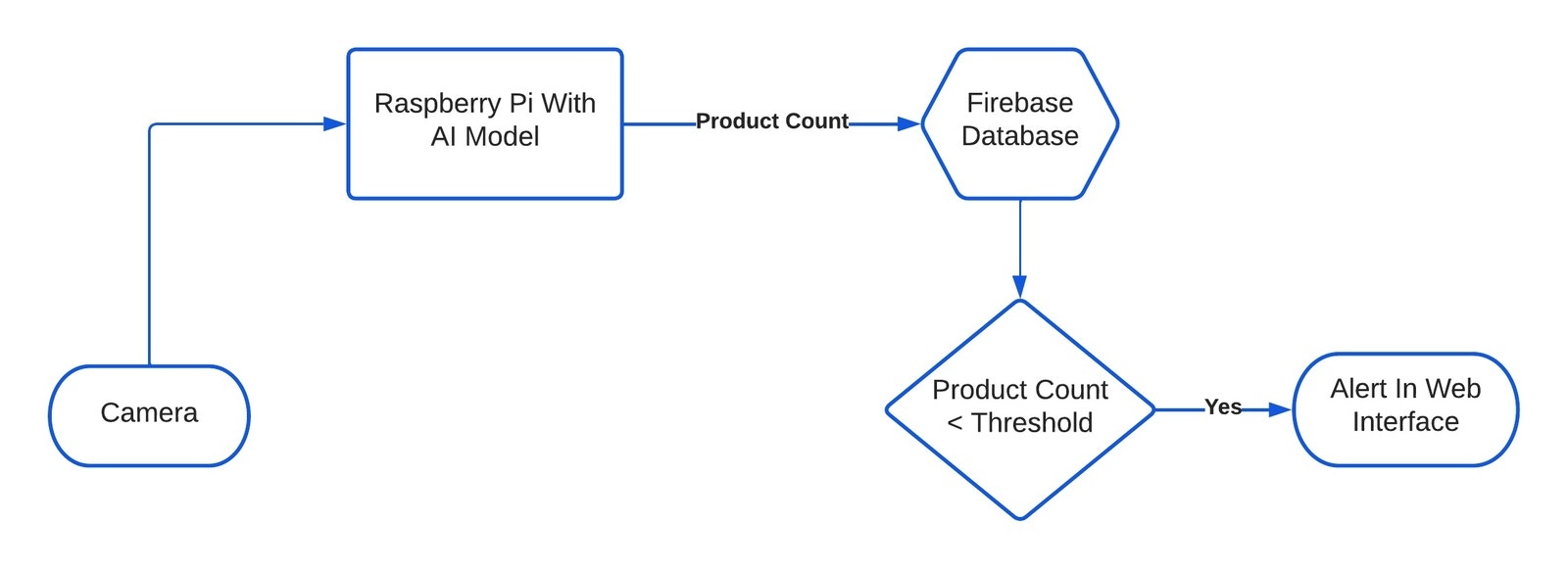

How Does It Work?

The key component of the system is a smart camera: a Raspberry Pi 4 with a compatible camera module, running an object detection model. The model counts the products on the shelf, and when the count falls below the threshold, an alert is raised in the web interface.
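The counting and alerting step described above can be sketched in a few lines of Python. This is a minimal sketch, not the project's actual code. It assumes each detection is a dict with `label` and `value` (confidence) keys, which is the shape the Edge Impulse Linux Python SDK reports for bounding boxes. The function names, the 0.5 confidence cutoff, the product labels, and the threshold values are all hypothetical.

```python
from collections import Counter


def count_products(bounding_boxes, min_confidence=0.5):
    """Count detections per label, ignoring low-confidence ones."""
    return Counter(
        box["label"] for box in bounding_boxes if box["value"] >= min_confidence
    )


def low_stock(counts, thresholds):
    """Return the labels whose count is below their minimum, sorted by name."""
    return sorted(
        label
        for label, minimum in thresholds.items()
        if counts.get(label, 0) < minimum
    )


# Hypothetical detections for a single camera frame.
detections = [
    {"label": "soda", "value": 0.91, "x": 8, "y": 16, "width": 8, "height": 8},
    {"label": "soda", "value": 0.84, "x": 24, "y": 16, "width": 8, "height": 8},
    {"label": "chips", "value": 0.77, "x": 48, "y": 40, "width": 8, "height": 8},
    {"label": "chips", "value": 0.32, "x": 64, "y": 40, "width": 8, "height": 8},
]

# Hypothetical minimum stock level per product.
thresholds = {"soda": 2, "chips": 2, "juice": 1}

counts = count_products(detections)
alerts = low_stock(counts, thresholds)
print(dict(counts), alerts)
```

Iterating over the thresholds rather than the detections means a product that has disappeared from the shelf entirely (here `juice`) still triggers an alert, even though the model reports no detection for it.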

Hardware Requirements

- Raspberry Pi 4B

- 5MP Camera Module

- Compatible Power Supply

Software Requirements

- Edge Impulse Python SDK

- Python 3.x

- HTML, CSS, JS

Hardware Setup

The current hardware setup is very minimal. The setup consists of a 5 MP camera module connected to a Raspberry Pi 4B using a 15-pin ribbon cable.

Software Setup

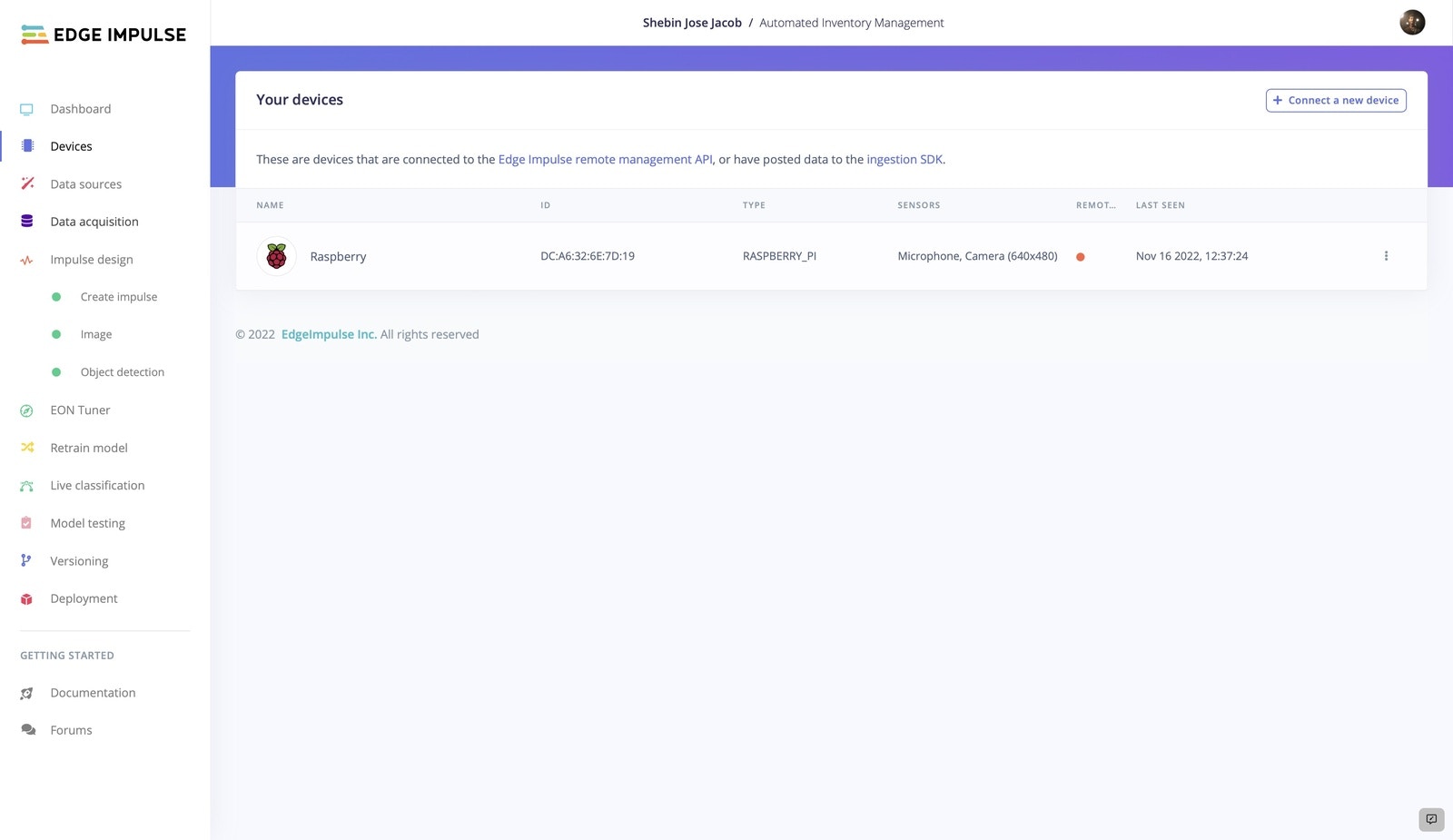

If you’re new here and haven’t set up the Raspberry Pi for Edge Impulse yet, follow this quick tutorial to connect the device to the Edge Impulse dashboard. After successfully connecting the device, you should see something like this in the Devices tab.

Build The TinyML Model

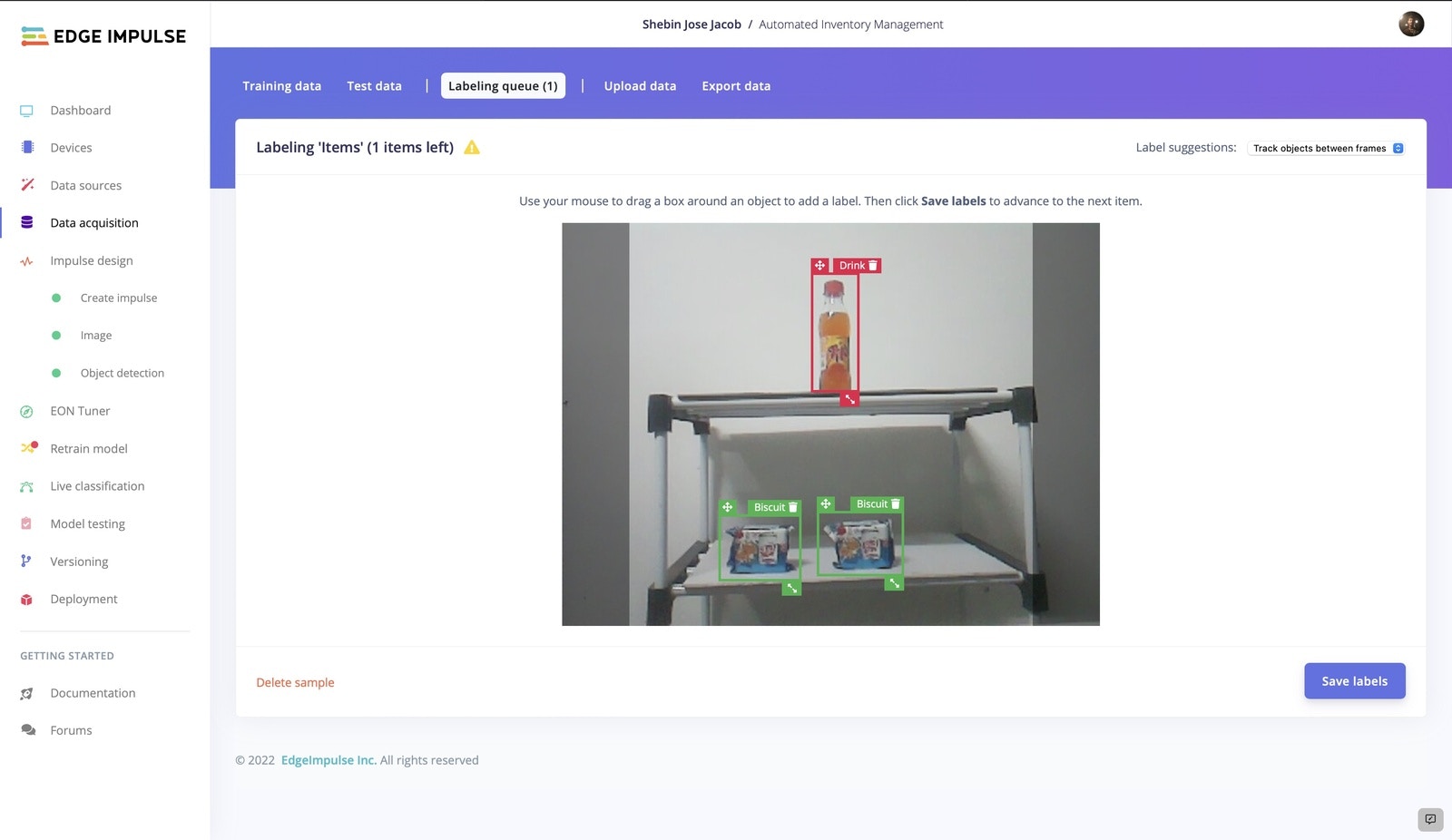

1. Data Acquisition And Labeling

With the hardware and software set up, it’s time to build the object detection model. Let’s start by collecting some data. There are two ways to do this: collect data directly from the connected device, or upload existing data using the Uploader. In this project, we use the former.

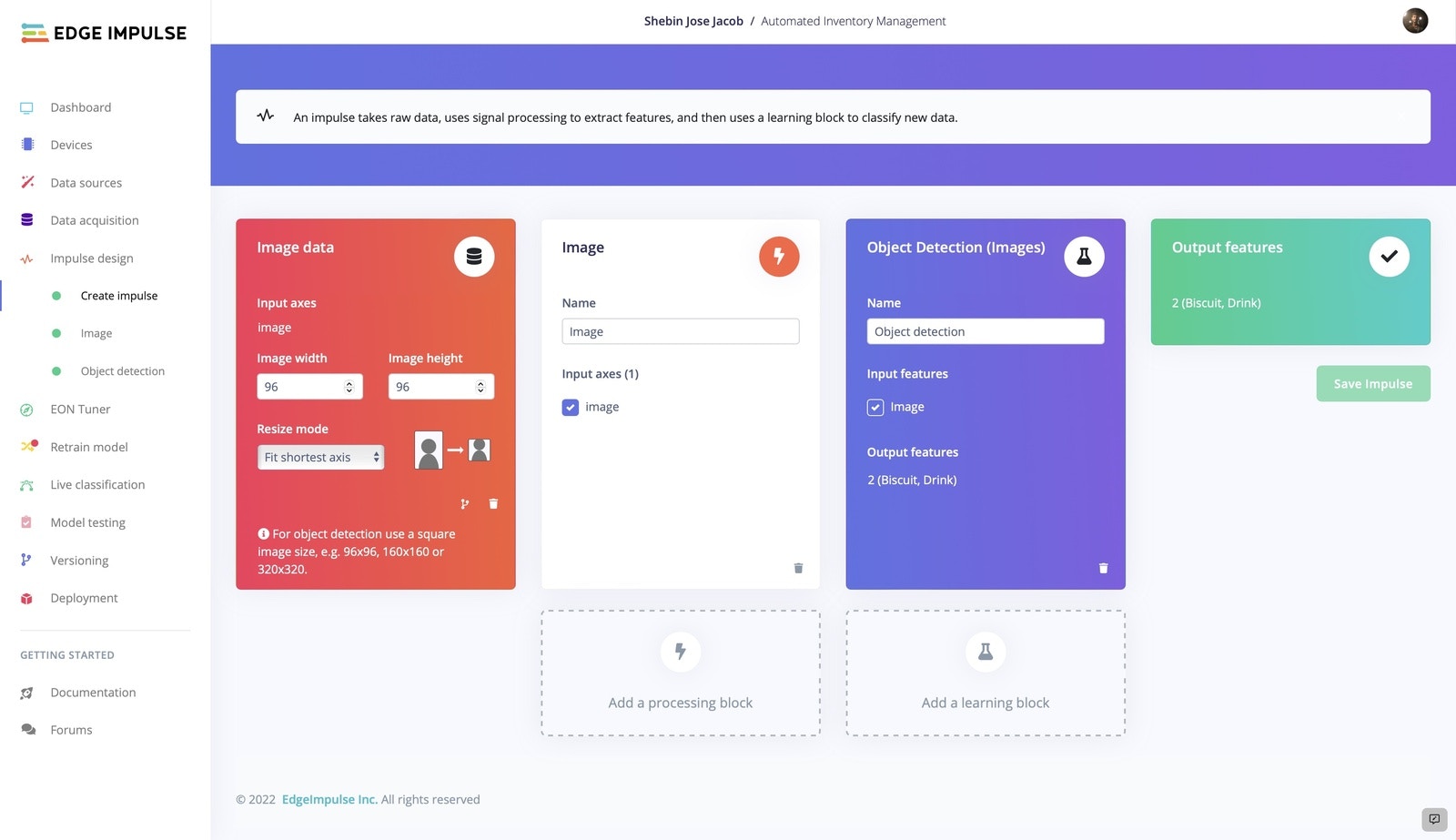

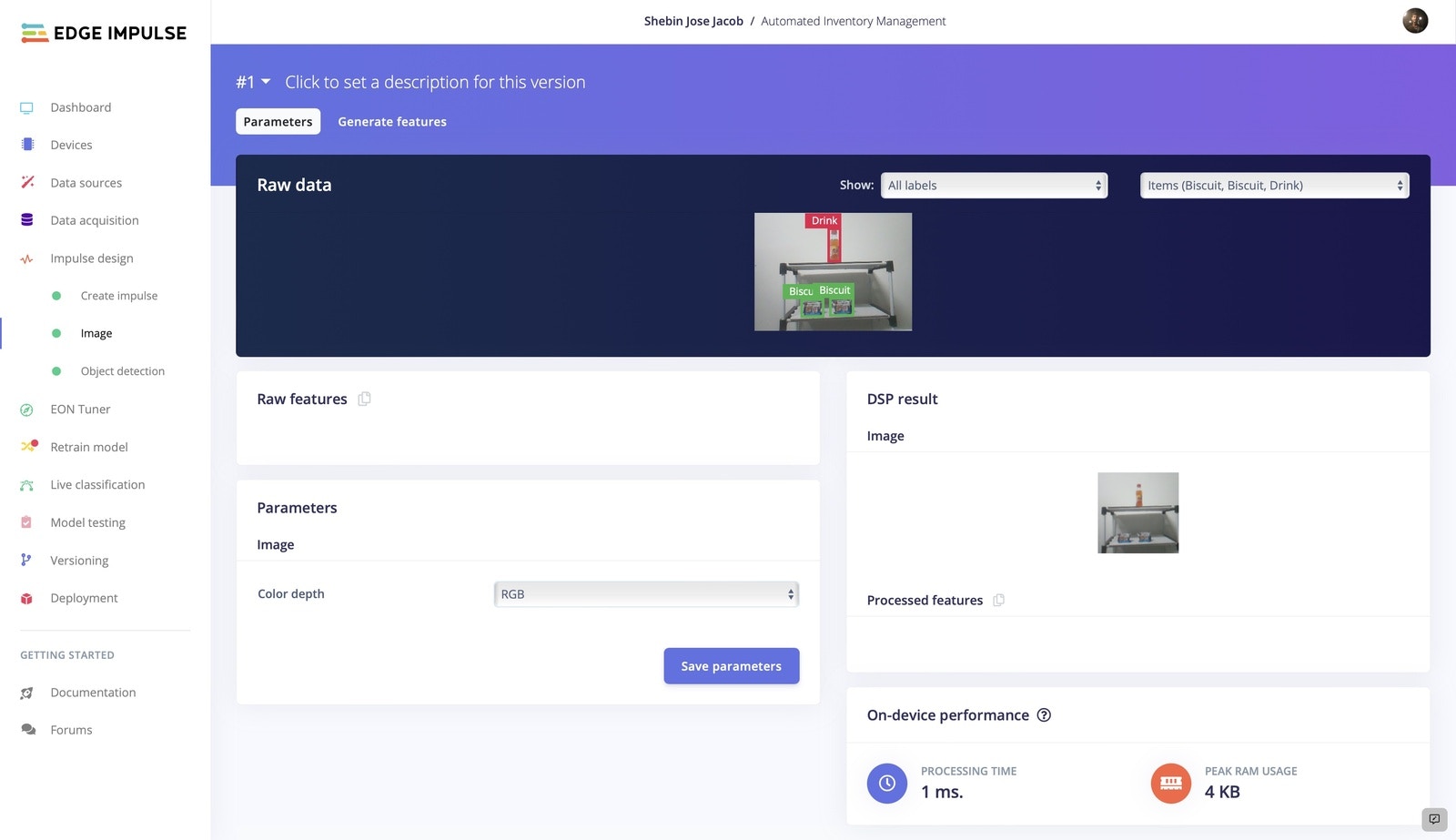

2. Impulse Design

Because our use case is real-time detection and counting, we need a fast model with reliable accuracy, so we use FOMO, which produces a lightweight, fast model. We set the image dimensions to 96x96 px, a size FOMO works well with, and keep Resize Mode set to Fit shortest axis. Then add an Image processing block and an Object Detection (Images) learning block to the impulse.
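To make the resize setting concrete, the arithmetic behind a fit-shortest-axis resize can be sketched as below. This is an illustration only, assuming the image is scaled until its shortest side equals the target and the longer side is then center-cropped. The function name and the 640x480 frame size are hypothetical, and Edge Impulse Studio performs this step for you. The last line reflects that FOMO outputs a grid at 1/8 of the input resolution.

```python
def fit_shortest_axis(width, height, target=96):
    """Return (scaled_size, crop_box) for a square target x target output.

    The image is scaled so its shortest side equals `target`, then the
    longer side is center-cropped (assumed behavior for this sketch).
    """
    scale = target / min(width, height)
    scaled_w = round(width * scale)
    scaled_h = round(height * scale)
    left = (scaled_w - target) // 2
    top = (scaled_h - target) // 2
    return (scaled_w, scaled_h), (left, top, left + target, top + target)


# Hypothetical 640x480 camera frame.
scaled, crop = fit_shortest_axis(640, 480)

# FOMO predicts on a grid 8x coarser than the input image.
grid_size = 96 // 8
print(scaled, crop, grid_size)
```

For a 96x96 input, the model therefore reports object locations on a 12x12 grid of cells, which is one reason products that sit very close together on a shelf can be hard to separate.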

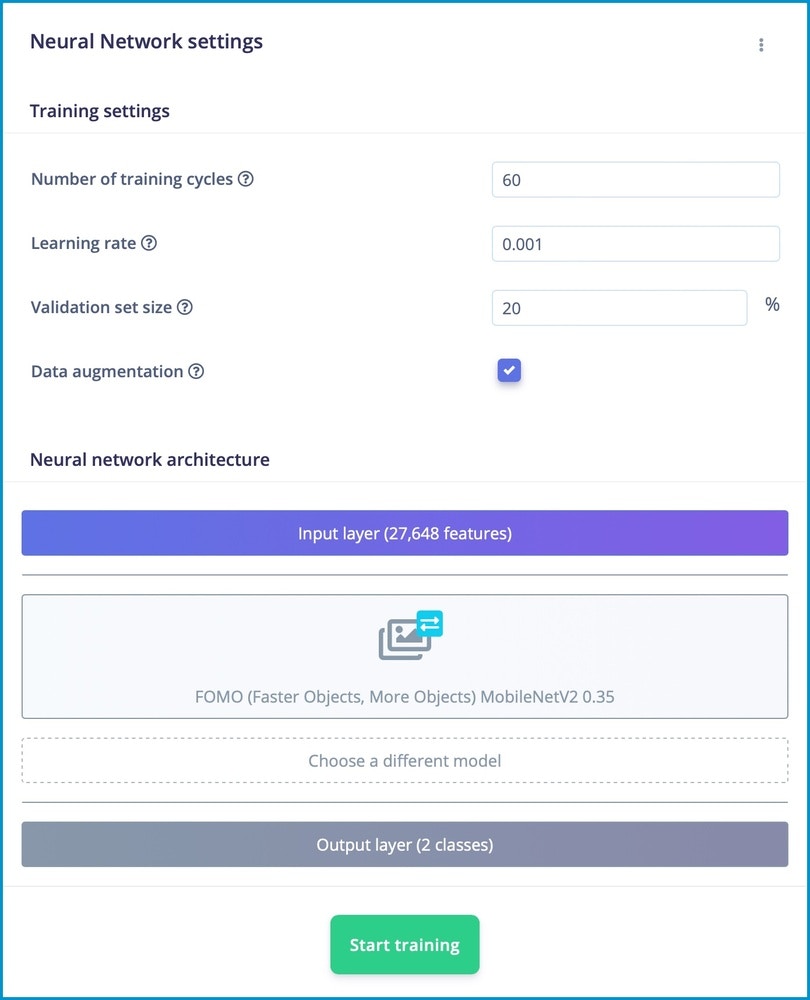

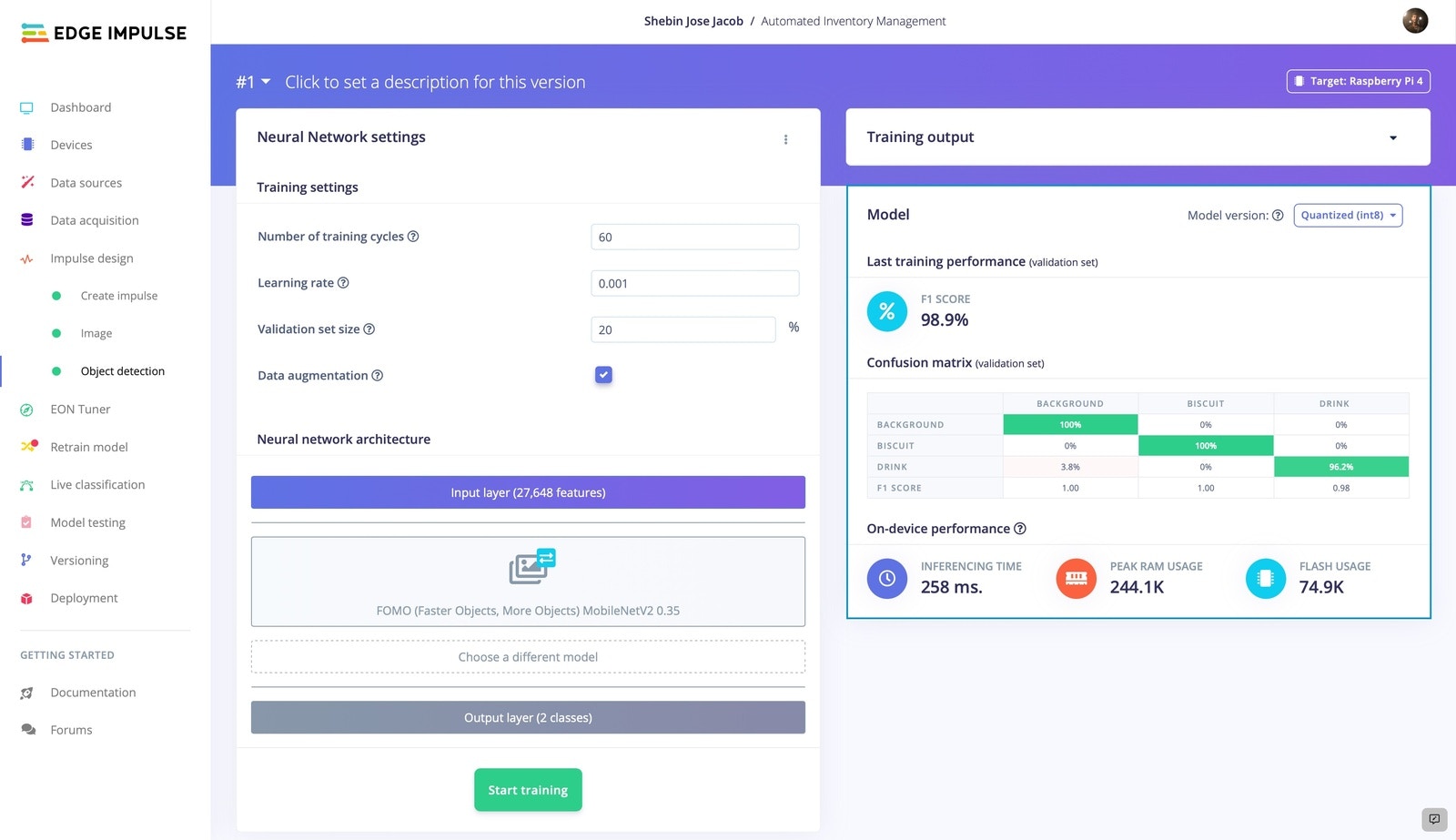

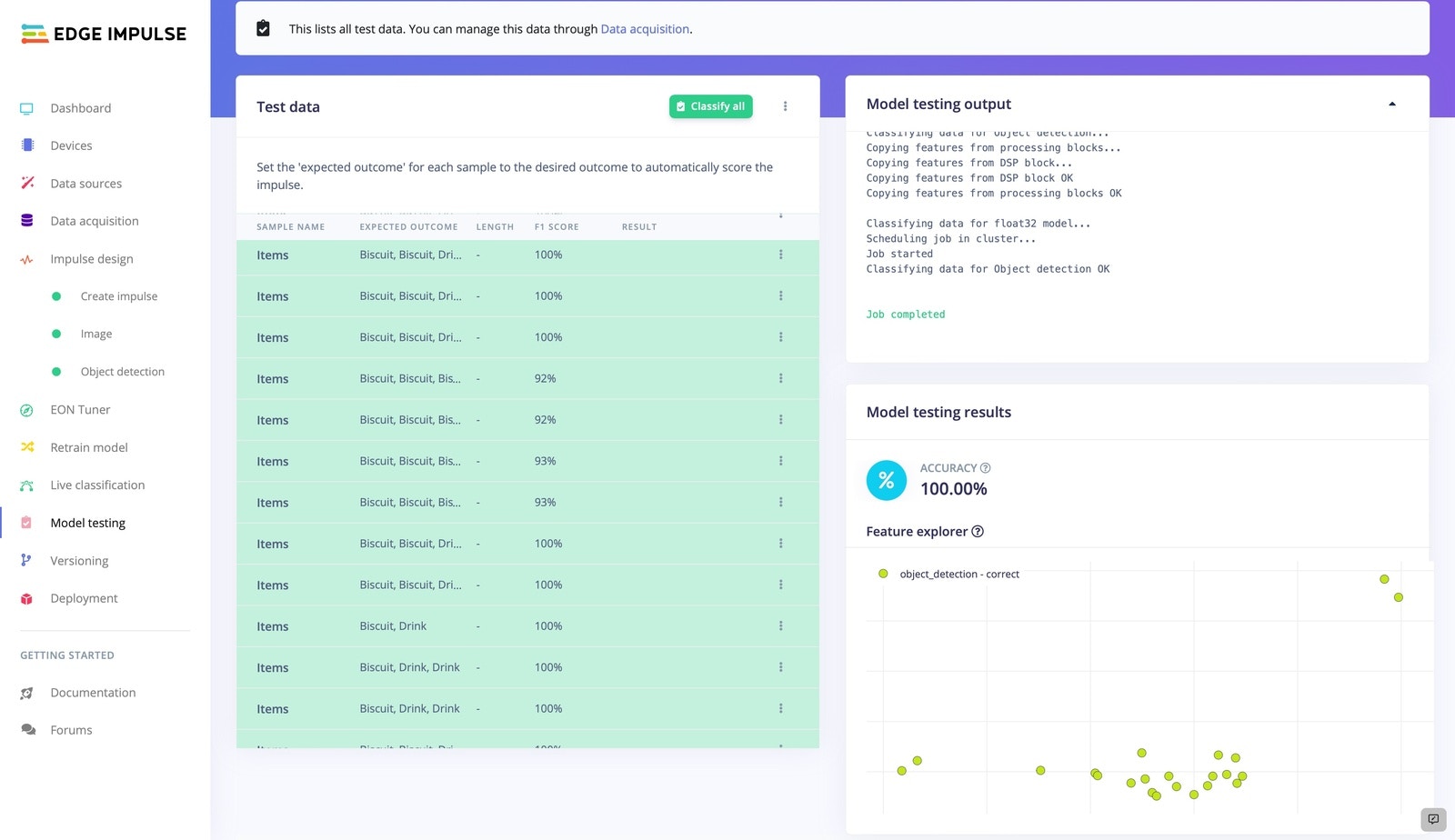

3. Model Training

Now that we have our impulse designed, let’s proceed to train the model. The settings we employed for model training are depicted in the picture. You can play with the model training settings so that the trained model exhibits a higher level of accuracy, but be cautious of overfitting.

Firebase Realtime Database

For this project, we use the Firebase Realtime Database, which lets us upload and retrieve data rapidly. We access it through the Pyrebase package, a Python wrapper for Firebase.

Install Pyrebase with pip, then set up the database in the Firebase console:

- Create a project.

- Then navigate to the Build section and create a Realtime Database.

- Start in test mode, so we can update the data without any authentication.

- From Project Settings, copy the config.

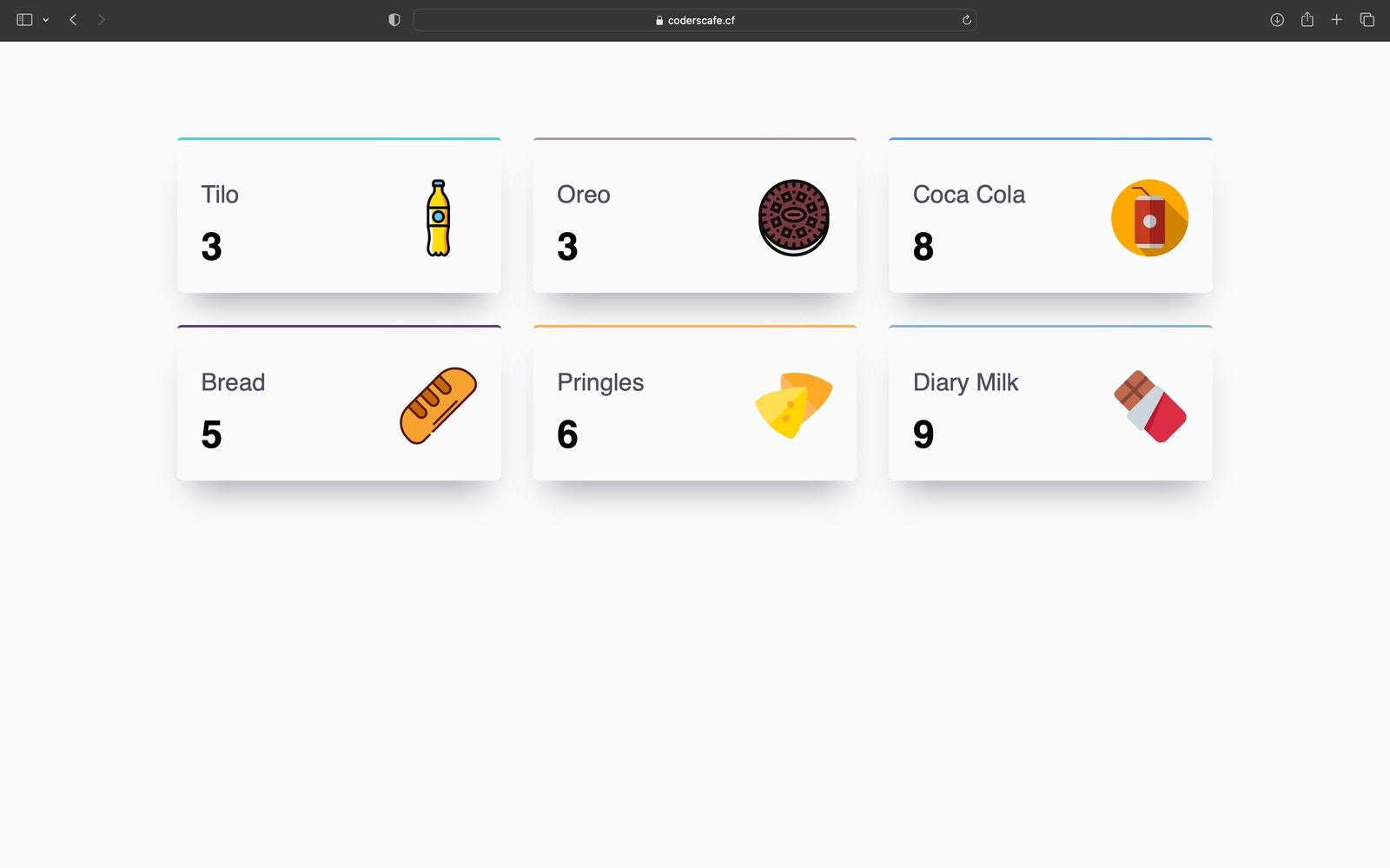

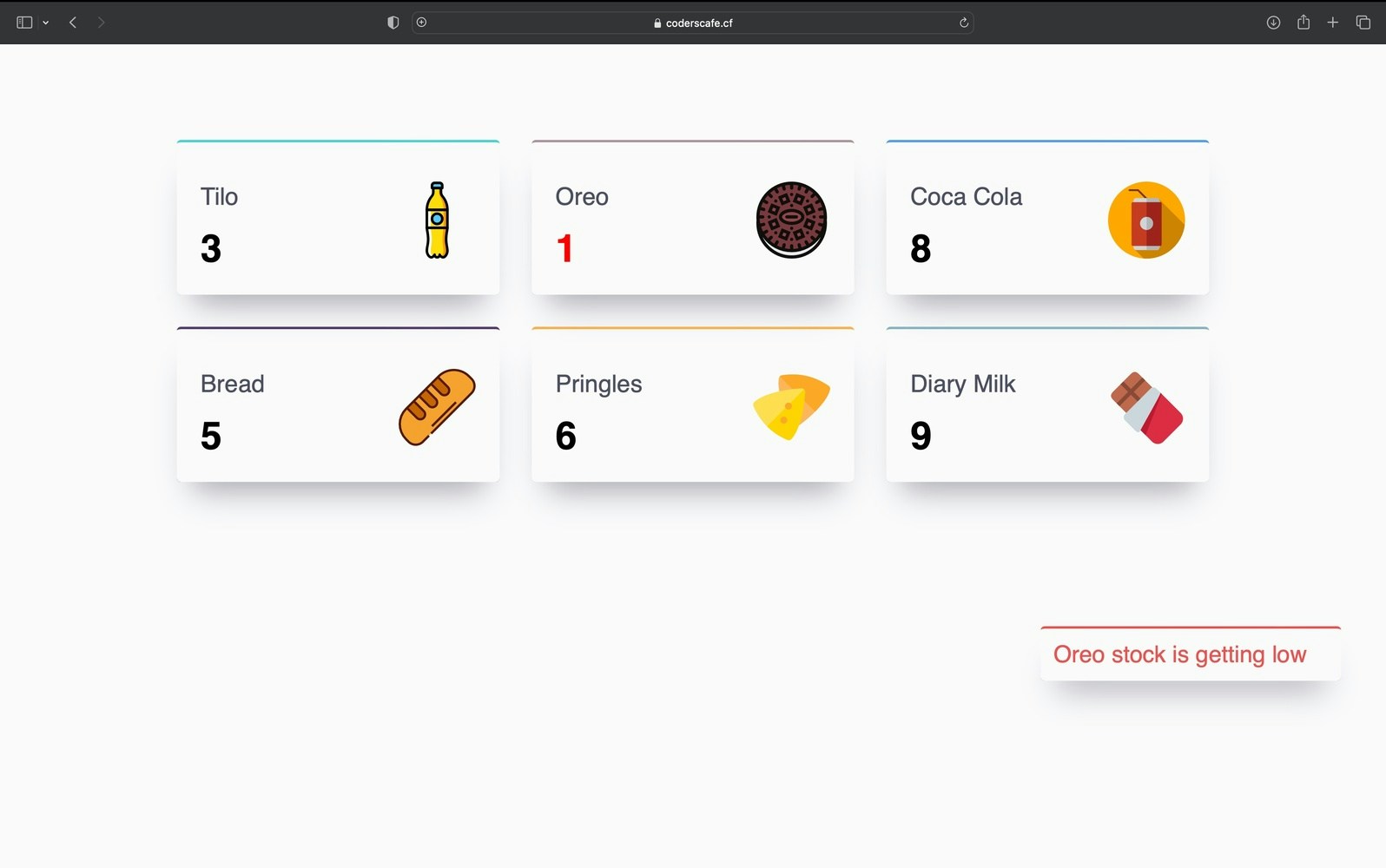
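With the config copied, pushing counts from the Raspberry Pi might look like the sketch below. It is a minimal example under stated assumptions, not the project's actual script: every value in `FIREBASE_CONFIG` is a placeholder to be replaced with your own project's settings, and the `shelf` path, the payload layout, and the function names are hypothetical. The Pyrebase calls used are `initialize_app`, `database`, `child`, and `set`.

```python
import time

# Placeholder config: replace every value with the one copied from Project Settings.
FIREBASE_CONFIG = {
    "apiKey": "YOUR_API_KEY",
    "authDomain": "YOUR_PROJECT.firebaseapp.com",
    "databaseURL": "https://YOUR_PROJECT-default-rtdb.firebaseio.com",
    "storageBucket": "YOUR_PROJECT.appspot.com",
}


def build_payload(counts, timestamp=None):
    """Shape per-product counts into a JSON-serializable record."""
    return {
        "counts": dict(counts),
        "updated_at": int(timestamp if timestamp is not None else time.time()),
    }


def push_counts(counts, path="shelf"):
    """Write the latest counts to the Realtime Database (needs network access)."""
    import pyrebase  # imported lazily so the rest of the sketch runs without it

    firebase = pyrebase.initialize_app(FIREBASE_CONFIG)
    db = firebase.database()
    db.child(path).set(build_payload(counts))


# Build a record locally; call push_counts(...) on the device to upload it.
payload = build_payload({"soda": 2, "chips": 1}, timestamp=1700000000)
print(payload)
```

Because the database was started in test mode, no authentication step is needed here. A database that is not in test mode would need its security rules and a sign-in step handled separately.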

Web Interface

We use a webpage built with HTML, CSS, and JS to display the detected objects in real time. The page updates as the data in the Firebase Realtime Database changes and shows the current count of each product across the store. If a count drops below a preset threshold, the page displays an alert.