Introduction

An electronic device designed to be worn on the user’s body is considered wearable technology. The largest categories of wearables are smartwatches and hearables, which have also grown the fastest in recent years. Steve Roddy, former Vice President of Product Marketing for Arm’s Machine Learning Group, once said that “TinyML deployments are powering a huge growth in ML deployment, greatly accelerating the use of ML in all manner of devices and making those devices better, smarter, and more responsive to human interaction”. TinyML makes it possible to run Machine Learning on resource-constrained devices such as wearables, and sound classification is one of the most widely used applications of Machine Learning.

A new use case for wearables is an environmental audio monitor for people with hearing disabilities: a wearable device with an embedded processor that listens to surrounding sounds and classifies them. In this project, I focused on giving tactile feedback when vehicle sounds are detected. The Machine Learning model detects ambulance and firetruck sirens as well as car horns. When one of these sounds is detected, the device gives a vibration pulse that the wearer can feel. This can make a real difference for people who are hard of hearing or deaf. By alerting them when a car, ambulance or firetruck is nearby, the device helps them notice the vehicle and move out of the way before they are injured.

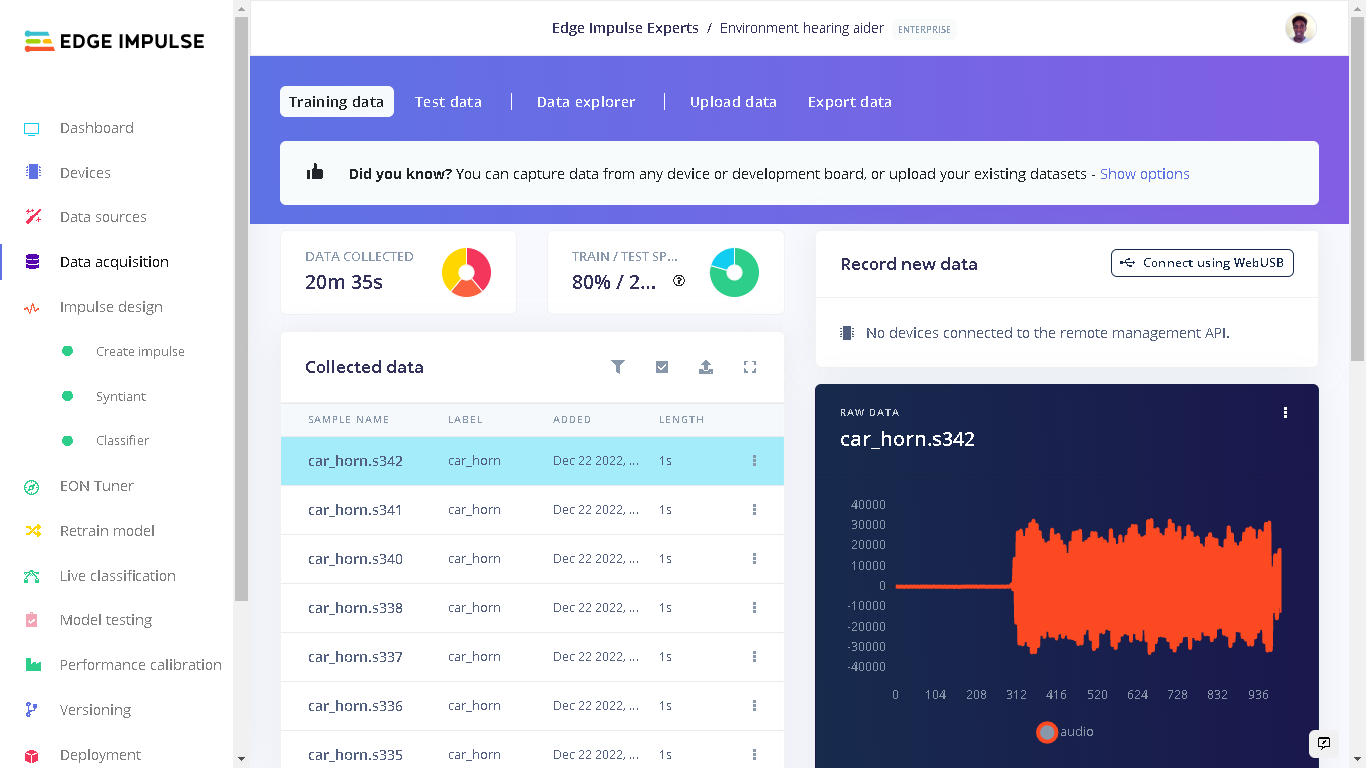

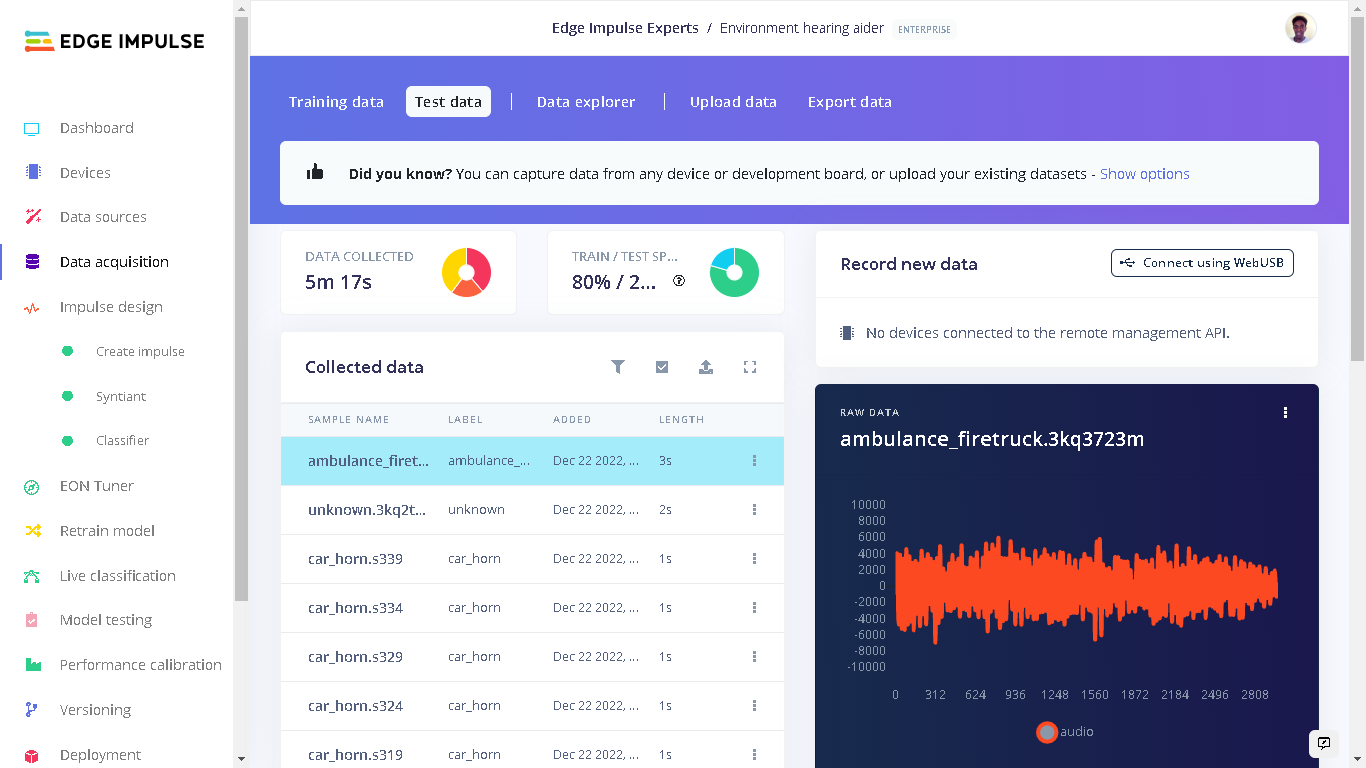

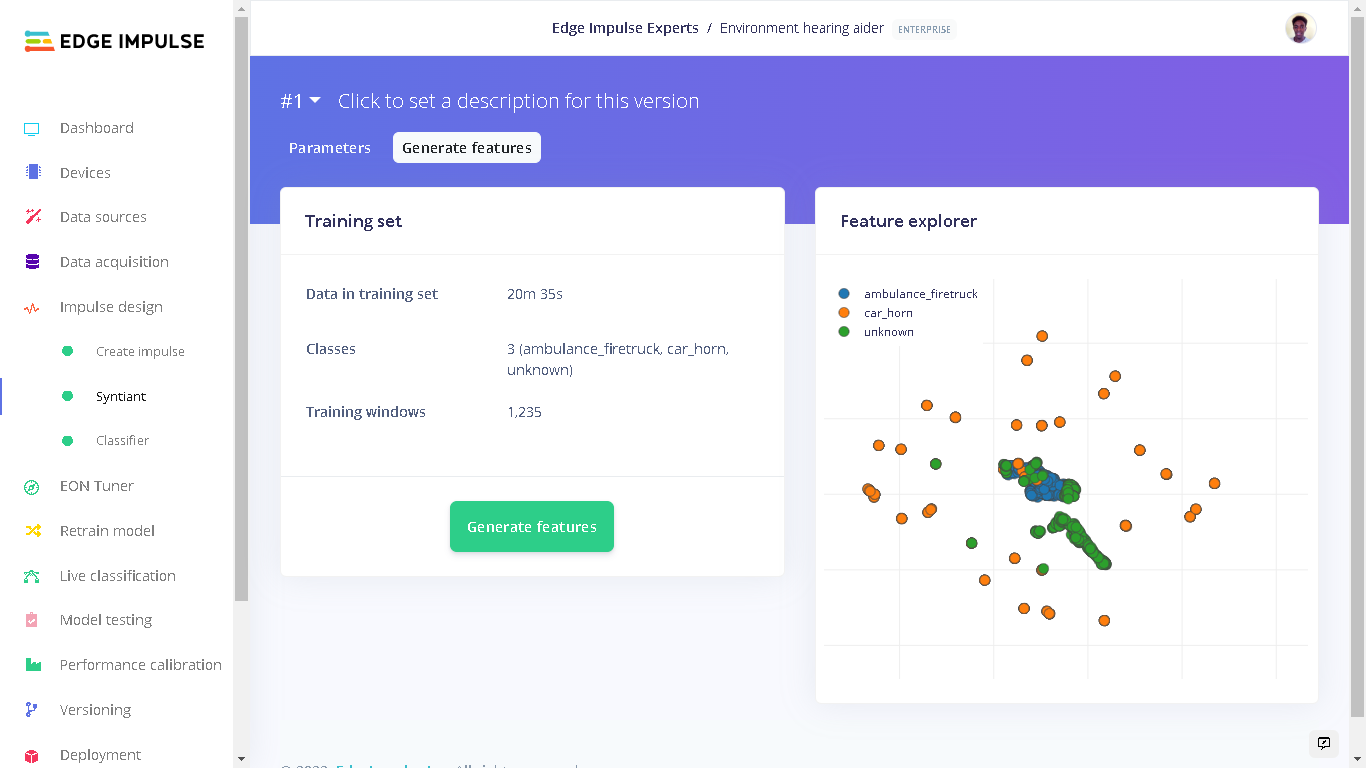

Dataset Preparation

I first searched for open datasets of ambulance sirens, firetruck sirens, car horns and traffic sounds. For the key sounds, I used the Kaggle dataset of Emergency Vehicle Siren Sounds and the Isolated urban sound database. From these I created the classes “ambulance_firetruck” and “car_horn”. In addition to the key events I wanted to detect, I also needed a class for sounds that belong to neither of them. I labelled this class “unknown”. It contains sounds of traffic, people speaking, machines and vehicles, among others. Part of the “unknown” class comes from the “noise” audio files of the Edge Impulse keywords dataset. Each sample is 1 second long. In total, I had 20 minutes of data for training and 5 minutes for testing.
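The source recordings vary in length, while every training sample is 1 second long. Below is a minimal sketch of cutting a longer recording into non-overlapping 1-second clips. It assumes mono audio already decoded into a list of samples at 16 kHz. The function name and the sample rate are illustrative assumptions, not taken from the project. The sketch also shows what the 20/5 minute split means in 1-second samples.

```python
def split_into_clips(samples, sample_rate=16000, clip_seconds=1.0):
    """Cut a mono recording into non-overlapping, fixed-length clips.

    Any trailing audio shorter than one clip is dropped.
    The 16 kHz default is an assumption for illustration.
    """
    clip_len = int(sample_rate * clip_seconds)
    n_clips = len(samples) // clip_len
    return [samples[i * clip_len:(i + 1) * clip_len] for i in range(n_clips)]


# A 3.03 s stand-in recording (silence) at the assumed 16 kHz rate.
recording = [0] * (16000 * 3 + 500)
clips = split_into_clips(recording)
print(len(clips), len(clips[0]))  # 3 16000

# 20 minutes of training audio and 5 minutes of test audio,
# expressed as counts of 1-second samples.
train_clips = 20 * 60
test_clips = 5 * 60
print(train_clips, test_clips)  # 1200 300
```

Dropping the short tail keeps every sample exactly 1 second long. Padding the tail with silence would be an alternative.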

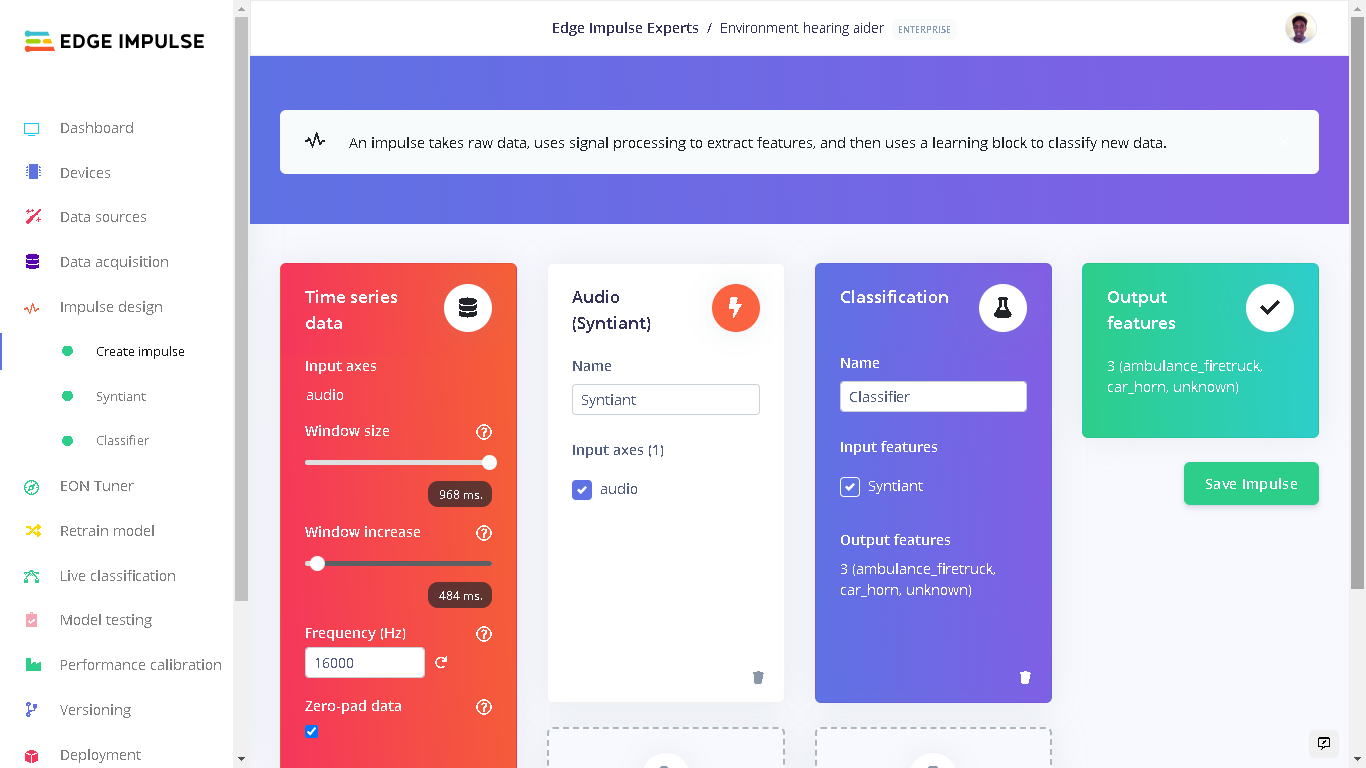

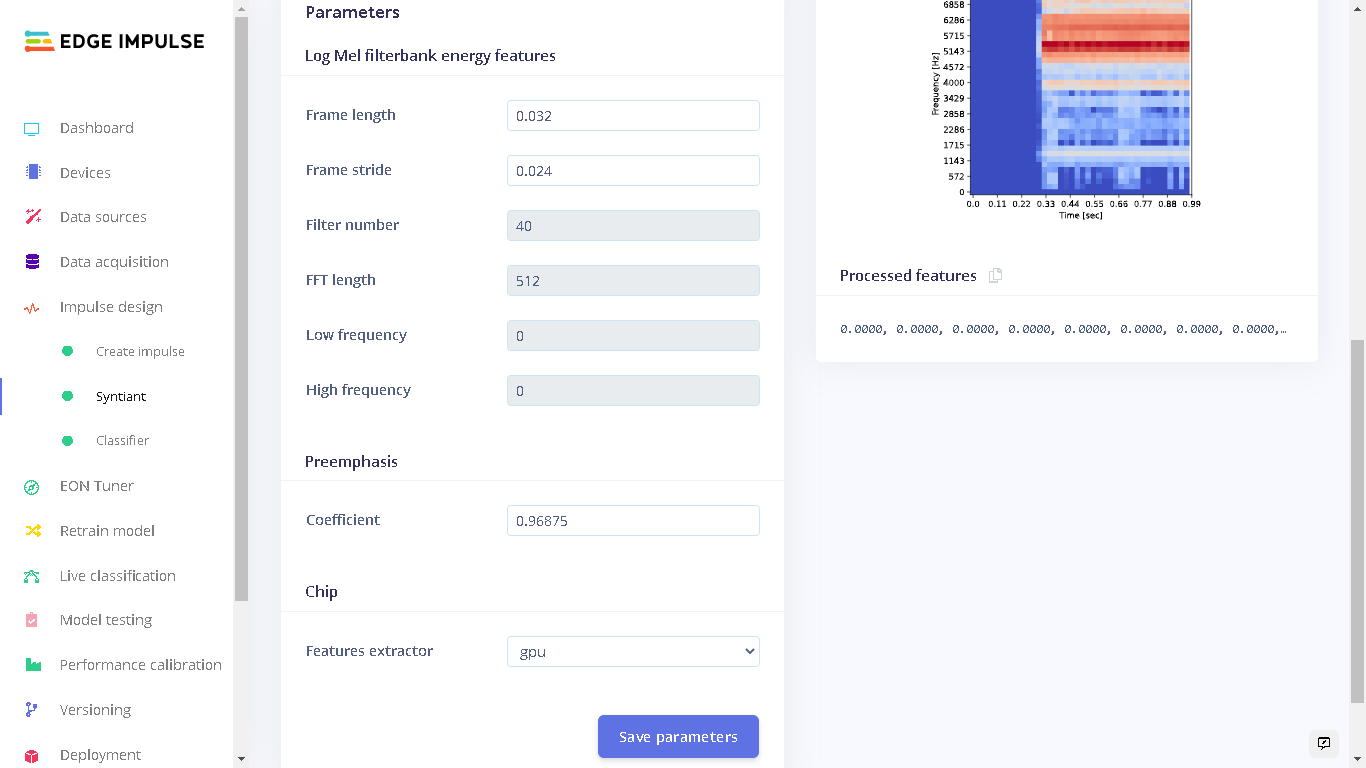

Impulse Design

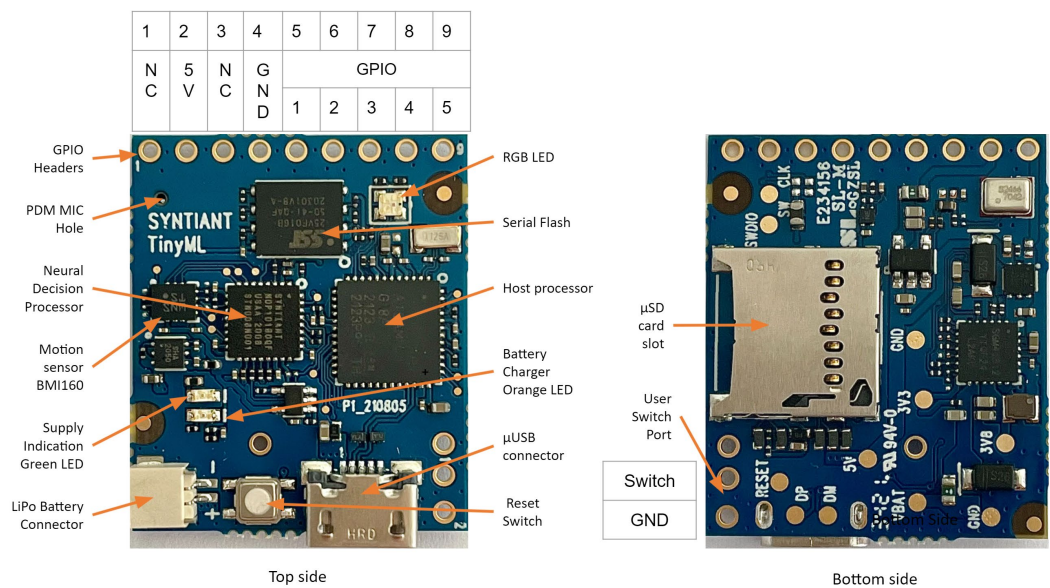

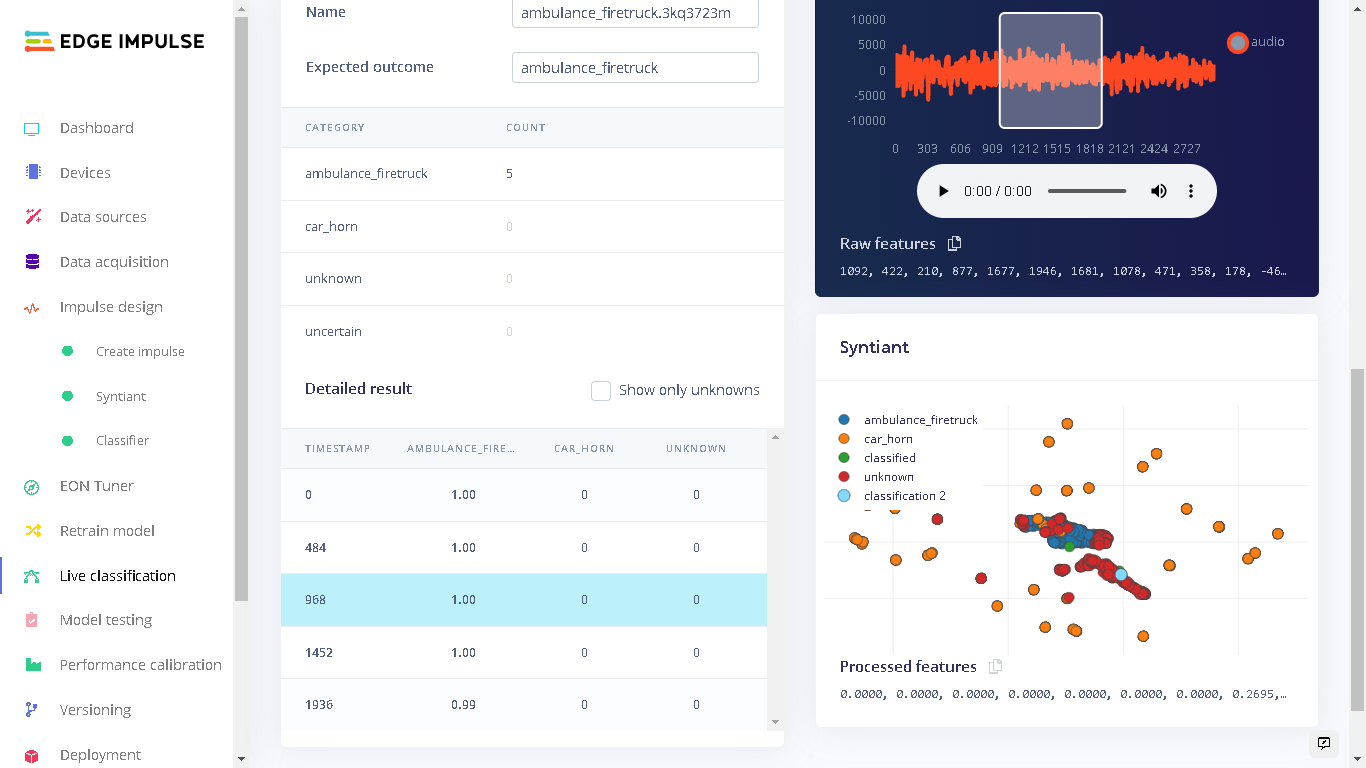

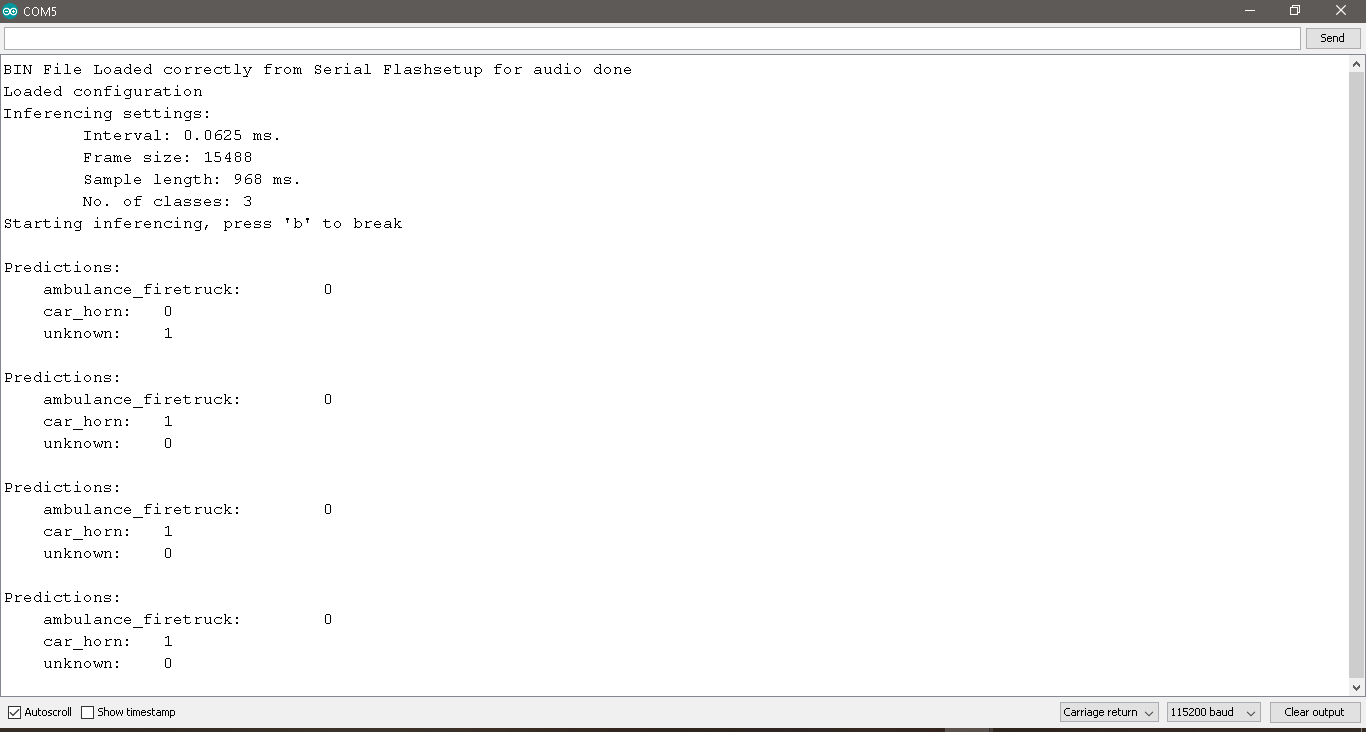

The impulse design is specific to the Syntiant TinyML board that I was targeting. Under “Create Impulse”, I set the window size to 968 milliseconds (ms) and the window increase to 484 ms. I then added the “Audio (Syntiant)” processing block and the “Classification” learning block. For a detailed explanation of the impulse design for Syntiant TinyML audio classification, check out the Edge Impulse documentation.
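A window size of 968 ms with a 484 ms increase means consecutive windows overlap by half. The sketch below counts how many full windows a sample yields and how many raw audio samples each window holds, assuming 16 kHz audio. The function names are hypothetical. It only counts windows that fit completely and does not model any padding Edge Impulse may apply to short samples.

```python
def window_starts_ms(sample_ms, window_ms=968, increase_ms=484):
    """Start offsets (ms) of every full window that fits in a sample."""
    if sample_ms < window_ms:
        return []
    n_windows = (sample_ms - window_ms) // increase_ms + 1
    return [i * increase_ms for i in range(n_windows)]


def samples_per_window(window_ms=968, sample_rate=16000):
    """Raw audio samples in one window (16 kHz is an assumed rate)."""
    return window_ms * sample_rate // 1000


print(window_starts_ms(1000))  # [0]
print(window_starts_ms(2000))  # [0, 484, 968]
print(samples_per_window())    # 15488
print(484 / 968)               # 0.5, so consecutive windows overlap by half
```

A 1-second sample therefore yields a single 968 ms window. Longer recordings yield several overlapping windows.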

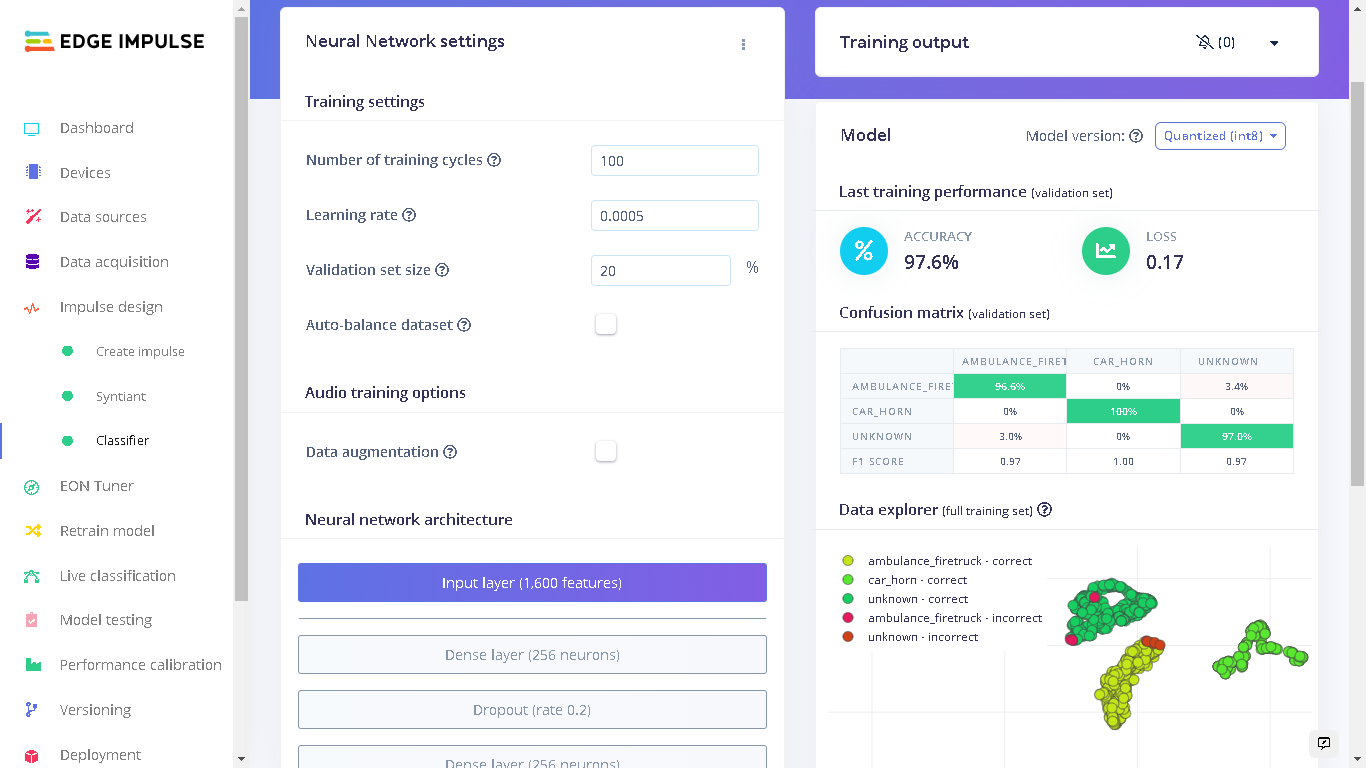

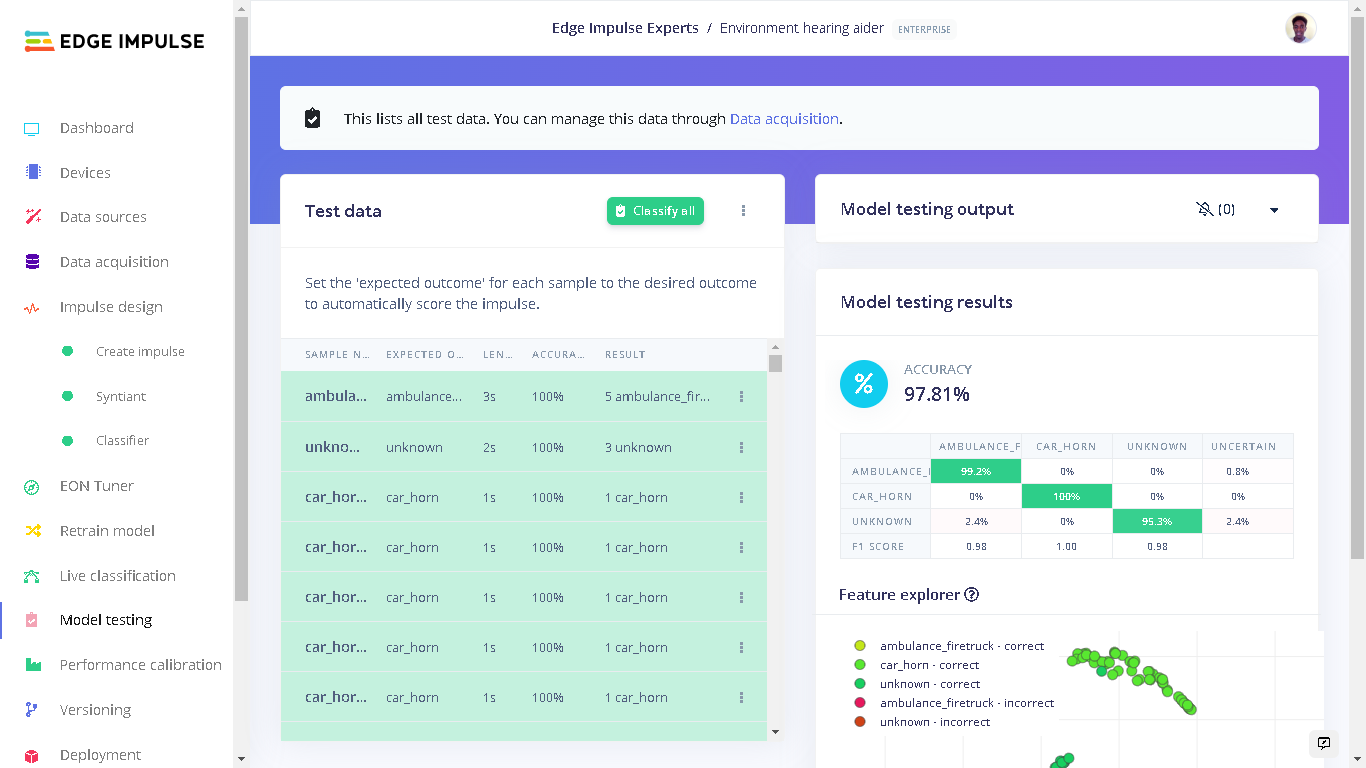

Model Testing

When training the model, I used 80% of the data in the dataset. The remaining 20% is used to test how accurately the model classifies unseen data. Testing on data the model has never seen verifies that it has not overfit. If the model performs well on the training data but poorly on the test data, it has most likely overfit: it memorized the training set instead of learning general features. This can be resolved by adding more data and/or reconfiguring the processing and learning blocks. Tips for increasing performance can be found in this guide. To run the test, click “Model testing” in the left panel, then “Classify all”. The current model achieves an accuracy of 97.8%, which is good for this application.
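Edge Impulse performs the train/test split and computes test accuracy for you. To make those two steps concrete, here is a minimal, illustrative sketch in plain Python. The function names, the random seed and the example labels are hypothetical.

```python
import random


def train_test_split(items, test_fraction=0.2, seed=0):
    """Shuffle items reproducibly and split them into (train, test) lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def accuracy(true_labels, predicted_labels):
    """Fraction of predictions that match the true labels."""
    if len(true_labels) != len(predicted_labels) or not true_labels:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


# 1500 one-second clips (20 + 5 minutes) split 80/20.
train, test = train_test_split(range(1500))
print(len(train), len(test))  # 1200 300

true = ["car_horn", "unknown", "ambulance_firetruck", "unknown"]
pred = ["car_horn", "unknown", "unknown", "unknown"]
print(accuracy(true, pred))  # 0.75
```

Comparing `accuracy` on the training set with `accuracy` on the test set is a quick overfitting check. A large gap between the two is the warning sign.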

Deploying to Syntiant TinyML Board

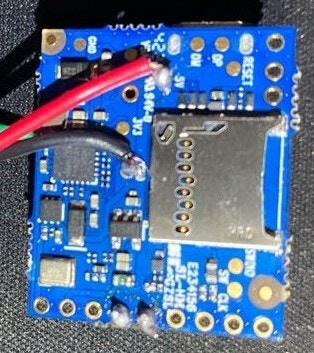

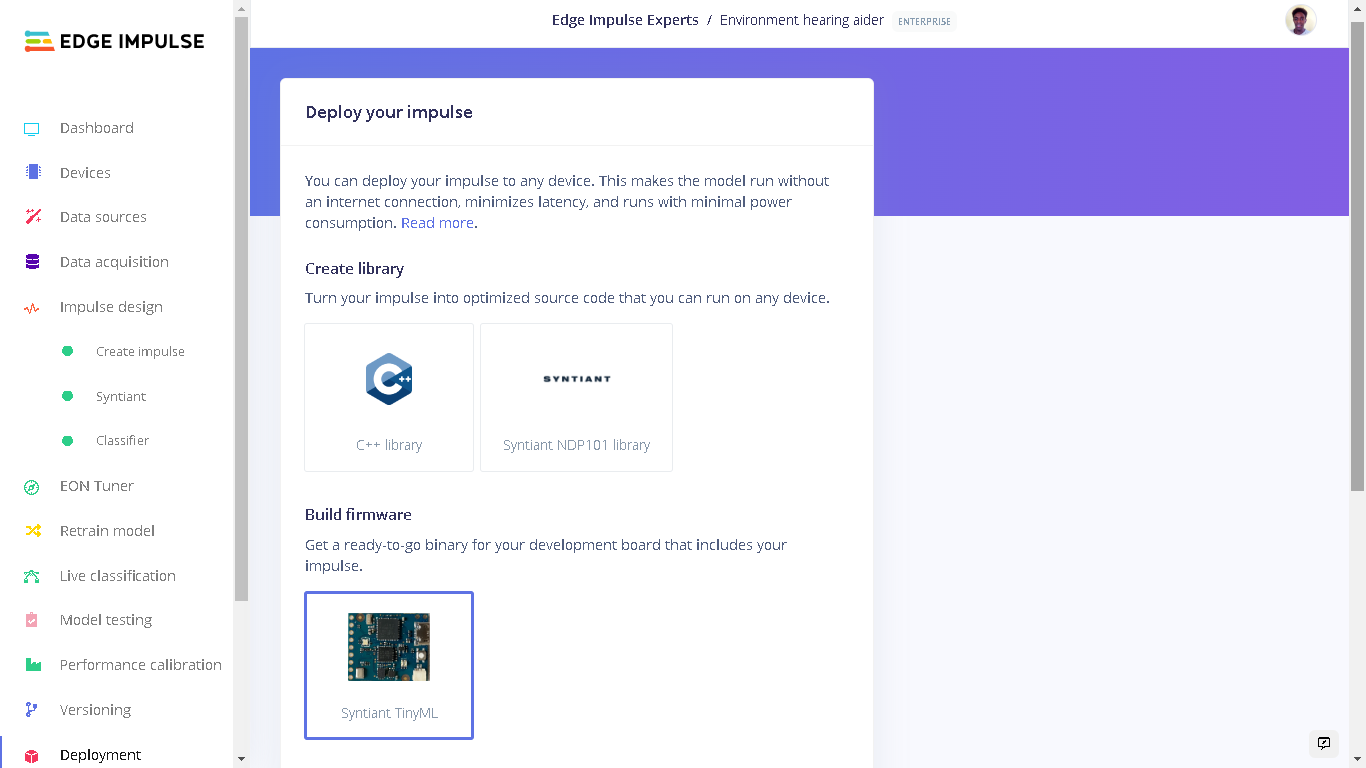

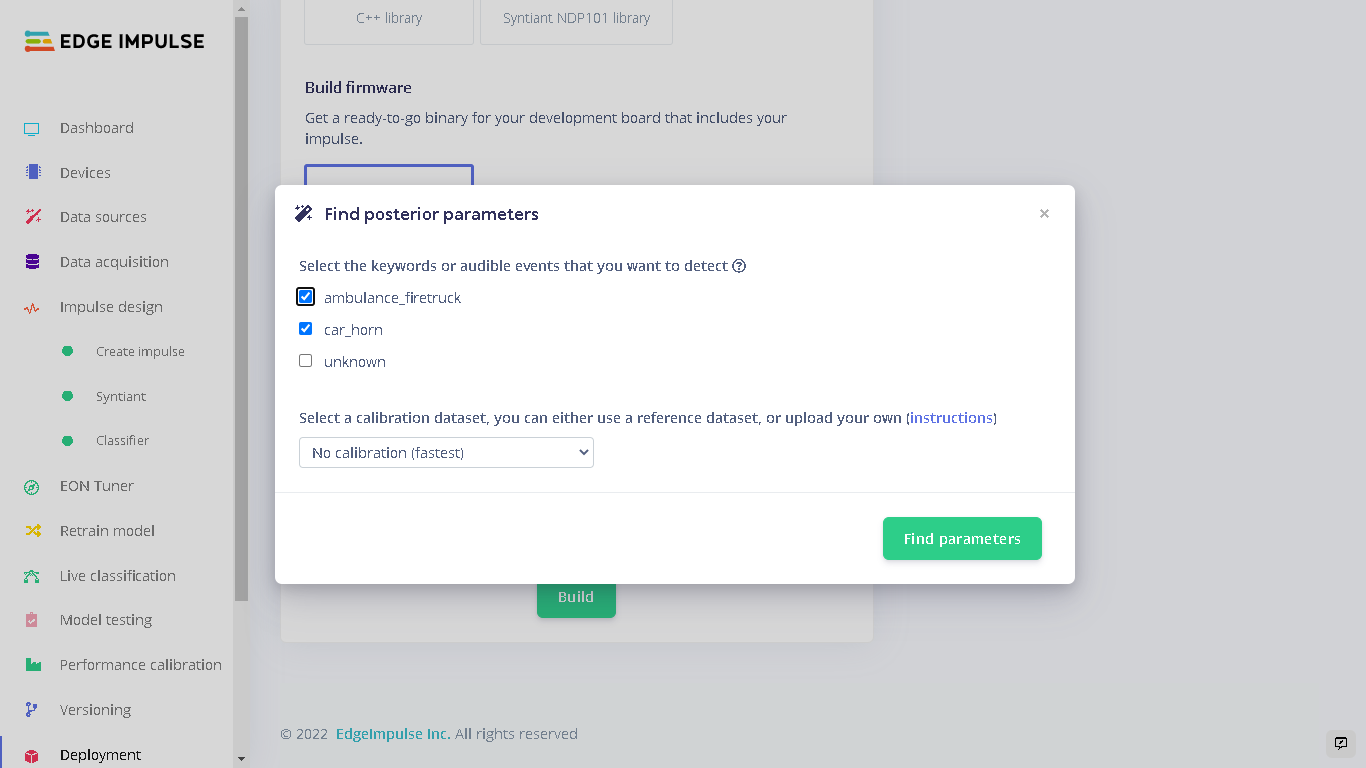

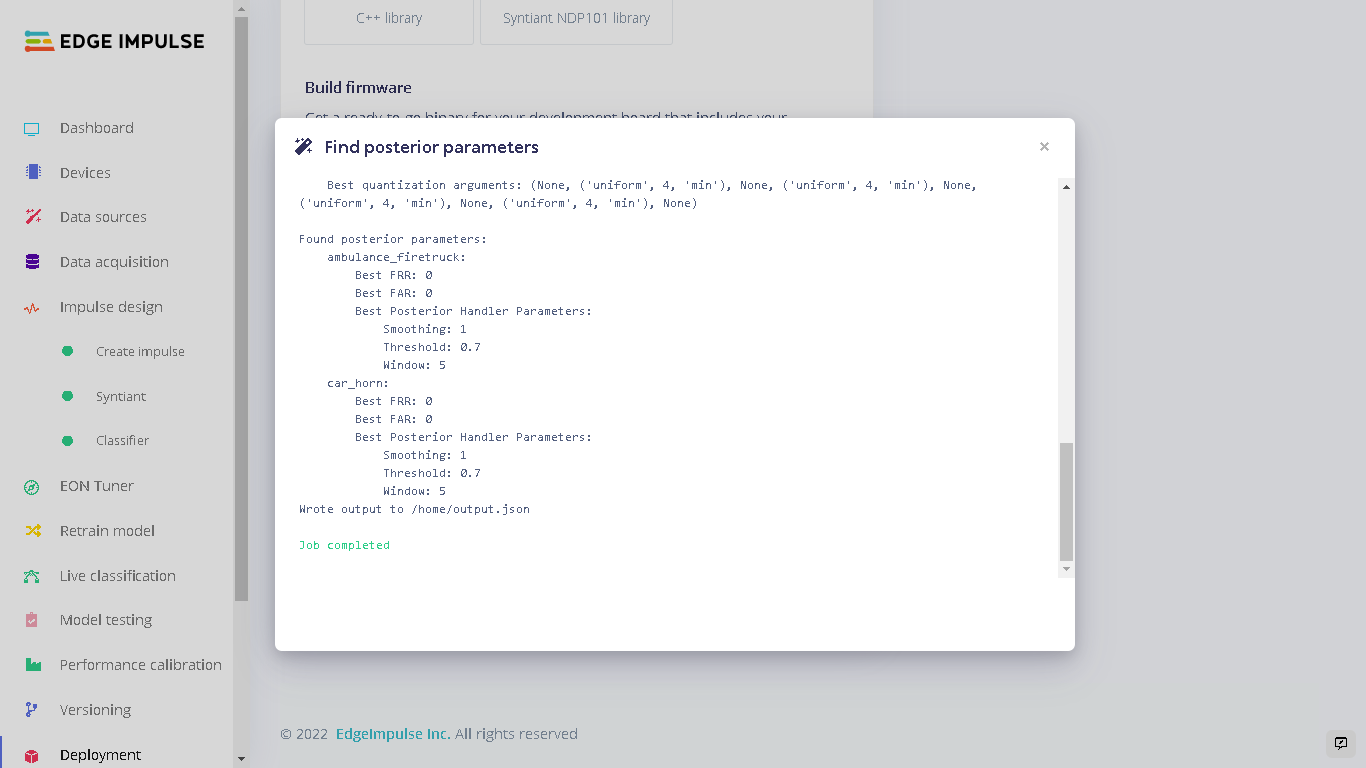

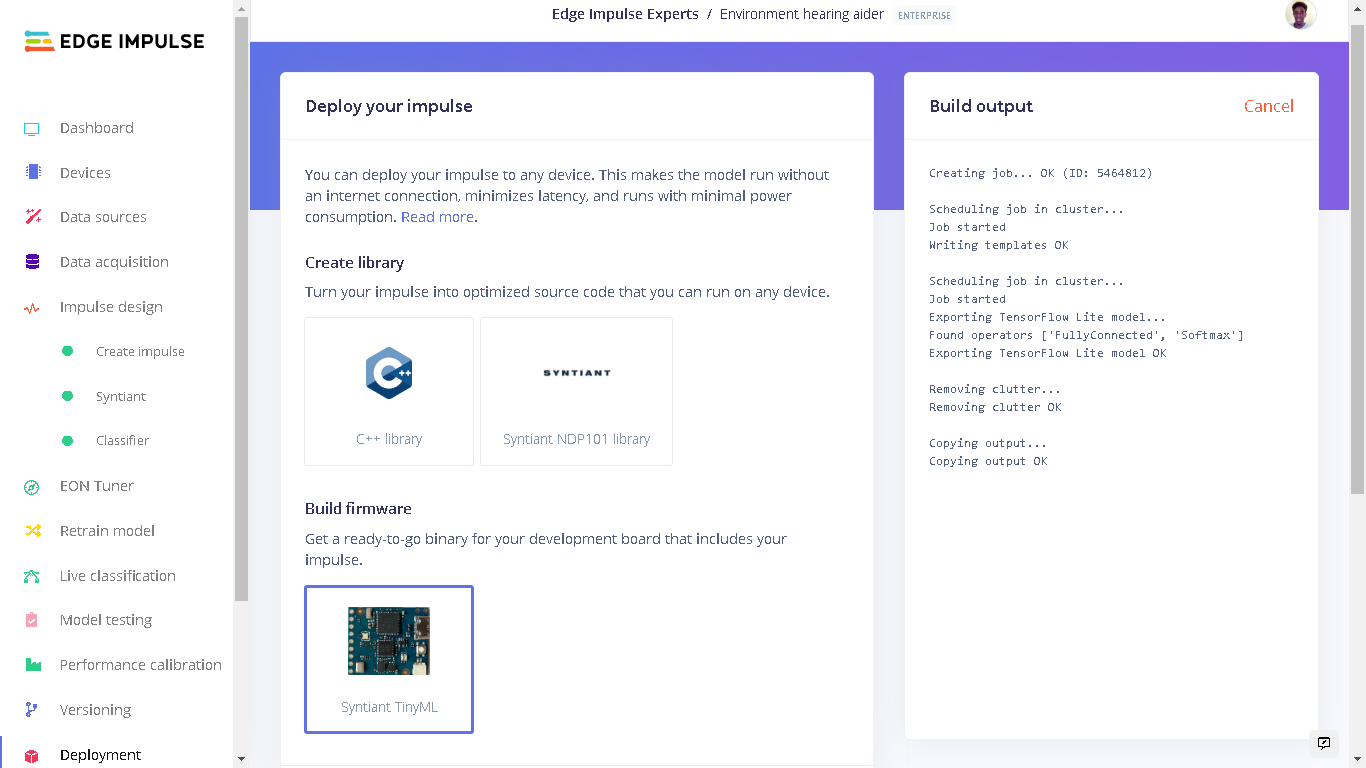

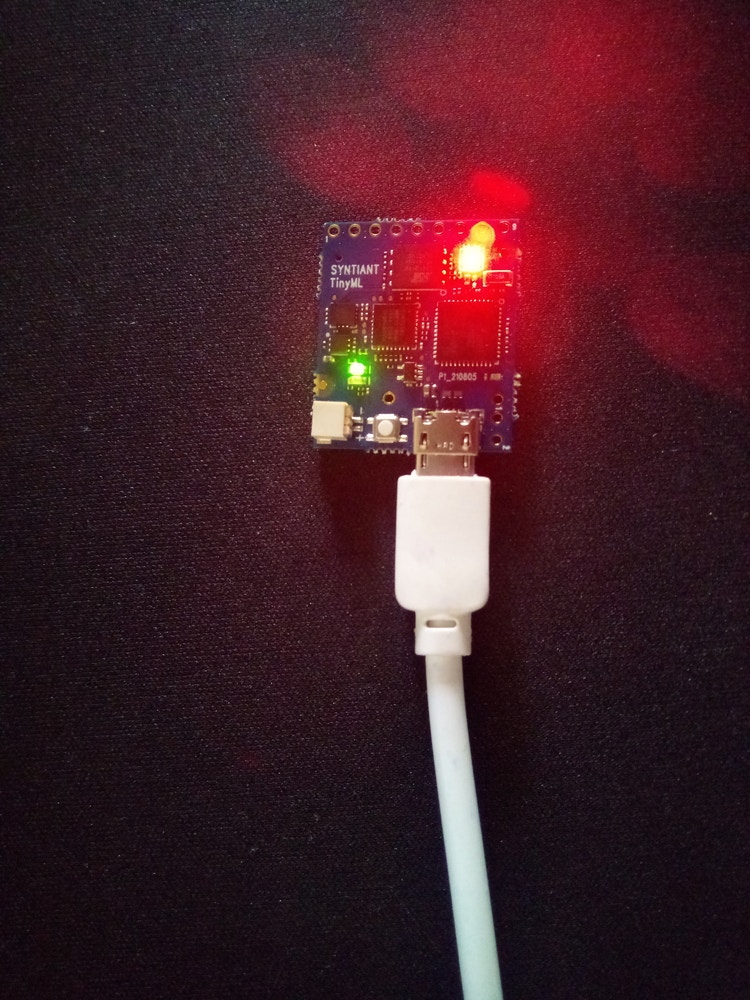

To deploy the model to the Syntiant board, first click “Deployment” in the left panel. Here, we first deploy the model as firmware for the board. When one of the target events (ambulance_firetruck or car_horn) is detected, the onboard RGB LED turns on. When “unknown” sounds are detected, the LED stays off. This firmware runs locally on the board, without requiring internet connectivity, and with minimal power consumption. Under “Build Firmware”, select Syntiant TinyML.
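The LED behaviour described above reduces to a simple decision per inference. Below is a minimal sketch of that logic in Python, assuming the classifier returns a confidence score for each class. The 0.8 threshold and the function name are hypothetical, and the actual firmware may decide differently.

```python
TARGET_CLASSES = {"ambulance_firetruck", "car_horn"}


def led_should_be_on(scores, threshold=0.8):
    """Decide the LED state from one inference result.

    scores maps each class label to its confidence (0.0 to 1.0).
    The LED is on only when the top-scoring class is a target class
    and its confidence reaches the (hypothetical) threshold.
    """
    top_label = max(scores, key=scores.get)
    return top_label in TARGET_CLASSES and scores[top_label] >= threshold


print(led_should_be_on({"ambulance_firetruck": 0.92, "car_horn": 0.03, "unknown": 0.05}))  # True
print(led_should_be_on({"ambulance_firetruck": 0.10, "car_horn": 0.05, "unknown": 0.85}))  # False
print(led_should_be_on({"ambulance_firetruck": 0.30, "car_horn": 0.60, "unknown": 0.10}))  # False
```

The threshold trades missed detections for false alarms. A lower value reacts to fainter sirens but also fires more often on background noise.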

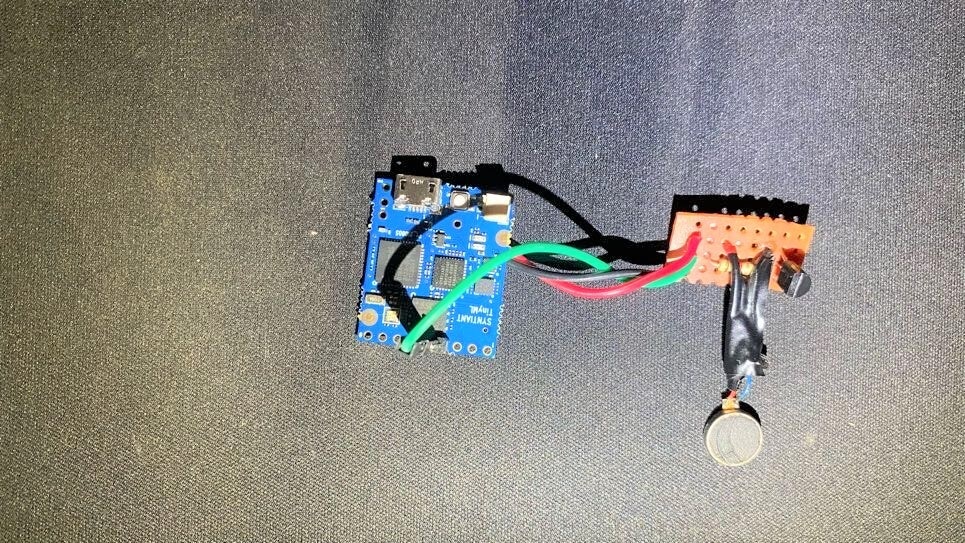

A Smart Watch-out

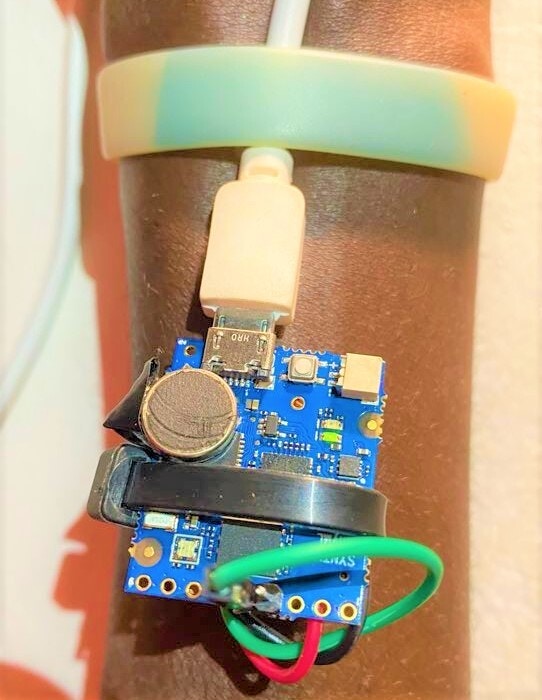

After testing the model on the Syntiant TinyML board and confirming that it worked well, I created a demo of the smart wearable for this project. This involved connecting a vibration motor to GPIO 1 of the Syntiant TinyML board. When the class “ambulance_firetruck” or “car_horn” is detected, GPIO 1 is set HIGH, which makes the vibration motor vibrate for 1500 milliseconds. Vibration motors are commonly used to give haptic feedback in mobile phones and video game controllers. They are the components that make your phone vibrate.
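The firmware behaviour can be modelled as a small timer: set GPIO 1 HIGH on a detection, then set it LOW after 1500 ms. The sketch below is a hardware-free Python model that uses a deadline instead of a blocking delay. The class and method names are hypothetical. It also assumes that a new detection restarts the 1500 ms pulse, which is not stated for the original firmware.

```python
class VibrationPulse:
    """Non-blocking model of 'set GPIO HIGH, then LOW after pulse_ms'.

    pin_high stands in for the GPIO 1 output level; no real hardware is used.
    """

    def __init__(self, pulse_ms=1500):
        self.pulse_ms = pulse_ms
        self.pin_high = False
        self._off_at_ms = None

    def on_detection(self, now_ms):
        # Drive the pin HIGH and (re)start the pulse deadline.
        self.pin_high = True
        self._off_at_ms = now_ms + self.pulse_ms

    def tick(self, now_ms):
        # Called regularly from the main loop; drops the pin once the deadline passes.
        if self.pin_high and now_ms >= self._off_at_ms:
            self.pin_high = False
            self._off_at_ms = None


motor = VibrationPulse()
motor.on_detection(now_ms=0)
motor.tick(now_ms=1000)
print(motor.pin_high)  # True (still within the 1500 ms pulse)
motor.tick(now_ms=1500)
print(motor.pin_high)  # False (pulse finished)
```

A non-blocking deadline lets the board keep listening and classifying audio while the motor runs. A blocking 1500 ms delay would pause inference for that whole time.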