Project Demo

Intro

Noise pollution can be a significant problem, especially in densely populated urban areas. It can have negative effects on both humans and wildlife. Noise pollution is also often caused by power-hungry activities, such as industrial processes, construction, and flights. A Noise Pollution Monitoring device built on top of the Nordic Thingy:53 development kit, with smart classification capabilities provided by Edge Impulse, can be a good way to monitor this phenomenon in urban areas. Using a set of Noise Pollution Monitoring devices, the noise and environmental pollution of a city can be monitored. Based on the measured data, actions can be taken to improve the situation. Because the activities that cause noise pollution also tend to have a high energy consumption, replacing them with more efficient solutions can reduce their energy footprint as well.

Getting Started with the Thingy:53

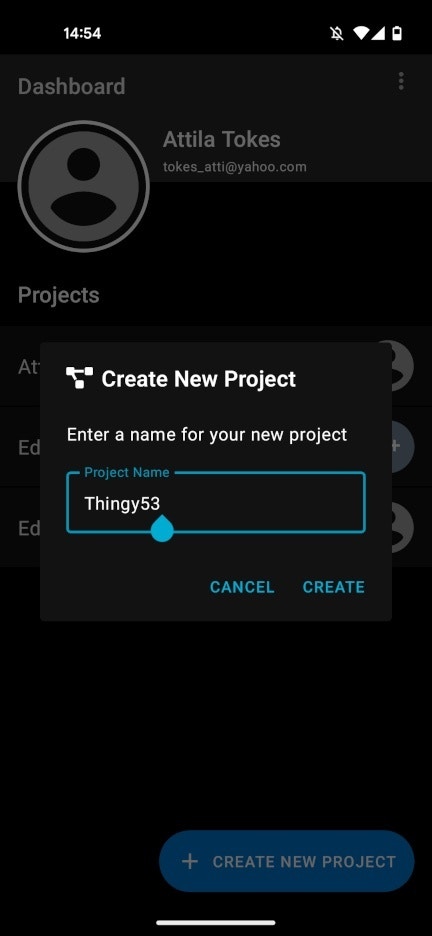

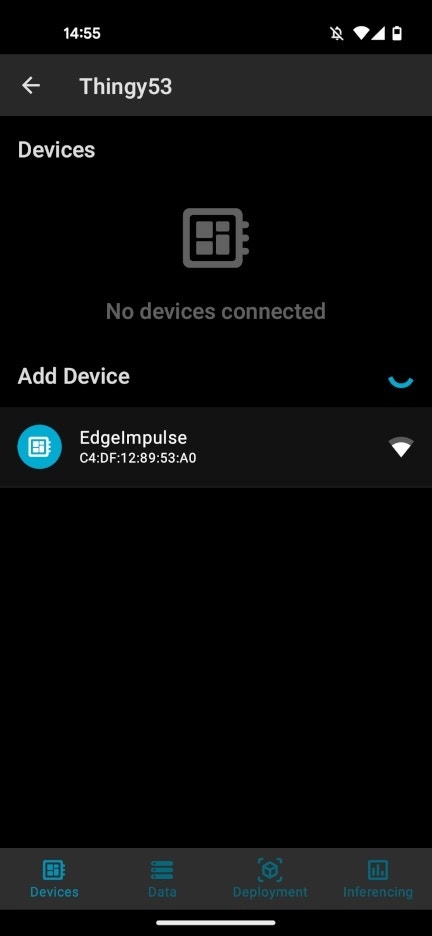

The Nordic Thingy:53 comes with pre-installed firmware that allows us to easily create machine learning projects with Edge Impulse. The nRF Edge Impulse mobile app is used to interact with the Thingy:53, and it also integrates with the Edge Impulse embedded machine learning platform. To get started with the app, we need to create an Edge Impulse account and a project:

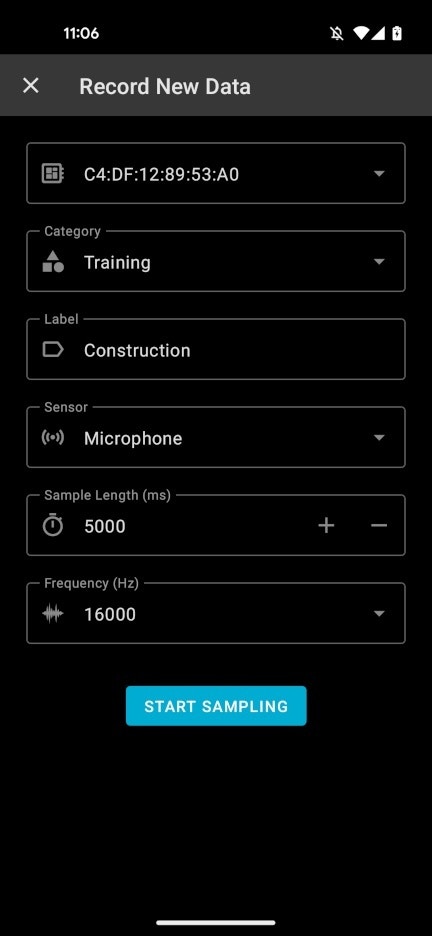

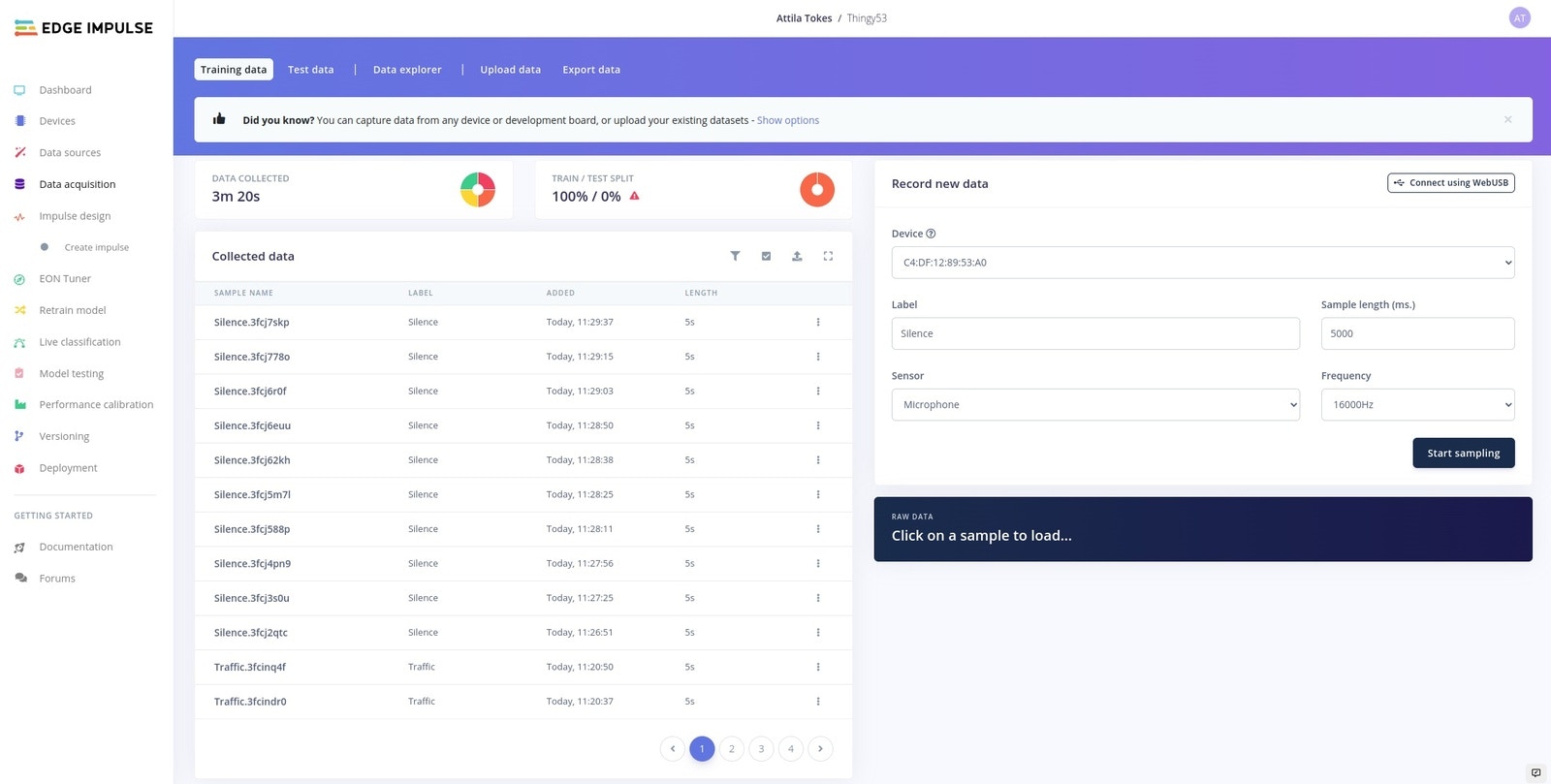

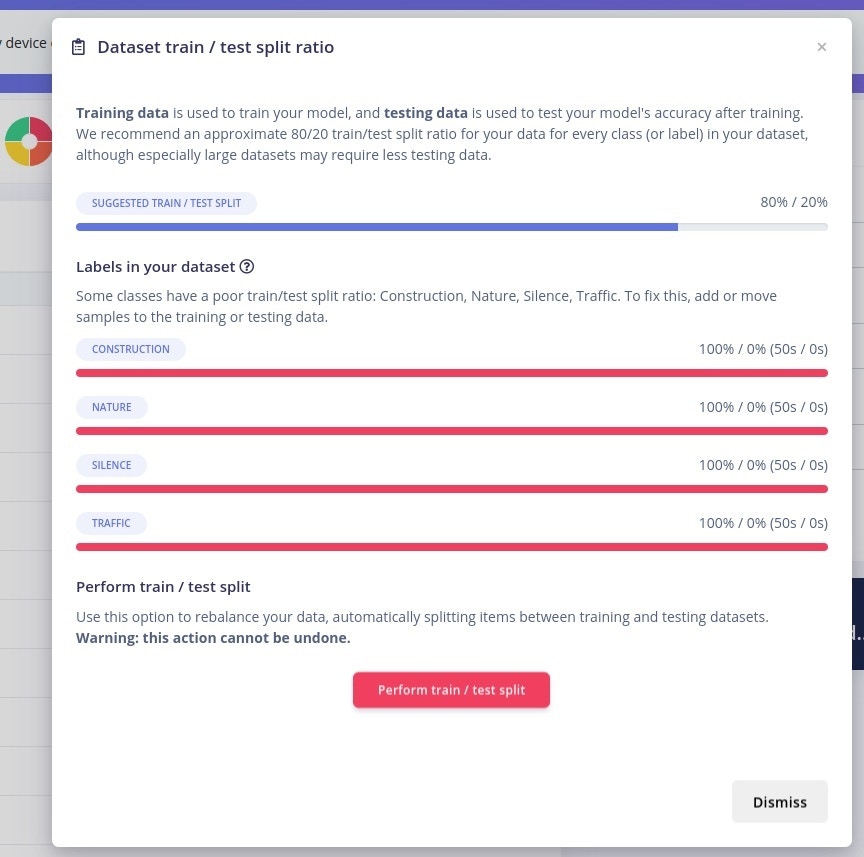

Collecting Audio Data

The first step of building a machine learning model is to collect some training data. For this proof-of-concept, I decided to go with 4 classes of sounds:

- Silence - a silent room

- Nature - sound of birds, rain, etc.

- Construction - sounds from a construction site

- Traffic - urban traffic sounds

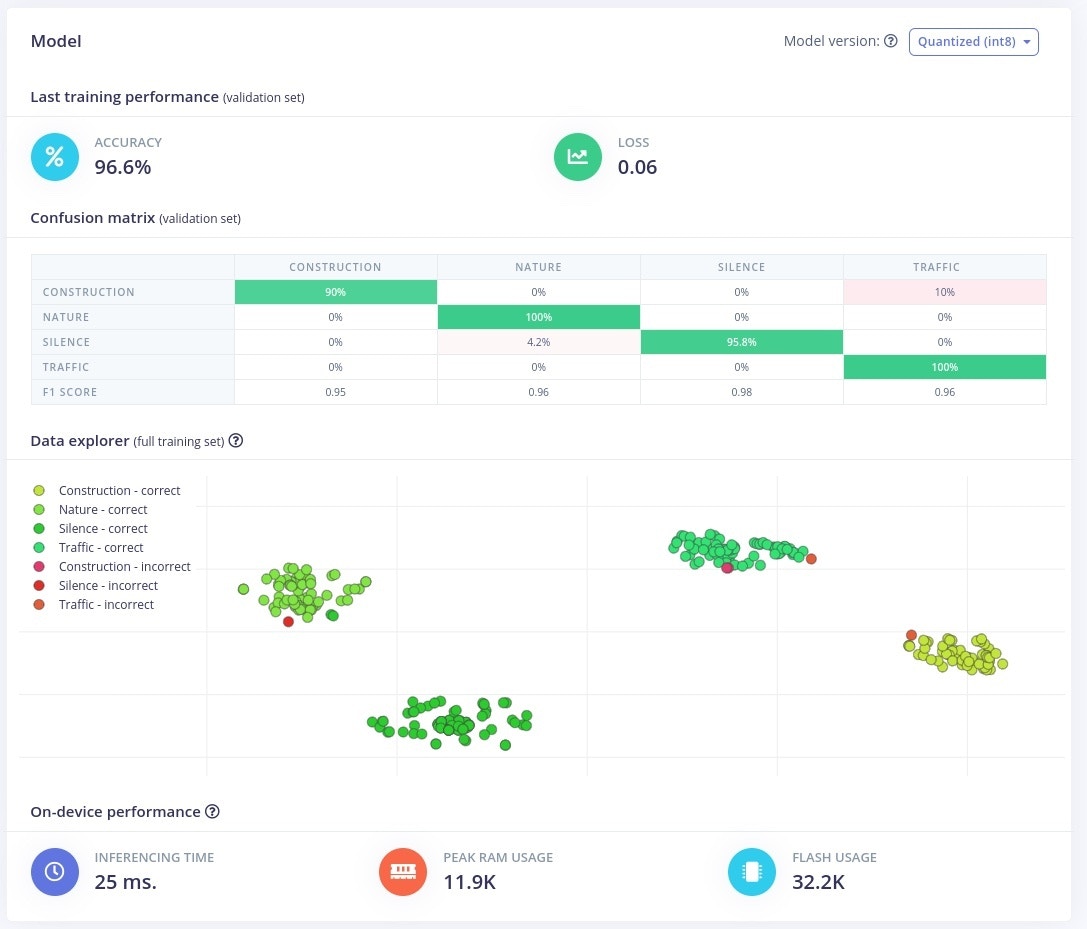

Training an Audio Classification Model

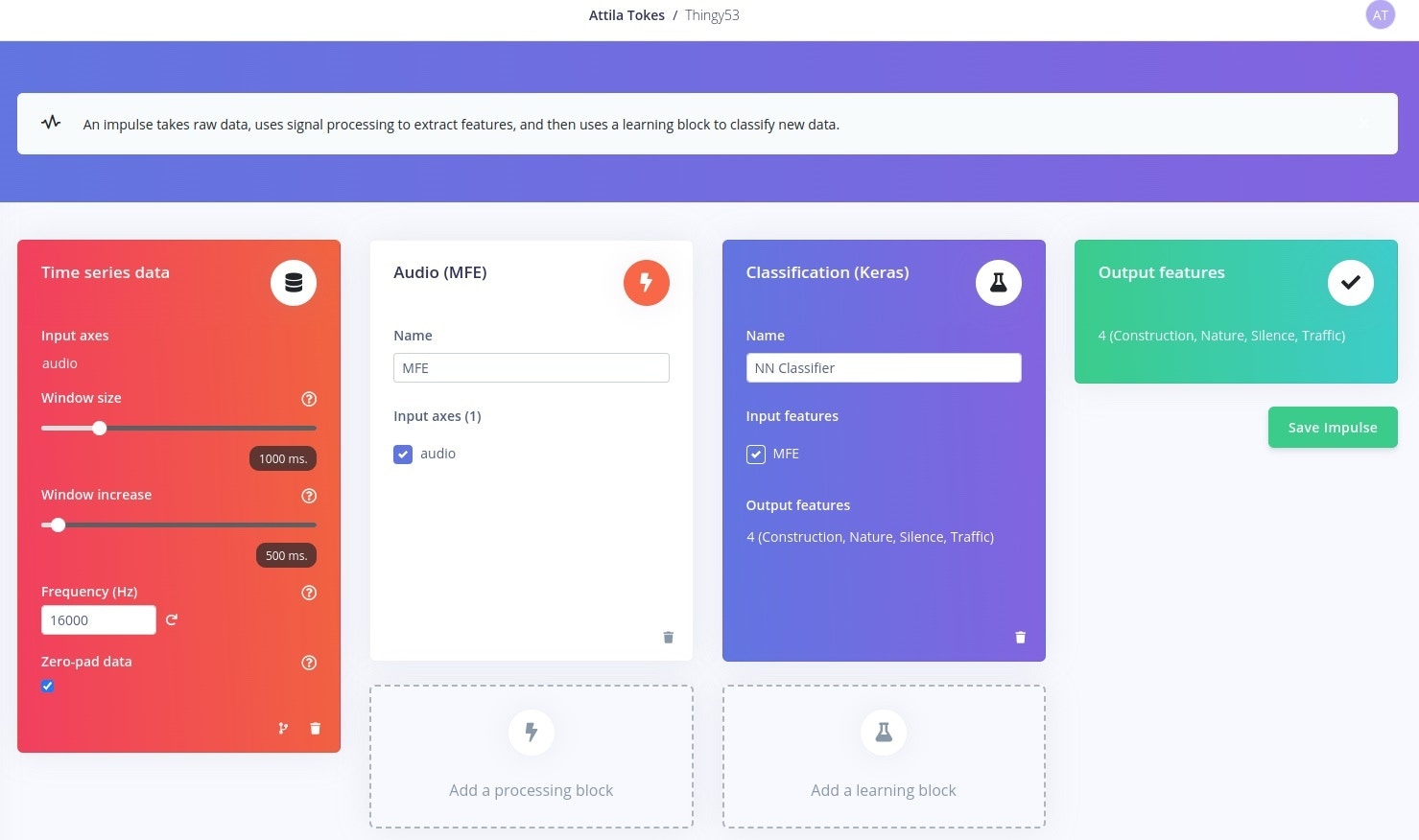

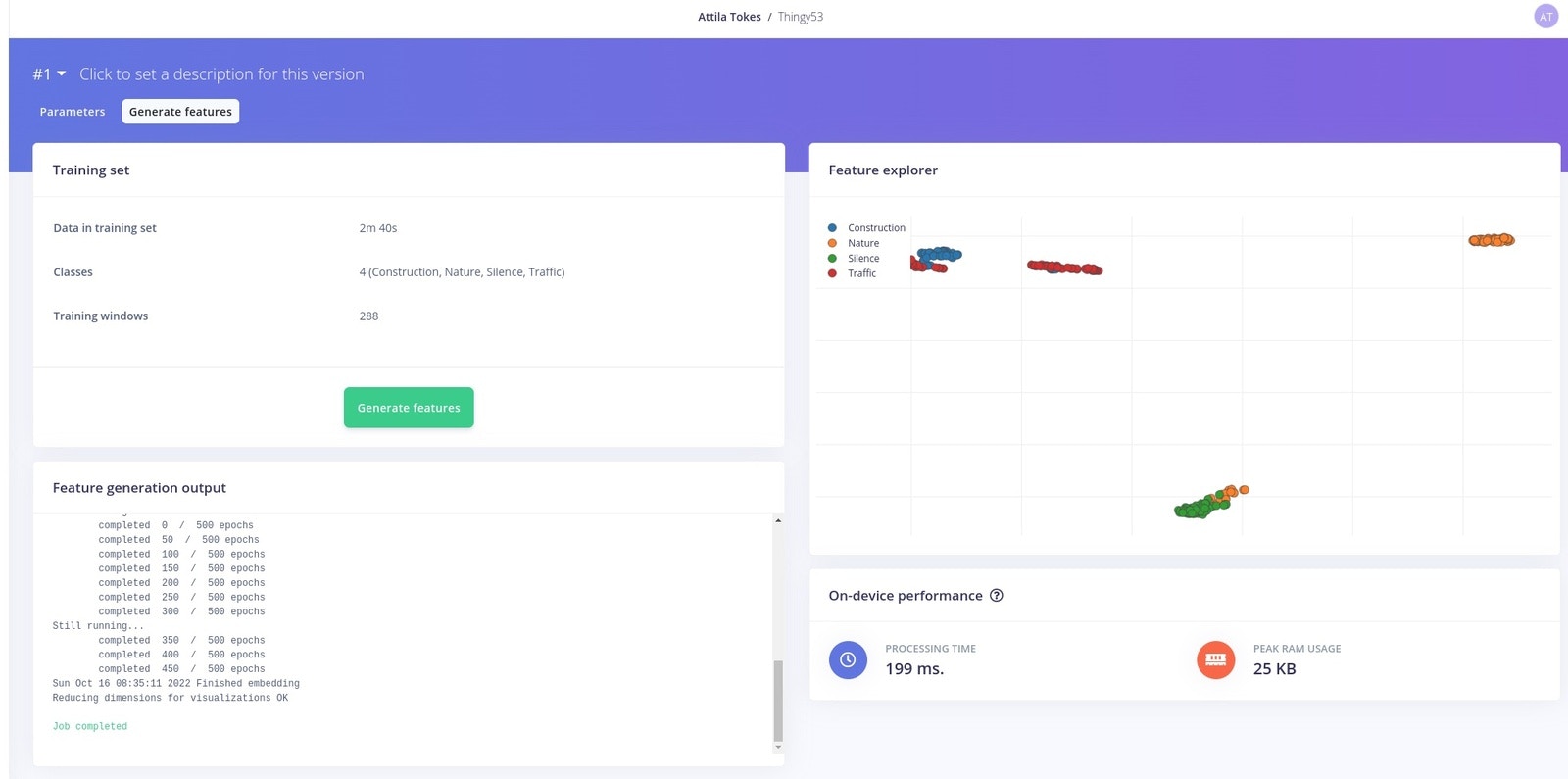

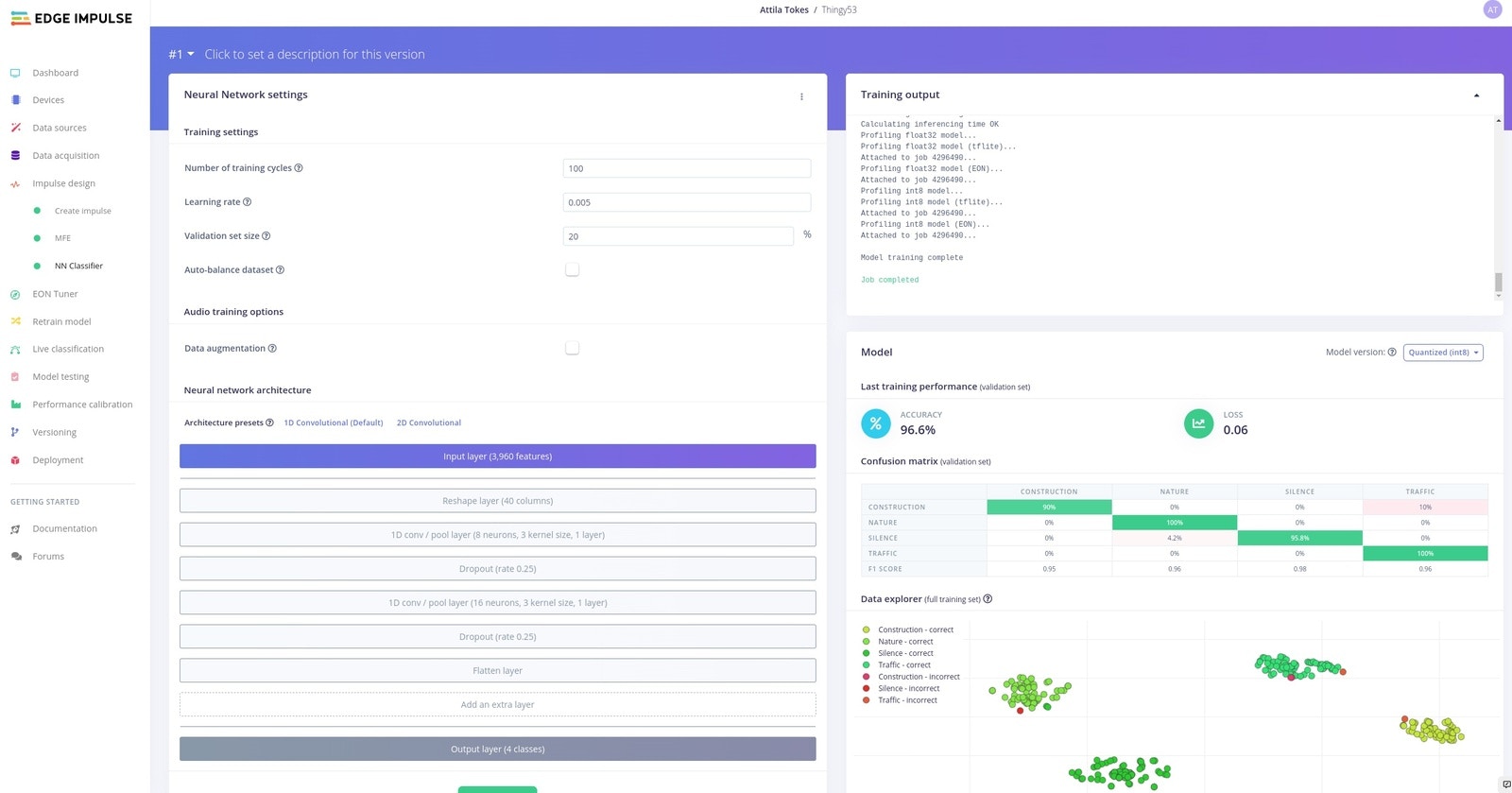

Having collected the audio data, we can start building a machine learning model. In an Edge Impulse project, the machine learning pipeline is called an Impulse. An Impulse includes the pre-processing, feature extraction, and inference steps needed to classify, in our case, audio data. For this project I went with the following design:

- Time Series Data Input - with 1 second windows @ 16kHz

- Audio (MFE) Extractor - this is the recommended feature extractor for non-voice audio

- NN / Keras Classifier - a neural network classifier

- Output with 4 Classes - Silence, Nature, Traffic, Construction
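To make the input stage more concrete: a 1 second window at 16 kHz holds 16,000 samples. The sketch below shows one way a longer recording could be cut into such windows. It is only an illustration, not how Edge Impulse implements it; the function name `split_into_windows` and the 0.5 second stride are assumptions, since the article does not state the stride used.

```python
SAMPLE_RATE_HZ = 16_000   # sampling rate used in the impulse
WINDOW_SECONDS = 1.0      # window size used in the impulse
STRIDE_SECONDS = 0.5      # assumed step between windows; not stated in the article


def split_into_windows(samples, sample_rate=SAMPLE_RATE_HZ,
                       window_s=WINDOW_SECONDS, stride_s=STRIDE_SECONDS):
    """Cut a sequence of audio samples into fixed-length, possibly overlapping windows."""
    window_len = int(sample_rate * window_s)
    stride_len = int(sample_rate * stride_s)
    windows = []
    # Stop once a full window no longer fits; a trailing partial window is dropped.
    for start in range(0, len(samples) - window_len + 1, stride_len):
        windows.append(samples[start:start + window_len])
    return windows


if __name__ == "__main__":
    recording = [0] * (3 * SAMPLE_RATE_HZ)  # 3 seconds of silence as stand-in data
    windows = split_into_windows(recording)
    print(len(windows), "windows of", len(windows[0]), "samples each")
```

With these assumed settings, a 3 second recording yields 5 overlapping windows, so a smaller stride produces more training windows from the same amount of audio.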

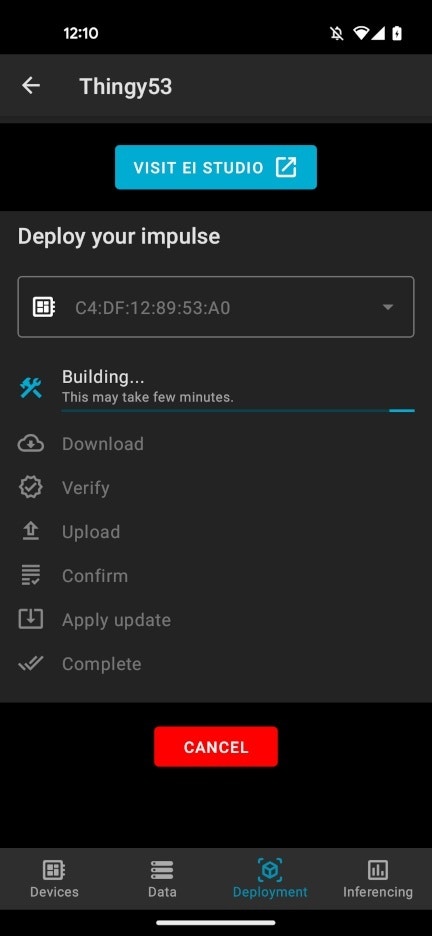

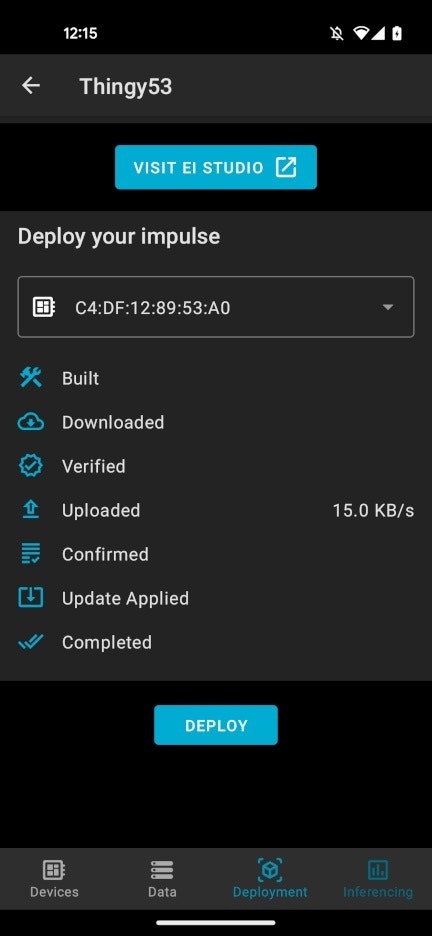
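As a rough intuition for what the MFE (Mel-filterbank energy) extractor does, it summarizes the spectrum of each window using filters spaced evenly on the mel scale, which packs filters more densely at low frequencies. The sketch below only computes where such filter centers would fall. It uses the widely used 2595 * log10(1 + f / 700) mel formula, and the filter count of 40 and the 0 to 8000 Hz range (the Nyquist limit for 16 kHz audio) are assumptions for illustration, not settings taken from this project.

```python
import math


def hz_to_mel(freq_hz):
    """Convert a frequency in Hz to the mel scale (common 2595 / 700 variant)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_center_frequencies(num_filters, low_hz, high_hz):
    """Return filter center frequencies in Hz, evenly spaced on the mel scale."""
    low_mel = hz_to_mel(low_hz)
    high_mel = hz_to_mel(high_hz)
    step = (high_mel - low_mel) / (num_filters + 1)
    return [mel_to_hz(low_mel + step * (i + 1)) for i in range(num_filters)]


if __name__ == "__main__":
    # Assumed values: 40 filters covering 0 Hz up to the 8 kHz Nyquist limit.
    centers = mel_center_frequencies(40, 0.0, 8000.0)
    print("first centers:", [round(c) for c in centers[:3]])
    print("last centers:", [round(c) for c in centers[-3:]])
```

The gaps between neighboring centers grow with frequency, which is the property that makes this kind of feature compact for non-voice sounds such as traffic or construction noise.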

Deploying the Model on the Thingy:53

Building and deploying an embedded application that includes machine learning used to involve quite a few steps. With the Thingy:53 and Edge Impulse, this becomes much easier. We just need to go to the Deployment tab and hit Deploy. The model will automatically start building.

Running Live Inference on the Thingy:53

The deployment we did earlier should have uploaded firmware with the new model to the Thingy:53. Hitting Start under Inference starts live classification on the device. I tested the application with new audio samples for each class.

A Network of Devices

In future versions, this project could be extended to also include features like:

- Noise level / decibel measurement

- Cloud connectivity via Bluetooth Mesh / Thread

- Solar panel charging
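The noise level measurement idea could start from something like the sketch below, which computes the RMS level of a block of samples in dBFS (decibels relative to full scale). It assumes the audio is available as signed 16-bit PCM values, and the function name `rms_dbfs` is hypothetical. Note that dBFS is not a calibrated sound pressure level: turning it into dB SPL would need a calibration against the sensitivity of the microphone, which is not covered here.

```python
import math

FULL_SCALE = 32768.0  # magnitude of the largest signed 16-bit PCM value (assumed format)


def rms_dbfs(samples):
    """Return the RMS level of 16-bit PCM samples in dB relative to full scale."""
    if not samples:
        raise ValueError("need at least one sample")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0:
        return float("-inf")  # digital silence has no finite level
    rms = math.sqrt(mean_square)
    return 20.0 * math.log10(rms / FULL_SCALE)


if __name__ == "__main__":
    half_scale_square_wave = [16384, -16384] * 8000  # 1 second at 16 kHz
    print(round(rms_dbfs(half_scale_square_wave), 2), "dBFS")
```

A level computed this way per window could be reported alongside the predicted class, so that a loud "Traffic" window can be told apart from a quiet one.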

Resources

Edge Impulse Project: https://studio.edgeimpulse.com/studio/146039

Nordic Thingy:53: https://www.nordicsemi.com/Products/Development-hardware/Nordic-Thingy-53

Edge Impulse Documentation: https://docs.edgeimpulse.com/

Sound Sources:

- Construction: 10 Hours of Construction Sound | Amazing Sounds with Peter Baeten (Sunville Sounds)

- Nature: Bird Watching 4K with bird sounds to relax and study | A day in the backyard (Sunville Sounds); Beautiful Afternoon In Nature With Singing Birds ~ Stories With Peter Baeten (Sunville Sounds); Gentle Rain Sounds on Window ~ Calming Rain For Sleeping & Relaxing | Rain Sounds with Peter Baeten (Sunville Sounds)

- Traffic: Busy Traffic Sound Effects (All Things Sound); Heavy Traffic Sound Effects | Bike Riding in Traffic Roads Sounds | Zoom Hn1 Indian Roads FreeSounds (To Know Everything); City Traffic Sounds for Sleep | Highway Ambience at Night | 10 Hours ASMR White Noise (Nomadic Ambience)