Introduction

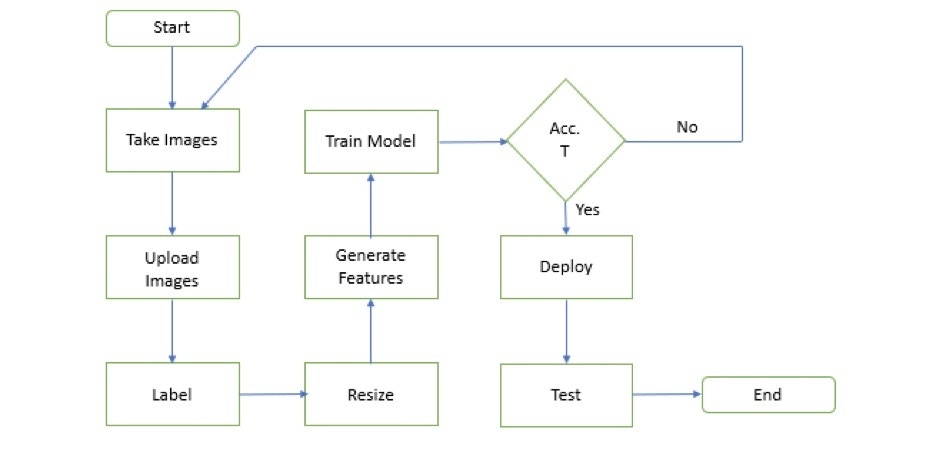

We use metal objects made from iron and steel in our everyday lives. When these materials are exposed to moisture, they are at risk of corrosion. Corrosion is a process that degrades the surface of metals through chemical and electrochemical reactions driven by environmental conditions. The resulting loss of metal can reduce the efficiency of a part in its end-use application, shorten its service life, and increase maintenance costs. Studying how corrosion grows helps in developing preventative strategies to avert such losses. In this project, the idea is to demonstrate corrosion detection using TinyML.

In heavy industries such as transportation, mining, construction, and shipbuilding, corrosion remains a serious risk to operational safety, and the cost of inadequate protection against it can be huge. Many of these industries rely on visual inspection of industrial environments by humans. However, these environments can be remote or hazardous, putting inspectors at risk. Additionally, detecting and analyzing different types of corrosion is subject to human interpretation. Using deep learning, it is possible to reduce and, in many cases, even remove that subjectivity. By using vehicles such as robots or drones, it is possible to automate the inspection of such environments, which can reduce risks to humans as well as control costs.

A promising way to overcome the aforementioned drawbacks is to develop an artificial intelligence-based algorithm that can recognize corrosion damage in a series of photographic images. In this demonstration, we’re proposing to build a deep learning model using an edge AI device based upon the Seeed Studio reTerminal, integrated with a webcam so that you can visualize corroded parts. Since a small image that still shows the important features takes less time to process, resizing large, high-quality images to smaller ones might be an option for end users. This diagram shows the process in more detail.

reTerminal Configuration

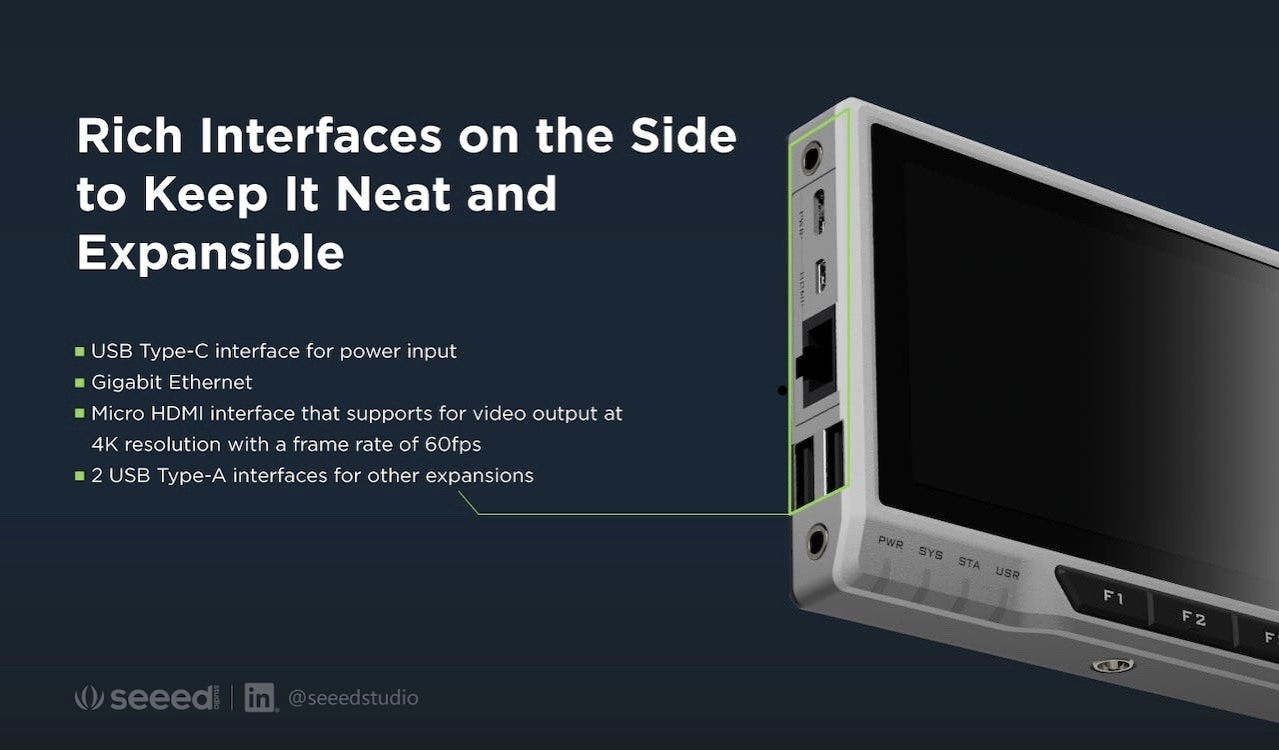

The reTerminal is an HMI device built around the Raspberry Pi Compute Module 4 (CM4) with a 1.5GHz quad-core Cortex-A72 CPU and a 5-inch IPS capacitive multi-touch screen with a resolution of 1280 x 720. It has enough RAM (4GB) to multitask and enough eMMC storage (32GB) to install an operating system, allowing for quick startup times and an enjoyable overall experience. It has dual-band 2.4GHz/5GHz Wi-Fi and Bluetooth 5.0 BLE for wireless networking.

Hardware Required

You need to prepare the following hardware before getting started with reTerminal:

- reTerminal

- Ethernet cable or Wi-Fi connection

- Power adapter (5V/3A)

- USB Type-C cable

Software Set Up

reTerminal comes with Raspberry Pi OS pre-installed out-of-the-box. Be sure to go through the out-of-box setup process documented here to prepare your reTerminal for use. Once you have set up the hardware, the next step is to upload images into the Edge Impulse Studio and begin training a machine learning model.

Model Development

Dataset

Rust is the most common form of corrosion. Rusting is the oxidation of iron in the presence of air and moisture, and it occurs on the surfaces of iron and its alloys, such as steel. A dataset of curated images, labeled as CORROSION and NO CORROSION, was collected from [2]. The figure below shows Corrosion and No Corrosion images of a steel plate. In total, 150 images were used for both classes, and they were labeled in the Studio as Rust and No Rust. Information on how to upload images into the Studio can be found here.

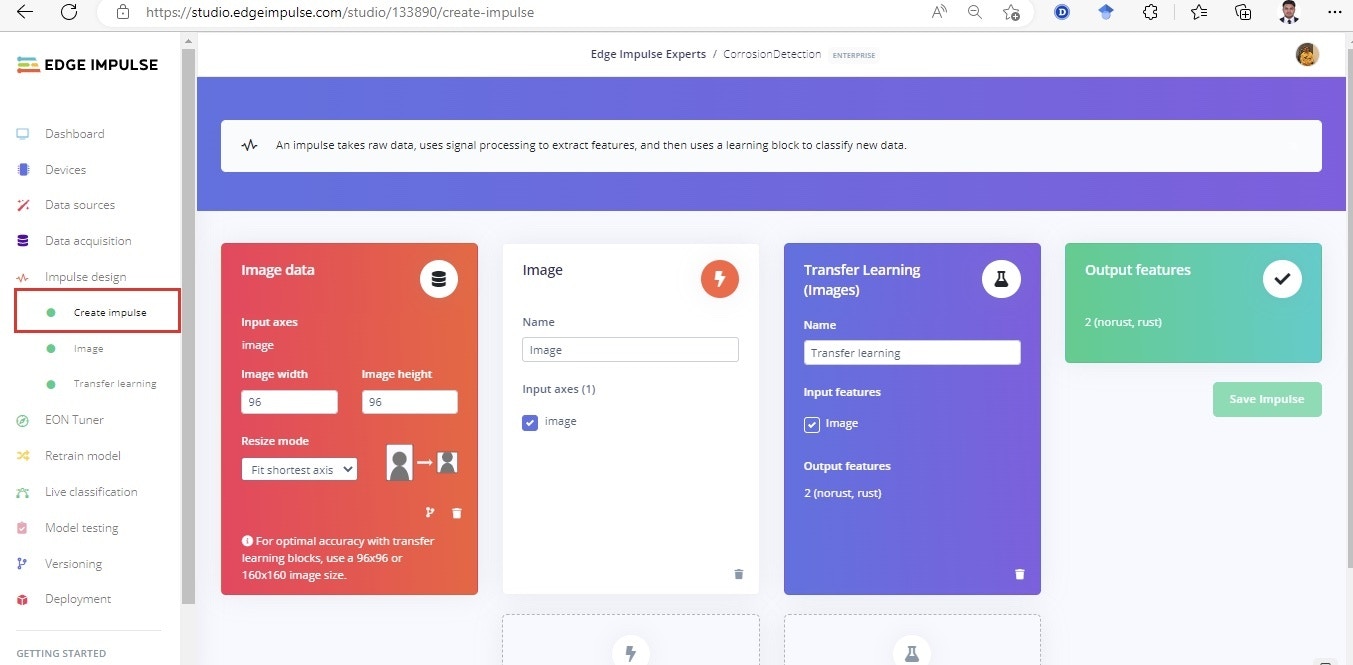

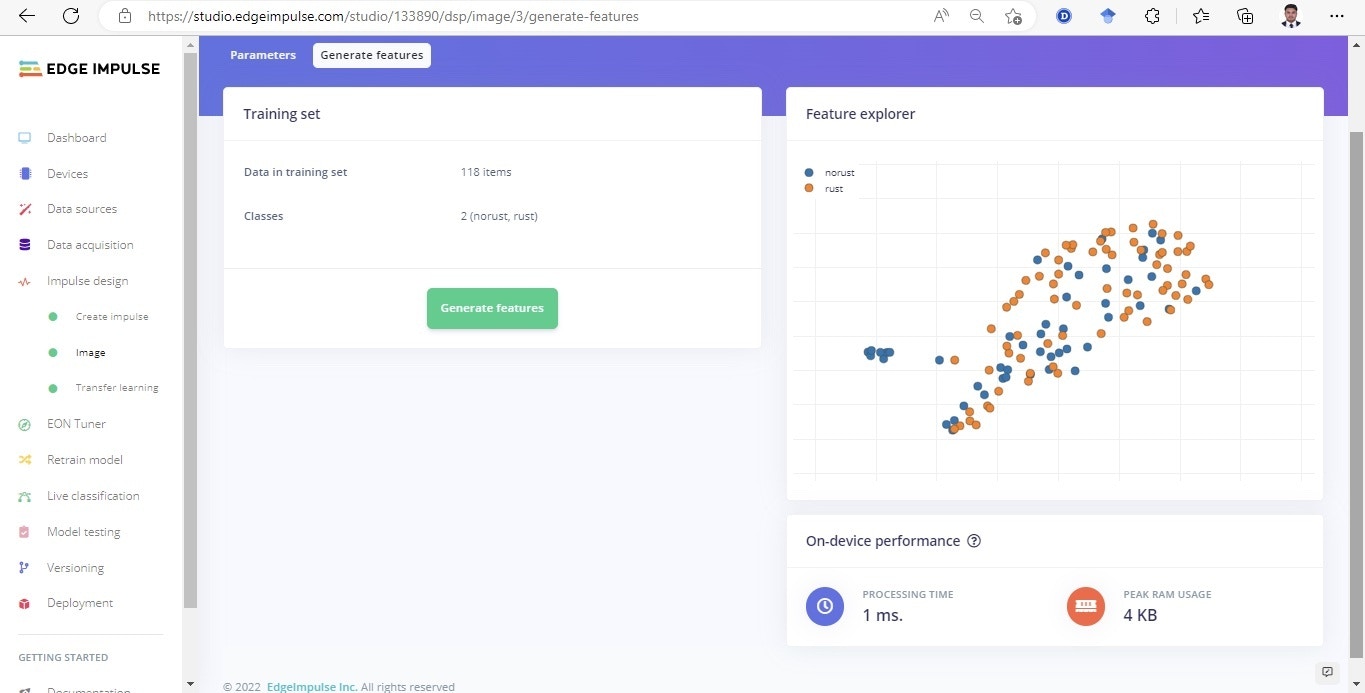

Impulse Design

Once the dataset is uploaded, we are ready to train our model. This requires two important features: a processing block and a learning block. Documentation on Impulse Design can be found here. We first click “Create Impulse”. Here, set the image width and height to 96x96 and the Resize mode to Squash. The Processing block is set to “Image” and the Learning block is “Transfer Learning (Images)”. Click “Save Impulse” to use this configuration, as shown in the figure below. We have used a 96x96 image size to lower the RAM usage, as presented in [3]. Higher-resolution images increase RAM usage when running the model.

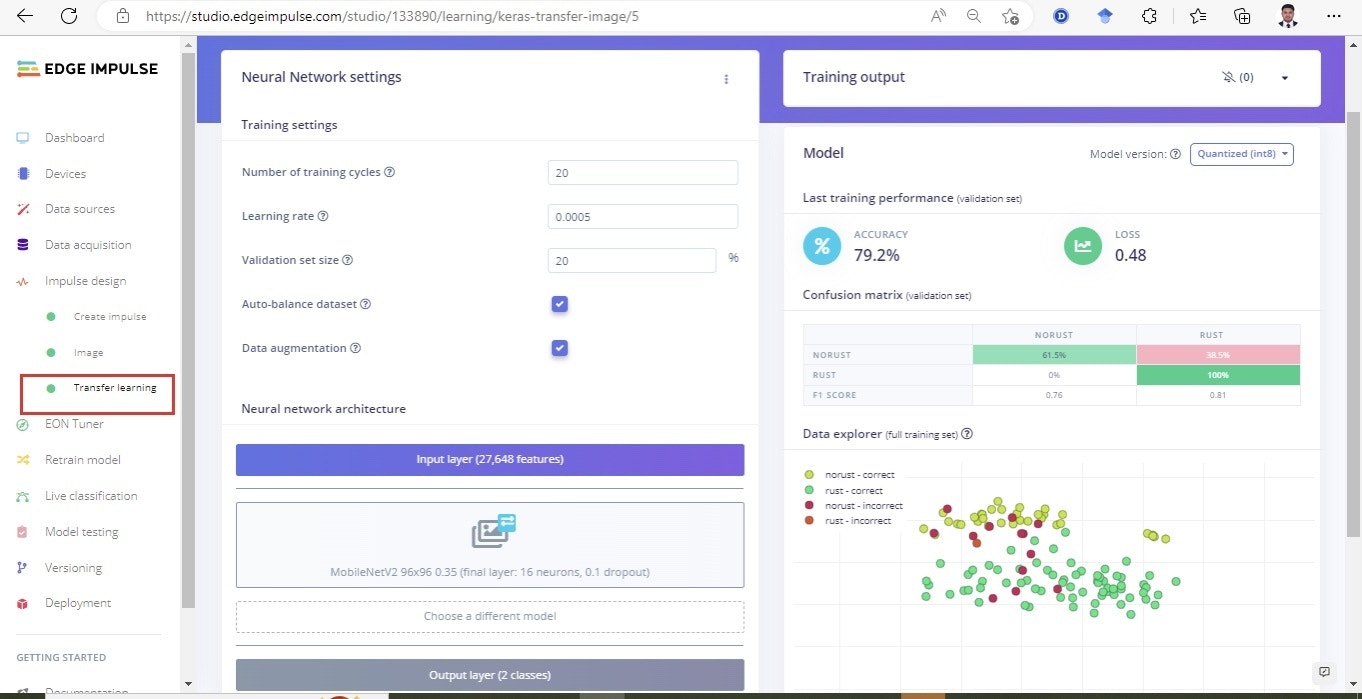
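To make the 96x96 Squash setting more concrete, here is a minimal pure-Python sketch. It is not Edge Impulse's actual resize code: the nearest-neighbour sampling, the hypothetical 640x480 frame, and the helper names `squash_resize` and `input_buffer_bytes` are all assumptions made for illustration. It shows that squashing ignores the aspect ratio and that the raw input buffer grows with the square of the image side.

```python
def squash_resize(pixels, out_w, out_h):
    """Nearest-neighbour resize that ignores aspect ratio ("squash").

    `pixels` is a list of rows, each row a list of (r, g, b) tuples.
    """
    in_h = len(pixels)
    in_w = len(pixels[0])
    resized = []
    for y in range(out_h):
        src_y = y * in_h // out_h  # map output row to a source row
        row = [pixels[src_y][x * in_w // out_w] for x in range(out_w)]
        resized.append(row)
    return resized


def input_buffer_bytes(width, height, channels=3, bytes_per_value=1):
    """Size of the raw input buffer only, not the model's total RAM use."""
    return width * height * channels * bytes_per_value


# Hypothetical 640x480 RGB frame filled with a simple gradient.
frame = [[(x % 256, y % 256, 0) for x in range(640)] for y in range(480)]
small = squash_resize(frame, 96, 96)
print("resized to", len(small[0]), "x", len(small))

# Compare the 8-bit input buffer size at a few square resolutions.
for side in (96, 160, 320):
    print(side, "x", side, "->", input_buffer_bytes(side, side), "bytes")
```

The input buffer is only one part of the memory a model needs, so these numbers are a lower bound and not an estimate of the RAM figure reported by the Studio.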

Building and Training the Model

To train the model, the MobileNetV2 96x96 0.2 architecture was used for 60 epochs with a learning rate of 0.005, with the dataset split into Training, Validation, and Testing sets. After applying dynamic quantization from 32-bit floating point to 8-bit integer, the resulting optimized model showed a significant reduction in size (346.3K). The on-board inference time was reduced to 93 ms and RAM use was limited to 585.6K, with a post-training validation accuracy of 79.2%.
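The idea behind converting 32-bit floats to 8-bit integers can be sketched as follows. This is a generic affine (scale and zero-point) quantization written for illustration, not the exact scheme the Studio applies; the function names and the sample weights are hypothetical.

```python
def quantize_int8(values):
    """Map floats to int8 using an affine scale and zero point."""
    lo = min(min(values), 0.0)  # the representable range must include 0.0
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 if all values are 0
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from the int8 representation."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical float32 weights.
weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

print("int8 values:", q)
print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
print("bytes as float32:", 4 * len(weights), "bytes as int8:", len(weights))
```

Each value shrinks from four bytes to one, at the cost of a small rounding error bounded by the scale, which is why quantized models are smaller and usually slightly less accurate.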

Deploying to the reTerminal

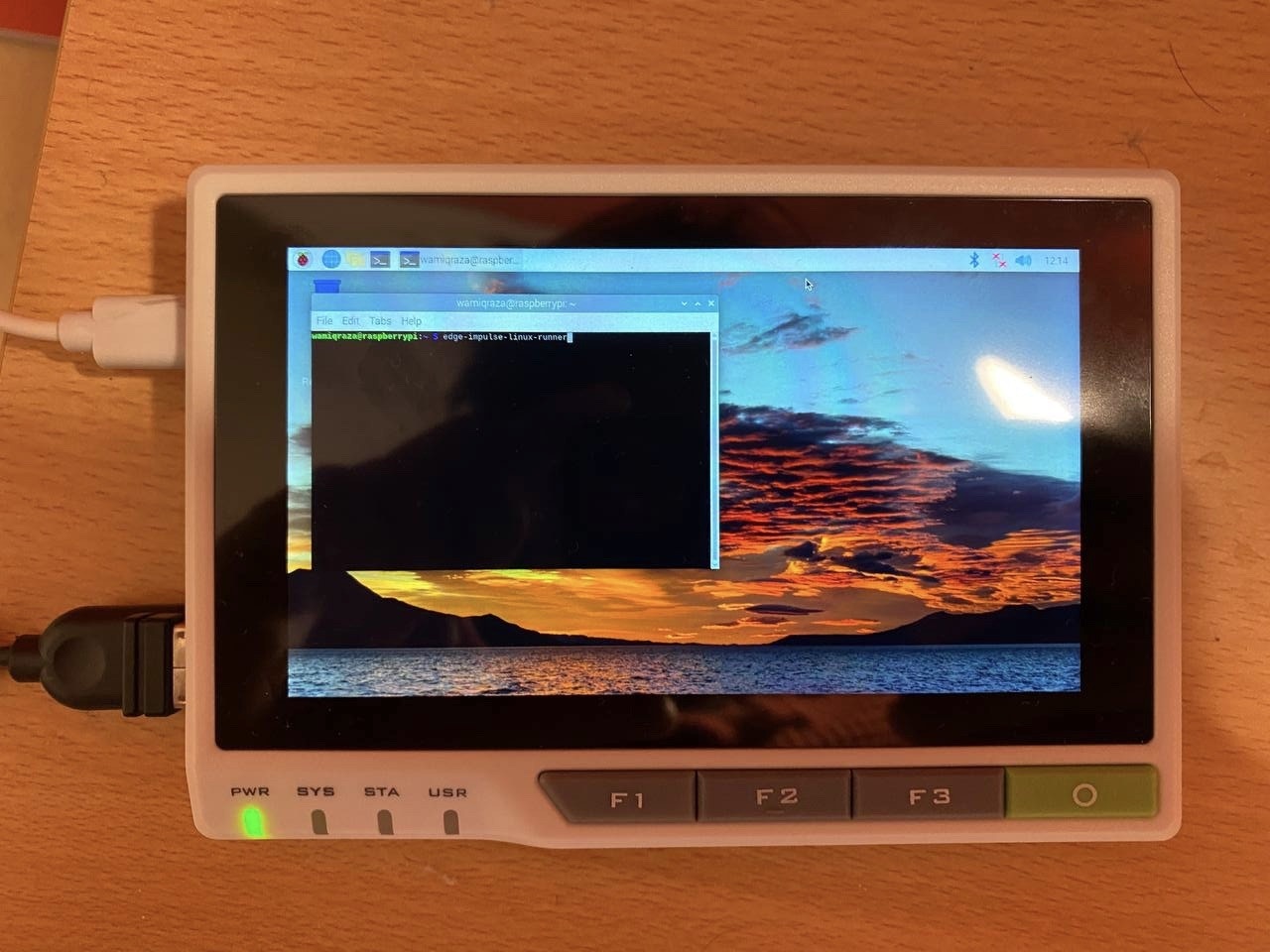

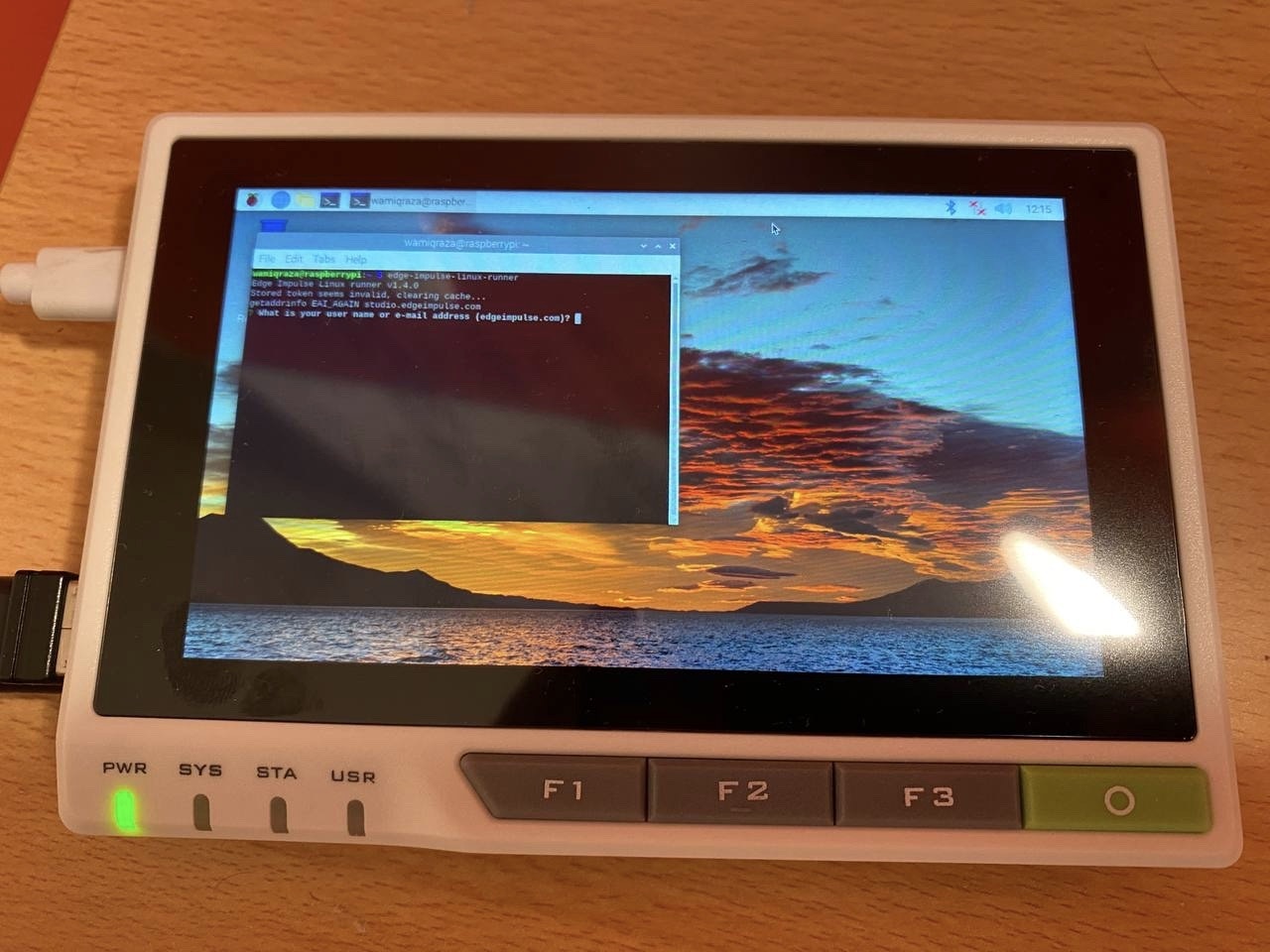

In order to deploy a model onto the reTerminal, the Edge Impulse CLI will be needed. The installation process is described in the documentation here, but basically comes down to a few simple steps, one of which involves raspi-config.

When the model training is finished and you’re satisfied with the accuracy on your Validation dataset (which is automatically split from the Training data), you can test the model in Live classification before deploying it on the device. In this case it is as simple as running edge-impulse-linux-runner.