Introduction

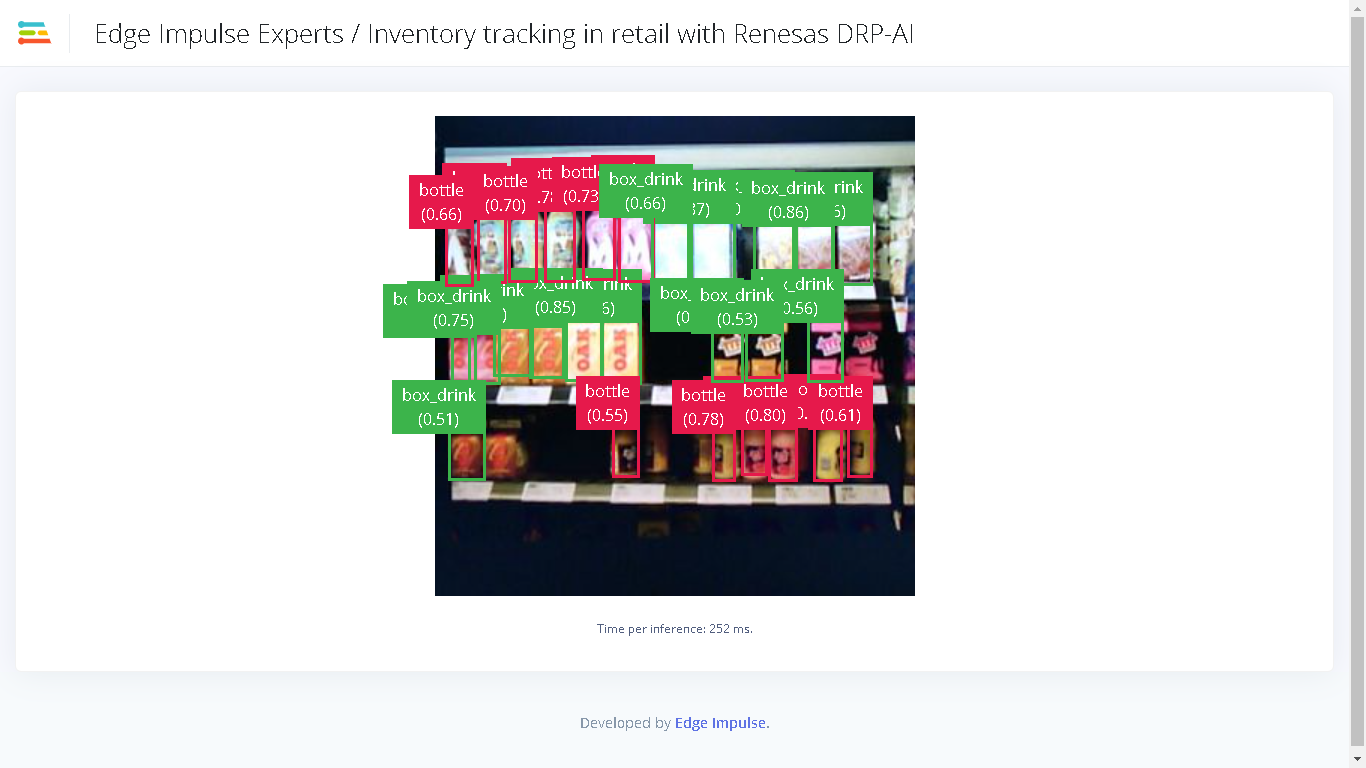

The primary method of inventory management in retail, warehousing, manufacturing, and logistics is simply counting parts or stock. This procedure ensures that the inventory on the sales floor or in the warehouse meets requirements for production, sale, or spare capacity. In retail, it is done to ensure there are enough goods available for sale to consumers. Even with many technological advancements and improved production and capacity planning, physical inventory counting is still required in the modern world. It is a time-consuming and tedious process, but machine learning and computer vision can help decrease the burden.

To demonstrate automated inventory counting, I trained a machine learning model that can identify bottles and box/carton drinks on store shelves, and their counts are displayed in a Web application. For the object detection component, I used Edge Impulse to label my dataset, train a YOLOv5 model, and deploy it to the Renesas RZ/V2L Evaluation Board Kit. You can find the public Edge Impulse project here: Inventory Tracking in Retail with Renesas DRP-AI.

Dataset Preparation

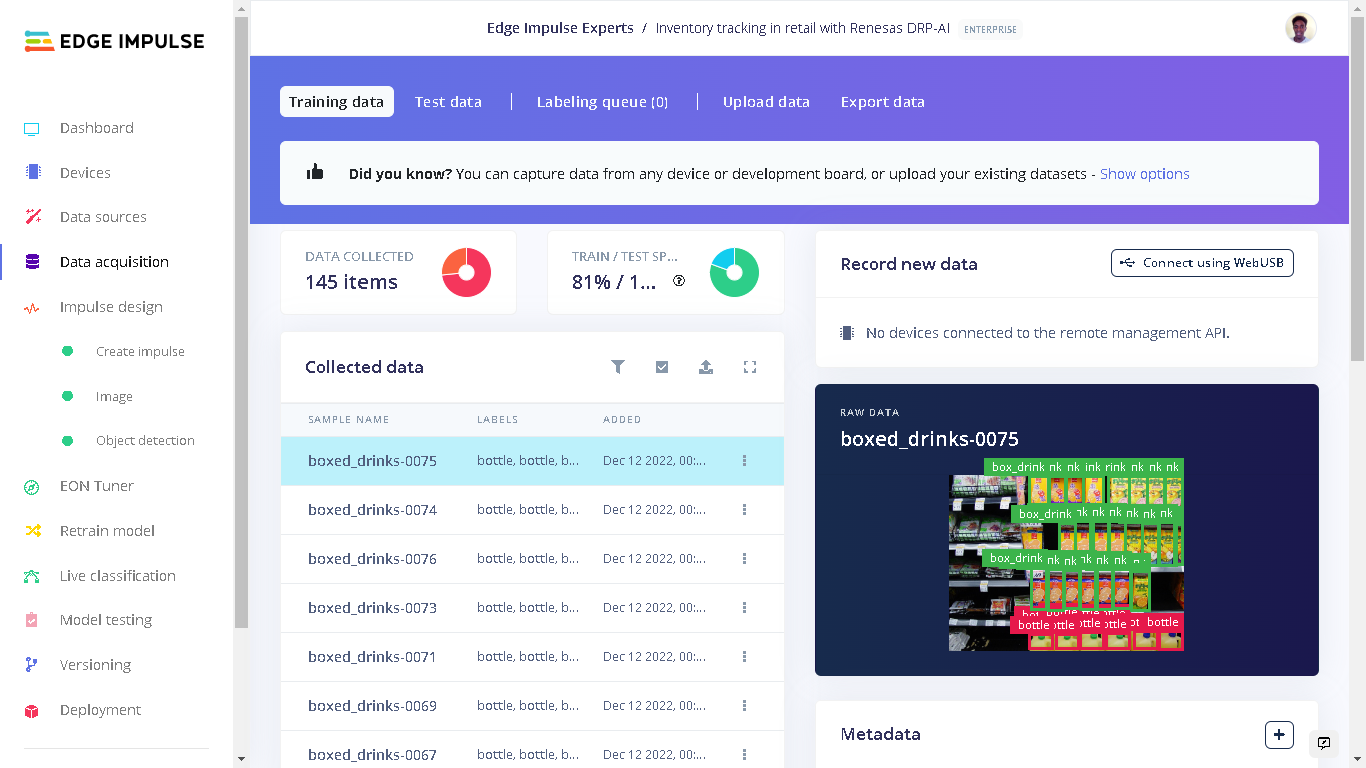

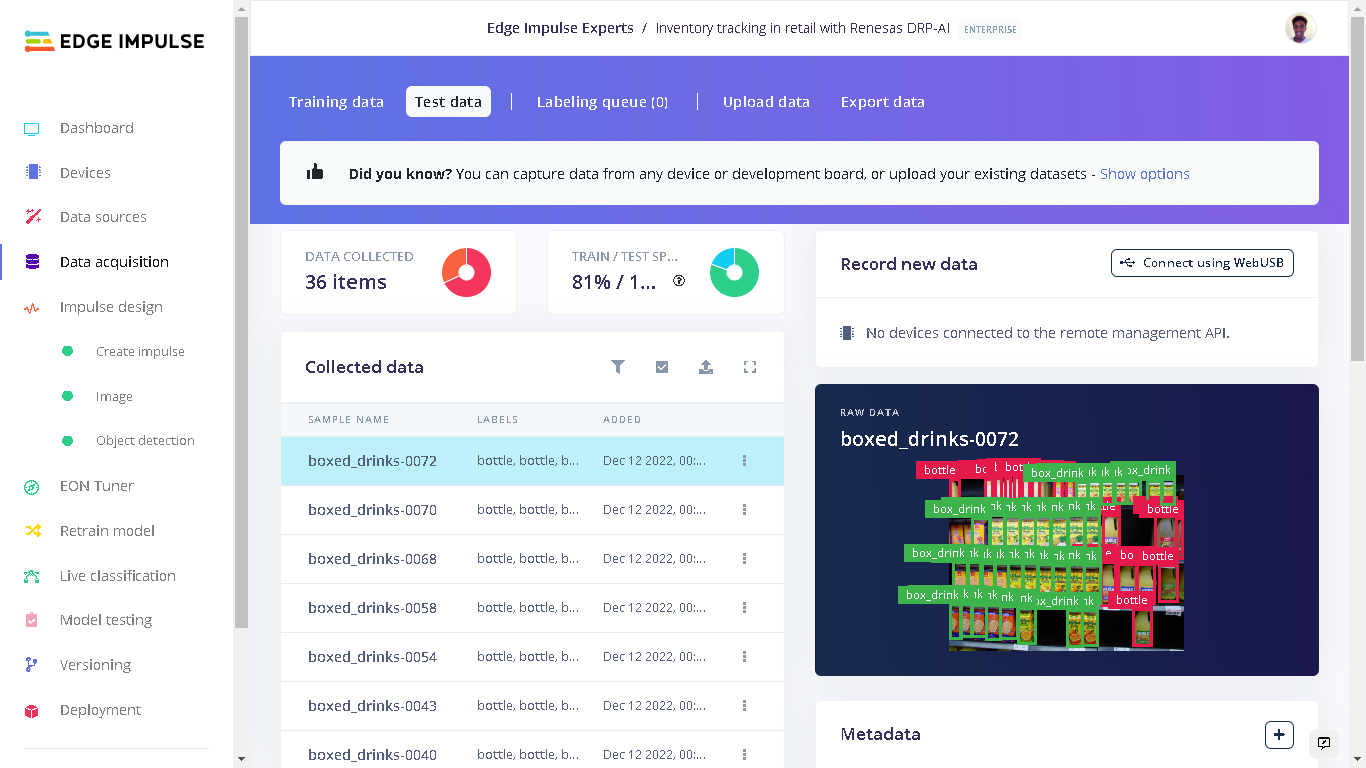

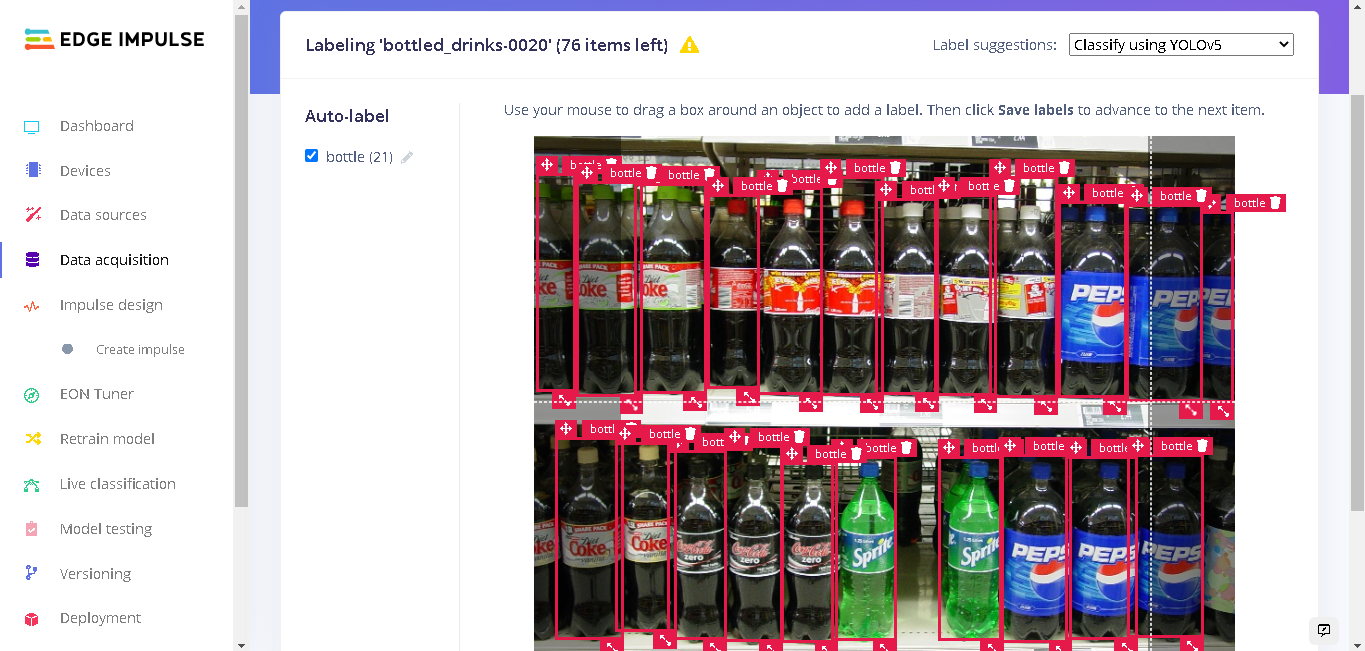

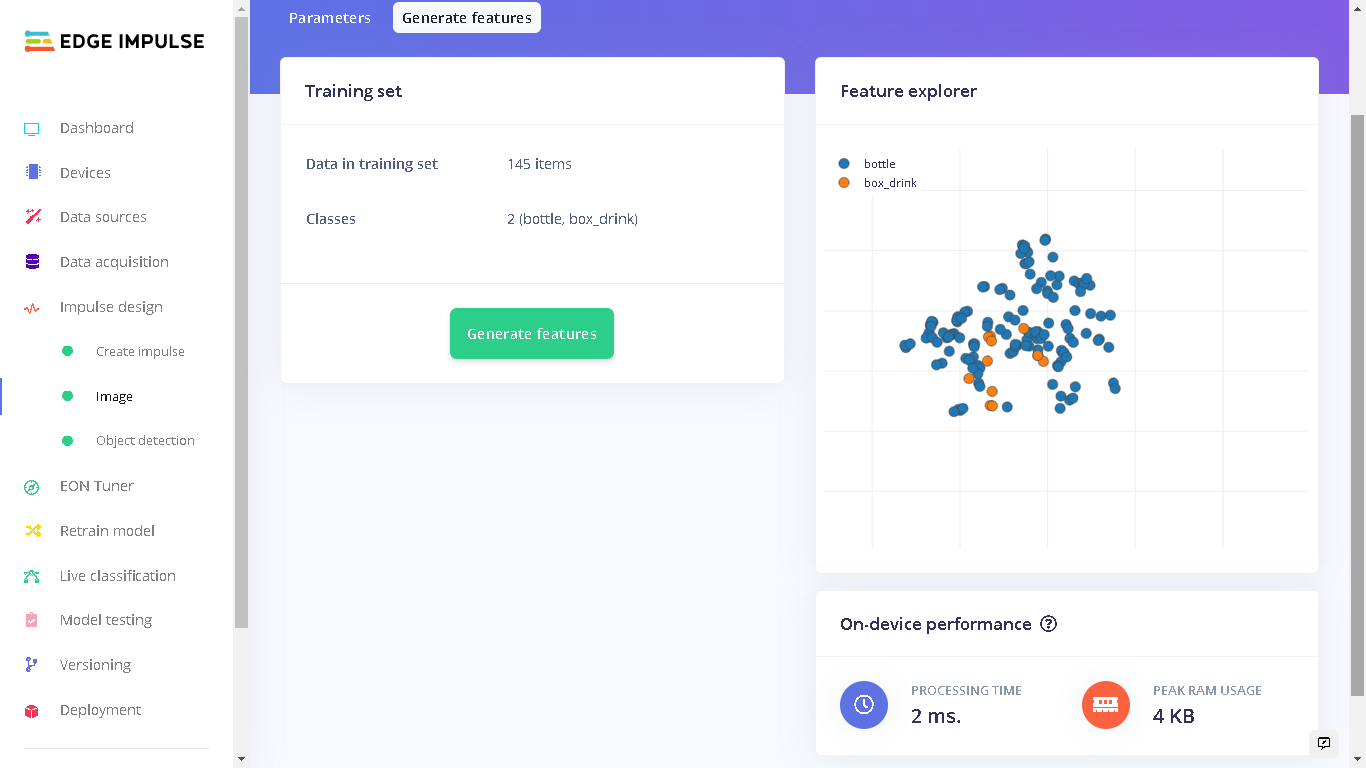

For the dataset, I used the SKU110K image dataset, which focuses on object detection in densely packed scenes where images contain many objects. From it, I chose two kinds of items: bottles and boxed/carton drinks. These objects are not too similar to each other, and they are not as densely packed as some of the other items in the dataset. This is a proof of concept using only two items, but the dataset includes many more that could also be used. In total, I had 145 images for training and 36 images for testing, roughly an 80/20 split. That is certainly not a huge amount, but it is enough to get going with. I simply called the two classes bottle and box_drink.

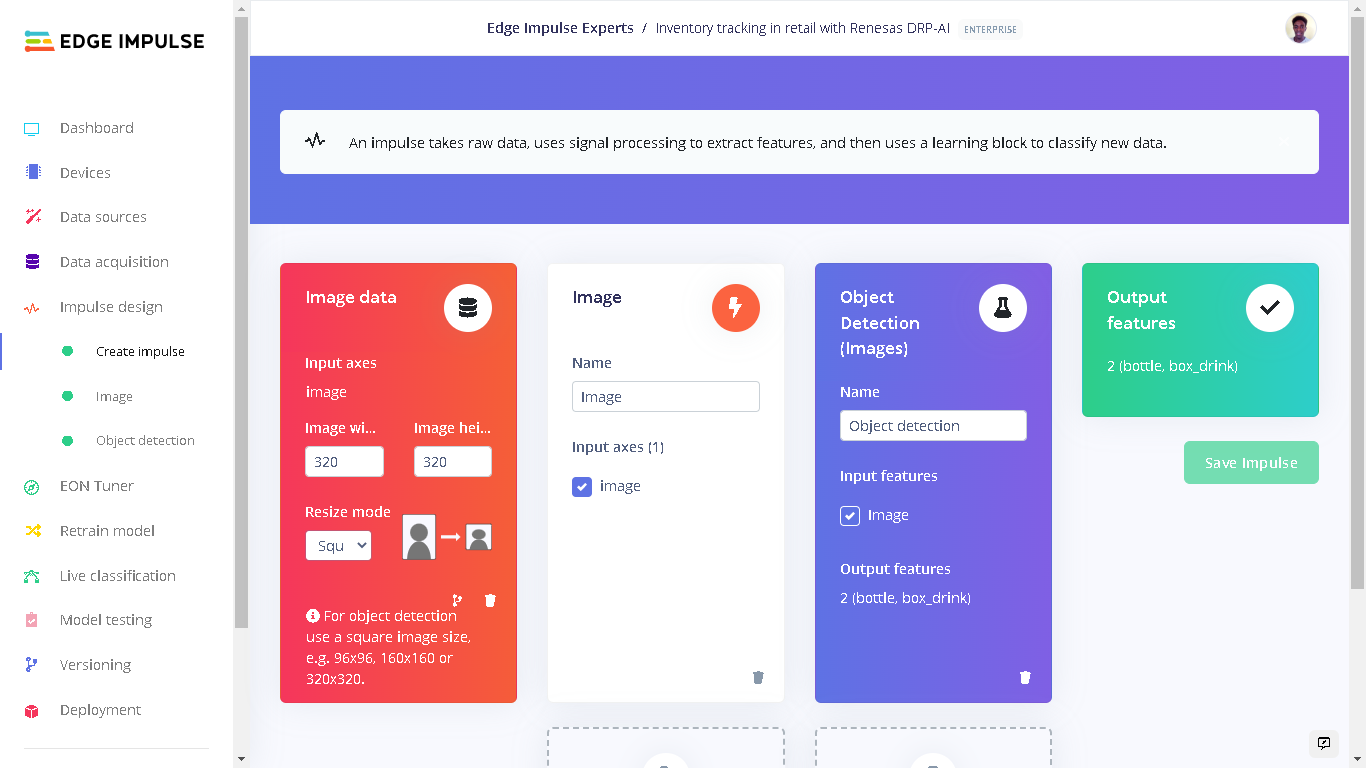

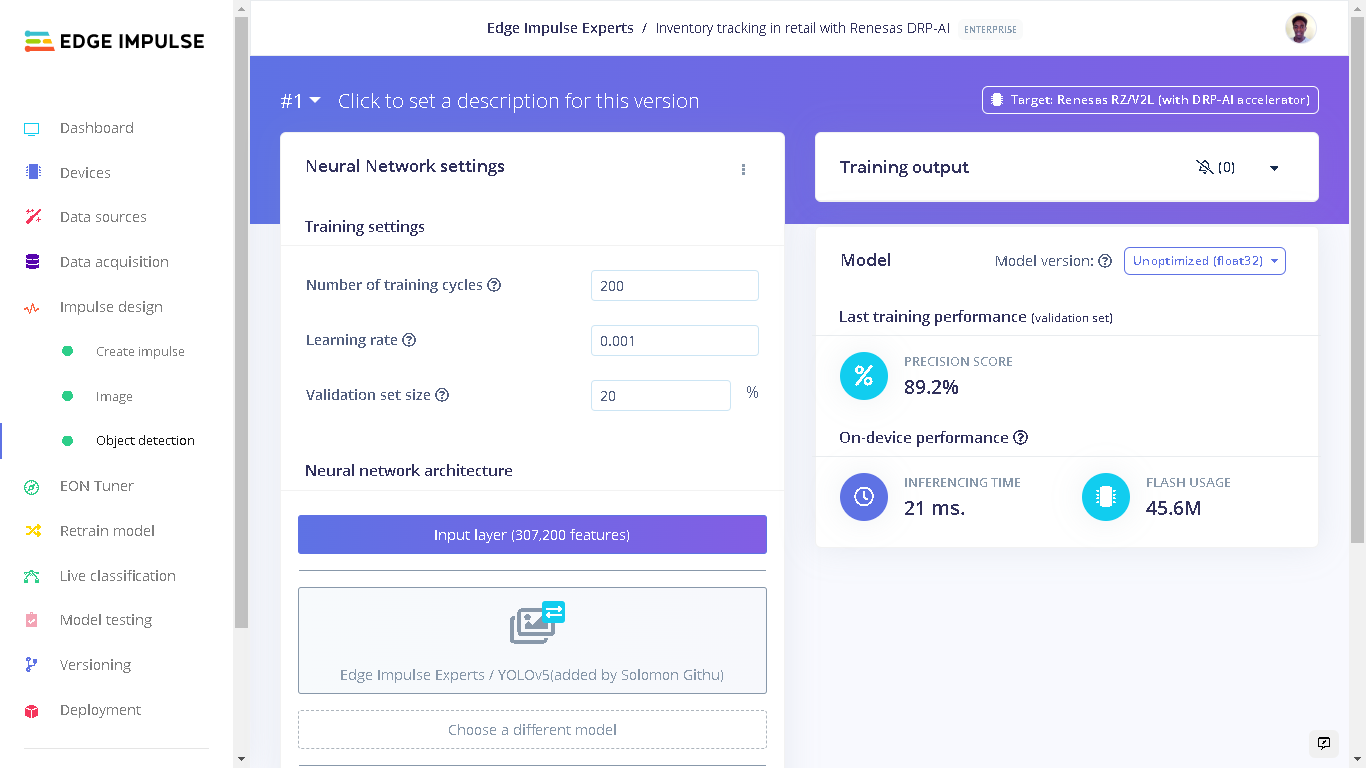

Impulse Design

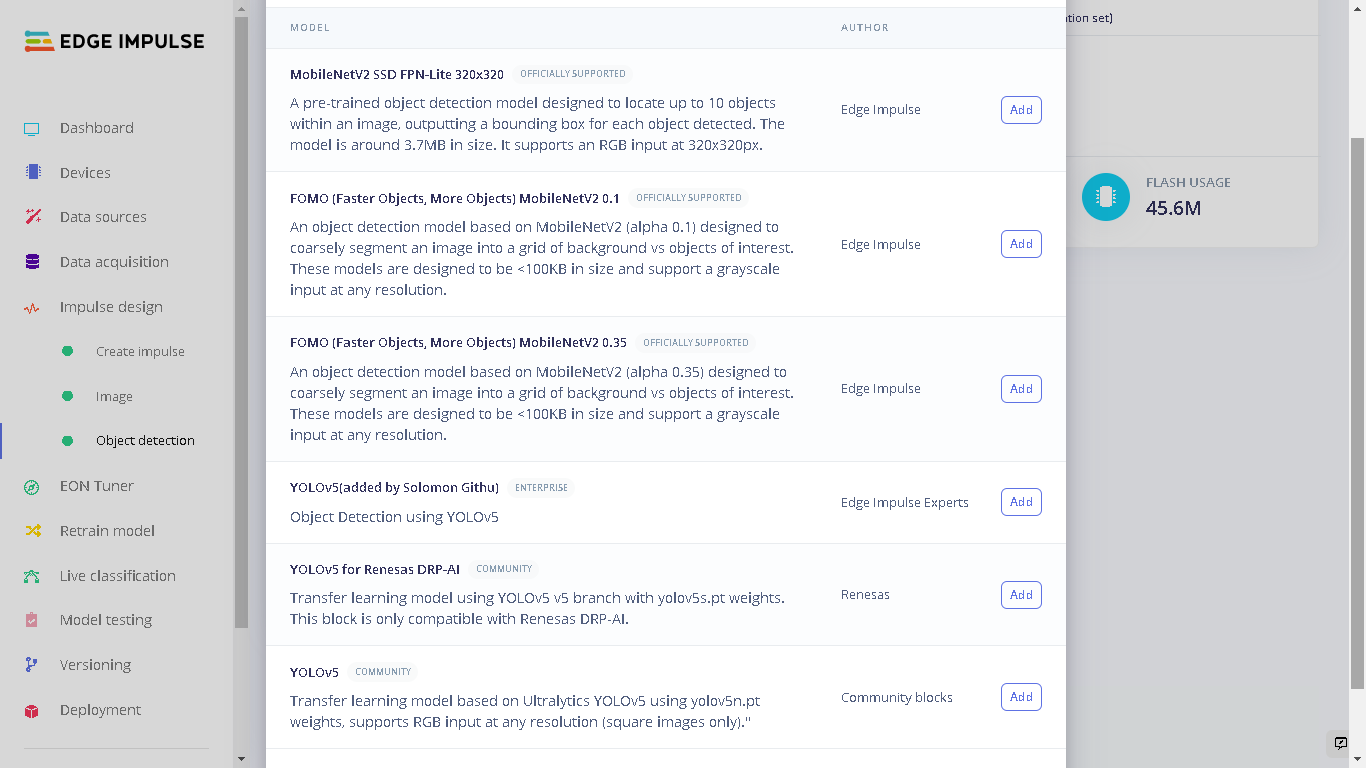

An Impulse is a machine learning pipeline that specifies the type of input data, extracts features from that data, and finally includes a neural network that trains on those features. For my YOLOv5 model, I used an image width and height of 320 pixels with the “Resize mode” set to “Squash”. The processing block was set to “Image” and the learning block was set to “Object Detection (Images)”.
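To make the effect of the “Squash” setting concrete, here is a minimal sketch. It assumes that squashing stretches each axis independently to 320 pixels without preserving the aspect ratio. The helper names and the 1280x720 frame size are hypothetical and not taken from the project; the sketch only shows how a box predicted in the 320x320 model space could be mapped back to the original frame.

```python
from typing import Tuple

TARGET_SIZE = 320  # impulse input width and height, as set in the Impulse


def squash_scale(orig_w: int, orig_h: int, target: int = TARGET_SIZE) -> Tuple[float, float]:
    """Return the independent x and y scale factors of a squash resize."""
    return target / orig_w, target / orig_h


def box_to_original(box: Tuple[int, int, int, int], orig_w: int, orig_h: int,
                    target: int = TARGET_SIZE) -> Tuple[int, int, int, int]:
    """Map an (x, y, width, height) box from model space back to the source frame."""
    x, y, w, h = box
    return (
        round(x * orig_w / target),
        round(y * orig_h / target),
        round(w * orig_w / target),
        round(h * orig_h / target),
    )


if __name__ == "__main__":
    # Hypothetical 1280x720 camera frame: x shrinks 4x, y shrinks 2.25x
    print(squash_scale(1280, 720))
    print(box_to_original((80, 160, 40, 40), 1280, 720))
```

Because the two axes are scaled by different factors, a square box in model space generally becomes a rectangle in the original frame.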

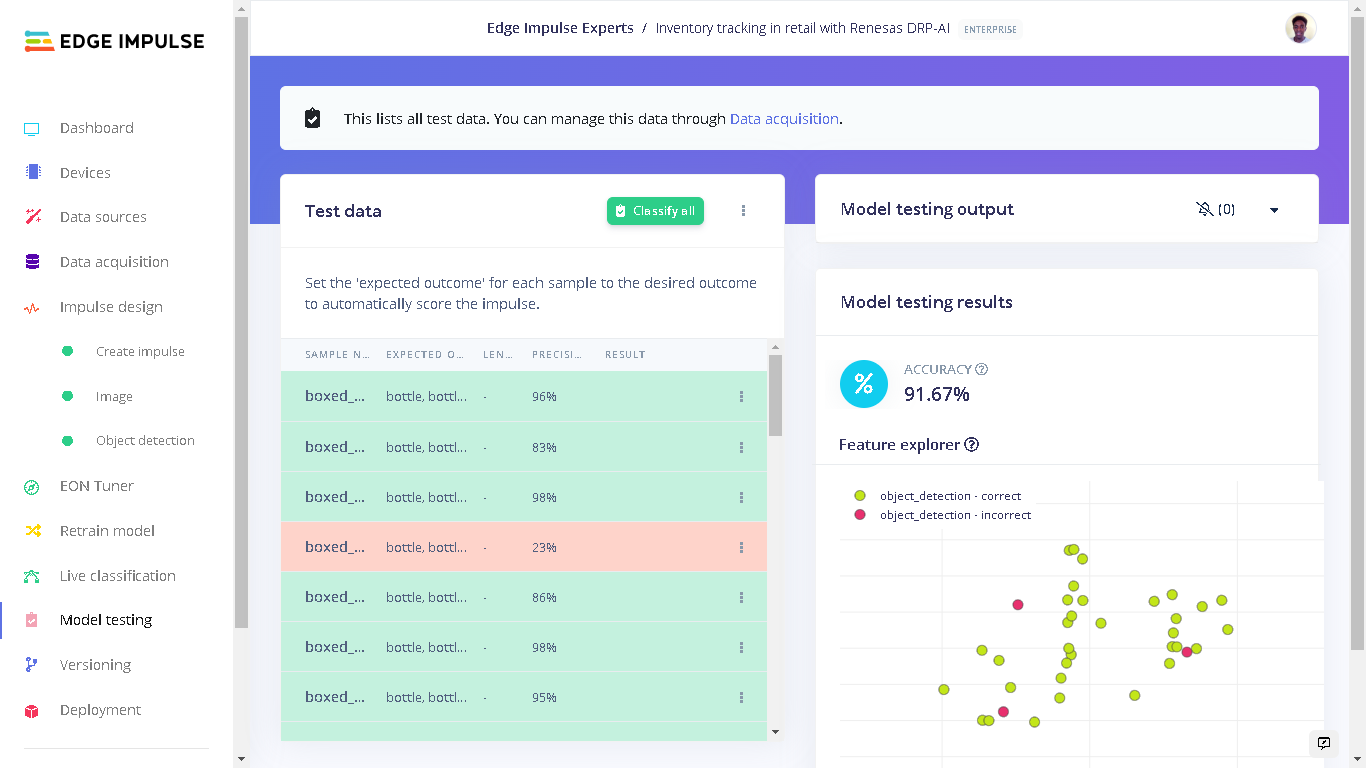

Model Testing

After training a model, we need to test it with some unseen (test) data. When we uploaded our data earlier, the images that were set aside in the Test category were not used during the training cycle, so the model has never seen them. Now they will be used. In my case, the model had an accuracy of 91% on this Test data. This accuracy is the percentage of all test samples with a precision score above 90%.
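The accuracy figure described above can be read as a simple ratio. The sketch below is only an illustration of that definition, not Edge Impulse's own implementation; the function name and the sample precision scores are made up.

```python
from typing import Sequence


def share_above_threshold(precisions: Sequence[float], threshold: float = 0.90) -> float:
    """Fraction of samples whose precision score is strictly above `threshold`."""
    if not precisions:
        raise ValueError("need at least one sample")
    passed = sum(1 for p in precisions if p > threshold)
    return passed / len(precisions)


if __name__ == "__main__":
    # Hypothetical per-image precision scores for four test images
    scores = [1.0, 0.95, 0.80, 0.92]
    print(share_above_threshold(scores))  # three of the four exceed 0.90
```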

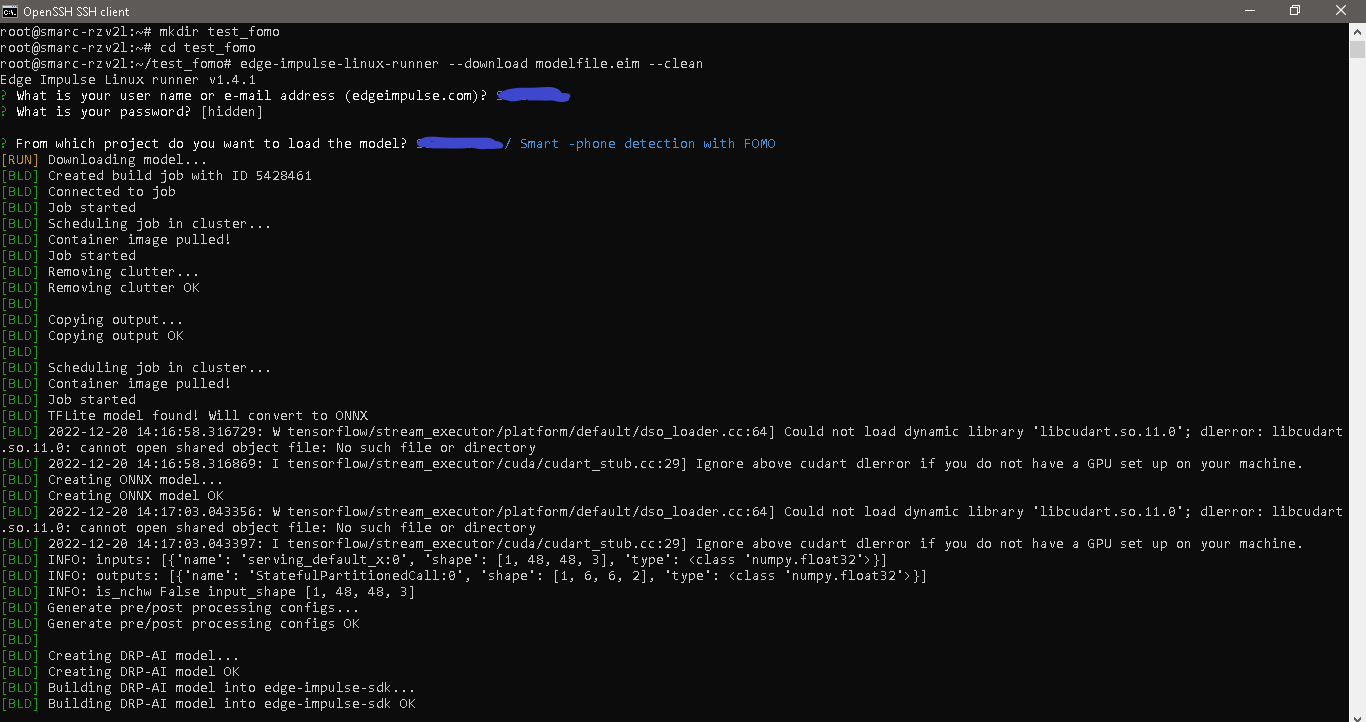

Deploying to Renesas RZ/V2L Evaluation Kit

The Renesas Evaluation Kit comes with the RZ/V2L board and a 5-megapixel Google Coral Camera. To set up the board, Edge Impulse has prepared a guide that shows how to prepare the Linux image, install the Edge Impulse CLI, and finally connect to the Edge Impulse Studio.

To run the model locally on the board, we can use the command edge-impulse-linux-runner, which lets us log in to our Edge Impulse account and select the project.

Alternatively, we can also download an executable of the model which contains the signal processing and ML code, compiled with optimizations for the processor, plus a very simple IPC layer (over a Unix socket). This executable is called an .eim model.

To take this route, first create a directory on the board and navigate into it.

Then download the .eim model using edge-impulse-linux-runner with its --download option.

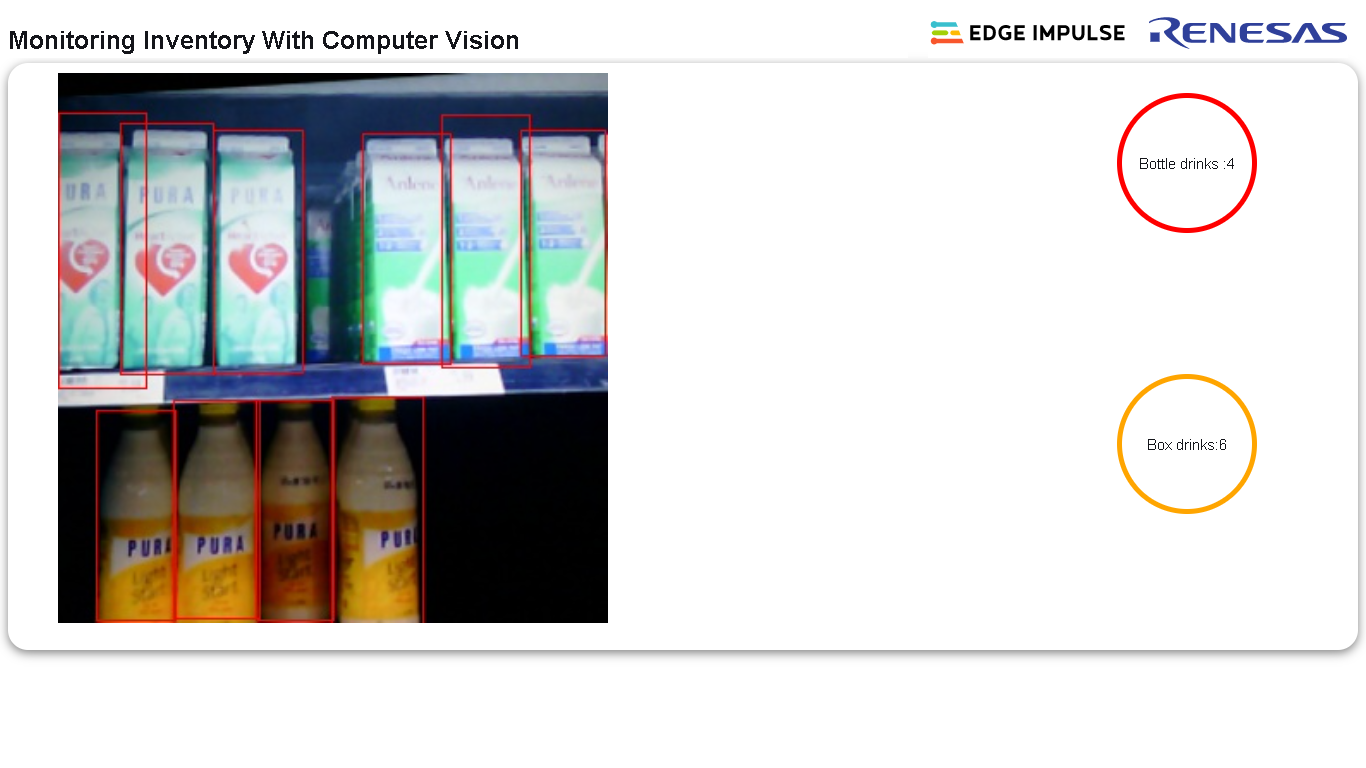

A Smart Application to Count Shelf Items

Using the .eim executable and the Edge Impulse Linux Python SDK, I developed a Web application using Flask that counts the number of bottles and box drinks in a camera frame. The counts are then displayed on a webpage in real time.
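The counting step at the heart of such an application can be sketched in a few lines of plain Python. This is not the application's actual code. It assumes each frame's result is a dictionary with a list of detections under result["bounding_boxes"], each carrying a "label" and a confidence "value", which is the shape I understand the SDK's image runner to return. The function name, the 0.5 confidence cut-off, and the sample frame are hypothetical.

```python
from collections import Counter
from typing import Dict

LABELS = ("bottle", "box_drink")  # class names from the dataset section


def count_items(result: dict, min_confidence: float = 0.5) -> Dict[str, int]:
    """Count detections per label in one frame's classification result."""
    boxes = result.get("result", {}).get("bounding_boxes", [])
    counts = Counter(bb["label"] for bb in boxes if bb["value"] >= min_confidence)
    # Always report both classes, so the webpage can show a zero count
    return {label: counts.get(label, 0) for label in LABELS}


if __name__ == "__main__":
    # Made-up result for a single frame (assumed dictionary shape)
    frame = {
        "result": {
            "bounding_boxes": [
                {"label": "bottle", "value": 0.93, "x": 10, "y": 20, "width": 30, "height": 80},
                {"label": "bottle", "value": 0.88, "x": 60, "y": 22, "width": 28, "height": 78},
                {"label": "box_drink", "value": 0.71, "x": 120, "y": 30, "width": 40, "height": 60},
                {"label": "box_drink", "value": 0.31, "x": 200, "y": 35, "width": 38, "height": 58},
            ]
        }
    }
    print(count_items(frame))
```

A Flask route could return this dictionary as JSON for the webpage to poll. Filtering on the confidence value keeps weak detections from inflating the counts.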