Introduction

Despite its current limitations, Artificial Intelligence is a useful tool, and many tasks can already be automated with it. As more tasks are automated, staff are freed up to spend more time on what really matters to businesses: their customers. The retail industry is a prime example of an industry that can be automated through the use of Artificial Intelligence, the Internet of Things, and Robotics.

Solution

Computer Vision is a popular field of Artificial Intelligence with many possible applications. This project is a proof of concept that shows how Computer Vision can be used to create an automated checkout process using the NVIDIA Jetson Nano and the Edge Impulse platform.

Hardware
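To make the checkout idea concrete, here is a minimal sketch of what could happen after the model recognises each item, assuming the classifier returns one label per scanned item. The labels are the four items used in live testing later in this project; the `PRICES` table (in cents) and the `checkout_total` function are hypothetical and not part of Edge Impulse.

```python
from collections import Counter

# Hypothetical unit prices in cents; a real store would load these from its product database.
PRICES = {"apple": 40, "banana": 25, "broccoli": 110, "chilli": 15}


def checkout_total(labels):
    """Count each recognised item and return (itemised counts, total in cents)."""
    counts = Counter(label.lower() for label in labels)
    unknown = set(counts) - set(PRICES)
    if unknown:
        # Refuse to total a basket containing items we cannot price.
        raise ValueError(f"No price for: {sorted(unknown)}")
    total = sum(PRICES[item] * n for item, n in counts.items())
    return dict(counts), total


if __name__ == "__main__":
    counts, total = checkout_total(["Apple", "Banana", "Apple", "Chilli"])
    print(counts, total)
```

Prices are kept in integer cents so the total never suffers from floating-point rounding.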

- NVIDIA Jetson Nano

- USB webcam

Platform

- Edge Impulse

Project Setup

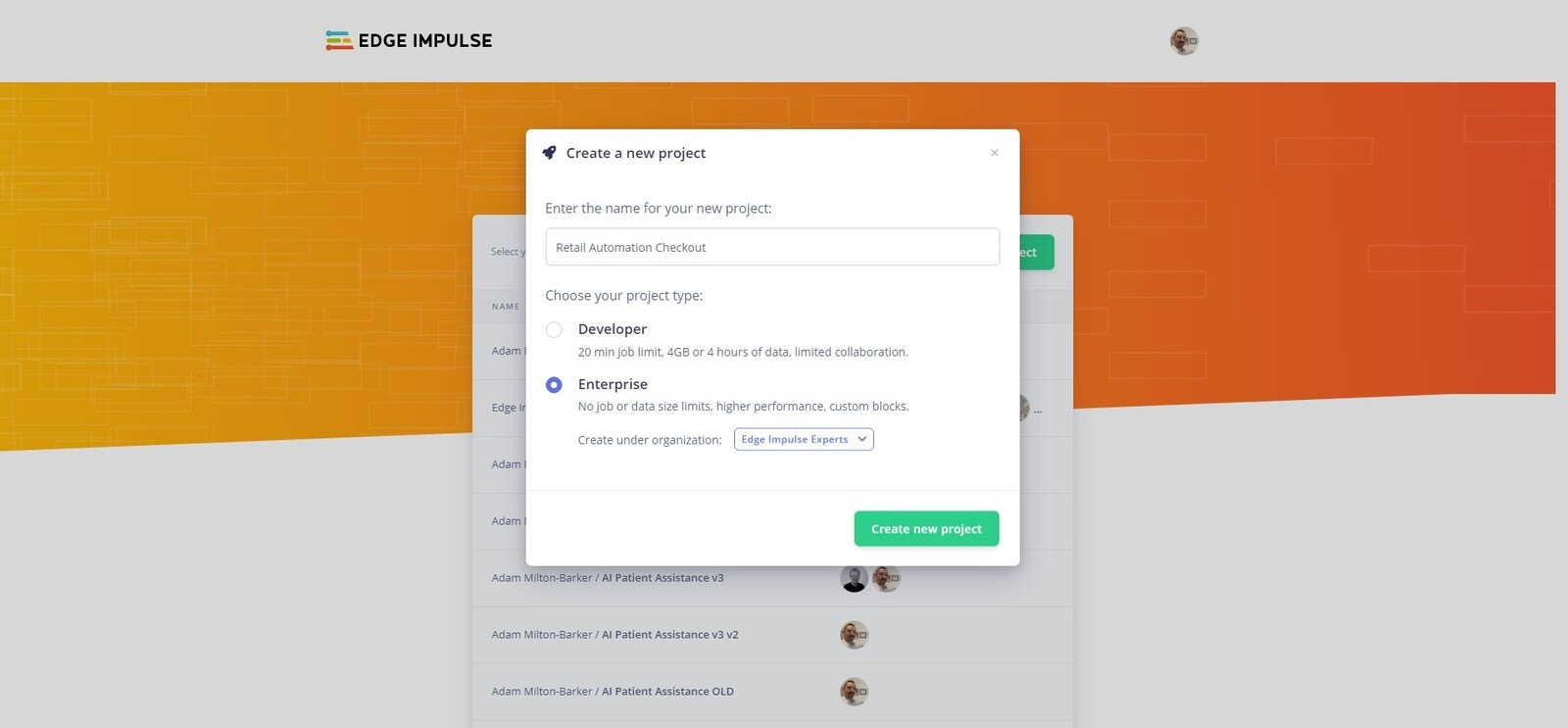

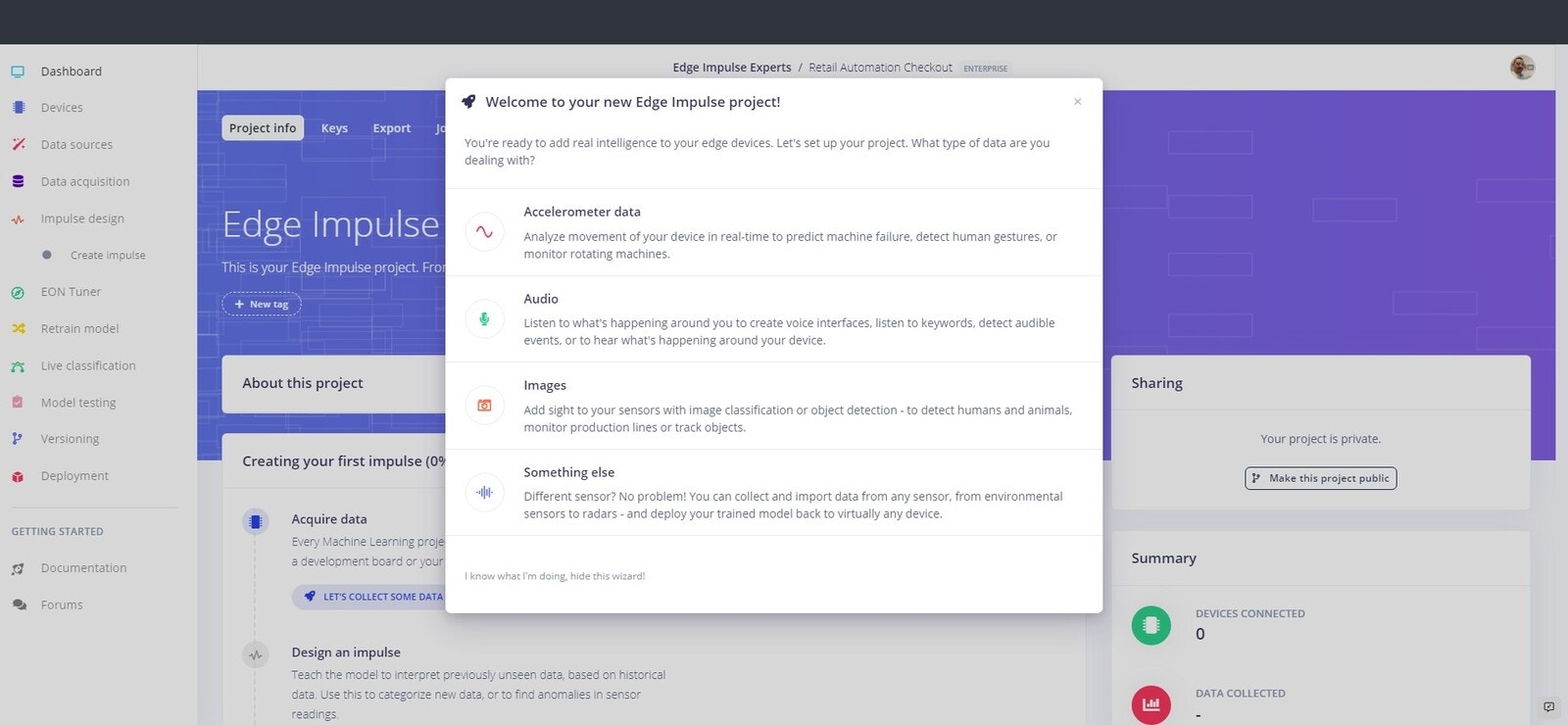

Head over to Edge Impulse and create an account or log in. Once logged in, you will be taken to the project selection/creation page.

Create New Project

Your first step is to create a new project.

Create Edge Impulse project

Choose project type

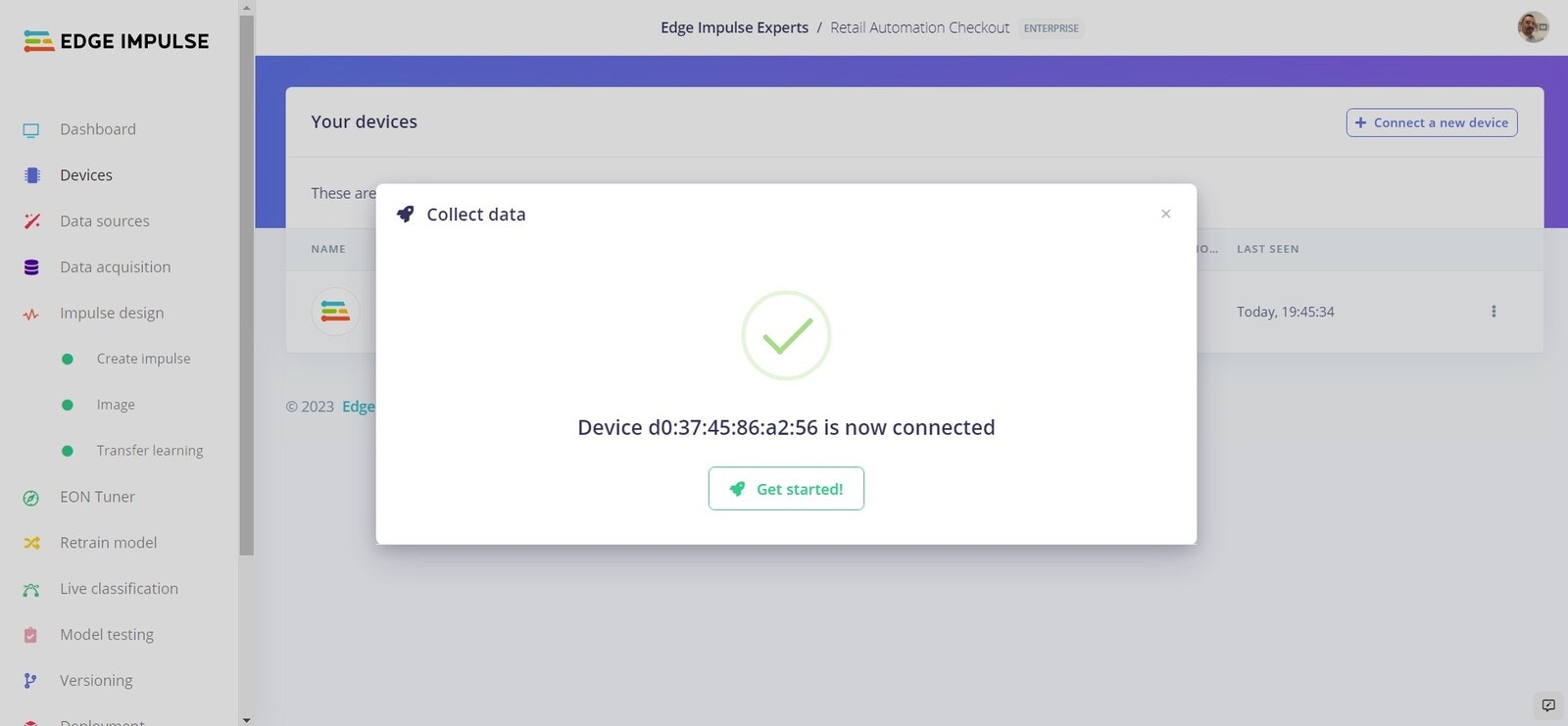

Connect Your Device

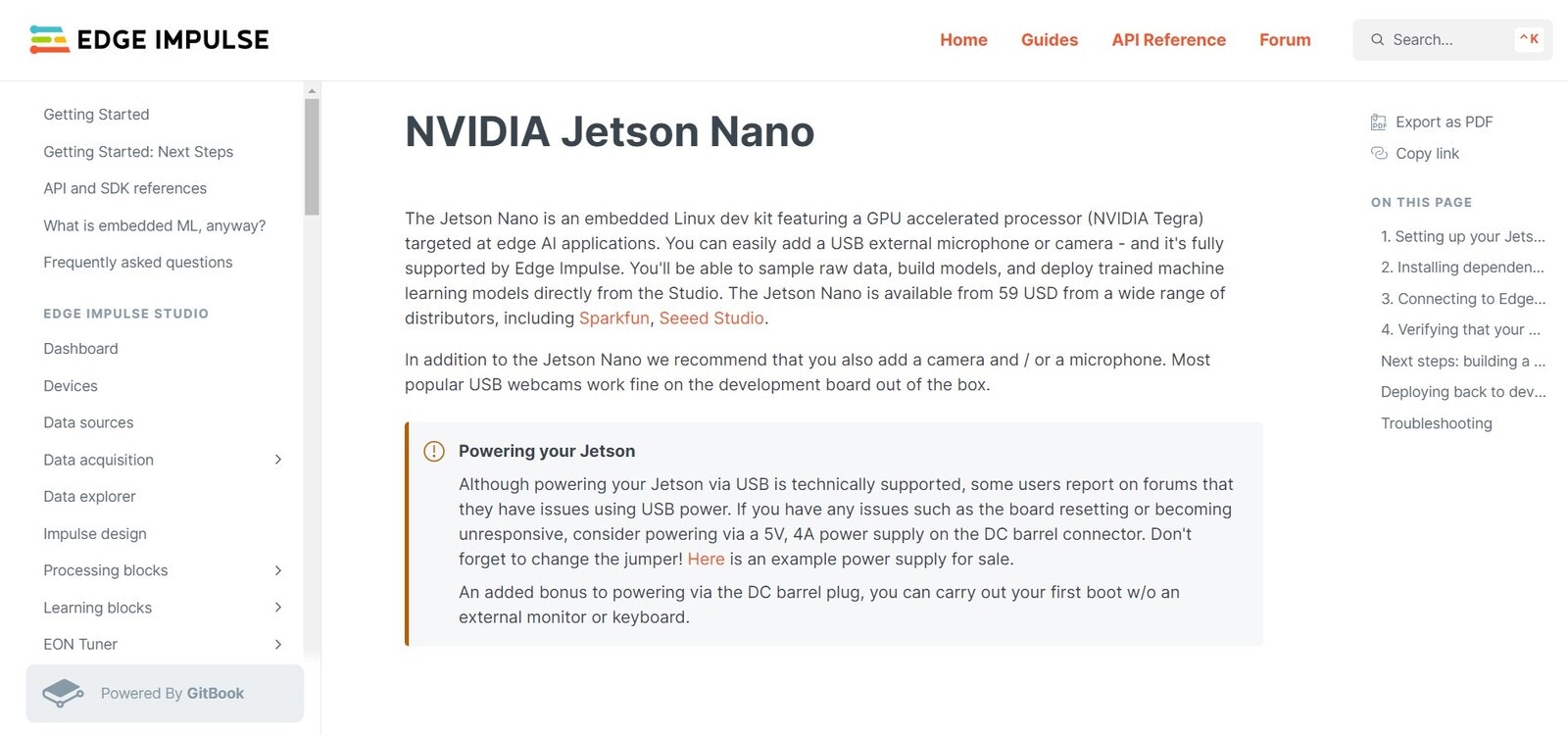

Connect device

- Running the Edge Impulse NVIDIA Jetson Nano setup script

- Connecting your device to the Edge Impulse platform

Device connected to Edge Impulse

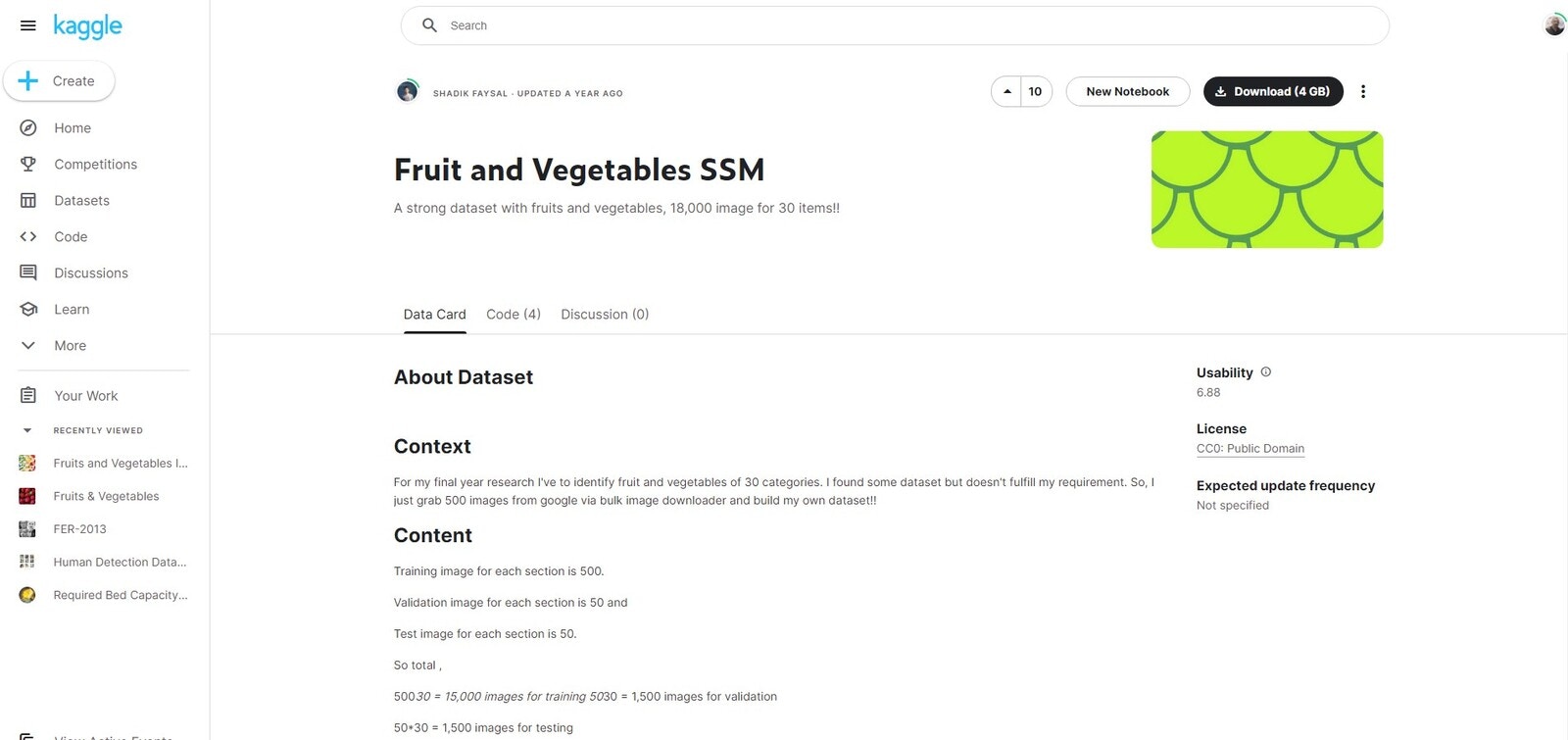

Dataset

Download data

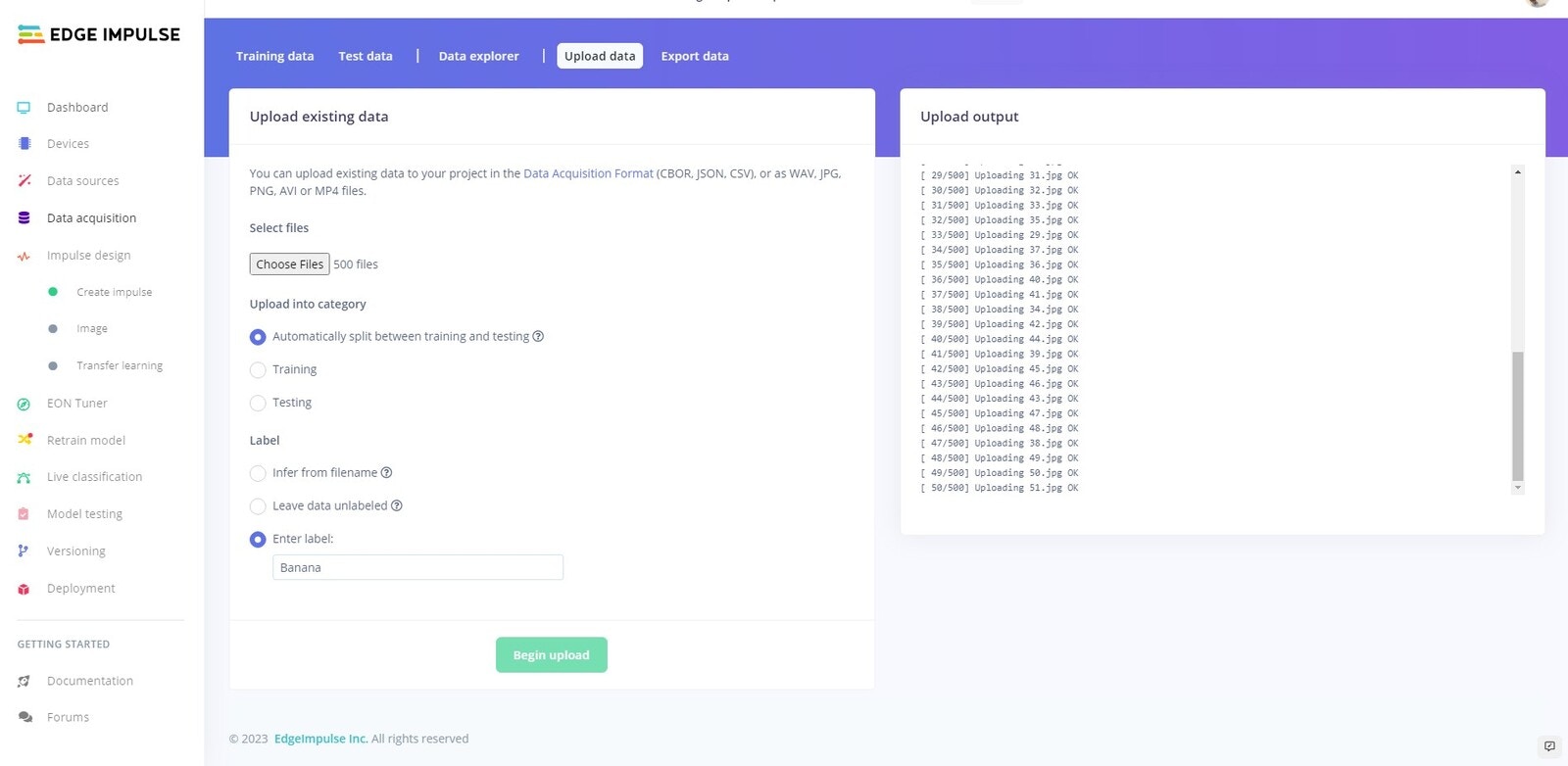

Upload data

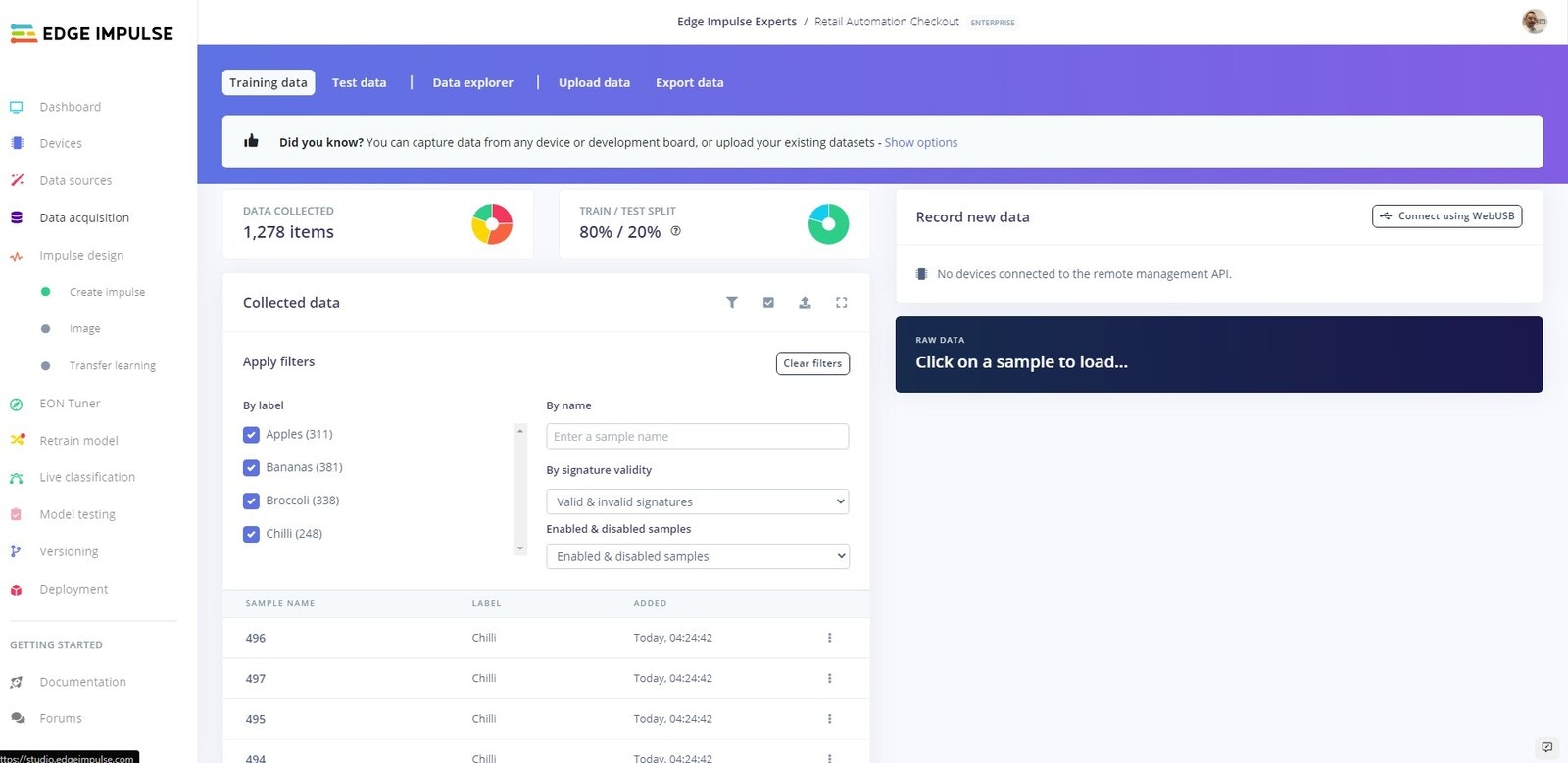

Uploaded data
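If you are preparing your own images rather than using an existing dataset, it helps to hold back a test set that the model never sees during training. Below is a minimal sketch of a per-label 80/20 split, assuming your images are described as hypothetical `(filename, label)` pairs; `stratified_split` is an illustrative helper, not an Edge Impulse tool.

```python
import random
from collections import defaultdict


def stratified_split(samples, test_fraction=0.2, seed=42):
    """Split (filename, label) pairs so each label keeps roughly the same train/test ratio."""
    by_label = defaultdict(list)
    for filename, label in samples:
        by_label[label].append(filename)

    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train, test = [], []
    for label in sorted(by_label):
        files = sorted(by_label[label])
        rng.shuffle(files)
        # Keep at least one test image per label, unless the label has only one image.
        n_test = max(1, round(len(files) * test_fraction)) if len(files) > 1 else 0
        test += [(f, label) for f in files[:n_test]]
        train += [(f, label) for f in files[n_test:]]
    return train, test


if __name__ == "__main__":
    samples = [(f"apple_{i}.jpg", "apple") for i in range(10)]
    samples += [(f"banana_{i}.jpg", "banana") for i in range(5)]
    train, test = stratified_split(samples)
    print(len(train), len(test))
```

Splitting per label, rather than over the whole list, stops a small class from ending up entirely in one set.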

Create Impulse

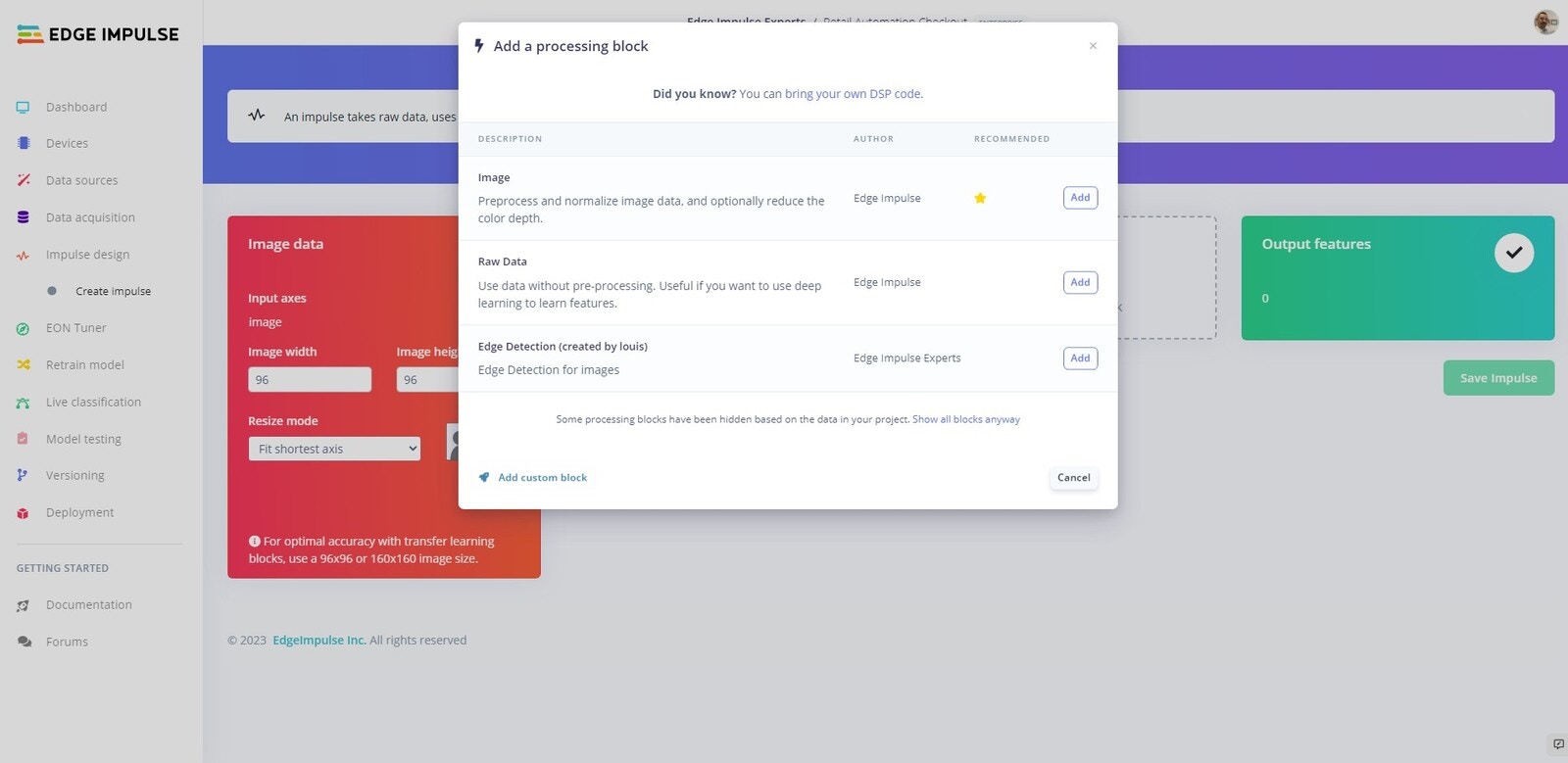

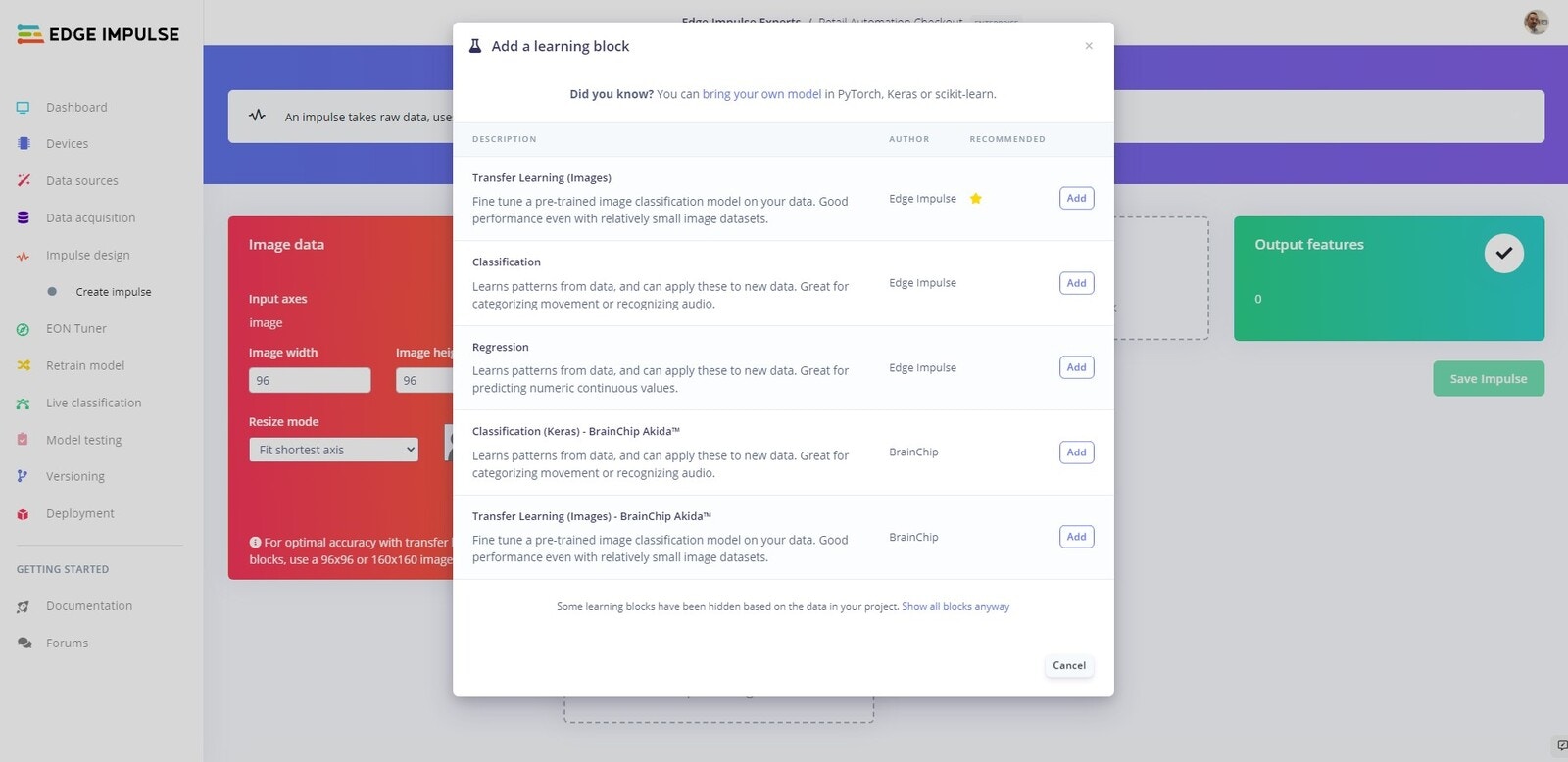

Now we are going to create our neural network and train our model.
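The impulse begins with a processing block that turns every camera frame into a fixed-size input for the neural network. As a rough illustration of that step only, the sketch below does a nearest-neighbour resize and scales 8-bit RGB values to the range 0 to 1. The 96x96 target size and the helper names are assumptions; Edge Impulse's own resize mode and scaling are configured in the Studio and may differ.

```python
def resize_nearest(image, out_w, out_h):
    """Nearest-neighbour resize of an image stored as rows of (r, g, b) tuples."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


def normalise(image):
    """Scale 8-bit channel values (0-255) to floats in [0, 1]."""
    return [[tuple(c / 255 for c in px) for px in row] for row in image]


def preprocess(image, size=96):
    # 96x96 is a hypothetical input size; match whatever your impulse uses.
    return normalise(resize_nearest(image, size, size))


if __name__ == "__main__":
    frame = [[(y * 4 + x, 0, 255) for x in range(4)] for y in range(4)]
    print(preprocess(frame, size=2))
```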

Add processing block

Created Impulse

Transfer Learning

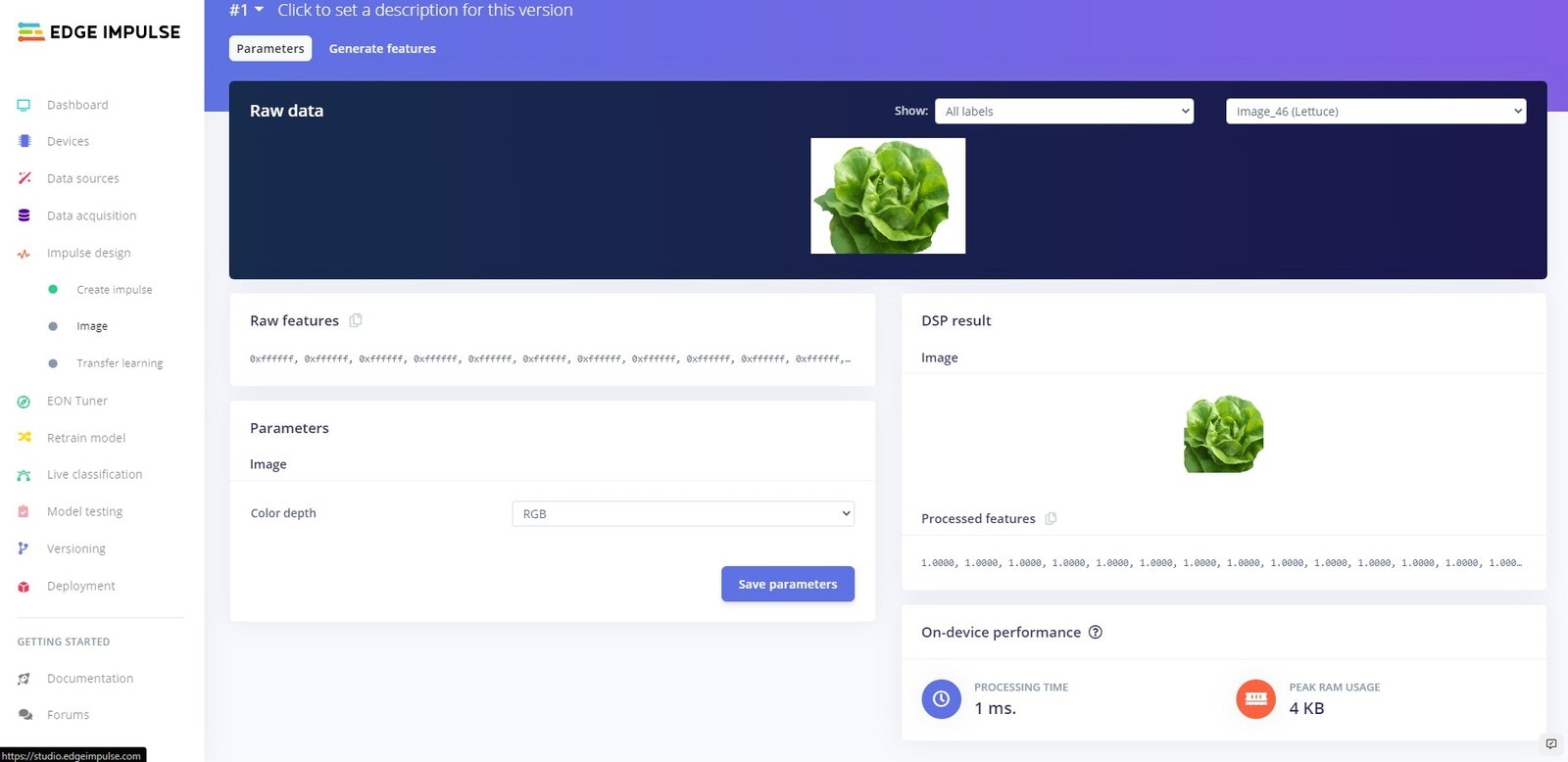

Parameters

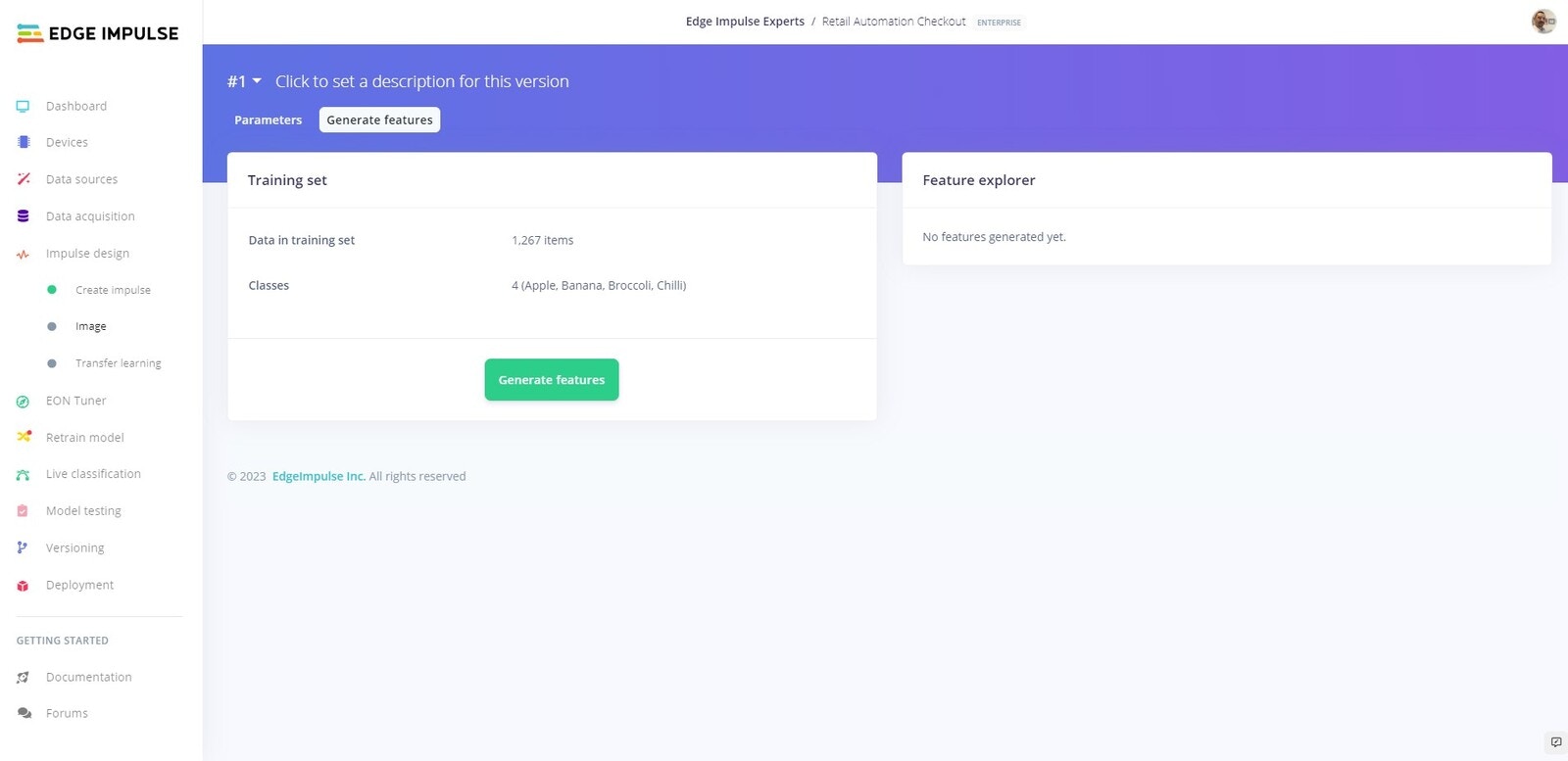

Generate Features

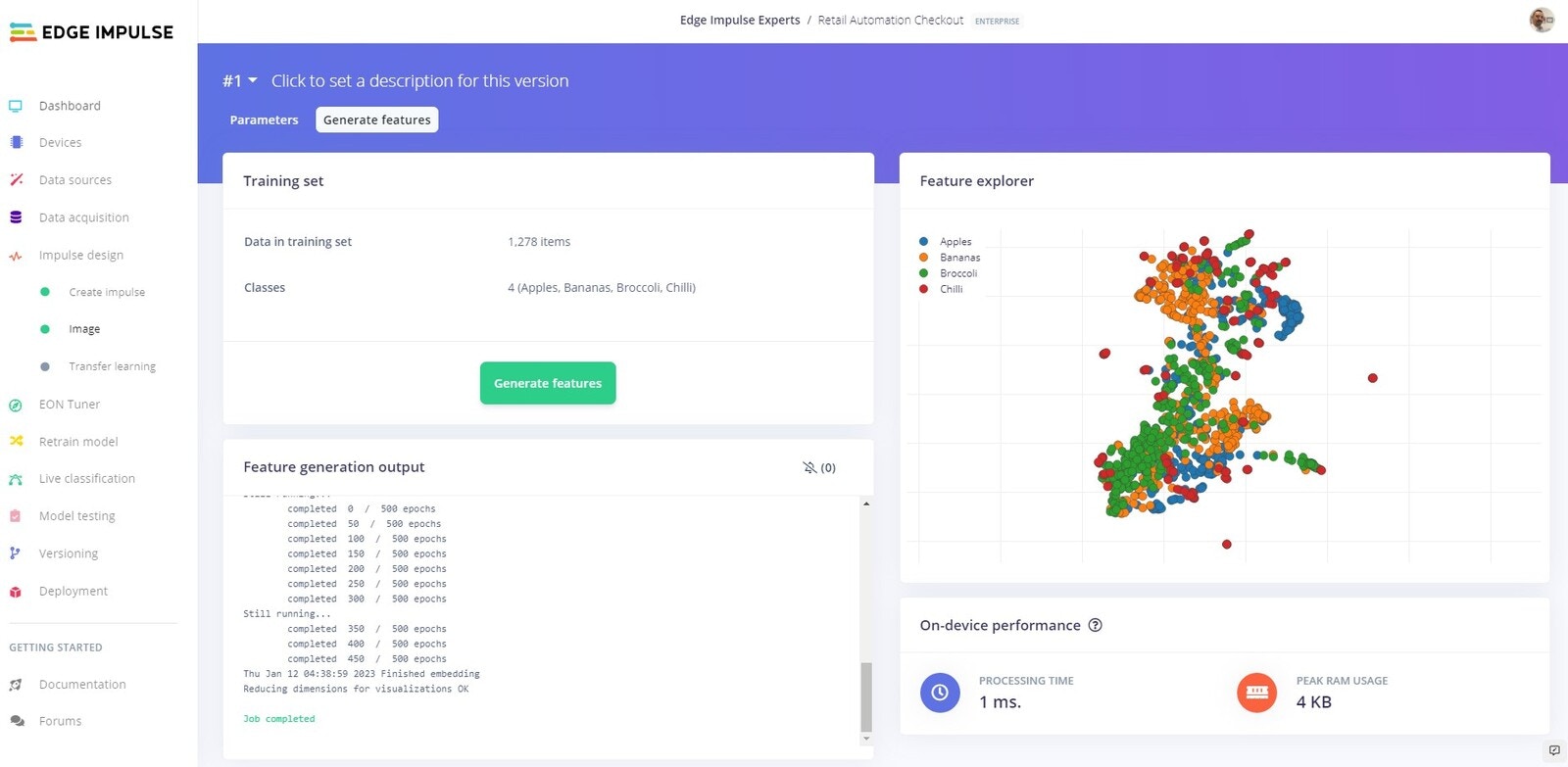

Generated Features

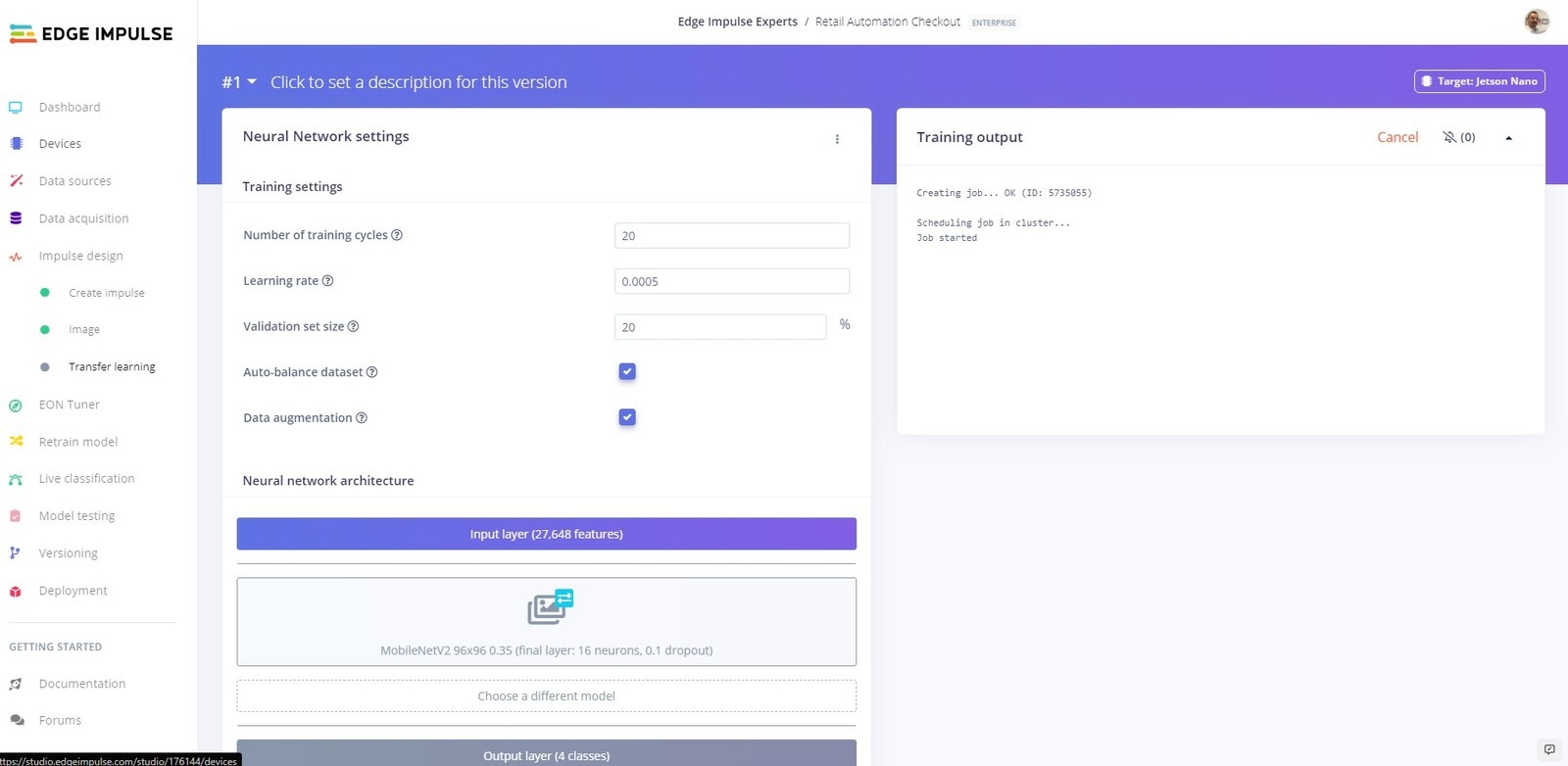

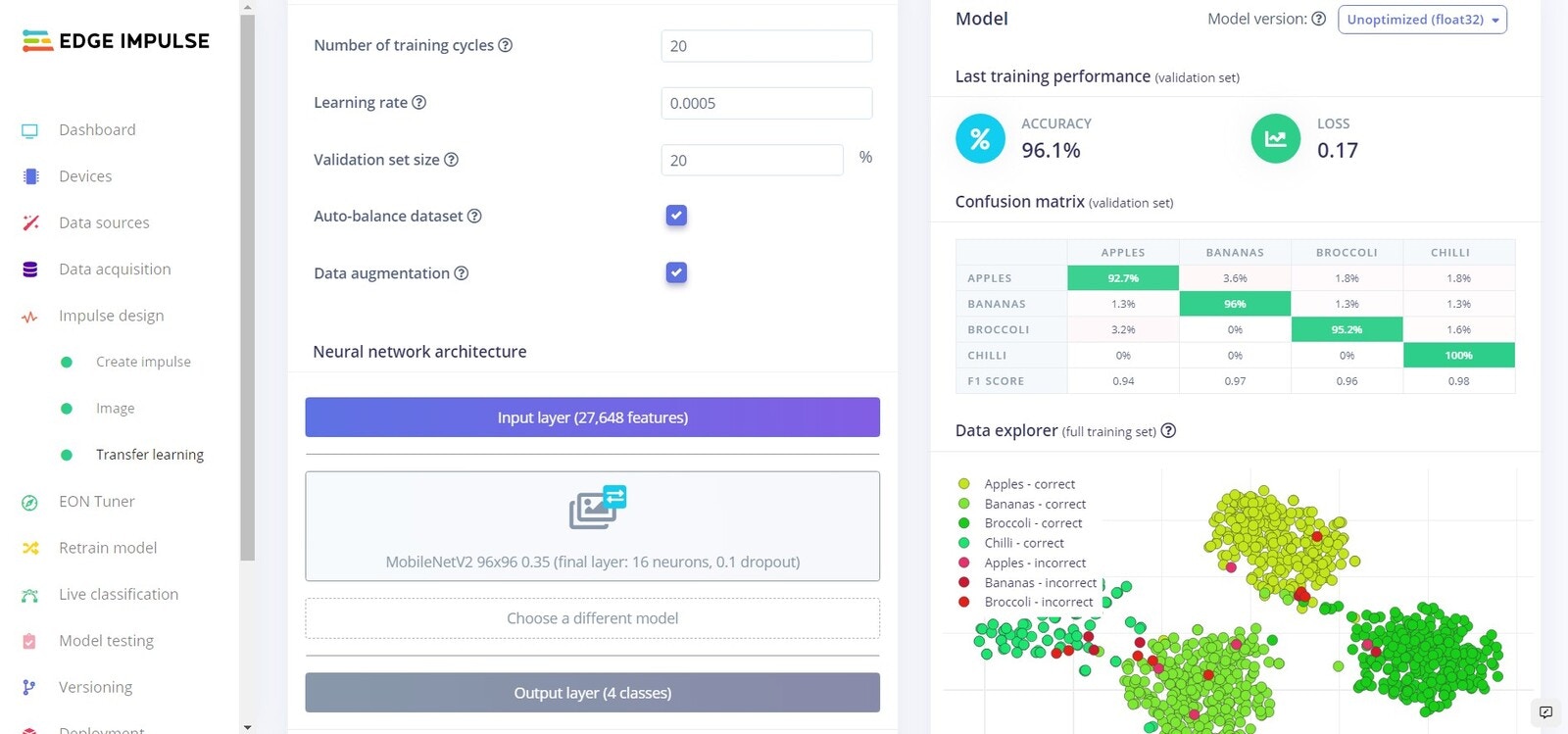

Training

Training complete

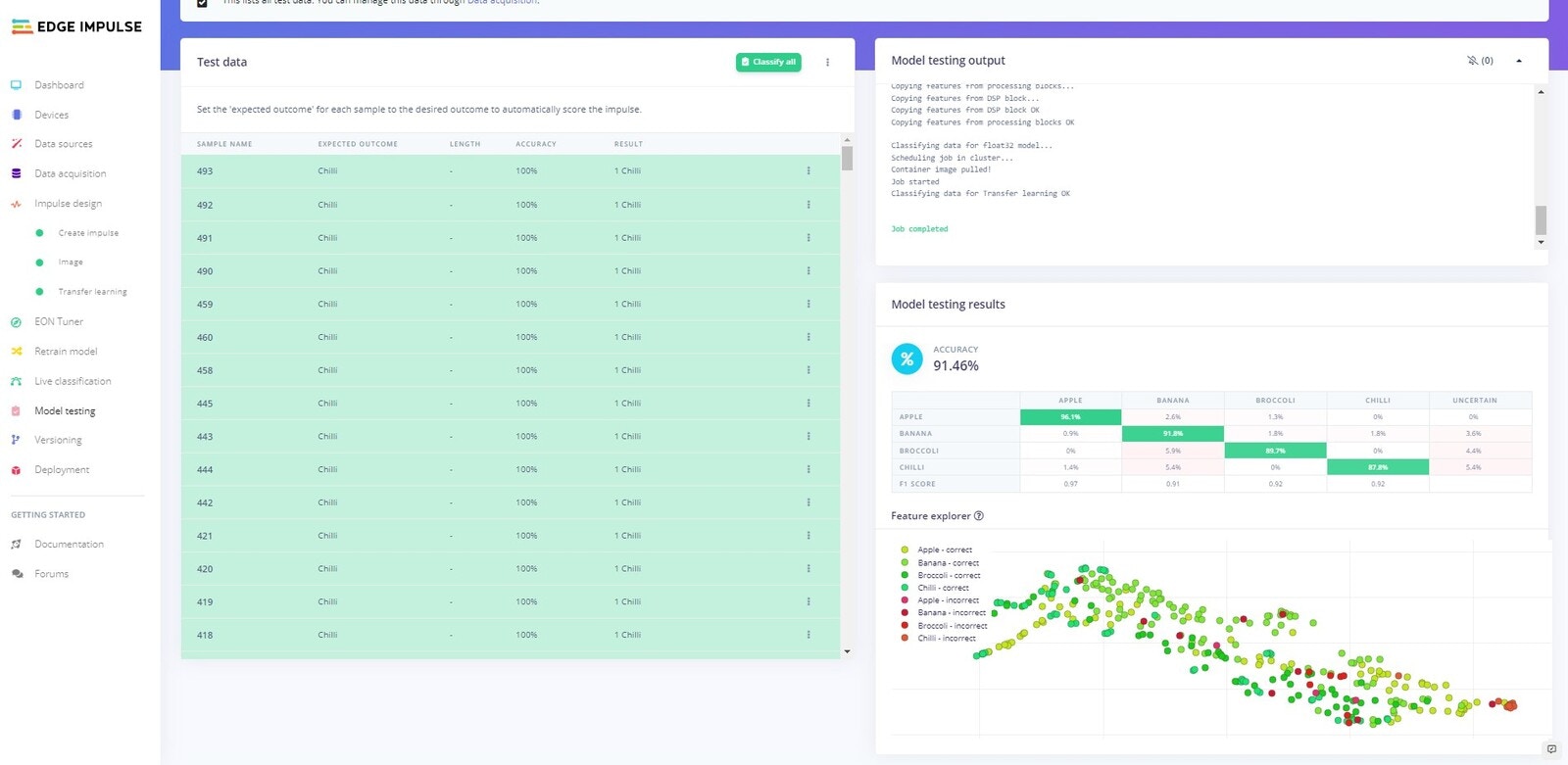

Testing

Platform Testing

Head over to the Model testing tab, where you will see all of the unseen test data. Click Classify all and sit back while the model is tested.
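The accuracy reported on this tab is the share of test samples whose predicted label matches the true one. A minimal sketch of that calculation, plus a confusion count showing which items get mistaken for which, assuming you have the true and predicted labels as two lists; the `evaluate` helper and the example labels are made up for illustration.

```python
from collections import Counter


def evaluate(actual, predicted):
    """Return overall accuracy and a confusion count keyed by (actual, predicted)."""
    if not actual or len(actual) != len(predicted):
        raise ValueError("need two non-empty label lists of equal length")
    confusion = Counter(zip(actual, predicted))
    correct = sum(n for (a, p), n in confusion.items() if a == p)
    return correct / len(actual), confusion


if __name__ == "__main__":
    actual = ["apple", "apple", "banana", "chilli"]
    predicted = ["apple", "banana", "banana", "chilli"]
    accuracy, confusion = evaluate(actual, predicted)
    print(accuracy, dict(confusion))
```

Off-diagonal entries such as `("apple", "banana")` point at the classes that need more or better training images.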

Test model results

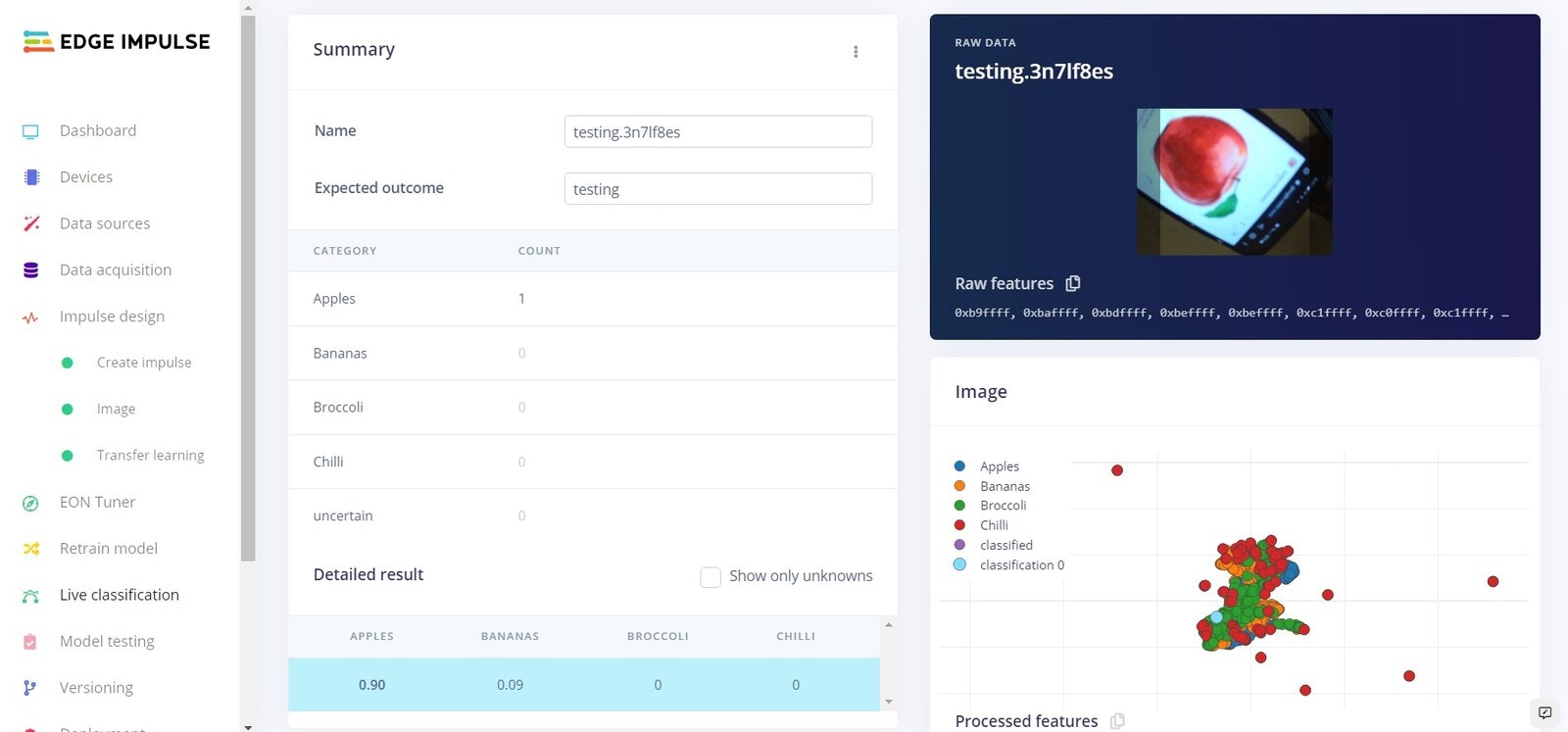

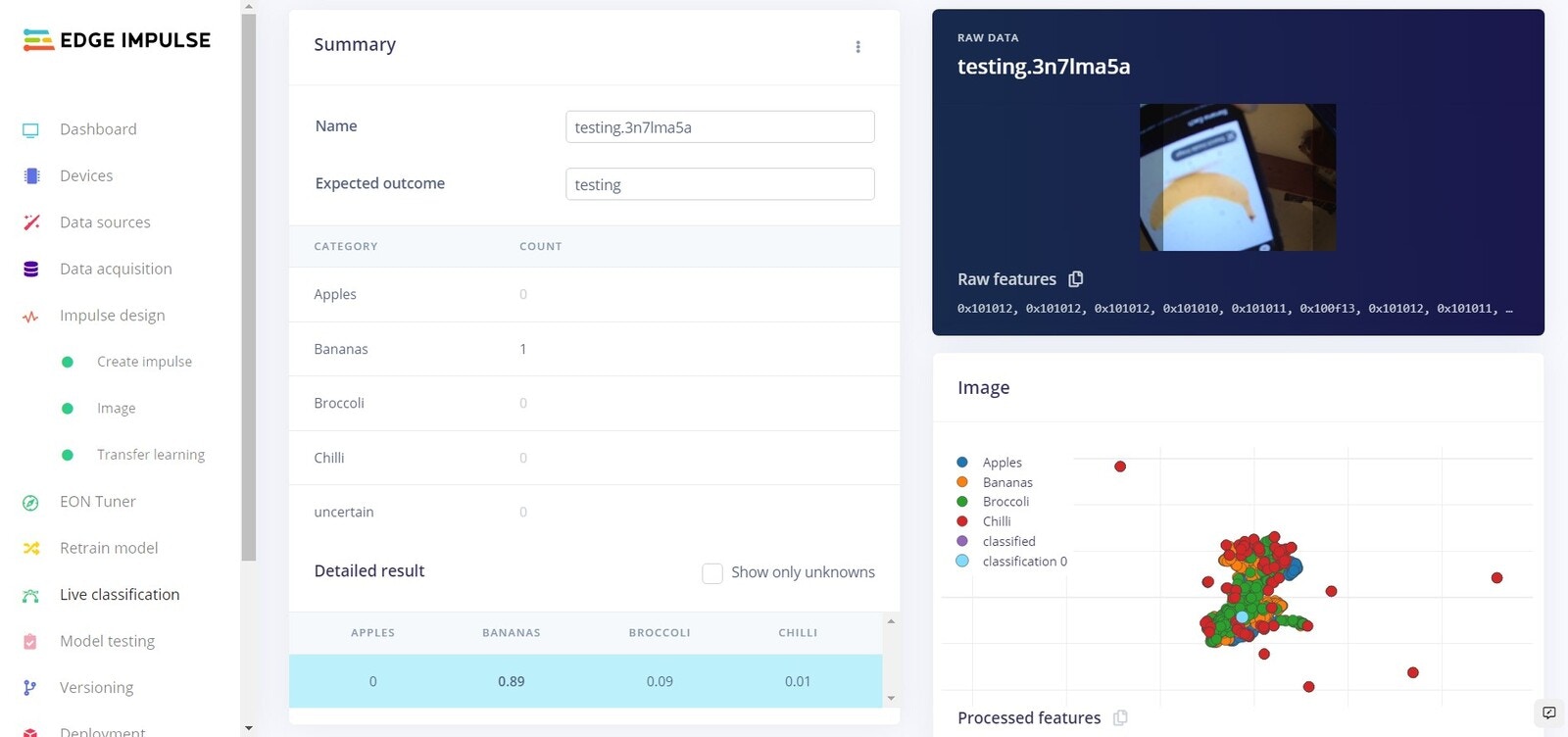

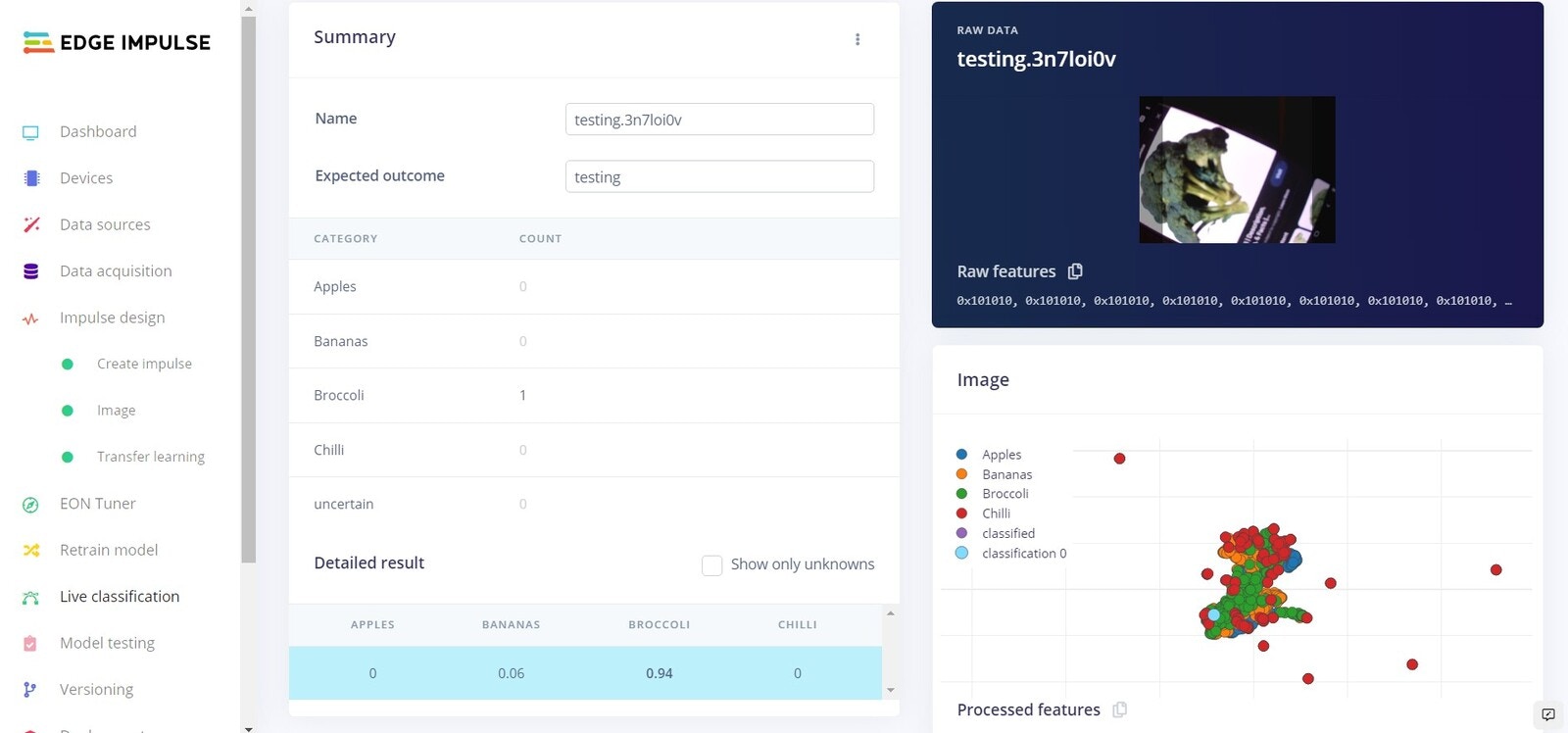

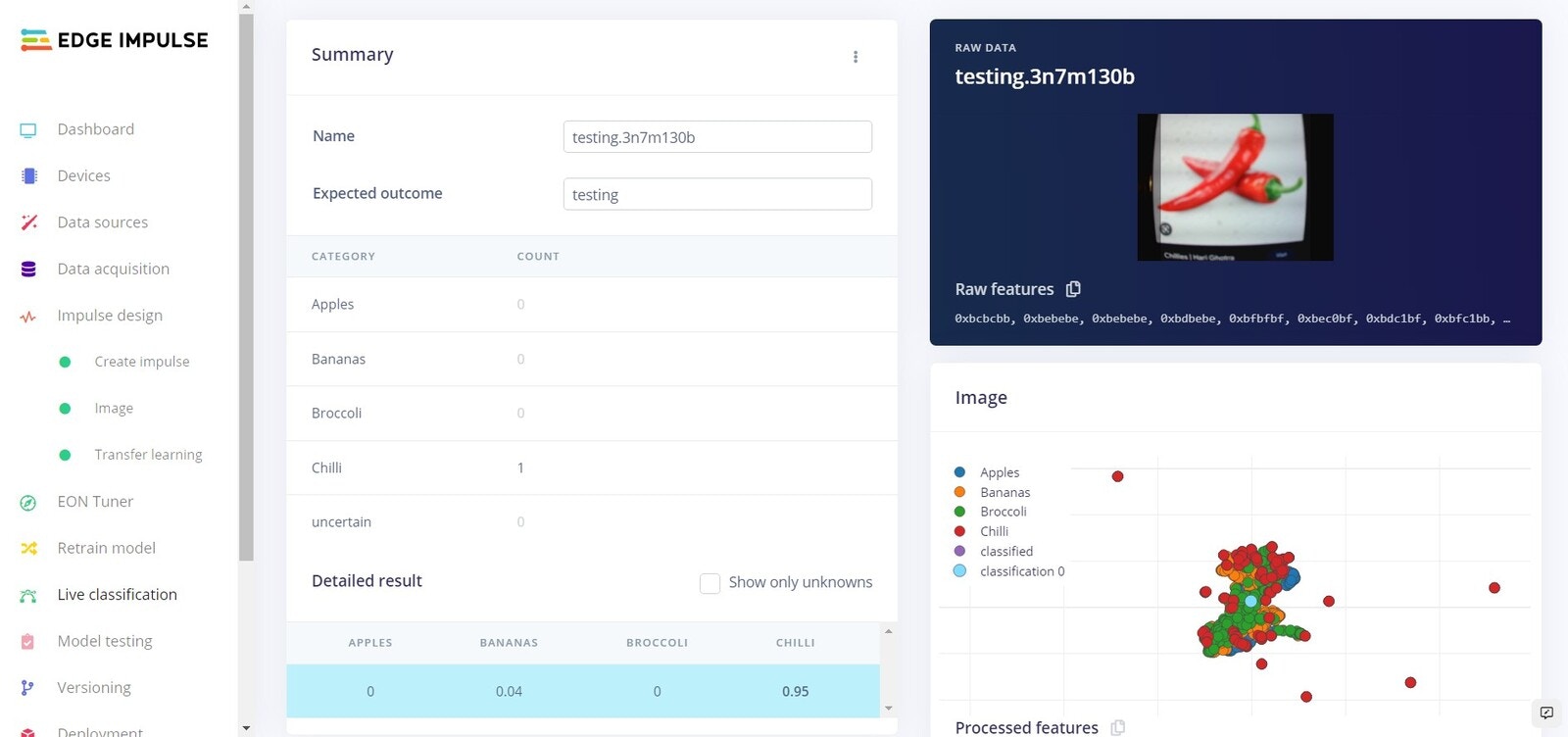

On Device Testing

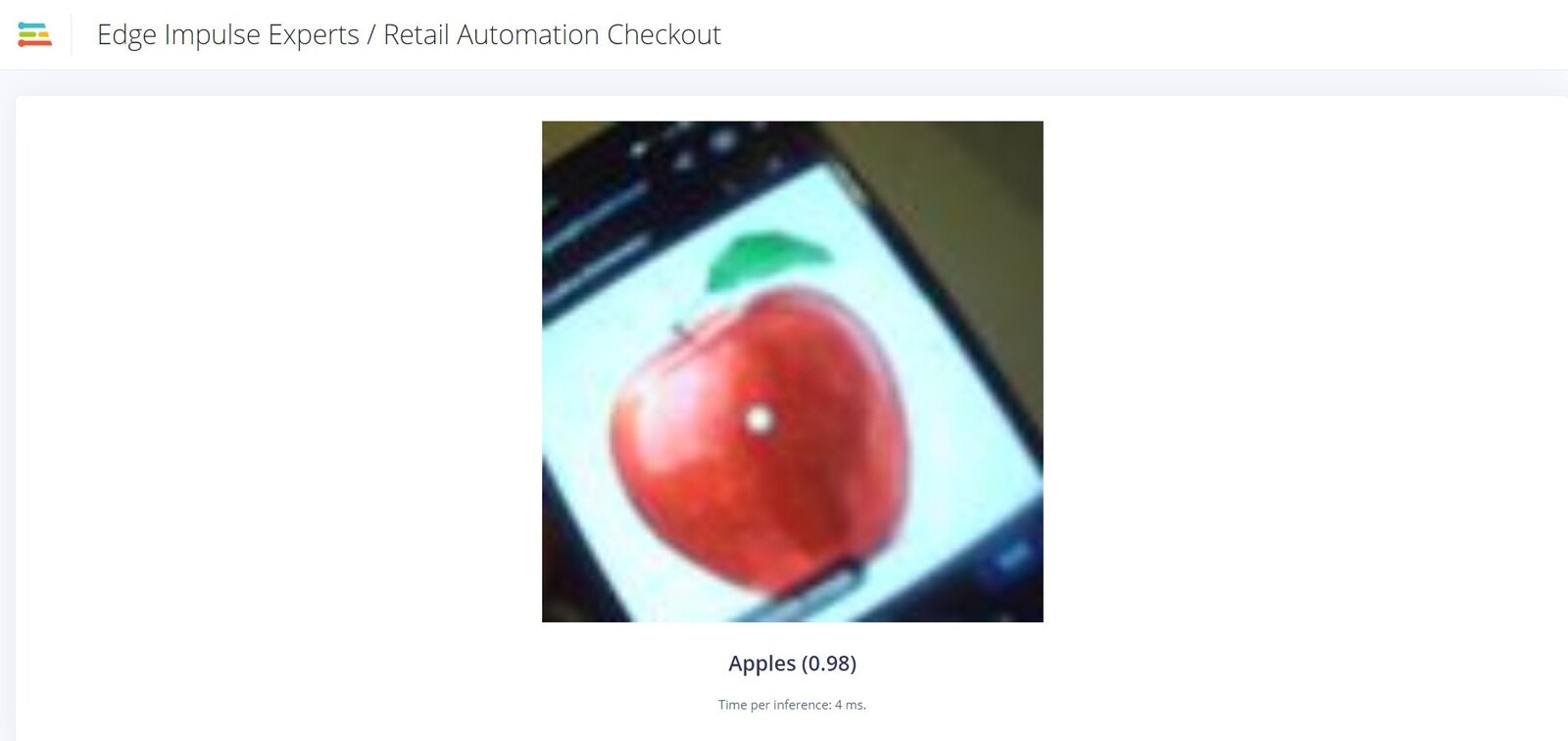

Before we deploy the software to the NVIDIA Jetson Nano, let's test it using the Edge Impulse platform while connected to the Jetson Nano. For this to work, make sure your device is connected and your webcam is attached.
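During live testing the top label can flicker between frames. One common way to stabilise it before an item is added to a basket, assumed here rather than taken from this project, is to ignore low-confidence frames and only accept a label once it wins a majority of a short window. The `LabelSmoother` class, the window size and the confidence threshold are all hypothetical.

```python
from collections import Counter, deque


class LabelSmoother:
    """Accept a label only when it dominates the most recent confident frames."""

    def __init__(self, window=5, min_confidence=0.7):
        self.min_confidence = min_confidence
        self.recent = deque(maxlen=window)

    def update(self, scores):
        """scores: dict of label -> confidence for one frame. Returns a stable label or None."""
        label, confidence = max(scores.items(), key=lambda kv: kv[1])
        if confidence < self.min_confidence:
            return None  # low-confidence frames are skipped, not recorded
        self.recent.append(label)
        top, count = Counter(self.recent).most_common(1)[0]
        # Commit only once the window is full and one label holds a strict majority.
        if len(self.recent) == self.recent.maxlen and count > self.recent.maxlen // 2:
            return top
        return None


if __name__ == "__main__":
    smoother = LabelSmoother(window=3, min_confidence=0.6)
    for frame in [{"apple": 0.9, "banana": 0.1}, {"apple": 0.8, "banana": 0.2}, {"banana": 0.95, "apple": 0.05}]:
        print(smoother.update(frame))
```

A larger window gives steadier results at the cost of a slower response when a new item is placed in front of the camera.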

Live testing: Apple

Live testing: Banana

Live testing: Broccoli

Live testing: Chilli

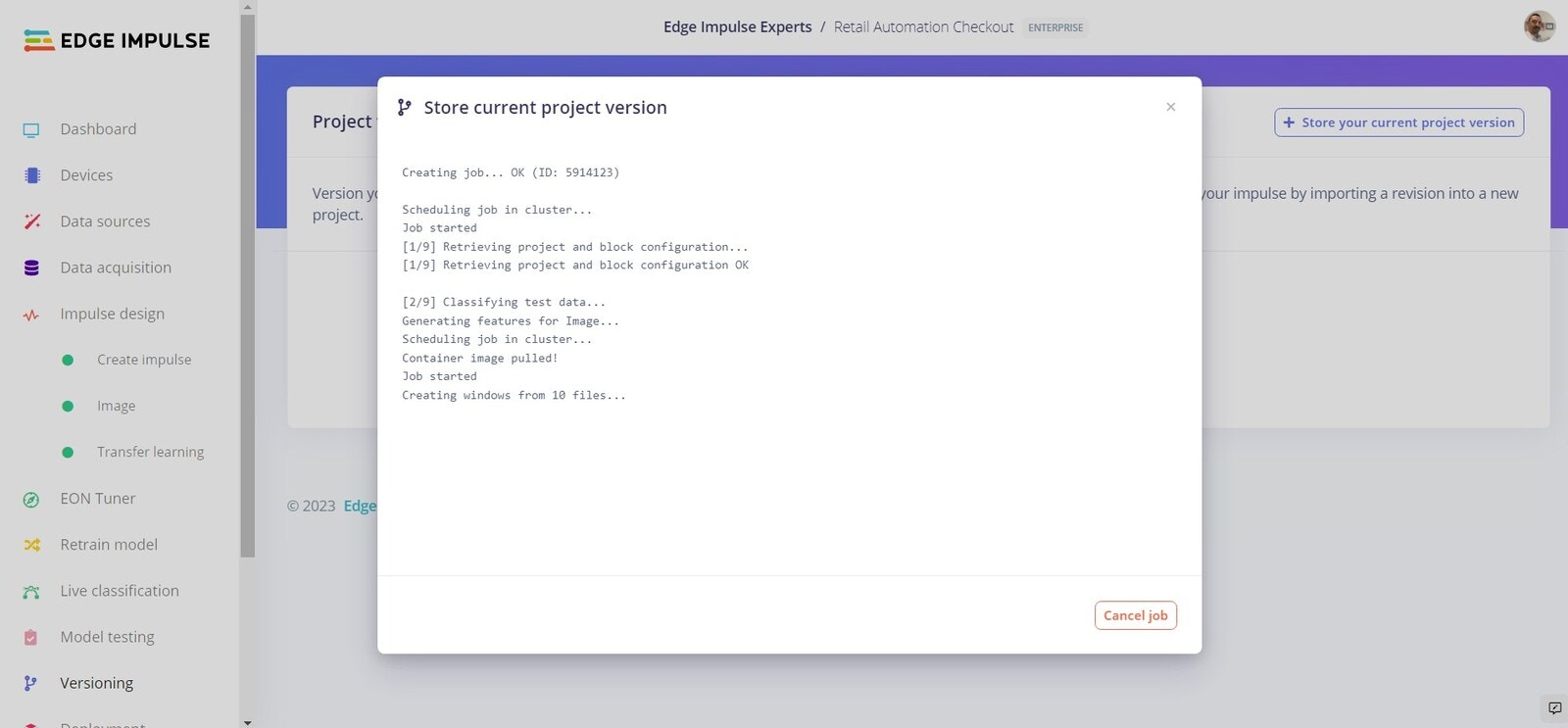

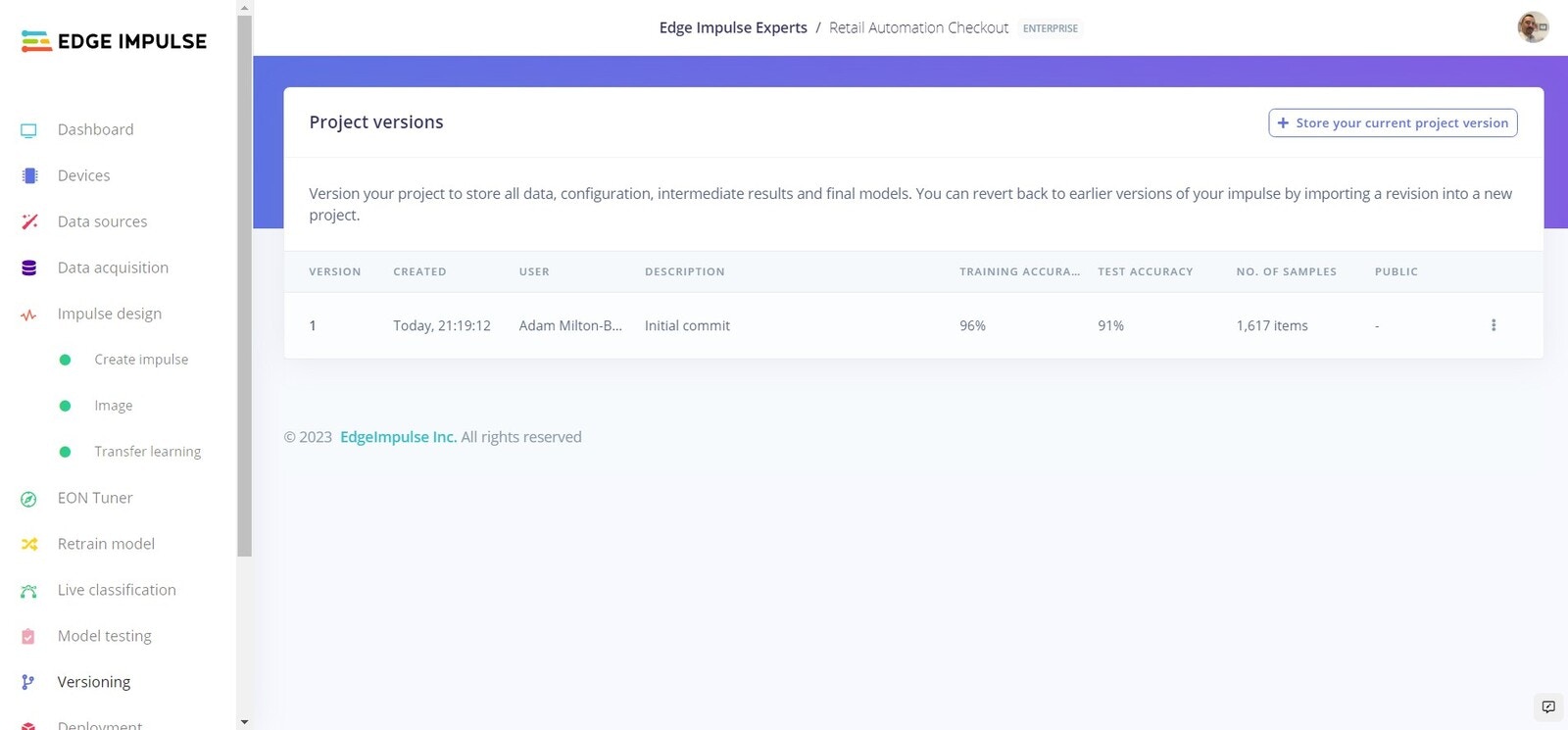

Versioning

Versions

Deployment

Now we will deploy the software directly to the NVIDIA Jetson Nano. To do this, open a terminal on your Jetson Nano and enter:

Live Inferencing