This is an advanced feature that requires familiarity with machine learning architectures and model deployment.

It lets you deploy models that do not conform to the standard input/output formats expected by Edge Impulse, but you must handle data preprocessing and postprocessing yourself.

Example use cases

For this tutorial we will focus on an image upscaling example, but this approach can be applied to many other kinds of models, such as:

- Denoising models (for images or audio)

- Super-resolution models (for images or audio)

- Pose estimation models

- Image segmentation models

- Time-series forecasting models

Image upscaling example on Linux or Mac

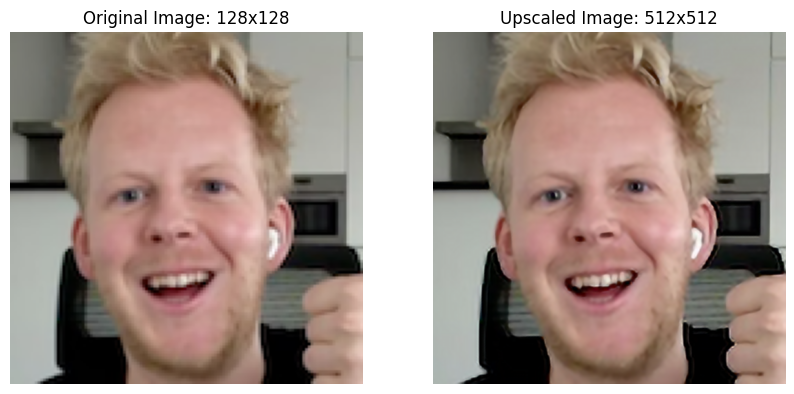

Qualcomm AI Hub provides a variety of pretrained models that can be used for different tasks. Many of them use non-standard input and output shapes, making them suitable candidates for freeform model integration. In this example, we will use the “QuickSRNetSmall” model from Qualcomm AI Hub. You can bring your own model as well, as long as it conforms to the Bring Your Own Model requirements. The QuickSRNetSmall model is a super-resolution model that takes a low-resolution image as input and produces a high-resolution image as output. The model expects an input shape of (128,128,3) flattened to a list and produces an output image 4x the resolution (512, 512, 3). Here is an example of the input and output of the QuickSRNetSmall model:
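To make the shapes concrete: 128 x 128 x 3 = 49,152 input values and 512 x 512 x 3 = 786,432 output values. The sketch below flattens a nested [row][column][channel] image into a flat list and rebuilds it, assuming row-major, channel-last (HWC) ordering. The helper names `flatten_hwc` and `unflatten_hwc` are hypothetical and not part of any Edge Impulse API.

```python
"""Sketch: mapping QuickSRNetSmall's (128, 128, 3) input and (512, 512, 3)
output to and from flat lists. Assumes row-major, channel-last (HWC) order.
flatten_hwc / unflatten_hwc are hypothetical helpers, not an Edge Impulse API."""

IN_H, IN_W, IN_C = 128, 128, 3
SCALE = 4  # 4x upscaling in each spatial dimension
OUT_H, OUT_W, OUT_C = IN_H * SCALE, IN_W * SCALE, IN_C


def flatten_hwc(image):
    """Flatten a nested [row][col][channel] list into one flat list."""
    return [value for row in image for pixel in row for value in pixel]


def unflatten_hwc(flat, height, width, channels):
    """Rebuild a nested [row][col][channel] list from a flat HWC list."""
    expected = height * width * channels
    if len(flat) != expected:
        raise ValueError(f"expected {expected} values, got {len(flat)}")
    row_len = width * channels
    return [
        [
            list(flat[r * row_len + c * channels : r * row_len + (c + 1) * channels])
            for c in range(width)
        ]
        for r in range(height)
    ]


if __name__ == "__main__":
    print("input values: ", IN_H * IN_W * IN_C)    # 49152
    print("output values:", OUT_H * OUT_W * OUT_C)  # 786432
```

The same row-major convention is what lets a flat output list of 786,432 values be reshaped back into a 512x512 RGB image after inference.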

Converting the model

- Download the QuickSRNetSmall model from Qualcomm AI Hub as a quantized w8a8 .tflite file.

- In Edge Impulse Studio, open a new project and, on the dashboard, click “Upload your model”.

- Select your .tflite model file and upload it.

- To configure the model input for this image upscaling example, select:

  - Model Input: “Image”

  - How is your Input scaled: “Pixels ranging 0..1 (not normalized)”

  - Resize mode: “Squash”

  - Model Output: “Freeform”

- Click “Save model” to complete the upload process.
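The input settings above describe preprocessing applied to the image before it reaches the model. As a rough illustration only, the sketch below mimics them in plain Python: "Squash" stretches the image to the target size without preserving its aspect ratio, and "Pixels ranging 0..1 (not normalized)" divides 8-bit values by 255 with no mean/standard-deviation normalization. The nearest-neighbour interpolation and the function names `squash_resize` and `scale_0_1` are assumptions for illustration; Edge Impulse's own resizing may use a different interpolation method.

```python
"""Illustrative sketch of the "Squash" resize and "Pixels ranging 0..1"
input scaling. Nearest-neighbour interpolation is an assumption; the
function names are hypothetical, not an Edge Impulse API."""


def squash_resize(image, out_h, out_w):
    """Resize a nested [row][col][channel] image to out_h x out_w,
    ignoring the original aspect ratio (nearest-neighbour sampling)."""
    in_h, in_w = len(image), len(image[0])
    return [
        [list(image[(r * in_h) // out_h][(c * in_w) // out_w]) for c in range(out_w)]
        for r in range(out_h)
    ]


def scale_0_1(image):
    """Map 8-bit pixel values (0..255) to floats in 0..1 (no mean/std normalization)."""
    return [[[v / 255.0 for v in pixel] for pixel in row] for row in image]


if __name__ == "__main__":
    # A 2x4 (height x width) image squashed to 128x128: rows are stretched 64x
    # and columns 32x, so the aspect ratio changes instead of being cropped.
    wide = [[[255, 0, 0]] * 4, [[0, 0, 255]] * 4]
    out = scale_0_1(squash_resize(wide, 128, 128))
    print(len(out), len(out[0]))  # 128 128
    print(out[0][0], out[127][0])  # [1.0, 0.0, 0.0] [0.0, 0.0, 1.0]
```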

- Build the model into an .eim executable for your target device, choosing one of the Linux or macOS deployment options.

- Once the build is complete, the file is downloaded to your computer automatically.

- Follow the on-screen instructions to mark the file as executable (e.g., `chmod +x <filename>.eim`).
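If you are scripting the setup, the same permission change can be made from Python with the standard library's `os.chmod`. This sketch adds execute permission for the owner, group, and others, which is roughly what `chmod +x` does (`chmod +x` additionally respects your umask). `make_executable` is a hypothetical helper name.

```python
import os
import stat


def make_executable(path):
    """Add execute permission bits to an existing file, similar to `chmod +x`
    (but without applying the umask)."""
    mode = os.stat(path).st_mode
    os.chmod(path, mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)


if __name__ == "__main__":
    import sys

    # e.g. python make_exec.py path_to_your.eim
    for p in sys.argv[1:]:
        make_executable(p)
```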

Testing the model locally

- Install the Edge Impulse Linux Python SDK and its dependencies (if you haven’t already).

You’ll need a Python 3.6+ environment with pip installed. The SDK is published on PyPI as `edge_impulse_linux`, so you can install it from your terminal with `pip3 install edge_impulse_linux`, along with any image-processing libraries your script uses.
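After installing, you can confirm from Python that your interpreter meets the version requirement and can find the SDK package. This is a convenience sketch using only the standard library; the function names are hypothetical, and `edge_impulse_linux` is the SDK's import name.

```python
import importlib.util
import sys


def python_version_ok(minimum=(3, 6)):
    """True if the running interpreter is at least `minimum`."""
    return sys.version_info[:2] >= minimum


def module_available(name="edge_impulse_linux"):
    """True if the top-level module `name` can be imported (without importing it)."""
    return importlib.util.find_spec(name) is not None


if __name__ == "__main__":
    print("Python >= 3.6:", python_version_ok())
    print("edge_impulse_linux installed:", module_available())
```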

- Download a low-resolution test image (replace `byom-freeform-example-input.png` with the path to your own image) and resize it to 128x128 pixels using an image editing tool or library of your choice.
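If you prefer a library over an image editor, Pillow can do the resize. This sketch assumes Pillow is installed (`pip3 install Pillow`) and uses hypothetical filenames and a hypothetical helper name, `resize_for_model`. `Image.resize` stretches the image to exactly 128x128 without preserving its aspect ratio, which matches the “Squash” resize mode selected during upload.

```python
from PIL import Image

TARGET_SIZE = (128, 128)  # (width, height) expected by QuickSRNetSmall


def resize_for_model(src_path, dst_path, size=TARGET_SIZE):
    """Load an image, force 3-channel RGB, and resize it to `size`.
    Image.resize does not keep the aspect ratio, so non-square images are squashed."""
    with Image.open(src_path) as img:
        resized = img.convert("RGB").resize(size)
        resized.save(dst_path)
    return resized.size


if __name__ == "__main__":
    import sys

    if len(sys.argv) == 3:
        # e.g. python resize.py my_photo.jpg byom-freeform-example-input.png
        print(resize_for_model(sys.argv[1], sys.argv[2]))
```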

- Run the following Python script to preprocess the image, run the model (replace `path_to_your.eim` with the path to your .eim file), and show the output image:
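A minimal sketch of such a script is shown below. It assumes NumPy, OpenCV (`opencv-python`), and the Edge Impulse Linux SDK are installed, and uses the SDK's `ImageImpulseRunner` (`init`, `get_features_from_image`, `classify`, `stop`). It also assumes that the raw model output comes back under `res["result"]["freeform"]` as one flat list per output tensor, with values in the 0..1 range; check the actual structure of `res` for your model (for example by printing `res["result"].keys()`) before relying on this. Because the image was already resized to 128x128, `get_features_from_image` should not need to crop it. The sketch saves the upscaled image to a file rather than displaying it, which also works on headless devices; `freeform_to_image` and the command-line layout are hypothetical.

```python
"""Sketch: upscale a 128x128 image with a freeform QuickSRNetSmall .eim model.

Assumptions (not confirmed by the tutorial):
- the SDK's ImageImpulseRunner prepares the input features from the image;
- the raw model output is returned under res["result"]["freeform"], one flat
  list per output tensor, with values in the 0..1 range and HWC order.
"""
import sys

import numpy as np

OUT_H, OUT_W, OUT_C = 512, 512, 3  # 4x upscaled (512, 512, 3) output


def freeform_to_image(values, height=OUT_H, width=OUT_W, channels=OUT_C):
    """Reshape a flat list of 0..1 floats into a uint8 RGB image array."""
    arr = np.asarray(values, dtype=np.float32)
    expected = height * width * channels
    if arr.size != expected:
        raise ValueError(f"expected {expected} values, got {arr.size}")
    arr = arr.reshape((height, width, channels))
    # Clip in case the model slightly overshoots 0..1, then scale to 0..255.
    return (np.clip(arr, 0.0, 1.0) * 255.0).round().astype(np.uint8)


def main():
    if len(sys.argv) != 4:
        print("usage: python upscale.py <path_to_your.eim> <input.png> <output.png>")
        return
    model_path, input_path, output_path = sys.argv[1:4]

    # Imported here so freeform_to_image can be reused without these packages.
    import cv2
    from edge_impulse_linux.image import ImageImpulseRunner

    bgr = cv2.imread(input_path)  # OpenCV loads images as BGR
    if bgr is None:
        raise FileNotFoundError(input_path)
    rgb = cv2.cvtColor(bgr, cv2.COLOR_BGR2RGB)

    runner = ImageImpulseRunner(model_path)
    try:
        runner.init()  # starts the .eim process and reads model info
        features, _ = runner.get_features_from_image(rgb)
        res = runner.classify(features)
        flat = res["result"]["freeform"][0]  # assumed: first (only) output tensor
        upscaled = freeform_to_image(flat)
        cv2.imwrite(output_path, cv2.cvtColor(upscaled, cv2.COLOR_RGB2BGR))
        print(f"saved {upscaled.shape[1]}x{upscaled.shape[0]} image to {output_path}")
    finally:
        runner.stop()


if __name__ == "__main__":
    main()
```

For example: `python upscale.py path_to_your.eim byom-freeform-example-input.png upscaled.png`.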

Conclusion

In this tutorial, we demonstrated how to upload, deploy, and test a pretrained model with non-standard input and output shapes using Edge Impulse’s freeform model integration feature. This approach lets you use a wide range of machine learning models for various applications, even if they do not conform to the traditional input/output formats supported by Edge Impulse. It also enables complex model cascading use cases; for example, you could use a denoising model as a preprocessing step before feeding the cleaned data into a traditional Edge Impulse classification model. Keep these key considerations in mind when using this feature:

- You are responsible for ensuring that the input data is preprocessed correctly to match the model’s expected input shape and format.

- You are also responsible for postprocessing the model’s output to extract meaningful information.

- The model must conform to the Bring Your Own Model requirements.
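The cascading idea can be sketched as a chain of stages in which each stage's output must match the next stage's expected input size, which is exactly the preprocessing and postprocessing responsibility listed above. In this sketch the stages are hypothetical placeholder functions standing in for real models (for example, a denoiser followed by a classifier), and `run_cascade` is not an Edge Impulse API.

```python
from typing import Callable, List, Sequence, Tuple

# A stage is (name, expected input length, function mapping features -> outputs).
Stage = Tuple[str, int, Callable[[List[float]], Sequence[float]]]


def run_cascade(features: Sequence[float], stages: Sequence[Stage]) -> List[float]:
    """Feed `features` through each stage in order, checking input sizes."""
    data = list(features)
    for name, expected_len, fn in stages:
        if len(data) != expected_len:
            raise ValueError(f"{name}: expected {expected_len} values, got {len(data)}")
        data = list(fn(data))
    return data


# Hypothetical stand-ins for real models:
def fake_denoiser(x: List[float]) -> List[float]:
    """Placeholder 'denoiser': clamps each value into 0..1."""
    return [min(max(v, 0.0), 1.0) for v in x]


def fake_classifier(x: List[float]) -> List[float]:
    """Placeholder 'classifier': two scores derived from the mean value."""
    mean = sum(x) / len(x)
    return [1.0 - mean, mean]


if __name__ == "__main__":
    pipeline = [("denoise", 4, fake_denoiser), ("classify", 4, fake_classifier)]
    print(run_cascade([-1.0, 0.5, 2.0, 0.5], pipeline))  # [0.5, 0.5]
```

Checking sizes at each boundary surfaces a mismatched pre- or postprocessing step immediately, instead of letting a wrongly shaped tensor reach the next model.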