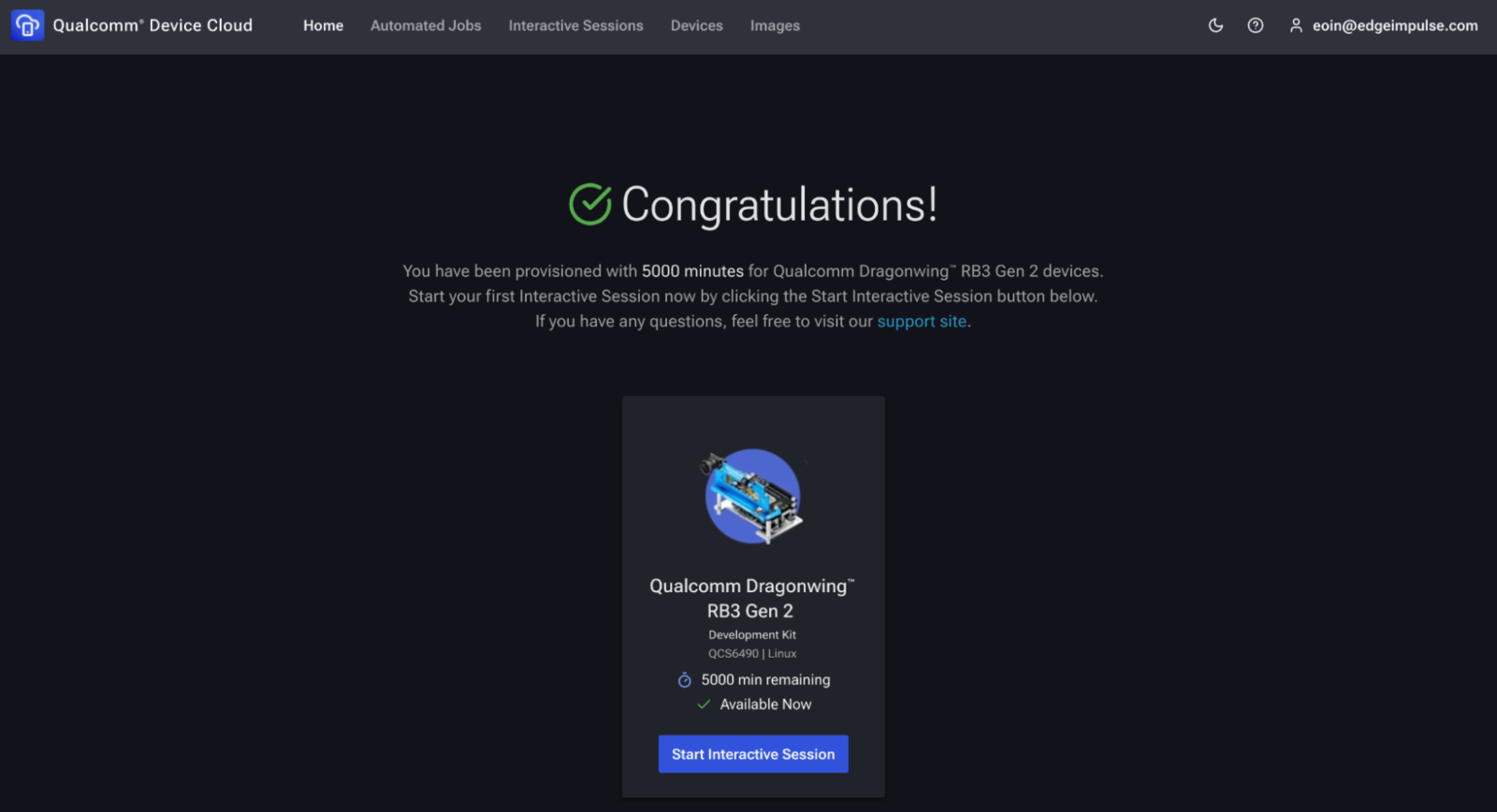

The Qualcomm® Device Cloud lets you remotely access real devices. As an Edge Impulse user, this means you can get started without any investment in physical hardware. Gain access to devices like the Qualcomm Dragonwing™ RB3 Gen 2 Dev Kit to get started with AI on Qualcomm® hardware. Users get 5000 minutes of free device time, which is more than enough to run inference on a few static images and do some initial development before you need to invest in hardware.


In this tutorial, you will:

- Spin up a virtual Dragonwing RB3 Gen 2 in Qualcomm Device Cloud (QDC).

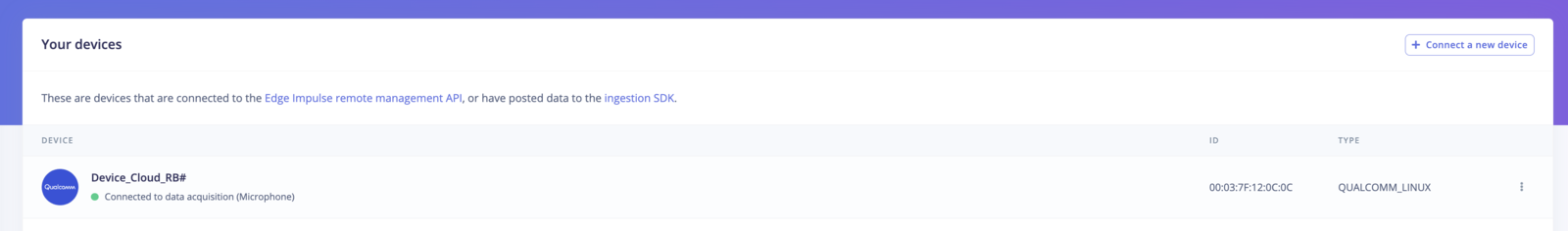

- Install the Edge Impulse CLI on the device.

- Connect the device to Edge Impulse Studio.

- Run AI inference on a static test image.

Prerequisites

- Qualcomm Device Cloud account – Sign up for free access to the Device Cloud.

- Edge Impulse account – Sign up if you don’t already have one.

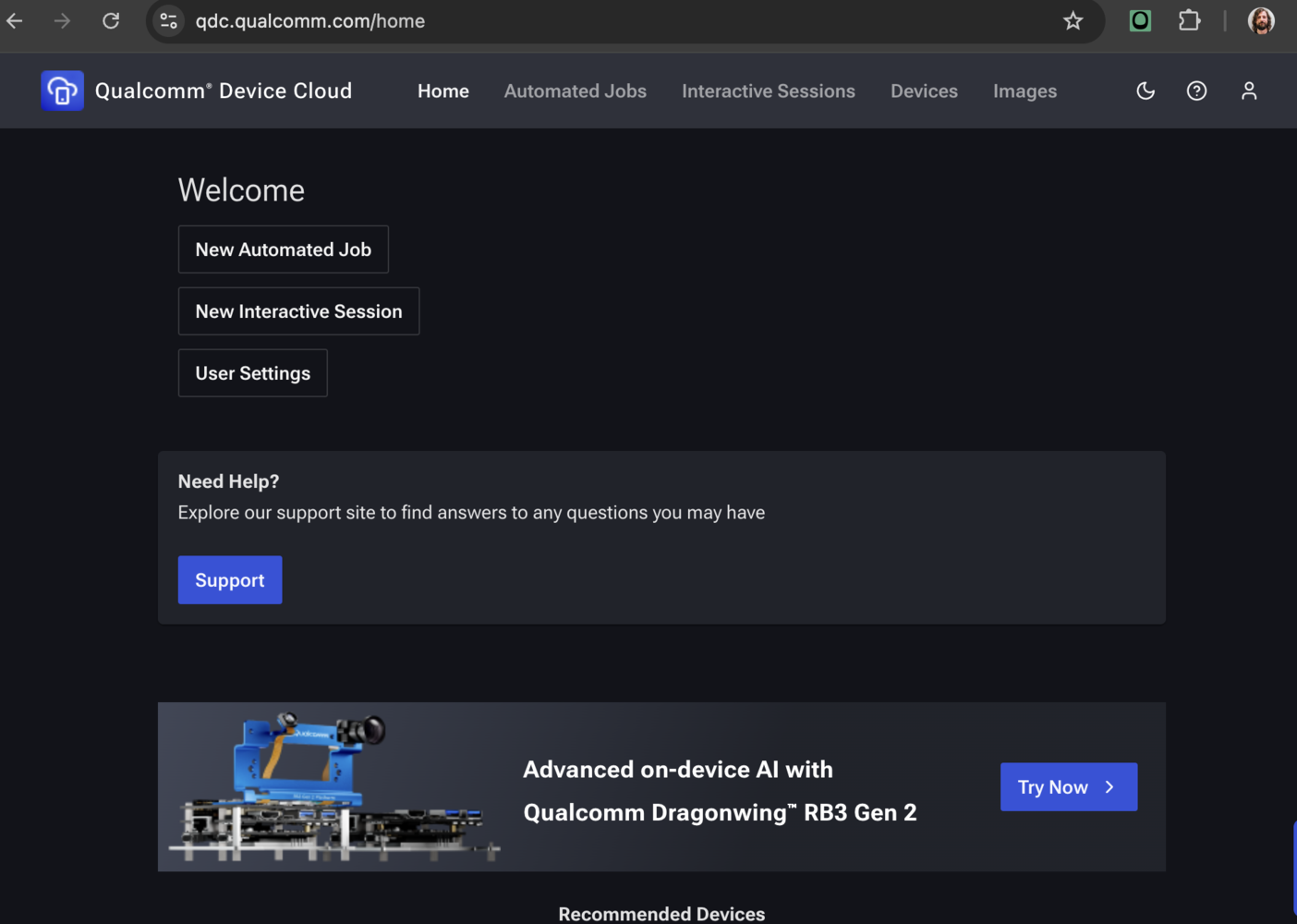

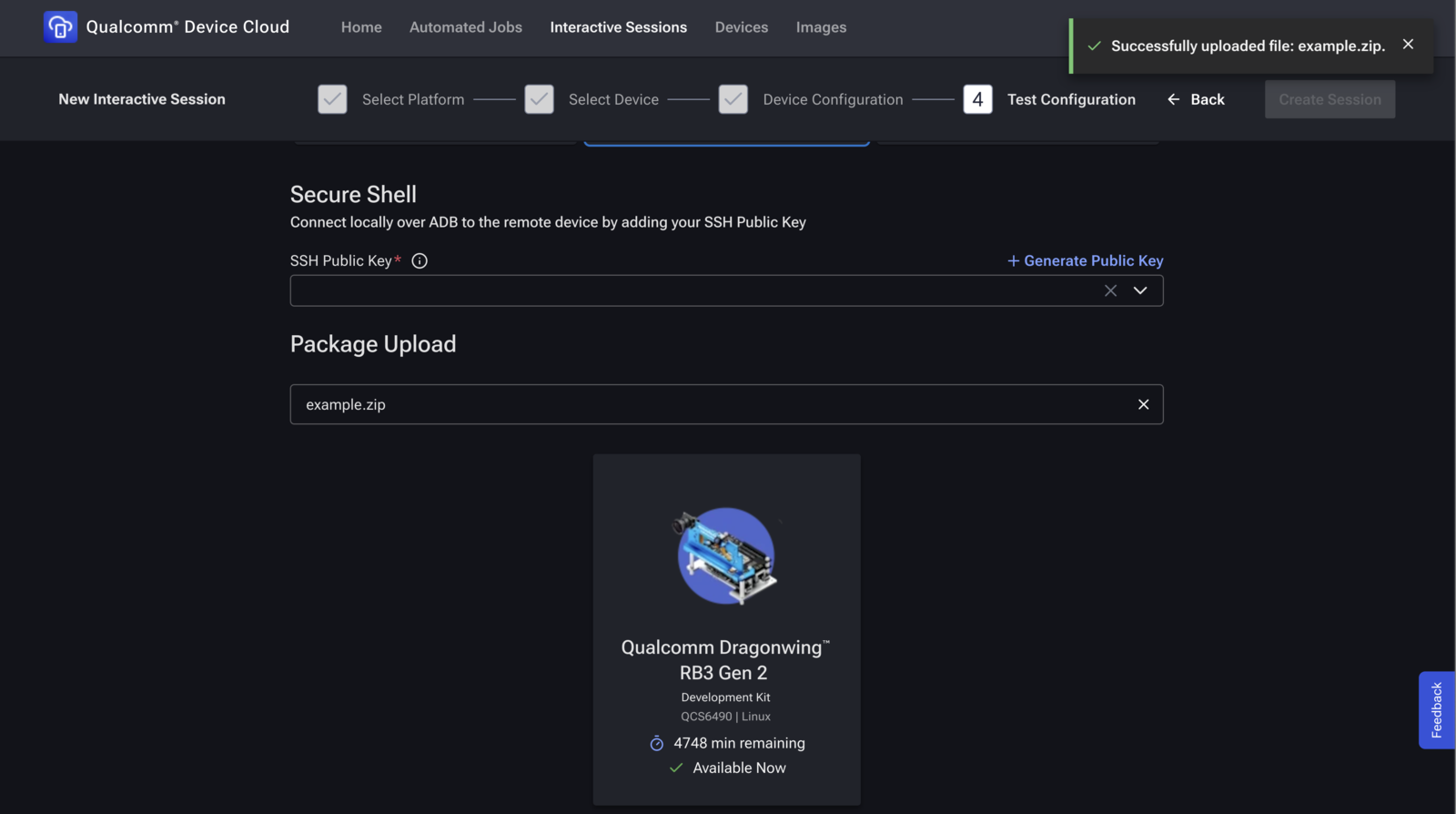

1. Launch an interactive RB3 Gen 2 session

Click the Devices tab in the Qualcomm Device Cloud web UI, then select Advanced on-device AI with Dragonwing™ RB3 Gen 2. You should see a suggestion to Try Now. If you don’t see this option, you may need to request access to the RB3 Gen 2 device type.

- Log in to QDC > Devices > IoT > RB3 Gen 2 > Start Interactive Session.

- Session mode:

  - SSH only – headless shell (fastest).

  - Screen mirroring + SSH – adds VNC if you need the GUI.

- Package upload – This is where you can upload files to the board. Create a zip with your test image (e.g., example.jpg) and upload it here.

  - If you skip this step, you can upload files later using the QDC web UI.

- Click Start. QDC powers on the board and shows your SSH credentials.
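The package upload option above expects a zip archive containing your test image. As a minimal sketch, the archive could be built on your local machine with Python's standard `zipfile` module. The helper name `make_upload_package` and the default archive name `test-image.zip` are assumptions for illustration; QDC does not require any particular file name here as far as this guide states.

```python
import zipfile
from pathlib import Path


def make_upload_package(image_path, archive_path="test-image.zip"):
    """Zip a single test image so it can be attached under Package upload.

    Both the function name and the default archive name are hypothetical;
    only the idea (one image inside one zip) comes from the tutorial.
    """
    image = Path(image_path)
    if not image.is_file():
        raise FileNotFoundError(f"Test image not found: {image}")

    # Store the image at the archive root so it unpacks as a flat file.
    with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        archive.write(image, arcname=image.name)

    return Path(archive_path)
```

Calling `make_upload_package("example.jpg")` in the directory that holds the image would produce `test-image.zip`, which you can then select in the Package upload field.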

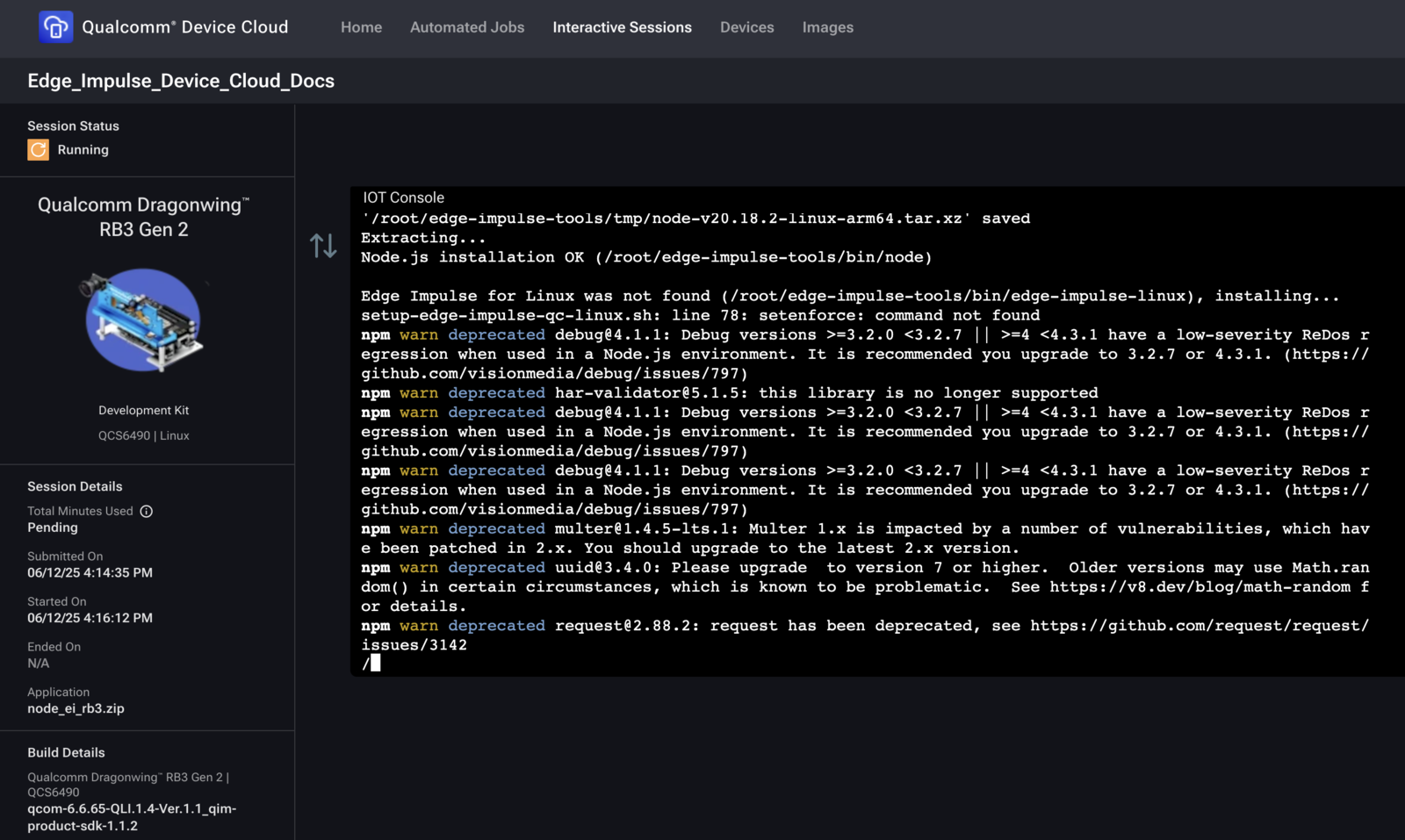

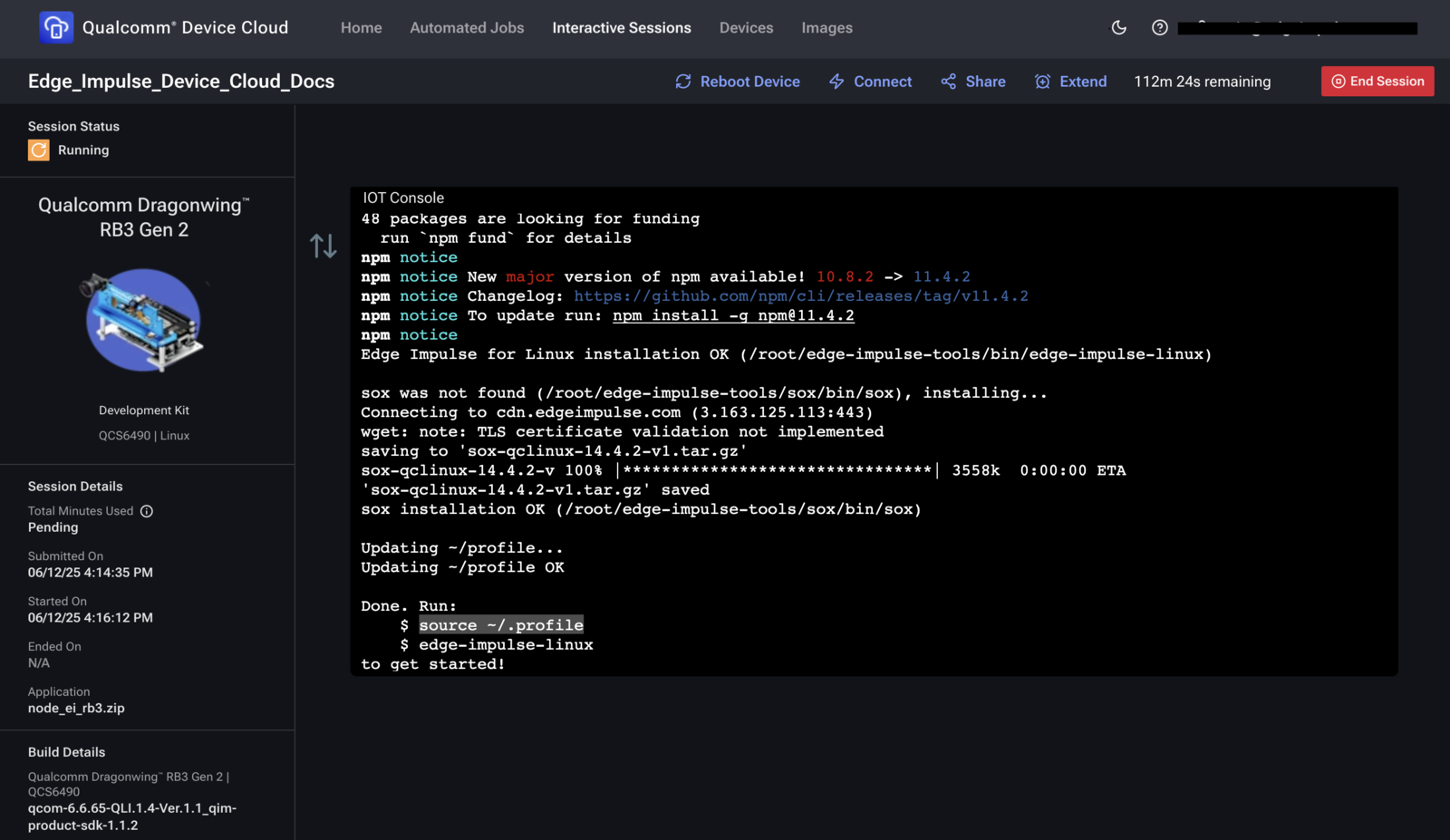

2. Install the Edge Impulse CLI

QDC images are minimal, so Node.js and the Edge Impulse CLI are not preinstalled. We have prepared a script that installs both; run it once per session.

3. Initialise the CLI environment

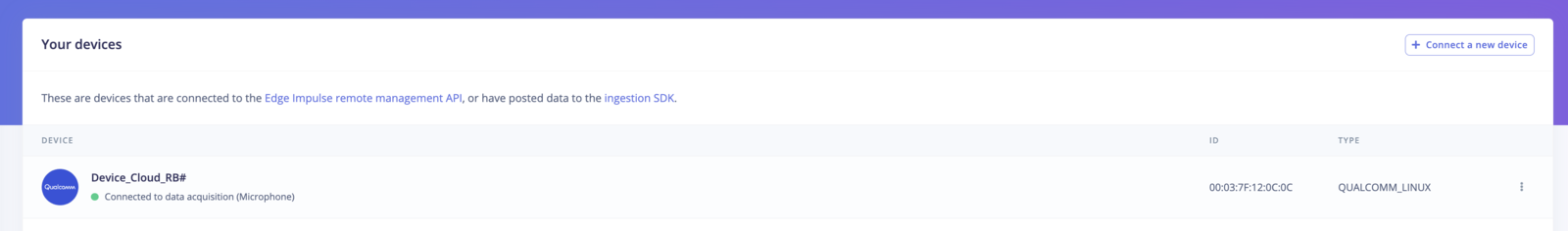

4. Connect the board to Edge Impulse Studio

- Paste the one-time authentication key from Studio > Devices > Connect.

- Select or create a project.

- Camera prompt: Choose None – we will use a static image.

5. Run inference on a static image

5.1 Upload a test image

Upload example.jpg (or any JPEG/PNG) to /data/local/tmp on the board, then move it to your home directory.

5.2 Classify the image

Summary

You have now spun up a virtual RB3 Gen 2 in Qualcomm Device Cloud, installed the Edge Impulse CLI, connected the board to Edge Impulse Studio, and run AI inference on a static test image, all without physical hardware on your desk.

Next steps

From virtual RB3 to QNN acceleration

The virtual RB3 Gen 2 in Device Cloud is a perfect way to validate your Edge Impulse model before you move to a physical Snapdragon device and enable hardware acceleration. In the companion tutorial, QNN Hardware Acceleration on Android, we take the same kind of object detection model and run it on a Snapdragon device with the Qualcomm AI Engine Direct (QNN) TFLite delegate. On a Dragonwing RB3 Gen 2, we measured:

| Configuration | DSP (µs) | Inference (µs) | Speedup |
|---|---|---|---|
| Baseline (TFLite CPU/DSP only) | 5,640 | 5,748 | 1.0× |
| With QNN delegate (HTP) | 3,748 | 527 | ~10.9× |
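The speedup figure can be reproduced from the table as a simple ratio of baseline time to accelerated time. The short sketch below (the `speedup` helper is an illustrative name, not part of any Edge Impulse or Qualcomm API) shows that the ~10.9× value corresponds to the Inference column, while the DSP column on its own improves by roughly 1.5×.

```python
def speedup(baseline_us: float, accelerated_us: float) -> float:
    """Return how many times faster the accelerated run is than the baseline."""
    if accelerated_us <= 0:
        raise ValueError("accelerated_us must be positive")
    return baseline_us / accelerated_us


# Timings in microseconds, copied from the table above.
inference_speedup = speedup(5748, 527)
dsp_speedup = speedup(5640, 3748)

print(f"Inference speedup: {inference_speedup:.1f}x")  # prints "Inference speedup: 10.9x"
print(f"DSP speedup: {dsp_speedup:.1f}x")              # prints "DSP speedup: 1.5x"
```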