You can use any smartphone with a modern browser as a fully supported client for Edge Impulse. You’ll be able to sample raw data (from the accelerometer, microphone, and camera), build models, and deploy machine learning models directly from the Studio. Your phone behaves like any other device, and the data and models that you create using your mobile phone can also be deployed to embedded devices. The mobile client is open source and hosted on GitHub: edgeimpulse/mobile-client. As there are thousands of different phones and operating system versions, we’d love to hear from you there if something is amiss. There’s also a video version of this tutorial.

Connecting to Edge Impulse

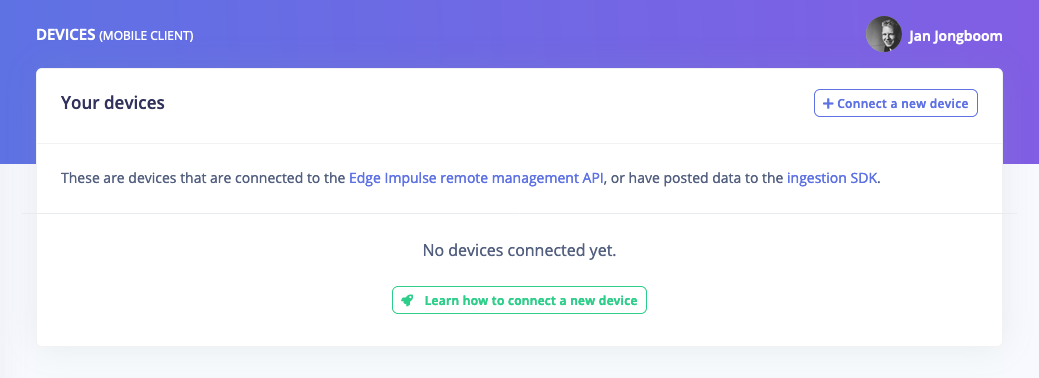

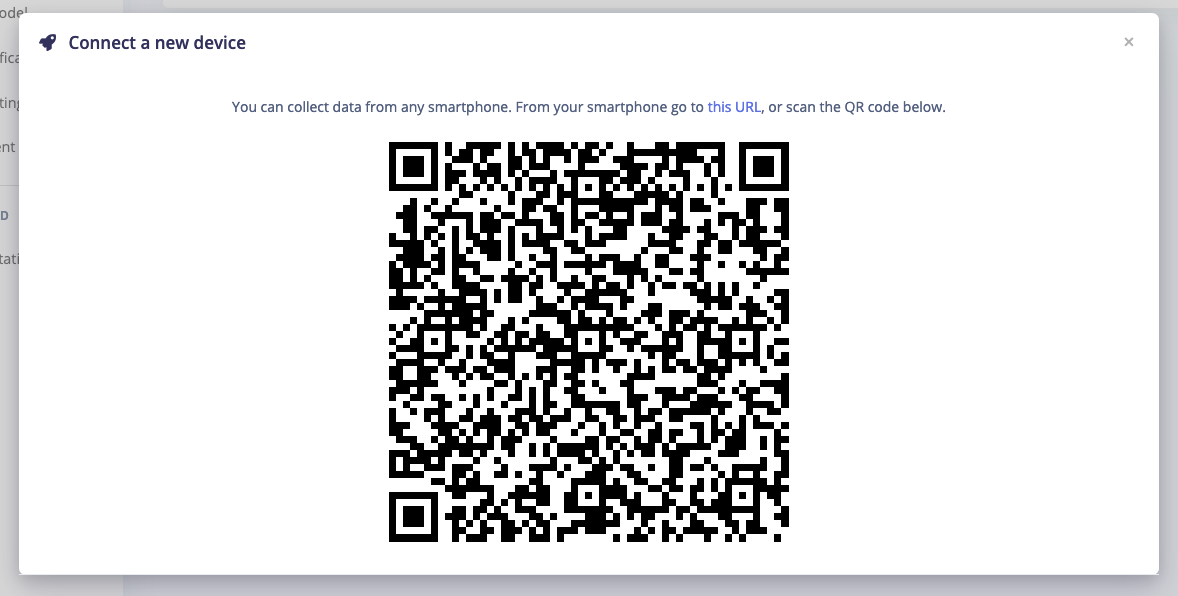

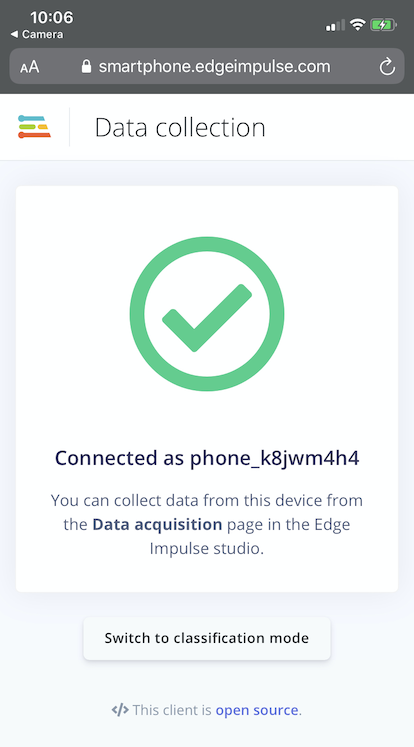

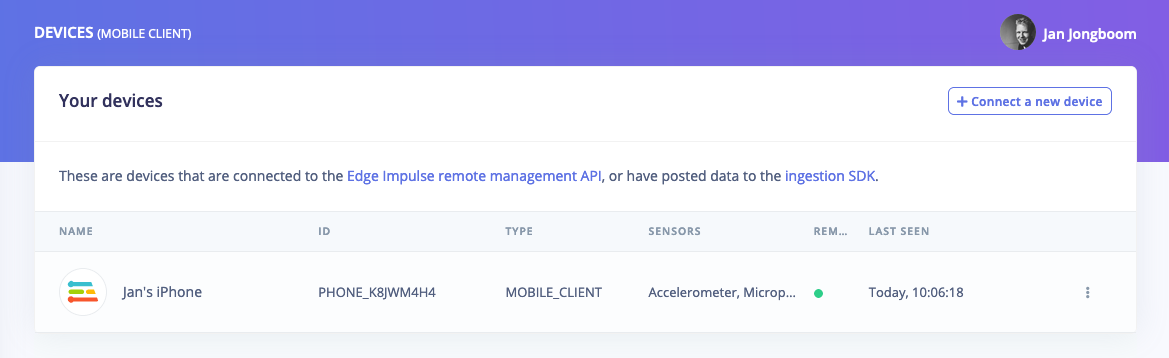

To connect your mobile phone to Edge Impulse, open your Edge Impulse project and head to the Devices page. Then click Connect a new device.

⋮.

Next steps: building a machine learning model

With everything set up, you can now build your first machine learning model with these tutorials:

- Building a continuous motion recognition system

- Keyword spotting

- Sound recognition

- Image classification

- Object detection

- Object detection with centroids (FOMO)

No data (using Chrome on Android)?
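If no accelerometer data shows up, it can help to first check whether the browser is delivering motion events at all. The sketch below is a hypothetical diagnostic and is not part of the Edge Impulse mobile client. It listens for the standard `devicemotion` DOM event and reports whether one arrives before a timeout. The function name `waitForMotionEvent` and the 2-second default timeout are assumptions. The function accepts any `EventTarget` so that it can be exercised outside a browser. On a phone, you would pass `window`.

```typescript
// Hypothetical diagnostic (not part of the Edge Impulse mobile client):
// resolves true if at least one "devicemotion" event arrives before the
// timeout, false otherwise. Pass `window` when running on a phone.
function waitForMotionEvent(
  target: EventTarget,
  timeoutMs: number = 2000,
): Promise<boolean> {
  return new Promise<boolean>((resolve) => {
    const onMotion = (): void => {
      clearTimeout(timer);
      target.removeEventListener("devicemotion", onMotion);
      resolve(true); // the browser is delivering motion data
    };
    // If nothing arrives in time, motion events are probably being blocked.
    const timer = setTimeout(() => {
      target.removeEventListener("devicemotion", onMotion);
      resolve(false);
    }, timeoutMs);
    target.addEventListener("devicemotion", onMotion);
  });
}

// Usage in the browser:
// waitForMotionEvent(window).then((ok) =>
//   console.log(ok ? "Motion data OK" : "No motion data received"));
```

If this resolves `false` in Chrome on Android, the site's motion sensor permission is a likely cause.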

You might need to enable motion sensors in the Chrome settings via Settings > Site settings > Motion sensors.

Deploying back to device

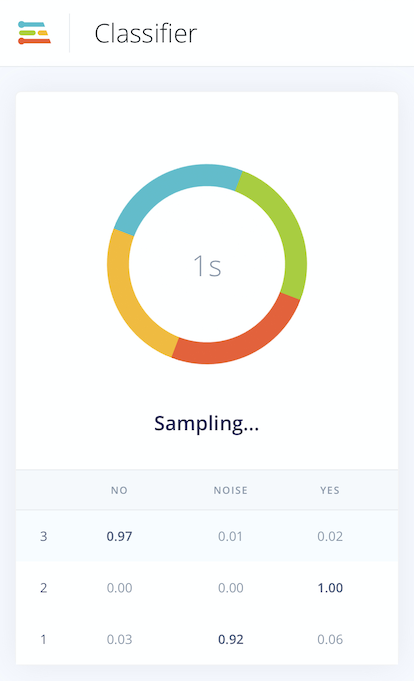

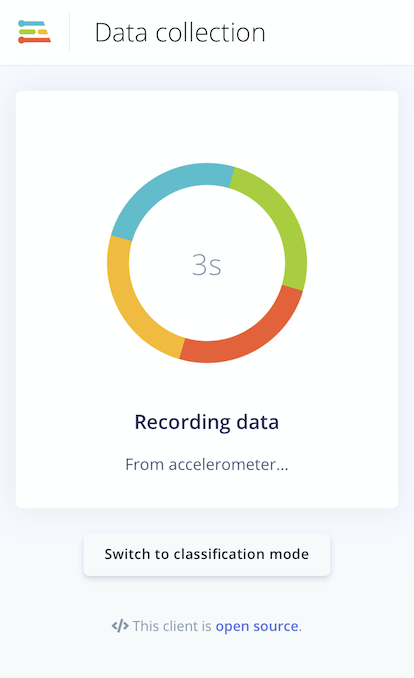

With the impulse designed, trained, and verified, you can deploy this model back to your phone. Running the model on the phone means it works without an internet connection, minimizes latency, and keeps power consumption low. Edge Impulse can package the complete impulse (including the signal processing code, neural network weights, and classification code) into a single WebAssembly package that you can run straight from the browser. To do so, just click Switch to classification mode at the bottom of the mobile client. This first builds the impulse, then samples data from the sensor, runs the signal processing code, and classifies the data.
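The sample, process, and classify sequence described above can be sketched in TypeScript. This is an illustration under stated assumptions, not the code inside the Edge Impulse WebAssembly package. The following are all hypothetical:

- the `Classifier` interface
- the `WindowedInference` class
- the per-axis RMS feature step, which stands in for the impulse's real signal processing block

```typescript
// Hypothetical stand-in for the classifier exposed by the WebAssembly
// package; the real exported API may look different.
interface Classifier {
  classify(features: number[]): Record<string, number>;
}

// One accelerometer reading: x, y, z.
type Sample = [number, number, number];

// Toy signal processing step (an assumption, not the impulse's real DSP
// block): root-mean-square of each axis over the window.
function rmsFeatures(samples: Sample[]): number[] {
  return [0, 1, 2].map((axis) => {
    const sumSq = samples.reduce((acc, s) => acc + s[axis] * s[axis], 0);
    return Math.sqrt(sumSq / samples.length);
  });
}

// Collects samples into fixed-size, non-overlapping windows. When a window
// is full it runs the DSP step, then the classifier, and starts a new window.
class WindowedInference {
  private buffer: Sample[] = [];
  private readonly classifier: Classifier;
  private readonly windowSize: number;

  constructor(classifier: Classifier, windowSize: number) {
    this.classifier = classifier;
    this.windowSize = windowSize;
  }

  // Returns class scores when a window completes, otherwise null.
  push(sample: Sample): Record<string, number> | null {
    this.buffer.push(sample);
    if (this.buffer.length < this.windowSize) return null;
    const features = rmsFeatures(this.buffer);
    this.buffer = [];
    return this.classifier.classify(features);
  }
}
```

In a browser, `push` could be called from a `devicemotion` handler with the x, y, and z values of `accelerationIncludingGravity`. Non-overlapping windows keep the sketch short. A continuous motion classifier would more typically slide the window forward by a fraction of its length, so that movements spanning a window boundary are not missed.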