Why lower compute time?

Developer accounts on Edge Impulse have limits on their compute time per job. Below are some tips to help you stay within these compute limits.

Reduce dataset size

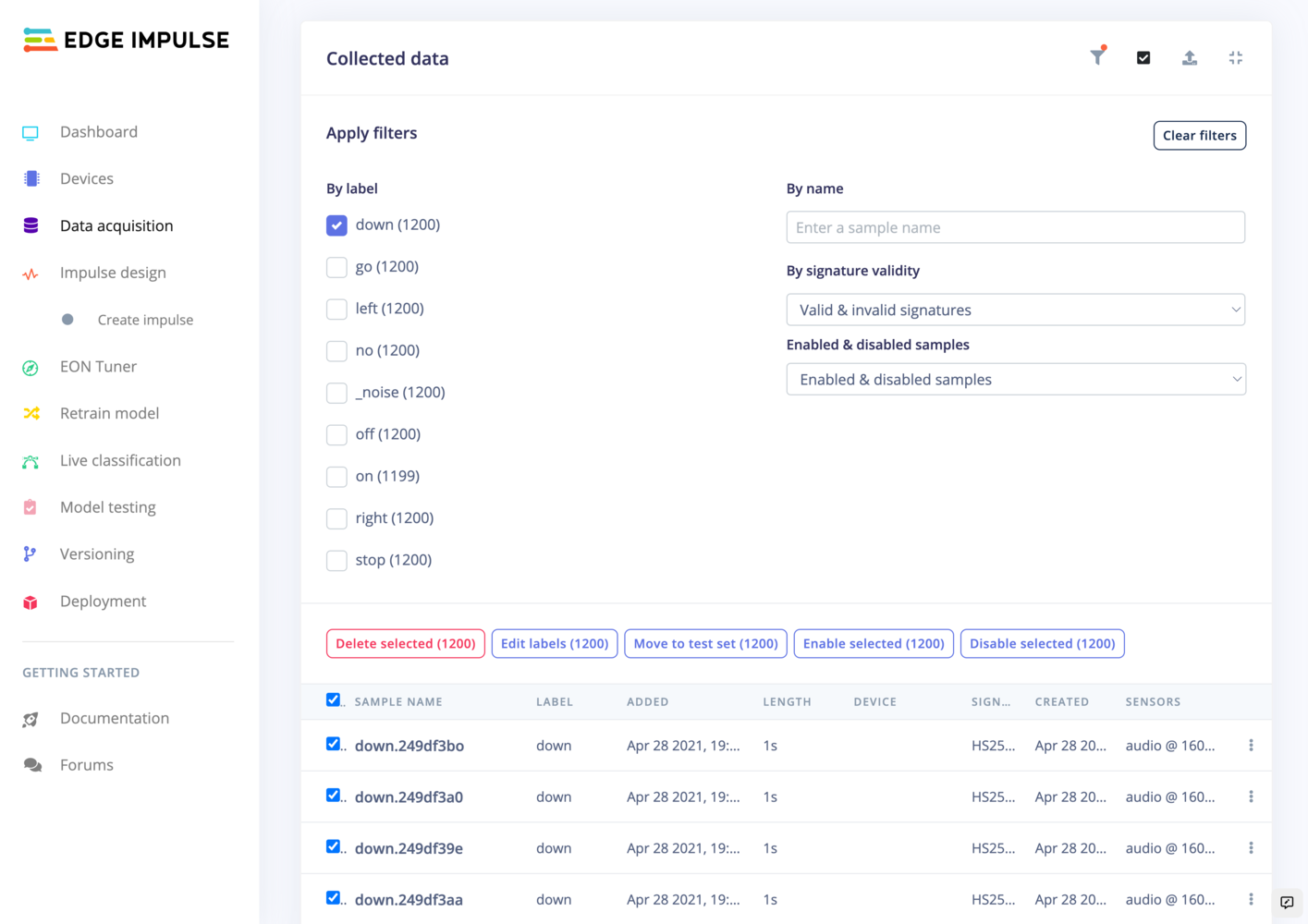

The dataset size has a direct impact on the training time. If you’re reaching the limit, you can reduce your dataset size. To do so, go to Data acquisition and click the Filter and Select icons. You can then either delete samples or disable them:

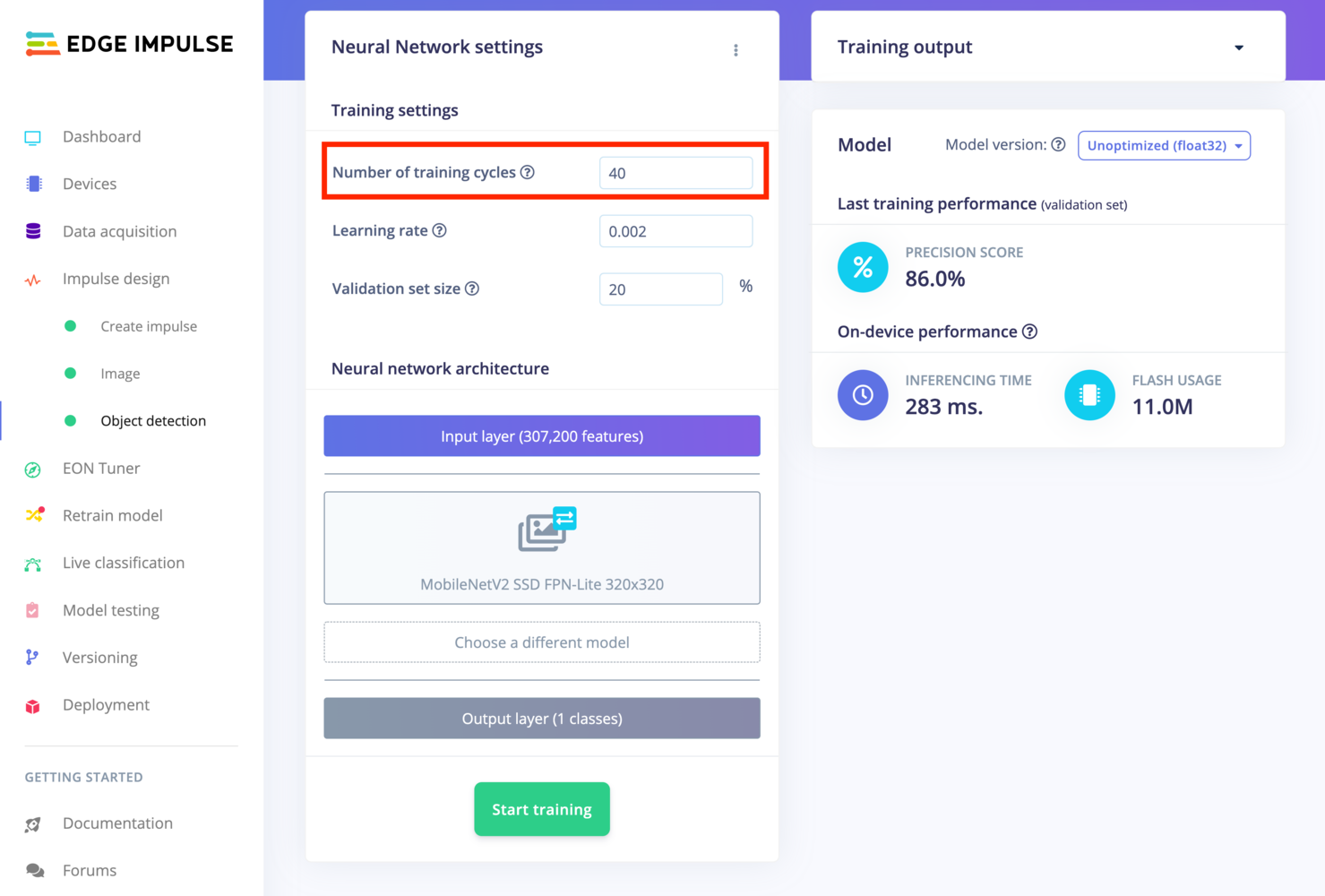
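When choosing which samples to disable, one option is to cap the number of samples per class so that the reduced dataset does not become more imbalanced than the original. The sketch below illustrates that idea on a local list of `(sample_name, label)` pairs. The function name `subsample_per_class` and the sample names are hypothetical; this is not an Edge Impulse API, only a way to decide which samples to keep before applying the choice in Data acquisition.

```python
import random
from collections import defaultdict


def subsample_per_class(samples, max_per_class, seed=0):
    """Keep at most `max_per_class` samples for each label.

    `samples` is a list of (sample_name, label) pairs (hypothetical format).
    """
    rng = random.Random(seed)  # fixed seed so the selection is reproducible
    by_label = defaultdict(list)
    for name, label in samples:
        by_label[label].append(name)

    kept = []
    for label, names in sorted(by_label.items()):
        if len(names) > max_per_class:
            names = rng.sample(names, max_per_class)
        kept.extend((name, label) for name in sorted(names))
    return kept


# Illustrative data: 10 "idle" samples and 3 "wave" samples.
samples = [(f"idle.{i}", "idle") for i in range(10)]
samples += [(f"wave.{i}", "wave") for i in range(3)]

kept = subsample_per_class(samples, max_per_class=5)
kept_names = {name for name, _ in kept}
to_disable = [name for name, _ in samples if name not in kept_names]
```

Here `to_disable` lists the samples that could be disabled rather than deleted, so they remain available if you later have more compute time.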

Reduce your number of epochs

An epoch (or training cycle) is one complete pass of the training dataset through the algorithm. Reducing this hyperparameter reduces the number of complete passes through your dataset and therefore lowers your training time. To reduce the number of epochs, lower the Number of training cycles value.
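As a rough illustration of why fewer epochs means less training work, the total number of parameter updates can be estimated from the dataset size, the batch size, and the number of epochs. The function name and the figures below are assumptions chosen for the example, and the estimate ignores per-step cost, which depends on the model and hardware.

```python
import math


def total_updates(n_samples, batch_size, epochs):
    """Estimate the number of parameter updates in a training run."""
    steps_per_epoch = math.ceil(n_samples / batch_size)
    return steps_per_epoch * epochs


# Illustrative numbers: 1000 training samples, batch size 32.
baseline = total_updates(n_samples=1000, batch_size=32, epochs=100)  # 3200
halved = total_updates(n_samples=1000, batch_size=32, epochs=50)     # 1600
```

Under this simple model, halving the number of training cycles halves the number of updates.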

Apply Early Stopping

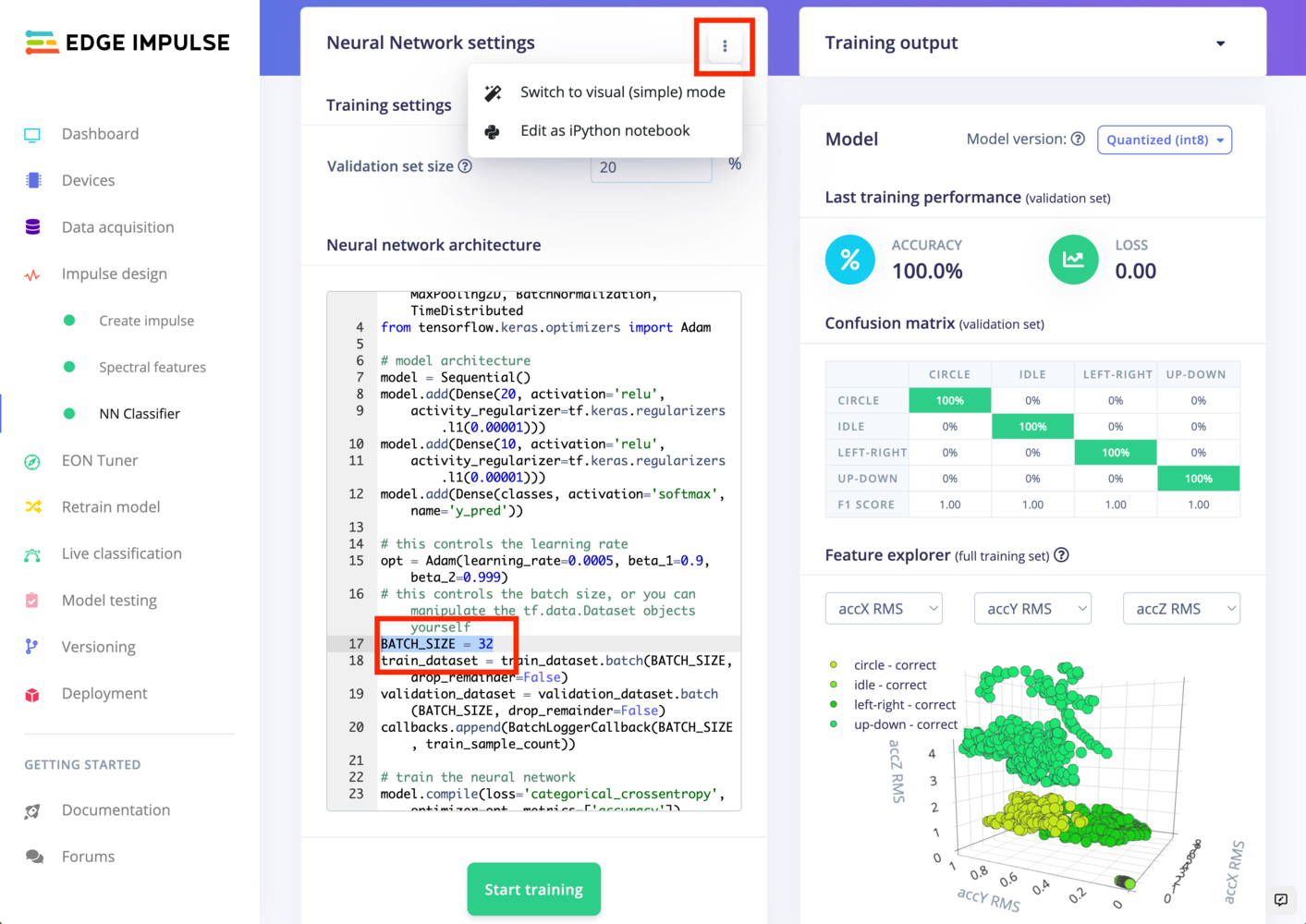
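Early stopping is commonly implemented with a "patience" counter: training halts once the validation loss has failed to improve for a set number of consecutive epochs. The sketch below shows that rule in plain Python on a list of per-epoch validation losses. The function name, the `patience` and `min_delta` defaults, and the loss values are assumptions for illustration, not the code Edge Impulse generates in Expert Mode.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the 1-based epoch at which training would stop.

    Training stops after `patience` consecutive epochs in which the
    validation loss did not improve on the best value by more than
    `min_delta`. If that never happens, all epochs are run.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best - min_delta:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses)


# Illustrative validation losses: improvement stalls after epoch 3.
losses = [0.9, 0.7, 0.6, 0.61, 0.62, 0.63, 0.5]
stop_at = early_stopping_epoch(losses, patience=3)  # stops at epoch 6
```

Note the trade-off in the example: with `patience=3` the run stops at epoch 6 and never sees the lower loss at epoch 7, so a larger patience saves less compute but is less likely to stop too early. In Keras, the `EarlyStopping` callback exposes comparable `monitor` and `patience` parameters.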

Early Stopping is a technique that helps prevent overfitting by halting training at the right time. It stops training as soon as the model starts overfitting, or when further training no longer improves performance, which makes training more efficient and can lead to better model performance. See how to apply Early Stopping in Expert Mode.

Increase your batch size

The batch size is a hyperparameter that defines the number of samples to work through before updating the internal model parameters. A training dataset can be divided into one or more batches. The bigger your batch is, the fewer iterations are performed per epoch. To increase the batch size, on the NN Classifier view, switch to expert mode and change the BATCH_SIZE hyperparameter.
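To make the relationship between batch size and iterations concrete, the sketch below splits a dataset into batches and counts them. The helper `iter_batches` and the dataset size are hypothetical and stand in for whatever batching the training framework performs; only the name `BATCH_SIZE` comes from the text above.

```python
def iter_batches(samples, batch_size):
    """Yield consecutive slices of `samples`, each of at most `batch_size` items."""
    for start in range(0, len(samples), batch_size):
        yield samples[start:start + batch_size]


dataset = list(range(1000))  # stand-in for 1000 training samples

for BATCH_SIZE in (32, 128):
    n_iterations = sum(1 for _ in iter_batches(dataset, BATCH_SIZE))
    print(f"BATCH_SIZE={BATCH_SIZE}: {n_iterations} iterations per epoch")
```

With these numbers, a batch size of 32 gives 32 iterations per epoch and a batch size of 128 gives 8. The last batch is smaller than the others whenever the dataset size is not a multiple of the batch size.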

Reduce the complexity of your neural network architecture

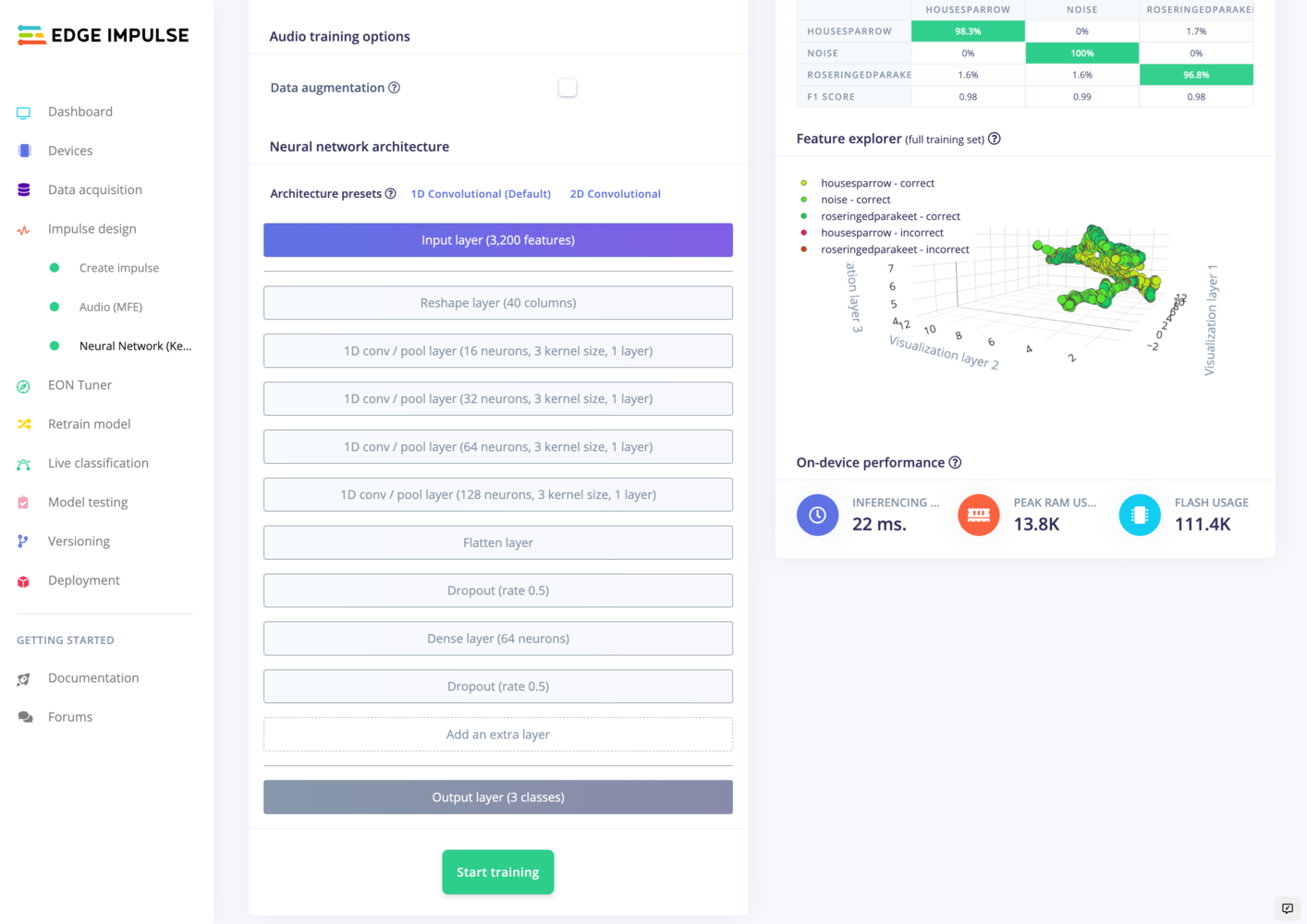

A simple neural network architecture will train faster than a very complex one. To reduce the complexity of your NN architecture, remove some of the layers, or reduce the number of neurons or the kernel size.
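One rough way to compare architectures is to count their trainable parameters, since fewer parameters generally means less work per training step. The sketch below counts parameters for fully connected layers and for a 2D convolution layer with a square kernel. The function names and layer sizes are assumptions for illustration, and parameter count is only a proxy for training time, not an exact measure.

```python
def dense_params(n_inputs, layer_sizes):
    """Count weights and biases in a stack of fully connected layers."""
    total = 0
    for n_units in layer_sizes:
        total += (n_inputs + 1) * n_units  # one weight per input, plus a bias, per unit
        n_inputs = n_units
    return total


def conv2d_params(kernel_size, in_channels, filters):
    """Count weights and biases in a 2D convolution with a square kernel."""
    return kernel_size * kernel_size * in_channels * filters + filters


# Illustrative network: 33 input features, 3 output classes.
original = dense_params(33, [20, 10, 3])  # 923 parameters
reduced = dense_params(33, [10, 3])       # 373 parameters

# Shrinking a convolution kernel from 5x5 to 3x3 (1 input channel, 16 filters).
large_kernel = conv2d_params(5, 1, 16)    # 416 parameters
small_kernel = conv2d_params(3, 1, 16)    # 160 parameters
```

In this example, removing one hidden layer and halving the other cuts the dense network from 923 to 373 parameters. Whether the smaller model is still accurate enough has to be checked against your validation results.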