Sensor fusion is about combining data from various sensors to gain a more comprehensive understanding of your environment. In this tutorial, we will demonstrate sensor fusion by bringing together high-dimensional audio or image data with time-series sensor data. This combination allows you to extract deeper insights from your sensor data.

Concepts

Multi-impulse vs multi-model vs sensor fusion

Running multi-impulse refers to running two separate projects (different data, different DSP blocks and different models) on the same target. It requires modifying some files in the EI-generated SDKs.

Running multi-model refers to running two different models (same data, same DSP block but different TFLite models) on the same target. See the dedicated tutorial on running a motion classifier model and an anomaly detection model on the same device.

Sensor fusion refers to the process of combining data from different types of sensors to give more information to the neural network. To extract meaningful information from this data, you can use the same DSP block, multiple DSP blocks, or neural network embeddings, as we will see in this tutorial.

The Challenge of Handling Multiple Data Types

When you have data coming from multiple sources, such as a microphone capturing audio, a camera capturing images, and sensors collecting time-series data, integrating these diverse data types can be tricky, and conventional methods fall short.

The Limitations of Default Workflows

With the standard workflow, if you have data streams from various sources, you might create a separate DSP block for each data type. For instance, if you’re dealing with audio data from microphones, image data from cameras, and time-series sensor data from accelerometers, you could use:

- A spectrogram-based DSP block for audio data

- An image DSP block for image data

- A spectral analysis block for time-series sensor data

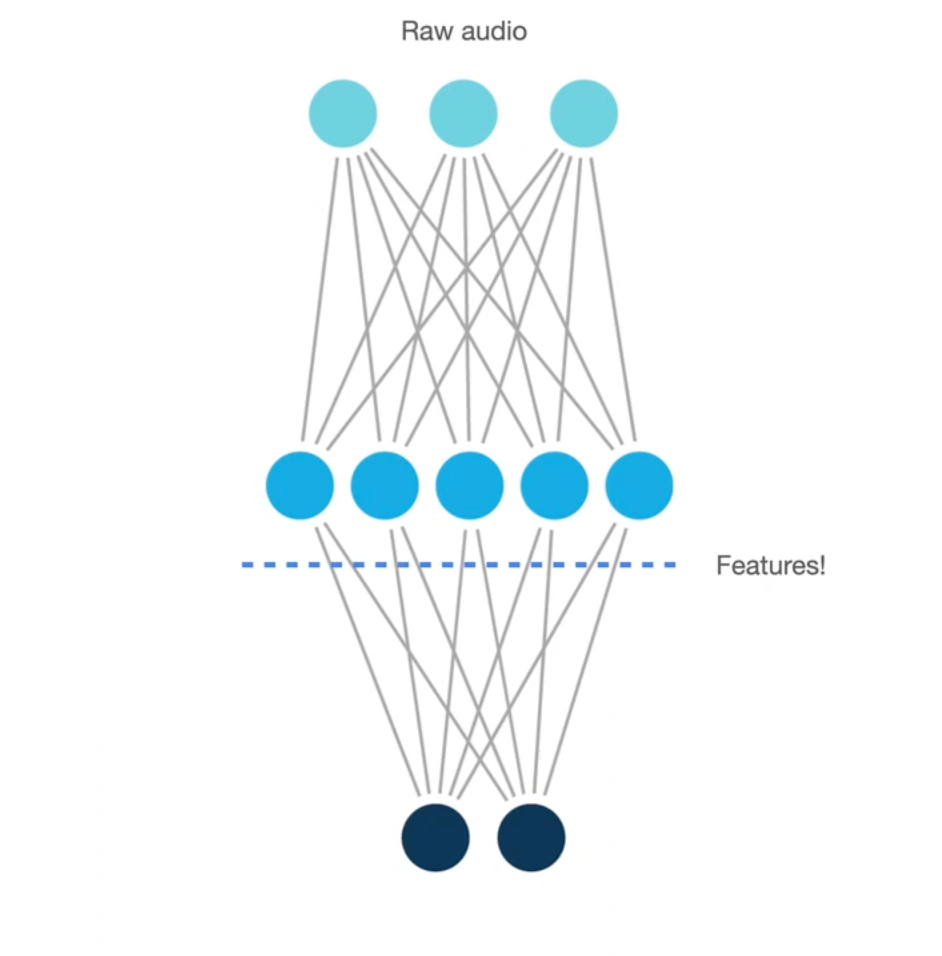

The Role of Embeddings

To work around the limitations described above, you can use neural network embeddings. In essence, embeddings are compact, meaningful representations of your data, learned by a neural network. Embeddings are used throughout Edge Impulse, including in the Data Explorer and in this advanced sensor fusion tutorial. During training, the neural network tries to find the mathematical function that best maps the input to the output. It does this by adjusting the weights of each neuron (the weights are the parameters of this function). The interesting part is that each layer of the neural network starts acting like a feature extraction step, highly tuned to your specific data. Finally, instead of keeping the classifier as the last layer (usually a softmax layer), we cut the neural network before it and use the output of the layer that is now last as the embeddings.
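To make the idea concrete, here is a minimal pure-Python sketch of a tiny two-layer network. The weights and layer sizes are arbitrary placeholders, not values from the tutorial’s model. The classifier’s output comes from the final softmax layer, while the embedding is simply the activation of the layer before it.

```python
import math

# Hypothetical weights for a tiny network: 4 inputs -> 3 hidden units -> 2 classes.
W1 = [[0.5, -0.2, 0.1, 0.0],
      [0.3, 0.8, -0.5, 0.2],
      [-0.4, 0.1, 0.9, 0.6]]
b1 = [0.0, 0.1, -0.1]
W2 = [[1.0, -1.0, 0.5],
      [-0.5, 1.0, 0.2]]
b2 = [0.0, 0.0]

def dense(x, W, b):
    """Fully connected layer: y = W x + b."""
    return [sum(w * xi for w, xi in zip(row, x)) + bias for row, bias in zip(W, b)]

def relu(v):
    return [max(0.0, t) for t in v]

def softmax(v):
    m = max(v)  # subtract the max for numerical stability
    exps = [math.exp(t - m) for t in v]
    total = sum(exps)
    return [e / total for e in exps]

def embed(x):
    """Network cut before the classifier: return the hidden-layer activations."""
    return relu(dense(x, W1, b1))

def classify(x):
    """Full network: hidden layer followed by the softmax classifier."""
    return softmax(dense(embed(x), W2, b2))

sample = [1.0, 0.5, -0.5, 2.0]
print("embedding:", embed(sample))        # 3 learned features
print("class scores:", classify(sample))  # 2 probabilities summing to 1
```

In a real model, the embedding layer is usually much smaller than the raw input (for example, a full spectrogram), which is what makes it a convenient input for a later fusion classifier.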

Sensor Fusion Workflow using Embeddings

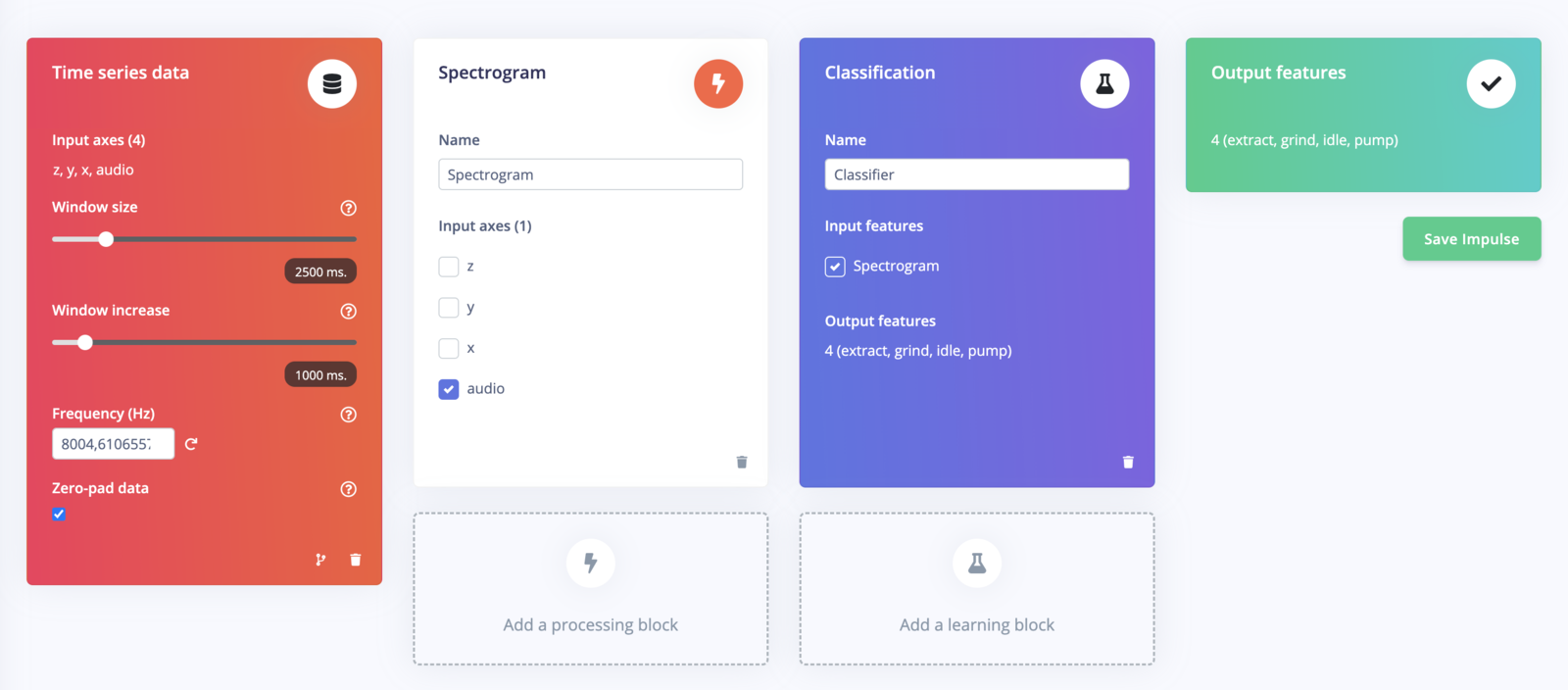

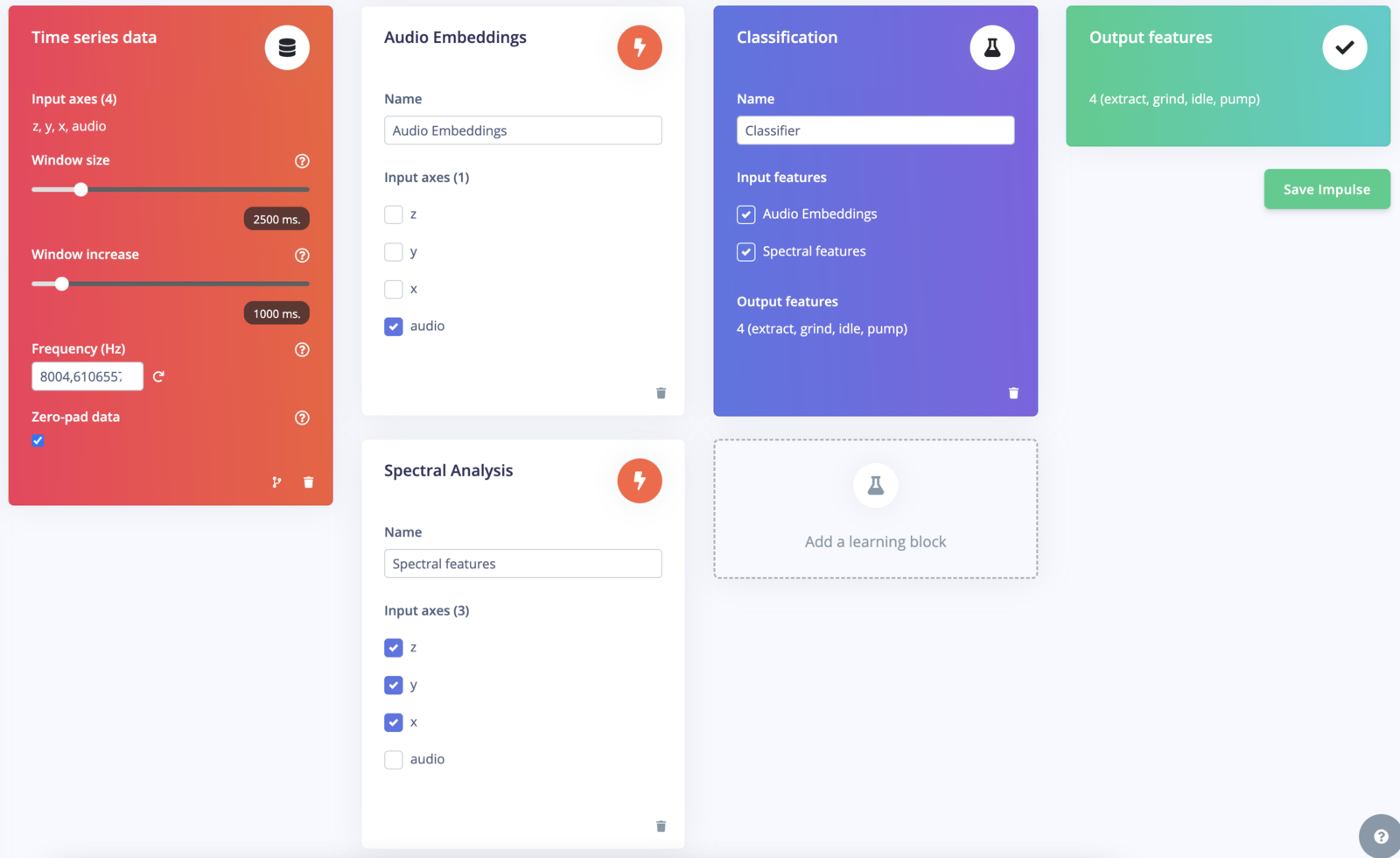

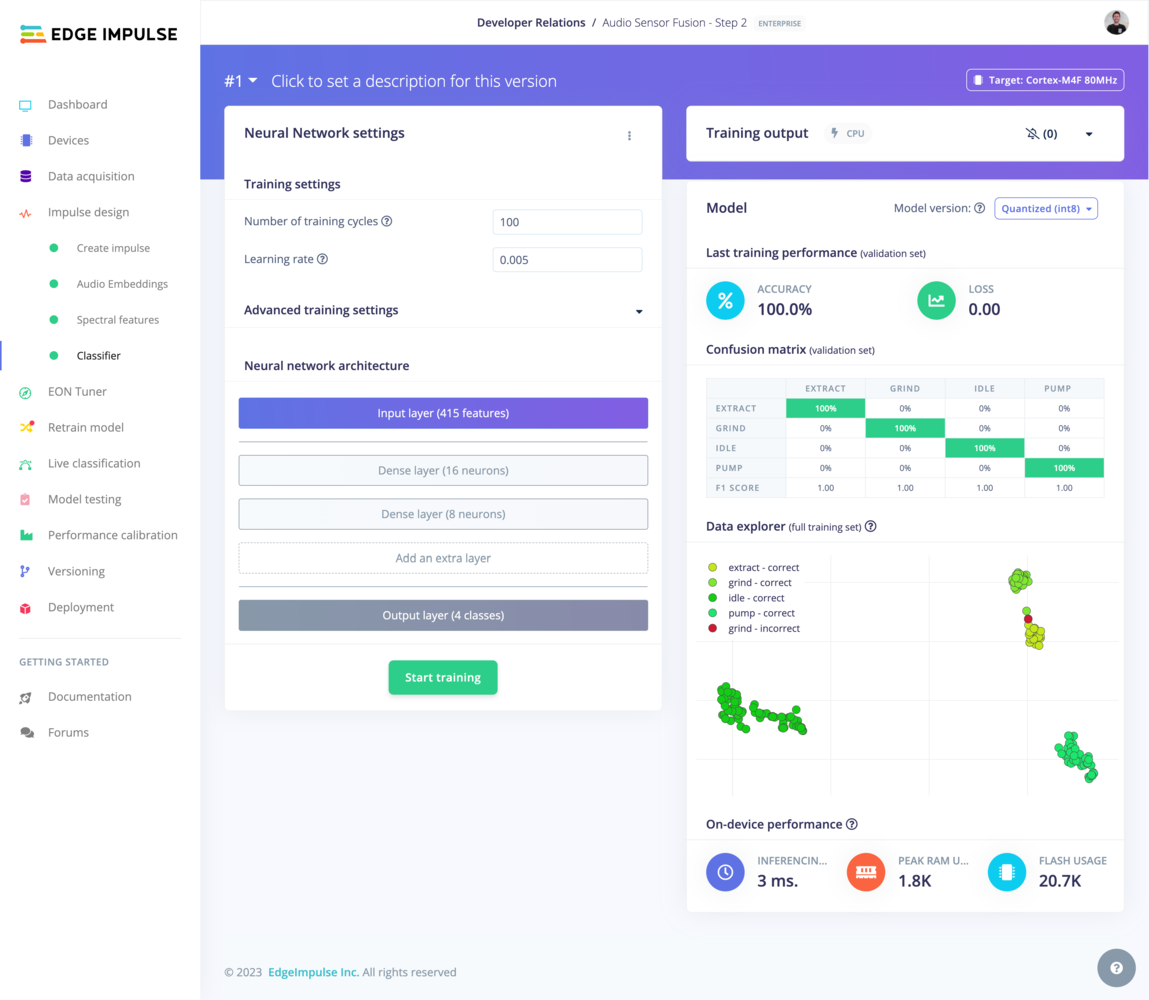

Here’s how we approach advanced sensor fusion with Edge Impulse. In this workflow, we show how to perform sensor fusion using both audio data and accelerometer data to classify different stages of a coffee machine (grind, idle, pump and extract). First, we use a spectrogram DSP block and an NN classifier with two dense layers. This first impulse is then used to generate the embeddings, which are made available in a custom DSP block. Finally, we train a fully connected layer using features coming from both the generated embeddings and a spectral features DSP block.

We have developed two public Edge Impulse projects, a publicly available dataset, and a GitHub repository containing the source code to help you follow the steps:

- Dataset: Coffee Machine Stages

- Edge Impulse project 1 (used to generate the embeddings): Audio Sensor Fusion - Step 1

- Edge Impulse project 2 (final impulse): Audio Sensor Fusion - Step 2

- GitHub repository containing the source code: Sensor fusion using NN Embeddings

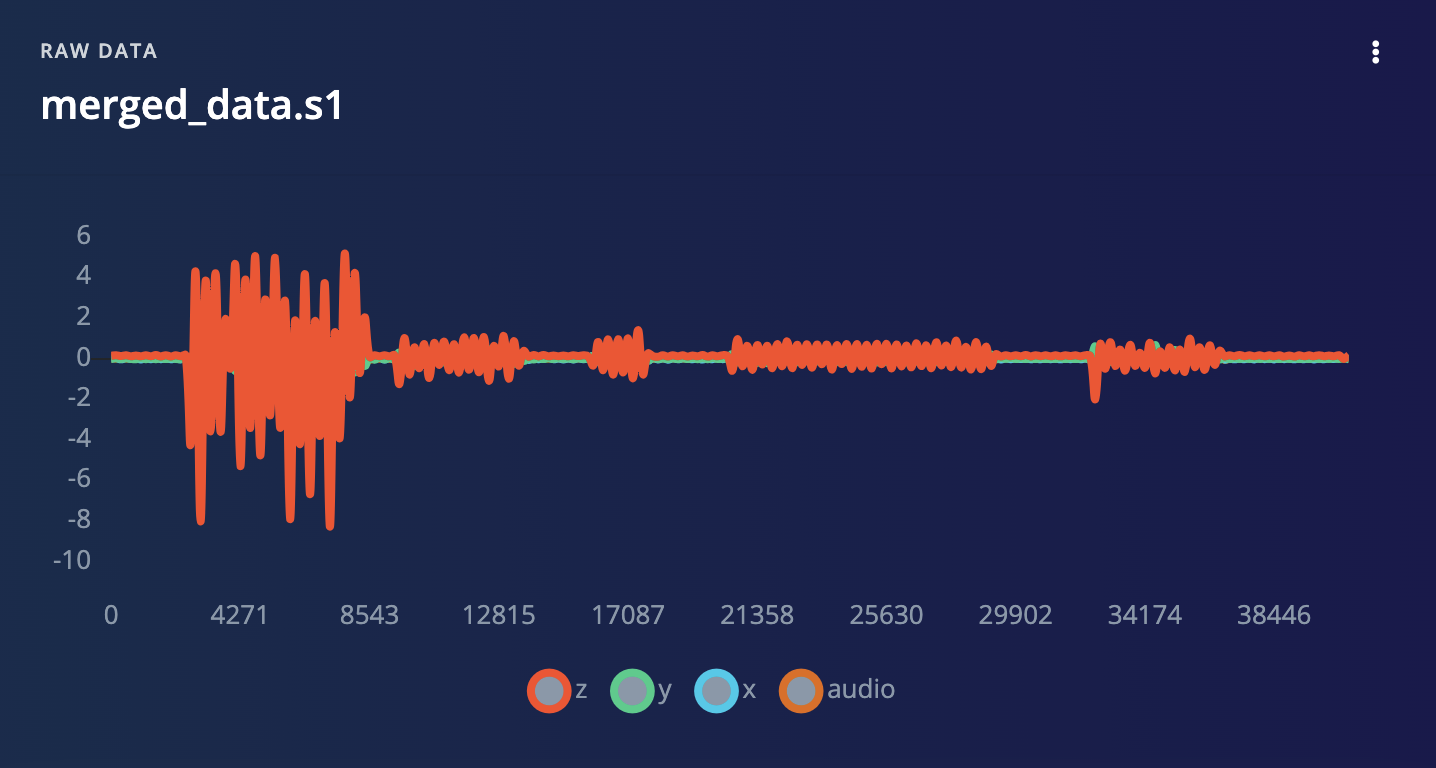

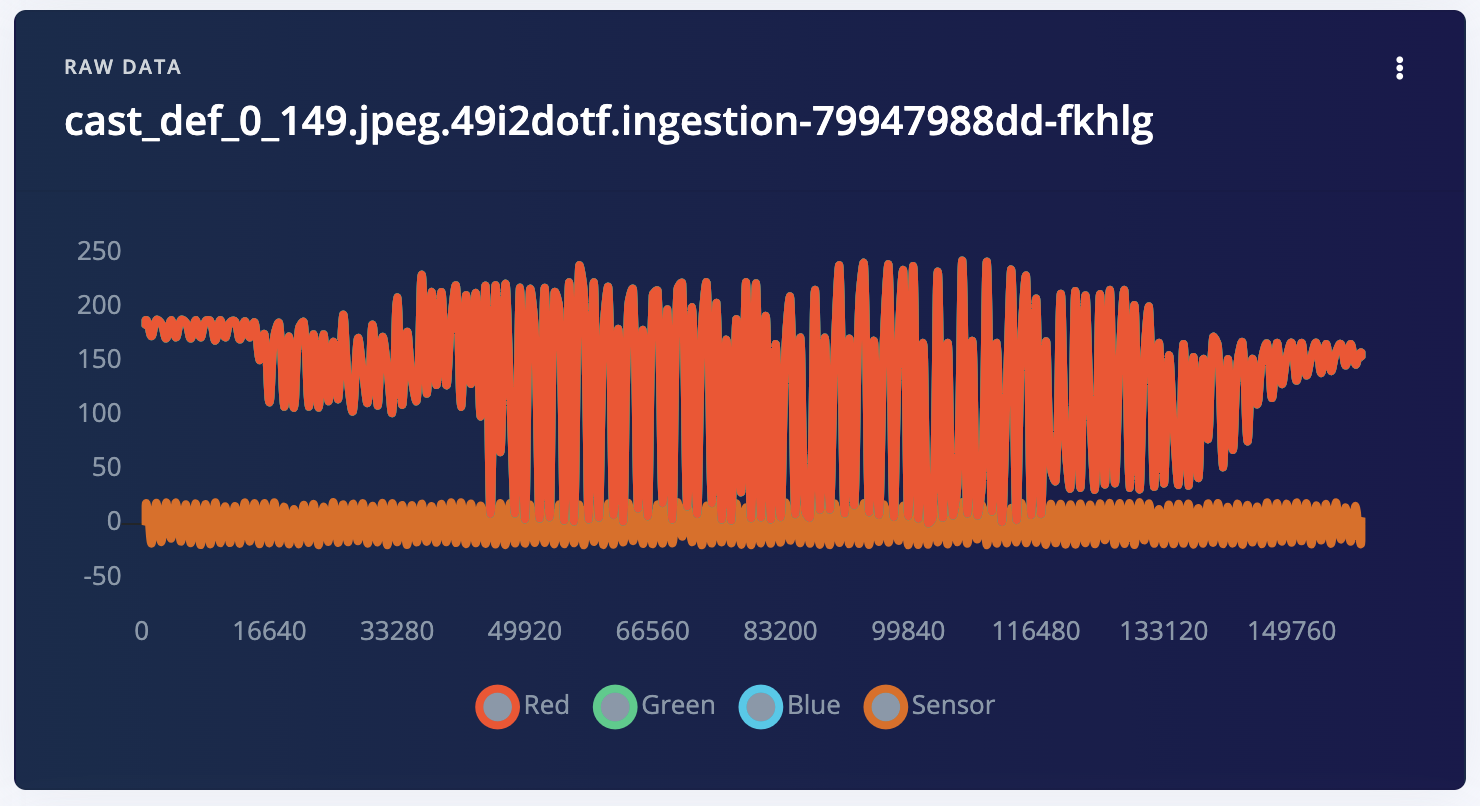

1. Prepare your dataset

The first step is to have input data samples that contain data from both sensors. In Edge Impulse Studio, you can easily visualize time-series data, such as audio and accelerometer data.
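A sample that contains both sensors is a multi-axis time series. The sketch below assumes the flat raw buffer is interleaved time step by time step (all axes for t0, then all axes for t1, and so on) and uses a hypothetical axis order of one audio axis followed by three accelerometer axes. It shows how each DSP block can pick out only the axes it needs.

```python
def split_axes(raw, axis_names):
    """De-interleave a flat [t0_a0, t0_a1, ..., t1_a0, ...] buffer into per-axis lists."""
    n_axes = len(axis_names)
    if len(raw) % n_axes != 0:
        raise ValueError("buffer length is not a multiple of the number of axes")
    # Axis i occupies every n_axes-th value, starting at offset i.
    return {name: raw[i::n_axes] for i, name in enumerate(axis_names)}

# Hypothetical axis order for one coffee-machine sample: audio + 3 accelerometer axes.
axes = ["audio", "accX", "accY", "accZ"]
raw = [0.01, 0.1, 0.2, 9.8,
       0.03, 0.1, 0.3, 9.7,
       -0.02, 0.2, 0.2, 9.9]

channels = split_axes(raw, axes)
print(channels["audio"])  # audio axis only: would go to the audio/embeddings block
print(channels["accZ"])   # one accelerometer axis: would go to spectral analysis
```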

2. Train an Edge Impulse project for each high-dimensional sensor

Train a separate project for each type of high-dimensional data (audio or image data). Each project contains both a DSP block and a trained neural network.

3. Generate the Embeddings using the exported C++ inferencing SDK

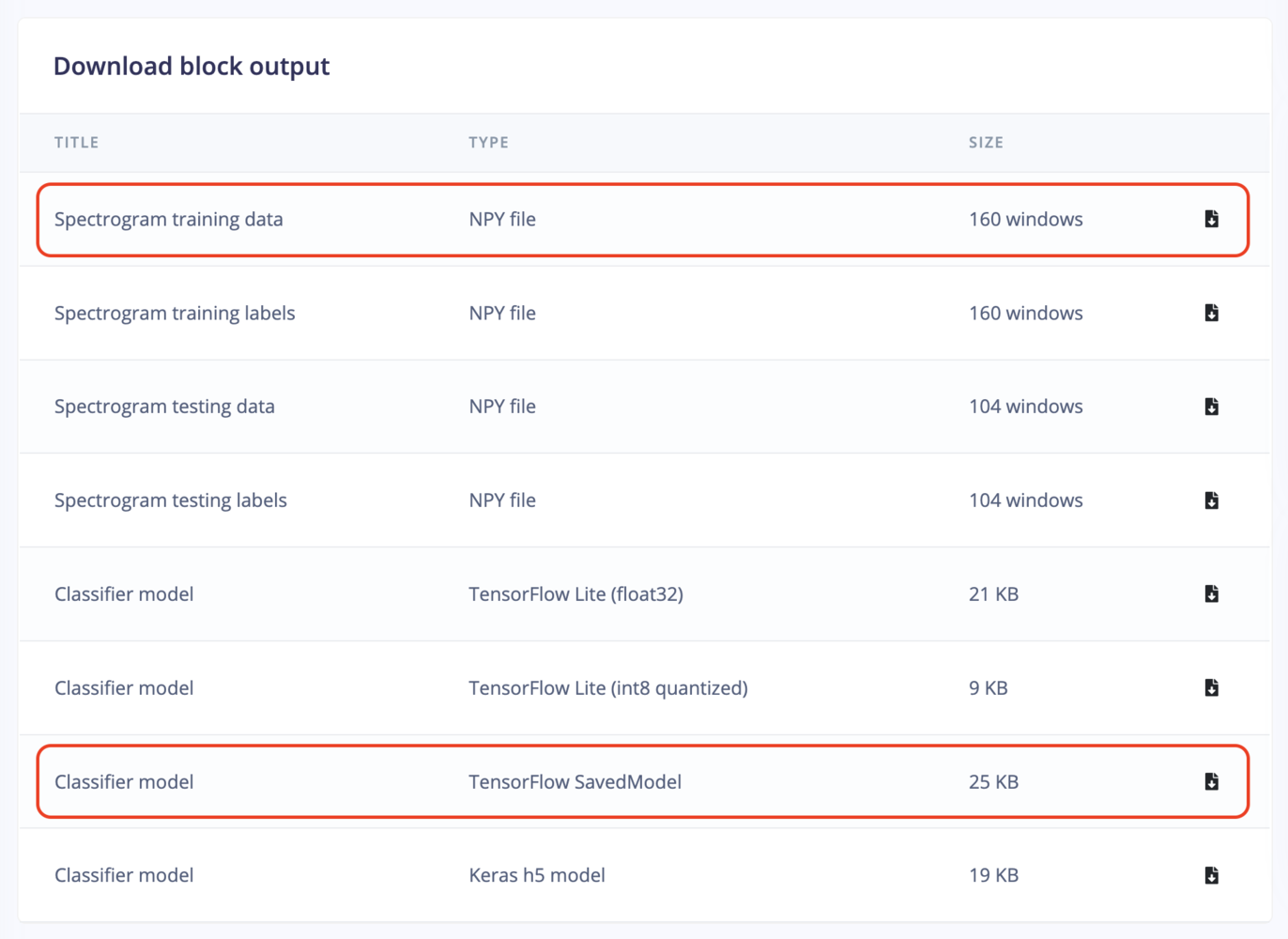

Clone the Sensor fusion using NN Embeddings repository listed above. From the dashboard of your Step 1 project, download the trained model as a TensorFlow SavedModel (saved_model). Extract the `saved_model` directory and place it under the `/input` directory.

Download Test Data: From the same dashboard, download the test or train data NPY file (`input.npy`). Place this NumPy array file under the `/input` directory. This allows us to generate a quantized version of the TFLite embeddings model. Ideally, choose the test data if you have some available.

Have a look at the `saved_model_to_embeddings.py` script for a better understanding of this conversion.
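Why does the conversion need `input.npy`? Full-integer quantization maps floating-point values to int8 through a scale and a zero point that are estimated from representative data. The sketch below shows a simplified per-tensor min/max version of that affine mapping in pure Python. TensorFlow Lite’s converter performs its own, more involved calibration for every tensor in the model, so treat this only as an illustration of the idea, not as what `saved_model_to_embeddings.py` does internally.

```python
def calibrate_int8(values):
    """Estimate an affine int8 mapping (scale, zero_point) from representative data."""
    lo = min(min(values), 0.0)  # the range must include 0 so 0.0 is exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for all-zero data
    zero_point = round(-128 - lo / scale)
    return scale, zero_point

def quantize(x, scale, zero_point):
    q = round(x / scale) + zero_point
    return max(-128, min(127, q))  # clamp to the int8 range

def dequantize(q, scale, zero_point):
    return (q - zero_point) * scale

representative = [-1.5, -0.2, 0.0, 0.7, 2.3]  # stand-in for values loaded from input.npy
scale, zp = calibrate_int8(representative)
for x in representative:
    q = quantize(x, scale, zp)
    print(x, "->", q, "->", round(dequantize(q, scale, zp), 3))
```

If the representative data does not cover the range seen at inference time, values outside it get clamped, which is why data close to your real inputs (ideally the test set) gives a better quantized model.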

4. Encapsulate the Embeddings in a Custom DSP Block

To make sensor fusion work seamlessly, Edge Impulse lets you create custom DSP blocks. Here, the custom block combines the necessary spectrogram/image-processing and neural network components for each data type. Custom DSP Block Configuration: In the DSP block, perform two key operations, as specified in the `dsp.py` script:

- Run the DSP step with fixed parameters.

- Run the neural network.
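A minimal sketch of those two operations, assuming a `generate_features`-style entry point similar to the one used in Edge Impulse’s example custom processing blocks (check the repository’s `dsp.py` for the real signature and return fields). The DSP step here is a hypothetical stand-in (log frame energies rather than a real spectrogram), and `EMBEDDING_WEIGHTS` is a placeholder for the embeddings TFLite model. The DSP parameters are fixed constants rather than user-tunable, because the embedding network was trained on features computed with exactly those values.

```python
import math

# Fixed DSP parameters (hypothetical values): they must match the Step 1 project's DSP block.
FRAME_LENGTH = 4
FRAME_STRIDE = 2

# Placeholder for the embeddings model: maps 3 frame energies to 2 embedding values.
EMBEDDING_WEIGHTS = [[0.2, -0.1, 0.4],
                     [0.5, 0.3, -0.2]]

def frame_energies(signal, frame_length=FRAME_LENGTH, stride=FRAME_STRIDE):
    """Stand-in DSP step: log energy of each overlapping frame."""
    energies = []
    for start in range(0, len(signal) - frame_length + 1, stride):
        frame = signal[start:start + frame_length]
        energies.append(math.log(sum(s * s for s in frame) + 1e-6))
    return energies

def run_embedding_model(features):
    """Stand-in for TFLite inference: a single linear layer."""
    if len(features) != len(EMBEDDING_WEIGHTS[0]):
        raise ValueError("DSP output size does not match the embedding model input")
    return [sum(w * f for w, f in zip(row, features)) for row in EMBEDDING_WEIGHTS]

def generate_features(implementation_version, draw_graphs, raw_data, axes, sampling_freq, **kwargs):
    dsp_out = frame_energies(raw_data)          # 1. DSP step with fixed parameters
    embeddings = run_embedding_model(dsp_out)   # 2. neural network on the DSP output
    return {
        "features": embeddings,
        "graphs": [],
        "output_config": {"type": "flat", "shape": {"width": len(embeddings)}},
    }

result = generate_features(1, False, [0.1, -0.3, 0.5, 0.2, -0.4, 0.3, 0.1, 0.0], ["audio"], 16000)
print(result["features"], result["output_config"])
```

The size check in `run_embedding_model` catches the most common mistake when adapting the block: DSP parameters that no longer match the ones the embedding network was trained with.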

Update the fixed DSP parameters in `dsp-blocks/features-from-audio-embeddings/dsp.py` to match your DSP configuration.

The `get_tflite_implementation` function returns the TFLite model. Note that the on-device implementation will not be correct initially when generating the C++ library, as only the neural network part is compiled. We will fix this in the final exported C++ library.

Now publish your new custom DSP block.

5. Create a New Impulse

Multiple DSP Blocks: Create a new impulse with three DSP blocks and a classifier block. The routing should be as follows:

- Audio data routed through the custom block.

- Sensor data routed through spectral analysis.
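Conceptually, the classifier in this impulse sees one flat feature vector: the outputs of the DSP blocks placed one after the other. The sketch below shows that concatenation and a single fully connected layer with softmax on top. The feature values, sizes and weights are hypothetical placeholders; in Studio, the learn block handles this step for you.

```python
import math

def concat_features(*blocks):
    """Concatenate the outputs of several DSP blocks into one feature vector."""
    fused = []
    for block in blocks:
        fused.extend(block)
    return fused

def dense_softmax(x, W, b):
    """Fully connected layer followed by softmax."""
    logits = [sum(w * xi for w, xi in zip(row, x)) + bias for row, bias in zip(W, b)]
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

audio_embeddings = [0.12, -0.40, 0.88]  # from the custom embeddings block (hypothetical)
spectral_features = [0.05, 1.30]        # from the spectral analysis block (hypothetical)
fused = concat_features(audio_embeddings, spectral_features)

labels = ["extract", "grind", "idle", "pump"]
# Placeholder weights: one row per class, one column per fused feature.
W = [[0.1 * (i + j) - 0.2 for j in range(len(fused))] for i in range(len(labels))]
b = [0.0] * len(labels)

scores = dense_softmax(fused, W, b)
print(dict(zip(labels, (round(s, 3) for s in scores))))
```

Because the audio side contributes only a handful of embedding values instead of a full spectrogram, both sensors end up with comparably sized contributions to the fused vector.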

6. Export and Modify the C++ Library

Export as a C++ Library:

- In the Edge Impulse platform, export your project as a C++ library.

- Choose the model type that suits your target device (`quantized` vs. `float32`).

- Make sure to select the EON compiler option.

- Copy the exported C++ library to the `example-cpp` folder for easy access.

- In the `model-parameters/model_variables.h` file of the exported C++ library, add a forward declaration for the custom DSP block you created.

- Replace `&extract_tflite_eon_features` with `&custom_sensor_fusion_features` in the `ei_dsp_blocks` object.

- In the `main.cpp` file of the C++ library, implement the `custom_sensor_fusion_features` block. This block should:

  - Call into the Edge Impulse SDK to generate features.

  - Execute the rest of the DSP block, including neural network inference.

7. Compile and run the app

- Copy a test sample’s raw features into the `features[]` array in `source/main.cpp`.

- Enter `make -j` in this directory to compile the project. If you encounter an out-of-memory (OOM) error, try `make -j4` (replace 4 with the number of cores available).

- Enter `./build/app` to run the application.

- Compare the output predictions to the predictions of the test sample in Edge Impulse Studio.
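Copying the raw features by hand is error-prone for long samples. Assuming you copied them from Studio as a comma-separated list, a small hypothetical helper like the one below formats them as a C float array initializer. You can use its output to replace the contents of the existing `features[]` array in `source/main.cpp`. The function name and formatting choices are illustrative only.

```python
def to_c_array(raw_features: str, name: str = "features", per_line: int = 8) -> str:
    """Turn comma-separated raw features into a C float array initializer."""
    values = [v.strip() for v in raw_features.split(",") if v.strip()]
    for v in values:
        float(v)  # raises ValueError if something other than a number was pasted
    lines = [", ".join(values[i:i + per_line]) for i in range(0, len(values), per_line)]
    body = ",\n    ".join(lines)
    return f"static const float {name}[] = {{\n    {body}\n}};"

print(to_c_array("0.0120, -0.0031, 9.81, 0.2, 0.3"))
```

Keeping the values as the original strings (rather than re-printing parsed floats) avoids changing their precision, so the on-device input matches what Studio used.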