Like the Spectrogram block, the Audio MFE processing block extracts time and frequency features from a signal. However, it uses a non-linear scale in the frequency domain, called the Mel scale. It performs well on audio data, mostly for non-voice recognition use cases where the sounds to be classified can be distinguished by the human ear.

GitHub repository containing all DSP block code: edgeimpulse/processing-blocks.
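To give a feel for what a non-linear frequency scale means, here is a minimal sketch of a Hz-to-Mel conversion. It assumes one widely used formulation of the Mel scale (`2595 * log10(1 + f / 700)`); the exact formula used inside the MFE block is not stated on this page, and the function names `hz_to_mel` and `mel_to_hz` are illustrative only.

```python
import math


def hz_to_mel(hz: float) -> float:
    """Convert Hz to mels using a common formulation (an assumption here)."""
    return 2595.0 * math.log10(1.0 + hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


# The same 1000 Hz step covers fewer mels as the frequency rises,
# which is why Mel-spaced filters are narrow at low frequencies
# and wide at high frequencies.
low_step = hz_to_mel(2000) - hz_to_mel(1000)
high_step = hz_to_mel(8000) - hz_to_mel(7000)
print(f"1000 -> 2000 Hz spans {low_step:.1f} mel")
print(f"7000 -> 8000 Hz spans {high_step:.1f} mel")
```

This mirrors human hearing, which resolves pitch differences more finely at low frequencies than at high ones.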


Feature output format

The “Processed features” array has the following format:

- Column major, from low frequency to high

- The number of rows equals the filter number

- Each column represents a single frame

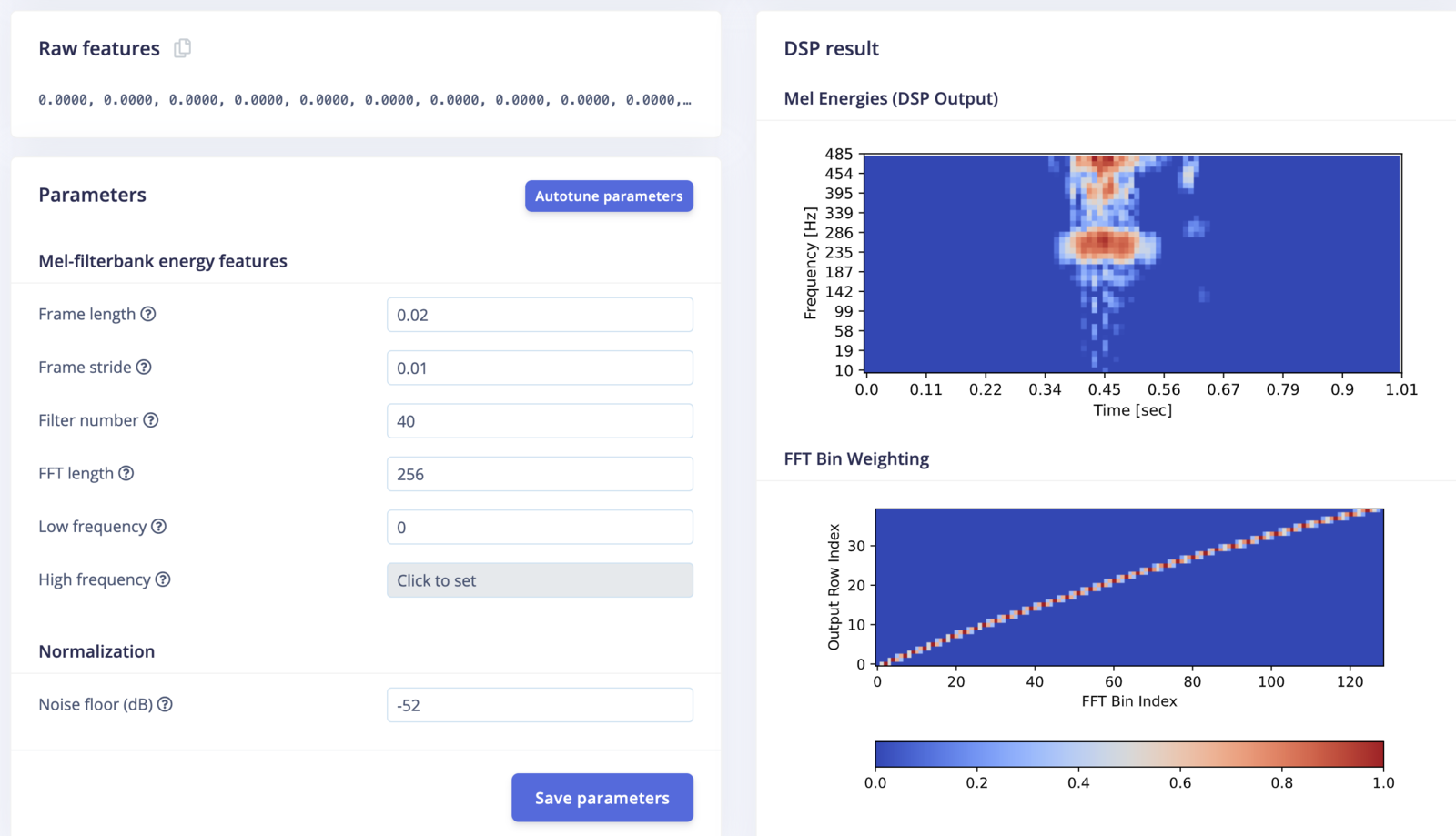
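The layout described above can be sketched as follows. This is an illustration under stated assumptions, not the block's own code: it assumes the processed features arrive as a flat list, and `unflatten_column_major` and the sample values are hypothetical.

```python
def unflatten_column_major(features, num_filters):
    """Split a flat, column-major feature list into frames.

    Each returned frame is one column of the feature matrix and holds
    num_filters values ordered from the lowest to the highest band.
    """
    if num_filters <= 0 or len(features) % num_filters != 0:
        raise ValueError("feature count must be a multiple of num_filters")
    return [
        features[start:start + num_filters]
        for start in range(0, len(features), num_filters)
    ]


# Hypothetical data: 3 filters (rows) and 2 frames (columns).
flat = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
frames = unflatten_column_major(flat, num_filters=3)

# In column-major order, the value for filter r in frame c
# sits at index c * num_filters + r of the flat list.
r, c = 1, 1
value = flat[c * 3 + r]
print(frames, value)
```

Reading the flat array one column at a time therefore walks through the signal frame by frame, with each frame listing its filter outputs from low frequency to high.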

Audio MFE parameters

Compatible with the DSP Autotuner

Picking the right parameters for DSP algorithms can be difficult. It often requires a lot of experience and experimenting. The autotuning function makes this process easier by recommending a set of parameters that is tuned for your dataset.

- Frame length: The length of each frame in seconds

- Frame stride: The step between successive frames in seconds

- Filter number: The number of triangular filters applied to the spectrogram

- FFT length: The FFT size

- Low frequency: Lowest band edge of Mel-scale filterbanks

- High frequency: Highest band edge of Mel-scale filterbanks

- Noise floor (dB): Signal below this level is dropped
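To see how these parameters interact, the sketch below estimates the number of frames (columns) produced for a window and the band edges of the Mel filters. It rests on several assumptions that this page does not confirm: frames are counted without padding, the Mel scale uses the common `2595 * log10(1 + f / 700)` formulation, and filter edges are spaced equally in mels between the low and high frequency. All function names and parameter values are illustrative, not the block's defaults.

```python
import math


def frame_count(num_samples, sample_rate, frame_length, frame_stride):
    """Whole frames that fit in the signal, assuming no padding."""
    length = round(frame_length * sample_rate)  # frame length in samples
    stride = round(frame_stride * sample_rate)  # frame stride in samples
    if length <= 0 or stride <= 0:
        raise ValueError("frame length and stride must be positive")
    if num_samples < length:
        return 0
    return 1 + (num_samples - length) // stride


def hz_to_mel(hz):
    return 2595.0 * math.log10(1.0 + hz / 700.0)


def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_band_edges(num_filters, low_hz, high_hz):
    """Edge frequencies in Hz for num_filters triangular filters.

    Adjacent triangles share edges, so num_filters filters need
    num_filters + 2 points, spaced equally on the Mel scale.
    """
    low_mel, high_mel = hz_to_mel(low_hz), hz_to_mel(high_hz)
    step = (high_mel - low_mel) / (num_filters + 1)
    return [mel_to_hz(low_mel + i * step) for i in range(num_filters + 2)]


# Illustrative values: 1 s of 16 kHz audio, 20 ms frames, 10 ms stride.
n_frames = frame_count(16000, 16000, frame_length=0.02, frame_stride=0.01)
edges = mel_band_edges(num_filters=40, low_hz=0.0, high_hz=8000.0)

# One value per filter per frame.
n_features = 40 * n_frames
print(n_frames, n_features, [round(e) for e in edges[:4]])
```

Under these assumptions, a shorter frame stride increases the number of columns, while a larger filter number increases the number of rows, so both directly affect the size of the feature array passed to the learning block.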