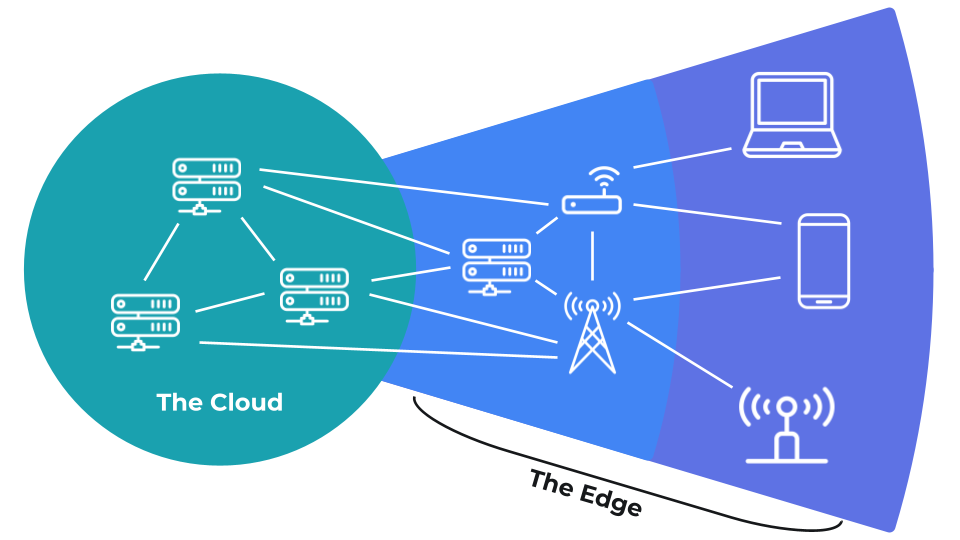

Edge AI is the process of running artificial intelligence (AI) algorithms on devices at the edge of the Internet or other networks. The traditional approach to AI and machine learning (ML) is to use powerful, cloud-based servers to perform model training as well as inference (prediction serving). While edge devices might have limited resources compared to their cloud-based cousins, they offer reduced bandwidth usage, lower latency, and additional data privacy.

Edge AI series

The following series of articles and videos will guide you through the various concepts and techniques that make up edge AI. We will also present a few case studies that demonstrate how edge AI is being used to solve real-world problems. We encourage you to work through each video and reading section. You will find a quiz at the end of each written section to test your knowledge. At the end of the course, you will find a comprehensive test. If you pass it with a score of at least 80%, you will be sent a digital certificate showing your completion of the course. You may take the test as many times as you like.

Contents

We will cover the following concepts with the given learning objectives:

- What is edge computing?

  - Understand the differences between cloud and edge computing

  - Advantages and disadvantages of processing data on edge devices

  - What is the Internet of Things (IoT)

- What is machine learning (ML)?

  - What are the differences between artificial intelligence, machine learning, and deep learning

  - Understand the history of AI

  - What are the different categories of machine learning, and what problems do they tackle

- What is edge AI?

  - Articulate the difference between training and inference

  - How does traditional cloud-based AI inference work

  - What are the benefits of running AI algorithms on edge devices

  - Examples of edge AI systems

  - What are the business implications for future edge AI growth

- How to choose an edge AI device

  - Define and provide examples for the different edge computing devices

  - How to choose a particular edge computing device for your edge AI application

- Edge AI lifecycle

  - How to identify a use case where edge AI can uniquely solve a problem

  - Identify constraints to edge AI implementations

  - Understand the edge AI pipeline of collecting data, analyzing the data, feature engineering, training a model, testing the model, deploying the model, and monitoring the model’s performance

- What is edge MLOps?

  - Identify the three principles of MLOps: version control, automation, governance

  - Describe the benefits of automating various parts of the edge AI lifecycle

  - Define operations and maintenance (O&M)

  - How does edge MLOps differ from cloud-based MLOps

  - Define the causes of model drift: data drift and concept drift

- What is Edge Impulse?

  - How does a short learning curve lead to faster go-to-market times

  - Articulate the advantages and disadvantages of using an edge AI platform versus building one from scratch

- Case study: Izoelectro

  - How is edge AI used to detect anomalies on power lines

  - How anomaly detection on edge devices saves power over cloud-based approaches

- Going further and certification

  - Resources to dive deeper into the technology and use cases of edge AI

  - How to get started with Edge Impulse

  - Comprehensive test and certification

The network edge

Edge computing is a strategy where data is processed and stored at the periphery of a computer network. By contrast, processing and storing data on remote servers, typically servers reached over the internet, is known as “cloud computing.” The edge includes all computing devices that are not part of the cloud.
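
To make the distinction concrete, the following minimal Python sketch compares how much data leaves a device under each strategy. Everything in it is an illustrative assumption rather than part of any Edge Impulse API: the sample rate, the window length, the function names `cloud_payload` and `edge_payload`, and the simple threshold rule, which stands in for a real ML model running on the device.

```python
import json
import struct

SAMPLE_RATE_HZ = 100   # hypothetical accelerometer sample rate
WINDOW_SECONDS = 2     # hypothetical length of one analysis window


def cloud_payload(samples):
    """Cloud strategy: pack every raw (x, y, z) sample as int16 for upload."""
    return b"".join(struct.pack("<hhh", *sample) for sample in samples)


def edge_payload(samples, threshold=1000):
    """Edge strategy: process the window locally and upload only the result.

    The threshold check is a placeholder for on-device model inference.
    """
    peak = max(max(abs(value) for value in sample) for sample in samples)
    label = "anomaly" if peak > threshold else "normal"
    return json.dumps({"label": label, "peak": peak}).encode("utf-8")


# One window of made-up readings from a device at rest.
samples = [(10, -20, 980)] * (SAMPLE_RATE_HZ * WINDOW_SECONDS)

raw = cloud_payload(samples)
result = edge_payload(samples)

print(f"cloud strategy uploads {len(raw)} bytes per window")
print(f"edge strategy uploads {len(result)} bytes per window")
```

In this sketch the edge strategy sends a short summary instead of the full window of samples, which is where the bandwidth savings come from. Because the raw readings never leave the device, the same pattern also illustrates the privacy benefit.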